Designing Agentic AI-Based Screening for Portfolio Investment

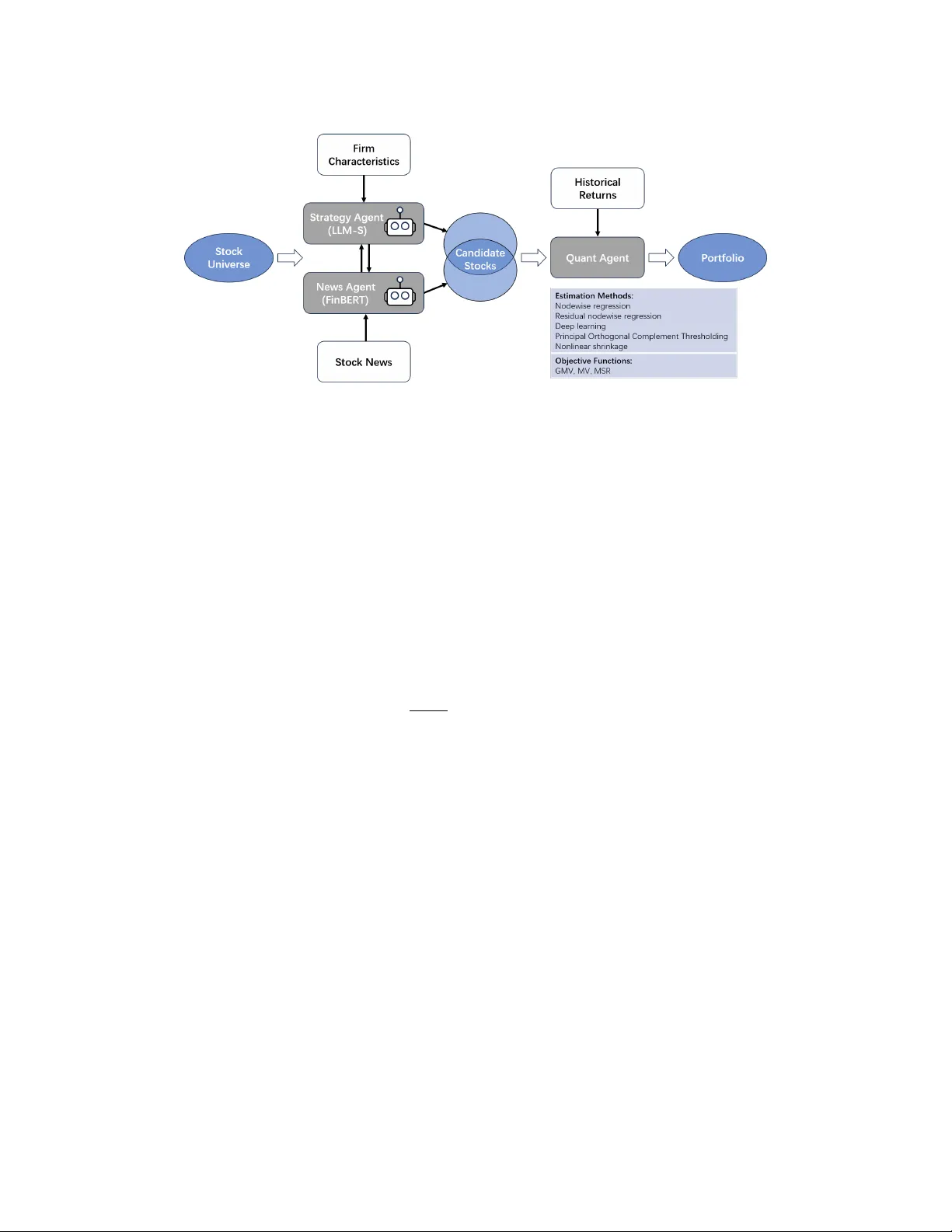

We introduce a new agentic artificial intelligence (AI) platform for portfolio management. Our architecture consists of three layers. First, two large language model (LLM) agents are assigned specialized tasks: one agent screens for firms with desira…

Authors: Mehmet Caner, Agostino Capponi, Nathan Sun