Stepwise Variational Inference with Vine Copulas

We propose stepwise variational inference (VI) with vine copulas: a universal VI procedure that combines vine copulas with a novel stepwise estimation procedure of the variational parameters. Vine copulas consist of a nested sequence of trees built from copulas, where more complex latent dependence can be modeled with increasing number of trees. We propose to estimate the vine copula approximate posterior in a stepwise fashion, tree by tree along the vine structure. Further, we show that the usual backward Kullback-Leibler divergence cannot recover the correct parameters in the vine copula model, thus the evidence lower bound is defined based on the Rényi divergence. Finally, an intuitive stopping criterion for adding further trees to the vine eliminates the need to pre-define a complexity parameter of the variational distribution, as required for most other approaches. Thus, our method interpolates between mean-field VI (MFVI) and full latent dependence. In many applications, in particular sparse Gaussian processes, our method is parsimonious with parameters, while outperforming MFVI.

💡 Research Summary

The paper introduces a novel variational inference (VI) framework that leverages vine copulas as a flexible variational family and proposes a stepwise estimation procedure for the variational parameters. Vine copulas decompose a high‑dimensional dependence structure into a hierarchy of bivariate (conditional) copulas arranged in a sequence of trees. More trees allow modeling increasingly complex latent dependencies. Traditional VI either uses a fully factorized mean‑field (MFVI) distribution, which cannot capture any dependence, or employs structured families with a pre‑specified complexity hyper‑parameter (e.g., rank of a low‑rank covariance, number of mixture components, or truncation level of a vine). Selecting such hyper‑parameters a priori is difficult and can lead to under‑ or over‑parameterized approximations.

The authors propose stepwise VI with vine copulas: starting from the first tree (T₁), all pair‑copula parameters are estimated by maximum likelihood; then, conditioned on the already estimated lower‑level copulas, the parameters of the second tree (T₂) are estimated, and so on up to tree T_{d‑1}. This mirrors the standard stepwise maximum‑likelihood approach used for fitting vines to data, but here the “data” are the latent variables of a Bayesian model. Crucially, a global stopping criterion is introduced: if every copula in the current tree is close to independence (e.g., Kendall’s τ below a small threshold), the algorithm stops adding further trees. This automatically determines the truncation level τ* of the vine, eliminating the need for a manually set complexity parameter. Consequently, the variational family interpolates smoothly between MFVI (τ* = 1) and a full, unrestricted vine (τ* = d‑1).

A second major contribution is the theoretical observation that the usual reverse Kullback‑Leibler (KL) divergence (KL(q‖p)) used to derive the evidence lower bound (ELBO) cannot recover the true vine parameters. The reverse KL exhibits a “zero‑forcing” effect that drives copula parameters toward independence, especially when higher‑order conditional dependencies are weak. To overcome this, the authors adopt Rényi α‑divergence as the divergence measure. Rényi divergence R_α(q‖p) reduces to KL as α → 1 but for α ∈ (0,1) places more weight on the true posterior, mitigating the zero‑forcing problem. They formulate a variational Rényi bound (VR) and employ the VR‑IWAE bound, an unbiased estimator of the gradient that generalizes the importance‑weighted auto‑encoder bound. The α‑parameter is tuned empirically (typically 0.5–0.8) to balance bias and variance.

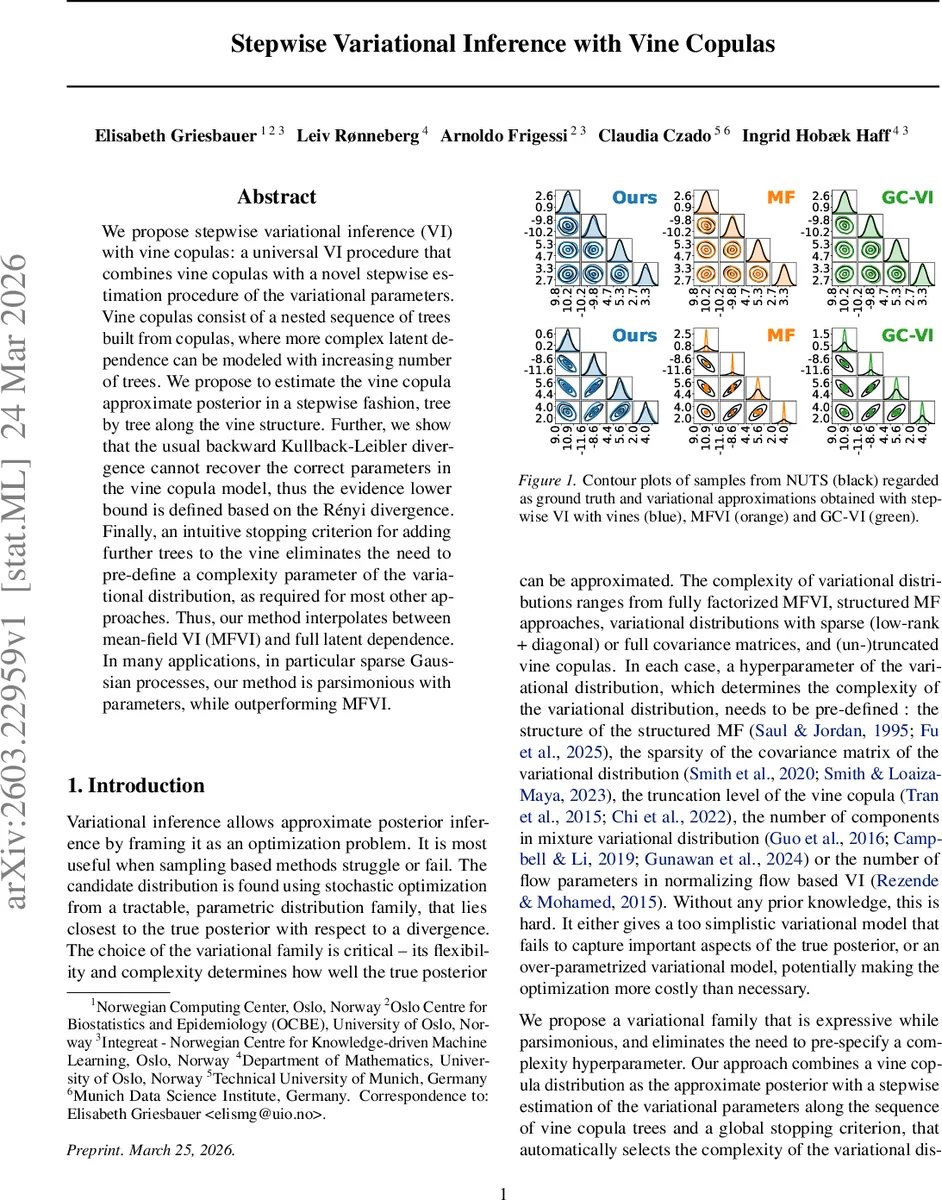

Experimental evaluation focuses on sparse Gaussian processes and multivariate Bayesian regression tasks. The authors compare three methods: MFVI, Gaussian‑copula VI (GC‑VI), and the proposed stepwise vine VI. Metrics include the ELBO, predictive root‑mean‑square error (RMSE), number of variational parameters, and visual comparison of posterior contours against ground‑truth samples obtained via the No‑U‑Turn Sampler (NUTS). Results show that stepwise vine VI consistently achieves higher ELBOs and lower RMSEs than MFVI and GC‑VI while using only modestly more parameters than MFVI. The stopping rule typically selects τ* ≈ 2–3, indicating that a few trees suffice to capture the essential dependence in these problems. Contour plots demonstrate that the vine‑based approximation closely matches the NUTS posterior, whereas MFVI yields overly simplistic, axis‑aligned contours.

In summary, the paper makes four key contributions: (1) introducing vine copulas as a universal, expressive variational family; (2) devising a tree‑wise, stepwise parameter estimation algorithm that aligns with the vine’s hierarchical structure; (3) providing an automatic, data‑driven truncation mechanism that removes the need for manual complexity tuning; and (4) showing that Rényi‑based ELBOs are necessary for correct parameter recovery in vine‑based VI. The approach offers a principled way to balance expressiveness and parsimony, making it attractive for Bayesian models where latent dependencies are important but computational resources are limited. Future work could explore adaptive selection of copula families, scalable stochastic implementations for very high dimensions, and applications to deep generative models.

Comments & Academic Discussion

Loading comments...

Leave a Comment