The Coordinate System Problem in Persistent Structural Memory for Neural Architectures

We introduce the Dual-View Pheromone Pathway Network (DPPN), an architecture that routes sparse attention through a persistent pheromone field over latent slot transitions, and use it to discover two independent requirements for persistent structural…

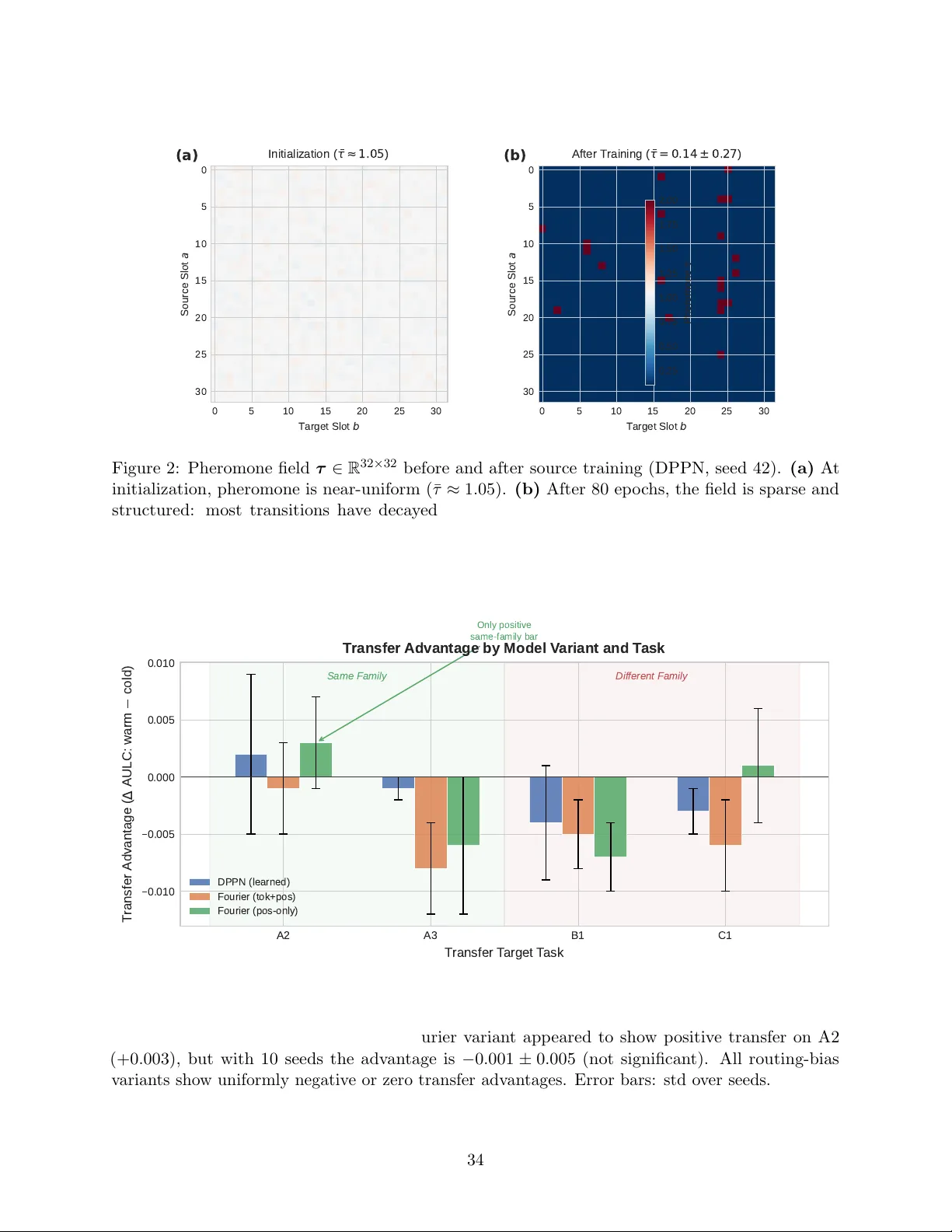

Authors: Abhinaba Basu