HyFI: Hyperbolic Feature Interpolation for Brain-Vision Alignment

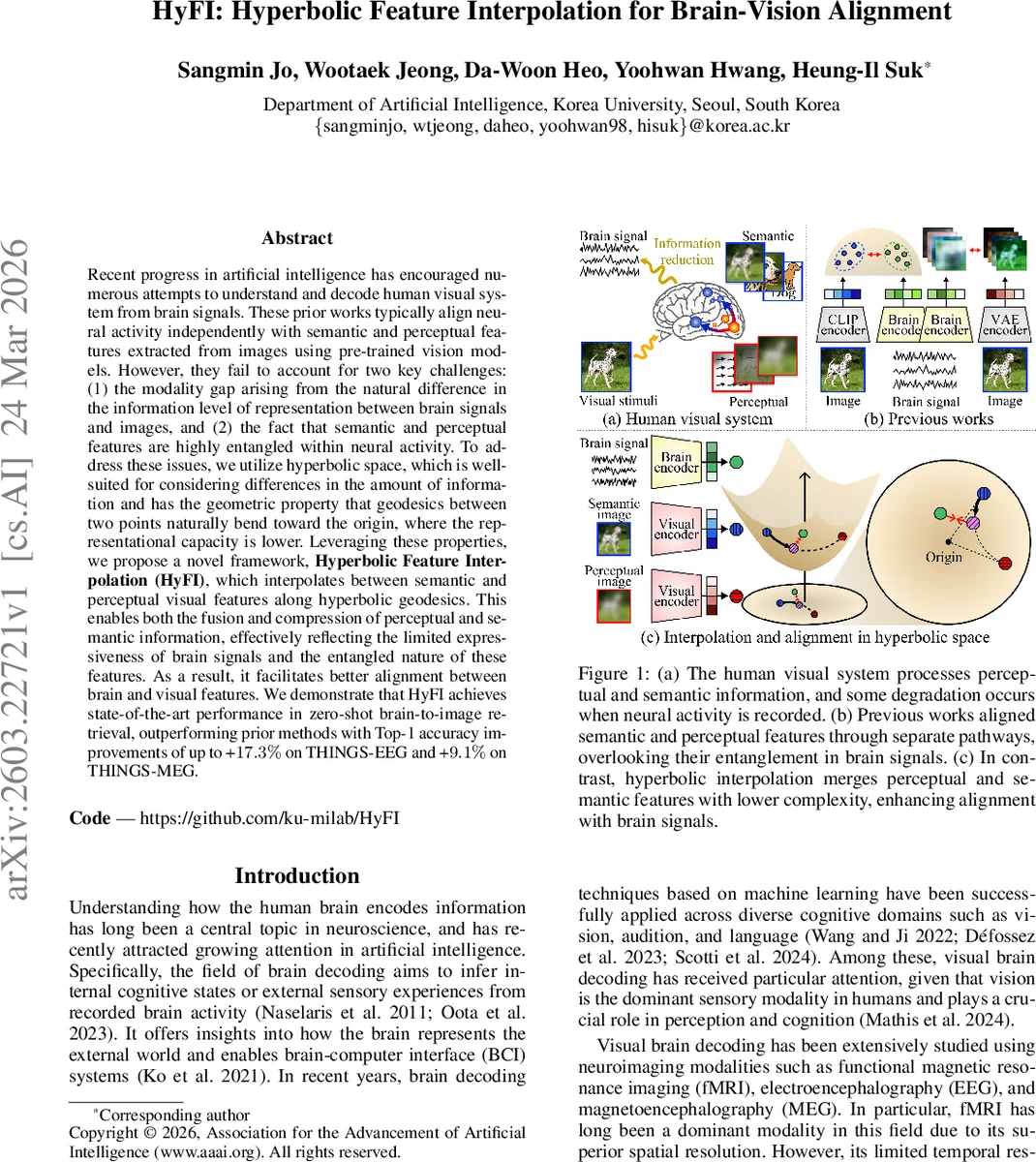

Recent progress in artificial intelligence has encouraged numerous attempts to understand and decode human visual system from brain signals. These prior works typically align neural activity independently with semantic and perceptual features extracted from images using pre-trained vision models. However, they fail to account for two key challenges: (1) the modality gap arising from the natural difference in the information level of representation between brain signals and images, and (2) the fact that semantic and perceptual features are highly entangled within neural activity. To address these issues, we utilize hyperbolic space, which is well-suited for considering differences in the amount of information and has the geometric property that geodesics between two points naturally bend toward the origin, where the representational capacity is lower. Leveraging these properties, we propose a novel framework, Hyperbolic Feature Interpolation (HyFI), which interpolates between semantic and perceptual visual features along hyperbolic geodesics. This enables both the fusion and compression of perceptual and semantic information, effectively reflecting the limited expressiveness of brain signals and the entangled nature of these features. As a result, it facilitates better alignment between brain and visual features. We demonstrate that HyFI achieves state-of-the-art performance in zero-shot brain-to-image retrieval, outperforming prior methods with Top-1 accuracy improvements of up to +17.3% on THINGS-EEG and +9.1% on THINGS-MEG.

💡 Research Summary

The paper tackles the longstanding challenge of aligning brain activity with visual representations, a problem that has become increasingly important with the rise of large vision‑language models (VLMs) and brain‑computer interface (BCI) research. Existing approaches typically treat semantic (high‑level) and perceptual (low‑level) visual features as separate streams, aligning each independently with neural data. This separation overlooks two fundamental issues: (1) the “modality gap” – brain recordings (EEG, MEG) contain far less information than the rich embeddings produced by pretrained vision models, and (2) semantic and perceptual information are highly entangled in the brain, not processed in isolation.

To address these problems, the authors propose Hyperbolic Feature Interpolation (HyFI), a framework that operates in hyperbolic space (specifically the Lorentz/hyperboloid model). Hyperbolic geometry has two properties that are especially useful for brain‑vision alignment: (i) geodesics naturally curve toward the origin, where representational capacity is low, and (ii) the space expands exponentially with radius, so points near the origin encode abstract, low‑information concepts. By interpolating semantic and perceptual visual features along a hyperbolic geodesic, HyFI simultaneously fuses the two modalities and compresses the resulting representation toward the origin, thereby matching the limited informational bandwidth of brain signals.

Method Overview

-

Feature Extraction – An input image is transformed into two versions: a “semantic” version obtained by fovea‑blur (preserving high‑level content while mimicking peripheral vision) and a “perceptual” version obtained by Gaussian blur (suppressing high‑frequency details). Both are fed to a frozen CLIP encoder, producing two d‑dimensional embeddings. Linear projection matrices (W_s, W_p) and a learnable scalar α_v scale these embeddings before they are lifted onto the hyperboloid via the exponential map at the time‑origin O.

-

Hyperbolic Interpolation – The perceptual embedding is logarithmically mapped to the tangent space at the semantic embedding, scaled by a learned interpolation coefficient t∈

Comments & Academic Discussion

Loading comments...

Leave a Comment