LOGSAFE: Logic-Guided Verification for Trustworthy Federated Time-Series Learning

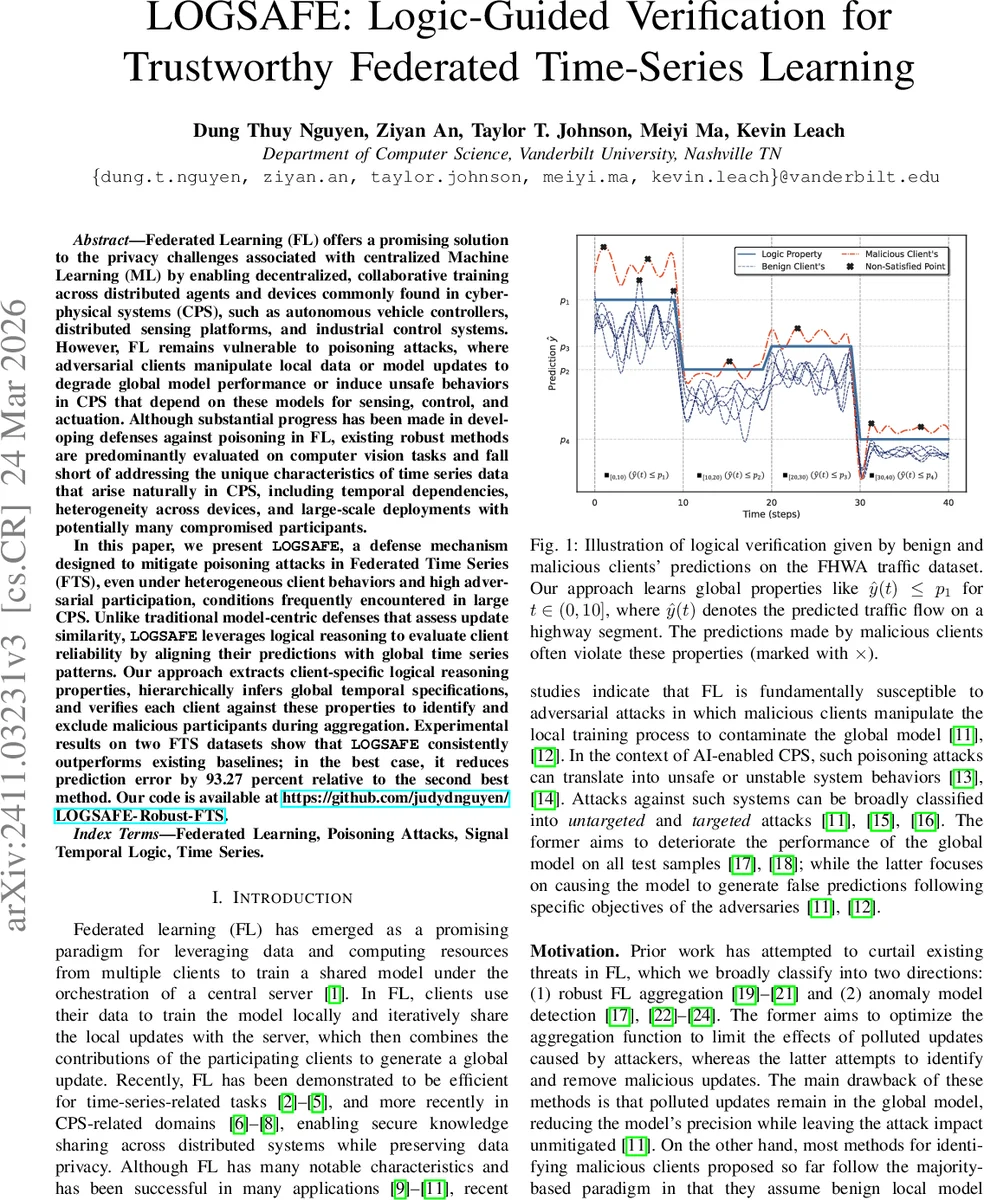

This paper introduces LOGSAFE, a defense mechanism for federated learning in time series settings, particularly within cyber-physical systems. It addresses poisoning attacks by moving beyond traditional update-similarity methods and instead using logical reasoning to evaluate client reliability. LOGSAFE extracts client-specific temporal properties, infers global patterns, and verifies clients against them to detect and exclude malicious participants. Experiments show that it significantly outperforms existing methods, achieving up to 93.27% error reduction over the next best baseline. Our code is available at https://github.com/judydnguyen/LOGSAFE-Robust-FTS.

💡 Research Summary

The paper introduces LOGSAFE, a novel defense for federated learning (FL) applied to time‑series forecasting in cyber‑physical systems (CPS). Traditional robust FL methods, such as Krum, Median, or trust‑score based aggregators, rely on the similarity of model updates and have been evaluated primarily on static image datasets. These approaches break down for time‑series data because temporal dependencies, non‑stationarity, and client heterogeneity cause benign updates to diverge naturally, allowing malicious updates to blend in.

LOGSAFE tackles this problem by shifting the focus from parameter space to prediction behavior. For each client, it automatically mines Signal Temporal Logic (STL) formulas that capture recurring temporal constraints in the client’s forecast traces (e.g., “whenever predicted traffic exceeds a threshold, it must fall below another threshold within k steps”). Each extracted STL property is described by parameters such as thresholds, time windows, and operators. An unsupervised clustering step then groups clients with similar STL parameters, enabling the system to infer hierarchical global specifications that summarize the collective temporal reasoning of the federation.
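To make the example property concrete, the sketch below scores a forecast trace against the formula G( x > upper → F[0,k] (x < lower) ) using the standard quantitative (robustness) semantics of STL. It is an illustration only, not the authors' mining procedure: the function name `stl_robustness`, the parameter names `upper`, `lower`, and `k`, and the choice to truncate the "within k steps" window at the end of a finite trace are all assumptions made here.

```python
from typing import Sequence


def stl_robustness(trace: Sequence[float], upper: float, lower: float, k: int) -> float:
    """Robustness of G( x > upper -> F[0,k] (x < lower) ) on a finite trace.

    A positive result means the trace satisfies the property with that margin;
    a negative result means it violates the property.
    All names and the end-of-trace handling are illustrative assumptions.
    """
    if not trace:
        raise ValueError("trace must be non-empty")
    scores = []
    for t in range(len(trace)):
        # Margin by which the antecedent "x_t > upper" holds.
        antecedent = trace[t] - upper
        # "Eventually within k steps, x < lower": best margin in the window.
        # The window is truncated at the end of the trace (an assumption).
        window = trace[t : t + k + 1]
        consequent = max(lower - value for value in window)
        # Implication p -> q has robustness max(-rho(p), rho(q)).
        scores.append(max(-antecedent, consequent))
    # "Globally" takes the worst case over all time steps.
    return min(scores)


# A trace that spikes and then recovers in time satisfies the property.
print(stl_robustness([1, 5, 2, 1], upper=4, lower=3, k=2))
# A trace that stays high for too long violates it.
print(stl_robustness([1, 5, 5, 5, 1], upper=4, lower=3, k=1))
```

In this reading, the thresholds and the window length `k` are exactly the kind of per-client parameters that a later clustering step could compare across clients.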

During each training round, the server evaluates how well each client’s model satisfies the global STL specifications, producing a robustness score. Clients with low scores are deemed untrustworthy and are either down‑weighted or excluded from the aggregation step. Because the verification is based on logical consistency rather than raw weight similarity, LOGSAFE can detect sophisticated poisoning attacks that deliberately mimic benign update statistics but produce temporally implausible predictions.
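The exclusion variant of this step can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's aggregation rule: the function name `aggregate_verified`, the cutoff `threshold=0.0`, and the plain unweighted mean over the remaining clients are all hypothetical choices, and model updates are simplified to flat lists of floats.

```python
from typing import Dict, List


def aggregate_verified(
    updates: Dict[str, List[float]],
    scores: Dict[str, float],
    threshold: float = 0.0,
) -> List[float]:
    """Average only the updates of clients whose robustness score passes.

    `threshold` and the unweighted mean are illustrative assumptions;
    a down-weighting scheme could be substituted for hard exclusion.
    """
    # Keep clients whose robustness score meets the (assumed) cutoff.
    trusted = [cid for cid in updates if scores[cid] >= threshold]
    if not trusted:
        raise ValueError("no client passed verification")
    dim = len(updates[trusted[0]])
    # Coordinate-wise mean over the trusted clients only.
    return [
        sum(updates[cid][i] for cid in trusted) / len(trusted)
        for i in range(dim)
    ]


updates = {"a": [1.0, 2.0], "b": [3.0, 4.0], "c": [100.0, -100.0]}
scores = {"a": 0.5, "b": 0.2, "c": -1.0}  # client "c" violates the specification
print(aggregate_verified(updates, scores))
```

Because the filter depends only on the robustness score, an update whose weights look statistically ordinary is still dropped if the resulting predictions violate the global specification.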

The authors validate LOGSAFE on two real‑world time‑series datasets (highway traffic flow and power load) and test a range of attacks, including untargeted Byzantine noise, targeted backdoor triggers, and PGD‑based precise poisoning. Compared against state‑of‑the‑art defenses, LOGSAFE reduces prediction error by up to 93.27% relative to the second‑best baseline, and it remains effective even when more than 30% of participants are compromised. The method also yields interpretable logical rules that can be audited, addressing the transparency needs of safety‑critical CPS deployments.

In summary, LOGSAFE demonstrates that formal logic‑guided verification, specifically STL property mining and hierarchical inference, provides a powerful, explainable, and robust defense for federated time‑series learning, opening new avenues for trustworthy AI in critical infrastructure.
