A Near-Raw Talking-Head Video Dataset for Various Computer Vision Tasks

Talking-head videos constitute a predominant content type in real-time communication, yet publicly available datasets for video processing research in this domain remain scarce and limited in signal fidelity. In this paper, we open-source a near-raw …

Authors: Babak Naderi, Ross Cutler

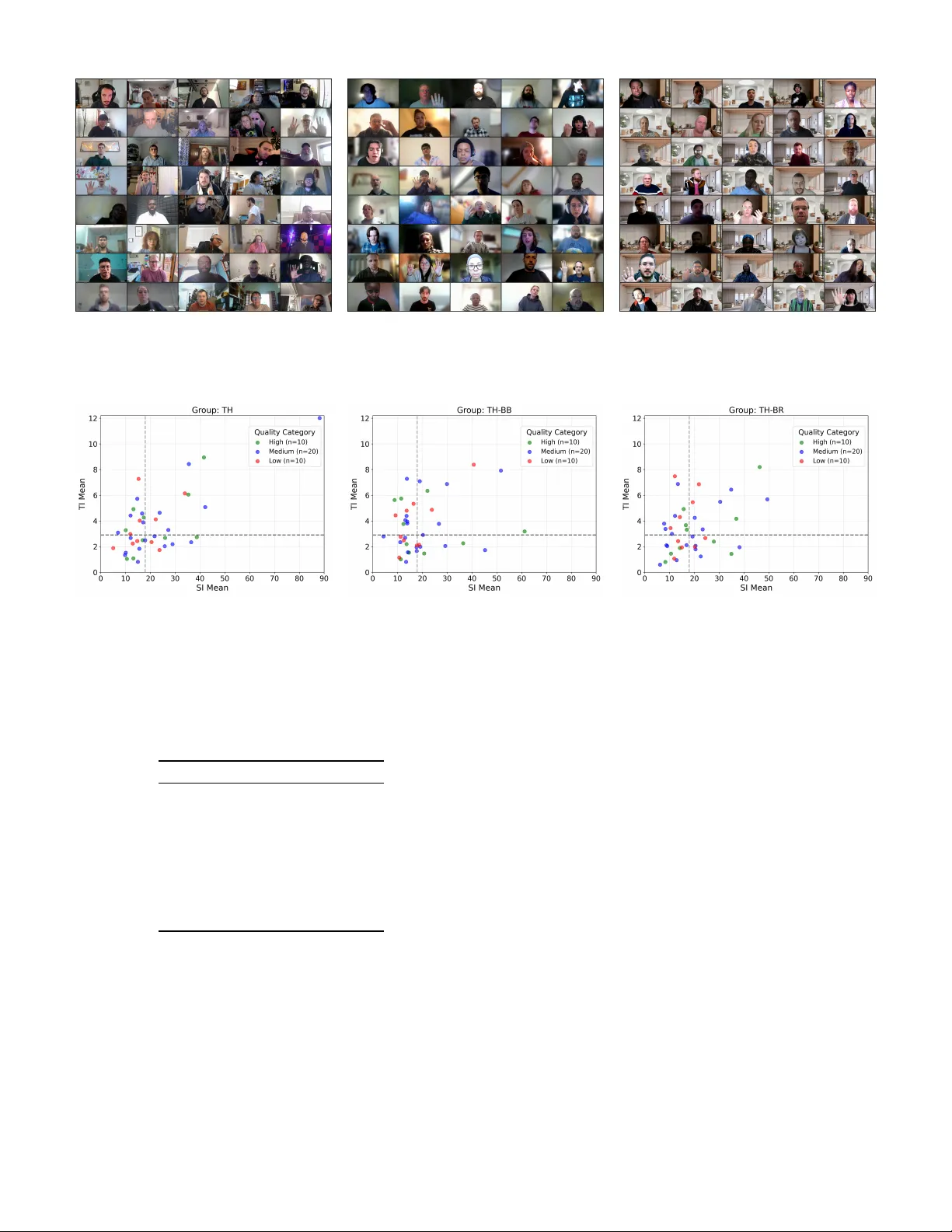

A Near -Ra w T alking-Head V ideo Dataset for V arious Computer V ision T asks Babak Naderi, Ross Cutler Microsoft Corporation, Redmond, USA Abstract —T alking-head videos constitute a predominant con- tent type in real-time communication, yet publicly a vailable datasets for video pr ocessing research in this domain r emain scarce and limited in signal fidelity . In this paper , we open- source a near-raw dataset of 847 talking-head recordings (ap- proximately 212 minutes), each 15 s in duration, captured from 805 participants using 446 unique consumer webcam devices in their natural en vironments. All recordings are stored using the FFV1 lossless codec, preserving the camera-native signal— uncompressed (24.4%) or MJPEG-encoded (75.6%)—without additional lossy processing . Each recording is annotated with a Mean Opinion Score (MOS) and ten per ceptual quality tokens that jointly explain 64.4% of the MOS variance. From this corpus, we curate a stratified benchmarking subset of 120 clips in thr ee content conditions: original, background blur , and background replacement. Codec efficiency e valuation acr oss f our datasets and four codecs, namely H.264, H.265, H.266, and A V1, yields VMAF BD-rate savings up to − 71 . 3% (H.266) relative to H.264, with significant encoder × dataset ( η 2 p = . 112 ) and encoder × content condition ( η 2 p = . 149 ) interactions, demonstrat- ing that both content type and background processing affect compression efficiency . The dataset offers 5 × the scale of the largest prior talking-head webcam dataset (847 vs. 160 clips) with lossless signal fidelity , establishing a resource for training and benchmarking video compr ession and enhancement models in r eal-time communication. Index T erms —T alking-head video, V ideo quality assessment, Lossless video dataset, Codec evaluation I . I N T R O D U C T I O N V ideo conferencing has become a primary mode of re- mote collaboration, with talking-head video constituting a predominant content type in real-time communication (R TC) platforms. The perceptual quality of this video directly af- fects communication effecti veness: degraded video reduces social presence, hampers non-verbal cue interpretation, and diminishes percei ved meeting quality [ 1 ]. Improving the video processing pipeline—through better compression, restoration, or enhancement—therefore has a direct impact on the user experience of R TC participants. Despite this importance, research on video processing tasks for talking-head content, including lossy compression, super- resolution (SR), and denoising, relies on datasets that either lack domain specificity or introduce compression artifacts during capture [ 2 ], [ 3 ]. Reliable ev aluation of these tasks requires camera feeds that preserve authentic scene-level degradations (noise, lo w-light conditions, motion blur) while av oiding post-processing artifacts such as lossy compression, spatial rescaling, or frame interpolation that would confound the measurement of algorithm performance. T o our kno wledge, no public dataset combines domain-representativ e webcam capture, lossless encoding, and the scale required for sys- tematic benchmarking and model development in the R TC domain. W e substantiate this gap belo w by revie wing the relev ant dataset landscape. T alking-head content exhibits distinct spatial and tempo- ral properties that dif ferentiate it from general video [ 2 ]. Backgrounds are typically static with low spatial complexity , faces require accurate texture preservation for fine detail, and subtle temporal variations from speech and gestures are sensitiv e to compression-induced artifacts. Standard codec test sequences [ 4 ], [ 5 ] and widely used benchmarks such as UVG [ 6 ] and MCL-JCV [ 7 ] contain professionally captured content that does not reflect these properties. Among talking-head corpora, large-scale web-sourced datasets provide scale and identity div ersity but limited signal fidelity . V oxCeleb [ 8 ] and V oxCeleb2 [ 9 ] together aggregate ov er one million utterances from Y ouT ube, but their quality is bounded by platform compression [ 3 ]. HDTF [ 10 ] and CelebV -HQ [ 11 ] curate higher-resolution face tracks from online video, yet remain subject to platform encoding. DH- FaceV id-1K [ 12 ] pro vides approximately 270,000 clips with multi-modal annotations but standardizes all content to 512 × 512 face crops, discarding background context relev ant to video conferencing. Controlled and specialized datasets address dif ferent limita- tions. MEAD [ 13 ] offers controlled multi-view studio capture at the cost of ecological validity for R TC scenarios, while FaceF orensics++ [ 14 ] targets manipulation detection rather than providing pristine references. VFHQ [ 3 ], among the most relev ant datasets for video face SR, curates over 16,000 high-fidelity clips and demonstrates that models trained on web-compressed video reproduce compression artifacts rather than recover genuine detail; ho wever , VFHQ content is still deriv ed from web video and does not provide lossless capture. Naderi et al . [ 2 ] introduced the first public dataset targeting video conferencing for codec e valuation, comprising 160 clips of 10 s duration with desktop and mobile recording scenarios and background processing variants. Howe ver , those record- ings were captured using the lossy output of consumer web- cams ( e.g ., VP8 or H.264), embedding compression artifacts into the reference signal. Beyond compression e valuation, these limitations also affect super-resolution research, where reference quality directly determines model fidelity . General-purpose SR benchmarks such as REDS [ 15 ] provide standardized ev aluation tracks, and models such as EDVR [ 16 ] and BasicVSR/BasicVSR++ [ 17 ], [ 18 ] serve as widely adopted baselines. Real-world SR datasets address the realism gap: RealVSR [ 19 ] captures paired se- quences with two cameras at dif ferent focal lengths, and V ideoLQ [ 20 ] provides a benchmark for blind real-world video SR. Howe ver , these datasets focus on general scenes and do not capture the domain-specific characteristics of webcam talking-head video, such as webcam sensor noise profiles, auto-exposure beha vior, and R TC-typical background processing. Chan et al . [ 20 ] corroborate that models trained on pre-compressed video reproduce compression artifacts rather than recover genuine detail, suggesting that pre-compressed references may similarly confound codec e valuation. Emerging compression paradigms further motiv ate lossless talking-head data: Generativ e Face V ideo Coding (GFVC) [ 21 ] benefits from clean identity and lip-motion ground truth, and neural R TC compression systems such as Gemino [ 22 ] combine SR with compression, making uncompressed references essential for isolating codec artifacts from camera noise. W e present a dataset of 847 talking-head recordings, each 15 s in duration (approximately 3.5 hours total), captured from 805 participants using their consumer webcams (446 unique camera models) in their natural en vironments. The capture application opens each camera at its largest supported resolu- tion and selects the highest-quality pix el format av ailable. The priority order is YUYV 4:2:2 or NV12 4:2:0 (uncompressed), with MJPEG as a fallback. All frames are encoded with the FFV1 lossless codec. When the camera exposes only lossy formats ( e.g ., MJPEG), the compressed stream is decoded and losslessly stored, preserving camera-compressed quality without further degradation from the capture pipeline. W e refer to this capture approach as “near-raw , ” as the capture pipeline applies no lossy compression beyond camera firmware processing (details in Section II-A ). Each recording is annotated with a Mean Opinion Score (MOS) obtained via subjective testing using the Absolute Category Rating (ACR) method according to the ITU-T Rec. P .910 [ 23 ] and with ten perceptual quality attributes ( e.g ., blur , noise, low resolution, lighting issues) deriv ed through a three-phase crowdsourced annotation process. From the full corpus, we curate a benchmarking subset of 120 clips stratified by Spatial Information (SI), T emporal Information (TI), and MOS. The subset is organized into three groups, each containing unique clips: original talking-head clips (TH), clips with production-grade background blur (TH-BB), and clips with background replacement (TH-BR). W e summarize our contributions as follows: 1) A large-scale, near-raw talking-head webcam video dataset comprising 847 losslessly encoded recordings from 805 participants across 446 unique camera configu- rations, with four recording scenarios cov ering common video conferencing behaviors. 2) A multi-dimensional quality annotation scheme com- bining A CR-based MOS with ten perceptual quality tokens, v alidated through cross-study reliability analysis (Pearson r ≥ 0 . 859 between independent annotation studies). 3) A stratified benchmarking subset of 120 clips in three groups (TH, TH-BB, TH-BR), balanced across quality lev els, spatial–temporal complexity , and distortion types. 4) An ev aluation of the dataset’ s utility for codec com- pression efficienc y analysis across H.264 (A VC) [ 24 ], H.265 (HEVC) [ 25 ], H.266 (VVC) [ 26 ], and A V1 [ 27 ] (see Section III ). I I . D A TA S E T This section describes the data collection methodology , the composition of the published dataset, the quality annotation process, and the construction of the benchmarking subset. A. Data Collection W e de veloped a custom recording application built on the DirectShow 1 multimedia framework and FFmpeg 2 . The application interfaces with webcams via the USB V ideo Class (UVC) protocol and captures video directly from the cam- era hardware with minimal software processing. Participants installed the application on their personal computers and recorded themselves in their natural environments ( e.g ., home offices, living rooms), ensuring authentic and div erse capture conditions. The application opens each camera at its largest supported resolution (minimum 1280 × 720 at 30 fps, 16:9 aspect ratio) and selects the highest-quality pixel format av ailable: uncom- pressed YUYV422 or NV12 when supported, with Motion JPEG (MJPEG) as a fallback. In the published dataset, 24.4% of recordings use uncompressed formats (YUYV422 or NV12) and 75.6% use MJPEG, reflecting the limited support for uncompressed output at high resolutions in consumer webcam hardware. All frames are encoded with the FFV1 lossless video codec [ 28 ], which guarantees bit-exact reconstruction, and frame timestamps are passed through from the camera without interpolation, dropping, or duplication. Each recording session captures a 20-second window , of which the first 5 s serve as pre-roll (discarded) and the re- maining 15 s constitute the published recording. Participants revie w the playback and accept or reject the recording; re- jected recordings are deleted and retaken. W e refer to this capture approach as “near-raw . ” Camera firmware applies demosaicing, white balance, and gamma correction before the signal reaches the recording software, but no further lossy compression is applied by the capture pipeline. This design eliminates the double-compression artifacts ( i.e ., cam- era compression followed by capture-software compression) present in con ventional webcam recording workflo ws [ 2 ]. The complete dataset, benchmarking subset, and a catalog with webcam metadata will be publicly released upon acceptance. Additional technical details on pixel format handling, lossless encoding configuration, and frame buf fering are provided in the supplementary material. 1 https://learn.microsoft.com/en- us/windows/win32/directsho w/ 2 https://ffmpe g.org/ T ABLE I: Distribution of recordings by resolution and in- put pixel format (% of 847 published clips). Uncompressed combines YUYV422 and NV12 formats. Percentages are independently rounded and may not sum exactly . Resolution Uncompressed MJPEG T otal 720p 10.7% 49.9% 60.7% 1080p 11.1% 22.1% 33.2% 1440p or larger 2.6% 3.5% 6.1% T otal 24.4% 75.6% 100% B. Dataset Composition Participants were recruited via the Prolific crowdsourcing platform and recorded themselves performing one of four randomly assigned scenarios designed to elicit div erse motion patterns: continuous slow body mov ement (S01, 315 clips), hand counting exercise (S02, 157 clips), text reading ex ercise (S03, 303 clips), and natural video call behavior (S04, 72 clips). S01 and S03 receiv ed higher assignment probability to provide lar ger sample sizes for the two scenarios with the most controlled motion characteristics. These scenarios introduce div erse temporal characteristics, from relati vely lo w motion in S03 to continuous upper-body motion in S01. A total of 1,119 recordings were collected. After quality control—removing 272 recordings due to low frame rate (below 15 fps), frame drops, perceptible blockiness detected through annotation, or other technical f ailures—847 clips from 805 unique participants are published. The dataset spans 446 unique camera models (identified by USB vendor and product identifiers), including integrated laptop cameras and external USB webcams from multiple manufacturers. T able I shows the distribution of recordings across resolutions and pixel formats. The majority of clips are captured at 720p or 1080p, reflecting the capabilities of participants’ consumer webcams, with higher-resolution captures (1440p, 4K) representing a small fraction. The participant pool comprises 63% male and 37% female contributors; full dataset statistics including demographics are provided in the supplementary material. C. Quality Annotation Each recording is annotated along two complementary dimensions using subjective assessment: an ov erall quality rating, represented as a Mean Opinion Score (MOS), and a set of multi-label perceptual quality attributes (problem tokens). The ov erall percei ved quality was assessed with the ACR methodology following ITU-T Recommendation P .910 [ 23 ], deployed via its crowdsourcing implementation [ 29 ]. The study was conducted on the Prolific platform with 216 ac- cepted workers, yielding approximately 7 votes per clip. Quality control followed established crowdsourcing best practices [ 29 ], [ 30 ], [ 31 ]. Participants passed qualification checks including Ishihara color vision plates [ 32 ], device validation (minimum 1920 × 1080 display at 30 Hz), attention checks, trapping questions with clips of known expected quality , and gold standard clips with unambiguous quality lev els. W orkers failing these checks were excluded from the aggregated ratings. T o provide fine-grained, multi-label diagnostic annotations, we de veloped a set of ten perceptual quality tokens through a three-phase process. In the first phase, 273 crowdsourced assessors described observed distortions in free-text comments for approximately 260 clips (a 31% sample of the dataset), yielding 2,596 comments that were analyzed using keyword- based tagging and large language model (LLM)-assisted clas- sification (the LLM step was assistive only; the final taxonomy was human-validated). The analysis identified blur (42.9% of comments), lighting/color issues (23.9%), and noise (21.0%) as the most frequently mentioned distortions. In the second phase, the derived token set was v alidated on a 200-clip random subsample with 24 workers providing 5–6 votes per clip; distortion tokens were presented in randomized order to mitigate primacy and recency biases. In the third phase, all 1,119 recorded clips (including those later excluded by quality control) plus 160 reference clips from prior work [ 2 ] were annotated by 112 workers with 7–8 votes per clip. A token is considered selected for a clip only if ≥ 2 assessors independently chose it, reducing noise from idiosyncratic selections. T able II lists the ten tokens and their selection rates. Cross-study Spearman correlations between token selection rates from the pilot (Phase 2) and the full annotation (Phase 3) show moderate to strong agreement ( ρ ≥ 0 . 5 ) for sev en of ten tokens. The near-zero correlation for blockiness ( ρ = − 0 . 009 ) is consistent with the lossless capture design: the capture pipeline applies no additional block-based compression, and the MJPEG encoding performed by camera firmware operates at sufficiently high quality that block boundaries are not perceptually salient at typical webcam bitrates. By contrast, 10.6% of clips from the VCD dataset [ 2 ], which uses lossy webcam output, exhibited blockiness in the same annotation study; in the present dataset, blockiness was detected in only 15 of 1,119 recordings (1.3%), and those clips were excluded from the published set. The MOS v alues obtained under the problem token paradigm correlate strongly with the independently collected A CR MOS (Pearson r = 0 . 859 on the full dataset of 1,119 clips, r = 0 . 893 on the 200-clip common subset), confirming that the additional annotation task does not degrade scalar quality judgments. A multiple linear regression of MOS on all ten token pro- portions yields R 2 = 0 . 644 , indicating that the tokens jointly explain 64.4% of the variance in overall quality . Blur and low resolution have the largest negati ve coefficients ( β ≈ − 1 . 0 ), followed by noise ( β = − 0 . 87 ) and motion ( β = − 0 . 80 ). The tokens represent largely independent perceptual dimen- sions (Kaiser–Meyer –Olkin measure = 0 . 475 , below the 0.50 threshold for factor analysis [ 33 ]), suggesting that they capture non-ov erlapping quality information rather than reflecting a smaller set of latent factors. Figure 1 shows the cumulativ e distribution function (CDF) of MOS v alues across all 847 published clips. More than 50% of clips receive a MOS belo w 3.5, indicating that a substantial fraction of consumer webcam feeds captured at their maximum T ABLE II: Perceptual quality tokens and their selection rates across the 847 published clips. A token is considered selected when ≥ 2 assessors independently chose it. T oken Description Sel. (%) Noisy Random pixel-lev el luminance/chrominance fluctuations (sensor noise) 69.8 Low resolution Insufficient spatial detail; ov erall poor definition 61.3 Lighting/color Over -/under-exposure, direct glare, white balance deviation, color cast 36.6 No issue No perceptible quality degradation 29.2 Blurry Loss of edge sharpness; defocus or motion blur 13.6 Choppy motion Irregular temporal motion; stuttering or judder 6.0 Framerate Perceptibly low or abnormal frame rate 5.9 Banding V isible color banding or scan-line artifacts 5.8 Other Uncategorized quality issues 4.4 Blockiness Block-based compression artifacts (8 × 8 DCT) 0.0 Fig. 1: Cumulative distribution function of MOS values across the 847 published clips. supported resolution contain perceptible quality impairments, suggesting a practical need for video enhancement in this domain. D. Benchmarking Subset From the 847 published clips, we curate a benchmarking subset of 120 clips organized into three mutually exclusi ve groups of 40 clips each. Each clip is trimmed to 10 s to provide a duration suitable for subjective quality testing. The three groups serve distinct ev aluation purposes: • T alking Head (TH): Original clips without any post- processing, representing unmodified webcam-captured content. • T alking Head – Background Blur (TH-BB): Clips pro- cessed with a production video conferencing background blur pipeline, simulating a common real-time processing scenario. • T alking Head – Background Replacement (TH-BR): Clips processed with a production video conferencing background replacement pipeline using four popular vir- tual backgrounds, with one background randomly as- signed per clip. Figure 2 shows thumbnail atlases for clips from each of the three benchmarking groups. 1) Stratification strate gy .: The subset selection employs a stratified sampling algorithm to ensure that each group covers the full range of quality le vels, spatial–temporal complexity , and distortion types. Clips are assigned to one of 12 strata formed by the Cartesian product of three MOS bins (Low: [1 . 0 , 2 . 8) , Medium: [2 . 8 , 4 . 0) , High: [4 . 0 , 5 . 0] ) and four SI × TI quadrants (split at the population medians of SI and TI, computed per ITU-T P .910 [ 23 ]). The target MOS distribution follows a 25%/50%/25% (Low/Medium/High) ratio, mirroring the population distribution which is skewed tow ard medium quality . A greedy scoring heuristic selects clips iterati vely , balancing fiv e objectiv es through a weighted composite score: (1) stra- tum quota fulfillment, (2) cov erage of rare perceptual quality tokens (each of the 10 tokens should appear ≥ 2 times per group), (3) feature-space div ersity in normalized MOS/SI/TI coordinates, (4) participant uniqueness within and across groups (no participant appears more than once per group), and (5) a soft preference for manually curated clips that exhibit suf ficient structural detail to challenge video processing pipelines. Groups are built sequentially with strict mutual exclusi vity . The resulting 120 clips represent an approximately 14% sampling rate from the 847 eligible recordings. Figure 3 shows the distribution of clips in each group across the SI–TI space, color-coded by quality category . The spread across all four quadrants confirms that the stratification achiev es the intended co verage of spatial–temporal complexity . T able III compares the token selection rates across the three benchmarking groups. The similar distributions confirm that the stratification preserves distortion-type balance across groups. I I I . A N A L Y S I S The full dataset of 847 clips (each 15 s) can serve as training data for data-dri ven models, while the stratified benchmarking subset of 120 clips (each trimmed to 10 s) is designed for systematic ev aluation. W e demonstrate the latter use case through codec compression efficienc y analysis. W e ev aluate four encoders: H.264 [ 24 ] (baseline, Intel Quick Sync V ideo, hardware-accelerated), H.265 [ 25 ] (Intel Quick Sync V ideo, hardware-accelerated), VV enC H.266 [ 26 ] (software), and libaom A V1 [ 27 ] (software, version 3.13.1). All clips are (a) TH (b) TH-BB (c) TH-BR Fig. 2: Thumbnail atlases of the three benchmarking groups. (a) T alking Head, (b) T alking Head - Background Blur , (c) T alking Head - Background Replacement (a) TH (b) TH-BB (c) TH-BR Fig. 3: Distribution of clips in the SI–TI space for each benchmarking group, color-coded by MOS category (green: High, blue: Medium, red: Low). Dashed lines indicate population medians. T ABLE III: Problem token selection rates (% of clips) per benchmarking group. A token is selected when ≥ 2 assessors chose it. T oken TH TH-BB TH-BR Noise 62 70 55 Low resolution 45 50 50 Lighting/color 45 42 42 No issue 22 28 40 Blur 15 10 10 Choppy motion 5 2 2 Framerate 2 5 8 Banding 5 8 8 Other 0 5 5 Blockiness 0 0 0 encoded in a low-delay configuration at multiple fixed quan- tization parameter (QP) levels (QP = 20–42 for H.264/H.265; adjusted ranges for A V1 and H.266), following the method- ology of Naderi et al . [ 2 ]; exact encoder settings are listed in the supplementary material. Bjontegaard delta rate (BD- rate) [ 34 ] is computed per clip relati ve to the H.264 baseline using Peak Signal-to-Noise Ratio (PSNR) and V ideo Multi- Method Assessment Fusion (VMAF) [ 35 ] as quality metrics. A. Comparison with Other Datasets W e compare BD-rate distributions across fi ve datasets: the HEVC common test sequences [ 4 ], UVG [ 6 ], VCD [ 2 ] (desktop subset), the proposed Near-Ra w T alking Head (NR- TH) benchmarking subset, and NR-TH after VP8 pre-encoding (NR-TH + VP8) which simulates W ebR TC capture conditions as in VCD. T able IV reports the mean BD-rate (%) for each dataset–encoder combination under both quality metrics. Figure 4 shows the rate–distortion curves for the NR- TH benchmarking subset av eraged across clips, with 95% confidence bands. H.266 and A V1 consistently outperform H.264 and H.265 across the entire bitrate range under both metrics, with H.266 achie ving the lar gest gains at low bitrates. A two-way mixed analysis of variance (ANO V A) with encoder (within-subject) and dataset (between-subject) on VMAF BD-rate ( N = 255 clips with complete BD-rate estimates across all encoders) reveals a significant main effect of encoder, F (2 , 502) = 937 . 10 , p < . 001 , η 2 p = . 789 3 , confirming that codec generation is the dominant factor in compression efficienc y . The main ef fect of dataset is also 3 Greenhouse–Geisser-corrected p -values are reported where sphericity was violated T ABLE IV: Mean BD-rate (%) relativ e to H.264 baseline. 95% confidence intervals are shown in parentheses. More negati ve values indicate greater bitrate savings. NR-TH + VP8 denotes NR-TH content pre-encoded with VP8 to simulate W ebR TC capture conditions. N VMAF BD-Rate (%) PSNR BD-Rate (%) Dataset Clips H.265 A V1 H.266 H.265 A V1 H.266 HEVC [ 4 ] 25 − 34 . 9 (3.0) − 32 . 6 (4.1) − 65 . 0 (3.9) − 34 . 0 (3.0) − 36 . 1 (5.1) − 64 . 5 (4.2) UVG [ 6 ] 16 − 34 . 5 (5.2) − 40 . 0 (9.9) − 70 . 4 (7.5) − 42 . 5 (6.1) − 48 . 2 (9.4) − 72 . 6 (7.5) VCD [ 2 ] 120 − 27 . 9 (2.3) − 36 . 3 (1.7) − 63 . 7 (1.8) − 32 . 1 (1.4) − 40 . 0 (1.8) − 62 . 5 (1.6) NR-TH (ours) 120 − 25 . 9 (2.7) − 42 . 2 (2.7) − 71 . 3 (1.7) − 36 . 0 (1.9) − 52 . 8 (2.4) − 70 . 2 (1.5) NR-TH + VP8 120 − 25 . 6 (2.1) − 35 . 1 (1.4) − 62 . 9 (1.9) − 35 . 0 (1.3) − 38 . 0 (1.3) − 61 . 4 (1.5) (a) NR-TH, PSNR (b) NR-TH, VMAF (c) VCD, VMAF (d) HEVC, VMAF Fig. 4: Rate–distortion curves for the NR-TH benchmarking subset ( a – b ) and VMAF rate–distortion curves for VCD ( c ) and HEVC ( d ) datasets. Shaded bands indicate 95% confidence intervals. significant, F (3 , 251) = 2 . 75 , p = . 044 , η 2 p = . 032 . A significant encoder × dataset interaction, F (6 , 502) = 10 . 59 , p < . 001 , η 2 p = . 112 , indicates that the relati ve adv antage of encoders depends on the content. PSNR-based results confirm these patterns with a larger dataset effect ( F (3 , 251) = 16 . 80 , p < . 001 , η 2 p = . 167 ). As shown in T able IV , the NR-TH subset yields larger BD- rate savings for software codecs (A V1 and H.266) than VCD, consistent with the significant encoder × dataset interaction. The PSNR and VMAF metrics agree for A V1 and H.266 but div erge for H.265, where the VMAF BD-rate on NR- TH shows reduced savings compared to VCD while the PSNR BD-rate is comparable, suggesting that the choice of quality metric affects the measured compression advantage of hardware H.265 on webcam content. B. Effect of Backgr ound Pr ocessing Having established that content type influences codec ef fi- ciency across datasets, we next examine whether background processing within the NR-TH subset produces similar effects. W e refer to the three benchmarking groups (TH, TH-BB, TH- BR) as content conditions in the statistical analyses that follo w . A two-way mixed ANO V A with encoder (within-subject) and content condition (between-subject) on VMAF BD-rate rev eals significant main effects of both encoder ( F (2 , 202) = 344 . 74 , p < . 001 , η 2 p = . 773 ) and content condition ( F (2 , 101) = 29 . 64 , p < . 001 , η 2 p = . 370 ), as well as a significant interaction ( F (4 , 202) = 8 . 84 , p < . 001 , η 2 p = . 149 ). The content condition ef fect is larger under VMAF ( η 2 p = . 370 ) than under PSNR ( η 2 p = . 185 ), indicating that background processing has a stronger influence on perceptual-quality-based compression efficienc y . Background replacement (TH-BR) yields the largest BD- rate savings for A V1 ( − 58 . 9% ) and H.266 ( − 77 . 1% ), com- pared to − 30 . 0% and − 71 . 6% on original content (TH), respectiv ely . H.265 shows a narro wer range across conditions ( − 24 . 3% to − 29 . 5% ) than A V1, though wider than H.266. The PSNR-based interaction is notably larger ( η 2 p = . 479 ), with A V1 on TH-BR reaching − 66 . 5% compared to − 45 . 4% on TH. These results indicate that the simplified backgrounds in TH-BR content are more ef ficiently exploited by modern software codecs, while hardware H.265 does not benefit to the same extent. Per-condition BD-rate statistics are provided in the supplementary material. C. Effect of P er ceptual Distortions W e in vestigate whether codec compression efficienc y is af- fected by the presence of perceptual distortions captured by the quality tokens (Section II-C ). F or each of the eight tokens with sufficient v ariance (excluding blockiness and choppy motion), a linear mixed-effects model (LMM) is fitted with BD-rate as the response, encoder , content condition, and the proportion of assessors selecting the token as fixed effects (including all interactions), and source clip as a random intercept. Under PSNR, no token shows a statistically significant ef fect on BD-rate ( p > . 05 for all terms), suggesting that PSNR- based compression efficiency is not substantially associated with the perceptual distortions captured by the tokens. Under VMAF , the noise token shows a significant encoder- specific effect. For H.265, higher noise prev alence is associ- ated with worse ( i.e ., less ne gative) VMAF BD-rate on original content (coefficient = +40 . 4 , p < . 001 ), meaning that the rate–distortion advantage of H.265 over H.264 diminishes on noisy clips. This penalty is attenuated in TH-BB ( p = . 006 ) and TH-BR ( p = . 020 ), suggesting that background processing partially mitigates the noise-related efficiency loss—which is expected, as background blur and replacement affect the majority of the picture area and thereby reduce the spatial noise that the encoder must code. Neither A V1 nor H.266 shows significant sensitivity to the noise token, indicating that these codecs maintain a stable performance ratio relativ e to H.264 across noise lev els—that is, both the test codec and the baseline appear similarly affected by noise, preserving their relativ e efficienc y gap. The no-issue token also shows a significant effect for H.265: clips perceived as artifact-free yield better VMAF BD-rate (coefficient = − 35 . 9 , p = . 002 ). These findings indicate that perceptual distortions, particu- larly noise, can influence codec ev aluation outcomes in metric- and encoder-dependent ways, highlighting the importance of including realistic source-lev el distortions in benchmarking content. D. Effect of Lossy Captur e Compression Beyond source-le vel distortions, the recording pipeline itself can alter codec ev aluation outcomes. T o quantify this effect, we simulate a W ebR TC-style capture pipeline by pre-encoding the NR-TH benchmarking clips with VP8 at a constant bitrate of 2500 kbps using the real-time preset (see supplementary material) and then repeating the codec efficienc y ev aluation on the decoded output. This setup approximates the record- ing conditions of the VCD dataset [ 2 ], which was captured through a W ebR TC-based application that uses VP8 or H.264 encoding [ 36 ]. A two-way repeated measures ANO V A ( N = 117 clips) rev eals a significant main effect of VP8 pre-processing on VMAF BD-rate ( F (1 , 116) = 113 . 10 , p < . 001 , η 2 p = . 494 ) and a significant VP8 × encoder interaction ( F (2 , 232) = 27 . 42 , p < . 001 , η 2 p = . 191 ) 4 , indicating that the degra- dation is encoder-dependent. Post-hoc paired comparisons (Bonferroni-corrected) sho w that VP8 pre-processing signif- icantly increases VMAF BD-rate for A V1 ( +7 . 1 pp, d = 0 . 60 , p < . 001 ) and H.266 ( +7 . 7 pp, d = 1 . 36 , p < . 001 ), while H.265 remains unaffected ( +0 . 3 pp, p Bonf = 1 . 00 ). The ov erall VMAF BD-rate worsens by 5.0 pp ( d = 0 . 98 ); PSNR- based results confirm the same pattern with larger effect sizes ( η 2 p = . 760 for the main effect; ov erall +8 . 0 pp, d = 1 . 77 ). As shown in T able IV , the NR-TH + VP8 BD-rates closely resemble those of VCD, consistent with the fact that VCD was recorded through a W ebR TC pipeline. The encoder efficienc y ranking (H.266, A V1, H.265, from most to least efficient) is preserved regardless of VP8 pre-processing. These results indicate that lossy capture compression reduces the content- dependent signal variation that advanced codecs exploit, di- minishing their measured advantage ov er simpler encoders. 4 Greenhouse–Geisser-corrected p -values are reported where Mauchly’ s test indicated sphericity violations. Lossless references are therefore important for accurately char - acterizing the efficienc y gains of modern codecs on webcam content. I V . C O N C L U S I O N W e presented a near-raw talking-head webcam video dataset comprising 847 losslessly encoded recordings (approx- imately 212 minutes) from 805 participants captured with 446 unique consumer webcam configurations. The dataset preserves camera-nativ e signal fidelity through a custom cap- ture pipeline that encodes all frames with the FFV1 lossless codec, eliminating the double-compression artifacts present in conv entional webcam recording workflows. Each record- ing is annotated with a MOS representing ov erall quality and ten perceptual quality tokens derived through a three- phase crowdsourced annotation process. W e introduced a stratified benchmarking subset of 120 clips in three content conditions (TH, TH-BB, TH-BR) and demonstrated its utility through codec compression efficienc y analysis. The significant encoder × dataset interaction ( η 2 p = . 112 under VMAF) showed that codec rankings shift across content types: for example, the VCD dataset, whose references contain lossy post-processing compression artifacts, yields dif ferent relativ e encoder gains than NR-TH, suggesting that lossless references may better isolate codec-induced distortions from capture-pipeline arti- facts. Standard test sequences (HEVC [ 4 ], UVG [ 6 ]) are professionally captured and do not reflect the noise, lo w- resolution, and lighting characteristics of consumer webcam content. The encoder × content condition interaction ( η 2 p = . 149 under VMAF) further indicated that background process- ing alters the rate–distortion landscape, with modern software codecs benefiting more from simplified backgrounds than hardware H.265. The perceptual distortion analysis showed that source-le vel noise selecti vely degrades the compression advantage of hardware H.265 under VMAF ev aluation, while A V1 and H.266 maintained stable relativ e performance across distortion lev els. Simulating W ebR TC-style capture by pre- encoding with VP8 confirmed that lossy recording substan- tially reduces the measured BD-rate adv antage of A V1 and H.266, with the resulting BD-rates aligning closely with VCD—corroborating that lossless references are important for isolating codec performance from capture-pipeline artifacts. Beyond codec ev aluation, the dataset supports tasks such as video quality assessment, real-video super-resolution, restora- tion, and denoising, where the aim is to extend ecological validity . The finding that more than 50% of clips ha ve MOS below 3.5 further indicates a practical need for enhancement models to improv e the perceptual quality of consumer webcam feeds. The full dataset, benchmarking subset, MOS ratings, and webcam camera catalog will be released under an open- source license upon acceptance. R E F E R E N C E S [1] J. Sko wronek, A. Raake, G. H. Berndtsson, O. S. Rummukainen, P . Usai, S. N. B. Gunkel, M. Johanson, E. A. P . Habets, L. Malfait, D. Lindero, and A. T oet, “Quality of experience in telemeetings and videoconferencing: A comprehensive survey , ” IEEE Access , vol. 10, pp. 63 885–63 931, 2022. [2] B. Naderi, R. Cutler, N. S. Khongbantabam, Y . Hosseinkashi, H. T urbell, A. Sadovniko v , and Q. Zou, “VCD: A V ideo Conferencing Dataset for V ideo Compression, ” in ICASSP 2024-2024 IEEE International Confer ence on Acoustics, Speech and Signal Processing (ICASSP) . IEEE, 2024, pp. 3970–3974. [3] L. Xie, X. W ang, H. Zhang, C. Dong, and Y . Shan, “VFHQ: A high-quality dataset and benchmark for video face super-resolution, ” in IEEE/CVF Conference on Computer V ision and P attern Recognition W orkshops (CVPR W) , 2022, pp. 657–666. [4] F . Bossen, “Common test conditions and software reference configura- tions, ” JCTVC-L1100 , v ol. 12, no. 7, 2013. [5] F . Bossen, J. Boyce, X. Li, V . Seregin, and K. Suhring, “JVET common test conditions and software reference configurations for SDR video, ” Joint V ideo Experts T eam (JVET) of ITU-T SG } , vol. 16, pp. 19–27, 2019. [6] A. Mercat, M. V iitanen, and J. V anne, “UVG dataset: 50/120fps 4K sequences for video codec analysis and development, ” in Pr oceedings of the 11th ACM Multimedia Systems Conference . Istanbul Turke y: A CM, May 2020, pp. 297–302. [Online]. A vailable: https://dl.acm.org/doi/10.1145/3339825.3394937 [7] H. W ang, W . Gan, S. Hu, J. Y . Lin, L. Jin, L. Song, P . W ang, I. Katsav ounidis, A. Aaron, and C.-C. J. Kuo, “MCL-JCV: A JND- based H.264/A VC video quality assessment dataset, ” in 2016 IEEE International Conference on Image Pr ocessing (ICIP) , Sep. 2016, pp. 1509–1513, iSSN: 2381-8549. [8] A. Nagrani, J. S. Chung, and A. Zisserman, “V oxCeleb: a large-scale speaker identification dataset, ” arXiv:1706.08612 [cs] , Jun. 2017, arXiv: 1706.08612. [Online]. A vailable: http://arxiv .org/abs/1706.08612 [9] J. S. Chung, A. Nagrani, and A. Zisserman, “V oxCeleb2: Deep Speaker Recognition, ” in Interspeech 2018 . ISCA, Sep. 2018, pp. 1086–1090. [Online]. A v ailable: http://www .isca- speech.org/archi ve/ Interspeech 2018/abstracts/1929.html [10] Z. Zhang, L. Li, Y . Ding, and C. Fan, “Flo w-guided one-shot talking face generation with a high-resolution audio-visual dataset, ” in IEEE/CVF Confer ence on Computer V ision and P attern Recognition (CVPR) , 2021, pp. 3661–3670. [11] H. Zhu, W . W u, W . Zhu, L. Jiang, S. T ang, L. Zhang, Z. Liu, and C. C. Loy , “CelebV -HQ: A large-scale video facial attributes dataset, ” in European Confer ence on Computer V ision (ECCV) , 2022, pp. 650– 667. [12] D. Di, H. Feng, W . Sun, Y . Ma, H. Li, W . Chen, L. Fan, T . Su, and X. Y ang, “DH-FaceV id-1K: A large-scale high-quality dataset for face video generation, ” 2024. [13] K. W ang, Q. W u, L. Song, Z. Y ang, W . W u, C. Qian, R. He, Y . Qiao, and C. C. Loy , “MEAD: A large-scale audio-visual dataset for emotional talking-face generation, ” in European Conference on Computer V ision (ECCV) , 2020, pp. 700–717. [14] A. R ¨ ossler , D. Cozzolino, L. V erdoliva, C. Riess, J. Thies, and M. Nießner, “FaceForensics++: Learning to detect manipulated facial images, ” in IEEE/CVF International Confer ence on Computer V ision (ICCV) , 2019, pp. 1–11. [15] S. Nah, R. Timofte, S. Gu, S. Baik, S. Hong, G. Moon, S. Son, and K. Mu Lee, “NTIRE 2019 Challenge on video super -resolution: Methods and results, ” in Pr oceedings of the IEEE/CVF Confer ence on Computer V ision and P attern Reco gnition W orkshops , 2019, pp. 0–0. [16] X. W ang, K. C. K. Chan, K. Y u, C. Dong, and C. C. Loy , “EDVR: V ideo restoration with enhanced deformable con volutional networks, ” in IEEE/CVF Conference on Computer V ision and P attern Recognition W orkshops (CVPR W) , 2019, pp. 1954–1963. [17] K. C. Chan, X. W ang, K. Y u, C. Dong, and C. C. Loy , “Basicvsr: The search for essential components in video super -resolution and beyond, ” in Proceedings of the IEEE/CVF confer ence on computer vision and pattern r ecognition , 2021, pp. 4947–4956. [18] K. C. Chan, S. Zhou, X. Xu, and C. C. Loy , “Basicvsr++: Improving video super-resolution with enhanced propagation and alignment, ” in Pr oceedings of the IEEE/CVF confer ence on computer vision and pattern r ecognition , 2022, pp. 5972–5981. [19] X. Y ang, W . Xiang, H. Zeng, and L. Zhang, “Real-world video super - resolution: A benchmark dataset and a decomposition based learning scheme, ” in IEEE/CVF International Confer ence on Computer V ision (ICCV) , 2021, pp. 4781–4790. [20] K. C. Chan, S. Zhou, X. Xu, and C. C. Loy , “Inv estigating tradeoffs in real-world video super-resolution, ” in Pr oceedings of the IEEE/CVF Confer ence on Computer V ision and P attern Recognition , 2022, pp. 5962–5971. [21] B. Chen, J. W ang, Y . W ang, Y . Y e, S. W ang, and Y . Li, “Generati ve face video coding techniques and standardization efforts: A re view , ” 2024. [22] V . Siv araman, S. Fouladi, S. Bhatt, S. Puffer , and K. Winstein, “Gemino: Practical and robust neural compression for video conferencing, ” in USENIX Symposium on Networked Systems Design and Implementation (NSDI) , 2024. [23] { ITU-T Recommendation P .910 } , Subjective video quality assessment methods for multimedia applications . International T elecommunication Union, Gene va, Switzerland, 2023. [24] T . Wie gand, G. J. Sulliv an, G. Bjøntegaard, and A. Luthra, “Overview of the H.264 / A VC V ideo Coding Standard, ” IEEE Tr ansactions On Cir cuits And Systems F or V ideo T echnology , p. 19, 2003. [25] G. J. Sulliv an, J.-R. Ohm, W .-J. Han, and T . Wie gand, “Overview of the High Efficiency V ideo Coding (HEVC) Standard, ” IEEE T ransactions on Circuits and Systems for V ideo T echnology , vol. 22, no. 12, pp. 1649– 1668, Dec. 2012, conference Name: IEEE T ransactions on Circuits and Systems for V ideo T echnology . [26] B. Bross, Y .-K. W ang, Y . Y e, S. Liu, J. Chen, G. J. Sulliv an, and J.- R. Ohm, “Overvie w of the V ersatile V ideo Coding (VVC) Standard and its Applications, ” IEEE T ransactions on Cir cuits and Systems for V ideo T echnology , vol. 31, no. 10, pp. 3736–3764, Oct. 2021, conference Name: IEEE Transactions on Circuits and Systems for V ideo T echnology . [27] J. Han, B. Li, D. Mukherjee, C.-H. Chiang, A. Grange, C. Chen, H. Su, S. Park er, S. Deng, U. Joshi, Y . Chen, Y . W ang, P . W ilkins, Y . Xu, and J. Bankoski, “ A T echnical Overview of A V1, ” Proceedings of the IEEE , vol. 109, no. 9, pp. 1435–1462, Sep. 2021, conference Name: Proceedings of the IEEE. [28] M. Niedermayer , D. Rice, and J. Martinez, “FFV1 video coding format versions 0, 1, and 3, ” IETF RFC 9043, 2022. [29] B. Naderi and R. Cutler, “ A crowdsourcing approach to video quality assessment, ” in ICASSP , 2024. [30] S. Egger-Lampl, J. Redi, T . Hoßfeld, M. Hirth, S. M ¨ oller , B. Naderi, C. Keimel, and D. Saupe, “Crowdsourcing Quality of Experience Ex- periments, ” in Quality of Experience: Advanced Concepts, Applications and Methods . Springer , 2014, pp. 154–190. [31] F . Ribeiro, D. Flor ˆ encio, C. Zhang, and M. Seltzer, “CRO WDMOS: An approach for crowdsourcing mean opinion score studies, ” in 2011 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP) , May 2011, pp. 2416–2419, iSSN: 2379-190X. [32] J. Clark, “The Ishihara test for color blindness. ” American Journal of Physiological Optics , 1924. [33] H. F . Kaiser , “ An index of factorial simplicity , ” Psyc hometrika , vol. 39, no. 1, pp. 31–36, 1974. [34] G. Bjontegaard, “Calculation of average PSNR differences between RD-curves, ” ITU-T V ideo Coding Experts Group (VCEG), Document VCEG-M33, 2001. [35] Z. Li, C. Bampis, J. Novak, A. Aaron, K. Swanson, A. Moorthy , and J. De Cock, “VMAF: The Journey Continues, ” T ech. Rep., 2018. [Online]. A vailable: https://netflixtechblog.com/ vmaf- the- journey- continues- 44b51ee9ed12 [36] H. T . Alvestrand, “W ebRTC video processing and codec requirements, ” RFC 7742, Internet Engineering T ask Force, 2016. A P P E N D I X A D A TA C O L L E C T I O N D E T A I L S This section provides additional technical details on the recording pipeline summarized in Sec. 2.1. a) Pixel format selection.: Consumer webcams expose multiple output formats over the USB V ideo Class (UVC) protocol, and the choice of format determines signal fi- delity before an y software processing occurs. The recording application implements a hierarchical pixel format priority: (1) YUYV422 (uncompressed 4:2:2 chroma subsampling, 16 bits/pixel), (2) NV12 (uncompressed 4:2:0, 12 bits/pixel), and (3) MJPEG (Motion JPEG, lossy intra-frame compression) as a fallback. Uncompressed formats preserve the camera’ s decoded sensor signal without additional quantization, whereas MJPEG introduces DCT -based compression artifacts within the camera firmware before the signal reaches the host. For YUYV422 and NV12 inputs, the software performs only a lossless memory layout conv ersion (packed to planar format) before encoding. For MJPEG inputs, the software decodes the JPEG stream and preserves the detected color range (full or limited) through metadata tagging; these clips are losslessly stored after camera compression, meaning their quality ceiling is bounded by the MJPEG encoding applied in camera firmware. b) Lossless encoding.: All recordings are encoded with the FFV1 lossless video codec (Level 3) in a Matroska (.mkv) container . FFV1 uses context-adapti ve arithmetic coding to achiev e lossless intra-frame compression, reducing file size relativ e to uncompressed storage while guaranteeing bit-exact reconstruction of the input signal. W e configure FFV1 Level 3 encoding with 16 slices per frame and per-slice CRC-32 integrity checks, allo wing detection of bit corruption during storage and transmission. The codec guarantees bit-exact re- construction: decoded pixel values are identical to the values provided to the encoder . c) F rame timing and b uffering.: The recording applica- tion operates in passthrough frame timing mode, which pre- serves the original frame timestamps from the camera without interpolation, frame dropping, or duplicate frame insertion. A 256 MB ring buf fer is allocated to absorb I/O latency spikes and prev ent frame drops during disk writes. d) Signal fidelity .: Before data reaches the recording soft- ware, the firmware of consumer UVC webcams typically ap- plies demosaicing, white balance, gamma correction (sRGB), noise reduction, and auto-exposure adjustments. For the web- cams used in this dataset, these processing steps are applied in camera firmware and are not bypassable through the standard UVC capture interface. The recording pipeline adds no further lossy processing: YUYV422 and NV12 inputs undergo only lossless format con version, and all frames are encoded with the mathematically lossless FFV1 codec. This approach avoids adding a second lossy compression stage after camera output and therefore pre vents the double-compression artifacts ( i.e . camera compression followed by capture-software compres- sion) present in con ventional webcam recording workflows. A P P E N D I X B F U L L D A TA S E T S T AT I S T IC S T able V provides summary dataset statistics, including per- scenario clip counts, resolution and pixel format distributions, and per-recording self-reported demographics. A P P E N D I X C P E R - G RO U P B D - R ATE S TA T I S T I C S This section reports the mean BD-rate (%) for each encoder–group combination in the NR-THD benchmarking T ABLE V: Full statistics of the published dataset. Eight participants withdrew consent for demographic disclosure only (not for data release); their recordings remain in the published dataset but are excluded from demographic counts. Property V alue Published clips 847 Unique participants 805 Unique camera models 446 Duration per clip 15 s Codec FFV1 (lossless) Container Matroska (.mkv) Resolution distrib ution 1280 × 720 (720p) 514 (60.7%) 1920 × 1080 (1080p) 281 (33.2%) 2560 × 1440 (1440p) 43 (5.1%) 3840 × 2160 (4K) 8 (0.9%) 1920 × 1440 1 (0.1%) Input pixel format MJPEG (camera-compressed) 640 (75.6%) YUYV422 (uncompressed) 206 (24.3%) NV12 (uncompressed) 1 (0.1%) Recor ding scenarios S01: Continuous slow body mov ement 315 (37.2%) S02: Hand counting ex ercise 157 (18.5%) S03: T ext reading exercise 303 (35.8%) S04: Natural video call behavior 72 (8.5%) P er-r ecording self-reported demographics † Female / Male / Prefer not to say 310 / 528 / 1 Not disclosed 8 White / Black / Asian / Mixed / Other 571 / 145 / 64 / 48 / 11 Not disclosed 8 MOS range (ACR, 5-point) 1.00–5.00 (mean 3.35) † Demographics are reported per recording (847 clips from 805 participants; 42 participants contrib uted two recordings each). T ABLE VI: Mean BD-rate (%) relative to H.264 baseline per benchmarking group, with 95% t -based confidence intervals computed over clips within each group. VMAF and PSNR metrics are reported separately . Metric Group H.265 A V1 H.266 VMAF TH − 24 . 3 ± 5 . 0 − 30 . 0 ± 3 . 2 − 71 . 6 ± 2 . 4 TH-BB − 24 . 0 ± 4 . 5 − 37 . 9 ± 1 . 6 − 65 . 2 ± 3 . 3 TH-BR − 29 . 5 ± 4 . 6 − 58 . 9 ± 3 . 1 − 77 . 1 ± 2 . 0 PSNR TH − 39 . 4 ± 3 . 4 − 45 . 4 ± 3 . 2 − 69 . 5 ± 2 . 9 TH-BB − 39 . 1 ± 2 . 3 − 46 . 3 ± 2 . 2 − 66 . 5 ± 2 . 4 TH-BR − 29 . 4 ± 3 . 2 − 66 . 5 ± 2 . 7 − 74 . 6 ± 1 . 6 subset: original talking-head (TH), talking-head with back- ground blur (TH-BB), and talking-head with background re- placement (TH-BR). V alues in T able VI are computed relati ve to the H.264 baseline. Figure 5 shows the rate–distortion curves for each bench- marking group, plotting mean PSNR and VMAF against bits per pixel (bpp) across all four codecs. A P P E N D I X D E N C O D I N G C O N FI G U R A T I O N T able VII lists the exact command lines used in the codec benchmarking experiments. All encoders are configured for (a) TH, PSNR (b) TH-BB, PSNR (c) TH-BR, PSNR (d) TH, VMAF (e) TH-BB, VMAF (f) TH-BR, VMAF Fig. 5: Rate–distortion curves per benchmarking group. T op row: PSNR vs. bpp; bottom ro w: VMAF vs. bpp. Each curv e shows the mean metric value across clips in the group. low-delay operation (no B-frames, no picture reordering) with a single encoding pass and single-threaded ex ecution to ensure deterministic output. Compression is controlled via fix ed quan- tization parameter (QP) sweeps; no rate-control mode is used. The resolution and frame rate flags in the H.266 command are set per clip; the values shown are representativ e. Hardware-accelerated H.264 and H.265 encoding was per- formed on an Intel Core i7-13800H (14 cores) with Intel Iris Xe Graphics (PCI de vice ID 8086:A7A0), running Win- dows 11 Enterprise 25H2, Intel graphics driv er 32.0.101.673, and FFmpeg build 2026-02-18-git-52b676bb2. A V1 encoding used libaom (A OMedia Project A V1 Encoder) version 3.13.1; the --i422 or --i420 flag was selected depending on the input pix el format. H.266 encoding used VV enC version 1.14.0 (64-bit, SIMD=A VX2). All inputs were conv erted to YUV 4:2:0 pixel format before H.266 encoding; objecti ve metrics were computed on the same format. VP8 pre-encoding was used to simulate W ebR TC-style capture conditions [ 36 ]. The NR-TH benchmarking clips were encoded with VP8 in constant bitrate (CBR) mode at 2500 kbps, a representativ e target for HD video calls. The VP8-encoded output was decoded back to raw YUV using FFmpeg’ s default VP8 decoder and then used as input to the codec benchmarking pipeline abov e. T ABLE VII: Encoder configurations used in the codec benchmarking experiments. Encoder Command H.264 ffmpeg -init_hw_device qsv=hw -filter_hw_device hw (Intel QSV) -i { input } -c:v h264_qsv -load_plugin h264_hw -scenario 2 -p_strategy 0 -b_strategy 0 -threads 1 -sc_threshold 0 -preset fast -bf 0 -look_ahead 0 -g 6000 -i_qfactor 1.0 -i_qoffset 0 -b_qfactor 1.0 -b_qoffset 0 -refs 1 -low_power 1 -q { qp } { output } H.265 ffmpeg -init_hw_device qsv=hw -filter_hw_device hw (Intel QSV) -i { input } -c:v hevc_qsv -load_plugin hevc_hw -scenario 2 -p_strategy 0 -b_strategy 0 -threads 1 -sc_threshold 0 -preset fast -bf 0 -look_ahead 0 -g 6000 -i_qfactor 1.0 -i_qoffset 0 -b_qfactor 1.0 -b_qoffset 0 -refs 1 -low_power 1 -q { qp } { output } A V1 aomenc --codec=av1 --ivf --i420 --end-usage=q (libaom 3.13.1) --threads=1 --passes=1 --disable-kf --lag-in-frames=0 --cpu-used=8 --sb-size=64 --psnr --rt --enable-cdef=1 --tune-content=default --cq-level= { qp } -o { output } { input } H.266 vvencFFapp -c lowdelay_fast.cfg -c extra_vvenc.cfg (VV enC 1.14.0) --InputFile { input } -s { W } x { H } -fr { fps } -b { bitstream } -o { output } --QP { qp } --Threads 1 extra vvenc.cfg: InternalBitDepth:8 OutputBitDepth:8 PicReordering:0 NumPasses:-1 LookAhead:-1 VP8 ffmpeg -i { input } -map 0:v:0 -an -c:v libvpx (libvpx, pre-encode) -pix_fmt yuv420p -deadline realtime -cpu-used 5 -lag-in-frames 0 -error-resilient 1 -g 60 -keyint_min 60 -b:v 2500k -minrate 2500k -maxrate 2500k -bufsize 2500k { output }

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment