AwesomeLit: Towards Hypothesis Generation with Agent-Supported Literature Research

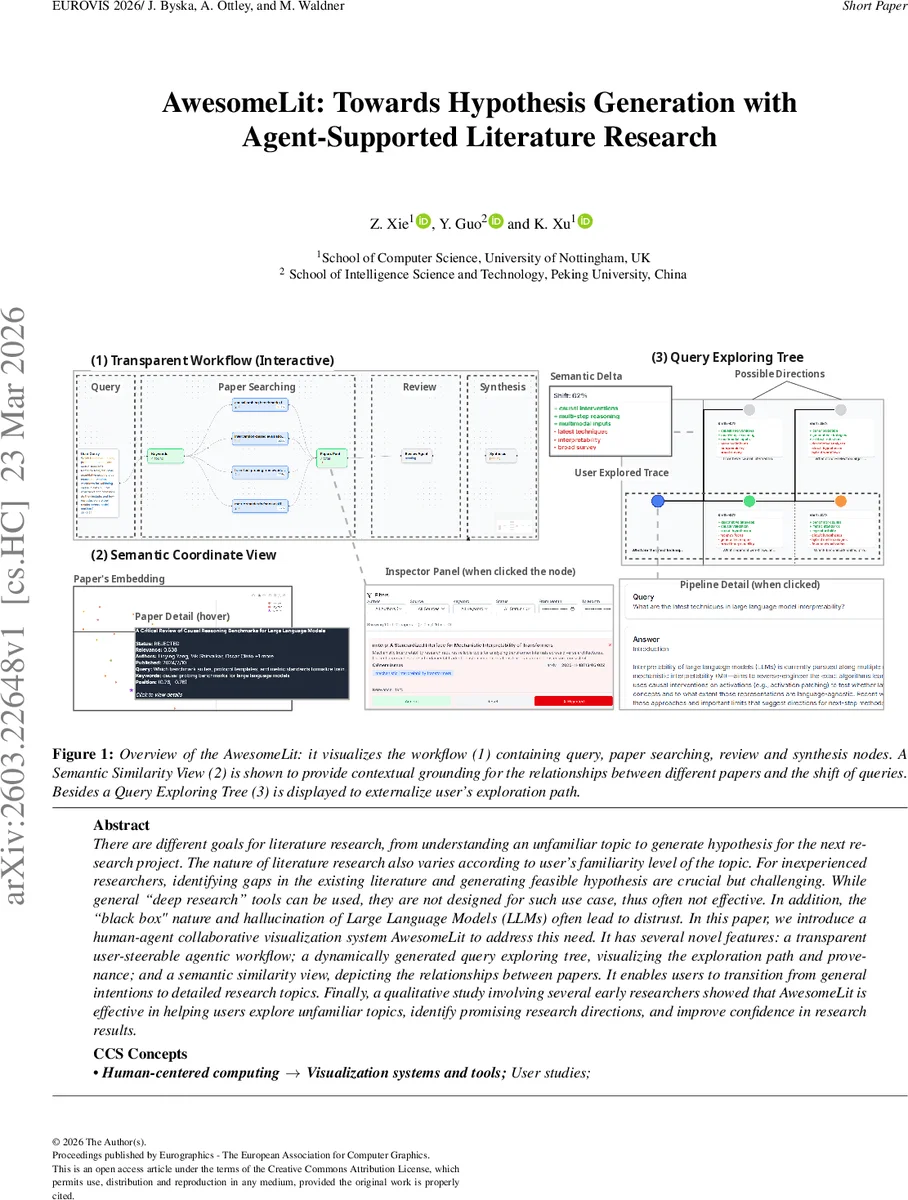

There are different goals for literature research, from understanding an unfamiliar topic to generate hypothesis for the next research project. The nature of literature research also varies according to user’s familiarity level of the topic. For inexperienced researchers, identifying gaps in the existing literature and generating feasible hypothesis are crucial but challenging. While general deep research'' tools can be used, they are not designed for such use case, thus often not effective. In addition, the black box" nature and hallucination of Large Language Models (LLMs) often lead to distrust. In this paper, we introduce a human-agent collaborative visualization system AwesomeLit to address this need. It has several novel features: a transparent user-steerable agentic workflow; a dynamically generated query exploring tree, visualizing the exploration path and provenance; and a semantic similarity view, depicting the relationships between papers. It enables users to transition from general intentions to detailed research topics. Finally, a qualitative study involving several early researchers showed that AwesomeLit is effective in helping users explore unfamiliar topics, identify promising research directions, and improve confidence in research results.

💡 Research Summary

AwesomeLit addresses a critical gap in literature research tools for novice researchers who lack deep domain knowledge and need to move from a vague research intent to concrete hypothesis generation. Existing “deep research” LLM services (e.g., OpenAI’s or Gemini’s) are largely black‑box, provide one‑shot answers, and do not expose intermediate reasoning, making them unsuitable for iterative, exploratory workflows. To remedy this, the authors designed a human‑agent collaborative visualization system that makes the LLM’s pipeline transparent, steerable, and visually grounded.

The system comprises three tightly integrated components. First, the Transparent Workflow visualizes the agent’s internal process as a directed node‑link diagram with three stages—Search, Review, and Synthesis. After each stage the pipeline automatically pauses, presenting the intermediate output in an Inspector Panel. Users can edit keywords, prune or rerun any node, and only after explicit confirmation does the next stage execute. This checkpoint mechanism demystifies the agent’s logic (addressing requirement R3), provides traceability to source papers (R2), and gives users fine‑grained control (R4).

Second, the Query Exploring Tree captures the natural evolution of research questions. Each node represents a possible research direction generated by the agent; edges are annotated with two quantitative metrics: semantic offset (percentage indicating how far the new query deviates from its parent) and semantic delta (a list of added or removed keywords). By visualizing these metrics, users can decide whether to broaden the search (high offset) or deepen it (low offset), thereby supporting the dynamic pivoting identified as D3. The tree also allows users to revisit earlier branches without losing context, facilitating non‑linear literature reviews.

Third, the Semantic Similarity View provides concrete evidence for the abstract branching decisions. Paper abstracts are embedded with OpenAI’s text‑embedding‑3‑small model, reduced to two dimensions via UMAP, and displayed as glyphs on a scatterplot. Glyph colors encode user feedback (green = accepted, red = rejected, blue = neutral). Hovering reveals a card with metadata (title, authors, year, URL). The view is linked to the Query Tree, so selecting a tree node highlights the corresponding set of papers, allowing users to assess cluster quality and filter out false positives—directly addressing D1.

Implementation uses GPT‑5‑mini for all LLM calls (chosen for a balance of reasoning power and latency) and the arXiv API as the primary paper source, though the architecture is modular enough to incorporate other databases. The front‑end is built with React and D3.js, enabling real‑time updates of node status, edge metrics, and the UMAP plot.

The authors evaluated AwesomeLit with a mixed‑methods study involving seven final‑year computer‑science students tasked with identifying a novel research direction in “Visualization for AI.” Each 90‑minute session consisted of free exploration, a focused hypothesis‑generation task, and a post‑session interview. Quantitative results (7‑point Likert) showed high satisfaction with transparency (M = 6.00), clear stage distinction (6/7 participants), and usefulness of the tree for understanding keyword expansion (5/7). Qualitative feedback highlighted the value of the checkpoint mechanism for verifying insights against source papers, while also noting that initial queries were sometimes overly broad (e.g., “XAI”), suggesting a need for better prompt scaffolding.

In discussion, the authors acknowledge limitations: reliance on a single data source (arXiv), lack of collaborative multi‑user features, and absence of automated hypothesis evaluation metrics. They propose future work to integrate additional scholarly APIs, support synchronous teamwork, and develop quantitative scoring of generated hypotheses.

Overall, AwesomeLit makes three substantive contributions: (1) a transparent, user‑steerable LLM workflow that builds trust and enables error correction; (2) a query‑exploration tree that visualizes the evolution of research questions with semantic offset/delta metrics; and (3) a semantic similarity view that grounds abstract decisions in concrete, interactive evidence. By combining these, the system empowers novice researchers to conduct systematic literature reviews, filter and verify papers efficiently, and iteratively refine their research focus—addressing the core challenges of trust, steerability, and evolutionary inquiry identified in the formative study.

Comments & Academic Discussion

Loading comments...

Leave a Comment