Wake Up to the Past: Using Memory to Model Fluid Wake Effects on Robots

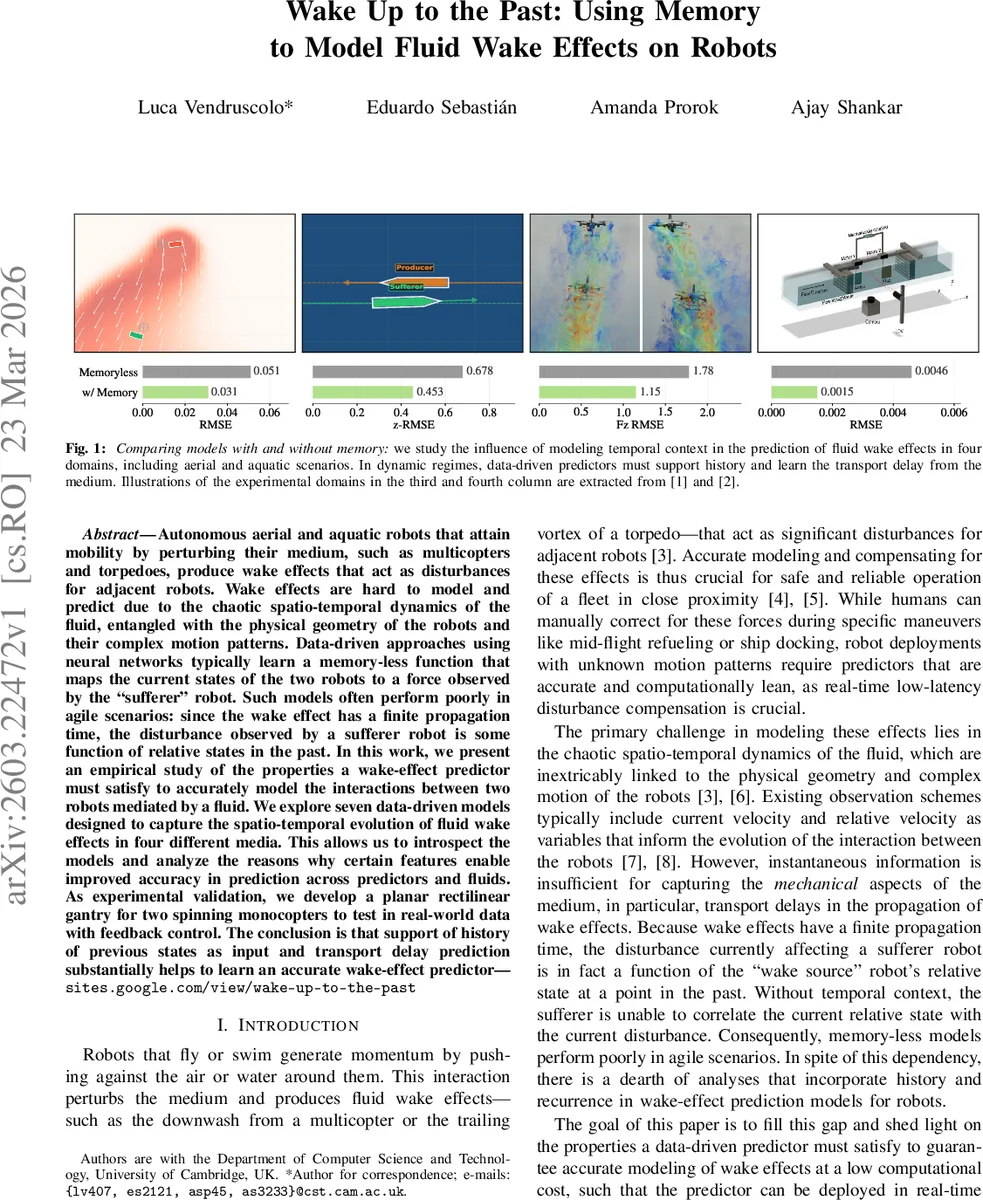

Autonomous aerial and aquatic robots that attain mobility by perturbing their medium, such as multicopters and torpedoes, produce wake effects that act as disturbances for adjacent robots. Wake effects are hard to model and predict due to the chaotic spatio-temporal dynamics of the fluid, entangled with the physical geometry of the robots and their complex motion patterns. Data-driven approaches using neural networks typically learn a memory-less function that maps the current states of the two robots to a force observed by the “sufferer” robot. Such models often perform poorly in agile scenarios: since the wake effect has a finite propagation time, the disturbance observed by a sufferer robot is some function of relative states in the past. In this work, we present an empirical study of the properties a wake-effect predictor must satisfy to accurately model the interactions between two robots mediated by a fluid. We explore seven data-driven models designed to capture the spatio-temporal evolution of fluid wake effects in four different media. This allows us to introspect the models and analyze the reasons why certain features enable improved accuracy in prediction across predictors and fluids. As experimental validation, we develop a planar rectilinear gantry for two spinning monocopters to test in real-world data with feedback control. The conclusion is that support of history of previous states as input and transport delay prediction substantially helps to learn an accurate wake-effect predictor.

💡 Research Summary

**

The paper addresses the challenge of predicting fluid‑mediated wake disturbances between two robots—a “wake source” and a “sufferer.” Traditional data‑driven approaches treat the problem as a memory‑less mapping from the instantaneous relative state (positions, velocities, thrusts) to the disturbance force observed by the sufferer. This assumption fails in agile aerial or aquatic scenarios because wakes propagate through the fluid at a finite speed, introducing a non‑negligible transport delay Δt. Consequently, the force experienced at time t + Δt depends on the source’s state at some earlier time t, and models that ignore temporal context produce large errors.

To investigate how temporal information should be incorporated, the authors design an empirical study involving seven neural‑network architectures across four distinct domains: (1) a high‑fidelity CFD dataset of two quadrotors (FlareDW), (2) a CFD ship‑to‑ship interaction dataset, (3) a numerical simulation of two fish swimming in line, and (4) a custom 2‑D downwash simulator used for closed‑loop control experiments. The seven models fall into three categories:

- Memoryless baseline (Agile MLP) – a simple feed‑forward MLP that uses only the current observation vector.

- Explicit‑History models – (a) History MLP concatenates a sliding window of past snapshots into a flattened input; (b) Delay Embedding learns a Gaussian attention over the past to select the most relevant delayed snapshot, explicitly forcing the network to rely on older data.

- Recurrent/Attention models – (a) GRU, (b) Temporal Convolutional Network (TCN) with causal dilated convolutions, (c) Mamba (a selective state‑space model), (d) Reservoir Computing with Echo State Networks (RC‑ESN), and (e) Cross‑Attention that queries the source’s past trajectory with multi‑head attention.

All models are kept lightweight (< 100 k trainable parameters) and hyper‑parameters are tuned per domain via Bayesian optimization (Optuna). Training uses a weighted mean‑squared error on the three force components, emphasizing horizontal forces.

Key Findings

- Across every domain, models that ingest past states outperform the memoryless MLP by a wide margin (30 %–70 % lower RMSE).

- The Delay Embedding and Cross‑Attention architectures achieve the best overall performance. Their advantage stems from an explicit mechanism that learns the transport delay Δt instead of relying on the network to infer it implicitly.

- Recurrent models (GRU, TCN, Mamba) also improve accuracy, but their performance depends heavily on the receptive field size; when properly configured they are competitive.

- Mamba, with only ~9 k parameters, matches the accuracy of larger models, demonstrating that a selective state‑space approach can be both efficient and precise.

- RC‑ESN, despite having a frozen large reservoir, performs reasonably well while requiring virtually no training beyond a linear read‑out, highlighting its suitability for ultra‑low‑power platforms.

Real‑World Validation

The authors built a planar gantry that holds two tethered monocopters. The source’s thrust is varied over time, creating a measurable downwash that reaches the sufferer after ≈0.12 s. When the predictions of each model are fed into a feedback controller, the history‑based models reduce the closed‑loop position error from 0.051 m (memoryless) to as low as 0.031 m. This demonstrates that the proposed architectures are not only accurate in offline prediction but also lightweight enough for real‑time deployment on embedded hardware.

Conclusions and Implications

The study establishes two design principles for fluid‑wake prediction in robotics:

- Incorporate a representation of past states (either as explicit windows or via recurrent hidden states).

- Model the transport delay explicitly (e.g., through learned attention over past timestamps or a dedicated delay‑embedding module).

Adhering to these principles yields predictors that are both accurate and computationally efficient, enabling real‑time disturbance compensation for swarms of drones, coordinated underwater vehicles, ship‑to‑ship navigation, and bio‑inspired schooling robots. Future work may extend the framework to multi‑source/multi‑sufferer interactions, turbulent regimes, and online adaptation to changing fluid properties.

Comments & Academic Discussion

Loading comments...

Leave a Comment