SteelDefectX: A Coarse-to-Fine Vision-Language Dataset and Benchmark for Generalizable Steel Surface Defect Detection

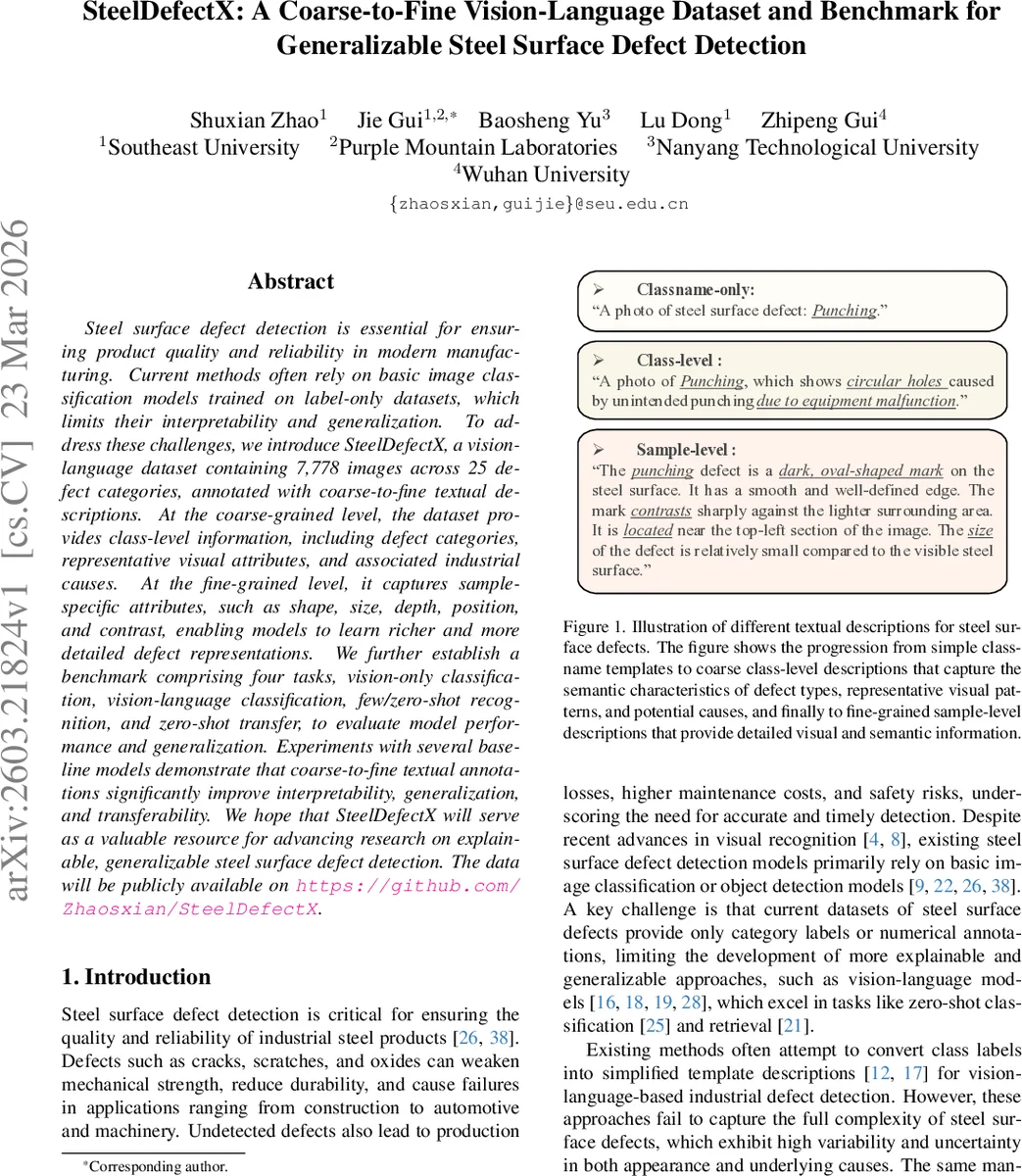

Steel surface defect detection is essential for ensuring product quality and reliability in modern manufacturing. Current methods often rely on basic image classification models trained on label-only datasets, which limits their interpretability and generalization. To address these challenges, we introduce SteelDefectX, a vision-language dataset containing 7,778 images across 25 defect categories, annotated with coarse-to-fine textual descriptions. At the coarse-grained level, the dataset provides class-level information, including defect categories, representative visual attributes, and associated industrial causes. At the fine-grained level, it captures sample-specific attributes, such as shape, size, depth, position, and contrast, enabling models to learn richer and more detailed defect representations. We further establish a benchmark comprising four tasks, vision-only classification, vision-language classification, few/zero-shot recognition, and zero-shot transfer, to evaluate model performance and generalization. Experiments with several baseline models demonstrate that coarse-to-fine textual annotations significantly improve interpretability, generalization, and transferability. We hope that SteelDefectX will serve as a valuable resource for advancing research on explainable, generalizable steel surface defect detection. The data will be publicly available on https://github.com/Zhaosxian/SteelDefectX.

💡 Research Summary

SteelDefectX introduces a novel vision‑language dataset for steel surface defect detection, addressing the long‑standing limitation of existing defect datasets that provide only categorical labels. By integrating four publicly available steel defect collections (NEU, GC10, X‑SDD, and S3D) and harmonizing their taxonomies, the authors assemble 7,778 high‑quality images covering 25 defect categories. The dataset is enriched with a two‑tier textual annotation scheme: (1) coarse‑level class descriptions that combine the defect name, representative visual attributes, and plausible industrial causes; and (2) fine‑level sample descriptions that explicitly capture five critical defect dimensions—shape, size, depth, position, and contrast.

The fine‑level annotations are generated through an automated pipeline powered by GPT‑4o. For each image, an open‑ended prompt produces multiple candidate sentences under a high temperature setting to encourage linguistic diversity. Redundancy is reduced using Sentence‑BERT embeddings, while a binary 5‑bit vector encodes the presence of each dimension in a candidate. A scoring function S(d) = λ₁·‖b‖₁/5 + λ₂·D(d) balances dimensional coverage (λ₁ = 0.6) and semantic uniqueness (λ₂ = 0.4). The highest‑scoring, grammatically sound description is retained, and a final human‑in‑the‑loop review ensures terminological consistency and linguistic quality. This structured approach yields rich, domain‑specific captions that go far beyond simple class‑name templates.

To evaluate the impact of these annotations, the authors define four benchmark tasks:

- Vision‑only classification – standard image classifiers (ResNet‑50, ViT‑B/16) with a linear probe assess baseline visual performance.

- Vision‑language classification – CLIP‑style models (CLIP, SLIP, BLIP‑2) are fine‑tuned using the paired image‑text data, measuring how textual supervision improves alignment.

- Few/zero‑shot recognition – a subset of classes is used for training while the remaining classes are held out, testing the model’s ability to generalize to unseen defect types via textual knowledge.

- Zero‑shot transfer – models trained on SteelDefectX are directly evaluated on unrelated defect datasets: ten aluminum surface defect categories from MSD‑Cls and five seamless steel tube defect categories from CGFSDS‑9.

Experimental results consistently demonstrate that the inclusion of coarse‑level and especially fine‑level textual annotations yields substantial gains. Vision‑language models outperform their vision‑only counterparts by an average of 7.3 percentage points in top‑1 accuracy across the first three tasks. In the zero‑shot transfer scenario, the performance boost reaches over 12.5 percentage points, highlighting the strong cross‑domain generalization afforded by the rich semantic cues. Ablation studies reveal that fine‑grained descriptions contribute more to improvement than coarse class‑level texts alone, confirming that explicit coverage of shape, size, depth, position, and contrast enables models to capture subtle visual variations that are critical in industrial inspection.

The paper’s contributions are threefold: (1) the creation of SteelDefectX, the first steel defect dataset with hierarchical textual annotations; (2) a comprehensive benchmark suite that evaluates both visual and multimodal capabilities, including few‑shot and cross‑domain transfer; and (3) extensive baseline experiments that validate the hypothesis that high‑quality, domain‑specific language supervision enhances interpretability, robustness, and transferability of defect detection models.

Limitations are acknowledged. The reliance on GPT‑4o for automatic captioning incurs API costs and may introduce occasional inaccuracies that require manual correction. All images are resized to 256 × 256 pixels, which may limit the detection of very fine‑grained defects that benefit from higher resolution. Future work is suggested in three directions: (i) expanding the dataset to higher resolutions and multi‑scale annotations; (ii) developing cost‑effective, domain‑adapted language models to replace external LLM APIs; and (iii) exploring large‑scale multimodal pre‑training strategies that can leverage SteelDefectX to improve defect detection across a broader range of industrial materials and inspection scenarios.

Comments & Academic Discussion

Loading comments...

Leave a Comment