CellFluxRL: Biologically-Constrained Virtual Cell Modeling via Reinforcement Learning

Building virtual cells with generative models to simulate cellular behavior in silico is emerging as a promising paradigm for accelerating drug discovery. However, prior image-based generative approaches can produce implausible cell images that viola…

Authors: Dongxia Wu, Shiye Su, Yuhui Zhang

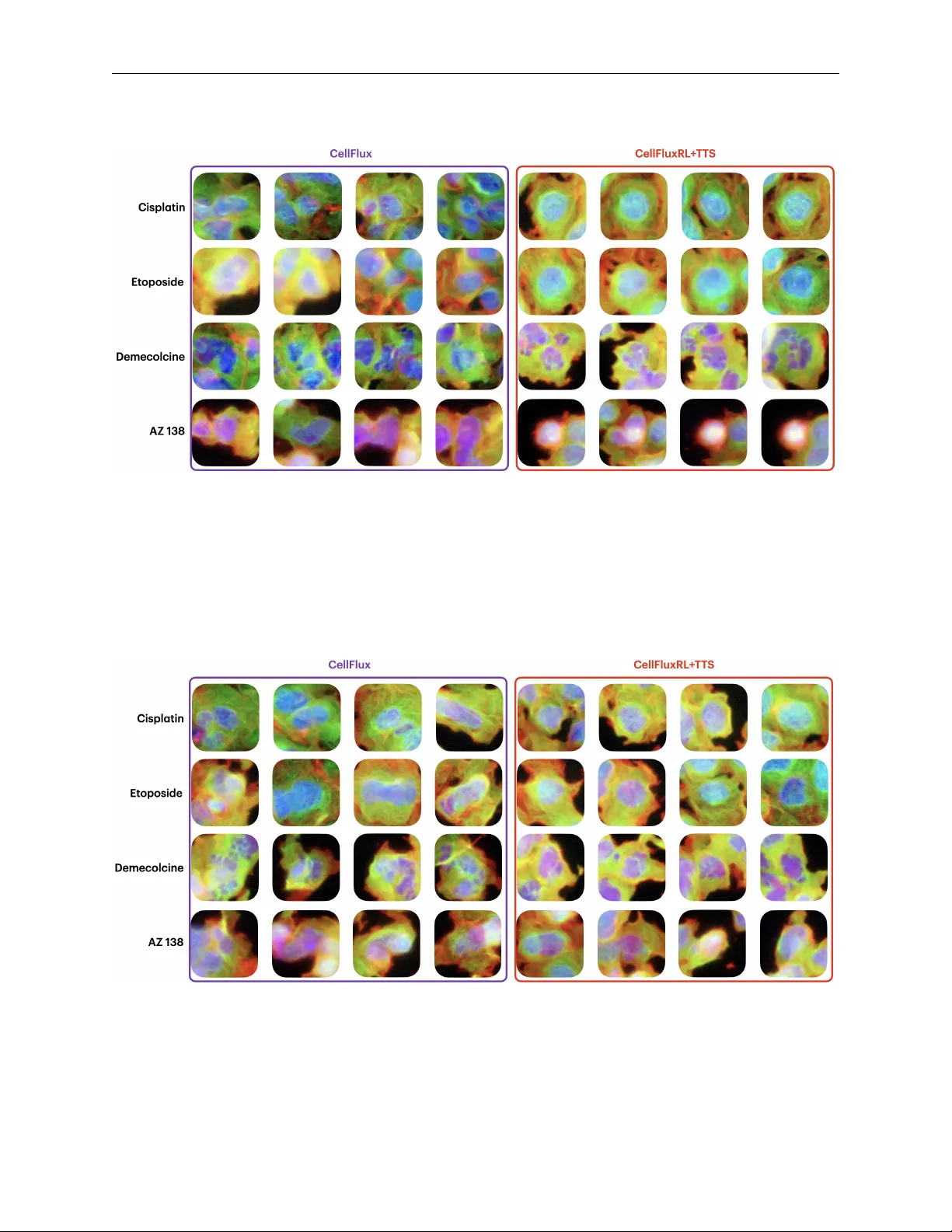

A PREPRINT

Dongxia Wu∗, Stanford University, Stanford, CA, dowu@stanford.edu

Shiye Su∗, Stanford University, Stanford, CA, shiye@stanford.edu

Yuhui Zhang∗, Stanford University, Stanford, CA, yuhuiz@stanford.edu

Elaine Sui, Stanford University, Stanford, CA, esui@stanford.edu

Emma Lundberg, Stanford University, Stanford, CA, emmalu@stanford.edu

Emily B. Fox†, Stanford University, Stanford, CA, ebfox@stanford.edu

Serena Yeung-Levy†, Stanford University, Stanford, CA, syyeung@stanford.edu

ABSTRACT

Building virtual cells with generative models to simulate cellular behavior in silico is emerging as a promising paradigm for accelerating drug discovery. However, prior image-based generative approaches can produce implausible cell images that violate basic physical and biological constraints. To address this, we propose to post-train virtual cell models with reinforcement learning (RL), leveraging biologically meaningful evaluators as reward functions. We design seven rewards spanning three categories (biological function, structural validity, and morphological correctness) and optimize the state-of-the-art CellFlux model to yield CellFluxRL. CellFluxRL consistently improves over CellFlux across all rewards, with further performance boosts from test-time scaling. Overall, our results present a virtual cell modeling framework that enforces physically-based constraints through RL, advancing beyond “visually realistic” generations towards “biologically meaningful” ones.

1 Introduction

Wet-lab experiments have long been the primary bottleneck in drug discovery. To conduct a single experiment, researchers must not only purchase expensive reagents, consumables, and equipment, but also wait weeks or even months to synthesize drugs and culture cells.
Consequently, biologists have long envisioned building virtual cells [37, 16, 4] to accelerate this process by simulating cell behavior in silico. With recent advances in generative modeling, such as diffusion models [39, 40, 13] and flow matching [22, 23, 26, 27], alongside high-throughput image-based screening technologies [7, 8, 12] that produce terabytes of data, there is growing interest in developing image-based virtual cells to predict morphological responses to perturbations.

Figure 1: Failure of cell generation.

Despite this progress, we observe that image-based virtual cell models can produce images that look realistic yet are biologically implausible. For instance, using the state-of-the-art image-based virtual cell model, CellFlux [47], we observe anomalies such as the cell nucleus being generated outside of the cytoplasm (Figure 1). Such violations greatly limit the real-world use of virtual cell models.

We hypothesize that these failures arise from a mismatch between the pixel-level flow-matching objective and global physical constraints. Flow-based generative models [23] learn velocity fields that transport samples from one distribution to another. While this objective can encourage visual fidelity, it does not explicitly enforce higher-level constraints on cell structure and properties. This may yield samples that appear plausible locally but are incorrect globally.

∗ Equal contribution. † Equal advising.

Figure 2: Motivation. Current generative models for simulating cellular perturbations can fail to produce physically plausible cell images. For example, nuclei may appear outside the cell membrane. We design a suite of biologically meaningful verifiers in three roles: (1) as evaluators to assess the biological correctness of generated images, (2) as reward signals to improve generation via reinforcement learning, and (3) as verification modules to enhance sample quality through test-time scaling.

To address this issue, we propose CellFluxRL, applying reinforcement learning (RL)-based post-training with biologically meaningful evaluators as reward functions. Concretely, many constraints of interest admit existing or readily obtained evaluators that can efficiently assign scores to generated images (e.g., whether a sample satisfies structural or functional criteria). Although these evaluators are typically non-differentiable and thus cannot be optimized via standard backpropagation, they can be used as reward signals for RL [24, 48]. At a high level, our RL procedure alternates between: (1) sampling, where we generate multiple images and evaluate them using biologically meaningful evaluators; and (2) optimization, where we increase the likelihood of high-reward samples and decrease the likelihood of low-reward samples. This directly penalizes physically invalid generations while reinforcing physically consistent ones. Although our work focuses on improving CellFlux, a flow-based model, the same training ideas can be applied to other generative modeling frameworks.

To demonstrate the potential of this approach, we post-train CellFlux to yield CellFluxRL, optimizing seven reward models that assess correctness across three key categories: biological function, such as mode of action; structural properties, including nuclear roundness and containment of the nucleus within the cytoplasm; and morphological properties, such as the size and number of nuclei and the size of the cytoplasm.
Our overall reward is a weighted linear combination of component rewards. Evaluating the learned model across all reward components, CellFluxRL achieves higher scores than the base model. These results indicate that RL provides an effective mechanism for aligning image-based generative models with biologically meaningful constraints. We additionally present qualitative case studies and ablations to analyze how RL hyperparameters and reward design influence performance.

Moreover, these reward models enable a simple form of test-time scaling. Following the selection strategy of [29], we sample N candidate images for a given condition and select the one with the highest reward. We observe monotonic improvements as N increases, indicating a clear test-time scaling trend and an additional gain over single-sample generation.

Our work also highlights limitations of existing evaluation metrics for image-based generative models of cellular morphology. To date, the focus has been on image generation quality scores, such as FID and KID, rather than biological validity. Our rewards not only enable RL-based training, but also serve as new, biologically meaningful evaluation criteria.

In summary, our work identifies a key limitation of existing image-based virtual cell modeling: the lack of explicit enforcement of physical correctness under pixel-level training objectives. We address this limitation by incorporating biologically meaningful evaluators through RL, which substantially improves the physical plausibility of generated images. We further show that these gains can be amplified via test-time scaling. Finally, our rewards serve as new metrics for the community to benchmark against. Overall, our results move virtual cell image generation from “looking good” to being biologically consistent, supporting the downstream goal of drug discovery and personalized medicine with reduced reliance on costly wet-lab experiments.

2 Related Work

Virtual cell modeling. A virtual cell model aims to simulate cellular responses to perturbations in silico. This can be formulated as a generative modeling problem $p(x_1 \mid x_0, c)$, where $x_0$ and $x_1$ denote cell states before and after perturbation $c$. Early virtual cell models relied on mathematical and physics-based frameworks, such as systems of ordinary differential equations (ODEs) [46, 41, 36]. However, their expressiveness is inherently limited by the strict assumptions and simplified dynamics that these formulations require. With recent advances in generative modeling and image-based high-throughput screening, a growing body of work has explored using VAEs, GANs, diffusion models, and flow matching to simulate cellular state transitions [1, 35, 18, 33, 3, 15, 47]. A notable paradigm is CellFlux [47], which reformulates the problem as a distribution-to-distribution transformation and leverages flow matching to mitigate batch effects, achieving state-of-the-art performance. Nevertheless, we observe that while these models generate visually plausible cells, the generations can violate fundamental biological constraints. To address this challenge, we introduce RL post-training guided by biologically meaningful rewards, encouraging virtual cell models to generate more physically and biologically plausible cell images.

Physics-aware generative models. The growing interest in utilizing generative models as “world simulators” or “world models” necessitates strict adherence to physical accuracy [9, 30, 42]. However, recent studies indicate that state-of-the-art image and video generative models still struggle to maintain physical correctness, a limitation that cannot be resolved simply by scaling up data and model parameters [31, 17].
To tackle this challenge, the community has proposed various approaches: modifying architectures to inject physical constraints [14, 45], altering the inference process by using LLMs for generation planning [21, 49], or modifying training objectives to incorporate physical preferences [43, 25, 34, 5]. Our approach aligns with objective-based methods; in virtual cell modeling, we can readily define numerous biologically meaningful constraints. Optimizing these constraints requires minimal architectural modifications while yielding robust results. Furthermore, these rewards facilitate test-time scaling to continuously enhance generation quality.

Reinforcement learning for generative modeling. While generative models based on diffusion or flow matching achieve high visual fidelity, aligning them with strict physical constraints remains an open challenge. RL has emerged as a powerful solution [2, 11, 24, 44, 19], yet its development is still at a nascent stage. The primary hurdle lies in evaluating the exact likelihood of samples, which is necessary for adjusting generation probabilities based on rewards but is generally intractable in diffusion and flow matching frameworks. Prior works, such as FlowGRPO [24] and DanceGRPO [44], formulate this as a Markov Decision Process (MDP) to derive probabilities, whereas recent algorithms like DiffusionNFT [48] introduce forward processes to estimate these likelihoods. Our work builds upon the DiffusionNFT-style forward probability estimation process, which offers rapid estimation and state-of-the-art results. Crucially, it accommodates our distribution-to-distribution flow matching (from original to perturbed states), whereas MDP-based approaches assume a standard Gaussian prior.

3 Cellular Perturbation Modeling

Our approach takes as input a pretrained generative model for cellular morphology prediction and post-trains it using RL to improve physical correctness.
In this section, we describe the perturbation modeling problem and the properties required for the base model.

Objective. Let $\mathcal{X} \subseteq \mathbb{R}^{H \times W \times C}$ denote the space of multi-channel fluorescence microscopy images, where each channel highlights a distinct cellular structure (e.g., nucleus, cytoskeleton, mitochondria). Let $\mathcal{C}$ denote the space of perturbations, encompassing chemical compounds (drugs). Given an unperturbed cell image $x_0$ and a perturbation $c \in \mathcal{C}$, the goal is to learn a generative model that samples from the conditional distribution $p(x_1 \mid x_0, c)$, where $x_1$ represents the cell's morphology after treatment. Such a model enables in silico simulation of cellular responses that would otherwise require costly wet-lab experiments.

Data and batch effects. Cell morphology data are collected via high-content microscopy screening, in which multi-well plates are prepared with both control wells (untreated) and perturbed wells (treated with a chemical compound). Experiments are conducted across multiple batches, each introducing systematic technical variations (differences in staining intensity, illumination, or imaging conditions) that are unrelated to the perturbation itself. Because imaging is destructive (cells are fixed and stained), paired before-and-after observations of the same cell are unavailable. Instead, we observe unpaired sets of control images $\{x_0\}$ and perturbed images $\{x_1\}$ within each batch.

Requirements for the base model. These data characteristics impose two key requirements on the base generative model. First, because observations are unpaired, the model must learn a distributional transformation from control to perturbed cells rather than a pointwise mapping.
Second, because batch effects confound perturbation signals, the model should condition on same-batch control images as its source distribution, so that it learns only the perturbation-induced changes rather than technical artifacts.

While our approach is compatible with any such base model, we instantiate it as CellFlux [47]. CellFlux, based on flow matching, offers a natural framework satisfying the above requirements: it learns a velocity field $v_\theta : \mathcal{X} \times [0, 1] \times \mathcal{C} \to \mathcal{X}$ that continuously transports the control distribution $p_0$ to the perturbed distribution $p_1(\cdot \mid c)$ within each batch. Training proceeds by sampling pairs $(x_0, x_1)$ from source and target distributions, constructing linear interpolations $x_t = (1 - t)\,x_0 + t\,x_1$ for $t \sim \mathcal{U}[0, 1]$, and minimizing:

$$\mathcal{L}_{\text{FM}}(\theta) = \mathbb{E}_{x_0 \sim p_0,\; x_1 \sim p_1,\; t \sim \mathcal{U}[0,1]} \left\| v_\theta(x_t, t, c) - (x_1 - x_0) \right\|_2^2 \tag{1}$$

At inference, a control image $x_0$ is transformed into a predicted perturbed image $\hat{x}_1$ by solving the ODE $\mathrm{d}x_t = v_\theta(x_t, t, c)\,\mathrm{d}t$ from $t = 0$ to $t = 1$. We denote the resulting pretrained model as $v_\theta$, which serves as the input to our method.

4 Method

In this section, we present our approach to improving the physical and biological fidelity of virtual cell models. We first introduce the formal objective for reward-driven generation (§4.1). Next, we detail the suite of reward models/evaluators (§4.2) designed to capture biological and structural properties. We then describe the RL algorithm (§4.3) used to post-train the base model via contrastive updates. Finally, we discuss test-time scaling (§4.4) to further enhance generation quality at inference time.

4.1 Reward-Driven Generation

While the flow matching objective trains the base model to produce images that are distributionally close to real perturbed cells, it operates at the pixel level and does not explicitly encourage higher-level physical or biological correctness.
In practice, this can lead to generated images that appear visually plausible but violate known cellular properties, for example by producing nuclei of incorrect size, cells with implausible morphology, or images that fail to reflect the expected mode of action (MoA) of a compound (Figure 2). Such violations limit the utility of virtual cell models for downstream applications like drug screening, where physical correctness is essential.

We observe that many properties of interest can be assessed by evaluator functions that score generated images along specific axes of physical or biological validity. Formally, let $\{r_k\}_{k=1}^{K}$ denote a set of reward functions, where each $r_k : \mathcal{X} \times \mathcal{C} \to \mathbb{R}$ measures how well a generated image $\hat{x}_1$ satisfies a particular property given perturbation $c$. These rewards capture complementary aspects of cell structure and function, such as whether the nuclear size is consistent with the expected effect of the perturbation, or whether the overall morphological profile matches the correct MoA. We describe the specific reward functions used in this work in §4.2.

Given the pretrained base model $v_\theta$, our objective is to obtain a post-trained model $v_{\theta'}$ that generates images with higher physical fidelity as measured by $\{r_k\}$, while remaining close to the pretrained model. Concretely, we seek to solve:

$$\max_{\theta'} \; \mathbb{E}_{x_0 \sim p_0,\; c \sim p(c)} \left[ \sum_{k=1}^{K} w_k\, r_k(\hat{x}_1, c) \right] \quad \text{s.t.} \quad D_{\text{KL}}\left( v_{\theta'} \,\|\, v_\theta \right) \le \epsilon \tag{2}$$

where $\hat{x}_1$ is the image generated by $v_{\theta'}$ from input $(x_0, c)$, $w_k$ is the weight of reward $r_k(\cdot)$, and $D_{\text{KL}}(\cdot \,\|\, \cdot)$ is the KL divergence between the post-trained and pretrained models. This regularization is important both to preserve the visual quality and diversity learned during pretraining, and to mitigate reward hacking, i.e., generating images that achieve high scores through degenerate solutions rather than genuine physical correctness.
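
To make the weighted sum inside Eq. (2) concrete, the sketch below evaluates it for two columns of Table 1, using the reward weights stated in §5.1. The helper name `combined_reward` and the dictionary layout are illustrative assumptions rather than the authors' code, and the KL constraint is not modeled here.

```python
# Illustrative sketch of the weighted reward sum in Eq. (2).
# Weights are those reported in Section 5.1; scores are copied from Table 1.
# `combined_reward` is a hypothetical helper, not part of any released code.

WEIGHTS = {
    "MoA": 5.0,
    "Nuc-in-Cyto": 2.0,
    "Roundness": 1.0,
    "NucSize": 1.0,
    "CytoSize": 1.0,
    "NucCount": 1.0,
    "CytoCount": 1.0,
}

# Per-reward scores of the base and post-trained models (Table 1).
CELLFLUX = {
    "MoA": 0.26, "Nuc-in-Cyto": 0.88, "Roundness": -0.34, "NucSize": -2.21,
    "CytoSize": -1.09, "NucCount": -0.83, "CytoCount": -1.03,
}
CELLFLUXRL = {
    "MoA": 0.34, "Nuc-in-Cyto": 0.96, "Roundness": -0.26, "NucSize": -1.04,
    "CytoSize": -0.65, "NucCount": -0.53, "CytoCount": -0.68,
}


def combined_reward(scores, weights=WEIGHTS):
    """Return sum_k w_k * r_k over every reward named in `weights`."""
    missing = set(weights) - set(scores)
    if missing:
        raise KeyError(f"missing reward scores: {sorted(missing)}")
    return sum(weights[name] * scores[name] for name in weights)


if __name__ == "__main__":
    print("CellFlux  :", round(combined_reward(CELLFLUX), 2))    # -2.44
    print("CellFluxRL:", round(combined_reward(CELLFLUXRL), 2))  # 0.46
```

Under these assumptions the two sums come out to -2.44 and 0.46, which agree with the Overall row of Table 1.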

Critically, optimizing these rewards is complementary to, and not in conflict with, the original distributional objective. The reward functions encode properties that the ground truth perturbed distribution satisfies. For instance, real cells treated with a microtubule destabilizer exhibit smaller, fragmented nuclei, and real cells have mode-of-action-consistent morphological profiles. By directly optimizing for these properties, RL can improve the match between generated and real distributions along biologically meaningful axes that the pixel-level flow matching loss may underweight. In this sense, the rewards provide a form of targeted distributional alignment, focusing model capacity on the aspects of the distribution that matter most for physical correctness.

4.2 Reward Models

We design a suite of reward functions that capture complementary aspects of biological validity in generated cell images. These rewards fall into three categories: biological function rewards that assess whether generated images reflect the expected biological effects of a perturbation, structural rewards that enforce known physical relationships between cellular components, and morphological rewards that encourage generated images to match the size and density statistics of real cells. Together, these rewards provide multi-scale supervision, from high-level biological semantics down to low-level geometric properties, that the pixel-level flow matching objective does not explicitly optimize for.

4.2.1 Biological Function.

Biological function rewards evaluate whether generated images faithfully reflect the biological effects of the applied perturbation.

Mode of action (MoA). A drug's MoA describes the cellular process it targets, such as microtubule destabilization, DNA replication inhibition, or actin disruption.
Because different MoAs produce distinct morphological signatures, the ability of a generated image to be correctly classified by MoA serves as a strong indicator of biological fidelity. We leverage an MoA classifier pretrained on real perturbed images [47] and define the reward as the predicted probability of the ground truth MoA class:

$$r_{\text{MoA}}(\hat{x}_1, c) = p_{\text{cls}}(y_c \mid \hat{x}_1), \tag{3}$$

where $y_c$ is the ground truth MoA label associated with perturbation $c$ and $p_{\text{cls}}$ is the pretrained MoA classifier. Higher values indicate that the generated image exhibits the morphological profile expected for the given perturbation.

4.2.2 Structural Constraints.

Structural constraint rewards enforce known physical relationships between cellular components.

Nucleus-in-cytoplasm. In correctly imaged cells, nuclei are fully enclosed within the cytoplasm. Generated images that violate this containment are physically implausible. We segment nuclei and cytoplasm from the generated images using Cellpose [32] and define the reward as the fraction of detected nuclear area that is enclosed by cytoplasm. This reward penalizes the failure mode of generative models wherein channel-level structures become spatially inconsistent:

$$r_{\text{Nuc-in-Cyto}}(\hat{x}_1, c) = \frac{\text{area}(\text{nucleus mask} \cap \text{cytoplasm mask})}{\text{area}(\text{nucleus mask})} \tag{4}$$

Nuclear roundness. Nuclear shape is a biologically informative feature that varies systematically across MoA classes. For instance, microtubule destabilizers produce fragmented, irregular nuclei, while other perturbations preserve smooth, round nuclear morphology. We compute the roundness reward as:

$$r_{\text{Roundness}}(\hat{x}_1, c) = -\left[ \frac{1}{N_{\text{Nu}}} \sum_{i=1}^{N_{\text{Nu}}} \frac{4\pi \cdot \text{area}_i}{\text{perimeter}_i^2} - \mu^{(y_c)} \right]^2 \Big/ \left[ \sigma^{(y_c)} \right]^2, \tag{5}$$

where $N_{\text{Nu}}$ is the number of identified nuclei, and $\mu^{(y_c)}$ and $\sigma^{(y_c)}$ are the mean and standard deviation of roundness in the ground truth distribution for MoA label $y_c$.
This encourages the model to produce nuclear shapes consistent with the known morphological effects of each perturbation category.

4.2.3 Morphological Statistics.

These rewards ensure that the size and density of cellular components in generated images match those of the ground truth distribution, conditioned on the MoA. Unlike the structural rewards above, which enforce qualitative relationships, these rewards target quantitative statistics of the cell population.

For each of the following statistics $s$ (maximum nucleus size, maximum cytoplasm size, nucleus count, and cytoplasm count), we compute the value $s(\hat{x}_1)$ from the generated image and compare it to the ground truth distribution for the corresponding MoA class. Let $\mu_s^{(y_c)}$ and $\sigma_s^{(y_c)}$ denote the mean and standard deviation of statistic $s$ across real images with MoA label $y_c$. We define the reward as the negative normalized deviation:

$$r_s(\hat{x}_1, c) = -\frac{\left( s(\hat{x}_1) - \mu_s^{(y_c)} \right)^2}{\left( \sigma_s^{(y_c)} \right)^2}, \quad s \in \{\text{NucSize}, \text{CytoSize}, \text{NucCount}, \text{CytoCount}\}. \tag{6}$$

Figure 3: CellFluxRL algorithm. RL post-training seeks to increase the likelihood of high-reward samples and decrease the likelihood of low-reward samples. Therefore, the core training loop of CellFluxRL consists of interleaved phases of sampling and training. (a) Sampling: we generate multiple rollouts from a fixed control image and perturbation condition, scoring each with the reward models. (b) Training: because exact likelihoods in flow matching are intractable, we construct positive and negative velocities from the batch of rollouts and optimize them contrastively to achieve this goal, following DiffusionNFT [48].

This formulation penalizes generated images whose statistics deviate from the ground truth mean, scaled by the natural variability within each MoA class.
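
Eqs. (5) and (6) share one form: a negative squared deviation from the MoA-conditioned mean, divided by the variance. The following is a minimal sketch of that computation, assuming per-nucleus areas and perimeters have already been extracted from segmentation masks. The function names and the choice to raise an error when no nuclei are detected are assumptions made for illustration; the source does not specify how empty images are handled.

```python
import math


def roundness(area, perimeter):
    """Isoperimetric roundness 4*pi*A / P^2; equals 1.0 for a perfect circle."""
    if perimeter <= 0:
        raise ValueError("perimeter must be positive")
    return 4.0 * math.pi * area / perimeter ** 2


def normalized_deviation_reward(value, mean, std):
    """Negative squared z-score, the shared form of Eqs. (5) and (6)."""
    if std <= 0:
        raise ValueError("std must be positive")
    return -((value - mean) ** 2) / std ** 2


def roundness_reward(areas, perimeters, mean, std):
    """Eq. (5): average roundness over nuclei, scored against (mean, std).

    Assumption: an image with no detected nuclei is treated as an error,
    since the source does not say how that case is scored.
    """
    if not areas or len(areas) != len(perimeters):
        raise ValueError("need one (area, perimeter) pair per detected nucleus")
    average = sum(roundness(a, p) for a, p in zip(areas, perimeters)) / len(areas)
    return normalized_deviation_reward(average, mean, std)


if __name__ == "__main__":
    # A square of side 2 has roundness pi/4, lower than a circle's 1.0.
    print(roundness(area=4.0, perimeter=8.0))
    # A statistic one standard deviation from the class mean scores -1.
    print(normalized_deviation_reward(value=12.0, mean=10.0, std=2.0))
```

Because the reward is a squared z-score, a statistic that sits k standard deviations from the class mean always scores -k², regardless of the statistic's units.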
The four statistics capture complementary aspects of cell morphology:

• Maximum nucleus size and maximum cytoplasm size reflect the largest cellular components in the image, capturing whether the model produces cells of appropriate scale for the given perturbation. We use the maximum rather than the average to mitigate the potential issue of partially visible cells biasing the statistics.

• Nucleus count and cytoplasm count measure cell density, ensuring the model does not hallucinate or omit cells relative to what is observed under each treatment condition.

Together, these four rewards encourage the generated cell population to be statistically consistent with real observations in terms of both individual cell size and overall cell density.

4.3 Reinforcement Learning on Biological Rewards

We now describe how we optimize the pretrained base model $v_\theta$ with respect to the reward functions introduced in §4.2.

Algorithm overview. Our objective is to maximize the combined reward defined in Eq. (2) (§4.1). Since these biological reward functions are non-differentiable, standard backpropagation is inapplicable, necessitating an RL approach. The core principle of our RL strategy is to increase the generation likelihood of high-reward samples while penalizing low-reward ones. We adopt DiffusionNFT [48], a state-of-the-art online RL algorithm for flow matching. It operates on the flow's forward process, avoiding intractable log likelihoods, and is constructed from distribution-agnostic components, hence extending naturally to our source-to-target flow matching setting without modification.

Algorithm detail. At each iteration, DiffusionNFT collects a batch of generated images, evaluates them with respect to the reward functions, and uses the rewards to define an improvement direction over the current policy.
The key idea is to split generated samples into positive (high-reward) and negative (low-reward) subsets and learn a contrastive update that moves the model towards the positive distribution. Concretely, given a generated image $\hat{x}_1$ with optimality reward $r \in [0, 1]$, the training objective is:

$$\mathcal{L}(\theta) = \mathbb{E}_{c,\; \pi^{\text{old}}(\hat{x}_1 \mid c, x_0),\; t} \left[ r \left\| v_\theta^{+}(x_t, c, t) - v \right\|_2^2 + (1 - r) \left\| v_\theta^{-}(x_t, c, t) - v \right\|_2^2 \right] + \beta\, D_{\text{KL}}\left( v_\theta \,\|\, v^{\text{old}} \right), \tag{7}$$

where $\beta$ is the KL divergence weight, $x_t = \alpha_t x_0 + \gamma_t x_1$ is the forward-noised version of the generated image, $v = \dot{\alpha}_t x_0 + \dot{\gamma}_t x_1$ is the corresponding velocity target, and $v_\theta^{+}$, $v_\theta^{-}$ are implicit positive and negative policies defined as:

$$v_\theta^{+}(x_t, c, t) := (1 - \gamma)\, v^{\text{old}}(x_t, c, t) + \gamma\, v_\theta(x_t, c, t) \tag{8}$$

$$v_\theta^{-}(x_t, c, t) := (1 + \gamma)\, v^{\text{old}}(x_t, c, t) - \gamma\, v_\theta(x_t, c, t). \tag{9}$$

Here $v^{\text{old}}$ is the data-collection policy (a lagging copy of $v_\theta$), and $\gamma > 0$ is a hyperparameter controlling the guidance strength (distinct from the schedule coefficient $\gamma_t$). The implicit parameterization is central to the algorithm: rather than training separate positive and negative models, a single policy $v_\theta$ is optimized such that its mixture with $v^{\text{old}}$ simultaneously fits high-reward samples (via $v_\theta^{+}$) and avoids low-reward ones (via $v_\theta^{-}$). The optimal solution satisfies $v_{\theta^*} = v^{\text{old}} + \frac{2}{\gamma} \Delta$, where $\Delta$ is the reinforcement guidance direction pointing from the negative towards the positive distribution. This formulation naturally regularizes the post-trained model towards the pretrained policy: when $\gamma$ is large, the guidance strength $\frac{2}{\gamma}$ is small and the model stays close to $v^{\text{old}}$; when $\gamma$ is small, the model is allowed to deviate more aggressively. The data-collection policy $v^{\text{old}}$ is itself updated via an exponential moving average of $v_\theta$.

Rollout and advantage estimation.
During sampling, we fix a perturbation condition $c$ and a source control image $x_0$, and generate a group of $m$ candidate images $\{\hat{x}_1^{(i)}\}_{i=1}^{m}$. Since the base model $v_\theta$ augments $x_0$ with Gaussian noise before transporting it via the learned velocity field, diversity within each group arises primarily from this stochastic noise injection as well as any ODE discretization error. We find this yields sufficient variation for DiffusionNFT to distinguish positive from negative generations. Each candidate is scored by the reward functions, and the raw rewards are normalized within the group to obtain optimality probabilities $r^{(i)} \in [0, 1]$, following the advantage normalization scheme of [48]. The forward process is then applied to each generated image, and the loss in Eq. (7) is computed over the group.

4.4 Test-Time Scaling

A key benefit of having explicit reward functions is that they can be used not only for training, but also to select among candidate generations at inference time. This strategy has proven highly effective in reasoning models, where best-of-N selection with a reward model or verifier yields consistent improvements that scale predictably with N [10, 20, 38]. We apply the same principle to virtual cell generation. Given a perturbation condition $c$ and a source control image $x_0$, we generate $N$ candidate images $\{\hat{x}_1^{(i)}\}_{i=1}^{N}$ and select the one with the highest reward:

$$\hat{x}_1^{*} = \arg\max_{i \in \{1, \ldots, N\}} r(\hat{x}_1^{(i)}, c) \tag{10}$$

This provides a simple, training-free mechanism to improve generation quality given additional inference compute budget. Moreover, best-of-N selection is complementary to reinforcement learning: RL post-training improves the base distribution from which candidates are drawn, so that even modest values of N yield high-quality outputs, while test-time selection contributes additional gains.
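
The selection rule in Eq. (10) can be sketched in a few lines. The version below is a generic illustration, not the authors' implementation: `generate` and `reward` are hypothetical callables standing in for sampling from the post-trained model (with the condition and control image already fixed) and for the combined reward, and the demo uses random numbers as toy "generations".

```python
import random
from typing import Callable, Tuple, TypeVar

T = TypeVar("T")


def best_of_n(
    generate: Callable[[], T],
    reward: Callable[[T], float],
    n: int,
) -> Tuple[T, float]:
    """Draw n candidates and return the one with the highest reward (Eq. 10).

    `generate` and `reward` are placeholders: in the paper's setting they
    would wrap the generative model and the weighted reward, respectively.
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    candidates = [generate() for _ in range(n)]
    scores = [reward(candidate) for candidate in candidates]
    best_index = max(range(n), key=scores.__getitem__)
    return candidates[best_index], scores[best_index]


if __name__ == "__main__":
    # Toy stand-ins: a "generation" is a random number, its reward is itself.
    for n in (1, 4, 16):
        rng = random.Random(0)  # same seed, so larger n extends the same draws
        _, score = best_of_n(rng.random, lambda x: x, n)
        print(f"N={n:2d}  best reward={score:.3f}")
```

With a shared seed the candidate set for a larger N contains the set for a smaller N, so the selected reward can only stay the same or increase. The cost grows linearly in N, since every candidate must be both generated and scored.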

5 Results

In this section, we evaluate CellFluxRL's ability to generate biologically faithful cellular images. After detailing our experimental setup (§5.1), we present the main results, demonstrating that RL with biologically-aligned rewards systematically improves performance across all biological metrics (§5.2). We show that test-time scaling enhances performance (§5.3) and provide ablation studies.

5.1 Experimental details

Datasets. We follow the dataset configuration of the base CellFlux model and use the high-content microscopy perturbation dataset BBBC021 [6]. BBBC021 is a chemical perturbation dataset comprising 98K three-channel images at 96 × 96 resolution, collected under 26 chemical perturbations grouped into 12 modes of action (MoA). We follow the train/test split used in CellFlux [47].

Baselines. We compare our proposed method against the pretrained base model, CellFlux [47], as well as two prior generative baselines, PhenDiff [3] and IMPA [33].

Table 1: Quantitative comparison across biological rewards and generative quality metrics. Each row represents a different evaluation metric, and each column represents a different method. +TTS is CellFluxRL with test-time scaling by best-of-N with N = 4, where the best sample is selected by the weighted total reward. Bold values indicate the best performance; underlined values indicate the second best.

| Metric | PhenDiff [3] | IMPA [33] | CellFlux [47] | CellFluxRL | +TTS |
|---|---|---|---|---|---|
| Biological Rewards | | | | | |
| MoA | 0.18 | 0.12 | 0.26 | 0.34 | **0.56** |
| Nuc-in-Cyto | 0.91 | 0.79 | 0.88 | 0.96 | **0.97** |
| Roundness | -0.24 | -0.32 | -0.34 | -0.26 | **-0.19** |
| NucSize | -0.88 | -1.02 | -2.21 | -1.04 | **-0.38** |
| CytoSize | -0.97 | -1.59 | -1.09 | -0.65 | **-0.41** |
| NucCount | -2.61 | -1.05 | -0.83 | -0.53 | **-0.28** |
| CytoCount | -3.22 | -1.39 | -1.03 | -0.68 | **-0.33** |
| Overall | -5.20 | -3.19 | -2.44 | 0.46 | **3.15** |
| Generation Quality | | | | | |
| FID | 41.94 | 35.70 | **20.36** | 24.01 | 23.19 |
| KID | 0.028 | 0.029 | 0.015 | **0.014** | 0.016 |

Evaluation metrics.
In addition to the rew ards reported in §4.2 (higher is better), which measure the biological meaningfulness of the generated cells, we also report standard image quality metrics (FID and KID). These metrics measure the image distribution similarity via Frechet and kernel-based distances between generated images and ground truth images (lower is better). T raining details. W e instantiate our method using a pretrained CellFlux flow matching model. CellFluxRL is post- trained using the online RL algorithm Dif fusionNFT [48] to optimize a weighted sum of 7 biologically-constrained rew ards: r = 5 . 0 r MoA + 2 . 0 r Nuc-in-Cyto + r Roundness + r NucSize + r CytoSize + r NucCount + r CytoCount . MoA and Nuc-in-Cyto are empirically harder to optimize, motiv ating their higher weights. The training objecti ve includes a KL diver gence weight ( β = 1 ) to re gularize the post-trained model against the pretrained policy and prev ent reward hacking. All other hyperparameter settings follow DiffusionNFT [48]. At inference, we further enhance performance using test- time scaling (TTS) with a best-of- N selection strategy ( N = 4 ), selecting the generated sample with the highest ov erall re ward. T raining is conducted for 1200 steps on 1 H100 GPU for 32 hours. 5.2 Reinfor cement Learning Leads to Better Results W e compare CellFluxRL against baselines on a suite of biological, structural, and morphological metrics, with results summarized in T able 1. CellFluxRL outperforms its pretrained counterpart CellFlux across all re ward metrics, con- firming that RL post-training successfully steers the generativ e model tow ard greater ph ysical correctness. The o verall rew ard improv es from − 4 . 44 to − 1 . 54 , with gains spanning all three reward categories: biological function, structural constraints, and morphological statistics. CellFluxRL rew ards also surpass those of PhenDiff and IMP A . 
The improvements are particularly pronounced on MoA, where CellFluxRL's reward of 0.34 substantially exceeds those of both PhenDiff (0.18) and IMPA (0.12). To give interpretable context to these numbers, the corresponding MoA classification accuracies (the fraction of generated images whose predicted mode of action matches the ground-truth label) are 0.56, 0.53, 0.61, and 0.66 for PhenDiff, IMPA, CellFlux, and CellFluxRL, respectively, indicating that CellFluxRL produces images whose morphological profiles more faithfully distinguish different drug classes. Applying best-of-N selection with N = 4 to CellFluxRL (+TTS) yields further improvements across all reward metrics, pushing the overall reward from 0.46 to 3.15 (Table 1). We analyze test-time scaling behavior in further detail in §5.3. In terms of generative quality, while not a primary optimization objective, CellFluxRL achieves a state-of-the-art KID score and a competitive FID score compared to baselines.

The quantitative gains are corroborated qualitatively in Figure 4. The baselines generate images that fail to reflect the expected biological response to each perturbation. CellFluxRL consistently corrects these failures, and test-time scaling pushes generations further toward the ground truth.

Figure 4: Qualitative comparisons. CellFluxRL generates more biologically-grounded images, better capturing drug-induced morphological changes. In these examples, Etoposide-induced cell rounding, Demecolcine-driven microtubule destabilization, and AZ138-associated cell shrinkage are all more faithfully reproduced, and cell density more closely matches the ground truth for Cisplatin. Test-time scaling (+TTS) further refines these predictions toward the target images.

5.3 Test-time Scaling Further Enhances the Results

Figure 5 shows how reward scores scale with the number of candidate samples N for both the base model (CellFlux) and the RL-post-trained model (CellFluxRL). We generate N candidates and select the one with the highest overall reward, the same weighted combination of individual rewards used during RL post-training, then report the individual reward metrics of the selected samples. Both models exhibit monotonic improvements as N increases across all metrics.

RL post-training improves the scaling efficiency: the CellFluxRL curve sits consistently above the base model curve across all metrics, meaning that for any fixed compute budget (i.e., any given N), the RL-post-trained model achieves higher reward. Because each sample drawn from the improved distribution is more likely to be physically correct, selection is more efficient. The effect is especially pronounced at small N, where the quality of the base distribution matters most.

Figure 5: Test-time scaling by best-of-N further improves generation quality. The sample achieving the highest overall (combined) reward is selected from N rollouts, and each individual reward is plotted. RL (orange) consistently exhibits better scaling than the base model (blue) across all rewards.

Figure 6: Sensitivity analysis on KL weight β. Each subplot shows reward sensitivity to β after RL post-training.

Table 2: Single-reward optimization with reinforcement learning. Each column shows a model optimized for one reward only or CellFluxRL (all rewards), evaluated across the metrics in each row. Bold values indicate the best performance; underlined values indicate the second best; dashed-underlined values indicate the third best.
| Metric | MoA | Nuc-in-Cyto | Roundness | NucSize | CytoSize | NucCount | CytoCount | CellFluxRL |
|---|---|---|---|---|---|---|---|---|
| MoA | 0.412 | 0.204 | 0.263 | 0.301 | 0.212 | 0.279 | 0.277 | 0.337 |
| Nuc-in-Cyto | 0.852 | 0.977 | 0.907 | 0.904 | 0.976 | 0.867 | 0.937 | 0.956 |
| Roundness | -0.342 | -0.332 | -0.198 | -0.298 | -0.340 | -0.271 | -0.304 | -0.264 |
| NucSize | -3.319 | -1.724 | -2.328 | -0.702 | -1.784 | -2.909 | -2.853 | -1.038 |
| CytoSize | -1.198 | -0.545 | -0.919 | -0.917 | -0.497 | -1.113 | -0.801 | -0.652 |
| NucCount | -0.760 | -0.904 | -0.685 | -0.818 | -0.881 | -0.476 | -0.511 | -0.528 |
| CytoCount | -0.922 | -1.074 | -0.905 | -0.946 | -1.063 | -0.629 | -0.580 | -0.677 |

5.4 Sensitivity Analysis on Kullback–Leibler Divergence Weight

We study the sensitivity of final model performance to the KL divergence weight, β, varying it from 1.0 to 1.3. The results, presented in Figure 6, show a trade-off: structural and morphological correctness metrics favor a smaller KL weight, whereas biological function rewards slightly improve with a larger weight. This suggests that achieving higher biological accuracy requires more substantial model adjustments than improving structural or morphological fidelity.

5.5 Ablation: Optimizing for a Single Reward

To isolate the effect of individual rewards, we conduct ablations optimizing for one reward at a time. As shown in Table 2, this targeted optimization yields the best score on the targeted metric, but the improvements on other metrics are limited. In contrast, CellFluxRL, optimized with the combined weighted sum of all seven rewards, achieves either the second- or third-best score on every individual metric. This demonstrates that the combined reward strategy enables balanced, multi-faceted improvement across all biological, structural, and morphological criteria simultaneously.

6 Conclusion

In this work, we tackle a central challenge for virtual cells: encouraging generated images to be physically correct and biologically plausible.
We accomplish this by introducing a suite of biologically-informed rewards, which serve a unified role: as evaluation metrics, as training signals for reinforcement learning, and as a tool for test-time scaling. We use these rewards to optimize the state-of-the-art perturbation model CellFlux, yielding CellFluxRL. Across all rewards, our approach consistently improves physical plausibility and maintains overall image quality over the base model. Together, these results evolve virtual cell generation from pixel-level realism toward biological groundedness.

Limitations and Future Work. Our biological rewards are manually engineered based on domain expertise. A promising future direction is leveraging Large Language Models to automatically translate scientific literature into executable reward functions, significantly lowering the barrier to entry for novel domains. In this work, our core contribution is the modular multi-reward RL pipeline itself. As new generative architectures and more efficient RL algorithms emerge, they can be seamlessly integrated into this framework to further advance physically grounded generation. Finally, while we demonstrate our RL pipeline on virtual cell modeling, our approach provides a domain-agnostic framework for constrained generation. It can be adapted to other scientific fields governed by strict, non-differentiable rules, such as material science or medical imaging.

Acknowledgement

This work was supported in part by ONR Grant N00014-22-1-2110, NSF Grant 2205084, and the Stanford Institute for Human-Centered Artificial Intelligence (HAI). EBF is a Biohub, San Francisco, Investigator. S.Y. is a Chan Zuckerberg Biohub, San Francisco, Investigator.

References

[1] Michael Bereket and Theofanis Karaletsos.
Modelling cellular perturbations with the sparse additive mechanism shift variational autoencoder. In A. Oh, T. Naumann, A. Globerson, K. Saenko, M. Hardt, and S. Levine, editors, Advances in Neural Information Processing Systems, volume 36, pages 1–12, 2023.
[2] Kevin Black, Michael Janner, Yilun Du, Ilya Kostrikov, and Sergey Levine. Training diffusion models with reinforcement learning. arXiv preprint, 2023.
[3] Anis Bourou, Thomas Boyer, Marzieh Gheisari, Kévin Daupin, Véronique Dubreuil, Aurélie De Thonel, Valérie Mezger, and Auguste Genovesio. Phendiff: Revealing subtle phenotypes with diffusion models in real images. In MICCAI, 2024.
[4] Charlotte Bunne, Yusuf Roohani, Yanay Rosen, Ankit Gupta, Xikun Zhang, Marcel Roed, Theo Alexandrov, Mohammed AlQuraishi, Patricia Brennan, Daniel B Burkhardt, et al. How to build the virtual cell with artificial intelligence: Priorities and opportunities. Cell, 2024.
[5] Yuanhao Cai, Kunpeng Li, Menglin Jia, Jialiang Wang, Junzhe Sun, Feng Liang, Weifeng Chen, Felix Juefei-Xu, Chu Wang, Ali Thabet, Xiaoliang Dai, Xuan Ju, Alan Yuille, and Ji Hou. Phygdpo: Physics-aware groupwise direct preference optimization for physically consistent text-to-video generation, 2026.
[6] Peter D Caie, Rebecca E Walls, Alexandra Ingleston-Orme, Sandeep Daya, Tom Houslay, Rob Eagle, Mark E Roberts, and Neil O Carragher. High-content phenotypic profiling of drug response signatures across distinct cancer cells. Molecular Cancer Therapeutics, 2010.
[7] Srinivas Niranj Chandrasekaran, Jeanelle Ackerman, Eric Alix, D Michael Ando, John Arevalo, Melissa Bennion, Nicolas Boisseau, Adriana Borowa, Justin D Boyd, Laurent Brino, et al. Jump cell painting dataset: morphological impact of 136,000 chemical and genetic perturbations. bioRxiv, pages 2023–03, 2023.
[8] Srinivas Niranj Chandrasekaran, Beth A Cimini, Amy Goodale, Lisa Miller, Maria Kost-Alimova, Nasim Jamali, John G Doench, Briana Fritchman, Adam Skepner, Michelle Melanson, et al. Three million images and morphological profiles of cells treated with matched chemical and genetic perturbations. Nature Methods, pages 1–8, 2024.
[9] Chang Chen, Jaesik Yoon, Yi-Fu Wu, and Sungjin Ahn. Transdreamer: Reinforcement learning with transformer world models. In Deep RL Workshop NeurIPS 2021, 2021.
[10] Karl Cobbe, Vineet Kosaraju, Mohammad Bavarian, Mark Chen, Heewoo Jun, Lukasz Kaiser, Matthias Plappert, Jerry Tworek, Jacob Hilton, Reiichiro Nakano, et al. Training verifiers to solve math word problems. arXiv preprint arXiv:2110.14168, 2021.
[11] Ying Fan, Olivia Watkins, Yuqing Du, Hao Liu, Moonkyung Ryu, Craig Boutilier, Pieter Abbeel, Mohammad Ghavamzadeh, Kangwook Lee, and Kimin Lee. Dpok: Reinforcement learning for fine-tuning text-to-image diffusion models. Advances in Neural Information Processing Systems, 36:79858–79885, 2023.
[12] Marta M Fay, Oren Kraus, Mason Victors, Lakshmanan Arumugam, Kamal Vuggumudi, John Urbanik, Kyle Hansen, Safiye Celik, Nico Cernek, Ganesh Jagannathan, et al. Rxrx3: Phenomics map of biology. bioRxiv, pages 2023–02, 2023.
[13] Jonathan Ho, Ajay Jain, and Pieter Abbeel. Denoising diffusion probabilistic models. In NeurIPS, 2020.
[14] Siyuan Huang, Zan Wang, Puhao Li, Baoxiong Jia, Tengyu Liu, Yixin Zhu, Wei Liang, and Song-Chun Zhu. Diffusion-based generation, optimization, and planning in 3d scenes. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 16750–16761, June 2023.
[15] Albert Z Hung, Charles J Zhang, Jonathan Z Sexton, Matthew James O'Meara, and Joshua D Welch. Lumic: Latent diffusion for multiplexed images of cells. bioRxiv, pages 2024–11, 2024.
[16] Graham T Johnson, Eran Agmon, Matthew Akamatsu, Emma Lundberg, Blair Lyons, Wei Ouyang, Omar A Quintero-Carmona, Megan Riel-Mehan, Susanne Rafelski, and Rick Horwitz. Building the next generation of virtual cells to understand cellular biology. Biophysical Journal, 2023.
[17] Bingyi Kang, Yang Yue, Rui Lu, Zhijie Lin, Yang Zhao, Kaixin Wang, Gao Huang, and Jiashi Feng. How far is video generation from world model: A physical law perspective. In International Conference on Machine Learning, pages 28991–29017. PMLR, 2025.
[18] Alexis Lamiable, Tiphaine Champetier, Francesco Leonardi, Ethan Cohen, Peter Sommer, David Hardy, Nicolas Argy, Achille Massougbodji, Elaine Del Nery, Gilles Cottrell, et al. Revealing invisible cell phenotypes with conditional generative modeling. Nature Communications, 2023.
[19] Junzhe Li, Yutao Cui, Tao Huang, Yinping Ma, Chun Fan, Miles Yang, and Zhao Zhong. Mixgrpo: Unlocking flow-based grpo efficiency with mixed ode-sde. arXiv preprint arXiv:2507.21802, 2025.
[20] Hunter Lightman, Vineet Kosaraju, Yuri Burda, Harrison Edwards, Bowen Baker, Teddy Lee, Jan Leike, John Schulman, Ilya Sutskever, and Karl Cobbe. Let's verify step by step. In The Twelfth International Conference on Learning Representations, 2023.
[21] Han Lin, Abhay Zala, Jaemin Cho, and Mohit Bansal. Videodirectorgpt: Consistent multi-scene video generation via llm-guided planning. In COLM, 2024.
[22] Yaron Lipman, Ricky TQ Chen, Heli Ben-Hamu, Maximilian Nickel, and Matthew Le. Flow matching for generative modeling. In The Eleventh International Conference on Learning Representations, 2023.
[23] Yaron Lipman, Marton Havasi, Peter Holderrieth, Neta Shaul, Matt Le, Brian Karrer, Ricky TQ Chen, David Lopez-Paz, Heli Ben-Hamu, and Itai Gat. Flow matching guide and code.
arXiv preprint arXiv:2412.06264, 2024.
[24] Jie Liu, Gongye Liu, Jiajun Liang, Yangguang Li, Jiaheng Liu, Xintao Wang, Pengfei Wan, Di Zhang, and Wanli Ouyang. Flow-grpo: Training flow matching models via online rl. arXiv preprint, 2025.
[25] Jie Liu, Gongye Liu, Jiajun Liang, Ziyang Yuan, Xiaokun Liu, Mingwu Zheng, Xiele Wu, Qiulin Wang, Wenyu Qin, Menghan Xia, et al. Improving video generation with human feedback. arXiv preprint arXiv:2501.13918, 2025.
[26] Qihao Liu, Xi Yin, Alan Yuille, Andrew Brown, and Mannat Singh. Flowing from words to pixels: A framework for cross-modality evolution. arXiv preprint arXiv:2412.15213, 2024.
[27] Xingchao Liu, Chengyue Gong, and Qiang Liu. Flow straight and fast: Learning to generate and transfer data with rectified flow. In ICLR, 2023.
[28] Vebjorn Ljosa, Katherine L Sokolnicki, and Anne E Carpenter. Annotated high-throughput microscopy image sets for validation. Nature Methods, 2012.
[29] Nanye Ma, Shangyuan Tong, Haolin Jia, Hexiang Hu, Yu-Chuan Su, Mingda Zhang, Xuan Yang, Yandong Li, Tommi Jaakkola, Xuhui Jia, et al. Inference-time scaling for diffusion models beyond scaling denoising steps. arXiv preprint arXiv:2501.09732, 2025.
[30] Yutaka Matsuo, Yann LeCun, Maneesh Sahani, Doina Precup, David Silver, Masashi Sugiyama, Eiji Uchibe, and Jun Morimoto. Deep learning, reinforcement learning, and world models. Neural Networks, 152:267–275, 2022.
[31] Fanqing Meng, Jiaqi Liao, Xinyu Tan, Wenqi Shao, Quanfeng Lu, Kaipeng Zhang, Yu Cheng, Dianqi Li, Yu Qiao, and Ping Luo. Towards world simulator: Crafting physical commonsense-based benchmark for video generation. arXiv preprint, 2024.
[32] Marius Pachitariu and Carsen Stringer. Cellpose 2.0: how to train your own model. Nature Methods, 19(12):1634–1641, 2022.
[33] Alessandro Palma, Fabian J Theis, and Mohammad Lotfollahi.
Predicting cell morphological responses to perturbations using generative modeling. Nature Communications, 2025.
[34] Wenxu Qian, Chaoyue Wang, Hou Peng, Zhiyu Tan, Hao Li, and Anxiang Zeng. Rdpo: Real data preference optimization for physics consistency video generation, 2025.
[35] Ladislav Rampášek, Daniel Hidru, Petr Smirnov, Benjamin Haibe-Kains, and Anna Goldenberg. Dr.vae: improving drug response prediction via modeling of drug perturbation effects. Bioinformatics, 35(19):3743–3751, 03 2019.
[36] Boris M Slepchenko and Leslie M Loew. Use of virtual cell in studies of cellular dynamics. International Review of Cell and Molecular Biology, 283:1–56, 2010.
[37] Boris M Slepchenko, James C Schaff, Ian Macara, and Leslie M Loew. Quantitative cell biology with the virtual cell. Trends in Cell Biology, 2003.
[38] Charlie Snell, Jaehoon Lee, Kelvin Xu, and Aviral Kumar. Scaling llm test-time compute optimally can be more effective than scaling model parameters. arXiv preprint, 2024.
[39] Jascha Sohl-Dickstein, Eric Weiss, Niru Maheswaranathan, and Surya Ganguli. Deep unsupervised learning using nonequilibrium thermodynamics. In ICML, 2015.
[40] Yang Song and Stefano Ermon. Generative modeling by estimating gradients of the data distribution. In NeurIPS, 2019.
[41] Dawn C. Walker and Jennifer Southgate. The virtual cell—a candidate co-ordinator for 'middle-out' modelling of biological systems. Briefings in Bioinformatics, 10(4):450–461, 03 2009.
[42] Philipp Wu, Alejandro Escontrela, Danijar Hafner, Pieter Abbeel, and Ken Goldberg. Daydreamer: World models for physical robot learning. In Karen Liu, Dana Kulic, and Jeff Ichnowski, editors, Proceedings of The 6th Conference on Robot Learning, volume 205 of Proceedings of Machine Learning Research, pages 2226–2240. PMLR, 14–18 Dec 2023.
[43] Jiazheng Xu, Yu Huang, Jiale Cheng, Yuanming Yang, Jiajun Xu, Yuan Wang, Wenbo Duan, Shen Yang, Qunlin Jin, Shurun Li, Jiayan Teng, Zhuoyi Yang, Wendi Zheng, Xiao Liu, Ming Ding, Xiaohan Zhang, Xiaotao Gu, Shiyu Huang, Minlie Huang, Jie Tang, and Yuxiao Dong. Visionreward: Fine-grained multi-dimensional human preference learning for image and video generation, 2024.
[44] Zeyue Xue, Jie Wu, Yu Gao, Fangyuan Kong, Lingting Zhu, Mengzhao Chen, Zhiheng Liu, Wei Liu, Qiushan Guo, Weilin Huang, et al. Dancegrpo: Unleashing grpo on visual generation. arXiv preprint arXiv:2505.07818, 2025.
[45] Yandan Yang, Baoxiong Jia, Peiyuan Zhi, and Siyuan Huang. Physcene: Physically interactable 3d scene synthesis for embodied ai. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 16262–16272, June 2024.
[46] Lingchong You. Toward computational systems biology. Cell Biochemistry and Biophysics, 40(2):167–184, 2004.
[47] Yuhui Zhang, Yuchang Su, Chenyu Wang, Tianhong Li, Zoe Wefers, Jeffrey Nirschl, James Burgess, Daisy Ding, Alejandro Lozano, Emma Lundberg, et al. Cellflux: Simulating cellular morphology changes via flow matching. arXiv preprint arXiv:2502.09775, 2025.
[48] Kaiwen Zheng, Huayu Chen, Haotian Ye, Haoxiang Wang, Qinsheng Zhang, Kai Jiang, Hang Su, Stefano Ermon, Jun Zhu, and Ming-Yu Liu. Diffusionnft: Online diffusion reinforcement with forward process. arXiv preprint arXiv:2509.16117, 2025.
[49] Hanxin Zhu, Tianyu He, Anni Tang, Junliang Guo, Zhibo Chen, and Jiang Bian. Compositional 3d-aware video generation with llm director. In A. Globerson, L. Mackey, D. Belgrave, A. Fan, U. Paquet, J. Tomczak, and C. Zhang, editors, Advances in Neural Information Processing Systems, volume 37, pages 131618–131644, 2024.
A Algorithm of CellFluxRL

We present the full training procedure of CellFluxRL in Algorithm 1. The algorithm adapts DiffusionNFT [48] to the source-to-target flow matching setting and replaces the generic reward with our suite of biologically grounded reward functions.

Relation to DiffusionNFT. CellFluxRL adapts DiffusionNFT in three key ways. (i) Source-to-target flow: unlike standard diffusion, where the forward process adds Gaussian noise from a fixed prior, our forward process interpolates between the source control image $x_0$ and the generated target $\hat{x}_1$ (Line 11), matching the CellFlux flow matching formulation. (ii) Biological reward suite: the scalar reward $r$ (Lines 5–6) is the weighted combination of $K$ biologically grounded evaluators covering MoA consistency, structural plausibility, and morphological statistics, rather than a single generic reward. (iii) Group rollouts conditioned on $(x_0, c)$ pairs: each rollout group is conditioned on a fixed control image alongside a perturbation condition, so within-group diversity arises from the stochastic noise injection applied to $x_0$ by CellFlux, rather than from random latent initialization.

Algorithm 1 CellFluxRL: Biologically-Constrained RL Post-Training for Flow Matching
Require: Pretrained velocity $v^{\text{ref}}_{\theta}$; biological rewards $\{r_k\}_{k=1}^{K}$ with weights $\{w_k\}$; dataset $\mathcal{D}_{\text{data}} = \{(x_0, c)\}$; group size $m$; hyperparameters $\beta$ and $\beta_{\text{KL}}$; learning rate $\lambda$
1: Initialize: data collection policy $v^{\text{old}} \leftarrow v^{\text{ref}}_{\theta}$; training policy $v_{\theta} \leftarrow v^{\text{ref}}_{\theta}$; data buffer $\mathcal{D} \leftarrow \emptyset$
2: for each iteration $i = 1, 2, \ldots$ do
    # Phase 1: Rollout (Data Collection)
3:   for each $(x_0, c)$ sampled from $\mathcal{D}_{\text{data}}$ do
4:     Generate $m$ candidate perturbed images from the policy $\pi^{\text{old}}$ (by integrating $v^{\text{old}}(\cdot \mid c)$ from $x_0$): $\{\hat{x}_1^{(j)}\}_{j=1}^{m} \sim \pi^{\text{old}}(\cdot \mid x_0, c)$
5:     Compute combined biological reward for each candidate: $r^{(j)} = \sum_{k=1}^{K} w_k\, r_k(\hat{x}_1^{(j)}, c)$
6:     Normalize within the group and map to optimality probability $r^{(j)}_{\text{adv}} \in [0, 1]$: $r^{(j)}_{\text{norm}} = r^{(j)} - \frac{1}{m} \sum_{j'} r^{(j')}$, $\quad r^{(j)}_{\text{adv}} = 0.5 + 0.5 \cdot \mathrm{clip}\!\left(r^{(j)}_{\text{norm}} / Z_c,\, -1,\, 1\right)$, where $Z_c > 0$ is a normalization hyperparameter
7:     Append $\{(x_0, c, \hat{x}_1^{(j)}, r^{(j)}_{\text{adv}})\}_{j=1}^{m}$ to buffer $\mathcal{D}$
8:   end for
    # Phase 2: Training (Gradient Step)
9:   for each mini-batch $(x_0, c, \hat{x}_1, r_{\text{adv}}) \subset \mathcal{D}$ do
10:    Sample timestep $t \sim \mathcal{U}[0, 1]$
11:    Apply source-to-target forward process: $x_t = \alpha_t x_0 + \beta_t \hat{x}_1$, $\quad v_t = \dot{\alpha}_t x_0 + \dot{\beta}_t \hat{x}_1$
12:    Construct implicit positive and negative velocities: $v^{+}_{\theta}(x_t, c, t) = (1 - \beta)\, v^{\text{old}}(x_t, c, t) + \beta\, v_{\theta}(x_t, c, t)$, $\quad v^{-}_{\theta}(x_t, c, t) = (1 + \beta)\, v^{\text{old}}(x_t, c, t) - \beta\, v_{\theta}(x_t, c, t)$
13:    Compute contrastive loss: $\mathcal{L}(\theta) = r_{\text{adv}} \lVert v^{+}_{\theta} - v_t \rVert_2^2 + (1 - r_{\text{adv}}) \lVert v^{-}_{\theta} - v_t \rVert_2^2 + \beta_{\text{KL}}\, D_{\text{KL}}(v_{\theta} \,\Vert\, v^{\text{old}})$
14:    Update parameters: $\theta \leftarrow \theta - \lambda \nabla_{\theta} \mathcal{L}(\theta)$
15:  end for
    # Phase 3: Policy Update
16:  EMA update of data collection policy: $\theta^{\text{old}} \leftarrow \eta_i\, \theta^{\text{old}} + (1 - \eta_i)\, \theta$
17:  Clear buffer: $\mathcal{D} \leftarrow \emptyset$
18: end for
19: return Post-trained velocity field $v_{\theta}$

B Implementation Details

Training Configurations. Our setup largely follows DiffusionNFT [48] with a learning rate of $2 \times 10^{-5}$. For each collected generated image, forward noising and loss computation are performed at the corresponding sampling timesteps. We employ a Heun (2nd-order) ODE sampler for data collection.

C Dataset Details

BBBC021 dataset.
We use the BBBC021v1 image set [28, 6] from the Broad Bioimage Benchmark Collection, a standard benchmark for fluorescence-microscopy-based phenotypic profiling. The dataset captures chemical perturbations in MCF-7 human breast cancer cells: approximately 97,500 three-channel fluorescence images stained for DNA, F-actin, and beta-tubulin, covering 113 small-molecule compounds administered at eight concentrations each. The compounds span a broad range of biological mechanisms, and each is annotated with a mode-of-action (MoA) label. Following prior work [47], we preprocess images by correcting illumination, cropping to 96 × 96 patches centered on nuclei, and filtering out low-quality frames, yielding approximately 98K images across 26 perturbation conditions. Table 3 lists the MoA assignment for every compound used in our experiments.

D Qualitative Comparison

Figures 7 and 8 provide additional qualitative comparisons between CellFluxRL + TTS and the pretrained CellFlux baseline. Figure 7 highlights cases where the base model fails to reproduce the perturbation-specific morphological profile expected for the given MoA; CellFluxRL + TTS recovers the correct morphological signature by optimizing the MoA reward. Figure 8 shows cases where the base model produces nuclei with implausible shapes; by optimizing the roundness reward, CellFluxRL + TTS generates nuclei whose shape is consistent with the ground-truth MoA-conditioned distribution.

E Normalized Reward Analysis

Table 4 reports results for CellFluxRL post-trained with min-max normalized rewards across all 7 reward components. This variant outperforms the pretrained CellFlux baseline on every reward without requiring manual weight tuning.

Figure 7: MoA reward failure cases.
The pretrained CellFlux baseline (left) generates images that do not match the expected morphological profile for the given perturbation, as measured by the MoA reward. CellFluxRL + TTS (right) corrects these failures by explicitly optimizing for MoA consistency during RL post-training. Ground-truth target images for the same perturbation conditions are shown in Figure 4 of the main paper.

Figure 8: Roundness reward failure cases. The pretrained CellFlux baseline (left) produces nuclei with irregular, implausible shapes that deviate from the MoA-conditioned ground-truth distribution. CellFluxRL + TTS (right) generates nuclei with roundness statistics consistent with real cells under the same perturbation condition. Ground-truth target images for the same perturbation conditions are shown in Figure 4 of the main paper.

Table 3: Modes of action (MoA) for compounds in BBBC021.
| Compound | MoA |
|---|---|
| Cytochalasin B | Actin disruptors |
| Cytochalasin D | Actin disruptors |
| Latrunculin B | Actin disruptors |
| AZ258 | Aurora kinase inhibitors |
| AZ841 | Aurora kinase inhibitors |
| Mevinolin/Lovastatin | Cholesterol-lowering |
| Simvastatin | Cholesterol-lowering |
| Chlorambucil | DNA damage |
| Cisplatin | DNA damage |
| Etoposide | DNA damage |
| Mitomycin C | DNA damage |
| Camptothecin | DNA replication |
| Floxuridine | DNA replication |
| Methotrexate | DNA replication |
| Mitoxantrone | DNA replication |
| AZ138 | Eg5 inhibitors |
| PP-2 | Epithelial |
| Alsterpaullone | Kinase inhibitors |
| Bryostatin | Kinase inhibitors |
| PD-169316 | Kinase inhibitors |
| Colchicine | Microtubule destabilizers |
| Demecolcine | Microtubule destabilizers |
| Nocodazole | Microtubule destabilizers |
| Vincristine | Microtubule destabilizers |
| Docetaxel | Microtubule stabilizers |
| Epothilone B | Microtubule stabilizers |
| Taxol | Microtubule stabilizers |
| ALLN | Protein degradation |
| Lactacystin | Protein degradation |
| MG-132 | Protein degradation |
| Proteasome inhibitor I | Protein degradation |
| Anisomycin | Protein synthesis |
| Cyclohexamide | Protein synthesis |
| Emetine | Protein synthesis |
| DMSO | DMSO |

Table 4: Quantitative comparison across biological evaluation metrics (rows) for CellFlux baselines and CellFluxRL variants (columns), where CellFluxRL is post-trained with min-max normalized rewards. Bold values indicate the best performance per metric.

| Metric | CellFlux [47] | CellFluxRL |
|---|---|---|
| Biological Rewards | | |
| MoA | 0.26 | 0.28 |
| Nuc-in-Cyto | 0.88 | 0.96 |
| Roundness | -0.34 | -0.25 |
| NucSize | -2.21 | -1.34 |
| CytoSize | -1.09 | -0.64 |
| NucCount | -0.83 | -0.46 |
| CytoCount | -1.03 | -0.62 |
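To make steps 6, 12, and 13 of Algorithm 1 (Appendix A) more concrete, the following is a minimal pure-Python sketch of the group-wise optimality probability and the per-sample contrastive loss, with velocities represented as flat lists of floats. It omits the KL term and all gradient computation. The function names, the default value of `z_c`, and the toy inputs are illustrative assumptions and not the authors' implementation.

```python
def optimality_probability(rewards, z_c=1.0):
    """Step 6: center rewards within one rollout group, scale by z_c,
    clip to [-1, 1], and map to an optimality probability in [0, 1]."""
    mean = sum(rewards) / len(rewards)
    return [0.5 + 0.5 * max(-1.0, min(1.0, (r - mean) / z_c)) for r in rewards]

def squared_error(a, b):
    """Squared L2 distance between two equally sized vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def contrastive_loss(v_theta, v_old, v_target, r_adv, beta=1.0):
    """Steps 12-13 for one sample, without the KL regularizer.

    v_theta:  velocity predicted by the policy being trained
    v_old:    velocity predicted by the data collection policy
    v_target: target velocity v_t from the forward process (step 11)
    r_adv:    optimality probability of this sample, in [0, 1]
    """
    # Implicit positive and negative velocities (step 12).
    v_pos = [(1.0 - beta) * o + beta * t for o, t in zip(v_old, v_theta)]
    v_neg = [(1.0 + beta) * o - beta * t for o, t in zip(v_old, v_theta)]
    # Reward-weighted mix of the two regression errors (step 13).
    return (r_adv * squared_error(v_pos, v_target)
            + (1.0 - r_adv) * squared_error(v_neg, v_target))

# Toy rollout group of four combined rewards (made-up values).
group_probs = optimality_probability([0.0, 1.0, 2.0, 5.0], z_c=2.0)
print(group_probs)

# One-dimensional toy velocities (made-up values): the trained policy
# already matches the target, while the old policy predicts zero.
print(contrastive_loss([1.0], [0.0], [1.0], r_adv=1.0))
print(contrastive_loss([1.0], [0.0], [1.0], r_adv=0.0))
```

In this toy setting, a sample with `r_adv = 1` is penalized only through the positive branch, so the loss vanishes when the trained velocity matches the target. A sample with `r_adv = 0` is penalized through the negative branch, which pushes the trained velocity away from that target relative to the old policy.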
