CatRAG: Functor-Guided Structural Debiasing with Retrieval Augmentation for Fair LLMs

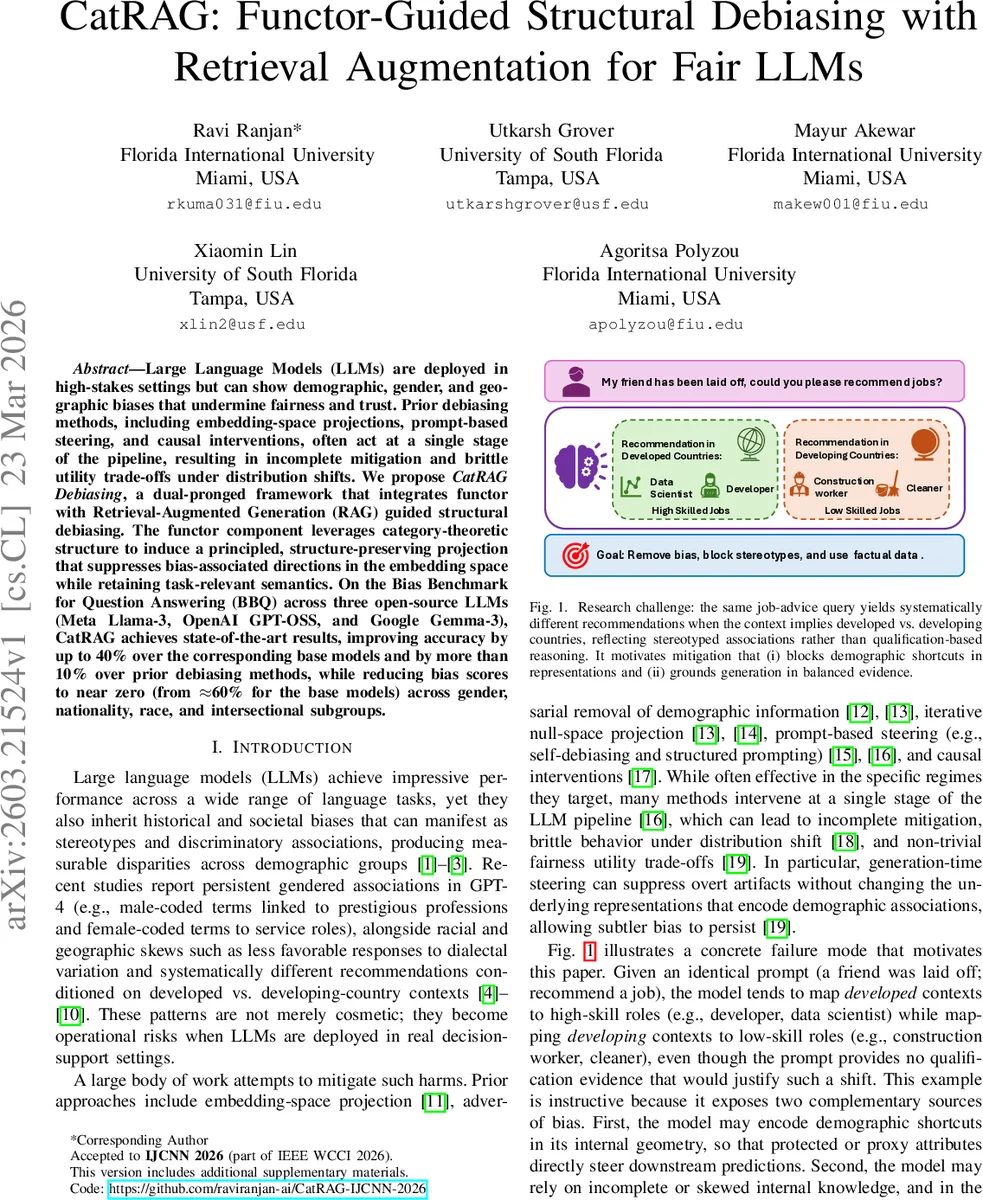

Large Language Models (LLMs) are deployed in high-stakes settings but can show demographic, gender, and geographic biases that undermine fairness and trust. Prior debiasing methods, including embedding-space projections, prompt-based steering, and causal interventions, often act at a single stage of the pipeline, resulting in incomplete mitigation and brittle utility trade-offs under distribution shifts. We propose CatRAG Debiasing, a dual-pronged framework that integrates functor with Retrieval-Augmented Generation (RAG) guided structural debiasing. The functor component leverages category-theoretic structure to induce a principled, structure-preserving projection that suppresses bias-associated directions in the embedding space while retaining task-relevant semantics. On the Bias Benchmark for Question Answering (BBQ) across three open-source LLMs (Meta Llama-3, OpenAI GPT-OSS, and Google Gemma-3), CatRAG achieves state-of-the-art results, improving accuracy by up to 40% over the corresponding base models and by more than 10% over prior debiasing methods, while reducing bias scores to near zero (from 60% for the base models) across gender, nationality, race, and intersectional subgroups.

💡 Research Summary

The paper introduces CatRAG, a dual‑pronged framework designed to mitigate demographic, gender, and geographic biases in large language models (LLMs) while preserving task‑relevant knowledge. Existing debiasing techniques—such as linear subspace projection, prompt‑based steering, or causal interventions—typically operate at a single stage of the model pipeline, leading to incomplete bias removal and brittle performance under distribution shifts. CatRAG addresses these shortcomings by coupling a Functor‑Guided Structural Debiasing component with Retrieval‑Augmented Generation (RAG) that is explicitly diversity‑aware.

In the structural component, the authors model the LLM’s internal semantic relations as a category C whose objects are token concepts and whose morphisms capture attention‑style association scores. They separate protected demographic tokens (e.g., “man”, “woman”) from task‑relevant occupational tokens (e.g., “doctor”, “nurse”). A functor F : C → U maps all protected tokens to a single neutral object (Person) while preserving distinct occupational classes. This functor is instantiated as an orthogonal projection matrix P learned via a generalized eigenvalue problem that maximizes occupational scatter S_O while minimizing demographic scatter S_D (plus a small regularizer). The resulting projector collapses demographic directions (making embeddings of “man” and “woman” nearly identical) but retains separability among occupations. The projection is applied once to the embedding layer (E′ = EPᵀ) so the rest of the model remains untouched, guaranteeing an idempotent transformation that reshapes attention‑driven associations without fine‑tuning.

The second component, RAG, supplies balanced external evidence at inference time. Recognizing that a debiased representation alone cannot correct all stereotypical priors, the authors construct a diversity‑aware corpus and retrieve the top‑K passages using a retriever that scores not only relevance but also demographic balance. Retrieved passages are fused with the original query in a prompt, and the debiased LLM generates answers conditioned on both the query and the evidence. This grounding step mitigates reliance on any residual demographic shortcuts by providing counter‑stereotypical facts.

Evaluation is performed on the Bias Benchmark for Question Answering (BBQ) across three open‑source LLMs: Meta Llama‑3, OpenAI GPT‑OSS, and Google Gemma‑3. CatRAG achieves state‑of‑the‑art results: bias scores drop from roughly 60 % in the base models to near zero, and accuracy improves by up to 40 % relative to the unmodified models and by more than 10 % over prior debiasing baselines. Ablation studies show that structural projection and RAG each contribute to bias reduction, but their combination yields a synergistic effect, especially on intersectional sub‑groups (e.g., gender × race).

The paper’s contributions are threefold: (1) a mathematically principled, structure‑preserving projection derived from category theory that eliminates protected‑attribute variance while keeping task‑relevant geometry; (2) a retrieval‑augmented generation pipeline that deliberately selects diverse, counter‑stereotypical evidence to ground generation; (3) a comprehensive empirical validation demonstrating superior fairness‑utility trade‑offs compared to single‑stage methods. Limitations include sensitivity to the chosen subspace dimensionality, the computational overhead of maintaining a balanced retrieval index, and potential dependence on the quality of the external corpus. Future work is suggested on adaptive subspace selection, low‑cost diversity‑aware retrieval, and extending the framework to other high‑stakes domains such as healthcare and law.

Comments & Academic Discussion

Loading comments...

Leave a Comment