NOWA: Null-space Optical Watermark for Invisible Capture Fingerprinting and Tamper Localization

Ensuring the authenticity and ownership of digital images is increasingly challenging as modern editing tools enable highly realistic forgeries. Existing image protection systems mainly rely on digital watermarking, which is susceptible to sophistica…

Authors: Edwin Vargas, Jhon Lopez, Henry Arguello, Ashok Veeraraghavan

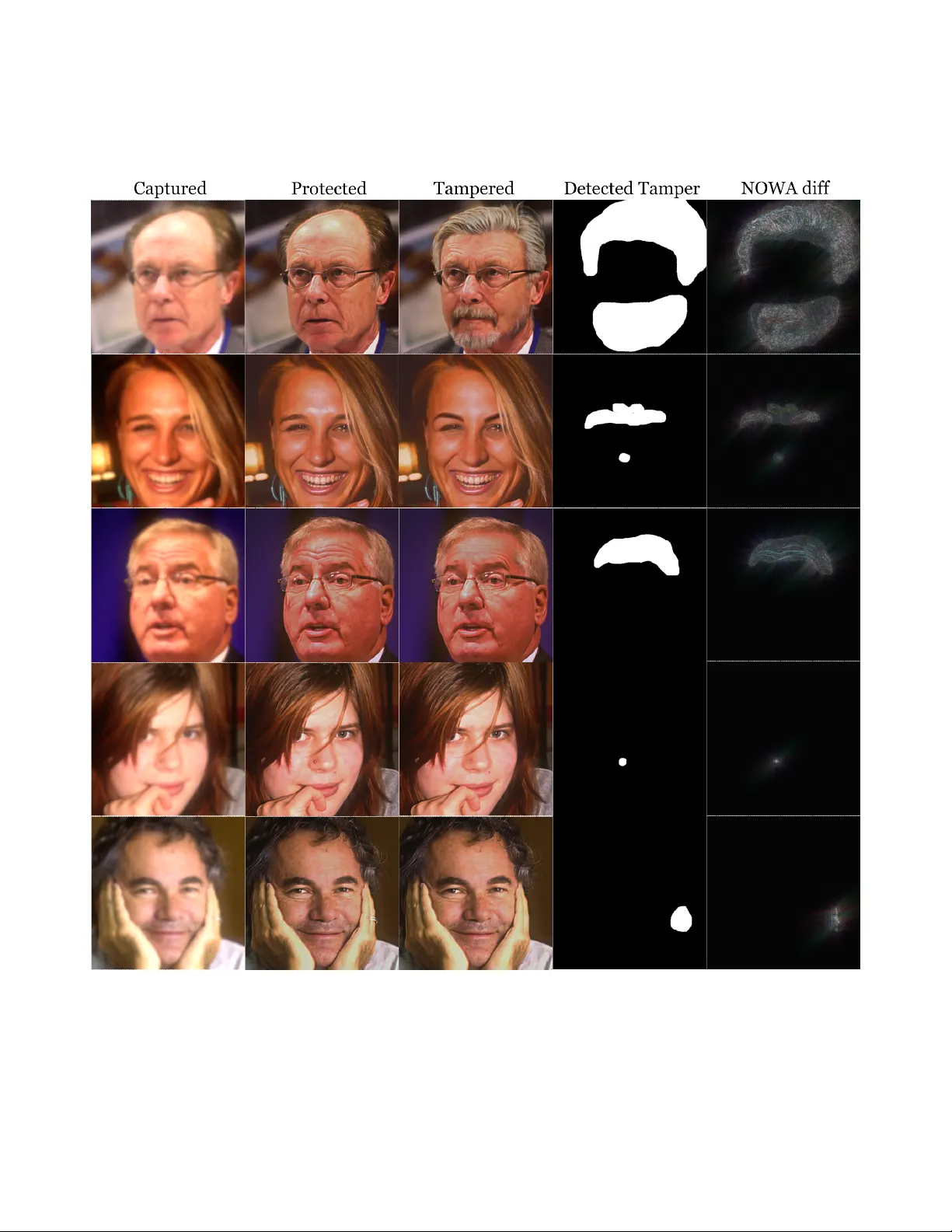

Edwin Vargas¹*, Jhon Lopez², Henry Arguello², Ashok Veeraraghavan¹
¹Rice University, ²Universidad Industrial de Santander
*edwin.vargas@ieee.org

Abstract

Ensuring the authenticity and ownership of digital images is increasingly challenging as modern editing tools enable highly realistic forgeries. Existing image protection systems mainly rely on digital watermarking, which is susceptible to sophisticated digital attacks. To address this limitation, we propose a hybrid optical-digital framework that incorporates physical authentication cues during image formation and preserves them through a learned reconstruction process. At the optical level, a phase mask in the camera aperture produces a Null-space Optical Watermark (NOWA) that lies in the null space of the imaging operator and therefore remains invisible in the captured image. Then, a Null-Space Network (NSN) performs measurement-consistent reconstruction that delivers high-quality protected images while preserving the NOWA signature. The proposed design enables tamper localization by projecting the image onto the camera's null space and detecting pixel-level inconsistencies. Our design preserves perceptual quality, resists common degradations such as compression, and establishes a structural security asymmetry: without access to the optical or NSN parameters, adversaries cannot forge the NOWA signature. Experiments with simulations and a prototype camera demonstrate competitive performance in terms of image quality preservation and tamper localization accuracy compared to state-of-the-art digital watermarking and learning-based authentication methods.

1. Introduction

We live in an era dominated by digital media, where images and videos have become a primary medium for communication, documentation, and evidence.
At the same time, the technological advances that facilitate this ubiquity have also enabled the creation, manipulation, and dissemination of digital images and videos with unprecedented ease, eroding trust in visual media. From generative editing tools to large-scale diffusion models, synthetic forgeries can now mimic reality with alarming fidelity, prompting the increasingly common question: Is this real or AI? For instance, a deepfake video of Ukrainian President Volodymyr Zelenskyy in 2022 demonstrates how fake or doctored media artifacts can significantly degrade people's trust in digital media today. Further, this highlights the urgent need for robust forensic methods for images and videos to detect and counteract media attacks.

Most existing protection methods rely on digital watermarking [13, 25, 71], which embeds imperceptible codes into images for later verification. However, most of these techniques are applied post-capture, making them susceptible to removal or degradation through common editing or compression processes. To overcome this limitation, optical watermarking embeds authentication cues at the point of image formation [20, 23, 62], offering hardware-level protection. Prior optical watermarking systems have been engineered with a focus on robustness, aiming to preserve watermark integrity under various transformations such as printing, scanning, and lossy compression. However, these systems often rely on complex hardware configurations, including structured light projection and tightly calibrated camera setups [22, 37, 42, 62], which can be costly and difficult to deploy. While robustness is desirable in copyright protection and media tracing, it can be counterproductive in security tasks that demand sensitivity to even minimal tampering.
Fragile watermarking addresses this need by making the watermark highly sensitive to changes, supporting pixel-level integrity verification [44, 53, 67]. However, fragile watermarking remains largely underexplored in the optical domain. Recent efforts [4] using coded apertures begin to bridge this gap by embedding imperceptible optical signatures, but exhibit notably low image quality and offer only binary authenticity verification without the ability to localize tampering.

Figure 1. A hybrid physical-digital pipeline for fragile authentication. (a) Optical-digital fingerprint: A phase mask (PM) in the camera aperture optically encodes the scene, embedding a unique physical signature before digitization. A neural network $f_\theta$ reconstructs high-quality protected images by recovering the information hidden in the camera's null space $\mathcal{N}$. (b) Image verification: The tested image is projected onto $\mathcal{N}$ to obtain a signature map revealing the embedded optical fingerprint. A detector $d_\psi$ analyzes this map to detect and localize tampering by distinguishing predictable system noise from adversarial errors, confirming integrity at the pixel level.

Every camera inherently leaves a unique optical signature in its images arising from the physics of its lenses, apertures, and sensors. We leverage this intrinsic property for authentication by introducing a custom phase mask (PM) in the optical path of the camera to transform the natural optical signature into a deterministic and verifiable physical-layer watermark embedded during image formation and before the digital life cycle. Unlike post-capture digital watermarks, this optical encoding cannot be removed or accurately reproduced without knowledge of the exact hardware configuration.
Additionally, this subtle and spatially distributed modulation by the PM enables the use of compact, standard imaging devices under everyday incoherent illumination, eliminating the need for complex holographic systems or specialized lighting. However, operating solely on raw optically encoded data presents two key challenges: 1) the encoded images exhibit inherent blur, making them unsuitable for direct visualization or conventional image processing, and 2) access to raw sensor data could allow adversaries to spoof or reverse-engineer the optical signature. These limitations motivate the integration of a learned digital component that restores image quality while preserving the underlying physical signature.

Here, we introduce a hybrid optical–digital framework that unifies the PM encoding with learned digital processing for end-to-end image protection. This integration allows our system to proactively embed optical authentication cues at the moment of capture while preserving the visual characteristics expected from end-consumer imaging devices. More precisely, our approach builds on the observation that the null space of the imaging operator defined by the PM contains information that is optically unmeasurable but mathematically defined. We exploit this property to embed a Null-space Optical Watermark (NOWA) that is invisible during capture and recoverable only through the camera's forward model. To restore high-quality protected images, we use a Null-Space Network (NSN) [52]. The NSN learns the inverse optical mapping with measurement consistency, ensuring that reconstructions preserve the NOWA. This design creates a built-in security asymmetry: without the optical parameters and trained NSN parameters, the NOWA signature cannot be forged.
Furthermore, our hybrid design enables flexible and high-accuracy verification via a two-stage mechanism: isolating the unique optical signature through a null-space projection of the designed camera and classifying tampering with a dedicated neural network. We evaluate our approach using different attack scenarios in simulations, demonstrating competitive tamper localization accuracy compared to state-of-the-art digital watermarking and learning-based authentication methods. We translate our digitally designed phase mask to hardware and demonstrate high-quality protected images in real experiments.

2. Related Work

Optical Watermarking. Optically Coded Capture. Several works explore embedding authentication cues directly in the optical domain. Ma et al. [36] introduce physical refractive objects (totems) whose captured regions act as coarse signatures for manipulation detection. Ptychography-based phase encoding enables optical watermarking using single-shot and non-mechanical setups [37, 62]. Natural chromatic aberrations can also serve as intrinsic encoders [40], revealing forged regions through aberration inconsistencies. Sensor-level fingerprints have been explored via pattern noise [35] and learned noise residuals [8], enabling device-specific forgery localization.

Coded Illumination. Watermarks can also be embedded via structured lighting. Controlled illumination schemes [21, 22, 56] and noise-coded illumination [42] enable spatial and temporal forgery detection. Related works extend this to single-pixel imaging [63], display-based encoding [15], and time-varying illumination for simultaneous imaging and concealment [64].

Optical Coding for Encryption. Optical encryption methods [48] and hardware-based key systems such as OpEnCam [28] encode scenes using proprietary masks, ensuring decryption only with known hardware keys.
Similarly, color-coded apertures [4] embed authentication signatures during capture, though at the cost of degraded image quality.

Digital Watermarking. Deep learning has transformed digital watermarking by improving both imperceptibility and robustness. HiDDeN [72] first introduced an end-to-end encoder–decoder for data embedding and recovery, followed by flow-based generative models [12, 38] that exploit invertibility for high-fidelity watermark extraction. Differentiable augmentations during training, such as JPEG compression and realistic perturbations, enhance robustness to signal distortions [2, 11, 31, 60]. Self-supervised embedding further improves watermark security [14]. Recent efforts focus on watermark resilience under AI-generated content (AIGC). Robust-Wide [19] and VINE [33] use diffusion models [50] for robust watermark recovery under text-guided edits, albeit with high computational cost. Proactive watermarking introduces identity-sensitive and tamper-aware encoding [70], shifting from passive protection to active defense. EditGuard [68] extends this idea by unifying copyright verification with tamper localization through dual-invisible watermarks, while OmniGuard [69] generalizes the approach to high-resolution, manipulation-aware scenarios.

Computational Photography. The co-design of optics and algorithms is at the core of computational photography. This paradigm has been vastly accelerated by modern automatic differentiation programming tools [1, 46], which enable the implementation of fully differentiable pipelines. This approach has driven innovation across numerous applications, including color imaging and demosaicing [5], extended depth of field [54], depth imaging [6, 32, 61], spectral imaging [3, 57, 58], high dynamic range (HDR) imaging [41], image classification [7, 43], microscopy [24], and many others.
Our work extends this end-to-end optimization technique for the purpose of authentication.

3. Method

We propose an authentication method that leverages the advantages of both physical and digital solutions. Our proposed hybrid authentication system integrates optical front-end engineering with a Null-Space Network to form a differentiable end-to-end pipeline. The light propagation through the optical system is simulated using Fourier optics [16, 27, 61], enabling the joint optimization of both the optical parameters and the neural network for the authentication task. Mathematically, the proposed hybrid watermarked image is

$$x_p = f_\theta(g_\phi(x)), \tag{1}$$

where $x \in \mathbb{R}^n$ denotes the input scene, $g_\phi(\cdot)$ models the optical image formation process using a phase mask parameterized by $\phi$, and $f_\theta(\cdot)$ is the reconstruction NSN with parameters $\theta$, which processes the optically encoded images to obtain the protected image $x_p \in \mathbb{R}^n$. Following reconstruction, the final stage of our pipeline is a verification mechanism that operates on the protected image $x_p$ to detect and localize tampering at the pixel level. In the following, we present more details about the imaging model, the NSN, and tamper detection and localization.

3.1. Preliminaries

Imaging Model. Our optical system $g_\phi$ consists of a conventional imaging lens and a custom phase mask at the pupil plane. The combined effect of the lens and the phase mask defines the system's point spread function (PSF), which encodes a unique physical signature into every captured image. In the paraxial regime, the phase shift imparted by the thickness profile of a convex lens with focal length $f$ and wavelength-dependent refractive index $n(\lambda)$ produces the lens transmission function [17]

$$t_L(x, y) = \exp\!\left(-i\,\frac{k}{2f}\,(x^2 + y^2)\right), \tag{2}$$

with $k = 2\pi/\lambda$ denoting the wavenumber and $(x, y)$ the spatial coordinates on the normalized pupil plane.
To model the additional modulation introduced by the engineered phase mask, we define a spatially varying height profile $h_\phi(x, y)$ that produces a wavelength-dependent phase delay

$$\phi_M(x, y) = k\,(n_M(\lambda) - 1)\,h_\phi(x, y), \tag{3}$$

where $n_M(\lambda)$ is the refractive index of the mask material. The corresponding transmission function of the mask is then

$$t_M(x, y) = \exp\!\left(i\,\phi_M(x, y)\right). \tag{4}$$

The combined pupil function of the lens–phase-mask optical system is given by

$$P(x, y) = A(x, y)\,t_L(x, y)\,t_M(x, y), \tag{5}$$

where $A(x, y)$ is an aperture function that equals 1 within the open pupil and 0 elsewhere. Given an input optical field $U_{\text{in}}(x, y)$ incident on the pupil, the modulated field immediately after the lens–mask assembly is

$$U_{\text{out}}(x, y) = P(x, y)\,U_{\text{in}}(x, y). \tag{6}$$

This field then propagates a distance $s$ to the sensor plane according to the angular spectrum propagation model [17], resulting in

$$U_{\text{sensor}}(x', y') = \mathcal{F}^{-1}\{\mathcal{F}\{U_{\text{out}}(x, y)\} \cdot H_s(f_x, f_y)\}, \tag{7}$$

with $H_s(f_x, f_y) = \exp\!\left(i k s \sqrt{1 - (\lambda f_x)^2 - (\lambda f_y)^2}\right)$, where $(f_x, f_y)$ denote spatial frequency coordinates, $\mathcal{F}\{\cdot\}$ and $\mathcal{F}^{-1}\{\cdot\}$ denote the forward and inverse 2D Fourier transforms, and $(x', y')$ denote the spatial coordinates on the sensor plane. Finally, the sensor measures light intensity, and the wavelength-specific PSF is given by

$$p_{\phi,\lambda}(x', y') = |U_{\text{sensor}}(x', y')|^2. \tag{8}$$

Given this formulation, the sensor measurement in the color channel centered at wavelength $\lambda$, i.e., $y_\lambda \in \mathbb{R}^n$, can be approximated as the convolution between the input scene $x_\lambda$ and the engineered PSF,

$$y_\lambda = g_\phi(x_\lambda) = x_\lambda * p_{\phi,\lambda} + \mathbf{n}, \tag{9}$$

where $*$ is the convolution operator, $p_{\phi,\lambda}$ is the vector representation of the PSF, and $\mathbf{n} \in \mathbb{R}^n$ models additive noise. The design parameters $\phi$ directly control the optical response through $h_\phi(x, y)$, enabling programmable modulation of the image formation process.
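As an illustration only, the image formation model in Eqs. (2)–(9) can be sketched in a few lines of NumPy. This is a minimal sketch under stated assumptions, not the implementation used in this work: the function names `psf_from_height_map` and `capture`, the unit-amplitude plane-wave input, the circular pupil, the circular (FFT-based) convolution, and all numerical values are hypothetical choices made for the example.

```python
import numpy as np

def psf_from_height_map(height, wavelength, n_mask, focal_length, sensor_dist, pitch):
    """Intensity PSF of a lens plus phase-mask pupil (sketch of Eqs. 2-8)."""
    n = height.shape[0]
    k = 2.0 * np.pi / wavelength                         # wavenumber
    coords = (np.arange(n) - n // 2) * pitch             # pupil-plane coordinates [m]
    x, y = np.meshgrid(coords, coords)
    r2 = x**2 + y**2
    aperture = (r2 <= (n * pitch / 2.0) ** 2).astype(float)   # A(x, y), assumed circular
    t_lens = np.exp(-1j * k / (2.0 * focal_length) * r2)      # Eq. (2)
    t_mask = np.exp(1j * k * (n_mask - 1.0) * height)         # Eqs. (3)-(4)
    u_out = aperture * t_lens * t_mask                        # Eqs. (5)-(6) with U_in = 1
    # Angular-spectrum transfer function H_s of Eq. (7); evanescent terms are dropped.
    f = np.fft.fftfreq(n, d=pitch)
    fx, fy = np.meshgrid(f, f)
    arg = 1.0 - (wavelength * fx) ** 2 - (wavelength * fy) ** 2
    h_s = np.where(arg > 0, np.exp(1j * k * sensor_dist * np.sqrt(np.abs(arg))), 0.0)
    u_sensor = np.fft.ifft2(np.fft.fft2(u_out) * h_s)
    psf = np.abs(u_sensor) ** 2                               # Eq. (8)
    return psf / psf.sum()                                    # normalized to unit energy

def capture(scene, psf, noise_std=0.0, rng=None):
    """Sensor measurement y = x * p + n (Eq. 9), using circular convolution."""
    otf = np.fft.fft2(np.fft.ifftshift(psf))                  # move PSF centre to index 0
    blurred = np.real(np.fft.ifft2(np.fft.fft2(scene) * otf))
    if noise_std > 0:
        rng = np.random.default_rng() if rng is None else rng
        blurred = blurred + rng.normal(0.0, noise_std, scene.shape)
    return blurred

# Toy usage: flat mask (no modulation), sensor placed at the focal distance.
height = np.zeros((64, 64))
psf = psf_from_height_map(height, 550e-9, 1.5, 50e-3, 50e-3, 10e-6)
scene = np.random.default_rng(0).random((64, 64))
y = capture(scene, psf)
```

Replacing the flat `height` with a nonzero profile yields the coded measurement. A practical simulation would also need a pupil sampling fine enough to resolve the lens phase without aliasing, which this toy configuration only checks by hand.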
This Fourier optics formulation follows the standard treatment in computational imaging and phase mask design [16, 27, 61]. For clarity, we henceforth omit the wavelength subscript and assume each color channel is processed independently.

PSF Parameterization. To ensure smoothness and manufacturability of the designed phase mask, we parameterize the height profile $h_\phi(x, y)$ using a truncated expansion of Zernike polynomials:

$$h_\phi(x, y) = \sum_{k=1}^{K} \phi_k Z_k(x, y), \tag{10}$$

where $Z_k(x, y)$ denotes the $k$-th Zernike mode and $\phi_k$ its corresponding coefficient. This parameterization constrains the optimization space to physically realizable surfaces while maintaining differentiability for end-to-end learning. The coefficients $\phi_k$ are optimized jointly with the digital reconstruction network through gradient-based optimization, allowing the optical and computational components to co-adapt for optimal image authentication performance.

Null-Space Networks for Reconstruction. The measurement process in (9) can be modeled as

$$y = A_\phi x + \mathbf{n}, \tag{11}$$

where $A_\phi$ encodes the optical forward model determined by the phase mask and aperture parameters $\phi$. Due to diffraction and sampling limits, $A_\phi$ is ill-conditioned, meaning that only part of the signal is observable, while information in its null space,

$$\mathcal{N}(A_\phi) = \{ z \mid A_\phi z = 0 \}, \tag{12}$$

is completely invisible to the sensor. To address this, Null-Space Networks (NSNs) [51] have been designed to reconstruct both an accurate signal and the lost information in $\mathcal{N}(A_\phi)$. More precisely, given a regularized estimate $\hat{x}_r = r(y) \in \mathcal{N}(A_\phi)^\perp$, obtained via a regularized inverse (e.g., pseudoinverse, Tikhonov, or Wiener deconvolution), the NSN recovers an image defined as

$$x_p = f_\theta(\hat{x}_r) = \hat{x}_r + \Pi_{\mathcal{N}}\, U_\theta(\hat{x}_r), \tag{13}$$

where $\Pi_{\mathcal{N}} = I - A_\phi^\dagger A_\phi$ is the orthogonal projector onto $\mathcal{N}(A_\phi)$.
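To make Eq. (13) concrete, the following toy example builds the projector $\Pi_{\mathcal{N}}$ for a small dense matrix and checks numerically that a null-space correction leaves the measurement unchanged. It is a sketch under assumptions: the random 6 × 10 matrix stands in for $A_\phi$, the pseudoinverse plays the role of the regularized inverse $r(\cdot)$, the measurement is noise-free, and `residual_net` is a hypothetical fixed nonlinear map standing in for the learned network $U_\theta$.

```python
import numpy as np

def null_space_projector(A):
    """Orthogonal projector onto N(A): Pi_N = I - A^+ A."""
    return np.eye(A.shape[1]) - np.linalg.pinv(A) @ A

def nsn_reconstruct(x_r, proj_null, residual_net):
    """Eq. (13): add a correction that is restricted to the null space."""
    return x_r + proj_null @ residual_net(x_r)

rng = np.random.default_rng(0)
A = rng.standard_normal((6, 10))        # toy forward model: 6 measurements, 10 unknowns
x = rng.standard_normal(10)             # toy scene
y = A @ x                               # noise-free measurement
x_r = np.linalg.pinv(A) @ y             # estimate lying in the orthogonal complement of N(A)

W = rng.standard_normal((10, 10))

def residual_net(v):
    """Hypothetical stand-in for the learned network U_theta."""
    return np.tanh(W @ v)

P = null_space_projector(A)
x_p = nsn_reconstruct(x_r, P, residual_net)
signature = P @ x_p                     # null-space component carried by x_p

print(np.allclose(A @ x_p, y))          # measurement consistency holds
print(np.allclose(signature, P @ residual_net(x_r)))
```

For a convolutional forward model at image scale, forming the pseudoinverse explicitly is impractical. One option is to apply the projector in the Fourier domain, where a circular convolution is diagonal; how the projector is applied at image scale is not detailed in this section.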
This formulation enforces strict measurement consistency, $A_\phi f_\theta(\hat{x}_r) = A_\phi \hat{x}_r = y$, while allowing the learned network $U_\theta$ to recover the unobservable components.

3.2. Null-space Optical Watermark (NOWA)

We propose to exploit the unobservable subspace $\mathcal{N}(A_\phi)$ as a secure channel to embed a Null-space Optical Watermark (NOWA) that cannot be imitated without access to $A_\phi$. Unlike digital watermarks, the NOWA is physically encoded during image formation and remains invisible in the raw capture. However, the encoded images are not directly usable by end users, and any standard reconstruction algorithm would treat the NOWA as noise and remove it. To prevent this, we use the NSN framework to recover the protected image $x_p$ while anchoring the NOWA within it. By leveraging measurement consistency, the null space provides a mathematically secure embedding: its energy is annihilated by $A_\phi$ but recoverable through the projection $\Pi_{\mathcal{N}}$. Any modification to $x_p$ that is inconsistent with $\mathcal{N}(A_\phi)$ can be detected by re-projecting the image and analyzing the residual null-space statistics. This observation forms the foundation of our detection and localization pipeline.

Tamper Detection and Localization. The authentication stage of our framework operates entirely in the null-space domain defined by $A_\phi$. Given a protected reconstruction $x_p$, we extract a raw signature map by projecting it onto $\mathcal{N}(A_\phi)$:

$$s = \Pi_{\mathcal{N}}(x_p) = \Pi_{\mathcal{N}}\, U_\theta(\hat{x}_r), \tag{14}$$

where we use that $\Pi_{\mathcal{N}}(\hat{x}_r) = 0$ for $\hat{x}_r \in \mathcal{N}(A_\phi)^\perp$ and $\Pi_{\mathcal{N}}^2 = \Pi_{\mathcal{N}}$, thus isolating the learned component constrained to the unobservable subspace of the optical model. This representation captures physically consistent but measurement-invisible features that serve as the intrinsic signature of the designed optics. For genuine images, $s$ exhibits a stable, predictable spatial pattern determined by the optical encoding.
Tampered regions, in contrast, disrupt this structure, leading to anomalous null-space responses. To interpret deviations in the null space, we employ a convolutional neural network (CNN) detector $d_\psi$ that maps $s$ to per-pixel authenticity probabilities:

$$m = d_\psi(s), \tag{15}$$

where $m_i \in [0, 1]$ denotes the confidence that pixel $i$ belongs to an authentic region. The detector is trained with pixel-wise supervision to separate natural null-space variations from tampering artifacts that violate the physical imaging constraints imposed by $A_\phi$.

3.3. Optimization

We jointly optimize the entire pipeline, including the optical forward model $g_\phi$, the Null-Space Network $f_\theta$, and the tamper detector $d_\psi$, in an end-to-end fashion. This joint training allows the optics and neural modules to co-adapt for both faithful image reconstruction and reliable authenticity verification. The total objective combines reconstruction fidelity, perceptual consistency, and tamper discrimination:

$$\mathcal{L}_{\text{total}} = \mathcal{L}_{\text{rec}} + \beta\,\mathcal{L}_{\text{perc}} + \lambda\,\mathcal{L}_{\text{cls}}, \tag{16}$$

where $\beta$ and $\lambda$ balance the relative importance of perceptual quality and tamper detection, respectively (here $\lambda$ is a loss weight, not the wavelength). The reconstruction term enforces data fidelity, $\mathcal{L}_{\text{rec}} = \|x - x_p\|_2^2$, while the perceptual term encourages semantic similarity in a feature space extracted from a pretrained network, $\mathcal{L}_{\text{perc}} = \sum_i \|\varphi_i(x) - \varphi_i(x_p)\|_2^2$, where $\varphi_i(\cdot)$ denotes the activation of the $i$-th layer in the reference network. Finally, the classification loss supervises per-pixel authenticity prediction:

$$\mathcal{L}_{\text{cls}} = -\left[c \log m + (1 - c) \log(1 - m)\right], \tag{17}$$

with $c \in \{0, 1\}^n$ denoting the ground-truth authenticity mask and $m = d_\psi(\Pi_{\mathcal{N}}(x_p))$ representing the predicted authenticity map.
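The objective in Eqs. (16)–(17) can be written out as below. This is a minimal sketch, assuming NumPy arrays in place of a differentiable framework; the helper name `total_loss`, the default weights `beta` and `lam`, the clipping constant `eps`, and the averaging of the cross-entropy over pixels are assumptions for illustration, since those details are not reported in the text. The feature lists stand in for the activations $\varphi_i(\cdot)$ of a pretrained network.

```python
import numpy as np

def total_loss(x, x_p, feats_x, feats_xp, c, m, beta=0.1, lam=1.0, eps=1e-7):
    """L_total = L_rec + beta * L_perc + lam * L_cls (sketch of Eqs. 16-17)."""
    # Reconstruction fidelity: squared L2 distance between scene and protected image.
    l_rec = np.sum((x - x_p) ** 2)
    # Perceptual term: squared L2 distance summed over a list of feature maps.
    l_perc = sum(np.sum((fa - fb) ** 2) for fa, fb in zip(feats_x, feats_xp))
    # Binary cross-entropy between authenticity mask c and prediction m,
    # averaged over pixels (assumed); clipping avoids log(0).
    m = np.clip(m, eps, 1.0 - eps)
    l_cls = -np.mean(c * np.log(m) + (1.0 - c) * np.log(1.0 - m))
    return l_rec + beta * l_perc + lam * l_cls

# Toy usage with identity "features" and a 2 x 2 authenticity mask.
x = np.ones((4, 4))
c = np.array([[1.0, 0.0], [0.0, 1.0]])
loss_perfect = total_loss(x, x, [x], [x], c, c)          # all three terms near zero
loss_wrong_mask = total_loss(x, x, [x], [x], c, 1.0 - c)  # only L_cls is large
```

In a real training loop the same expression would be evaluated on framework tensors so that gradients reach the phase-mask coefficients, the NSN, and the detector together.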
By jointly optimizing these objectives, the system learns to (1) reconstruct images consistent with the optical forward model, (2) encode verifiable null-space signatures unique to the camera's phase mask, and (3) detect deviations caused by digital tampering. This integrated training strategy naturally aligns the Null-Space Network's reconstruction behavior with the tamper detector's sensitivity, allowing the model to focus on physically plausible, authentication-relevant features.

Finally, because the physical phase mask $\phi$ and the learned models are co-optimized, the resulting system inherits an intrinsic unforgeability before the digital life cycle of the image: only images produced through the authentic optical path can produce consistent NOWAs and pass the verification stage. Even if the NSN were compromised, the absence of the optical encoding would prevent an attacker from reproducing valid signatures, preserving the system's physical integrity guarantees.

4. Results

4.1. Experimental Setup

To train our model, we utilize the FFHQ dataset [26], which offers a diverse collection of high-resolution facial images, enabling robust evaluation of image authenticity and degradation resistance. For image modification, we adopt BiSeNetV2 [65] as the face parsing model to generate precise segmentation maps $m$, followed by Stable Diffusion Inpainting [49] to produce realistic and context-aware edits. Model evaluation is conducted on the EditGuard test set [68], comprising 1,000 images sampled from the COCO 2017 dataset [29]. All experiments are performed on a single NVIDIA H100 GPU with 80 GB of VRAM. The size of the designed phase mask is 2.835 mm × 2.835 mm with a resolution of 256 × 256 pixels. We employ the AdamW optimizer with a learning rate of $1 \times 10^{-4}$, weight decay of $1 \times 10^{-2}$, and a batch size of 32. Each model is trained for $N_{\text{epochs}}$ epochs.
To enhance generalization and robustness to real-world conditions, we apply data augmentations, including random cropping and additive Gaussian noise.

4.2. Comparison to competing baselines

We compare our method with representative models for manipulation localization and image authenticity detection. MVSS-Net [9] leverages multi-view self-supervision to detect splicing and forgery cues from local texture inconsistencies. OSN [59] introduces object-centric supervision to improve robustness against editing operations. PSCC-Net [30] employs progressive semantic context consistency for detecting subtle manipulations in complex scenes. IML-ViT [39] incorporates transformer-based long-range dependencies for improved pixel-level localization. HiFi-Net [18] adopts a hierarchical multi-scale strategy to balance detail preservation and detection sensitivity. Finally, EditGuard [68] learns tamper localization, using diffusion-model-guided consistency maps to achieve high accuracy on AIGC-based edits. All baselines are evaluated using their official implementations and recommended configurations on five representative generative editing processes, Stable Diffusion [49], ControlNet [66], SDXL [47], RePaint [34], and LaMa [55], to ensure a fair and comprehensive comparison.

As shown in Table 1, our hybrid optical–digital framework achieves the highest AUC under every editing method and the highest F1 and IoU under Stable Diffusion, ControlNet, and RePaint; EditGuard retains higher F1 and IoU on SDXL, and the two methods are on par in F1 and IoU on LaMa.
| Method | Stable Diffusion [49] | ControlNet [66] | SDXL [47] | RePaint [34] | LaMa [55] |
|---|---|---|---|---|---|
| MVSS-Net [9] | 0.178 / 0.488 / 0.103 | 0.178 / 0.492 / 0.103 | 0.037 / 0.503 / 0.028 | 0.104 / 0.546 / 0.082 | 0.024 / 0.505 / 0.022 |
| OSN [59] | 0.174 / 0.486 / 0.101 | 0.191 / 0.644 / 0.110 | 0.200 / 0.755 / 0.118 | 0.183 / 0.644 / 0.105 | 0.170 / 0.430 / 0.099 |
| PSCC-Net [30] | 0.166 / 0.501 / 0.112 | 0.177 / 0.565 / 0.116 | 0.189 / 0.704 / 0.115 | 0.140 / 0.469 / 0.109 | 0.132 / 0.329 / 0.104 |
| IML-ViT [39] | 0.213 / 0.596 / 0.135 | 0.200 / 0.576 / 0.128 | 0.221 / 0.603 / 0.145 | 0.103 / 0.497 / 0.059 | 0.105 / 0.465 / 0.064 |
| HiFi-Net [18] | 0.547 / 0.734 / 0.128 | 0.542 / 0.735 / 0.123 | 0.633 / 0.828 / 0.261 | 0.681 / 0.896 / 0.339 | 0.483 / 0.721 / 0.029 |
| EditGuard [68] | 0.966 / 0.971 / 0.936 | 0.968 / 0.987 / 0.940 | 0.965 / 0.989 / 0.936 | 0.967 / 0.977 / 0.938 | 0.965 / 0.969 / 0.934 |
| NOWA | 0.993 / 0.999 / 0.987 | 0.992 / 0.999 / 0.985 | 0.929 / 0.997 / 0.867 | 0.974 / 0.999 / 0.949 | 0.965 / 0.999 / 0.933 |

Table 1. Comparison with other competitive tamper localization methods under different AIGC-based editing methods. Each cell lists F1 ↑ / AUC ↑ / IoU ↑.

Figure 2. Qualitative comparison between the proposed method and state-of-the-art approaches. Our method produces more precise localization of manipulated regions and better preserves structural details compared to existing techniques.

While prior approaches such as EditGuard achieve strong performance (F1 = 0.97), our method further improves to an F1 of 0.993, AUC of 0.999, and IoU of 0.987 on Stable Diffusion edits. This improvement highlights the benefit of embedding a physically grounded NOWA at capture time. In contrast, purely digital detectors, such as MVSS-Net, OSN, or PSCC-Net, show degraded performance (F1 ≤ 0.2) under generative editing, indicating limited resistance to the content-preserving manipulations typical of AIGC models. Even transformer- and diffusion-guided methods struggle to generalize to unseen edits, whereas our null-space-based detection remains stable and precise across all scenarios.
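For reference, the pixel-level F1 and IoU scores reported in Table 1 can be computed from binary masks as sketched below. The function name `f1_and_iou`, the convention that tampered pixels are the positive class, and the handling of empty masks are assumptions; the thresholding and averaging protocol used for the reported numbers is not specified in this section.

```python
import numpy as np

def f1_and_iou(pred_mask, true_mask):
    """Pixel-level F1 and IoU, treating tampered pixels (True) as positives."""
    pred = np.asarray(pred_mask, dtype=bool)
    true = np.asarray(true_mask, dtype=bool)
    tp = int(np.sum(pred & true))     # tampered pixels correctly flagged
    fp = int(np.sum(pred & ~true))    # authentic pixels wrongly flagged
    fn = int(np.sum(~pred & true))    # tampered pixels missed
    if tp + fp + fn == 0:             # both masks empty: treated as perfect agreement
        return 1.0, 1.0
    f1 = 2 * tp / (2 * tp + fp + fn)
    iou = tp / (tp + fp + fn)
    return f1, iou

# Toy usage: one hit, one false alarm, one miss.
pred = np.array([[1, 1], [0, 0]])
true = np.array([[1, 0], [1, 0]])
f1, iou = f1_and_iou(pred, true)
```

For a single pair of masks the two scores are tied by F1 = 2·IoU / (1 + IoU), which is a quick sanity check on any implementation.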
Notably, our model was trained on FFHQ and evaluated on COCO, demonstrating strong cross-domain generalization.

4.3. Ablations

Effect of null-space projection on detection performance. To evaluate the role of the null-space projection in our detection framework, we compare two inputs to the classifier $d_\psi$: (i) the protected image $x_p$, and (ii) its null-space projection $\Pi_{\mathcal{N}}(x_p)$, which isolates the embedded physical signature. Both settings use identical architectures and training protocols. Using $x_p$ directly yields lower performance (F1 ≈ 0.75, AUC ≈ 0.82, IoU ≈ 0.69), suggesting that tampering leaves weak cues in the image domain. In contrast, feeding the null-space projection produces near-perfect scores, demonstrating that $\Pi_{\mathcal{N}}$ effectively suppresses natural image variation and exposes the invariant watermark. Qualitative results in Fig. 3 further show that image-based predictions are noisy with scattered false positives, whereas null-space projections enable clean and spatially coherent detection maps.

Figure 3. Qualitative ablation of detector input. Comparison of detected tampering when the input to $d_\psi$ is the image or its null-space projection. Using the image domain shows scattered false positives, while the detector output on the null-space projection $\Pi_{\mathcal{N}}(x_p)$ produces precise tamper localization.

Effect of Degradations. As shown in Tab. 2, we evaluate robustness against common degradations (JPEG, noise) and advanced tampering (Stable Diffusion Inpaint [49]). Our NOWA approach retains high localization accuracy, with F1 scores around 0.9 even under compression and minimal performance loss under Gaussian noise degradation. In contrast, the purely digital HiFi-Net [18] degrades significantly. This confirms that embedding the watermark at the optical level preserves the null-space signature and suppresses irrelevant variability, ensuring reliable detection even under severe perturbations.

| Method | Metric | Gaussian noise σ = 1 | Gaussian noise σ = 5 | JPEG Q = 70 | JPEG Q = 80 | JPEG Q = 90 |
|---|---|---|---|---|---|---|
| HiFi-Net [18] | F1 ↑ | 0.236 | 0.156 | 0.315 | 0.404 | 0.476 |
| HiFi-Net [18] | AUC ↑ | 0.604 | 0.568 | 0.551 | 0.602 | 0.661 |
| EditGuard [68] | F1 ↑ | 0.908 | 0.871 | 0.552 | 0.813 | 0.785 |
| EditGuard [68] | AUC ↑ | 0.985 | 0.977 | 0.875 | 0.973 | 0.961 |
| NOWA | F1 ↑ | 0.984 | 0.982 | 0.885 | 0.893 | 0.890 |
| NOWA | AUC ↑ | 0.999 | 0.999 | 0.994 | 0.994 | 0.992 |

Table 2. Comparison of tampering localization under different levels of noise and compression degradations.

Effect of Learned Phase Mask. We also examine a configuration without the phase mask. The optical system still has a null space, allowing the NSN to imprint a NOWA; however, this signature is significantly less structured than the one produced with the learned mask. As a result, the reconstructed images retain only a faint watermark, and the detection module struggles to distinguish genuine deviations from natural variation (F1 = 0.89, IoU = 0.81). These observations confirm that the proper design of the null space is essential for making the NOWA strong, stable, and discriminative.

Figure 4. Robustness of the proposed system against generative and analytical adversaries. For each attack, we show the protected image produced by our system ($f_\theta$ output) alongside its corresponding null-space projection. Although counterfeit images closely replicate the visual appearance, they fail to reproduce the underlying null-space signature, enabling reliable detection and rejection by our method.

4.4. Adversarial Robustness Evaluation

We assess robustness under three adversarial scenarios in which the attacker attempts to replicate the distribution of protected images, having access to 7,000 camera outputs $y$ or protected reconstructions $x_p$, along with 10,000 unprotected public images. The adversarial attacks are as follows:

Attack 1: Camera Imitation.
From camera outputs y , the adv ersary attempts to replicate the beha vior of the phys- ical camera by learning a generati ve model G 1 that maps unprotected images to camera outputs G 1 : X u → Y ′ . The resulting fake camera images y ′ = G 1 ( x u ) are then passed through the original protection network f θ (asumming is ex- posed) to generate fake protected images x ′ p . Attack 2: Protected Image Imitation. In this case, the adversary directly targets the distribution of protected im- ages by training a generativ e model G 2 that learns to map public unprotected data into the protected domain G 2 : X u → X ′ p . The generated images x ′ p = G 2 ( x u ) are sub- sequently processed by the image verification network d ψ . Attack 3: Blind Decon volution. From camera outputs, the adversary can also attempt to estimate the system PSF via blind decon volution techniques and apply it to arbitrary public images ˆ y = ˆ A ϕ x u . The generated ˆ y is then passed through the protection function f θ (asumming is exposed) and the verification module d ψ . For G 1 and G 2 , we employ a diffusion-based image- to-image translation model [ 45 ], and for blind decon volu- tion we use the method of Eboli et al. [ 10 ]. As shown in Fig. 4 , all three attacks produce images that are visu- ally similar to our system; howe ver , they fail to reproduce the intrinsic null-space correlations imposed by the physi- cal camera model. Specifically , for 1 , 000 spoofed images 7 Figure 5. T amper localization fr om r eal captur es. Each column shows (top) the protected image with the target edit region outlined in green, and (bottom) the tampered image with the estimated manipulation mask overlaid in translucent red. Edits were manually made using Photoshop tools. The IoU scores below each example indicate strong localization accuracy and rob ust detection of real digital edits, as supported by the visual results. 
per attack, our system achieves detection accuracies (F1 scores) of 0.901, 0.913, and 0.946 for Attacks 1, 2, and 3, respectively, confirming the robustness of our hybrid optical–digital design against both learned and physics-based adversaries. Notably, even when the NSN is accessible to the attacker (Attacks 1 and 3), the absence of the authentic optical system prevents the generation of valid NOWAs. Likewise, the failure of Attack 2 shows that, without the optical encoding, the protected and natural image distributions remain statistically indistinguishable.

5. Real Captures Experiments

We verify the proposed framework in a real-world capture experiment by translating the forward model $A_\phi$ used in simulation to a physical optical prototype. The setup comprises a Canon EOS 5D Mark IV DSLR coupled with a 50 mm f/1.8 YONGNUO lens and the designed phase mask, fabricated using two-photon lithography on a fused-silica substrate. The phase mask was placed in the aperture plane of the YONGNUO lens. Additional fabrication and optical setup details are provided in the Supplementary Material. For accurate protection under real imaging conditions, we fine-tune the Null-Space Network $f_\theta$ and the detector $d_\psi$ for 30 epochs with a learning rate of $1 \times 10^{-5}$, using scenes from the FFHQ dataset rendered through the measured point spread functions (PSFs) of the real prototype. Captured images, fine-tuning details, and calibration details, including PSF measurement and alignment procedures, are provided in the Supplementary Material.

To simulate realistic tampering, we manually edited captured protected images using Adobe Photoshop, employing the lasso selection tool followed by generative fill prompts based on Adobe generative AI technology. These edits introduce semantically plausible but measurement-inconsistent modifications.
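The F1 and IoU figures quoted in this section and in Fig. 5 are mask-overlap metrics. As a minimal sketch, assuming binary per-pixel masks and a single image (thresholding and averaging choices are not spelled out here, so the helper `mask_scores` and the toy masks are illustrative only), they can be computed as follows:

```python
import numpy as np

def mask_scores(pred, target, eps=1e-12):
    """Pixel-level F1 and IoU between two binary tamper masks."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    tp = np.logical_and(pred, target).sum()    # tampered pixels found
    fp = np.logical_and(pred, ~target).sum()   # clean pixels flagged
    fn = np.logical_and(~pred, target).sum()   # tampered pixels missed
    f1 = 2 * tp / (2 * tp + fp + fn + eps)
    iou = tp / (tp + fp + fn + eps)
    return float(f1), float(iou)

# Toy example: a 4x4 edit region and a prediction shifted down by one row.
target = np.zeros((8, 8), dtype=bool)
target[2:6, 2:6] = True
pred = np.zeros((8, 8), dtype=bool)
pred[3:7, 2:6] = True

f1, iou = mask_scores(pred, target)
print(f"F1={f1:.3f}, IoU={iou:.3f}")
```

For the same pair of masks, IoU is never larger than F1, which is consistent with the (F1=0.89, IoU=0.81) pair reported for the configuration without the phase mask.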
Despite the realism of the generated content, our detector accurately localized the tampered regions with high precision, achieving pixel-level consistency across multiple test captures. Figure 5 shows real-world examples of protected images, their edited versions, and the detected tampering, demonstrating the robustness of the proposed NOWA to authentic image manipulations in real-world captures.

6. Discussion

Our results demonstrate that jointly optimizing optical and digital components enables a physically grounded authentication system. By embedding optical cues at capture through the phase mask, the Null-space Optical Watermark (NOWA) becomes a hardware-dependent signature, invisible in the image but recoverable via the null-space projection. Furthermore, the NOWA acts as a non-invertible physical key, creating a security asymmetry: valid signatures cannot be reproduced without the genuine phase mask, even if the digital pipeline is exposed. Limitations include the small and fixed aperture size of the phase mask, which constrains light throughput. Further, for larger apertures, the depth-dependent behavior of the PSF should be accounted for to compute the null space correctly. Additionally, as observed in the real capture experiments, optical misalignment or imperfect calibration can degrade null-space estimation and violate measurement consistency. Finally, we only consider attack models that have access to protected images $x_p$ or captured images $y$. Future work will investigate randomized or key-based digital embeddings to mitigate potential attacks that exploit access to large paired $(x_p, y)$ datasets for approximating the imaging operator.

Acknowledgments

This work was supported by NSF award IIS-2107313 and DoD ARO W911NF-24-2-0008. We thank Aniket Dashpute and Vivek Boominathan for helpful discussions. We thank Salman Khan for fabricating the phase mask.
References

[1] Martín Abadi, Paul Barham, Jianmin Chen, Zhifeng Chen, Andy Davis, Jeffrey Dean, Matthieu Devin, Sanjay Ghemawat, Geoffrey Irving, Michael Isard, et al. Tensorflow: A system for large-scale machine learning. In 12th USENIX symposium on operating systems design and implementation (OSDI 16), pages 265–283, 2016.
[2] Mahdi Ahmadi, Alireza Norouzi, Nader Karimi, Shadrokh Samavi, and Ali Emami. Redmark: Framework for residual diffusion watermarking based on deep networks. Expert Systems with Applications, 146:113157, 2020.
[3] Henry Arguello, Jorge Bacca, Hasindu Kariyawasam, Edwin Vargas, Miguel Marquez, Ramith Hettiarachchi, Hans Garcia, Kithmini Herath, Udith Haputhanthri, Balpreet Singh Ahluwalia, et al. Deep optical coding design in computational imaging: a data-driven framework. IEEE Signal Processing Magazine, 40(2):75–88, 2023.
[4] Kevin Arias, Pablo Gomez, Carlos Hinojosa, Juan Carlos Niebles, and Henry Arguello. Protecting images from manipulations with deep optical signatures. IEEE Journal of Selected Topics in Signal Processing, 2025.
[5] Ayan Chakrabarti. Learning sensor multiplexing design through back-propagation. In Advances in Neural Information Processing Systems, pages 3081–3089, 2016.
[6] Julie Chang and Gordon Wetzstein. Deep optics for monocular depth estimation and 3d object detection. In Proceedings of the IEEE International Conference on Computer Vision, pages 10193–10202, 2019.
[7] Julie Chang, Vincent Sitzmann, Xiong Dun, Wolfgang Heidrich, and Gordon Wetzstein. Hybrid optical-electronic convolutional neural networks with optimized diffractive optics for image classification. Scientific reports, 8(1):1–10, 2018.
[8] Davide Cozzolino and Luisa Verdoliva. Noiseprint: A cnn-based camera model fingerprint. IEEE Transactions on Information Forensics and Security, 15:144–159, 2019.
[9] Chengbo Dong, Xinru Chen, Ruohan Hu, Juan Cao, and Xirong Li. Mvss-net: Multi-view multi-scale supervised networks for image manipulation detection. IEEE Transactions on Pattern Analysis and Machine Intelligence, 45(3):3539–3553, 2022.
[10] Thomas Eboli, Jean-Michel Morel, and Gabriele Facciolo. Fast two-step blind optical aberration correction. In European Conference on Computer Vision, pages 693–708. Springer, 2022.
[11] Han Fang, Zhaoyang Jia, Zehua Ma, Ee-Chien Chang, and Weiming Zhang. Pimog: An effective screen-shooting noise-layer simulation for deep-learning-based watermarking network. In Proceedings of the 30th ACM international conference on multimedia, pages 2267–2275, 2022.
[12] Han Fang, Yupeng Qiu, Kejiang Chen, Jiyi Zhang, Weiming Zhang, and Ee-Chien Chang. Flow-based robust watermarking with invertible noise layer for black-box distortions. In Proceedings of the AAAI conference on artificial intelligence, pages 5054–5061, 2023.
[13] Hany Farid. Image forgery detection. IEEE Signal processing magazine, 26(2):16–25, 2009.
[14] Pierre Fernandez, Alexandre Sablayrolles, Teddy Furon, Hervé Jégou, and Matthijs Douze. Watermarking images in self-supervised latent spaces. In ICASSP 2022-2022 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), pages 3054–3058. IEEE, 2022.
[15] Candice R Gerstner and Hany Farid. Detecting real-time deep-fake videos using active illumination. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 53–60, 2022.
[16] Joseph W. Goodman. Introduction to Fourier Optics. Roberts and Company Publishers, 2005.
[17] Joseph W Goodman. Introduction to Fourier optics. Roberts and Company Publishers, 2005.
[18] Xiao Guo, Xiaohong Liu, Zhiyuan Ren, Steven Grosz, Iacopo Masi, and Xiaoming Liu. Hierarchical fine-grained image forgery detection and localization. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 3155–3165, 2023.
[19] Runyi Hu, Jie Zhang, Ting Xu, Jiwei Li, and Tianwei Zhang. Robust-wide: Robust watermarking against instruction-driven image editing. In European Conference on Computer Vision, pages 20–37. Springer, 2024.
[20] Sheng Huang and Jian Kang Wu. Optical watermarking for printed document authentication. IEEE Transactions on Information Forensics and Security, 2(2):164–173, 2007.
[21] Yasunori Ishikawa, Kazutake Uehira, and Kazuhisa Yanaka. Practical evaluation of illumination watermarking technique using orthogonal transforms. Journal of Display Technology, 6(9):351–358, 2010.
[22] Yasunori Ishikawa, Kazutake Uehira, and Kazuhisa Yanaka. Tolerance evaluation for defocused images to optical watermarking technique. Journal of Display Technology, 10(2):94–100, 2013.
[23] Shuming Jiao, Changyuan Zhou, Yishi Shi, Wenbin Zou, and Xia Li. Review on optical image hiding and watermarking techniques. Optics & Laser Technology, 109:370–380, 2019.
[24] Lingbo Jin, Yubo Tang, Jackson B Coole, Melody T Tan, Xuan Zhao, Hawraa Badaoui, Jacob T Robinson, Michelle D Williams, Nadarajah Vigneswaran, Ann M Gillenwater, et al. Deepdof-se: affordable deep-learning microscopy platform for slide-free histology. Nature communications, 15(1):2935, 2024.
[25] Poonam Kadian, Shiafali M Arora, and Nidhi Arora. Robust digital watermarking techniques for copyright protection of digital data: A survey. Wireless Personal Communications, 118(4):3225–3249, 2021.
[26] Tero Karras, Samuli Laine, and Timo Aila. A style-based generator architecture for generative adversarial networks. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), pages 4401–4410, 2019.
[27] Micah Kellman, Eftychios Bostan, Matthew Chen, and Laura Waller. Phase imaging with an untrained neural network. Optica, 6(6):686–689, 2019.
[28] Salman S Khan, Xiang Yu, Kaushik Mitra, Manmohan Chandraker, and Francesco Pittaluga. Opencam: Lensless optical encryption camera. IEEE Transactions on Computational Imaging, 2024.
[29] Tsung-Yi Lin, Michael Maire, Serge Belongie, James Hays, Pietro Perona, Deva Ramanan, Piotr Dollár, and C Lawrence Zitnick. Microsoft coco: Common objects in context. In European conference on computer vision, pages 740–755. Springer, 2014.
[30] Xiaohong Liu, Yaojie Liu, Jun Chen, and Xiaoming Liu. Pscc-net: Progressive spatio-channel correlation network for image manipulation detection and localization. IEEE Transactions on Circuits and Systems for Video Technology, 32(11):7505–7517, 2022.
[31] Yang Liu, Mengxi Guo, Jian Zhang, Yuesheng Zhu, and Xiaodong Xie. A novel two-stage separable deep learning framework for practical blind watermarking. In Proceedings of the 27th ACM International conference on multimedia, pages 1509–1517, 2019.
[32] Jhon Lopez, Edwin Vargas, and Henry Arguello. Depth estimation from a single optical encoded image using a learned colored-coded aperture. IEEE Transactions on Computational Imaging, 10:752–761, 2024.
[33] Shilin Lu, Zihan Zhou, Jiayou Lu, Yuanzhi Zhu, and Adams Wai-Kin Kong. Robust watermarking using generative priors against image editing: From benchmarking to advances. arXiv preprint arXiv:2410.18775, 2024.
[34] Andreas Lugmayr, Martin Danelljan, Andres Romero, Fisher Yu, Radu Timofte, and Luc Van Gool. Repaint: Inpainting using denoising diffusion probabilistic models. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 11461–11471, 2022.
[35] Jan Lukáš, Jessica Fridrich, and Miroslav Goljan. Detecting digital image forgeries using sensor pattern noise. In Security, steganography, and watermarking of multimedia contents VIII, pages 362–372. SPIE, 2006.
[36] Jingwei Ma, Lucy Chai, Minyoung Huh, Tongzhou Wang, Ser-Nam Lim, Phillip Isola, and Antonio Torralba. Totems: Physical objects for verifying visual integrity. In European Conference on Computer Vision, pages 164–180. Springer, 2022.
[37] Rui Ma, Yuan Li, Huizhu Jia, Yishi Shi, Xiaodong Xie, and Tiejun Huang. Optical information hiding with non-mechanical ptychography encoding. Optics and Lasers in Engineering, 141:106569, 2021.
[38] Rui Ma, Mengxi Guo, Yi Hou, Fan Yang, Yuan Li, Huizhu Jia, and Xiaodong Xie. Towards blind watermarking: Combining invertible and non-invertible mechanisms. In Proceedings of the 30th ACM International Conference on Multimedia, pages 1532–1542, 2022.
[39] Xiaochen Ma, Bo Du, Zhuohang Jiang, Ahmed Y Al Hammadi, and Jizhe Zhou. Iml-vit: Benchmarking image manipulation localization by vision transformer. arXiv preprint arXiv:2307.14863, 2023.
[40] Owen Mayer and Matthew C Stamm. Accurate and efficient image forgery detection using lateral chromatic aberration. IEEE Transactions on information forensics and security, 13(7):1762–1777, 2018.
[41] Christopher A Metzler, Hayato Ikoma, Yifan Peng, and Gordon Wetzstein. Deep optics for single-shot high-dynamic-range imaging. 2020 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR'20), 2019.
[42] Peter Michael, Zekun Hao, Serge Belongie, and Abe Davis. Noise-coded illumination for forensic and photometric video analysis. ACM Transactions on Graphics, 2025.
[43] Alex Muthumbi, Amey Chaware, Kanghyun Kim, Kevin C Zhou, Pavan Chandra Konda, Richard Chen, Benjamin Judkewitz, Andreas Erdmann, Barbara Kappes, and Roarke Horstmeyer. Learned sensing: jointly optimized microscope hardware for accurate image classification. Biomedical optics express, 10(12):6351–6369, 2019.
[44] Paarth Neekhara, Shehzeen Hussain, Xinqiao Zhang, Ke Huang, Julian McAuley, and Farinaz Koushanfar. Facesigns: Semi-fragile watermarks for media authentication. ACM Transactions on Multimedia Computing, Communications and Applications, 20(11):1–21, 2024.
[45] Gaurav Parmar, Taesung Park, Srinivasa Narasimhan, and Jun-Yan Zhu. One-step image translation with text-to-image models. arXiv preprint arXiv:2403.12036, 2024.
[46] Adam Paszke, Sam Gross, Soumith Chintala, Gregory Chanan, Edward Yang, Zachary DeVito, Zeming Lin, Alban Desmaison, Luca Antiga, and Adam Lerer. Automatic differentiation in pytorch. 2017.
[47] Dustin Podell, Zion English, Kyle Lacey, Andreas Blattmann, Tim Dockhorn, Jonas Müller, Joe Penna, and Robin Rombach. Sdxl: Improving latent diffusion models for high-resolution image synthesis. arXiv preprint arXiv:2307.01952, 2023.
[48] Philippe Refregier and Bahram Javidi. Optical image encryption based on input plane and fourier plane random encoding. Optics letters, 20(7):767–769, 1995.
[49] Robin Rombach, Andreas Blattmann, Dominik Lorenz, Patrick Esser, and Björn Ommer. High-resolution image synthesis with latent diffusion models. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 10684–10695, 2022.
[50] Axel Sauer, Dominik Lorenz, Andreas Blattmann, and Robin Rombach. Adversarial diffusion distillation. In European Conference on Computer Vision, pages 87–103. Springer, 2024.
[51] Michael T. Schaub, Carola-Bibiane Schönlieb, and Benedikt Wirth. Nullspace networks for linear inverse problems. SIAM Journal on Imaging Sciences, 14(4):1613–1645, 2021.
[52] Johannes Schwab, Stephan Antholzer, and Markus Haltmeier. Deep null space learning for inverse problems: convergence analysis and rates. Inverse Problems, 35(2):025008, 2019.
[53] Durgesh Singh and Sanjay K Singh. Dct based efficient fragile watermarking scheme for image authentication and restoration. Multimedia Tools and Applications, 76(1):953–977, 2017.
[54] Vincent Sitzmann, Steven Diamond, Yifan Peng, Xiong Dun, Stephen Boyd, Wolfgang Heidrich, Felix Heide, and Gordon Wetzstein. End-to-end optimization of optics and image processing for achromatic extended depth of field and super-resolution imaging. ACM Transactions on Graphics (TOG), 37(4):1–13, 2018.
[55] Roman Suvorov, Elizaveta Logacheva, Anton Mashikhin, Anastasia Remizova, Arsenii Ashukha, Aleksei Silvestrov, Naejin Kong, Harshith Goka, Kiwoong Park, and Victor Lempitsky. Resolution-robust large mask inpainting with fourier convolutions. In Proceedings of the IEEE/CVF winter conference on applications of computer vision, pages 2149–2159, 2022.
[56] Yi-zhou Tan, Hai-bo Liu, Shui-hua Huang, and Ben-jian Sheng. An optical watermarking solution for color personal identification pictures. In 2009 International Conference on Optical Instruments and Technology: Optoelectronic Information Security, pages 79–84. SPIE, 2009.
[57] Edwin Vargas, Julien NP Martel, Gordon Wetzstein, and Henry Arguello. Time-multiplexed coded aperture imaging: Learned coded aperture and pixel exposures for compressive imaging systems. In Proceedings of the IEEE/CVF International Conference on Computer Vision, pages 2692–2702, 2021.
[58] Edwin Vargas, Hoover Rueda-Chacón, and Henry Arguello. Learning time-multiplexed phase-coded apertures for snapshot spectral-depth imaging. Optics Express, 31(24):39796–39810, 2023.
[59] Haiwei Wu, Jiantao Zhou, Jinyu Tian, and Jun Liu. Robust image forgery detection over online social network shared images. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pages 13440–13449, 2022.
[60] Xiaoshuai Wu, Xin Liao, and Bo Ou. Sepmark: Deep separable watermarking for unified source tracing and deepfake detection. In Proceedings of the 31st ACM International Conference on Multimedia, pages 1190–1201, 2023.
[61] Yicheng Wu, Vivek Boominathan, Huaijin Chen, Aswin Sankaranarayanan, and Ashok Veeraraghavan. Phasecam3d—learning phase masks for passive single view depth estimation. In 2019 IEEE International Conference on Computational Photography (ICCP), pages 1–12. IEEE, 2019.
[62] WenHui Xu, HongFeng Xu, Yong Luo, Tuo Li, and YiShi Shi. Optical watermarking based on single-shot-ptychography encoding. Optics Express, 24(24):27922–27936, 2016.
[63] Zhiyuan Ye, Panghe Qiu, Haibo Wang, Jun Xiong, and Kaige Wang. Image watermarking and fusion based on fourier single-pixel imaging with weighed light source. Optics Express, 27(25):36505–36523, 2019.
[64] Zhiyuan Ye, Haibo Wang, Jun Xiong, and Kaige Wang. Simultaneous full-color single-pixel imaging and visible watermarking using hadamard-bayer illumination patterns. Optics and Lasers in Engineering, 127:105955, 2020.
[65] Changqian Yu, Changxin Gao, Jingbo Wang, Gang Yu, Chunhua Shen, and Nong Sang. Bisenet v2: Bilateral network with guided aggregation for real-time semantic segmentation. International journal of computer vision, 129(11):3051–3068, 2021.
[66] Lvmin Zhang, Anyi Rao, and Maneesh Agrawala. Adding conditional control to text-to-image diffusion models. In Proceedings of the IEEE/CVF international conference on computer vision, pages 3836–3847, 2023.
[67] Xinpeng Zhang and Shuozhong Wang. Fragile watermarking with error-free restoration capability. IEEE Transactions on Multimedia, 10(8):1490–1499, 2008.
[68] Xuanyu Zhang, Runyi Li, Jiwen Yu, Youmin Xu, Weiqi Li, and Jian Zhang. Editguard: Versatile image watermarking for tamper localization and copyright protection.
In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, pages 11964–11974, 2024.
[69] Xuanyu Zhang, Zecheng Tang, Zhipei Xu, Runyi Li, Youmin Xu, Bin Chen, Feng Gao, and Jian Zhang. Omniguard: Hybrid manipulation localization via augmented versatile deep image watermarking. In Proceedings of the Computer Vision and Pattern Recognition Conference, pages 3008–3018, 2025.
[70] Yuan Zhao, Bo Liu, Ming Ding, Baoping Liu, Tianqing Zhu, and Xin Yu. Proactive deepfake defence via identity watermarking. In Proceedings of the IEEE/CVF winter conference on applications of computer vision, pages 4602–4611, 2023.
[71] Lilei Zheng, Ying Zhang, and Vrizlynn LL Thing. A survey on image tampering and its detection in real-world photos. Journal of Visual Communication and Image Representation, 58:380–399, 2019.
[72] Jiren Zhu, Russell Kaplan, Justin Johnson, and Li Fei-Fei. Hidden: Hiding data with deep networks. In Proceedings of the European conference on computer vision (ECCV), pages 657–672, 2018.

NOWA: Null-space Optical Watermark for Invisible Capture Fingerprinting and Tamper Localization

Supplementary Material

7. Hardware Prototype and Fabrication

In this section, we provide the specific manufacturing parameters and assembly details for the physical prototype used in our real-world validation.

7.1. Phase Mask Fabrication

The height profile $h_\phi$ of the phase mask was fabricated via two-photon polymerization 3D lithography on a 10 mm × 10 mm fused-silica substrate. We used a Nanoscribe Photonic Professional GT laser writing system operating in Dip-in Liquid Lithography (DiLL) mode. For reliable printing, the height map of the designed phase mask was discretized into 6 layers with steps of 200 nm. We employed the IP-Dip photoresist, which has a refractive index of $n = 1.52$ at 550 nm, closely matching the fused-silica substrate to ensure structural stability.
A 63× objective was used to focus the laser into the photoresist.

7.2. Optical Assembly

The optical setup consists of a Canon EOS 5D Mark IV DSLR (full-frame sensor, 6.5 µm pixel pitch) and a commercial YONGNUO 50 mm f/1.8 lens. To integrate the phase mask into the system, the lens was disassembled to access the physical aperture stop (see Fig. 6, left). The substrate carrying the fabricated phase mask was then cut to a smaller size to fit the aperture and attached to it using carbon tape (see Fig. 6, right).

Figure 6. Optical encoding. A commercial YONGNUO lens is disassembled to attach the designed phase mask on the back side of the lens aperture. The inset shows the quantized designed phase mask.

8. PSF Measurement

A critical step in bridging the simulation-to-reality gap is the accurate characterization of the physical point spread function (PSF) generated by the fabricated mask. We measured the system PSF using a point-source setup:
1. A 20 µm pinhole was back-illuminated by a broadband white LED source.
2. The prototype camera was aligned to the optical axis.
3. The distance between the pinhole and the camera was 50 cm.

Figure 7 demonstrates the agreement between the simulated PSF and the physically measured PSF. The observed deviations are attributed to fabrication tolerances and misalignment of the mask relative to the optical axis.

Figure 7. PSF calibration. Comparison between the optimized simulated PSF (left) and the experimentally measured PSF from the physical prototype (right). The similarity in structure confirms the fidelity of the fabrication process.

9. Fine-Tuning and Implementation Details

While the Null-Space Network $f_\theta$ and detector $d_\psi$ were initially trained in a purely simulated environment, real-world optical aberrations and sensor noise introduce a domain shift. To ensure robust protection in physical experiments, we performed a fine-tuning stage.
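The fine-tuning data for this stage is synthetic: a measured PSF is applied to clean images and sensor noise is added on top. A minimal sketch of that rendering step follows, assuming a single-channel image in [0, 1], a shift-invariant PSF applied by circular convolution, and hypothetical noise parameters (`full_well` and `read_sigma` are placeholders for illustration, not the calibrated Canon values, which are not given here):

```python
import numpy as np

def synthesize_measurement(image, psf, full_well=10000.0, read_sigma=2.0, rng=None):
    """Blur `image` with `psf`, then add photon shot noise and read noise.

    image      : 2D array with values in [0, 1]
    psf        : 2D non-negative kernel (e.g. a measured PSF crop)
    full_well  : assumed electron count at intensity 1.0 (hypothetical)
    read_sigma : assumed read-noise standard deviation in electrons (hypothetical)
    """
    rng = np.random.default_rng() if rng is None else rng
    psf = psf / psf.sum()  # normalise so the blur preserves mean intensity

    # Embed the PSF in an image-sized array with its centre at the origin.
    kernel = np.zeros(image.shape, dtype=float)
    kh, kw = psf.shape
    kernel[:kh, :kw] = psf
    kernel = np.roll(kernel, (-(kh // 2), -(kw // 2)), axis=(0, 1))

    # Circular convolution via the FFT.
    blurred = np.real(np.fft.ifft2(np.fft.fft2(image) * np.fft.fft2(kernel)))
    blurred = np.clip(blurred, 0.0, 1.0)

    electrons = rng.poisson(blurred * full_well).astype(float)   # shot noise
    electrons += rng.normal(0.0, read_sigma, size=image.shape)   # read noise
    return np.clip(electrons / full_well, 0.0, 1.0)

# Usage with a stand-in scene and a toy 5x5 box PSF.
rng = np.random.default_rng(0)
scene = rng.random((64, 64))
y = synthesize_measurement(scene, np.ones((5, 5)), rng=rng)
print(y.shape, float(y.mean()))
```

Circular convolution keeps the sketch short; a real pipeline would more likely pad the scene or crop the borders to avoid wrap-around at the image edges.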
Ideally, one would collect thousands of real-world captures to fine-tune the model; however, acquiring such a large-scale paired dataset imposes a prohibitive workload. To address this, we adopted a hybrid training strategy. Instead of relying on the analytical wave-optics model, we employ the measured PSFs described in Section 8, combined with clean images from the FFHQ dataset, to synthetically generate optically encoded measurements $y$. We further augmented these measurements by adding read noise and photon shot noise calibrated to the Canon 5D sensor characteristics (ISO 100). With these measurements, we fine-tune the Null-Space Network $f_\theta$ and the detector $d_\psi$ for 30 epochs with a learning rate of $1 \times 10^{-5}$.

10. Real-World Capture Procedure and Additional Analysis

We conducted the capture experiments in a controlled, realistic indoor lighting scenario. We displayed all test scenes on a high-resolution LCD monitor rather than using printed targets. This approach ensured high spatial fidelity, stable illumination, and rapid switching between scenes. The monitor was positioned perpendicular to the optical axis at a fixed distance of 50 cm from the camera aperture. All captures were made using the Canon EOS 5D Mark IV with the 50 mm f/1.8 YONGNUO lens and the fabricated phase mask mounted at the aperture stop. All images were captured in RAW format to avoid in-camera processing. Captured images are cropped and resized to 512 × 512, matching the resolution used in our simulations.

The first column of Fig. 8 shows the raw measurements $y$ captured with the prototype. Passing these measurements through the fine-tuned NSN $f_\theta$ yields the protected reconstructions $x_p$, shown in the second column. We can recover the scene content with high visual fidelity. To simulate realistic manipulations, we manually edited the protected images using Photoshop (lasso selection + Generative Fill).
The resulting tampered images and their corresponding detection masks produced by our NOWA framework are presented in the third and fourth columns, respectively. These results confirm that the robustness observed in simulation carries over to real-world captures, demonstrating the physical-layer security provided by the NOWA. The final column visualizes the discrepancy between the NOWA of the authentic and edited images. High-frequency deviations emerge within the manipulated regions, while mild low-frequency differences appear near the edges. Still, our proposed detector reliably isolates the tampered areas.

Discussion and Handling of Depth Variations. Although our real-capture experiments use a high-resolution LCD monitor positioned at a fixed distance, this setup faithfully represents the optical behavior expected in real scenes for two reasons. First, the phase-mask prototype operates with a small effective aperture, producing a naturally large depth of field. In this regime, objects lying within a broad depth range produce sensor measurements whose point spread function (PSF) and corresponding null-space structure vary only minimally with depth. Second, because the NOWA embedding arises from the null space of the imaging operator $A_\phi$, and because this operator is largely invariant within the depth-of-field limits, the NOWA behaves consistently whether the content is displayed on a monitor or originates from a real 3D environment.

For scenes with large depth variations or macro-scale imaging, the PSF becomes depth-variant. In such cases, one can extend the framework by calibrating a small set of depth-dependent PSFs or by incorporating a depth-conditioned NSN stage. Both are compatible with our current formulation and require appropriate augmentations to the reconstruction pipeline.
We leave the development of depth-aware calibration strategies as an interesting future direction, enabling the NOWA framework to handle scenes beyond the current depth-of-field regime.

Robustness against real-world camera imperfections. The phase mask induces a deterministic, structured PSF, whereas contaminants (e.g., dust) and minor aberrations introduce stochastic optical distortions. The null-space projection acts as a matched filter, isolating the watermark's structured residuals from uncorrelated noise. However, in our real-world experiments, imperfect calibration or slight misalignment degrades null-space estimation, which raises the energy baseline of the null space. Even after precise PSF calibration, the residual null-space energy $\|\Pi_N(\hat{x}_r)\|$ increases from a baseline of $\sim 10^{-5}$ in simulation (nonzero because of numerical precision limits) to $\sim 10^{-4}$ in real-world captures. While this indicates minor spectral leakage of unwanted signals, this "real-world noise floor" remains significantly lower than the residuals induced by tampering. Consequently, the detector successfully differentiates between the stochastic artifacts of physical imperfections and the specific null-space violations of a forgery.

Figure 8. Real-world prototype results. From left to right: raw capture $y$, protected reconstruction $x_p$, tampered image (Photoshop Generative Fill), detected tamper mask, and NOWA discrepancy between authentic and edited captures. Manipulations introduce measurement-inconsistent NOWA residuals that are reliably localized by our detector.
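To make the null-space argument concrete, the following is a toy sketch, not the calibrated prototype operator. It assumes a shift-invariant blur whose transfer function is exactly zero above a cutoff, so that $\Pi_N$ reduces to a binary mask in Fourier space, and it compares against a known reference signature purely for illustration (the actual detector $d_\psi$ is a learned network). All function names and the cutoff value are hypothetical.

```python
import numpy as np

def make_otf(shape, cutoff=0.25):
    """Toy transfer function: Gaussian roll-off, exactly zero above `cutoff`."""
    fy = np.fft.fftfreq(shape[0])[:, None]
    fx = np.fft.fftfreq(shape[1])[None, :]
    passband = (np.abs(fy) <= cutoff) & (np.abs(fx) <= cutoff)
    return np.exp(-(fx ** 2 + fy ** 2) / 0.05) * passband

def apply_operator(x, otf):
    """A x: blur implemented as a pointwise product in Fourier space."""
    return np.real(np.fft.ifft2(np.fft.fft2(x) * otf))

def null_projection(x, otf, tol=1e-12):
    """Pi_N x: keep only the frequencies the operator cannot measure."""
    null_mask = np.abs(otf) <= tol
    return np.real(np.fft.ifft2(np.fft.fft2(x) * null_mask))

rng = np.random.default_rng(0)
otf = make_otf((64, 64))
x_ref = rng.random((64, 64))                      # stand-in for a protected image
x_tampered = x_ref.copy()
x_tampered[24:40, 24:40] = rng.random((16, 16))   # local edit

# The null-space component is invisible to the camera: A(Pi_N x) is ~0.
invisible = np.linalg.norm(apply_operator(null_projection(x_ref, otf), otf))

# Discrepancy between null-space components concentrates in the edited region.
residual = np.abs(null_projection(x_tampered, otf) - null_projection(x_ref, otf))
edit = np.zeros((64, 64), dtype=bool)
edit[24:40, 24:40] = True
inside = np.sqrt(np.mean(residual[edit] ** 2))
outside = np.sqrt(np.mean(residual[~edit] ** 2))
print(f"||A Pi_N x|| = {invisible:.2e}, RMS inside = {inside:.3f}, outside = {outside:.3f}")
```

In this idealized setting the floor is set only by floating-point error; with a measured PSF the mask would come from thresholding an estimated transfer function, which is one way calibration error could leak into the residual and raise the baseline described above.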
