Bridging neuroscience and AI: adaptive, culturally sensitive technologies transforming aphasia rehabilitation

Aphasia, a language impairment primarily resulting from stroke or brain injury, profoundly disrupts communication and everyday functioning. Despite advances in speech therapy, barriers such as limited therapist availability and the scarcity of person…

Authors: Andreea I. Niculescu, Jochen Ehnes, Minghui Dong

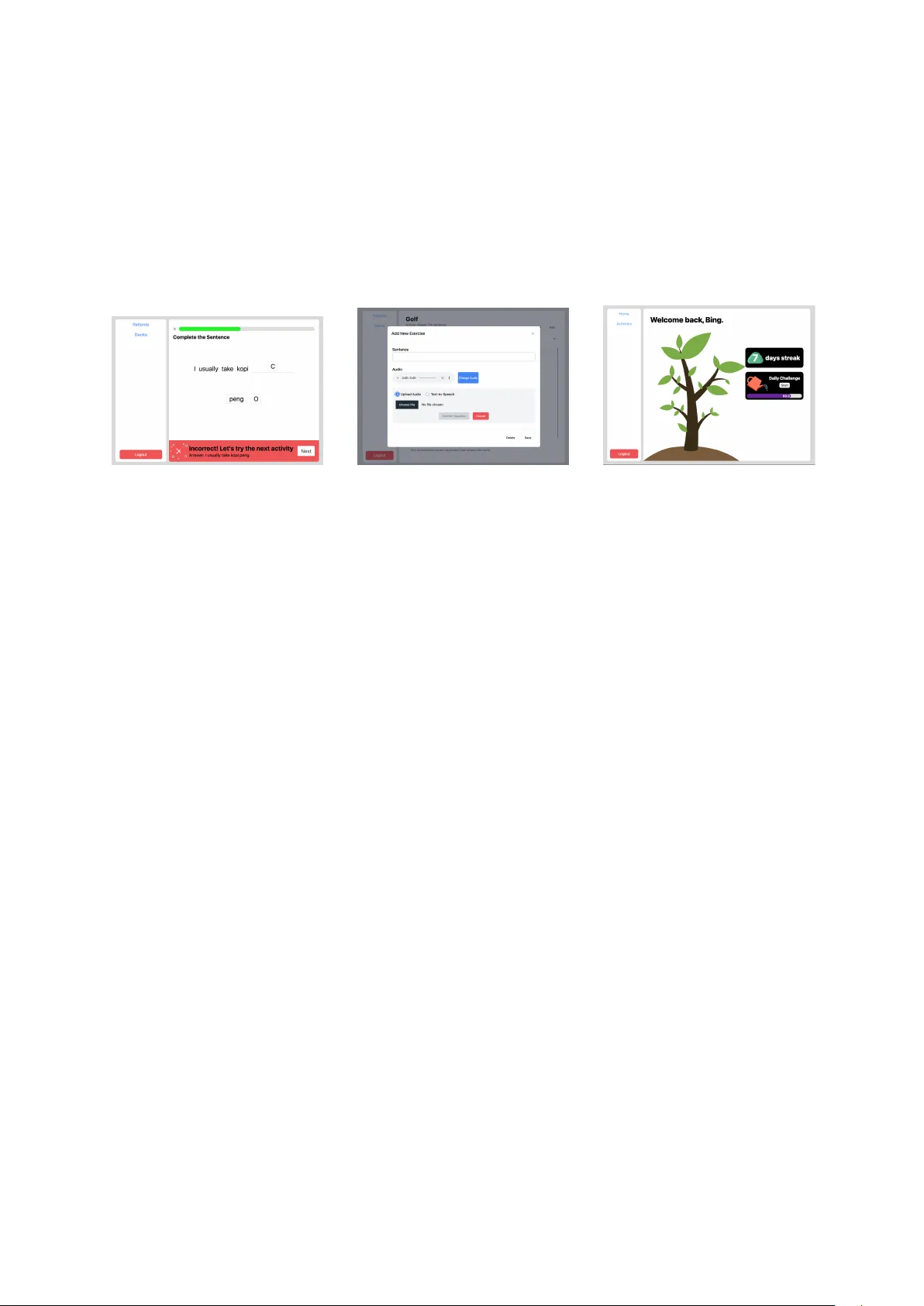

Andreea I. Niculescu (1), Jochen Ehnes (2), and Minghui Dong (3)

(1) A*STAR Institute for Infocomm Research (I2R), andreeaniculescu@sigchi.org
(2) A*STAR Institute for Infocomm Research (I2R), jehnes@gmail.com
(3) A*STAR Institute for Infocomm Research (I2R), dongminghui@gmail.com

Abstract

Aphasia, a language impairment primarily resulting from stroke or brain injury, profoundly disrupts communication and everyday functioning. Despite advances in speech therapy, barriers such as limited therapist availability and the scarcity of personalized, culturally relevant tools continue to hinder optimal rehabilitation outcomes. This paper reviews recent developments in neurocognitive research and language technologies that contribute to the diagnosis and therapy of aphasia. Drawing on findings from our ethnographic field study, we introduce two digital therapy prototypes designed to reflect local linguistic diversity and enhance patient engagement. We also show how insights from neuroscience and the local context guided the design of these tools to better meet patient and therapist needs. Our work highlights the potential of adaptive, AI-enhanced assistive technologies to complement conventional therapy and broaden access to therapy. We conclude by outlining future research directions for advancing personalized and scalable aphasia rehabilitation.

Keywords: applied linguistics, NLP integration, assistive technology, speech therapy, aphasia

1 Introduction

Aphasia is a complex language disorder, typically caused by stroke or brain injury, that significantly impairs an individual's ability to communicate. It affects core language functions (speaking, understanding, reading, and writing) and has profound implications for quality of life.
Each year, approximately 15 million people suffer strokes, and up to 40% develop aphasia (World Health Organization, 2004). This condition can seriously hinder communication, making it difficult for individuals to express themselves or understand others, often leading to social isolation and emotional distress (Hilari and Byng, 2009; Parr, 2007).

Aphasia presents in various forms, each affecting language differently. Broca's aphasia (non-fluent) involves slow, effortful speech with preserved comprehension, while Wernicke's aphasia (fluent) results in fluent but often nonsensical speech and impaired understanding (Damasio, 1992). Another major subtype is Primary Progressive Aphasia (PPA), a neurodegenerative condition marked by gradual atrophy in language-related brain regions. Unlike stroke-induced aphasia, PPA stems from progressive diseases like frontotemporal dementia or Alzheimer's, and it worsens over time. It often begins with subtle word-finding issues and progresses to more severe impairments, while other cognitive functions remain initially intact. PPA includes three variants (nonfluent/agrammatic, semantic, and logopenic), each with distinct linguistic and neural profiles (Mesulam, 2003).

Currently, no medical or surgical treatments exist for aphasia, making speech therapy the primary intervention. Research has shown that consistent speech therapy can lead to significant improvements in patients' conditions (Roberts et al., 2022). Rehabilitation programs are tailored to address the specific challenges experienced by individuals with different types of aphasia. These highly personalized interventions target each patient's unique communication needs, often strengthened through a collaborative therapeutic alliance between the clinician and the individual.
To guide therapy effectively, clinicians use assessments such as the Boston Diagnostic Aphasia Examination (BDAE) to evaluate language abilities and design customized treatment plans (Marie and AlSwaiti, 2024; Cherney et al., 2011). The success of aphasia therapy depends on factors such as aphasia type, severity, patient motivation, cognitive profile, and therapy intensity, with evidence indicating that early, intensive, and tailored interventions yield the best outcomes (Berthier, 2005; Biel et al., 2022).

Despite its importance, many individuals receive insufficient therapy. Barriers such as therapist shortages, limited insurance coverage, mobility constraints, and limited caregiver availability, along with the length of traditional sessions (often up to two hours daily for optimal results), pose further challenges (Stahl et al., 2018; Guo et al., 2014). Tele-rehabilitation has emerged as a promising way for patients to receive therapy from home, helping to overcome distance and other practical problems (Towey, 2012). Computer programs can also help with rehabilitation, training skills such as speaking, reading aloud, writing, understanding, memory, and finding words (Katz, 2010; Loverso et al., 1992). However, their repetitive nature and lack of personalization often reduce patient motivation over time (Niculescu et al., 2023).

This paper integrates insights from neuroscience, language technology, and clinical practice, presenting findings from our fieldwork and prototype development of culturally and linguistically adaptive tools for aphasia rehabilitation. Specifically, we explore the integration of neurolinguistic principles and natural language processing (NLP) methods into assistive technology to support speech therapy.
Our work focuses on developing a prototype tailored to the needs of aphasia patients in Singapore, aiming to bridge the gap between clinical intervention and home-based practice through adaptive, user-centered design.

2 Background and related work

Breakthroughs in neuroscience have significantly deepened our understanding of the neural foundations of language, while innovations in language technologies are opening new avenues for diagnosis and intervention, positioning aphasia research at the intersection of multiple disciplines. This section provides a two-part review: the first part surveys cognitive neuroscience research that underpins current therapeutic approaches; the second examines emerging developments in language technology, from automatic speech recognition and AI-driven assessments to digital therapy platforms, that are reshaping diagnostic and treatment practices.

2.1 Neuroscience insights

Progress in neuroscience and neurolinguistics reveals how the brain processes language, the ways aphasia disrupts these functions, and possible pathways for recovery. Neuroplasticity plays a key role in how the brain reorganizes after injury, supported by insights from neuroimaging and electrophysiological markers that track language deficits and recovery. Additionally, recognizing that language involves multifunctional brain networks highlights the importance of addressing cognitive impairments alongside linguistic rehabilitation.

2.1.1 Neuroplasticity and non-invasive brain stimulation (NIBS)

A main focus in neuroscience and language research is neuroplasticity, the brain's remarkable ability to reorganize and form new connections after injury. Brain imaging shows that the brain can compensate for language problems by recruiting alternative paths, often in the right hemisphere or areas near the injury.
Understanding these compensatory processes helps researchers learn how the brain adapts after stroke or neurodegenerative diseases (Turkeltaub et al., 2025). Based on this knowledge, researchers are developing personalized rehabilitation programs tailored to each person's type of aphasia and brain injury. Studies show that therapy matched to a patient's neural profile is more effective at promoting recovery (Kiran and Thompson, 2019).

Building on this, non-invasive brain stimulation (NIBS), including transcranial direct current stimulation (tDCS) and repetitive transcranial magnetic stimulation (rTMS), is being used alongside speech therapy. These techniques modulate brain activity and support the reorganization of language networks (Fridriksson et al., 2018; Sebastian et al., 2016). Clinical trials suggest that tDCS, combined with therapy, could help patients with both post-stroke and primary progressive aphasia (PPA) improve skills such as naming, fluency, and communication (Meinzer et al., 2014; Tsapkini et al., 2018). Research on post-stroke aphasia also suggests tDCS may reduce mental fatigue and help patients maintain attention, supporting longer-term benefits (Fridriksson et al., 2018; Kang et al., 2009).

2.1.2 Neuroimaging and electrophysiological markers

Advanced neuroimaging techniques, including functional MRI (fMRI), diffusion tensor imaging (DTI), and magnetoencephalography (MEG), are crucial for understanding how the brain reorganizes after stroke, particularly in chronic aphasia (Stockert et al., 2020; Li et al., 2022). These methods are often combined with electrophysiological techniques such as event-related potentials (ERPs), which provide detailed timing information about language processing.

Two ERP components, N400 and P600, are especially informative. The N400, a negative deflection around 400 milliseconds after a word is presented, reflects semantic processing, or the retrieval and integration of word meaning.
It occurs relatively quickly because understanding meaning relies on direct access to memory and context. The P600, a positive deflection around 600 milliseconds, is linked to syntactic processing, or the analysis of grammatical structure, which is computationally more complex and therefore slower. In aphasia, the N400 is often delayed or reduced, indicating semantic impairments, while the P600 may be diminished or absent, signaling syntactic deficits. Importantly, both components can change with therapy: successful treatment can increase N400 amplitude and normalize its timing, while re-emergence of the P600 may indicate recovery of syntactic integration (Stalpaert et al., 2022). These ERP patterns can therefore be used to diagnose language impairments and to track progress during rehabilitation, serving as sensitive neural markers of both deficits and neuroplasticity in aphasia recovery.

2.1.3 Neural multifunctionality and cognitive impairments

While these neurophysiological markers provide important insights into core language processes, research increasingly shows that aphasia often affects broader cognitive functions beyond language. Studies in neurolinguistics have found that deficits in attention, theory of mind, and executive function frequently occur alongside morphosyntactic, semantic, and pragmatic difficulties (Caplan, 2020). These cognitive impairments are associated with the severity of aphasia and with structural changes in the brain, particularly in frontotemporal regions (Strikwerda-Brown et al., 2019; Fittipaldi et al., 2019). For example, individuals with primary progressive aphasia (PPA) may show early problems with emotion recognition and empathy, even when language abilities remain relatively intact. Similarly, post-stroke aphasia is linked to reduced performance on attention and executive function tasks, which closely relates to communication difficulties (Murray, 2012).
These findings support the concept of neural multifunctionality (the idea that language operates within broader domain-general brain systems) and highlight the importance of interventions that target both linguistic and cognitive aspects of aphasia (Cahana-Amitay and Albert, 2014; Kytnarová et al., 2017).

From this broader perspective, pharmacological interventions are being investigated as complements to behavioral therapy, with the potential to improve not only language deficits but also co-occurring cognitive impairments (Stockbridge, 2022). Although still in early clinical stages, some compounds aim to enhance cerebral blood flow, modulate neurochemical balance, or support neural recovery, thereby promoting improvements in both language and cognitive function (Berthier, 2021; Mayo Clinic, 2025). As understanding of the neural basis of language and cognition grows, pharmacological strategies may become an increasingly important component of personalized, integrated aphasia rehabilitation programs.

2.2 Language technology developments

Language technologies are increasingly important for supporting aphasia diagnosis, therapy, and communication. Advances in artificial intelligence (AI) have made these tools more scalable, adaptive, and personalized, complementing traditional clinical care. They can be used for automated assessment and diagnosis, personalized or adaptive therapy, and augmentative or alternative communication (AAC) to help patients communicate when natural speech is severely limited.

2.2.1 Aphasia assessment and diagnosis

AI technologies are increasingly used for the early detection, accurate diagnosis, and ongoing monitoring of aphasia. Machine learning models can now extract detailed linguistic and acoustic features from speech to identify language impairments with high precision.
For example, Wagner et al. (2023) developed an automated speech recognition (ASR) and natural language processing (NLP) pipeline that classified aphasia subtypes with up to 90% accuracy, comparable to expert clinicians. Similarly, Herath et al. (2022) used deep neural networks and Mel Frequency Cepstral Coefficients (MFCCs) to classify ten levels of aphasia severity with over 99% accuracy, showing promise for more precise severity grading and personalized therapy planning.

Beyond classification, AI models are increasingly being applied to fine-grained error analysis and discourse-level interpretation. Salem et al. (2023) fine-tuned a large language model (LLM) on picture-elicited narratives to identify target words in paraphasic speech, achieving 50.70% accuracy. While further work is needed, this study demonstrates how AI can automatically detect and analyze paraphasic expressions in connected speech, taking a step closer to automatic aphasic discourse analysis.

AI is also advancing longitudinal tracking in progressive language disorders such as PPA. Subtle changes in lexical diversity, syntactic complexity, or speech rate can signal cognitive decline. As noted by Zhong (2024), AI-integrated systems offer non-invasive, cost-effective tools for continuous monitoring, supporting earlier intervention and personalized therapy adjustments.

2.2.2 Augmentative & alternative communication

Language technologies are increasingly providing new ways for people with aphasia to communicate, through both AI-enhanced Augmentative and Alternative Communication (AAC) systems and emerging Brain–Computer Interfaces (BCIs). Modern AAC devices now use machine learning and natural language processing to generate contextually appropriate phrases and predictive text. For example, Transformer-based models can adapt vocabulary to a user's communication history and conversational context, improving fluency and reducing cognitive effort (Caria, 2025).
User-centered studies also show that features such as real-time grammar support and visual feedback make communication more accurate and boost user confidence (Mao et al., 2025).

At the same time, non-invasive BCIs are showing promise in decoding imagined speech, that is, translating neural activity into words. Recent EEG-based research highlights major progress in signal processing, feature extraction, and classification accuracy, especially using attention-based deep learning models (Su and Tian, 2025; Panachakel and Ramakrishnan, 2021). Some clinical trials have begun using BCI-driven neurofeedback in aphasia therapy. For instance, Musso et al. (2022) found that EEG-based feedback during word detection tasks improved language abilities across several domains in chronic post-stroke aphasia, suggesting these systems may both restore communication and enhance neural plasticity.

Together, these developments point toward a shared goal: bridging the gap between thought and expression for individuals with severe speech or motor impairments. Although most BCI applications are still experimental, the combination of adaptive AI and brain-based communication technologies holds great promise for improving expressive communication in aphasia-related disorders.

2.2.3 Language technologies for aphasia therapy

Recent advances in language technologies, such as automatic speech recognition (ASR), neural machine translation (NMT), and natural language processing (NLP), are increasingly being applied to aphasia therapy to strengthen pronunciation, grammar, vocabulary, and overall communication skills. For example, in a small study of an iPad-based speech therapy app, the ASR system's judgments of whether participants pronounced each word accurately agreed with those of human raters about 80% of the time, and participants showed improved word production after four weeks of ASR-guided practice (Ballard et al., 2019).
NMT models have also been used to automatically translate aphasic sentences into fluent ones (BLEU ≈ 38), demonstrating the feasibility of providing grammatical and lexical support to aid communication (Smaili et al., 2022). In addition, integrated ASR–NLP approaches can transcribe spontaneous aphasic speech with a word error rate of 24.5% and, when combined with feature-based classifiers, can detect aphasia with 86% accuracy (Barberis et al., 2024).

Building on these capabilities, mobile and gamified apps have made therapy more accessible and engaging for long-term self-directed use. Virtual reality apps, such as that of Bu et al. (2022), offer immersive language and cognitive training targeting oral expression, comprehension, and cognitive skills; they have been pilot-tested with patients, healthy volunteers, and clinicians. Commercial programs including (VASttx, 2017), (Constant Therapy, 2023), (Bungalow Software, 2021), (AphasiaScripts, 2021), (Sentence Sharper, 2023), and (iTalkBetter, 2024) offer structured exercises targeting multiple language and cognitive skills (reading, writing, speaking, and problem-solving). Many now use adaptive algorithms that adjust difficulty based on performance (Zhong, 2024).

Last but not least, Melodic Intonation Therapy (MIT) remains an important complementary intervention for non-fluent aphasia. By using melody, rhythm, and pitch to stimulate speech, it has shown effectiveness in both subacute and chronic stages. A randomized controlled trial conducted across multiple therapy centers reported significant gains in repetition and communication after six weeks of MIT (van der Meulen et al., 2014). A crossover trial also confirmed functional improvements using the Communicative Activity Log (CAL), a standardized tool measuring how well patients communicate in daily life rather than only in the clinic (Haro-Martínez et al., 2018).
Neuroimaging evidence shows that MIT promotes right-hemisphere reorganization and increases structural connectivity of the arcuate fasciculus in patients with non-fluent aphasia (Zhang et al., 2023). New approaches are now combining MIT with ASR-based prosody feedback, though these innovations have yet to be fully evaluated.

3 An aphasia training tool for Singaporean patients

3.1 Motivation

Despite advances in language technologies and the growing availability of digital tools for home-based aphasia therapy, significant limitations remain, particularly in generalizing rehearsed speech to spontaneous, real-world communication (Simmons-Mackie et al., 2014). Many existing systems still lack adaptive feedback, contextual relevance, and cultural and linguistic personalization. Motivated by these challenges, our team engaged in a focused collaboration with Tan Tock Seng Hospital in Singapore to develop assistive tools tailored to local patient needs. The team draws on expertise in speech recognition under noisy and reverberant conditions (Chong et al., 2019; Niculescu et al., 2010; D'Haro et al., 2017; Niculescu et al., 2016a; Niculescu et al., 2015; Niculescu et al., 2016b), as well as in chatbot development across various domains (Niculescu et al., 2020; Niculescu et al., 2009).

3.2 Field study

To gain a nuanced understanding of the challenges faced by both patients and clinicians in aphasia therapy, we conducted an ethnographic field study. The study included structured observations of therapy practices, qualitative analysis of data samples from the Open AphasiaBank corpus (AphasiaBank, 2017), and interviews with speech-language pathologists from Tan Tock Seng Hospital (TTSH). The results of this study, published in Niculescu et al. (2023), identified several structural and interactional challenges in aphasia therapy. Patients struggled with sentence construction, word retrieval, and pronunciation.
Therapists, in turn, emphasized the importance of individualized therapy plans incorporating storytelling, conversational practice, grammatical exercises, and music-based tasks (such as singing familiar phrases) to activate automatic speech sequences.

Motivating patients, adapting materials to local cultural contexts, and supporting long-term independent training were recurrent themes. Therapists frequently used mental connections between words and images to stimulate speech production and relied on family input to establish a core vocabulary drawn from the patient's environment and everyday routines. However, technological limitations, such as ASR tools that fail with non-American accents or therapy apps that use culturally irrelevant imagery, continue to hinder effective therapy delivery.

Further interviews highlighted that local patients present wide variation in language proficiency, literacy levels, and dominant languages (e.g., English, Malay, Chinese dialects, Tamil, etc.). Therapy must often be adapted to suit these differences, especially when literacy impairments or apraxia of speech are present. Yet current therapy apps frequently rely on Western-centric content or accent-dependent recognition, limiting their usability in the local context.

As patients improve, they seek more advanced training, such as more complex sentence construction, but lack appropriate digital tools beyond therapist-guided sessions. The absence of structured feedback during home practice poses a risk of reinforcing errors, especially for individuals living alone. These insights underscore the need for culturally relevant, feedback-rich tools that can support both patients and therapists in tracking progress and promoting recovery.

3.3 Mapping neurolinguistic and contextual insights to therapy goals and prototype features

Drawing on ethnographic findings and neurocognitive research on aphasia, we mapped key neurolinguistic and contextual insights to corresponding NLP applications and speech therapy goals, highlighting potential solution strategies realized through prototype implementation. This mapping guided the design of two digital therapy prototypes, emphasizing targeted, adaptive, and linguistically grounded interventions. Table 1 summarizes these connections, with implemented features highlighted in bold and planned features in italic, showing how advances in speech recognition and NLP are applied to support personalized and engaging home-based rehabilitation that complements traditional clinical therapy.

| Neurolinguistic insights | NLP applications | Speech therapy goals | Prototype implementation |
|---|---|---|---|
| 1. Knowledge of syntactic deficits | Use parsers to detect malformed sentences | Sentence-level training | **Prototype 2:** 'Complete the Sentence' targets syntax via sentence construction (1:1 match) |
| 2. Lexical-semantic mapping in the brain | Semantic vector models | Suggest related words during naming | *Planned:* Future versions of both prototypes may use LLMs to offer flexible suggestions |
| 3. Multimodal association in lexical access | Vision-language models | Use image cues to trigger speech | **Prototype 1:** Current 1:1 image-word pronunciation match; visual stimuli planned |
| 4. Real-time language processing deficits | ERP-based dialogue pacing | Adapt turn-taking pace | *Planned:* Potential for future interaction design |
| 5. Pronunciation errors | Classification models on aphasic speech | Error-specific feedback | **Prototype 1:** Custom scoring + DTW detect phonetic errors, give personalized feedback |
| 6. Reduced speech fluency & word retrieval | Rhythmic/formulaic language models | Support automaticity; use fixed patterns | *Planned:* Singing-based or patterned repetition exercises may be added |
| 7. Storytelling & conversation deficits | Use LLMs | Support long sentence formation | *Planned:* GPT model embeddings |

| Other contextual insights | Strategies | Speech therapy goals | Prototype implementation |
|---|---|---|---|
| 1. Lack of personal & cultural adaptation | Enable caregiver involvement | Engage patients with relevant content | **Prototype 2:** Exercises tailored to personal, cultural context via caregiver input |
| 2. Lack of remote progress monitoring | Enable therapist involvement | Track improvement outside the clinic | **Prototype 1:** pronunciation scoring; **Prototype 2:** Session logs + therapist view |
| 3. Fatigue & repetitive exercise routine | Gamification | Increase patient engagement | **Prototype 2:** Uses tree-growth metaphor to encourage consistent training |
| 4. Local accent challenges in ASR | Accent-adapted ASR models | Broader accessibility for diverse speakers | **Prototype 1:** uses ASR adapted to Singaporean English |

Table 1: Mapping neurolinguistic and contextual insights to speech therapy goals and prototype features

3.3.1 Prototype 1

The first prototype, as presented in Niculescu et al. (2023), aims to facilitate home-based speech training for individuals with mild aphasia by addressing the lack of immediate and personalized feedback in existing digital interventions. It is a web-based system that employs multimodal prompting (text, images, audio, and synchronized lip videos) to accommodate patients with varying degrees of aphasia and to enhance comprehension of therapy tasks (see Figure 1).

Figure 1: Prototype 1 – exercises for word reading, object naming, and pronunciation with lip sync

Each word is paired one-to-one with an image cue. Patients are shown an image and invited to speak the name of the object. They can practice reading, naming, listing, and repetition. At the end of each task, patients receive a pronunciation score along with an explanation of errors, and can replay a lip-synchronization video to review the correct pronunciation (see footnote 1).
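
Table 1 lists Dynamic Time Warping (DTW) as part of the pronunciation scoring in Prototype 1. As a rough illustration of the idea only, and not the prototype's actual implementation, the sketch below aligns a patient utterance with several reference recordings and keeps the closest match. It assumes that each recording has already been converted into a sequence of acoustic feature vectors (for example MFCC frames); the function names `dtw_distance` and `best_template_distance`, the Euclidean frame distance, and the normalization by sequence length are our own illustrative choices.

```python
import math


def dtw_distance(utterance, template):
    """Length-normalized DTW cost between two sequences of feature vectors.

    Each argument is a list of equal-dimension float vectors (one per frame).
    Euclidean frame distance and (n + m) normalization are assumptions.
    """
    n, m = len(utterance), len(template)
    inf = float("inf")
    # cost[i][j] = minimal accumulated cost aligning the first i frames
    # of the utterance with the first j frames of the template.
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            frame_dist = math.dist(utterance[i - 1], template[j - 1])
            cost[i][j] = frame_dist + min(
                cost[i - 1][j],      # utterance frame repeated against template
                cost[i][j - 1],      # template frame repeated against utterance
                cost[i - 1][j - 1],  # both sequences advance together
            )
    return cost[n][m] / (n + m)


def best_template_distance(utterance, templates):
    """Smallest DTW cost over several reference recordings of the same word."""
    return min(dtw_distance(utterance, t) for t in templates)


if __name__ == "__main__":
    slow = [[0.0], [0.0], [1.0], [1.0], [2.0], [2.0]]  # same contour, spoken slowly
    fast = [[0.0], [1.0], [2.0]]
    other = [[5.0], [6.0], [7.0]]
    print(dtw_distance(fast, slow))                # identical contour, different tempo
    print(best_template_distance(fast, [other, slow]))
```

Because DTW tolerates differences in speaking rate, a slow but otherwise accurate production is not penalized, and taking the minimum over several templates is one simple way to accept more than one valid pronunciation of the same word.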

At the core of the system is a speech recognition engine adapted specifically for Singaporean-accented English to better reflect the phonetic characteristics of the target user population. While British English is often preferred in applications for its perceived authority and prestige (Niculescu et al., 2008), Singapore English, a local variety influenced by Chinese and Malay, is commonly used in everyday interaction.

The initial speech-to-text conversion facilitates basic content matching, while a secondary evaluation layer assesses pronunciation accuracy using Dynamic Time Warping (DTW) (Senin, 2008). This method compares patient utterances against multiple prerecorded templates, enabling detailed and nuanced feedback that accommodates accent variability and low-resource language conditions.

To enhance semantic generalization, particularly in object-naming tasks, the system compares recognized speech against a set of acceptable alternative responses, minimizing false-negative evaluations. While the current semantic matching operates via rule-based logic, future iterations aim to incorporate large language models (LLMs) to support more natural and flexible user input. The system is cross-platform, supports patient progress tracking, and includes therapist involvement.

3.3.2 Prototype 2

Building on the foundational Prototype 1, Prototype 2, SpeakNow, was designed and implemented by Lim (2024) as a web-based speech therapy platform featuring dual interfaces: one for patients engaging in therapy, and another for caregivers and therapists to create, assign, and monitor exercises.

A key feature is the customizability of therapy decks, which allows caregivers to design exercises tailored to the patient's personal history, cultural background, and language proficiency. For instance, practice materials can reference familiar local dishes, hawker foods, or recognizable streets and neighborhoods in Singapore, alongside meaningful life events.
For example, as shown in Figure 2a, one exercise refers to Kopi C (a popular local coffee made with evaporated milk and sugar), reflecting everyday cultural references familiar to many Singaporeans. This personalization enhances emotional relevance and motivation by grounding therapy in the patient's lived experiences.

Therapy exercises are organized into decks, which can be created manually or generated with ChatGPT-assisted input, with audio integrated via text-to-speech (TTS). Each deck may include multiple exercise types, such as Complete the Sentence (Figure 2a) and Repeat the Sentence (Figure 2b).

Footnote 1: A video demonstration of the prototype is available here: https://www.youtube.com/watch?v=JkL7lXQm7z8

Complete the Sentence targets syntax and sentence construction through 1:1 word-to-blank matching. Patients reconstruct incomplete sentences by dragging and dropping words into blanks, with distractors included to increase task complexity. In the future, we plan to allow users to construct their own sentences based on given scenarios. To support this, we are exploring the integration of automatic parsers to detect lexical and syntactic errors, extending beyond simple rule-based matching.

Repeat the Sentence focuses on improving pronunciation and auditory comprehension. Patients listen to a sentence, either generated using Google Text-to-Speech or manually uploaded, and repeat it aloud. Real-time automatic speech recognition (ASR) analyzes the spoken response and provides feedback based on a predefined similarity threshold (set at 75%).

Figure 2: Prototype 2 screenshots: (a) Complete Sentence, (b) Adding a Repeat Sentence item, (c) Virtual Tree

To encourage daily engagement, caregivers can assign personalized tasks, which patients access via a Daily Challenge feature. The system selects exercises randomly from pre-set decks.
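
The threshold check in Repeat the Sentence can be illustrated with a short sketch. The text gives the 75% threshold but not the similarity measure, so the example below assumes a word-level comparison between the target sentence and the ASR transcript using Python's standard `difflib.SequenceMatcher`. The helper names `normalize`, `sentence_similarity`, and `passes` are hypothetical, and SpeakNow itself may compute similarity differently.

```python
from difflib import SequenceMatcher

SIMILARITY_THRESHOLD = 0.75  # the 75% threshold stated in the text


def normalize(text):
    """Lowercase, drop punctuation, and split into words (assumed preprocessing)."""
    cleaned = "".join(ch for ch in text.lower() if ch.isalnum() or ch.isspace())
    return cleaned.split()


def sentence_similarity(target, transcript):
    """Word-level similarity in [0, 1] between target sentence and ASR transcript."""
    return SequenceMatcher(None, normalize(target), normalize(transcript)).ratio()


def passes(target, transcript, threshold=SIMILARITY_THRESHOLD):
    """True when the spoken response is close enough to the target sentence."""
    return sentence_similarity(target, transcript) >= threshold


if __name__ == "__main__":
    target = "I would like a cup of Kopi C."
    for transcript in ("i would like a cup of kopi c",
                       "I would like a cup of Kopi",
                       "I like cup"):
        score = sentence_similarity(target, transcript)
        print(f"{transcript!r}: {score:.2f} -> {'pass' if passes(target, transcript) else 'retry'}")
```

A word-level measure of this kind tolerates a single dropped or substituted word in a longer sentence while still rejecting heavily reduced responses; a character-level or phonetic measure would be an equally plausible choice and would behave differently on short sentences.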

All user interactions, including accuracy, duration, and frequency, are logged in MongoDB and visualized through the therapist dashboard. Performance summaries are generated at the end of each session and can be reviewed daily or weekly. This feature supports remote monitoring and adaptive planning. The interface is minimalistic and aphasia-friendly, with large, clearly labeled elements accommodating motor and cognitive impairments (Janebäck and Jonsson, 2020). Therapists can register patients, link caregiver accounts, and manage therapy routines.

To promote consistent engagement, SpeakNow employs lightweight gamification grounded in the Octalysis framework (Gellner and Buchem, 2022). A virtual tree metaphor represents progress: completing daily tasks nurtures its growth, while missed sessions reset it, tapping into the emotional drive to avoid loss (Figure 2c). Instant feedback via encouraging messages further reinforces engagement.

Built with the MERN stack and hosted on AWS, SpeakNow supports media APIs, secure storage, and scalable backend infrastructure.

4 Future Directions and Conclusions

Our work represents an early but important step toward AI-enhanced assistive technologies for aphasia therapy in Singapore. A key limitation is that, although our prototypes have been conceptually and technically validated, they have not yet been tested with patients. Meaningful progress in aphasia rehabilitation depends on iterative, user-centered evaluations, which we plan to conduct once approvals are granted. Without this feedback, no technology, however sophisticated, can fully meet users' complex needs.

Our ethnographic findings have provided valuable insights into everyday therapy challenges, helping us shape tools that reflect local linguistic diversity, cultural context, and patient motivation.
These insights guided the design of our second prototype, SpeakNow, which supports functional communication through personalized, adaptive, and culturally grounded therapy experiences.

Looking ahead, we plan system-level enhancements, including replacing rule-based parsing with syntactic parsers to detect malformed utterances; integrating semantic vector and visual-to-language models to suggest related words during naming tasks; and incorporating music-based therapy elements such as rhythmic repetition and singing to stimulate formulaic language patterns. We also intend to experiment with LLM-based conversational agents to train pragmatic and turn-taking skills, bridging structured exercises and spontaneous conversation. Once implemented, these enhancements will be evaluated with patients for usability, engagement, and therapeutic effectiveness.

Advancing aphasia research will require diverse and representative datasets, particularly for non-English and disfluent speech, to improve the robustness of automatic speech recognition and language modeling. Future systems should embrace multilingual and multimodal interaction, including gestures, facial expressions, and prosody, to better mirror real-world communication. Integrating neurocognitive markers, such as ERP-based recovery indicators, into adaptive therapy algorithms is another promising direction requiring close collaboration between AI researchers and neuroscientists.

Despite rapid progress, most current technologies lack real-time adaptability, personalized feedback, and cultural sensitivity. Few systems have been evaluated for long-term engagement or sustainability in clinical and home settings. Addressing these gaps requires interdisciplinary collaboration and continuous co-design with patients and clinicians.
Future systems that combine clinical insight with adaptive, feedback-driven designs can more effectively help individuals with aphasia regain functional communication.

References

AphasiaBank. 2017. Aphasiabank. https://aphasia.talkbank.org/. Accessed: July 2025.
AphasiaScripts. 2021. Aphasiascripts. https://www.sralab.org/aphasiascripts. Accessed: July 2025.
Kirrie J. Ballard, Nicole M. Etter, Songjia Shen, Penelope Monroe, and Chek Tien Tan. 2019. Feasibility of automatic speech recognition for providing feedback during tablet-based treatment for apraxia of speech plus aphasia. American Journal of Speech-Language Pathology, 28(2S):818–834.
Mara Barberis, Pieter De Clercq, Bastiaan Tamm, Hugo Van hamme, and Maaike Vandermosten. 2024. Automatic recognition and detection of aphasic natural speech. arXiv preprint arXiv:2023arXiv230801327W.
Marcelo L. Berthier. 2005. Poststroke aphasia. Drugs & Aging, 22(2):163–182.
Marcelo L. Berthier. 2021. Ten key reasons for continuing research on pharmacotherapy for post-stroke aphasia. Aphasiology, 35(6):824–858.
Michael Biel, Hillary Enclade, Amber Richardson, Anna Guerrero, and Janet Patterson. 2022. Motivation theory and practice in aphasia rehabilitation: A scoping review. American Journal of Speech-Language Pathology, 31:2421–2443.
Xiaofan Bu, Peter Hf. Ng, Ying Tong, Peter Q. Chen, Rongrong Fan, Qingping Tang, Qinqin Cheng, Shuangshuang Li, Andy Sk Cheng, and Xiangyu Liu. 2022. A mobile-based virtual reality speech rehabilitation app for patients with aphasia after stroke: development and pilot usability study. JMIR Serious Games, 10(2):e30196.
Bungalow Software. 2021. Recover speech and language. https://www.bungalowsoftware.com/. Accessed: October 2025.
Dalia Cahana-Amitay and Martin L. Albert. 2014. Brain and language: Evidence for neural multifunctionality. Behavioural Neurology, 2014:260381.
David Caplan. 2020. Neurolinguistics and linguistic aphasiology. Cambridge University Press, Cambridge.
Andrea Caria. 2025. Toward predictive communication: The fusion of large language models and non-invasive BCI spellers. Sensors, 25(13):3987.
Leora R. Cherney, Janet P. Patterson, and Anastasia M. Raymer. 2011. Intensity of aphasia therapy: Evidence and efficacy. Current Neurology and Neuroscience Reports, 11(6):560–569.
Tze Yuang Chong, Kye Min Tan, Kah Kuan Teh, Chang Huai You, Hanwu Sun, and Huy Dat Tran. 2019. The I2R's ASR system for the VOiCES from a Distance Challenge 2019. In Proc. of the 20th Annual Conference of the Int. Speech Communication Association, Interspeech 2019, pages 2458–2462, Graz, Austria.
Constant Therapy. 2023. Constant therapy. https://constanttherapyhealth.com/constant-therapy/. Accessed: July 2025.
Antonio R. Damasio. 1992. Aphasia. New England Journal of Medicine, 326(8):531–539.
Luis F. D'Haro, Andreea I. Niculescu, Caixia Cai, Suraj Nair, Rafael E. Banchs, Alois Knoll, and Haizhou Li. 2017. An integrated framework for multimodal human-robot interaction. In Proc. of Asia-Pacific Signal and Information Processing Association Annual Summit and Conference, Kuala Lumpur, Malaysia. IEEE (APSIPA).
Sol Fittipaldi, Agustin Ibanez, Sandra Baez, Facundo Manes, Lucas Sedeño, and Adolfo M. Garcia. 2019. More than words: Social cognition across variants of primary progressive aphasia. Neuroscience and Biobehavioral Reviews, 100:263–284.
Julius Fridriksson, Chris Rorden, Jordan Elm, Souvik Sen, Mark S. George, and Leonardo Bonilha. 2018. Transcranial direct current stimulation vs sham stimulation to treat aphasia after stroke: A randomized clinical trial. JAMA Neurology, 75(12):1470–1476.
Carolin Gellner and Ilona Buchem. 2022. Evaluation of a gamification approach for older people in e-learning. In Proc. of INTED2022, pages 596–605.
Yiting Emily Guo, Leanne Togher, and Emma Power. 2014. Speech pathology services for people with aphasia: What is the current practice in Singapore? Disability and Rehabilitation, 36(8):691–704, April.
Ana M. Haro-Martínez, Genny Lubrini, Rosario Madero-Jarabo, Exuperio Díez-Tejedor, and Blanca Fuentes. 2018. Melodic intonation therapy in post-stroke nonfluent aphasia: a randomized pilot trial. Clinical Rehabilitation, 33(1):44–53.
Mudiyanselage D.P.M. Herath, Arachchilage S.A. Weraniyagoda, Thilina M. Rajapaksha, Patikiri A. D. S. N. Wijesekara, Kalupahana L. K. Sudheera, and Peter H.J. Chong. 2022. Automatic assessment of aphasic speech sensed by audio sensors for classification into aphasia severity levels to recommend speech therapies. Sensors (Basel, Switzerland), 22(18):6966.
Katerina Hilari and Sally Byng. 2009. Health-related quality of life in people with severe aphasia. Int. Journal of Language & Communication Disorders, 44(2):193–205.
iTalkBetter. 2024. https://quiley.com/italk.html. Accessed: October 2025.
Johanna Janebäck and Ella Jonsson. 2020. Designing an activity application for people with cognitive disabilities: What should be considered when designing a UI for people with cognitive disabilities? Master's thesis, Chalmers University of Technology.
Kyoung Eun Kang, Min Jae Baek, Sangyun Kim, and Nam-Jong Paik. 2009. Non-invasive cortical stimulation improves post-stroke attention decline. Restorative Neurology and Neuroscience, 27(6):645–650.
Richard C. Katz. 2010. Computers in the treatment of chronic aphasia. Seminars in Speech and Language, 31(1):34–41.
Swathi Kiran and Cynthia K. Thompson. 2019. Neuroplasticity of language networks in aphasia: Advances, updates, and future challenges. Frontiers in Neurology, 10:295.
Lucie Kytnarová, Petr Nilius, and Kateřina Vitásková. 2017. Aphasia and nonlinguistic cognitive rehabilitation: Case study. In European Proc. of Social Behavioural Sciences, pages 402–412.
Ran Li, Nishaat Mukadam, and Swathi Kiran. 2022. Functional MRI evidence for reorganization of language networks after stroke. In Handbook of Clinical Neurology, volume 185, pages 131–150. Elsevier.
Bing Hong Lim. 2024. Design and implementation of web application for aphasia training. Final Year Project Report.
Frank L. Loverso, Thomas E. Prescott, and Michael Selinger. 1992. Microcomputer treatment applications in aphasiology. Aphasiology, 6(2):155–163.
Lei Mao, Jong Ho Lee, Yasmeen F. Shah, and Stephanie Valencia. 2025. Design probes for AI-driven AAC: Addressing complex communication needs in aphasia. arXiv preprint.
Basem Marie and Fadi AlSwaiti. 2024. Validity and validation in language testing: Current state and future guidelines in aphasiology. Aphasiology, 38(1):1–21.
Mayo Clinic. 2025. Aphasia: Diagnosis and treatment. https://www.mayoclinic.org/diseases-conditions/aphasia/diagnosis-treatment/drc-20369523. Accessed: July 2025.
Marcus Meinzer, Robert Lindenberg, Mira M. Sieg, Laura Nachtigall, Lena Ulm, and Agnes Flöel. 2014. Transcranial direct current stimulation of the primary motor cortex improves word-retrieval in older adults. Frontiers in Aging Neuroscience, 6.
Marsel M. Mesulam. 2003. Primary progressive aphasia – a language-based dementia. The New England Journal of Medicine, 349(16):1535–1542.
Laura L. Murray. 2012. Attention and other cognitive deficits in aphasia: Presence and relation to language and communication measures. American Journal of Speech-Language Pathology, 21(2):51–64.
Mariacristina Musso, David Hübner, Sarah Schwarzkopf, Maria Bernodusson, Pierre LeVan, Cornelius Weiller, and Michael Tangermann. 2022. Aphasia recovery by language training using a brain–computer interface: a proof-of-concept study. Brain Communications, 4(1):fcac008, 02.
Andreea I. Niculescu, George M. White, Swee Lan See, Ratna U. Waloejo, and Yoko Kawaguchi. 2008. Impact of English regional accents on user acceptance of voice user interfaces. In Proc. of the 5th Nordic conference on Human-computer interaction: building bridges - NordiCHI '08, pages 523–526, Lund, Sweden. ACM.
Andreea I. Niculescu, Yujia Cao, and Anton Nijholt. 2009. Stress and cognitive load in multimodal conversational interactions. In Constantine Stephanidis, editor, Proceedings of the 13th International Conference on Human-Computer Interaction (HCI International 2009), pages 891–895. Springer. (Proceedings and Poster DVD).
Andreea I. Niculescu, Betsy van Dijk, Anton Nijholt, Dilip K. Limbu, Swee Lan See, and Anthony H.Y. Wong. 2010. Socializing with Olivia, the youngest robot receptionist outside the lab. In S.S. Ge et al., editor, Proc. of the Int. Conference in Social Robotics, volume 6414 of LNAI, pages 50–62, Berlin, Germany. Springer-Verlag.
Andreea I. Niculescu, Mei Quin Lim, Seno A. Wibowo, Kheng Hui Yeo, Boon Pang Lim, Michael Popow, Dan Chia, and Rafael E. Banchs. 2015. Designing IDA – an intelligent driver assistant for smart city parking in Singapore. In Proc. of the 15th IFIP Conference on Human Computer Interaction (INTERACT), LNCS, pages 510–513, Bamberg, Germany. Springer Int. Publishing.
Andreea I. Niculescu, Bimlesh Wadhwa, and Evan Quek. 2016a. Smart city technologies: design and evaluation of an intelligent driving assistant for smart parking. Int. Journal on Advanced Science, Engineering and Information Technology (IJASEIT), pages 143–149.
Andreea I. Niculescu, Bimlesh Wadhwa, and Evan Quek. 2016b. Technologies for the future: evaluating a voice enabled smart city parking application. In Proc. of the 4th Int. Conference on User Science and Engineering (i-USEr), pages 46–50, Melaka, Malaysia. IEEE.
Andreea I. Niculescu, Ivan Kukanov, and Bimlesh Wadhwa. 2020. DigiMo – towards developing an emotional intelligent chatbot in Singapore. In Proc. of the 2020 Symposium on Emerging Research from Asia and on Asian Contexts and Cultures.
Andreea I. Niculescu, Jochen Ehnes, Minghui Dong, Changhuai You, and Paul Chan Yaozhu. 2023. Opportunities and challenges of designing assistive technologies for aphasia patients in Singapore: The case of a speech evaluation prototype. In Proc. of the AAAI Symposium Series, volume 1, pages 90–93.
Jerrin T. Panachakel and Angarai G. Ramakrishnan. 2021. Decoding covert speech from EEG - a comprehensive review. Frontiers in Neuroscience, 15:642251.
Susie Parr. 2007. Living with severe aphasia: Tracking social exclusion. Aphasiology, 21(1):98–123, January.
Sophie Roberts, Rachel M. Bruce, Louise Lim, Hayley Woodgate, Kate Ledingham, Storm Anderson, Diego L. Lorca-Puls, Andrea Gajardo-Vidal, Alexander P. Leff, Thomas M. H. Hope, David W. Green, Jennifer T. Crinion, and Cathy J. Price. 2022. Better long-term speech outcomes in stroke survivors who received early clinical speech and language therapy: What's driving recovery? Neuropsychological Rehabilitation, 32(9):2319–2341.
Alexandra C. Salem, Robert C. Gale, Mikala Fleegle, Gerasimos Fergadiotis, and Steven Bedrick. 2023. Automating intended target identification for paraphasias in discourse using a large language model. Journal of Speech, Language, and Hearing Research (JSLHR), 66(12):4949–4966.
Rajani Sebastian, Kyrana Tsapkini, and Donna C. Tippett. 2016. Transcranial direct current stimulation in post-stroke aphasia and primary progressive aphasia: Current knowledge and future clinical applications. NeuroRehabilitation, 39(1):141–152.
Pavel Senin. 2008. Dynamic time warping algorithm review. Technical Report CSDL-08-04, CSDL.
Sentence Sharper. 2023. An innovative approach to language software for aphasia. https://sentenceshaper.com/. Accessed: October 2025.
Nina Simmons-Mackie, Meghan C. Savage, and Linda Worrall. 2014. Conversation therapy for aphasia: a qualitative review of the literature. Int. Journal of Language & Communication Disorders, 49(5):511–526.
Kamel Smaili, David Langlois, and Peter Pribil. 2022. Language rehabilitation of people with Broca aphasia using deep neural machine translation. In Proc. of the Fifth Int. Conference on Computational Linguistics in Bulgaria (CLIB 2022), pages 162–170, Sofia, Bulgaria, September. Department of Computational Linguistics, IBL – BAS.
Benjamin Stahl, Bettina Mohr, Verena Büscher, Felix R. Dreyer, Guglielmo Lucchese, and Friedemann Pulvermüller. 2018. Efficacy of intensive aphasia therapy in patients with chronic stroke: A randomised controlled trial. Journal of Neurology, Neurosurgery & Psychiatry, 89(6):586–592, June.
Jara Stalpaert, Sofie Standaert, Lien D'Helft, Marijke Miatton, Anne Sieben, Tim Van Langenhove, Wouter Duyck, Pieter van Mierlo, and Miet De Letter. 2022. Therapy-induced electrophysiological changes in primary progressive aphasia: A preliminary study. Frontiers in Human Neuroscience, 16.
Melissa D. Stockbridge. 2022. Better language through chemistry: Augmenting speech-language therapy with pharmacotherapy in the treatment of aphasia. In Handbook of Clinical Neurology, volume 185, pages 261–272. Elsevier.
Anika Stockert, Max Wawrzyniak, Julian Klingbeil, Katrin Wrede, Dorothee Kümmerer, Gesa Hartwigsen, Christoph P. Kaller, Cornelius Weiller, and Dorothee Saur. 2020. Dynamics of language reorganization after left temporo-parietal and frontal stroke. Brain, 143(3):844–861.
Cherie Strikwerda-Brown, Siddharth Ramanan, and Muireann Irish. 2019. Neurocognitive mechanisms of theory of mind impairment in neurodegeneration: a transdiagnostic approach. Neuropsychiatric Disease and Treatment, 15:557–573.
Ke Su and Liang Tian. 2025. Systematic review: progress in EEG-based speech imagery brain-computer interface decoding and encoding research. PeerJ Computer Science, 11:e2938.
Michael P. Towey. 2012. Speech therapy telepractice for vocal cord dysfunction (VCD): Mainecare (Medicaid) cost savings. Int. Journal of Telerehabilitation, 4(1):33–36.
Kyrana Tsapkini, Kimberly T. Webster, Bronte N. Ficek, John E. Desmond, Chiadi U. Onyike, Brenda Rapp, Constantine E. Frangakis, and Argye E. Hillis. 2018. Electrical brain stimulation in different variants of primary progressive aphasia: A randomized clinical trial. Alzheimer's & Dementia (New York, N. Y.), 4:461–472.
Peter E. Turkeltaub, Kelly C. Martin, Alycia B. Laks, and Andrew T. DeMarco. 2025. Right hemisphere language network plasticity in aphasia. Brain: a journal of neurology, Advance online publication. https://doi.org/10.1093/brain/awaf308.
Ineke van der Meulen, W. Mieke E. van de Sandt-Koenderman, Majanka H. Heijenbrok-Kal, Evy G. Visch-Brink, and Gerard M. Ribbers. 2014. The efficacy and timing of melodic intonation therapy in subacute aphasia. Neurorehabilitation and Neural Repair, 28(6):536–544.
VASttx. 2017. Sample. aphasia software finder. https://www.aphasiasoftwarefinder.org/vasttx-therapy-samples. Accessed: July 2025.
Laurin Wagner, Mario Zusag, and Theresa Bloder. 2023. Careful whisper - leveraging advances in automatic speech recognition for robust and interpretable aphasia subtype classification. In Proc. of Interspeech 2023, Dublin, Ireland.
World Health Organization. 2004. The Atlas of Heart Disease and Stroke. World Health Organization. Published in collaboration with the U.S. Centers for Disease Control and Prevention.
Xiaoying Zhang, Zuliyaer Talifu, Jianjun Li, Xiaobing Li, and Feng Yu. 2023. Melodic intonation therapy for non-fluent aphasia after stroke: A clinical pilot study on behavioral and DTI findings. iScience, 26(9):107453.
Xiaoyun Zhong. 2024. AI-assisted assessment and treatment of aphasia: a review. Frontiers in Public Health, 12:1401240.
