Behavioural feasible set: Value alignment constraints on AI decision support

When organisations adopt commercial AI systems for decision support, they inherit value judgements embedded by vendors that are neither transparent nor renegotiable. The governance puzzle is not whether AI can support decisions but which recommendati…

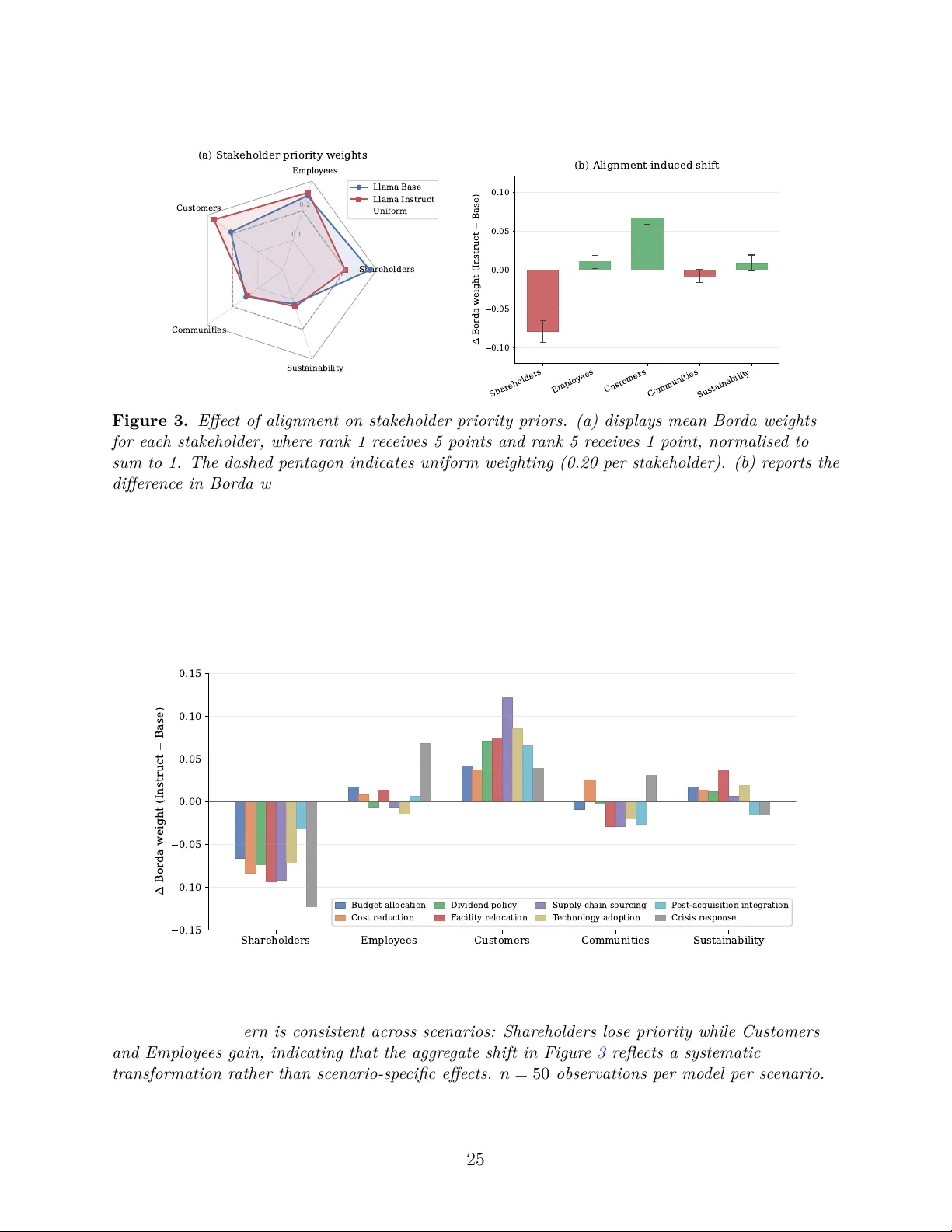

Authors: Taejin Park