ARYA: A Physics-Constrained Composable & Deterministic World Model Architecture

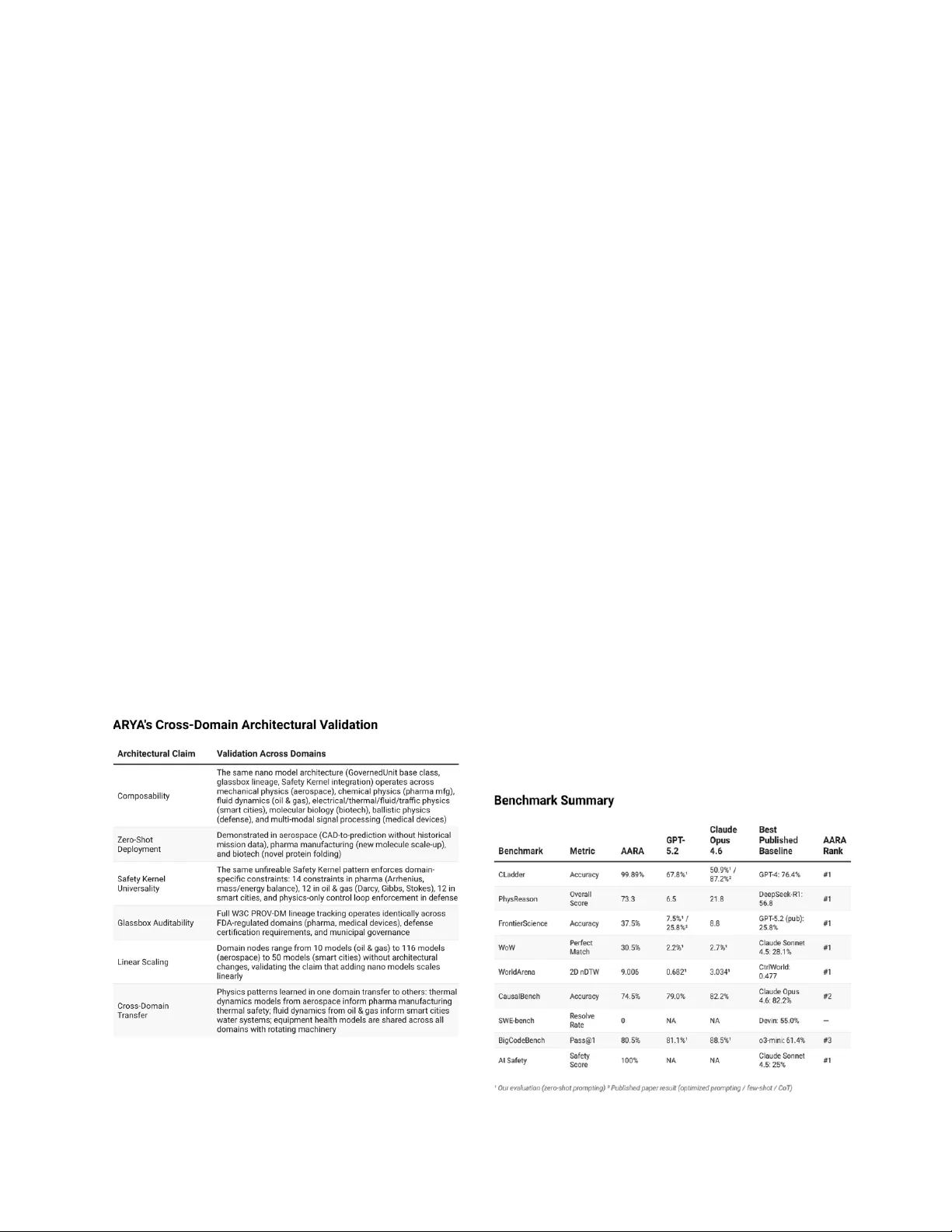

This paper presents ARYA, a composable, physics-constrained, deterministic world model architecture built on five foundational principles: nano models, composability, causal reasoning, determinism, and architectural AI safety. We demonstrate that ARY…

Authors: Seth Dobrin, Lukasz Chmiel