Multi-Session Client-Centered Treatment Outcome Evaluation in Psychotherapy

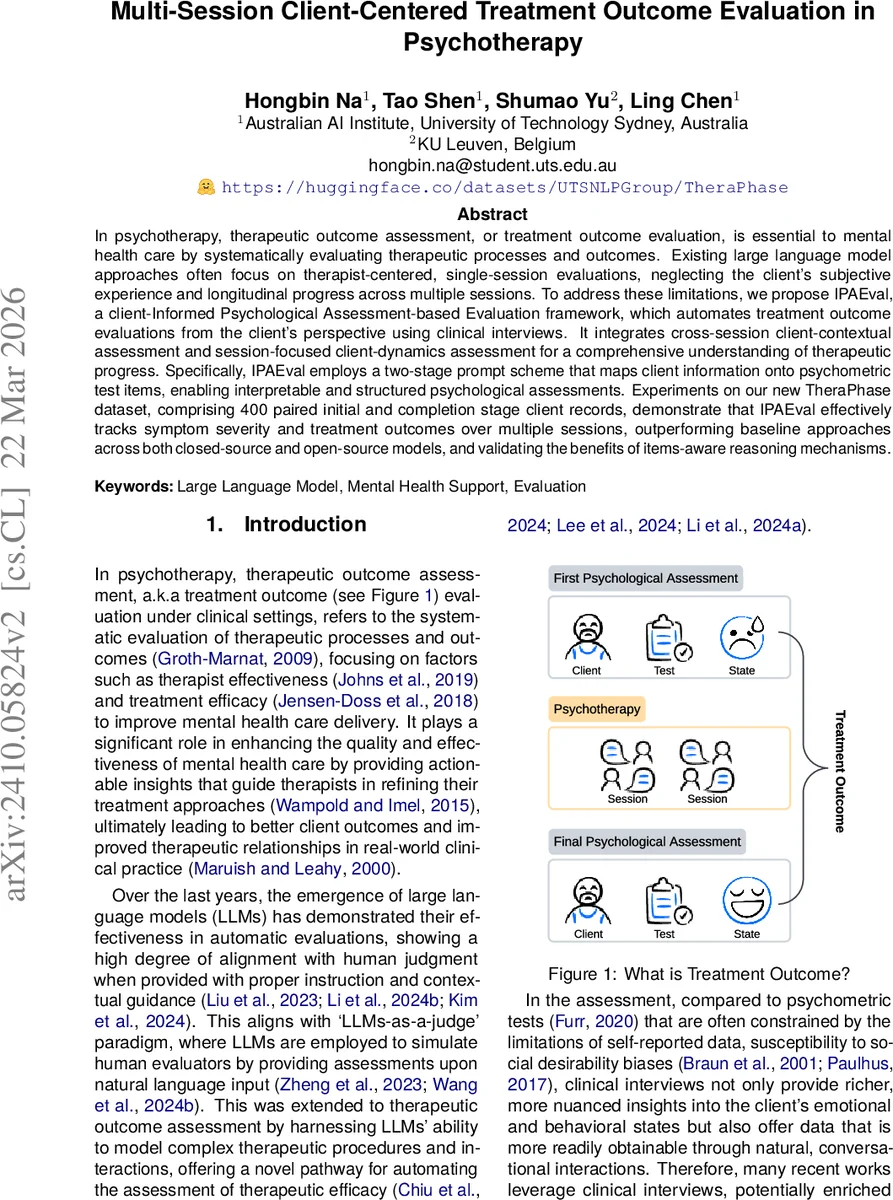

In psychotherapy, therapeutic outcome assessment, also called treatment outcome evaluation, is essential to mental health care because it systematically evaluates therapeutic processes and outcomes. Existing large language model approaches often focus on therapist-centered, single-session evaluations, neglecting the client’s subjective experience and longitudinal progress across multiple sessions. To address these limitations, we propose IPAEval, a client-Informed Psychological Assessment-based Evaluation framework, which automates treatment outcome evaluations from the client’s perspective using clinical interviews. It integrates cross-session client-contextual assessment and session-focused client-dynamics assessment for a comprehensive understanding of therapeutic progress. Specifically, IPAEval employs a two-stage prompt scheme that maps client information onto psychometric test items, enabling interpretable and structured psychological assessments. Experiments on our new TheraPhase dataset, comprising 400 paired initial- and completion-stage client records, demonstrate that IPAEval effectively tracks symptom severity and treatment outcomes over multiple sessions, outperforms baseline approaches across both closed-source and open-source models, and validates the benefits of items-aware reasoning mechanisms.

💡 Research Summary

The paper addresses a critical gap in the automated evaluation of psychotherapy outcomes: most existing large‑language‑model (LLM) approaches are therapist‑centric, focus on a single session, and ignore the client’s subjective narrative and longitudinal change. To overcome these limitations, the authors introduce IPAEval (Client‑Informed Psychological Assessment‑based Evaluation), a framework that automatically derives treatment‑outcome assessments from clinical interview transcripts, explicitly from the client’s perspective, and tracks progress across multiple sessions.

IPAEval consists of two tightly coupled prompting stages. In the first stage, called Items‑Aware Reasoning, the LLM is instructed to act as a psychologist and map the client’s statements onto individual items of a chosen psychometric instrument (the authors use a comprehensive symptom checklist derived from SCL‑90). For each item the model extracts structured evidence: symptom category, specific symptom, presence judgment (yes/no), and a natural‑language justification. This step yields a transparent, item‑level evidence record that clinicians can inspect, thereby addressing the “black‑box” criticism often levied against LLM‑based assessments.
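To make the item-level evidence record concrete, here is a minimal Python sketch that parses a stage-one model output into typed records carrying the four fields described above. It assumes the LLM was asked to reply with a JSON array using the keys `category`, `symptom`, `present`, and `justification`; that schema, and the names `ItemEvidence` and `parse_item_evidence`, are hypothetical illustrations rather than the authors' actual format.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class ItemEvidence:
    """One item-level record produced by the Items-Aware Reasoning stage."""
    category: str       # symptom category (e.g., an SCL-90 dimension)
    symptom: str        # the specific symptom / checklist item
    present: bool       # presence judgment (yes/no)
    justification: str  # natural-language rationale grounded in the transcript


def _parse_presence(value) -> bool:
    """Accept a JSON boolean or a "yes"/"no" string (hypothetical schema)."""
    if isinstance(value, bool):
        return value
    answer = str(value).strip().lower()
    if answer not in {"yes", "no"}:
        raise ValueError(f"unexpected presence judgment: {value!r}")
    return answer == "yes"


def parse_item_evidence(raw: str) -> list[ItemEvidence]:
    """Parse the LLM's JSON array output into typed, inspectable records."""
    return [
        ItemEvidence(
            category=entry["category"],
            symptom=entry["symptom"],
            present=_parse_presence(entry["present"]),
            justification=entry["justification"],
        )
        for entry in json.loads(raw)
    ]


# Usage with a made-up model output:
raw_output = (
    '[{"category": "Depression", "symptom": "Feeling low in energy",'
    ' "present": "yes", "justification": "Client reports exhaustion every day."}]'
)
for record in parse_item_evidence(raw_output):
    print(record.symptom, record.present)  # Feeling low in energy True
```

Validating the presence judgment at parse time means a malformed model answer fails loudly instead of silently entering the scoring stage.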

In the second stage, Symptom Assessment, the model consumes the item‑level evidence and the scoring rubric of the instrument to produce dimension‑level scores. Because not every item will be addressed in a single interview, the scoring is designed to adjust for missing items, avoiding the over‑estimation that can occur when client responses are purely simulated (e.g., ClientCAST).
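This summary does not spell out the exact adjustment rule, so the following Python sketch shows one plausible reading, assumed for illustration: each dimension is averaged only over the items the interview actually addressed, and unaddressed items are skipped rather than filled with fabricated answers. The 0–4 severity scale, the `None` marker for missing items, and the function name `dimension_scores` are all hypothetical.

```python
from statistics import fmean
from typing import Optional

# Hypothetical input: dimension -> {item: severity on an assumed 0-4 scale}.
# None marks an item the interview never addressed (no evidence either way).
ItemRatings = dict[str, dict[str, Optional[int]]]


def dimension_scores(ratings: ItemRatings) -> dict[str, Optional[float]]:
    """Average each dimension over its addressed items only.

    Missing items are excluded instead of imputed, so a dimension with no
    evidence at all gets None rather than a fabricated score.
    """
    scores: dict[str, Optional[float]] = {}
    for dimension, items in ratings.items():
        observed = [r for r in items.values() if r is not None]
        scores[dimension] = fmean(observed) if observed else None
    return scores


ratings = {
    "Depression": {"low energy": 3, "hopelessness": 1, "crying spells": None},
    "Hostility": {"irritability": None, "temper outbursts": None},
}
print(dimension_scores(ratings))  # {'Depression': 2.0, 'Hostility': None}
```

Dividing by the full item count instead would silently treat every unaddressed item as symptom-free; returning `None` keeps "not discussed" distinct from "absent".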

The core outcome metric is the Positive Symptom Distress Index (PSDI), defined as the average of all positive (greater than zero) dimension scores. By computing PSDI for an initial session (c_i) and a final session (c_f) and taking the difference ΔPSDI = PSDI_f – PSDI_i, the framework quantifies treatment effect: a negative ΔPSDI indicates symptom reduction and thus a successful intervention. Although PSDI originates from the SCL‑90, the authors note that the averaging principle can be transferred to other scales (e.g., PHQ‑9, GAD‑7), making the approach broadly applicable.
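The PSDI and ΔPSDI definitions reduce to a few lines of arithmetic. This Python sketch follows the summary's wording (the mean of the dimension scores greater than zero); ignoring `None` scores and returning 0.0 when no dimension is positive are conventions assumed here, not details from the paper. Nothing in it is specific to SCL‑90, which mirrors the point that the averaging principle can carry over to other scales.

```python
from typing import Optional

Scores = dict[str, Optional[float]]  # dimension -> score (None = not assessed)


def psdi(scores: Scores) -> float:
    """Mean of all dimension scores greater than zero.

    Unassessed (None) dimensions are ignored; if no dimension is positive,
    0.0 is returned (an assumed convention).
    """
    positive = [s for s in scores.values() if s is not None and s > 0]
    return sum(positive) / len(positive) if positive else 0.0


def delta_psdi(initial: Scores, final: Scores) -> float:
    """ΔPSDI = PSDI_f - PSDI_i; a negative value indicates symptom reduction."""
    return psdi(final) - psdi(initial)


initial = {"Depression": 2.5, "Anxiety": 2.0, "Hostility": 0.0}
final = {"Depression": 1.0, "Anxiety": 1.5, "Hostility": 0.0}
print(psdi(initial), psdi(final), delta_psdi(initial, final))  # 2.25 1.25 -1.0
```

Because only positive dimensions enter the average, PSDI reflects the intensity of the symptoms that remain rather than how many there are: a dimension that resolves completely drops out of the denominator, so moving from dimension scores {3, 1} to {3, 0} raises PSDI from 2 to 3. It can therefore help to read ΔPSDI alongside the dimension-level scores.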

To evaluate IPAEval, the authors curate a new dataset called TheraPhase, built on the CPsyCoun resource. TheraPhase contains 400 paired transcripts, each consisting of an initial therapy interview and a completion‑stage interview for the same client. This design enables direct measurement of within‑client change over time, which prior public datasets, providing only single‑session data, do not support. The dataset is released on Hugging Face, supporting reproducibility.

The experimental protocol tests nine LLMs (both open‑source and closed‑source) on two tasks: (1) generating accurate psychometric scores for each session, and (2) predicting ΔPSDI. Baseline methods include therapist‑centric single‑session models such as Cactus and the client‑centric but single‑session ClientCAST. Across all models, IPAEval consistently outperforms the baselines, achieving an average F1 improvement of over 12 percentage points on symptom detection and an 8–15 pp boost when the Items‑Aware Reasoning prompt is employed. An ablation study confirms that removing the item‑level reasoning stage leads to a substantial performance drop, underscoring its pivotal role.
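The summary reports F1 on symptom detection without specifying how it is aggregated. As a hedged illustration, this Python sketch computes a pooled binary F1 over item-level presence judgments against reference labels; scoring only items labeled in both dictionaries and the 0.0 fallback when there are no true positives are assumptions, and `presence_f1` is a hypothetical name.

```python
def presence_f1(predicted: dict[str, bool], reference: dict[str, bool]) -> float:
    """Binary F1 for item-level symptom presence (positive class = present).

    Only items labeled in both dicts are scored. Returns 0.0 when there are
    no true positives, since precision or recall is then zero or undefined.
    """
    items = predicted.keys() & reference.keys()
    tp = sum(1 for i in items if predicted[i] and reference[i])
    fp = sum(1 for i in items if predicted[i] and not reference[i])
    fn = sum(1 for i in items if not predicted[i] and reference[i])
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


pred = {"low energy": True, "insomnia": True, "nervousness": False, "irritability": False}
gold = {"low energy": True, "insomnia": False, "nervousness": True, "irritability": False}
print(presence_f1(pred, gold))  # 0.5
```

On this scale, an improvement of 12 percentage points means the F1 value itself rises by 0.12, for example from 0.60 to 0.72.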

Key contributions of the work are:

- A novel, client‑focused, multi‑session evaluation framework that bridges unstructured interview text and structured psychometric assessment.

- An interpretable two‑stage prompting strategy that yields item‑level evidence and transparent scoring.

- The TheraPhase dataset, the first publicly available resource enabling longitudinal outcome evaluation from clinical dialogues.

- Comprehensive empirical validation showing superior performance over existing single‑session, therapist‑centric approaches.

Limitations acknowledged by the authors include reliance on a single psychometric instrument (SCL‑90‑derived PSDI), lack of comparison with other common scales, and potential biases stemming from transcription errors or LLM hallucinations. While the framework avoids fabricating client responses, real‑time clinical deployment would require handling multimodal cues (tone, facial expression) and ensuring robust bias mitigation.

Future directions suggested are: extending the PSDI concept to other validated scales, expanding the dataset to multilingual and culturally diverse populations, integrating multimodal data for richer client modeling, and embedding IPAEval into clinical decision‑support tools that provide therapists with actionable, client‑centered feedback.

In summary, IPAEval represents a significant step toward automated, transparent, and longitudinal assessment of psychotherapy outcomes from the client’s viewpoint, offering both methodological innovation and a valuable benchmark dataset for the research community.
