Bot Meets Shortcut: How Can LLMs Aid in Handling Unknown Invariance OOD Scenarios?

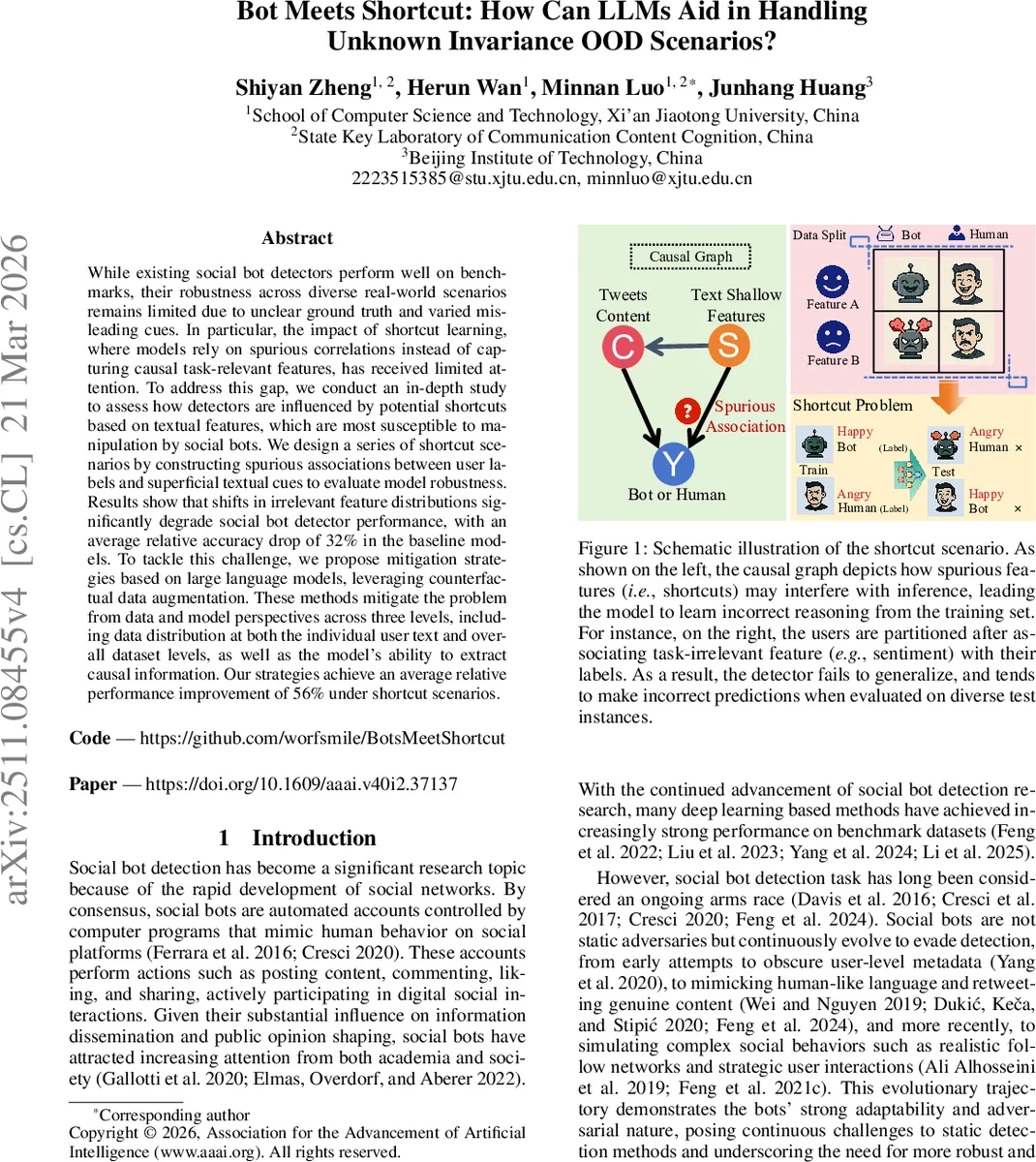

While existing social bot detectors perform well on benchmarks, their robustness across diverse real-world scenarios remains limited due to unclear ground truth and varied misleading cues. In particular, the impact of shortcut learning, where models rely on spurious correlations instead of capturing causal task-relevant features, has received limited attention. To address this gap, we conduct an in-depth study to assess how detectors are influenced by potential shortcuts based on textual features, which are most susceptible to manipulation by social bots. We design a series of shortcut scenarios by constructing spurious associations between user labels and superficial textual cues to evaluate model robustness. Results show that shifts in irrelevant feature distributions significantly degrade social bot detector performance, with an average relative accuracy drop of 32% in the baseline models. To tackle this challenge, we propose mitigation strategies based on large language models, leveraging counterfactual data augmentation. These methods mitigate the problem from data and model perspectives across three levels, including data distribution at both the individual user text and overall dataset levels, as well as the model’s ability to extract causal information. Our strategies achieve an average relative performance improvement of 56% under shortcut scenarios.

💡 Research Summary

The paper investigates the vulnerability of social‑bot detection systems to shortcut learning, a phenomenon where models rely on superficial textual cues rather than truly causal signals. The authors focus on four shallow text attributes—sentiment, topic, emotion, and human values—that are easy for bots to manipulate. By deliberately constructing spurious correlations between these attributes and the bot/human labels, they create “shortcut” training and test splits on three widely used datasets (Cresci‑2015, Cresci‑2017, and Twibot‑20). In the shortcut training set, for example, all positively‑sentiment tweets are labeled as bots while negative‑sentiment tweets are labeled as humans; the shortcut test set reverses this relationship.

Two representative detection models are evaluated: a text‑only classifier that uses frozen RoBERTa embeddings followed by a multilayer perceptron, and a graph‑based classifier (BotRGCN) that incorporates the same embeddings as node features. Under the shortcut scenario, both models suffer dramatic performance drops—average accuracy declines of about 33 % for the text‑only model and 30 % for the graph model. Certain attributes, especially emotion and values, cause accuracy reductions exceeding 90 % in extreme cases. The authors also test a causal‑decoupling debiasing method (CIGA) combined with abstract‑meaning‑representation graphs (AMR), but find it insufficient to mitigate the degradation.

To address this, the paper proposes a counterfactual data augmentation (CDA) pipeline powered by large language models (LLMs). The key idea is to generate counterfactual versions of each tweet that flip a targeted shallow attribute while preserving the original semantic content and the ground‑truth label. This is achieved via carefully crafted prompts (e.g., “Change the sentiment from positive to negative, keep the label unchanged, and modify as few words as possible”). The LLM (implemented via the DeepSeek API) rewrites each tweet, producing a paired dataset of original and counterfactual texts.

The mitigation strategy operates on three levels:

- Text‑level debiasing – For each user, the system selects a balanced subset of original and rewritten tweets that minimizes the bias score for the targeted attribute.

- Dataset‑level debiasing – Across the whole training corpus, class‑conditional distributions of the shallow attributes are re‑balanced by mixing original and counterfactual examples, discarding overly biased samples, and augmenting under‑represented ones.

- Model‑level debiasing – A contrastive learning objective is added so that embeddings of original–counterfactual pairs are pulled together while embeddings of texts with different labels are pushed apart. This encourages the language model to focus on causal semantics rather than superficial cues.

When all three components are applied, the same shortcut test conditions yield substantial recovery: the text‑only model improves by an average of 59 % relative to the degraded baseline, and the graph‑based model improves by 53 %. Even the most severely affected attribute groups (e.g., emotion, values) see performance rebounds of 70 % or more.

The contributions of the work are threefold: (1) a systematic quantification of shortcut learning in social‑bot detection, demonstrating that reliance on shallow textual features can cripple out‑of‑distribution (OOD) robustness; (2) an empirical demonstration that existing debiasing techniques (e.g., AMR+CIGA) are inadequate for this problem; and (3) a novel LLM‑driven counterfactual augmentation framework that mitigates spurious correlations at the text, dataset, and model levels, substantially enhancing OOD performance.

Beyond bot detection, the study highlights a general recipe for combating shortcut learning in any text‑centric classification task: identify vulnerable shallow attributes, generate high‑quality counterfactuals with LLMs, and integrate them through balanced sampling and contrastive training. This approach leverages the generative flexibility of modern LLMs to produce realistic, label‑preserving variations, thereby forcing downstream classifiers to learn deeper, causally relevant representations. The paper thus offers both a diagnostic lens for hidden biases in social‑media analytics and a practical, scalable solution that can be adopted by researchers and practitioners aiming for more trustworthy AI systems in dynamic, real‑world environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment