Triple/Double-Debiased Lasso

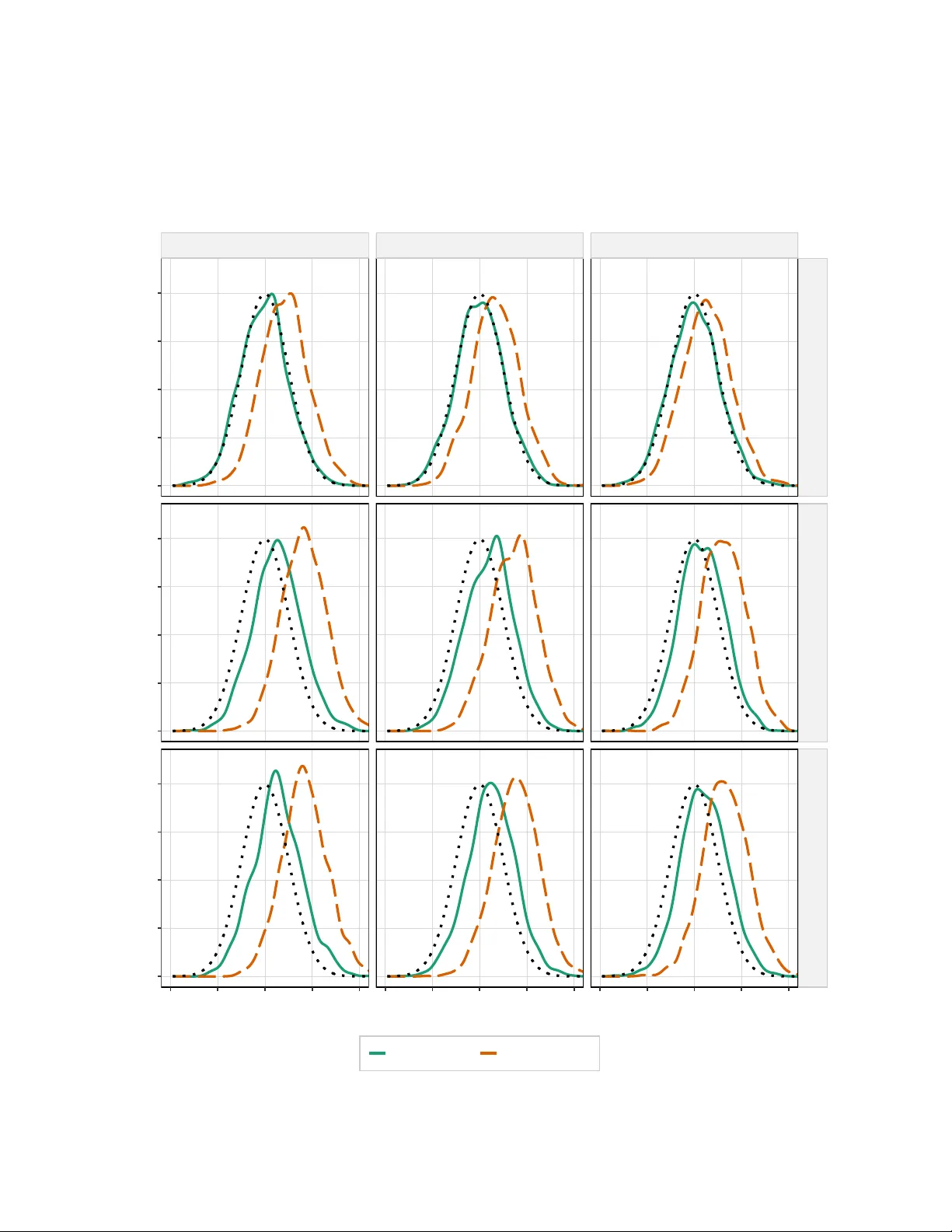

In this paper, we propose a triple (or double-debiased) Lasso estimator for inference on a low-dimensional parameter in high-dimensional linear regression models. The estimator is based on a moment function that satisfies not only first- but also sec…

Authors: Denis Chetverikov, Jesper R.-V. Sørensen, Aleh Tsyvinski
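The sketch below illustrates the baseline that the abstract builds on. It is the standard (once-)debiased Lasso in partialling-out form, whose moment function is only first-order orthogonal. It is not the triple (double-debiased) estimator proposed in the paper. Several choices are assumptions made for illustration. The model is taken to be partially linear, y = θ·d + x'β + e with d = x'γ + v. The penalty `lam` is fixed rather than data-driven, there is no cross-fitting, and the simulated design is hypothetical. All function names (`lasso_residuals`, `debiased_lasso`, `simulate`) are hypothetical. Pure Python is used so the example runs without third-party packages.

```python
import math
import random


def soft_threshold(z: float, t: float) -> float:
    """S(z, t) = sign(z) * max(|z| - t, 0)."""
    if z > t:
        return z - t
    if z < -t:
        return z + t
    return 0.0


def center(v):
    """Subtract the sample mean, so no intercept is needed."""
    m = sum(v) / len(v)
    return [x - m for x in v]


def lasso_residuals(X, y, lam, max_iter=500, tol=1e-8):
    """Minimize (1/(2n))||y - Xb||^2 + lam*||b||_1 by cyclic coordinate descent.

    X (list of rows) and y are assumed centered. Returns the residuals y - Xb.
    """
    n, p = len(X), len(X[0])
    b = [0.0] * p
    r = list(y)  # residuals at the starting point b = 0
    col_ss = [sum(X[i][j] ** 2 for i in range(n)) / n for j in range(p)]
    for _ in range(max_iter):
        max_step = 0.0
        for j in range(p):
            # Inner product of x_j with the partial residual that excludes x_j's own term.
            rho = sum(X[i][j] * r[i] for i in range(n)) / n + col_ss[j] * b[j]
            new_bj = soft_threshold(rho, lam) / col_ss[j]
            step = new_bj - b[j]
            if step != 0.0:
                for i in range(n):
                    r[i] -= X[i][j] * step
                b[j] = new_bj
                max_step = max(max_step, abs(step))
        if max_step < tol:
            break
    return r


def debiased_lasso(y, d, X, lam):
    """Partialling-out estimate of theta in y = theta*d + x'beta + e, d = x'gamma + v.

    Returns (theta_hat, standard_error).
    """
    n = len(y)
    cols = [center([row[j] for row in X]) for j in range(len(X[0]))]
    Xc = [list(row) for row in zip(*cols)]
    ry = lasso_residuals(Xc, center(y), lam)  # y with its Lasso fit on x removed
    rd = lasso_residuals(Xc, center(d), lam)  # d with its Lasso fit on x removed
    # Solve the sample moment mean((ry - theta * rd) * rd) = 0 for theta.
    s_dd = sum(a * a for a in rd) / n
    theta = sum(a * b for a, b in zip(rd, ry)) / n / s_dd
    # Heteroskedasticity-robust (sandwich) standard error.
    meat = sum(((b - theta * a) * a) ** 2 for a, b in zip(rd, ry)) / n
    se = math.sqrt(meat / s_dd ** 2 / n)
    return theta, se


def simulate(seed=0, n=200, p=50, theta_true=1.0, lam=0.2):
    """Hypothetical sparse design: d depends on x0, x1; y depends on d, x0, x2."""
    rng = random.Random(seed)
    X = [[rng.gauss(0.0, 1.0) for _ in range(p)] for _ in range(n)]
    d = [0.5 * x[0] + 0.5 * x[1] + rng.gauss(0.0, 1.0) for x in X]
    y = [theta_true * di + x[0] + 0.5 * x[2] + rng.gauss(0.0, 1.0)
         for di, x in zip(d, X)]
    return debiased_lasso(y, d, X, lam)


if __name__ == "__main__":
    theta_hat, se = simulate()
    print(f"theta_hat = {theta_hat:.3f}, "
          f"95% CI = [{theta_hat - 1.96 * se:.3f}, {theta_hat + 1.96 * se:.3f}]")
```

The moment (ry − θ·rd)·rd is insensitive to first-order errors in the two Lasso fits. Because of this, θ̂ ± 1.96·se can serve as an approximate confidence interval even though both nuisance regressions are penalized. In practice one would pick `lam` by a plug-in rule or cross-validation and add cross-fitting.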