Scalable Learning of Multivariate Distributions via Coresets

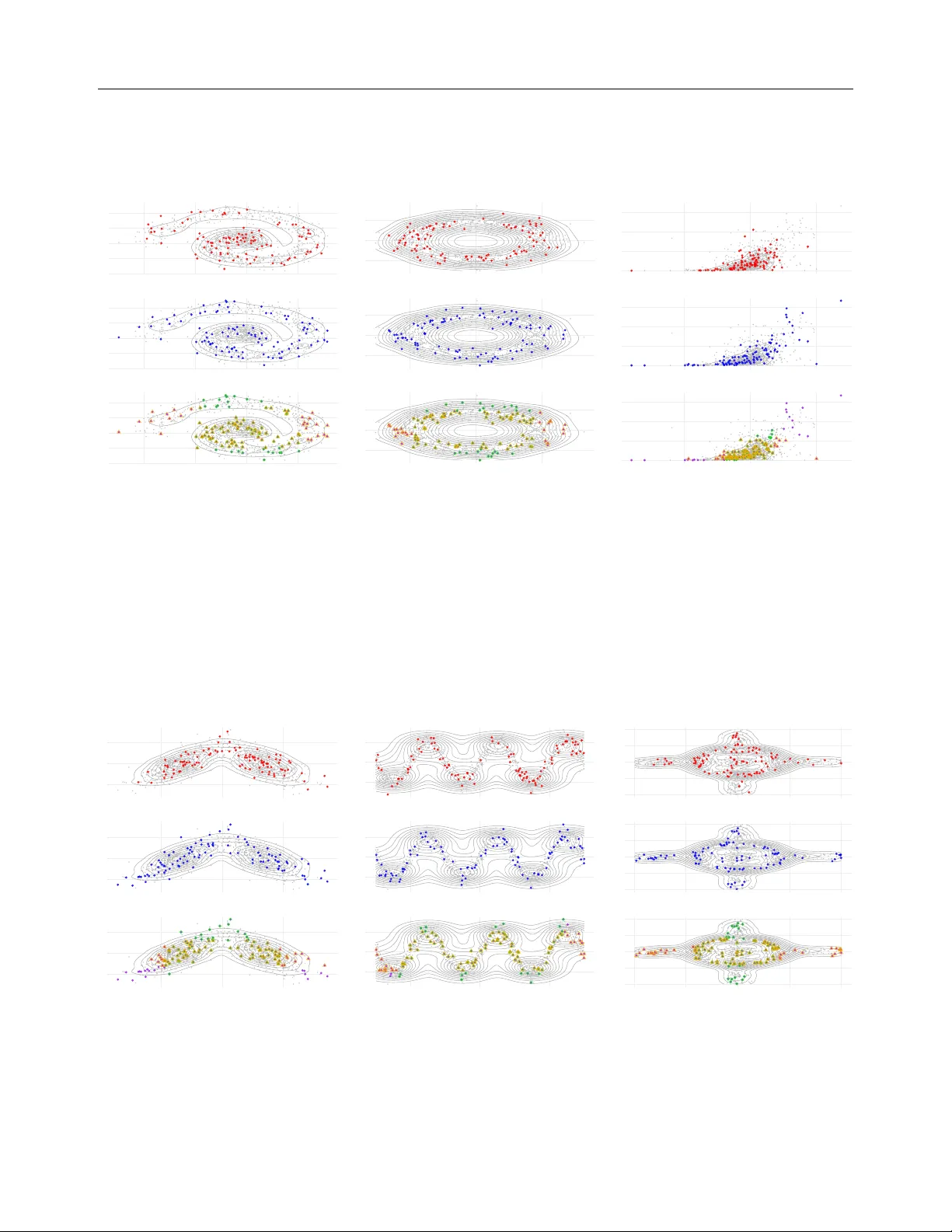

Efficient and scalable non-parametric or semi-parametric regression analysis and density estimation are crucial to statistics and machine learning. However, available methods are limited in their ability to handle large-sc…

Authors: Zeyu Ding, Katja Ickstadt, Nadja Klein