Embodied Science: Closing the Discovery Loop with Agentic Embodied AI

Artificial intelligence has demonstrated remarkable capability in predicting scientific properties, yet scientific discovery remains an inherently physical, long-horizon pursuit governed by experimental cycles. Most current computational approaches a…

Authors: Xiang Zhuang, Chenyi Zhou, Kehua Feng

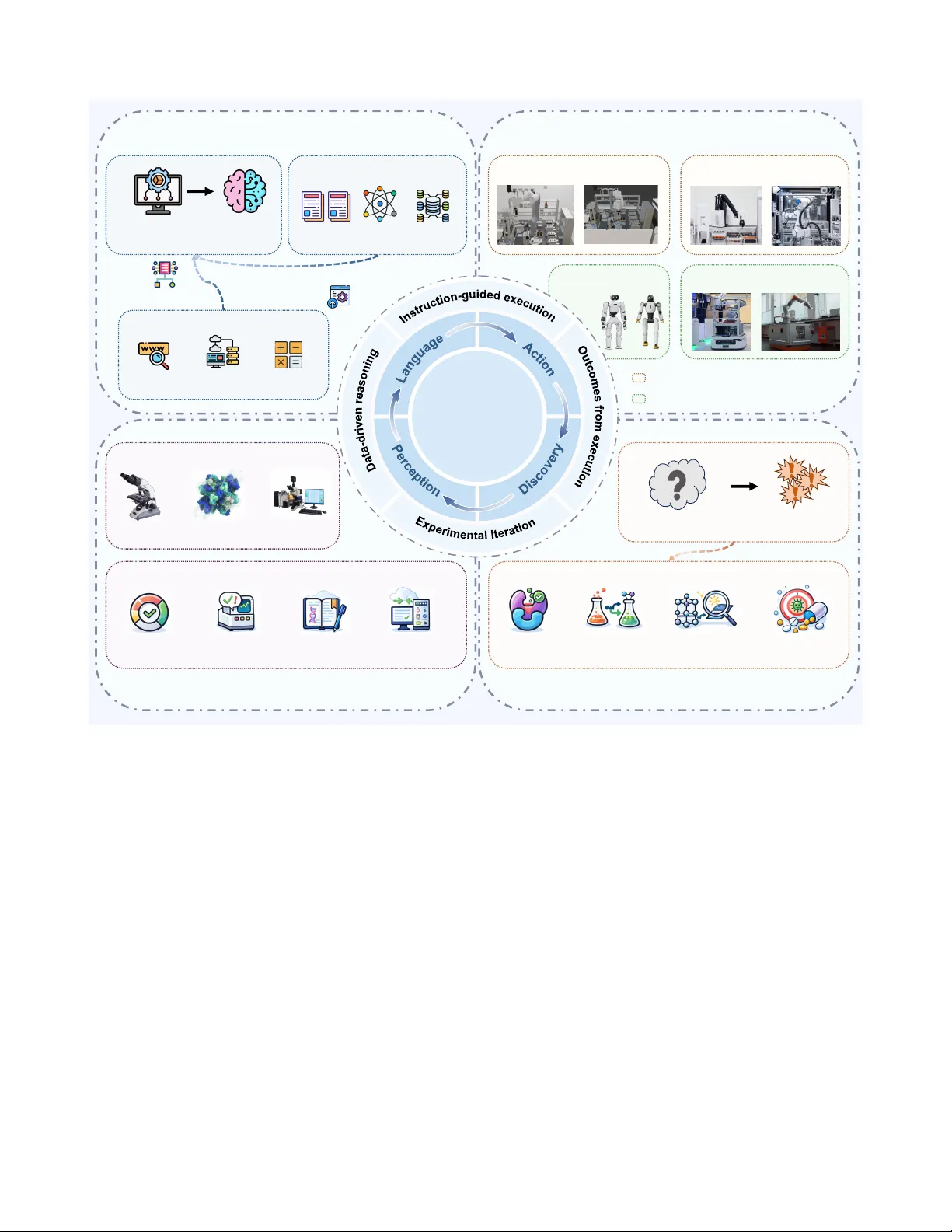

Xiang Zhuang 1,2, Chenyi Zhou 1, Kehua Feng 1, Zhihui Zhu 1, Yunfan Gao 3, Yijie Zhong 3, Yichi Zhang 1, Junjie Huang 1, Keyan Ding 1,*, Lei Bai 2,*, Haofen Wang 3,*, Qiang Zhang 1,*, and Huajun Chen 1,*

1 Zhejiang University, Hangzhou, China
2 Shanghai Artificial Intelligence Laboratory, Shanghai, China
3 Tongji University, Shanghai, China
* Corresponding authors: baisanshi@gmail.com, haofen.wang@tongji.edu.cn, {dingkeyan,qiang.zhang.cs,huajunsir}@zju.edu.cn

ABSTRACT

Artificial intelligence has demonstrated remarkable capability in predicting scientific properties, yet scientific discovery remains an inherently physical, long-horizon pursuit governed by experimental cycles. Most current computational approaches are misaligned with this reality, framing discovery as isolated, task-specific predictions rather than continuous interaction with the physical world. Here, we argue for embodied science, a paradigm that reframes scientific discovery as a closed loop tightly coupling agentic reasoning with physical execution. We propose a unified Perception–Language–Action–Discovery (PLAD) framework, wherein embodied agents perceive experimental environments, reason over scientific knowledge, execute physical interventions, and internalize outcomes to drive subsequent exploration. By grounding computational reasoning in robust physical feedback, this approach bridges the gap between digital prediction and empirical validation, offering a roadmap for autonomous discovery systems in the life and chemical sciences.

1 Introduction

Artificial intelligence is reshaping how scientific knowledge is produced and acted upon [1].
Over the past decade, data-driven models have delivered striking advances in problems once considered intractable, from accurate protein structure prediction [2, 3] to learned models for molecular property prediction [4–6], generative design [7, 8], and synthesis planning [9]. These successes, together with the rise of foundation models that unify representations across modalities [10–12], have begun to shift AI for Science (AI4S) from a collection of specialized predictors toward more general-purpose scientific engines [13]. Yet a central tension remains: scientific discovery is not a single-shot inference problem. Breakthroughs typically emerge from long-horizon, iterative interaction with the physical world, through a sustained loop of hypothesis formation, experimental design, execution under real constraints, analysis, and model revision [14, 15]. Even when a predictive model is excellent, the discovery process can stall if the system cannot decide what to do next, cannot perceive the signals from instruments, or cannot reliably translate decisions into executable laboratory operations.

Recent efforts make this mismatch visible in the form of a bifurcated landscape. On one side, large language model (LLM)-based agents [16–20] expand cognitive scope through language-mediated reasoning, planning, and tool use, often translating high-level scientific intent into experimental plans, code, and workflows. On the other side, automated and robotic laboratories [21, 22] demonstrate reliable embodied execution, enabling sustained experimentation and closed-loop optimization within well-defined experimental spaces.
This split reflects broader empirical evidence that AI’s currently attributed scientific impact remains concentrated in cognitive augmentation, especially in data processing and pattern recognition, whereas the next critical step is to expand sensory and experimental capacity to enable the search for and acquisition of new forms of evidence beyond “standing” datasets [23]. Crucially, this split is not an incidental integration gap that will vanish by “connecting” modules. Each line optimizes a different projection of discovery under the prevailing framing: cognition is powerful but weakly grounded in instrument-level evidence and physical constraints, whereas execution is robust but often optimized around predefined objectives and procedural boundaries. Without reframing discovery as an end-to-end closed loop, incremental scaling (such as stronger planners, better robots, or larger models) tends to reproduce fragmentation rather than resolve it. Thus, autonomy in scientific discovery is a property of the coupled system: the capacity expansion required for continued exploration [23] collapses when any of three structural requirements is externalized, namely perception, cognition, and action.

[Figure 1 panels: left, prediction-centric AI for science (human-orchestrated, AI-assisted scientific discovery with a disembodied AI assistant); right, embodied science (long-horizon autonomous scientific discovery with agentic embodied AI).]

Figure 1. From prediction-centric AI for science to embodied science. Left: the dominant AI4S workflow is human-orchestrated. Experts curate data sources into datasets, train task-specific models, and use predictions to inform the next experimental step; execution remains human-managed, yielding a loosely coupled loop across stages. Right: embodied science reframes discovery as a closed-loop process of interaction with the physical world. Agentic embodied AI provides the enabling system layer that operationalizes this paradigm by integrating Perception–Language–Action–Discovery (PLAD): it grounds cognition in instrument-derived evidence (Perception), conducts reasoning and planning (Language), carries out embodied experimentation (Action), and internalizes outcomes into scientific insight (Discovery). The system can sustain long-horizon autonomous scientific discovery, executing iterative cycles beyond task-bounded, single-shot assistance.

At the level of perception, scientific evidence is generated by instruments, not datasets: raw spectra, chromatograms, microscopy streams, sensor logs, calibration traces, and metadata that capture drift, failure, and context. Models trained on curated tables often lack the ability to parse these multimodal, imperfect signals and to perform goal-directed sensing (e.g., adaptively zoom, re-measure, recalibrate, or switch modalities when anomalies occur). At the level of cognition, most AI4S systems optimize well-defined tasks (predict a structure, rank candidates, regress a property), but long-horizon discovery requires persistent goal management, experimental reasoning under uncertainty, and planning over contingencies and costs [24]. It demands not only selecting the next experiment, but also deciding what to measure, when to intervene, and how to revise hypotheses as evidence accumulates. At the level of action, discovery hinges on interventions in the world [25].
Many AI4S pipelines terminate at candidate suggestions, while real laboratories require precise, verifiable actions: manipulating reagents, configuring instruments, executing protocols, ensuring safety constraints, and recovering from errors. Without robust action grounding, “recommendations” cannot mature into discoveries.

Here, we argue for embodied science as a foundational paradigm for long-horizon autonomous discovery. In embodied science, AI does not merely analyze data or recommend actions; it expands sensory and experimental capacity by participating directly in experimental workflows as part of a closed loop that couples perception of instrument signals, knowledge-grounded reasoning, and physical intervention. Scientific progress, under this paradigm, emerges from continuous engagement with real experimental environments rather than from one-off computation over static datasets. This perspective reframes AI-driven discovery as an exploration-driven process, in which hypotheses, experimental strategies, and operational behaviors co-evolve through repeated interaction with the physical world.

2 Scoping Embodied Science and Agentic Embodied AI

AI-driven discovery is transitioning from tool-based augmentation to system-level reconfiguration of the scientific method. To avoid terminology drift, we scope two concepts that organize this Perspective, Embodied Science and Agentic Embodied AI, and we define long-horizon autonomous scientific discovery as the operational outcome they aim to enable.

2.1 Embodied Science

Definition. We use Embodied Science to denote a paradigm in which discovery is treated as an embodied, long-horizon, closed-loop process. AI is integrated into real experimental workflows and operates across the complete cycle of discovery by perceiving instrument-generated signals, reasoning with scientific knowledge, and executing laboratory interventions.
Scientific progress therefore arises from sustained interaction with the physical world, rather than from isolated computation over static datasets.

Why embodiment matters in scientific exploration. Embodiment, in this view, is not laboratory automation in disguise. It is what makes AI-driven discovery actionable: plans are realized as physical interventions in the laboratory, and their consequences are returned as instrument-grounded feedback. Rather than mechanically executing fixed protocols, embodied capabilities enable systems to carry intent into the real world, observe what actually happens, and adapt subsequent decisions accordingly.

2.2 Agentic Embodied AI

Embodied Science defines what kind of discovery process is needed; Agentic Embodied AI specifies what kind of AI system can realize it.

Definition. Agentic Embodied AI is a persistent cyber–physical scientific agent that couples (i) scientific cognition, (ii) experimental perception, and (iii) laboratory action within a single closed-loop controller, operating under explicit feasibility and safety constraints.

Three properties are essential: (1) Agentic autonomy: the ability to manage goals over extended horizons, plan under uncertainty and cost, and revise strategies in response to outcomes; (2) Embodiment in the experimental loop: interfaces to raw instrument streams, operational state, and actuation primitives to ground reasoning in laboratory reality; (3) Long-horizon persistence: memory, provenance, monitoring, and recovery behaviors that maintain continuity across cycles rather than treating each run as a standalone episode.

2.3 Long-Horizon Autonomous Scientific Discovery

Because “autonomy” is often used loosely, we adopt an operational criterion:

Operational criterion.
A system demonstrates long-horizon autonomous discovery if it can sustain multiple end-to-end discovery cycles (hypothesis → experiment design → physical execution → interpretation → revision) over extended time spans with minimal human intervention, while maintaining reproducibility, provenance, and safety.

This criterion sets a higher bar than most current demonstrations: it requires the loop to remain closed beyond a single episode, sustaining autonomous operation across instrument drift, stochastic outcomes, and accumulating uncertainty.

3 Analyzing the Current Landscape: Reasoning-Centric and Execution-Centric Paradigms

Motivated by the need to bridge computational reasoning and physical experimentation, scientific agents and embodied AI have recently emerged as promising routes toward more autonomous scientific discovery. In practice, however, existing efforts have largely bifurcated into two partial realizations: reasoning-centric systems that advance hypothesis generation and in silico exploration, and execution-centric platforms that enable autonomous, high-throughput experimentation within well-defined procedural boundaries. Each excels at a single component of the discovery process while remaining fundamentally decoupled from the others. Below, we analyze the current landscape and argue that these limitations are structural rather than incremental.

3.1 Disembodied Scientific Cognition: Reasoning without Physical Action

Artificial intelligence has long supported scientific research through data-driven modeling and prediction, a trajectory often associated with the Fourth Paradigm of data-intensive science [26]. In recent years, this role has expanded toward cognition-centric scientific agents that aim to elevate AI from a passive analytical tool to an active reasoning entity.
As summarized in Table 1, these approaches are unified by a common paradigm: they operate primarily on curated datasets, emphasize language-based scientific reasoning, and remain physically disembodied, with experimental execution and validation performed by humans.

Within this paradigm, a first subclass focuses on task decomposition and planning. Systems such as ChemCrow [27], Biomni [28], SciToolAgent [29], and ToolUniverse [30] leverage large language models to orchestrate domain-specific tools, dynamically assembling workflows for synthesis planning, reaction optimization, or biomedical analysis. Their strength lies in structuring complex scientific tasks and coordinating heterogeneous computational resources. However, their operational boundary is fundamentally cognitive: experimental actions are abstracted as tool calls, and physical execution remains external.

A second subclass emphasizes hypothesis generation and problem-space exploration. Platforms such as Virtual Lab [31], Robin [32], and AI Co-scientist [33] aim to emulate aspects of collaborative scientific reasoning by coordinating evidence gathering, hypothesis refinement, and iterative discussion across specified research problems. These systems move beyond task execution toward exploratory inquiry, yet their exploration remains confined to literature, databases, and simulations. Hypotheses are assessed manually by human experts rather than through autonomous experimental falsification, leaving the core scientific loop incomplete.

A third line of work frames discovery as iterative search and optimization in silico. Systems such as AlphaEvolve [34], DeepScientist [35], and InternAgent [36, 37] formalize scientific progress as a feedback-driven process in which hypotheses or programs are iteratively refined based on computational evaluation.
While this paradigm introduces an explicit notion of iteration and feedback, the feedback signal is constrained to the execution of computational algorithms. Lacking physical embodiment and the ability to interact with the material world, these systems struggle to generalize across broader scientific domains, often producing candidates that fail to translate into physical experimental success.

Finally, some efforts toward end-to-end research in silico, exemplified by systems such as AI Scientist [38] and Kosmos [39], extend cognition-centric agents to include automated manuscript writing and reporting. Although these systems appear to close the research loop across problem formulation, methodological design, experimental execution, and writing, their scope remains confined to computational domains. Without physical embodiment, they remain incapable of engaging with tangible experimental environments, leaving the loop fundamentally decoupled from the complexities of the material world.

Despite their diversity, reasoning-centric scientific agents share a fundamental structural limitation. Without embodied execution in real experimental environments, hypotheses cannot be autonomously tested, falsified, or revised through sustained interaction with the physical world. Feedback is indirect, delayed, or entirely computational, leading to a form of cognitive closure in which reasoning saturates without experimental grounding. Consequently, these approaches struggle to support long-horizon autonomous scientific discovery, which requires repeated, embodied cycles of hypothesis testing and revision.

Table 1. Landscape of current AI4S approaches toward autonomous scientific discovery. Existing efforts cluster into two partial realizations: reasoning-centric but physically disembodied systems, and execution-centric but cognitively shallow platforms. Notably, neither paradigm achieves a sustained coupling between language-level scientific reasoning and embodied experimental action.

| Paradigm | Characteristics | Subtype | Example approaches |
| --- | --- | --- | --- |
| Language-heavy, action-light (physically disembodied) [16, 17] | Strong language-based reasoning, but no coupling to embodied action; operate primarily on curated datasets rather than raw signals from instruments; remain physically disembodied, with experimental execution carried out by human scientists | Task decomposition & planning | ChemCrow [27]; Biomni [28]; SciToolAgent [29]; ToolUniverse [30] |
| | | Hypothesis & problem exploration | Virtual Lab [31]; Robin [32]; AI Co-scientist [33] |
| | | Iterative search & optimization in silico | AlphaEvolve [34]; DeepScientist [35]; InternAgent [36, 37] |
| | | End-to-end research in silico | AI Scientist [38]; Kosmos [39] |
| Action-heavy, language-light (cognitively shallow) [21, 22] | Reliable embodied execution, but limited language-level reasoning and hypothesis formation; operate directly on raw instrument signals using heuristic decision-making (e.g., Bayesian optimization); enable autonomous execution within narrow task scopes and fixed procedural boundaries | Single-step, instrument-bound | Automated liquid handlers; robotic pipetting and weighing systems |
| | | Multi-step, protocol-driven automation | Chemputer [40]; FLUID [41] |
| | | Closed-loop execution and optimization | A-Lab [42]; RoboChem [43]; CRESt [44] |
| | | Language-instructed experimental execution | Coscientist [45]; ChemAgents [46] |

3.2 Execution-Centric Embodiment: Action without Scientific Understanding

In parallel with advances in cognition-centric scientific agents, embodied automation has made substantial progress toward the physical execution of experiments [47]. As characterized in Table 1, execution-centric embodied systems operate on raw instrument signals, rely primarily on heuristic or statistical decision-making, and enable direct interaction with the physical world.
However, these systems are typically cognitively shallow: while they excel at executing experiments, they lack the capacity for mechanism-aware reasoning and hypothesis-driven inquiry.

At one end of the execution-centric spectrum are single-step, instrument-bound systems. Automated liquid handlers, robotic pipetting platforms, and weighing systems exemplify this class. They automate isolated experimental operations with high precision and repeatability, yet each action is executed independently of the broader scientific context. Consequently, these systems constitute the basic infrastructure of automated experimentation: they robustly perform prescribed single-step actions, yet lack the capacity to analyze outcomes, reason about experimental context, or adapt behavior beyond explicit instructions.

A more advanced, yet still execution-bound subclass extends automation to multi-step, protocol-driven execution. Platforms such as the Chemputer [40] and FLUID [41] encode experimental procedures as machine-executable workflows, enabling the automated realization of complex, multi-stage protocols. Compared to single-step systems, this approach extends automation from isolated actions to coordinated, multi-stage experimental execution. Although execution now spans multiple stages, the system does not analyze intermediate outcomes, reason about experimental context, or deviate from the prescribed workflow when unexpected results occur.

At the most integrated end of execution-centric systems are closed-loop, feedback-driven platforms, including A-Lab [42], RoboChem [43], and CRESt [44]. By integrating robotic execution with online characterization and statistical optimization methods, including Bayesian optimization and evolutionary search, these platforms demonstrate impressive autonomy over extended experimental campaigns, adapting parameters in response to observed outcomes and achieving local optimization in high-dimensional spaces.
However, the closed loop operates primarily at the level of numerical feedback rather than scientific representation. When experiments fail or yield anomalous results, adaptation proceeds through parameter adjustment rather than counterfactual reasoning or hypothesis revision.

With the introduction of large language models, robotic systems have begun to blur the boundary between execution-centric automation and cognitive planning, using LLMs to design experimental workflows and drive embodied laboratory systems for physical execution [45, 46]. Despite this progress, LLM integration primarily improves the flexibility of workflow design and control, without conferring sustained, long-horizon scientific agency. Experimental outcomes are used to refine individual tasks, leading to episodic, task-bounded reasoning rather than cumulative, discovery-driven iteration.

Despite increasing integration, execution-centric systems face two persistent limitations at the level of embodiment. First, most platforms rely on fixed or rail-mounted manipulators tightly coupled to predefined laboratory layouts, which provide reliability but limit physical flexibility across heterogeneous instruments and reconfigurable environments. Second, the development, calibration, and maintenance of such systems remain labor-intensive, requiring substantial human effort to debug workflows and adapt execution logic to new experimental settings.

To alleviate these constraints, execution-centric autonomy has been extended through mobile embodiments and digital twin–based simulation. Mobile robotics [48, 49] address the limitation of rigid embodiment by physically interconnecting spatially distributed instruments, thereby expanding the scope of executable workflows beyond fixed workcells.
In parallel, digital twin frameworks such as MATTERIX [50] target the engineering bottleneck by enabling virtual validation and sim-to-real transfer [51], reducing the cost and risk associated with deploying automated experimental workflows. However, both advances operate strictly at the level of execution. They improve flexibility and reliability in how experiments are carried out, but do not enable systems to interpret experimental outcomes, revise scientific hypotheses, or engage in mechanism-aware reasoning. In this sense, mobile robots and digital twins address execution bottlenecks, not scientific cognition.

Consequently, execution-centric embodied systems often function as powerful execution engines rather than as scientists. Their decision-making is guided by predefined objective functions and fixed parameter spaces, limiting their ability to generalize beyond narrowly scoped tasks. Although they may achieve efficient optimization within constrained domains, they do not accumulate transferable scientific insight. Experimentation is treated as a process of executing and scoring trials, rather than as an active inquiry in which hypotheses are formulated, challenged, and revised. This gap between action and understanding gives rise to an illusion of scientific progress: local performance improves, yet discovery remains decoupled from scientific understanding.

3.3 Why Incremental Scaling Is Insufficient

The limitations above do not disappear by scaling one side. More powerful language reasoning does not automatically yield procedural correctness, instrument awareness, or safe execution. More capable robotics does not automatically yield hypothesis-driven inquiry, evidence integration, or scientific generalization. Long-horizon autonomy requires a unified system-level coupling between perception, language-level reasoning, embodied action, and cumulative discovery [52, 53].
This motivates a closed-loop framework that treats autonomy as an end-to-end property rather than a component upgrade.

4 PLAD: Towards Closed-Loop Agentic Embodied AI for Scientific Discovery

We argue that agentic embodied AI represents a critical technological pathway toward long-horizon autonomous scientific discovery. To this end, we propose the Perception–Language–Action–Discovery (PLAD) closed-loop paradigm (Figure 2) as an overarching framework for implementation. Unlike the Vision–Language–Action (VLA) [54] paradigm that underpins general-purpose embodied intelligence, where the primary objective is to understand open environments, generate linguistic descriptions, and execute physical actions, PLAD is explicitly centered on scientific discovery as its core goal. Accordingly, its design is aligned with the distinctive requirements of scientific research across cognitive structures, perceptual targets, and forms of action.

Within PLAD, scientific discovery is modeled as a continuously operating closed-loop process. An agent perceives the experimental environment (Perception), reasons and plans with the support of scientific language and knowledge (Language), executes experiments through embodied actions in real laboratory settings (Action), and internalizes experimental outcomes as new scientific insights (Discovery), which in turn drive subsequent rounds of exploration. Below, we introduce each component of the PLAD paradigm in detail.
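The control flow of the loop described above can be made concrete with a toy sketch. Everything in it is an illustrative assumption rather than part of the PLAD proposal: the instrument is a hypothetical simulator with a hidden optimum temperature, the "hypothesis" is simply an interval believed to contain that optimum, and the planning step is a ternary-search rule standing in for language-level reasoning. The point is only to show how insight carried across cycles shapes the next round of experiments.

```python
from dataclasses import dataclass, field

TRUE_OPTIMUM = 62.0  # hidden from the agent; used only by the simulator


@dataclass
class Insight:
    """Discovery state carried across cycles: an interval hypothesis plus evidence."""
    low: float
    high: float
    evidence: list = field(default_factory=list)


def simulated_instrument(temperature):
    """Hypothetical stand-in for a real instrument; returns a raw reading."""
    return {
        "temperature": temperature,
        "raw_yield": 100.0 - (temperature - TRUE_OPTIMUM) ** 2,
        "status": "ok",
    }


def perceive(raw):
    """Perception: convert a raw reading into structured evidence."""
    return {"x": raw["temperature"], "y": raw["raw_yield"], "valid": raw["status"] == "ok"}


def plan(insight):
    """Language (toy stand-in): propose two probes inside the current hypothesis."""
    third = (insight.high - insight.low) / 3.0
    return [insight.low + third, insight.high - third]


def act(temperatures):
    """Action: execute the planned experiments on the (simulated) instrument."""
    return [simulated_instrument(t) for t in temperatures]


def discover(insight, evidence):
    """Discovery: revise the hypothesis interval using the new evidence."""
    left, right = evidence
    insight.evidence.extend(evidence)
    if left["y"] < right["y"]:
        insight.low = left["x"]    # optimum lies to the right of the left probe
    else:
        insight.high = right["x"]  # optimum lies to the left of the right probe
    return insight


def run_plad(cycles, low=20.0, high=100.0):
    """Run several closed-loop cycles; each cycle starts from the revised insight."""
    insight = Insight(low, high)
    for _ in range(cycles):
        readings = act(plan(insight))
        evidence = [perceive(r) for r in readings]
        if all(e["valid"] for e in evidence):  # skip revision on failed readings
            insight = discover(insight, evidence)
    return insight


final = run_plad(cycles=20)
print(f"hypothesis interval: [{final.low:.3f}, {final.high:.3f}]")
```

In a real system each function would be far richer (multimodal perception, LLM-based planning, robotic execution), but the dependency structure is the same: the output of `discover` is the input to the next `plan`.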
[Figure 2 panels: Instruments as Extensions of Scientific Senses (instrument-mediated physical observables; instrument-defined experimental states); Reasoning with Models, Knowledge, and Tools (scientific brain: general large language model, specialized knowledge, specialized tools); Embodied Execution in Physical World (stationary manipulator, linear track manipulator, mobile manipulator, humanoid robots); Internalizing Outcomes as Scientific Insight (cumulative scientific knowledge).]

Figure 2. The PLAD loop for long-horizon autonomous scientific discovery. Perception transforms raw instrument signals into structured evidence. Language integrates foundation models with specialized knowledge and tools to support hypothesis formation, reasoning, and experimental planning. Action compiles plans into verifiable laboratory operations. Discovery internalizes outcomes into transferable scientific knowledge that shapes subsequent exploration across cycles.

4.1 Perception: Instruments as Extensions of Scientific Senses

Perception characterizes an agent’s capacity to sense the scientific environment through instruments that extend the limits of human perception. In embodied science, instruments do not merely record data; they function as artificial scientific senses, defining what aspects of the physical world are observable and how experimental information is structured. Scientific perception comprises two complementary forms of instrument sensing.
First, instruments provide instrument-mediated physical observations, through which latent physical phenomena are rendered observable. These include high-dimensional signals such as microscopy images, cryo-electron microscopy reconstructions, spectral measurements, and other modality-specific outputs. Such observations expose structural, dynamical, or compositional properties of experimental systems that are inaccessible to unaided human senses.

Second, instruments define instrument-defined experimental states, which encode the operational and procedural context of experimentation. These include progress indicators, equipment status, and structured records maintained in electronic lab notebooks or laboratory information systems. Rather than capturing physical phenomena directly, these signals formalize the evolving state of an experiment, enabling agents to track execution, detect deviations, and coordinate multi-step workflows.

Together, these two forms allow embodied agents to perceive not only what is occurring in an experiment, but also where it resides within the broader scientific process, forming a stable foundation for closed-loop reasoning and action.

4.2 Language: Reasoning with Models, Knowledge, and Tools

Language constitutes the “scientific brain” of an agent, responsible for scientific reasoning, interpretation, and planning. Within PLAD, this component is centered on large language models (LLMs) [18, 19], understood as a general class of foundation models that encompass multimodal LLMs [11, 12, 55] capable of reasoning over heterogeneous scientific inputs. While LLMs supply general reasoning capability, reliable scientific intelligence cannot emerge from models alone. Instead, it arises from a structured integration of models, specialized knowledge, and task-specific tools, enabling a dynamic balance between generality and specialization.
At the model level, LLMs used for scientific tasks must extend beyond natural language understanding to interpret multimodal scientific inputs produced by perception, including experimental data, instrument outputs, and structured records. In addition, the slow-thinking [56] capability of LLMs enables sustained reasoning over complex instrument data as well as hypothesis inference and experimental planning. Such capacity allows models to integrate heterogeneous evidence, examine intermediate conclusions, and reason counterfactually about alternative explanations, experimental conditions, or mechanistic hypotheses. These requirements motivate a set of science-oriented design considerations, including modality-aware encoding for spectra, images, and time series; specialized tokenization schemes for chemical, biological, or materials representations; and architectural support for reasoning over long contexts. Training pipelines further benefit from integrating scientific literature with instrument-generated data, enabling LLMs to acquire long chain-of-thought reasoning patterns that align with empirical scientific practice.

Knowledge provides the specialized grounding and constraint that anchors general LLM reasoning in domain reality. Such knowledge spans unstructured scientific literature and structured resources, including databases and scientific knowledge graphs (Sci-KGs) [57]. Sci-KGs systematically encode scientific concepts and their relationships in the form of triples, integrating structured databases with unstructured textual knowledge to provide a more comprehensive and stable foundation. Moreover, knowledge graphs can incorporate multimodal information, such as omics data, imaging data, and dynamical trajectories from computational simulations, thereby offering rich contextual support for scientific reasoning. Importantly, grounding and constraint are not abstract properties but are operationalized through concrete mechanisms [58].
During training, structured knowledge can be transformed into reasoning supervision, for example, via knowledge-graph-to-corpus approaches 59 that convert graph structure into long-chain scientific reasoning data, yielding high-reliability learning signals. During inference, literature, databases, and knowledge graphs are integrated through retrieval-augmented generation (RAG) 60,61, supplying authoritative context that constrains model outputs and stabilizes reasoning under uncertainty. In this way, knowledge injects precision, consistency, and domain depth that general-purpose LLMs alone cannot guarantee.

Tools constitute a second axis of specialization by extending reasoning into executable operations. Through tool invocation, such as web search, database querying, and computational model invocation, LLMs can actively acquire external evidence, perform specialized analyses, and validate intermediate hypotheses. Unlike the general reasoning capacity of LLMs, tools encode expert procedures and formal methods that deliver accuracy and reliability on well-defined scientific tasks, effectively externalizing domain expertise into verifiable operations.

Together, this model–knowledge–tool integration enables a dynamic balance between generality and specialization. General-purpose LLMs provide adaptability, contextual understanding, and analogical reasoning across domains; specialized knowledge and tools deliver precision, depth, and task reliability. Within PLAD, agents dynamically adjust their reliance on these components according to task demands, allowing them to robustly interpret scientific data, perform mechanism-aware reasoning, and plan experiments that are both flexible across domains and dependable within specific scientific contexts.
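
To make the model–knowledge–tool integration more concrete, the following minimal Python sketch shows one way a retrieval step over knowledge-graph triples and a tool call could be combined before a model reasons over them. Everything named here is an illustrative assumption rather than part of PLAD: the toy triples, the `retrieve`, `build_context`, `call_tool`, and `grounded_inputs` helpers, and the Michaelis–Menten rate function standing in for a computational tool. The LLM itself is deliberately left out; the sketch only assembles the grounded context and the tool result that a model would receive.

```python
from typing import Callable, Dict, List, Tuple

Triple = Tuple[str, str, str]  # (head, relation, tail)

# Toy scientific knowledge graph; the facts are placeholders for illustration.
KG: List[Triple] = [
    ("enzyme_X", "catalyzes", "reaction_R"),
    ("enzyme_X", "has_cofactor", "zinc"),
    ("reaction_R", "produces", "compound_P"),
]


def retrieve(entity: str, kg: List[Triple]) -> List[Triple]:
    """Retrieval step: return every triple whose head or tail is the entity."""
    return [t for t in kg if entity in (t[0], t[2])]


def build_context(triples: List[Triple]) -> str:
    """Verbalize triples as sentences that could be prepended to a prompt."""
    return "\n".join(f"{h} {r.replace('_', ' ')} {t}." for h, r, t in triples)


def michaelis_menten_rate(vmax: float, km: float, substrate: float) -> float:
    """Example tool: v = Vmax * [S] / (Km + [S])."""
    return vmax * substrate / (km + substrate)


# Hypothetical tool registry mapping tool names to callables.
TOOLS: Dict[str, Callable[..., float]] = {
    "michaelis_menten_rate": michaelis_menten_rate,
}


def call_tool(name: str, **kwargs: float) -> float:
    """Dispatch a named tool call and fail loudly on unknown tools."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**kwargs)


def grounded_inputs(entity: str, tool: str, **tool_args: float) -> dict:
    """Bundle retrieved knowledge and a tool result for downstream reasoning."""
    return {
        "context": build_context(retrieve(entity, KG)),
        "tool_result": call_tool(tool, **tool_args),
    }


if __name__ == "__main__":
    bundle = grounded_inputs(
        "enzyme_X", "michaelis_menten_rate", vmax=10.0, km=2.0, substrate=2.0
    )
    print(bundle["context"])
    print(bundle["tool_result"])
```

Keeping retrieval and tool dispatch as separate, inspectable steps mirrors the division of labor described above: the knowledge graph constrains what the model is told, while the tool delivers a result that can be checked independently of the model.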
4.3 Action: Embodied Execution in Physical World

Action corresponds to the scientific body of an embodied agent and denotes its capacity to intervene in the physical world through experimental execution. In PLAD, action is defined by the agent’s ability to physically manipulate materials, instruments, and experimental processes, thereby grounding scientific reasoning in reality. Embodied execution can be broadly organized along a key dimension: the degree of physical constraint under which an agent operates. Along this axis, embodied forms range from spatially constrained embodiments, which trade autonomy for reliability, to spatially unconstrained embodiments, which prioritize flexibility at the cost of execution certainty.

Spatially constrained embodiments operate within predefined mechanical boundaries and are tightly coupled to laboratory infrastructure, with two representative forms: stationary manipulators and linear track manipulators 62. Stationary manipulators are fixed robotic arms deployed at individual experimental stations, automating well-defined manual operations such as dispensing, sample loading or unloading, and instrument handling. Their limited work envelope enables high precision, stability, and repeatability, but also confines them to step-level execution within isolated experimental stages. Linear track manipulators extend this capability by mounting robotic arms on meter-scale rails that connect multiple functional stations, such as synthesis and characterization zones. This configuration enables coordinated, multi-step experimental workflows and sustained high-throughput execution under predefined pipelines, significantly expanding experimental coverage while preserving execution reliability.
Nevertheless, both forms remain constrained by fixed layouts and preconfigured trajectories, favoring robustness and safety over autonomy and limiting their adaptability to unstructured or rapidly evolving laboratory settings.

In contrast, spatially unconstrained embodiments operate without predefined trajectories or fixed work envelopes. This category includes mobile manipulators, which mount robotic arms on wheeled bases, as well as humanoid robots 48,49. Such embodiments can navigate complex laboratory spaces, transport samples and consumables, and interact with diverse instruments across distributed environments. Their physical freedom enables higher-level autonomy and more closely mimics the behaviors of human researchers. At the same time, this flexibility introduces challenges in motion planning and execution reliability, particularly in safety-critical laboratory settings characterized by dense equipment and intricate procedures.

These two classes of embodiment define complementary regimes of embodied action 63,64. Spatially constrained systems provide a stable and reliable foundation for routine experimentation, while spatially unconstrained systems enable flexibility and integration across heterogeneous laboratory contexts. Among the latter, humanoid robots offer a distinctive long-term advantage: by directly operating within human-oriented laboratory layouts, tools, and protocols, they minimize the need for environment reconfiguration and thus represent a promising pathway toward general-purpose laboratory autonomy. Long-horizon autonomous scientific discovery arises from integrating constrained reliability with unconstrained adaptability, with embodied action in PLAD bridging reasoning and sustained physical experimentation.

4.4 Discovery: Internalizing Execution Outcomes as Scientific Insight

Discovery denotes the process by which an agent internalizes the outcomes of embodied action into scientific insight.
Rather than treating experimental results as isolated observations, discovery abstracts execution feedback into transferable scientific understanding. Within PLAD, this process converts exploratory questions into new insights, such as refined interpretations of enzyme function, inferred reaction pathways, or updated structure–property relationships. By feeding these insights back into subsequent reasoning and action, discovery enables the continual refinement of research objectives and supports long-horizon autonomous scientific discovery.

4.5 PLAD Examples

Using enzyme design and chemical reaction optimization as representative examples, this section illustrates how the PLAD framework can be instantiated across diverse experimental settings (Figure 3). While the underlying scientific questions and experimental modalities differ, PLAD provides a common organizational structure for integrating perception, reasoning, and action into closed-loop discovery processes.

[Figure 3 panels: (a) Enzyme Design; (b) Chemical Reaction Optimization. Each panel is drawn as a Perception, Language, Action, Discovery loop.]

Figure 3. Instantiating PLAD in scientific discovery. (a) Enzyme design: Perception integrates structural and functional measurements; Language forms structure–function hypotheses under constraints; Action executes library construction and screening; Discovery internalizes design rules and counterexamples for future cycles. (b) Chemical reaction optimization: Perception tracks spectroscopic and kinetic trajectories; Language performs mechanism-aware reasoning; Action executes targeted campaigns; Discovery internalizes mechanistic explanations and pathway-level constraints that generalize beyond parameter tuning.

4.5.1 Enzyme Design

In enzyme design, Perception focuses on processing instrument-derived protein structural outputs (e.g., from cryo-electron microscopy or X-ray crystallography), functional and kinetic measurements (such as Km, kcat, and time-resolved activity profiles), and experimental indicators of stability and expression (including thermal stability, expression levels, and purification yield), among other inputs. Language is centered on constructing hypotheses about enzyme structure–function relationships. It aims to infer which structural changes are likely to drive functional improvements. For example, it must determine whether enhanced activity arises from optimized substrate-binding conformations or from increased global stability, and distinguish whether activity loss results from direct perturbation of the active site or from conformational instability caused by distal mutations. Based on these inferences, it proposes design strategies, such as prioritizing mutations at active-site residues or shifting focus to modifying secondary-structure interfaces to enhance stability.
This reasoning process is supported by computational and analytical tools, including protein structure prediction and molecular dynamics simulations to assess how mutations affect conformational stability and dynamics, as well as database queries to examine evolutionary conservation or homologous variant distributions. Action subsequently translates these reasoning outcomes into concrete, embodied experimental execution, implemented through control of physical experiments such as generating mutant libraries on a biofoundry and orchestrating high-throughput expression, purification, and activity screening. Through iterative cycles, structure–function hypotheses are tested and refined over extended experimental horizons.

4.5.2 Chemical Reaction Optimization

In chemical reaction optimization, Perception emphasizes dynamic and process-level signals, including spectroscopic characterization data (such as NMR and IR) as well as time-resolved information, including reaction progress curves and by-product formation trajectories. Together, these signals reflect the temporal evolution of reaction pathways, intermediate states, and operational stability. Language is centered on mechanism-driven chemical reasoning. It focuses on how solvent, additives, and ligand structure modulate key elementary steps, such as oxidative addition, migratory insertion, and reductive elimination. When undesired outcomes arise, such as diminished enantioselectivity or increased by-product formation, the focus shifts from global parameter adjustment to targeted hypothesis refinement. The formation of specific side products may indicate a competing mechanistic pathway, prompting structural modifications or the introduction of additives to suppress or intercept that pathway.
This reasoning process is also supported by computational tools, including quantum chemical modeling and kinetic analysis, which are used to assess relative energetics, barrier heights, and selectivity trends under different conditions. Action then translates these hypotheses into embodied experimental execution. Actions are realized through automated workstations or mobile robotic chemists that execute targeted reaction campaigns, experimentally validating mechanistic hypotheses and suppressing undesired pathways. Through such embodied intervention, mechanism-aware exploration can be sustained across extended experimental cycles.

5 Challenges and Design Considerations

In this section, we identify challenges that constrain the stability, reliability, and safety of long-horizon PLAD loops, and outline corresponding design responses that are necessary to keep such systems operational, cumulative, and trustworthy (Figure 4).

5.1 Reasoning over Scientific Data

Reasoning over scientific data is fundamental to bridging the gap between Perception and Language. In experiments, perceptual inputs typically manifest as complex, noisy signals, such as liquid chromatography–mass spectrometry (LC–MS) spectra, nuclear magnetic resonance (NMR) and infrared (IR) spectra, time-resolved reaction kinetic profiles, microscopy images, and instrument status logs. Interpretation of these data is deeply contingent on domain-specific knowledge and contextual experimental details. Consider LC–MS data as an illustrative example: a spectrum is not a simple “product fingerprint” but rather the superposition of multiple physicochemical processes, including variations in ionization efficiency, matrix effects, diverse fragmentation pathways, co-elution of isomers, and dynamic changes in signal-to-noise ratio over time.
Consequently, the appearance, disappearance, or intensity shift of a peak does not necessarily correlate with reaction progress or the formation of a target compound; accurate interpretation requires integrating retention time, isotopic distribution patterns, fragment ion signatures, and experimental conditions into a coherent assessment. Similarly, scientific instruments characterize reactions through process signals and operational states. Parameters such as temperature, pressure, and flow rate, along with sensor readings or visual cues (e.g., phase transitions or color evolution captured in images), are routinely used to assess whether a reaction has stalled or deviated from its intended trajectory. Such judgments, however, also rely on a deep understanding of the underlying reaction mechanisms and experimental protocols.

Addressing this challenge requires coordinated advances in model design and tool-assisted reasoning. At the architectural level, large language models must be adapted to scientific environments so as to accommodate the structural or temporal properties of experimental data. This enables reasoning to operate directly over instrument-generated representations, rather than relying solely on linguistic descriptions. Such science-adapted language models are often described as scientific large language models (Sci-LLMs) 11. Even with these adaptations, it is difficult for LLMs to natively cover all dimensions of scientific data analysis. Reliable interpretation also depends on tool invocation, in which models work in concert with specialized computational tools to execute precise analytical procedures while maintaining control over higher-level scientific inference.

Both model-based and tool-assisted reasoning rely critically on appropriate training paradigms. Agentic reinforcement learning has been shown to be effective in training slow-thinking and contextual tool use, enabling agents to invoke, interpret, and integrate tool outputs within sustained reasoning and planning processes. Together, these design and training strategies support the analysis of raw experimental signals within long-horizon autonomous discovery loops.

[Figure 4 panels, each pairing a Challenge with a Resolution: a. Reasoning over Scientific Data; b. Embodied Execution Reliability; c. Long-Horizon Knowledge Accumulation; d. Protocolization of Scientific Infrastructure; e. Safety and Risk Governance.]

Figure 4. Challenges and design resolutions for sustaining long-horizon PLAD loops. (a) Reasoning over scientific data: Heterogeneous, noisy instrument signals require mechanism-aware interpretation; science-adapted LLMs, modality-aware encoders, and tool-grounded analysis support reliable reasoning. (b) Embodied execution reliability: Robust execution across diverse robotic embodiments requires large-scale simulation, trajectory learning, and sim-to-real transfer. (c) Long-horizon knowledge accumulation: Knowledge graphs convert fragmented experimental records into cumulative scientific insight across cycles. (d) Protocolization of scientific infrastructure: Agent-interpretable action–observation protocols standardize actions, observations, and failure states, enabling composable closed-loop workflows. (e) Safety and risk governance: Trustworthy long-horizon autonomy requires integrated safety gating, model-based risk assessment, and knowledge-based safety boundaries.

5.2 Embodied Execution Reliability

The core challenge of embodied execution in scientific scenarios lies in execution reliability: whether experimental actions can be executed correctly, consistently, and repeatedly in complex laboratory environments under strict safety constraints. Scientific experimentation may not be carried out by a single, uniform robotic form. Instead, embodied execution spans a diverse set of physical carriers, including stationary or mobile manipulators and humanoid robots.
The need to ensure reliable execution across such heterogeneous embodiments compounds the difficulty of autonomy in scientific environments. Beyond the diversity of embodiments, scientific experiments themselves demand a wide range of precise and highly specialized skills, such as liquid dispensing, powder weighing, apparatus grasping, sample transfer, and instrument interfacing. More importantly, real experiments often involve hazardous chemicals, high temperatures, high pressures, or biosafety risks, making it difficult to scale up learning approaches that rely on physical trial and error.

To address these challenges, sim-to-real approaches based on digital twin environments play a central role 51. Digital twins enable systematic modeling not only of laboratory layouts and instrument geometries, but also of the interaction dynamics specific to different embodiments and experimental mechanisms. In scientific scenarios, the value of a digital twin depends not only on simulation accuracy in terms of geometry or motion, but also on the accurate depiction of experimental mechanisms. For example, by modeling heat transfer processes, temperature changes in the virtual environment can accurately reflect thermal behaviors in physical experiments, ensuring that execution strategies developed in the simulation environment remain valid in real experiments 50. Leveraging simulation environments for large-scale, low-cost training and trial and error, combined with high-quality trajectory data generated from human demonstrations and robot executions, can gradually narrow the gap between virtual and real worlds, enabling smooth migration from simulation training to real-world deployment.

5.3 Long-Horizon Knowledge Accumulation

The essence of long-horizon autonomy lies in the sustained bidirectional closure between cognition and execution across multiple experimental cycles.
Scientific discovery is not a collection of isolated experiments, but a process of cumulative knowledge construction, revision, and extension. First, long-horizon autonomy imposes stringent requirements on memory management 65. Agents must continuously record, organize, and revisit historical hypotheses, experimental designs, and observations across extended timescales, such that prior experience can be reliably carried forward into future decision-making. Such continuity cannot be reliably supported by language-model context windows or unstructured experimental logs alone. Second, long-horizon discovery requires that experimental outcomes be systematically analyzed and internalized as evolving scientific knowledge. Newly generated results should not remain as transient observations or isolated performance metrics; rather, they must be integrated into the agent’s internal state. This integration ensures that past successes, failures, and anomalies exert lasting influence, thereby enabling cumulative rather than repetitive discovery.

A central strategy for addressing these challenges is the introduction of knowledge graphs as structured representational skeletons 66. At the memory level, they transform fragmented experimental records, hypotheses, and conclusions into structured representations that support retrieval, comparison, and reasoning across temporal scales. At the discovery level, newly identified scientific regularities, causal relationships, or anomalous behaviors can be incorporated as new nodes or relationships, allowing scientific understanding to be continuously expanded and refined.

Overall, long-horizon knowledge accumulation is a systemic requirement for the stability and effectiveness of the entire closed loop.
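
As a rough illustration of the knowledge-graph memory described above, the Python sketch below stores each experiment as edges linking it to the hypothesis it tested and to its outcome, so that successes, failures, and anomalies all remain queryable in later cycles. The class name `ExperimentMemory`, its methods, and the relation labels (`supported_by`, `contradicted_by`, `flagged_by`) are hypothetical choices made for this example; the paper does not prescribe a schema, and a real system would likely sit on a dedicated graph store.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

# Assumed mapping from an experimental outcome to the relation stored in the graph.
OUTCOME_RELATION = {
    "success": "supported_by",
    "failure": "contradicted_by",
    "anomaly": "flagged_by",
}


class ExperimentMemory:
    """Tiny in-memory knowledge graph of experiments and hypotheses."""

    def __init__(self) -> None:
        # head node -> set of (relation, tail node)
        self._edges: Dict[str, Set[Tuple[str, str]]] = defaultdict(set)

    def add(self, head: str, relation: str, tail: str) -> None:
        """Insert one directed, labeled edge."""
        self._edges[head].add((relation, tail))

    def record(self, experiment: str, hypothesis: str, outcome: str) -> None:
        """Internalize one experiment as edges instead of a free-text log line."""
        if outcome not in OUTCOME_RELATION:
            raise ValueError(f"unknown outcome: {outcome}")
        self.add(experiment, "tested", hypothesis)
        self.add(experiment, "had_outcome", outcome)
        self.add(hypothesis, OUTCOME_RELATION[outcome], experiment)

    def evidence(self, hypothesis: str) -> List[Tuple[str, str]]:
        """Return all (relation, experiment) pairs bearing on a hypothesis."""
        return sorted(self._edges.get(hypothesis, set()))


if __name__ == "__main__":
    memory = ExperimentMemory()
    memory.record("exp_1", "H1", "success")
    memory.record("exp_2", "H1", "failure")
    memory.record("exp_3", "H1", "anomaly")
    for relation, experiment in memory.evidence("H1"):
        print(relation, experiment)
```

Because failures and anomalies are stored with the same status as successes, a planner querying `evidence` sees the full record for a hypothesis rather than only its most recent result.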
Only when scientific knowledge can be persistently accumulated, structured, and revised across experimental cycles can embodied agents move beyond short-term optimization and genuinely assume responsibility for long-horizon autonomous scientific discovery.

5.4 Protocolization of Scientific Infrastructure

In most laboratories, experimental devices, sensing instruments, and execution modules operate as isolated systems. Scientific instruments extend the perceptual boundary of discovery by rendering processes observable; their states and outputs need to be acquired continuously to support reasoning. In practice, however, measurements, device states, and operational logs are recorded locally or exposed only as low-level signals. As a result, perceptual information cannot flow continuously into the scientific brain, and reasoning outcomes cannot be reliably grounded in the evolving experimental state. This challenge is further amplified in complex experimental settings that involve multi-step procedures and coordinated embodied action. Executing such workflows requires agents to orchestrate heterogeneous robots and manipulators. Without a unified representation of actions and system states, high-level experimental plans cannot be systematically decomposed into executable embodied behaviors. Together, these factors create a structural disconnection between perception, reasoning, and action, constituting a fundamental bottleneck for closed-loop autonomy.

Overcoming this bottleneck requires a principled reorganization of how experimental components are represented and interfaced. Protocolized infrastructure addresses this challenge by defining a unified, agent-interpretable abstraction over distributed experimental components 67,68.
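
One way to picture such an agent-interpretable abstraction is the Python sketch below, which gives actions, observations, and failure states a single typed shape that any instrument wrapper can implement. This is an assumed illustration only: the `Action`, `Observation`, `Status`, and `Instrument` names and the `MockHeater` device with its 150 °C limit are invented for this example and do not reproduce the schema of SCP or any other published protocol.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Any, Dict, Protocol


class Status(Enum):
    """Explicit outcome states, so failures are data rather than silent errors."""
    OK = "ok"
    FAILED = "failed"
    OUT_OF_RANGE = "out_of_range"


@dataclass(frozen=True)
class Action:
    instrument: str
    name: str
    params: Dict[str, Any] = field(default_factory=dict)


@dataclass(frozen=True)
class Observation:
    instrument: str
    status: Status
    data: Dict[str, Any] = field(default_factory=dict)


class Instrument(Protocol):
    """Anything that turns a standardized Action into a structured Observation."""
    def execute(self, action: Action) -> Observation: ...


class MockHeater:
    """Hypothetical device wrapper used only to exercise the interface."""
    MAX_CELSIUS = 150.0  # assumed hardware limit for this toy example

    def execute(self, action: Action) -> Observation:
        if action.name != "set_temperature":
            return Observation("heater", Status.FAILED, {"reason": "unknown action"})
        target = float(action.params["celsius"])
        if target > self.MAX_CELSIUS:
            return Observation("heater", Status.OUT_OF_RANGE, {"limit_c": self.MAX_CELSIUS})
        return Observation("heater", Status.OK, {"celsius": target})


def run(instrument: Instrument, action: Action) -> Observation:
    """Agents call instruments only through this uniform entry point."""
    return instrument.execute(action)


if __name__ == "__main__":
    heater = MockHeater()
    print(run(heater, Action("heater", "set_temperature", {"celsius": 80})))
    print(run(heater, Action("heater", "set_temperature", {"celsius": 200})))
```

Returning an `Observation` with an explicit `Status` in every branch, instead of raising device-specific exceptions, is what lets an agent compare and compose results across otherwise unrelated instruments.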
While networked connectivity enables heterogeneous instruments, sensors, and execution systems to expose their states and outputs, protocolization lifts raw connectivity into agent-operable capability. For example, the Science Context Protocol (SCP) 67 offers a standardized way to expose and orchestrate scientific resources, including tools, models, datasets, and physical instruments. By standardizing how experimental actions, perceptual observations, and failure or exception states are represented, protocols enable agents to interpret, compare, and compose interactions across instruments and experimental contexts. This allows actions and observations to function as reusable, verifiable primitives within the PLAD loop, supporting traceability, composability, and cumulative discovery across long-horizon cycles.

5.5 Safety and Risk Governance

Safety constitutes a fundamental challenge for long-horizon autonomous scientific exploration. A defining feature of PLAD is that experimental actions are embodied and irreversible. Physical interventions permanently alter materials, instruments, and environmental states, and erroneous actions cannot be rolled back the way failed computations can. Risk is further amplified by the iterative nature of PLAD, which creates the potential for runaway autonomy that progressively relaxes implicit safety margins. These factors substantially amplify safety hazards during experimentation, including the execution of unsafe procedures or the generation of hazardous outcomes. Examples include operating under extreme temperatures or pressures, employing toxic reagents outside validated regimes, or producing products that pose chemical, biological, or environmental risks beyond the experimental boundary.

Safety governance in PLAD relies on a deliberate complementarity between knowledge-driven constraints and model-based risk assessment.
Explicit safety knowledge can be derived from laboratory regulations, instrument specifications, hazard databases, and experimental manuals. It defines operational boundaries that delimit where autonomous exploration must not go. These constraints encode known hazards and non-negotiable limits, ensuring that hypothesis generation and experimental planning remain within validated and auditable regimes. However, long-horizon autonomous discovery routinely encounters risks that cannot be exhaustively specified in advance. As PLAD iterates, hazards may emerge from contextual interactions, cumulative deviations, or gradual extrapolation toward extreme conditions. Model-based safety supervision is therefore required to assess such context-dependent and emergent risks. Safety-aware guard models evaluate candidate plans within their historical and experimental context, estimating whether sequences of otherwise permissible actions collectively approach unsafe regimes.

6 Conclusion

Embodied Science reframes discovery as a long-horizon closed-loop process in which reasoning is continuously grounded, corrected, and enriched through physical interaction with the world. This perspective highlights a structural limitation in today’s AI4S landscape: scaling language reasoning improves cognitive breadth, and advancing laboratory automation improves throughput, but neither alone satisfies the core requirement of autonomous discovery, namely the ability to iteratively generate, test, falsify, and revise hypotheses over extended horizons. Without embodiment, reasoning risks becoming self-referential; without scientific cognition, execution risks degenerating into blind optimization. PLAD provides an organizing principle for overcoming this divide.
By integrating Perception, Language, Action, and Discovery into a coupled system, PLAD shifts autonomy from short-term performance to cumulative scientific understanding, learning not only from success but also from failure, anomaly, and uncertainty. Realizing this vision will require coordinated advances across foundation models, instrument-aware perception, protocol compilation and control, scientific infrastructure, evaluation standards, and safety governance. The central question is no longer whether AI can assist science, but whether scientific discovery can be engineered as an embodied, long-horizon process that remains trustworthy as autonomy scales.

References

1. Wang, H. et al. Scientific discovery in the age of artificial intelligence. Nature 620, 47–60 (2023).
2. Jumper, J. et al. Highly accurate protein structure prediction with AlphaFold. Nature 596, 583–589 (2021).
3. Baek, M. et al. Accurate prediction of protein structures and interactions using a three-track neural network. Science 373, 871–876 (2021).
4. Wu, Z. et al. MoleculeNet: A benchmark for molecular machine learning. Chem. Sci. 9, 513–530 (2018).
5. Yu, T. et al. Enzyme function prediction using contrastive learning. Science 379, 1358–1363 (2023).
6. Fang, Y. et al. Knowledge graph-enhanced molecular contrastive learning with functional prompt. Nat. Mach. Intell. 5, 542–553 (2023).
7. Krishna, R. et al. Generalized biomolecular modeling and design with RoseTTAFold All-Atom. Science 384, eadl2528 (2024).
8. Zeni, C. et al. A generative model for inorganic materials design. Nature 639, 624–632 (2025).
9. Han, Y. et al. Retrosynthesis prediction with an iterative string editing model. Nat. Commun. 15, 6404 (2024).
10. Wang, X. et al. Multimodal learning with next-token prediction for large multimodal models. Nature 1–7 (2026).
11. Bai, L. et al. Intern-S1: A scientific multimodal foundation model (2025).
12. Zhuang, X. et al. Advancing biomolecular understanding and design following human instructions. Nat. Mach. Intell. 7, 1154–1167 (2025).
13. Xu, W. et al. Probing scientific general intelligence of LLMs with scientist-aligned workflows. arXiv preprint arXiv:2512.16969 (2025).
14. Kuhn, T. S. & Hacking, I. The structure of scientific revolutions, vol. 2 (University of Chicago Press, Chicago, 1970).
15. Popper, K. The logic of scientific discovery (Routledge, 2005).
16. Gao, S. et al. Empowering biomedical discovery with AI agents. Cell 187, 6125–6151 (2024).
17. Wei, J. et al. From AI for science to agentic science: A survey on autonomous scientific discovery. CoRR abs/2508.14111 (2025).
18. Naveed, H. et al. A comprehensive overview of large language models. ACM Transactions on Intell. Syst. Technol. 16, 1–72 (2025).
19. Achiam, J. et al. GPT-4 technical report. arXiv preprint arXiv:2303.08774 (2023).
20. Li, B., Saini, A. K., Hernandez, J. G. & Moore, J. H. Agentic AI and the rise of in silico team science in biomedical research. Nat. Biotechnol. 1–15 (2026).
21. Tom, G. et al. Self-driving laboratories for chemistry and materials science. Chem. Rev. 124, 9633–9732 (2024).
22. Canty, R. B. et al. Science acceleration and accessibility with self-driving labs. Nat. Commun. 16, 3856 (2025).
23. Hao, Q., Xu, F., Li, Y. & Evans, J. Artificial intelligence tools expand scientists’ impact but contract science’s focus. Nature 1–7 (2026).
24. Yao, S. et al. ReAct: Synergizing reasoning and acting in language models. In ICLR (OpenReview.net, 2023).
25. Fung, P. et al. Embodied AI agents: Modeling the world. CoRR abs/2506.22355 (2025).
26. Hey, T., Tansley, S., Tolle, K. M. et al. The fourth paradigm: Data-intensive scientific discovery, vol. 1 (Microsoft Research, Redmond, WA, 2009).
27. M. Bran, A. et al. Augmenting large language models with chemistry tools. Nat. Mach. Intell. 6, 525–535 (2024).
28. Huang, K. et al. Biomni: A general-purpose biomedical AI agent. bioRxiv 2025–05 (2025).
29. Ding, K. et al. SciToolAgent: A knowledge-graph-driven scientific agent for multitool integration. Nat. Comput. Sci. 5, 962–972 (2025).
30. Gao, S. et al. Democratizing AI scientists using ToolUniverse. CoRR abs/2509.23426 (2025).
31. Swanson, K., Wu, W., Bulaong, N. L., Pak, J. E. & Zou, J. The virtual lab of AI agents designs new SARS-CoV-2 nanobodies. Nature 646, 716–723 (2025).
32. Ghareeb, A. E. et al. Robin: A multi-agent system for automating scientific discovery. CoRR abs/2505.13400 (2025).
33. Penadés, J. R. et al. AI mirrors experimental science to uncover a mechanism of gene transfer crucial to bacterial evolution. Cell 188, 6654–6665 (2025).
34. Novikov, A. et al. AlphaEvolve: A coding agent for scientific and algorithmic discovery. CoRR abs/2506.13131 (2025).
35. Weng, Y. et al. DeepScientist: Advancing frontier-pushing scientific findings progressively. CoRR abs/2509.26603 (2025).
36. Team, I. et al. InternAgent: When agent becomes the scientist–building closed-loop system from hypothesis to verification. arXiv e-prints arXiv–2505 (2025).
37. Feng, S. et al. InternAgent-1.5: A unified agentic framework for long-horizon autonomous scientific discovery. arXiv preprint arXiv:2602.08990 (2026).
38. Lu, C. et al. The AI scientist: Towards fully automated open-ended scientific discovery. CoRR abs/2408.06292 (2024).
39. Mitchener, L. et al. Kosmos: An AI scientist for autonomous discovery. CoRR abs/2511.02824 (2025).
40. Steiner, S. et al. Organic synthesis in a modular robotic system driven by a chemical programming language. Science 363, eaav2211 (2019).
41. Kuwahara, M. et al. Development of an open-source 3D-printed material synthesis robot FLUID: Hardware and software blueprints for accessible automation in materials science. ACS Appl. Eng. Mater. 3, 978–987 (2025).
42. Szymanski, N. J. et al. An autonomous laboratory for the accelerated synthesis of inorganic materials. Nature 624, 86–91 (2023).
43. Slattery, A. et al. Automated self-optimization, intensification, and scale-up of photocatalysis in flow. Science 383, eadj1817 (2024).
44. Zhang, Z. et al. A multimodal robotic platform for multi-element electrocatalyst discovery. Nature 647, 390–396 (2025).
45. Boiko, D. A., MacKnight, R., Kline, B. & Gomes, G. Autonomous chemical research with large language models. Nature 624, 570–578 (2023).
46. Song, T. et al. A multiagent-driven robotic AI chemist enabling autonomous chemical research on demand. J. Am. Chem. Soc. 147, 12534–12545 (2025).
47. King, R. D. et al. The automation of science. Science 324, 85–89 (2009).
48. Burger, B. et al. A mobile robotic chemist. Nature 583, 237–241 (2020).
49. Dai, T. et al. Autonomous mobile robots for exploratory synthetic chemistry. Nature 635, 890–897 (2024).
50. Darvish, K. et al. Matterix: Toward a digital twin for robotics-assisted chemistry laboratory automation. Nat. Comput. Sci. 1–16 (2025).
51. Zhao, W., Queralta, J. P. & Westerlund, T. Sim-to-real transfer in deep reinforcement learning for robotics: A survey. In 2020 IEEE Symposium Series on Computational Intelligence (SSCI), 737–744 (IEEE, 2020).
52. Xu, Y. et al. Lumi-lab: A foundation model-driven autonomous platform enabling discovery of ionizable lipid designs for mRNA delivery. Cell (2026).
53. Shi, T. et al. Knowledge-driven autonomous materials research via collaborative multi-agent and robotic system. Matter (2026).
54. Zitkovich, B. et al. RT-2: Vision-language-action models transfer web knowledge to robotic control. In 7th Annual Conference on Robot Learning (2023).
55. Wang, P. et al. Qwen2-VL: Enhancing vision-language model’s perception of the world at any resolution. arXiv preprint arXiv:2409.12191 (2024).
56. Guo, D. et al. DeepSeek-R1 incentivizes reasoning in LLMs through reinforcement learning. Nature 645, 633–638 (2025).
57. Ding, K. et al. Bridging data and discovery: A survey on knowledge graphs in AI for science. Natl. Sci. Rev. nwag140 (2026).
58. Meng, Z., Chen, J., Zhuang, X. & Zhang, Q. Integrating knowledge graphs and large language models for advancing scientific research. In 2025 IEEE 45th International Conference on Distributed Computing Systems Workshops (ICDCSW), 393–396 (IEEE, 2025).
59. Agarwal, O., Ge, H., Shakeri, S. & Al-Rfou, R. Knowledge graph based synthetic corpus generation for knowledge-enhanced language model pre-training. In NAACL-HLT, 3554–3565 (Association for Computational Linguistics, 2021).
60. Gao, Y. et al. Retrieval-augmented generation for large language models: A survey. CoRR abs/2312.10997 (2023).
61. Liang, L. et al. KAG: Boosting LLMs in professional domains via knowledge augmented generation. In Companion Proceedings of the ACM on Web Conference 2025, WWW ’25, 334–343 (Association for Computing Machinery, New York, NY, USA, 2025).
62. Lu, J.-M., Pan, J.-Z., Mo, Y.-M. & Fang, Q. Automated intelligent platforms for high-throughput chemical synthesis. Artif. Intell. Chem. 2, 100057 (2024).
63. Orouji, N. et al. Autonomous catalysis research with human–AI–robot collaboration. Nat. Catal. 1–11 (2025).
64. Zhao, H. Biofoundry: NSF iBioFoundry for basic and applied biology. NSF Award. Number 2400058. Dir. for Biol. Sci. 24, 58 (2024).
65. Hu, Y. et al. Memory in the age of AI agents. arXiv preprint arXiv:2512.13564 (2025).
66. Chhikara, P., Khant, D., Aryan, S., Singh, T. & Yadav, D. Mem0: Building production-ready AI agents with scalable long-term memory. arXiv preprint arXiv:2504.19413 (2025).
67. Jiang, Y. et al. SCP: Accelerating discovery with a global web of autonomous scientific agents. arXiv preprint arXiv:2512.24189 (2025).
68. Sim, M. et al.
Chemos 2.0: An orchestration architecture for chemical self-dri ving laboratories. Matter 7 , 2959–2977 (2024). 17/ 17
