FedRG: Unleashing the Representation Geometry for Federated Learning with Noisy Clients

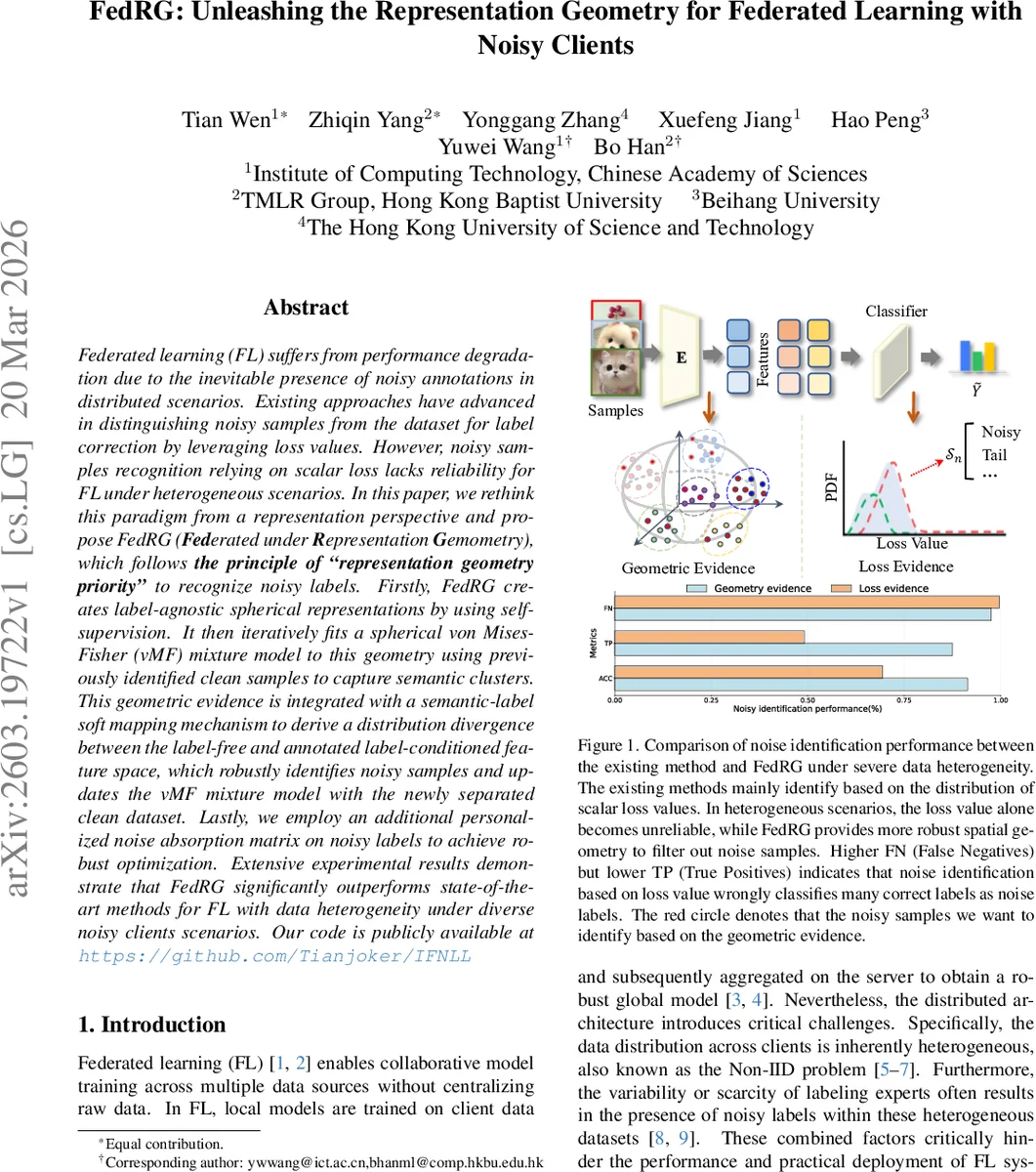

Federated learning (FL) suffers from performance degradation because noisy annotations are inevitable in distributed scenarios. Existing approaches have made progress in separating noisy samples from the dataset for label correction by leveraging loss values. However, recognizing noisy samples from a scalar loss is unreliable for FL under heterogeneous data. In this paper, we rethink this paradigm from a representation perspective and propose FedRG (Federated under Representation Geometry), which follows the principle of “representation geometry priority” to recognize noisy labels. First, FedRG creates label-agnostic spherical representations through self-supervision. It then iteratively fits a spherical von Mises-Fisher (vMF) mixture model to this geometry, using previously identified clean samples, to capture semantic clusters. This geometric evidence is combined with a semantic-label soft mapping mechanism to derive a distribution divergence between the label-free feature space and the feature space conditioned on the annotated labels. The divergence robustly identifies noisy samples, and the newly separated clean set is used to update the vMF mixture model. Lastly, we apply a personalized noise absorption matrix to the noisy labels to achieve robust optimization. Extensive experiments demonstrate that FedRG significantly outperforms state-of-the-art methods for FL with data heterogeneity across diverse noisy-client scenarios.
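The abstract does not say how the personalized noise absorption matrix is parameterized. One common way to realize such a matrix is forward loss correction (Patrini et al., 2017): the model's clean-class posterior is multiplied by a row-stochastic transition matrix before the cross-entropy against the noisy labels is computed. The sketch below assumes that reading, with one free matrix `W` per client turned into a transition matrix by a row-wise softmax; all function names are hypothetical, and this is not FedRG's actual formulation.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    mx = max(xs)
    ex = [math.exp(x - mx) for x in xs]
    s = sum(ex)
    return [e / s for e in ex]


def transition_from_logits(W):
    """Hypothetical per-client parameterization: a row-wise softmax of a free
    matrix W, so T[i][j] ~ P(observed label j | true class i) and every row
    of T stays a probability distribution."""
    return [softmax(row) for row in W]


def forward_corrected_ce(logits, noisy_labels, T):
    """Mean cross-entropy against the noisy labels after mapping the model's
    clean-class posterior through T (forward loss correction)."""
    total = 0.0
    for x, y in zip(logits, noisy_labels):
        p = softmax(x)  # estimated clean-class posterior
        p_noisy = [sum(p[i] * T[i][j] for i in range(len(p)))
                   for j in range(len(T[0]))]  # implied noisy-label distribution
        total -= math.log(p_noisy[y] + 1e-12)
    return total / len(logits)


# Each client would hold its own W; a strongly diagonal start means "mostly clean".
T_client = transition_from_logits(
    [[5.0 if i == j else 0.0 for j in range(3)] for i in range(3)])
loss = forward_corrected_ce([[2.0, 0.5, -1.0], [0.1, 0.2, 3.0]], [0, 2], T_client)
```

With `T` equal to the identity, the corrected loss reduces to ordinary cross-entropy; learning an off-diagonal mass per client is what lets the matrix absorb that client's systematic label flips.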

💡 Research Summary

Federated learning (FL) suffers from two intertwined challenges in real‑world deployments: noisy labels and data heterogeneity (Non‑IID). Existing FL methods that aim to mitigate label noise typically rely on the “small‑loss” heuristic—identifying clean samples as those with low training loss and noisy samples as those with high loss. While this works on balanced, IID data, it collapses under heterogeneous settings where tail‑class samples naturally incur higher loss regardless of label correctness. Consequently, loss‑based detectors produce many false positives (clean samples mislabeled as noisy) and false negatives (noisy samples mistakenly kept).
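The failure mode can be made concrete with a minimal sketch of small-loss selection that keeps the `n_clean` lowest-loss samples. The function name and the toy losses are invented for illustration; they describe a case where correctly labeled tail-class samples incur a higher loss than a mislabeled head-class sample.

```python
def small_loss_split(losses, n_clean):
    """Small-loss heuristic: mark the n_clean samples with the lowest training
    loss as clean (True) and all others as noisy (False)."""
    order = sorted(range(len(losses)), key=lambda i: losses[i])  # ascending loss
    keep = set(order[:n_clean])
    return [i in keep for i in range(len(losses))]


# Invented toy losses. Indices 0-2: clean head-class samples; index 3: a
# mislabeled head-class sample; indices 4-5: correctly labeled tail-class
# samples that the model still fits poorly.
losses = [0.10, 0.20, 0.15, 1.8, 2.1, 2.3]
truth = [True, True, True, False, True, True]

print(small_loss_split(losses, n_clean=5))
# [True, True, True, True, True, False]
print(any(small_loss_split(losses, k) == truth for k in range(len(losses) + 1)))
# False
```

Keeping five samples retains the mislabeled one (a false negative) and rejects a clean tail-class sample (a false positive). The last line checks that no choice of `n_clean` recovers the true clean set, because the ranking by loss alone mixes the two groups.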

The paper “FedRG: Unleashing the Representation Geometry for Federated Learning with Noisy Clients” introduces a fundamentally different principle: representation geometry priority. Instead of using scalar loss values, the method assesses the intrinsic geometry of label‑free feature representations and compares it with the geometry induced by the (potentially noisy) annotated labels. If the two geometries align, the sample is likely clean; if they diverge, the sample is flagged as noisy.
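The alignment test can be sketched as follows. This is a hypothetical reading of the abstract's "semantic-label soft mapping" and "distribution divergence": we assume each sample has a posterior over label-free clusters, estimate a soft cluster-to-class map from samples believed clean, and score each annotated label by the KL divergence between its one-hot vector and the class distribution implied by the geometry. The function names, the Laplace smoothing, the choice of KL, and the threshold `tau` are all our assumptions; the paper's exact divergence and decision rule may differ.

```python
import math


def estimate_mapping(resp, labels, n_classes, smoothing=1.0):
    """Soft cluster-to-class map M[k][c], estimated from samples believed clean:
    add each sample's cluster posterior to its label's column, apply Laplace
    smoothing, then normalize every row to sum to 1."""
    K = len(resp[0])
    counts = [[smoothing] * n_classes for _ in range(K)]
    for r, y in zip(resp, labels):
        for k in range(K):
            counts[k][y] += r[k]
    mapping = []
    for row in counts:
        s = sum(row)
        mapping.append([c / s for c in row])
    return mapping


def label_divergence(r, mapping, y, eps=1e-12):
    """KL(onehot(y) || q), where q is the class distribution implied by the
    label-free geometry; for a one-hot target this equals -log q[y]."""
    q = [sum(r[k] * mapping[k][c] for k in range(len(r)))
         for c in range(len(mapping[0]))]
    return -math.log(q[y] + eps)


def flag_noisy(resp, labels, mapping, tau):
    """True where geometry and annotation disagree by more than tau nats."""
    return [label_divergence(r, mapping, y) > tau for r, y in zip(resp, labels)]


mapping = [[0.9, 0.1], [0.2, 0.8]]             # cluster 0 -> mostly class 0, etc.
resp = [[0.95, 0.05], [0.1, 0.9], [0.9, 0.1]]  # posteriors over 2 clusters
labels = [0, 1, 1]                             # third label contradicts its cluster
print(flag_noisy(resp, labels, mapping, tau=1.0))  # [False, False, True]
```

The third sample sits in cluster 0, which maps mostly to class 0, so its annotation of class 1 receives a divergence of about 1.77 nats and is flagged; the loss value of the sample plays no role.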

Key components of FedRG

- Label‑free spherical representation via self‑supervision

Each client first trains a feature encoder with a contrastive self‑supervised objective (SimCLR). Two stochastic augmentations of the same image are encoded, normalized to unit length, and optimized with the NT‑Xent (InfoNCE) loss. The normalization places the representations on the unit hypersphere $S^{d-1}$, and the contrastive objective arranges them so that semantically similar instances cluster around a few directions, while out‑of‑distribution or heavily corrupted instances spread uniformly. After a few communication rounds (denoted $T_1$), a well‑conditioned spherical manifold is obtained without any label information.

- Probabilistic modeling on the hypersphere

On the learned hypersphere, FedRG fits a mixture of von Mises‑Fisher (vMF) distributions plus a uniform background component.
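For concreteness, here is a minimal pure-Python sketch of the NT-Xent loss that the first component relies on, computing only the forward value for a batch of paired embeddings. It omits the encoder, the augmentations, and gradients; the function names and the temperature of 0.5 are illustrative choices, not values taken from the paper.

```python
import math


def l2_normalize(v):
    """Project a vector onto the unit hypersphere."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v]


def nt_xent(z1, z2, temperature=0.5):
    """NT-Xent loss for N positive pairs (z1[i], z2[i]), e.g. the embeddings of
    two augmented views of image i. All 2N embeddings are L2-normalized; for
    each anchor, the remaining 2N - 2 embeddings serve as negatives."""
    z = [l2_normalize(v) for v in list(z1) + list(z2)]
    n2 = len(z)
    n = n2 // 2
    total = 0.0
    for i in range(n2):
        pos = (i + n) % n2  # index of the other view of the same image
        sims = [sum(a * b for a, b in zip(z[i], z[j])) / temperature
                for j in range(n2)]  # cosine similarity / temperature
        others = [s for j, s in enumerate(sims) if j != i]  # exclude self-pair
        mx = max(others)
        log_denom = mx + math.log(sum(math.exp(s - mx) for s in others))
        total += log_denom - sims[pos]  # -log softmax probability of the positive
    return total / n2


views_a = [[1.0, 0.0], [0.0, 1.0]]
views_b = [[2.0, 0.1], [0.1, 2.0]]  # second views, roughly aligned with the first
print(nt_xent(views_a, views_b) < nt_xent(views_a, views_b[::-1]))  # True
```

Swapping the second views breaks the positive pairs, so the loss rises; minimizing it is what pulls the two views of each image together on the sphere.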
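A standard way to write such a density, using our own symbols rather than the paper's (mixing weights $\pi_0,\dots,\pi_K$, mean directions $\boldsymbol\mu_k$, concentrations $\kappa_k$), is

$$p(\mathbf z)=\frac{\pi_0}{A_d}+\sum_{k=1}^{K}\pi_k\,C_d(\kappa_k)\,e^{\kappa_k\boldsymbol\mu_k^{\top}\mathbf z},\qquad C_d(\kappa)=\frac{\kappa^{d/2-1}}{(2\pi)^{d/2}I_{d/2-1}(\kappa)},\qquad A_d=\frac{2\pi^{d/2}}{\Gamma(d/2)},$$

where $I_\nu$ is the modified Bessel function of the first kind and $A_d$ is the surface area of $S^{d-1}$. The pure-Python sketch below computes the E-step responsibilities for this density and a one-component M-step. The function names, the series cutoff, and the use of the Banerjee et al. (2005) concentration approximation are our assumptions; restricting the fit to samples currently believed clean is left to the caller.

```python
import math


def log_bessel_iv(nu, x, terms=500):
    """log I_nu(x) for x > 0 from the power series
    I_nu(x) = sum_m (x/2)^(2m+nu) / (m! Gamma(m+nu+1)), summed in log space.
    Adequate for moderate kappa; a production fit would use a library routine."""
    logs = [(2 * m + nu) * math.log(x / 2) - math.lgamma(m + 1) - math.lgamma(m + nu + 1)
            for m in range(terms)]
    mx = max(logs)
    return mx + math.log(sum(math.exp(v - mx) for v in logs))


def log_vmf(z, mu, kappa):
    """log vMF density on S^{d-1} for unit vectors z, mu and kappa > 0."""
    d = len(z)
    nu = d / 2 - 1
    log_c = nu * math.log(kappa) - (d / 2) * math.log(2 * math.pi) - log_bessel_iv(nu, kappa)
    return log_c + kappa * sum(a * b for a, b in zip(z, mu))


def log_uniform(d):
    """log of the uniform density 1 / A_d, with A_d = 2 pi^{d/2} / Gamma(d/2)."""
    return math.lgamma(d / 2) - math.log(2) - (d / 2) * math.log(math.pi)


def responsibilities(z, weights, mus, kappas):
    """E-step for one sample: posterior over [background, vMF_1, ..., vMF_K].
    weights[0] is the background weight pi_0."""
    logp = [math.log(weights[0]) + log_uniform(len(z))]
    logp += [math.log(w) + log_vmf(z, m, k) for w, m, k in zip(weights[1:], mus, kappas)]
    mx = max(logp)
    ex = [math.exp(v - mx) for v in logp]
    s = sum(ex)
    return [e / s for e in ex]


def m_step(zs, resp, k):
    """Refit vMF component k (k >= 1) from unit vectors zs, weighted by their
    responsibilities, using the Banerjee et al. (2005) approximation
    kappa ~ rbar (d - rbar^2) / (1 - rbar^2). Assumes rbar < 1."""
    d = len(zs[0])
    s = [sum(r[k] * z[j] for r, z in zip(resp, zs)) for j in range(d)]
    norm = math.sqrt(sum(v * v for v in s))
    rbar = norm / sum(r[k] for r in resp)
    kappa = rbar * (d - rbar ** 2) / (1 - rbar ** 2)
    return [v / norm for v in s], kappa


# Toy unit vectors on S^2 standing in for embeddings of samples believed clean.
clean = [[0.0, 0.0, 1.0], [0.6, 0.0, 0.8], [-0.6, 0.0, 0.8]]
resp = [responsibilities(z, [0.1, 0.9], [[0.0, 0.0, 1.0]], [5.0]) for z in clean]
mu, kappa = m_step(clean, resp, k=1)
```

The background term gives scattered, likely corrupted embeddings somewhere to go, so they do not drag the mean directions of the semantic clusters; alternating the two steps over the current clean set gives the iterative fit described above.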
