Stochastic Averaging and Statistical Inference of Glycolytic Pathway

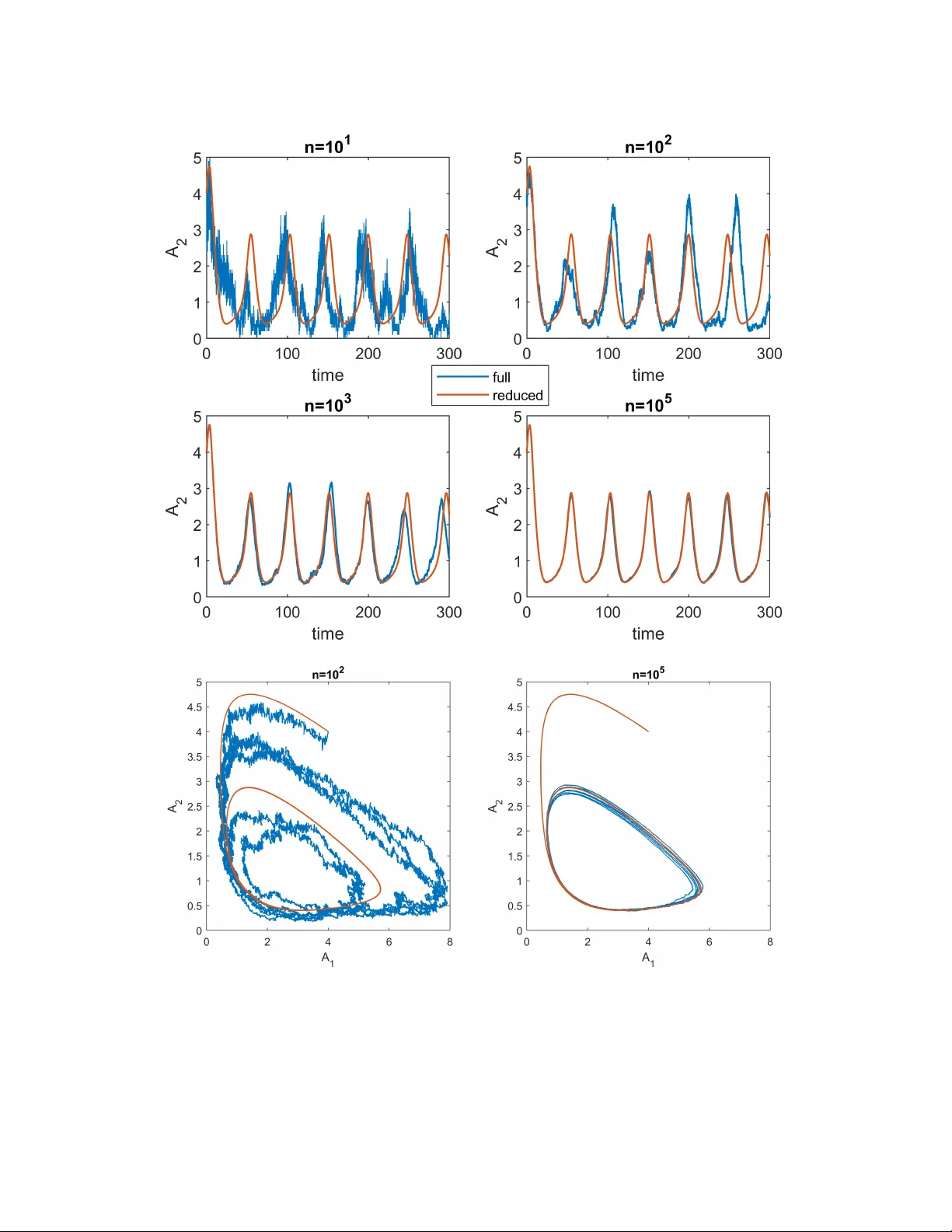

Many biological processes exhibit oscillatory behavior. Among these, glycolytic oscillations have been extensively studied due to their well-characterized biochemical reaction networks. However, the complexity of these networks necessitates low-dimen…

Authors: Arnab Ganguly, Hye-Won Kang