Approximate virtual quantum broadcasting

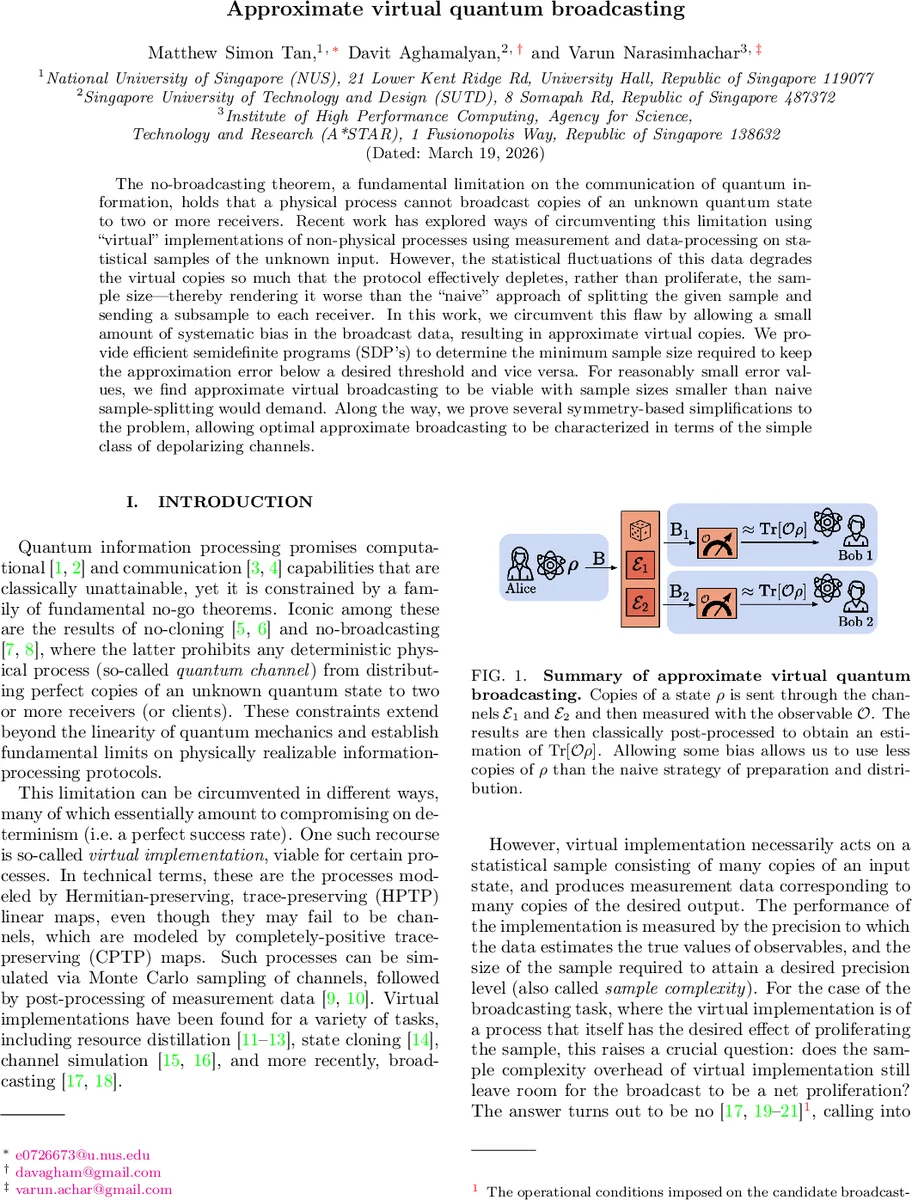

The no-broadcasting theorem, a fundamental limitation on the communication of quantum information, holds that a physical process cannot broadcast copies of an unknown quantum state to two or more receivers. Recent work has explored ways of circumventing this limitation through “virtual” implementations of non-physical processes, which rely on measurement and data-processing on statistical samples of the unknown input. However, the statistical fluctuations of this data degrade the virtual copies so much that the protocol effectively depletes, rather than proliferates, the sample size, thereby rendering it worse than the “naive” approach of splitting the given sample and sending a subsample to each receiver. In this work, we circumvent this flaw by allowing a small amount of systematic bias in the broadcast data, resulting in approximate virtual copies. We provide efficient semidefinite programs (SDPs) to determine the minimum sample size required to keep the approximation error below a desired threshold and vice versa. For reasonably small error values, we find approximate virtual broadcasting to be viable with sample sizes smaller than naive sample-splitting would demand. Along the way, we prove several symmetry-based simplifications to the problem, allowing optimal approximate broadcasting to be characterized in terms of the simple class of depolarizing channels.

💡 Research Summary

The paper tackles the well‑known no‑broadcasting theorem, which forbids any physical quantum channel from producing perfect copies of an unknown quantum state for multiple receivers. Recent work has shown that “virtual” implementations—realizing non‑physical Hermitian‑preserving, trace‑preserving (HPTP) maps by Monte‑Carlo sampling of physical channels, measurement, and classical post‑processing—can simulate such forbidden processes. However, the statistical fluctuations inherent in the sampling dramatically increase the required sample size, so that virtual broadcasting actually consumes more copies of the input state than the naïve strategy of simply splitting the sample and sending each part to a receiver.

The authors propose a remedy: allow a small systematic bias in the broadcasted data, thereby defining approximate virtual broadcasting. An (a,b)‑approximate broadcasting map is an HPTP map whose two marginal channels deviate from the identity by at most a and b (in the diamond norm, i.e., ½‖·‖⋄ ≤ a, b). When a = b = δ the map is called a δ‑approximate broadcaster.

Two semidefinite programs (SDPs) are introduced. Both rest on decomposing the virtual map as E = x E₁ − y E₂ with E₁, E₂ CPTP and x, y ≥ 0; the sample‑complexity overhead S(a,b) is the squared scaling factor (x + y)² of the best such decomposition. The first SDP takes error thresholds (a,b) as input and minimizes x + y (equivalently, the overhead) subject to the diamond‑norm constraints on the marginals and the decomposition constraints. The second SDP fixes a budget of samples (i.e., a bound on x + y) and minimizes the achievable error δ.
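To make the role of x + y concrete, the following Python sketch computes the overhead (x + y)² for a given decomposition E = x E₁ − y E₂ and turns it into a sample count. The sample count uses the standard Hoeffding inequality, which is an assumption of this sketch and not taken from the paper. The function names are hypothetical, and the observable is assumed to have outcomes in [−1, 1]. The check x − y = 1 follows from trace preservation of E, E₁ and E₂.

```python
import math


def overhead(x: float, y: float) -> float:
    """Overhead (x + y)^2 of a decomposition E = x*E1 - y*E2 (E1, E2 CPTP)."""
    if x < 0 or y < 0:
        raise ValueError("x and y must be non-negative")
    # Taking the trace of E(rho) = x*E1(rho) - y*E2(rho) gives 1 = x - y.
    if not math.isclose(x - y, 1.0):
        raise ValueError("trace preservation requires x - y = 1")
    return (x + y) ** 2


def hoeffding_samples(x: float, y: float, eps: float, fail_prob: float) -> int:
    """Samples sufficient to estimate an expectation value to accuracy eps.

    Assumes single-shot outcomes in [-1, 1], rescaled by +/-(x + y) in
    post-processing, so each estimate lies in [-(x + y), x + y].
    Hoeffding then gives n >= 2 (x + y)^2 ln(2 / fail_prob) / eps^2.
    """
    return math.ceil(2 * overhead(x, y) * math.log(2 / fail_prob) / eps ** 2)


def beats_naive_split(x: float, y: float) -> bool:
    """True if the overhead is below 2, i.e. sqrt(S) < sqrt(2)."""
    return overhead(x, y) < 2.0


if __name__ == "__main__":
    # Illustrative values only; they are not results from the paper.
    print(overhead(1.2, 0.2), beats_naive_split(1.2, 0.2))
    print(hoeffding_samples(1.2, 0.2, eps=0.1, fail_prob=0.05))
```

In this bound the required number of samples grows linearly in (x + y)², which is why minimizing x + y is the natural SDP objective.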

A key theoretical insight is that the optimal map can be taken to be unitarily covariant without loss of generality. By twirling the map over the full unitary group, the authors show that each marginal can be reduced, via Schur’s lemma, to a depolarizing channel Λₜ(ρ) = t ρ + (1 − t) I/d with a single parameter t. Consequently, the high‑dimensional SDP collapses to a one‑parameter optimization over t, dramatically simplifying both analytical and numerical treatment.
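As a rough illustration of the depolarizing family, the NumPy sketch below builds Λₜ, its normalized Choi state, a complete-positivity check, and the trace distance between the Choi states of Λₜ and the identity channel. The function names are hypothetical. For unitarily covariant maps such as this one, the Choi-state trace distance is expected to coincide with the diamond-norm distance used for the marginals; the code assumes that equivalence and does not verify it. The factor Tr(ρ) is included so the map is linear; it equals 1 for normalized states.

```python
import numpy as np


def depolarize(rho: np.ndarray, t: float) -> np.ndarray:
    """Apply Lambda_t(rho) = t*rho + (1 - t)*Tr(rho)*I/d."""
    d = rho.shape[0]
    return t * rho + (1 - t) * np.trace(rho) * np.eye(d) / d


def choi_state(t: float, d: int) -> np.ndarray:
    """Normalized Choi state of Lambda_t: t*|Phi><Phi| + (1 - t)*I/d^2."""
    phi = np.eye(d).reshape(d * d) / np.sqrt(d)  # maximally entangled vector
    return t * np.outer(phi, phi) + (1 - t) * np.eye(d * d) / d ** 2


def is_physical(t: float, d: int, tol: float = 1e-12) -> bool:
    """Lambda_t is completely positive iff its Choi state is positive semidefinite."""
    return bool(np.linalg.eigvalsh(choi_state(t, d)).min() >= -tol)


def choi_distance_to_identity(t: float, d: int) -> float:
    """Half the trace norm of the Choi-state difference between Lambda_t and id."""
    diff = choi_state(t, d) - choi_state(1.0, d)
    return float(0.5 * np.abs(np.linalg.eigvalsh(diff)).sum())


if __name__ == "__main__":
    rho = np.diag([1.0, 0.0])
    print(depolarize(rho, 0.5))                # diag(0.75, 0.25)
    print(is_physical(0.8, 2), is_physical(1.2, 2))
    print(choi_distance_to_identity(0.8, 2))   # (1 - t)(1 - 1/d^2)
```

Values of t above 1 give a Hermitian-preserving, trace-preserving map that is not completely positive, which is the kind of non-physical map a virtual protocol has to simulate by sampling.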

Numerical results for qubits (d = 2) reveal that allowing a modest error of δ ≈ 0.15 (i.e., each marginal channel lies within diamond‑norm distance 0.15 of the identity) yields a sample‑overhead factor √S < √2, that is, S < 2. The approximate virtual broadcaster therefore uses fewer copies of the input state than the naïve split‑and‑send approach and is genuinely sample‑efficient. Analytically, the authors derive a dimension‑dependent error bound δ_max = (2d − 2)/(2d + 1), which approaches ≈ 0.42 for large d. Hence, even for high‑dimensional systems, permitting up to ~42 % error still beats the naïve strategy.

The paper emphasizes that the depolarizing form of the optimal virtual channel is both mathematically convenient and experimentally realistic, since depolarizing noise is a standard model in quantum hardware. The authors discuss several extensions: broadcasting to more than two receivers (1→t), alternative error metrics, incorporation of realistic hardware noise, and the integration of approximate virtual broadcasting with other virtual tasks such as cloning or channel simulation.

In summary, the work demonstrates that approximate virtual broadcasting—by tolerating a controlled systematic bias—can overcome the prohibitive sample overhead that plagued exact virtual broadcasting. The combination of symmetry‑based simplifications, efficient SDP formulations, and concrete error‑vs‑sample trade‑offs provides a practical pathway for distributing quantum information in scenarios where perfect broadcasting is impossible, thereby opening new design possibilities for quantum communication networks and distributed quantum computing architectures.
