SODIUM: From Open Web Data to Queryable Databases

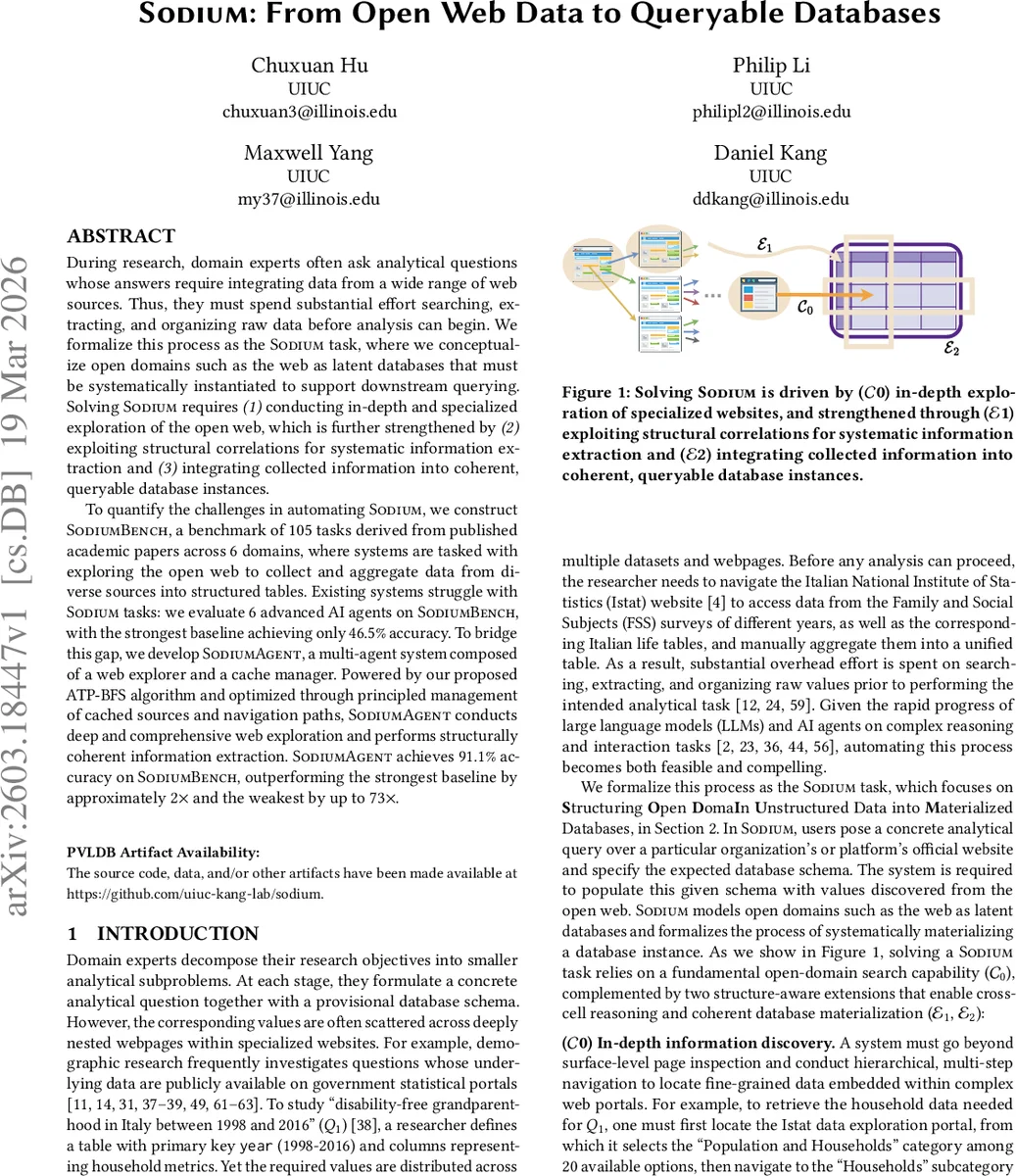

During research, domain experts often ask analytical questions whose answers require integrating data from a wide range of web sources. Thus, they must spend substantial effort searching, extracting, and organizing raw data before analysis can begin. We formalize this process as the SODIUM task, where we conceptualize open domains such as the web as latent databases that must be systematically instantiated to support downstream querying. Solving SODIUM requires (1) conducting in-depth and specialized exploration of the open web, which is further strengthened by (2) exploiting structural correlations for systematic information extraction and (3) integrating collected information into coherent, queryable database instances. To quantify the challenges in automating SODIUM, we construct SODIUM-Bench, a benchmark of 105 tasks derived from published academic papers across 6 domains, where systems are tasked with exploring the open web to collect and aggregate data from diverse sources into structured tables. Existing systems struggle with SODIUM tasks: we evaluate 6 advanced AI agents on SODIUM-Bench, with the strongest baseline achieving only 46.5% accuracy. To bridge this gap, we develop SODIUM-Agent, a multi-agent system composed of a web explorer and a cache manager. Powered by our proposed ATP-BFS algorithm and optimized through principled management of cached sources and navigation paths, SODIUM-Agent conducts deep and comprehensive web exploration and performs structurally coherent information extraction. SODIUM-Agent achieves 91.1% accuracy on SODIUM-Bench, outperforming the strongest baseline by approximately 2 times and the weakest by up to 73 times.

💡 Research Summary

The paper introduces the SODIUM task, a novel formulation that treats the open web as a latent database that must be materialized into a relational table matching a user‑specified schema. Researchers often need to collect fragmented data from multiple web pages before any analysis can begin; SODIUM automates this entire pipeline—search, extraction, normalization, and integration—so that downstream analytical queries can be executed directly on the generated table. To benchmark this problem, the authors construct SODIUM‑Bench, a collection of 105 real‑world analytical queries drawn from 48 published papers across six domains (demographics, sports, finance, economics, food, climate). Each task provides a natural‑language question, a target domain (e.g., a government statistics portal), and a relational schema with primary keys and attributes. The benchmark deliberately selects tasks that require multi‑step navigation, cross‑page structural regularities, and consistent formatting, thereby exposing the limitations of existing AI agents.

Six state‑of‑the‑art agents—including web‑search‑augmented LLMs, RAG‑based systems, and table‑population pipelines—are evaluated on SODIUM‑Bench. The best baseline reaches only 46.5 % cell‑level accuracy, confirming that current methods fail to satisfy three essential components of SODIUM: (C0) deep, domain‑specific exploration; (E1) exploitation of structural correlations across cells; and (E2) coherent integration of extracted values into a consistent database.

To address these gaps, the authors design SODIUM‑Agent, a multi‑agent architecture comprising a Web Explorer and a Cache Manager. The Web Explorer employs a novel Adaptive Tree‑Pruned Breadth‑First Search (ATP‑BFS) algorithm. ATP‑BFS builds a dynamic exploration tree guided by the query and schema, expands candidate pages level‑by‑level, and prunes low‑scoring branches using a composite score that blends keyword relevance, link text analysis, and layout cues. This adaptive pruning dramatically reduces the combinatorial explosion typical of naïve BFS while still reaching deep, specialized pages. The Cache Manager records visited URLs, extracted metadata (e.g., year, country, dataset identifiers), and navigation paths. When filling a new cell, the manager reuses previously discovered structural patterns (row or column dependencies), thereby minimizing redundant navigation and preserving consistency across related cells.

Empirical results show that SODIUM‑Agent achieves 91.1 % overall accuracy on the benchmark, more than double the strongest baseline and up to 73× the weakest. Gains are especially pronounced for the E2 component: the agent improves structured‑database consistency by a factor of 16.6 over baselines. Ablation studies reveal that both ATP‑BFS and the cache‑based reuse of structural cues contribute substantially; removing either component drops accuracy below 70 %. Error analysis highlights remaining challenges such as dynamically rendered JavaScript pages, sites requiring authentication, and mismatches between the prescribed schema and the actual web layout.

The paper’s contributions are threefold: (1) formalizing the SODIUM task, which bridges the gap between analytical intent and fragmented web data; (2) releasing SODIUM‑Bench, the first benchmark that captures the full complexity of open‑web data materialization; (3) presenting SODIUM‑Agent, an agentic system that combines adaptive deep web exploration with principled cache management to achieve over 90 % task success. The authors discuss future directions, including handling dynamic APIs, integrating multimodal large language models for richer content understanding, and incorporating user feedback loops to further refine navigation strategies. Overall, the work establishes a solid foundation for research at the intersection of web navigation, information extraction, and automated data engineering.

Comments & Academic Discussion

Loading comments...

Leave a Comment