Structural Monotonicity in Transmission Scheduling for Remote State Estimation with Hidden Channel Mode

This study treats transmission scheduling for remote state estimation over unreliable channels with a hidden mode. A local Kalman estimator selects scheduling actions, such as power allocation and resource usage, and communicates with a remote estima…

Authors: Hampei Sasahara

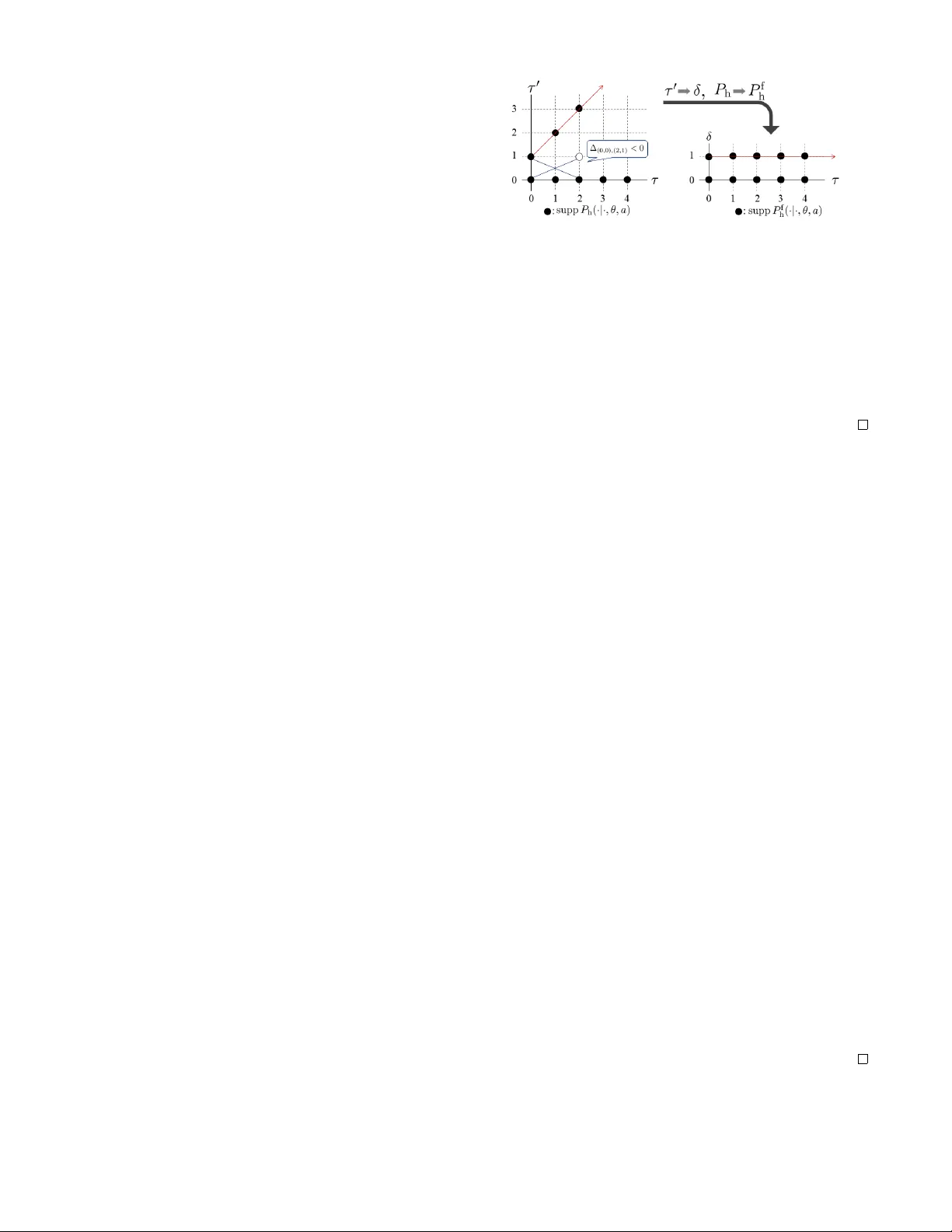

Structural Monotonicity in T ransmission Scheduling f or Remote State Estimation with Hidden Channel Mode Hampei Sasahara Abstract — This study treats transmission scheduling for re- mote state estimation over unreliable channels with a hidden mode. A local Kalman estimator selects scheduling actions, such as power allocation and resource usage, and communicates with a remote estimator based on acknowledgement feedback, balancing estimation performance and communication cost. The resulting problem is naturally formulated as a partially observable Markov decision process (POMDP). In settings with observable channel modes, it is well known that monotonicity of the value function can be established via in vestigating order- preser ving property of transition kernels. In contrast, under partial observability , the transition kernels generally lack this property , which pr events the direct application of standard monotonicity arguments. T o over come this difficulty , we in- troduce a novel technique, referred to as state-space folding, which induces transformed transition kernels recovering order preser vation on the folded space. This transformation enables a rigorous monotonicity analysis in the partially observable setting. As a repr esentative implication, we focus on an associ- ated optimal stopping f ormulation and show that the resulting optimal scheduling policy admits a threshold structure. I . I N T RO D U C T I O N Networked control systems are now ubiquitous, making remote state estimation over unreliable communication chan- nels a fundamental task [1], [2]. Packet losses and communi- cation delays degrade estimation performance, highlighting the importance of transmission scheduling, including trans- mission decisions and power allocation [3]. Notably , this issue has recently gained renewed attention from a security perspectiv e due to adv ersarial interference and denial-of- service attacks [4]–[6]. W ithin the framework of transmission scheduling, an im- portant line of work has examined the structural properties, notably the monotonicity of value functions and policies for the underlying Markov decision processes (MDPs) identified in [7]. The underlying intuition is that the system perfor- mance degrades as the holding time becomes longer or as the channel mode deteriorates, which consequently worsens the value function. These results have since been extended to settings with time-varying channel modes, multiple parallel processes, and related generalizations [8]–[12]. Such struc- tural monotonicity not only provides insight into the nature of optimal policies b ut also significantly reduces computational complexity for policy computation and implementation [13]– [15]. *This work was supported in part by JSPS KAKENHI Grant Number JP24K17296 and JST -ASPIRE Program Grant Number JPMJ AP2402. Hampei Sasahara is with the Department of Information Physics and Computing, Graduate School of Information Science and T echnology , The Univ ersity of T ok yo, T okyo, 113-8656 Japan hsasahara@g.ecc.u-tokyo.ac.jp . Howe ver , existing analyses typically assume full observ- ability of the channel mode, which is often hidden and not di- rectly observable in practice. Although extensions to hidden channel modes have been explored, structural monotonicity has not been achiev ed for the resulting partially observable Markov decision processes (POMDPs). A common approach to monotonicity analysis under partial observability relies on total positi vity of order two (TP2) properties of the underly- ing transition kernels. This approach, howe ver , fails because the relev ant state-transition probabilities have been shown to violate TP2 in the presence of holding-time dynamics [16], [17], posing a fundamental obstacle to extending monotonic- ity results. Consequently , alternativ e methods that do not rely on monotonicity hav e been proposed, including rollout-based approximations [16] and two-stage approaches that explicitly estimate the channel mode prior to scheduling [12]. This study establishes structural monotonicity ev en under partial observ ability by introducing a no vel technique, re- ferred to as state-space folding . The key idea is to transform the transition kernels onto a folded state space, where the hidden order in the original kernel, which is lost due to the shape of its support under holding-time dynamics rather than any intrinsic property of the kernel itself, becomes explicit. This transformation restores TP2 properties and enables monotonicity analysis under partial observ ability . In particular , the analysis re veals that the v alue function is monotonically increasing in both the holding time and the belief of the unfa vorable channel mode, consistent with the results under full observ ation. As a representati ve implication of this analysis, we focus on an associated optimal stopping formulation where the scheduler chooses between continu- ation and termination. It is further sho wn that the resulting optimal scheduling policy admits a threshold structure, with the stopping action becoming optimal once the holding time and the belief exceed a critical level. The remainder of this paper is organized as follo ws. Section II revie ws mathematical preliminaries. Section III formulates the problem as a POMDP . Section IV introduces state-space folding, prov es TP2 recovery of transformed kernels, and deriv es the monotonicity and threshold results. Section V presents a numerical example that validates the theoretical findings, and Section VI concludes the paper . Notation: W e denote the set of nonnegativ e integers by Z + , the trace of a matrix M by T r ( M ) , the spectral radius of a matrix M by ρ ( M ) , the maximum singular v alue of a matrix M by ∥ M ∥ 2 , and the positiv e and neg ativ e definiteness of a symmetric matrix M by M ≻ 0 and M ≺ 0 , respectiv ely . Throughout this paper , the terms “increasing” and “decreasing” are used in the weak sense to mean non- decreasing and non-increasing. I I . M A T H E M AT I C A L P R E L I M I N A R I E S W e revie w the mathematical preliminaries required for the subsequent analysis. Throughout this section, every domain is assumed to be a subset of Z for notational simplicity , although the results are not restricted to the discrete case. W e begin by defining first-order stochastic dominance (FSD) order and monotone likelihood ratio (MLR) order for probability distrib utions. Definition 1: Let p 1 ( x ) and p 2 ( x ) be probability mass functions. Then p 2 dominates p 1 in the MLR order (denoted by p 1 ≤ r p 2 ) if p 1 ( x 1 ) p 2 ( x 2 ) − p 1 ( x 2 ) p 2 ( x 1 ) ≥ 0 for any x 1 < x 2 . Note that for a binary set X = { 0 , 1 } MLR dominance p 1 ≤ r p 2 is equiv alent to p 1 (1) ≤ p 2 (1) . Moreover , MLR dominance implies ordering in expectation for all increasing functions [15, Theorem 10.4]. Lemma 1: If the MLR dominance p 1 ≤ r p 2 holds, P x v ( x ) p 1 ( x ) ≤ P x v ( x ) p 2 ( x ) for any increasing function v : X → R . Subsequently , we define TP2 for nonnegati ve functions. Definition 2: A nonnegativ e function f ( x, y ) is said to be totally positiv e of order two (TP2) if f ( x 1 , y 1 ) f ( x 2 , y 2 ) − f ( x 1 , y 2 ) f ( x 2 , y 1 ) ≥ 0 for any x 1 < x 2 and y 1 < y 2 . For stochastic kernels, its induced transformation preserves the MLR order of the input distribution, and the conv erse also holds [15, Theorem 11.10]. Lemma 2: Let P ( y | x ) be a stochastic kernel and define q i ( y ) : = P x ∈X P ( y | x ) p i ( x ) for i = 1 , 2 . Then q 1 ≤ r q 2 for any p 1 ≤ r p 2 iff P is TP2. TP2 is preserved under marginalization [18, Prop. 3.4]. Lemma 3: Let f 1 ( x, y ) and f 2 ( x, y ) be TP2 functions. Then f ( x, z ) : = P y f 1 ( x, y ) f 2 ( y , z ) is TP2. Furthermore, TP2 is preserved under summation with positiv e weights. Lemma 4: Let f i ( x, y ) be TP2 functions. Then f ( x, y ) : = P i α i f i ( x, y ) is TP2 for any α i ≥ 0 . An important application of the TP2 property arises in Bayesian inference [15, Theorems 11.5, 11.11]. Lemma 5: Define the posterior distribution p + ( x | y ) : = K ( y | x ) p ( x ) / ( P x ′ ∈X K ( y | x ′ ) p ( x ′ )) with a stochastic kernel K ( y | x ) . Then p + 1 ( ·| y ) ≤ r p + 2 ( ·| y ) for p 1 ≤ r p 2 . Further , if K ( y | x ) is TP2, then p + ( ·| y 1 ) ≤ r p + ( ·| y 2 ) for y 1 ≤ y 2 . Another related application is the T opkis’ monotonicity theorem [15, Theorem 10.2] under submodularity . Definition 3: A function Q ( x, a ) is said to be submodular if Q ( x 2 , a 2 ) − Q ( x 2 , a 1 ) ≤ Q ( x 1 , a 2 ) − Q ( x 1 , a 1 ) for any x 1 ≤ x 2 and a 1 ≤ a 2 . Lemma 6: If Q ( x, a ) is submodular , the minimum of arg min a Q ( x, a ) is incrasing. I I I . P RO B L E M F O R M U L A T I O N A. Remote State Estimation, T ransmission Scheduling, Channel Model Follo wing the standard setting in remote state estima- tion [1], [2], we consider the discrete-time linear time- Fig. 1. Overall system architecture. in variant system x t +1 = Ax t + w t and y t = Cx t + v t , where x t ∈ R n is the system state, y t ∈ R m is the measured output, and w t and v t are independent and identically dis- tributed (i.i.d.) Gaussian random noises with zero mean and cov ariance matrices Q ⪰ 0 and R ≻ 0 , respectiv ely . As in standard Kalman filtering, we assume that the pair (A , C) is observable and (A , √ Q) is controllable. The overall system architecture considered in this study is illustrated by Fig. 1. First, the local estimator , often referred to as a smart sensor [5], estimates the state x t at each time step according to the minimum mean-squared error (MMSE) criterion: ˆ x t : = E [x t | y 0 , . . . , y t ] , which can be computed us- ing the standard Kalman filter [19]. The corresponding error cov ariance matrix ˆ P t : = E (x t − ˆ x t )(x t − ˆ x t ) T | y 0 , . . . , y t satisfies the recursion ˆ P t +1 = ˜ g ◦ h ( ˆ P t ) where h (X) : = AXA T + Q , ˜ g (X) : = X − X C T (CX C T + R) − 1 CX [2]. Under the controllability and observability assumption, there exists a steady-state error cov ariance ¯ P such that ˜ g ◦ h ( ¯ P) = ¯ P for any initial condition [19]. In the following, for simplicity , we assume that the local estimation has already con verged to the steady state, i.e., ˆ P t = ¯ P for any t ∈ Z + . The local estimator transmits the estimated state ˆ x t to the remote estimator ov er an unreliable communication channel. Let γ t = 1 and γ t = 0 indicate successful and failed transmissions at time t , respecti vely . Denote by ˇ x t the MMSE estimate of x t at the remote estimator: ˇ x t : = E [x t | γ 0 , . . . , γ t , γ 0 ˆ x 0 , . . . , γ t ˆ x t ] . This remotely estimated state and the corresponding error cov ariance matrix, defined by P t : = E [( ˇ x t − x t )(ˇ x t − x t ) T | γ 0 , . . . , γ t , γ 0 ˆ x 0 , . . . , γ t ˆ x t ] , ev olve recursiv ely according to (ˇ x t +1 , P t +1 ) = (ˆ x t +1 , ¯ P) , if γ t +1 = 1 , (Aˇ x t , h (P t )) , otherwise . The estimation performance is ev aluated using the trace of the error covariance matrix, which depends solely on the holding time τ t ∈ T : = Z + , defined as the number of time steps since the most recent successful transmission. The holding time evolv es as τ t +1 = 0 if γ t +1 = 1 and τ t +1 = τ t +1 otherwise. Consequently , the estimation error at time t can be expressed as c s ( τ t ) : = T r (P t ) = T r h τ t ( ¯ P) , where h τ denotes the τ -fold composition of h . An important property is its monotonic dependence on the holding time [7, Lemma 4.1]. Pr oposition 1: The estimation error c s ( τ ) is increasing with respect to the holding time τ ∈ T . At ev ery time step, the remote estimator sends an ac- knowledgment (A CK) signal to the local estimator indicating whether the most recent transmission was successful. Based on the received A CK, the local estimator updates its infor- mation regarding the transmission outcome and the holding time. W e put the standard assumption that the A CK link is reliable [7], [8], reflecting the fact that the remote estimator is usually more po werful than the local sensor and that A CK packets carry only a very small amount of information, thereby requiring minimal communication capacity . The scheduler implemented with the local estimator selects a transmission scheduling action a t ∈ A , incurring an associated cost c a ( a t ) ∈ R , which influences the probability of successful transmission and the ev olution of the channel mode, as described below . T ypical examples of such actions include deciding whether to send a packet in a giv en time slot [7], adjusting the transmission power to improve com- munication quality [16], or refraining from using the channel. For simplicity , we assume the action set to be finite. W e consider a communication channel with a hidden binary mode θ t ∈ Θ = { 0 , 1 } , which evolv es according to a Markov process. The two modes 0 and 1 correspond to fa vorable and unfav orable channel conditions, respectiv ely , satisfying λ (0 , a ) ≥ λ (1 , a ) for any a ∈ A , where λ ( θ , a ) denotes the probability of successful transmission under action a . The channel mode ev olves according to the tran- sition probability P c ( θ t +1 | θ t , a t ) . This modeling frame work captures a broad class of communication en vironments with temporally correlated uncertainties, including the Gilbert– Elliott (GE) channel [20] and persistent failure models with geometrically distrib uted change time. W e make the following assumption. Assumption 1: The channel mode transition kernel P c ( θ ′ | θ , a ) is TP2 in ( θ, θ ′ ) for any a ∈ A . Assumption 1 is satisfied by many commonly used channel models. For instance, in the GE channel model, it is typically assumed that P c (0 | 0 , a ) ≫ P c (1 | 0 , a ) , and similarly for the channel mode θ = 1 . This dominance of self-transition probabilities yields a positi ve cross-difference term, and hence it has the TP2 property . In the persistent failure model, once the channel mode switches from 0 to 1 , it remains in that mode thereafter , where P c (0 | 1 , a ) = 0 and it implies a positiv e cross-dif ference term. B. POMDP F ormulation W e formulate the transmission scheduling problem as a POMDP . The system state is gi ven by the pair ( τ t , θ t ) . Accordingly , the state space is defined as X : = T × Θ , the action space as A , and the observation space as Y : = T . The state transition kernel factorizes as P ( τ ′ , θ ′ | τ , θ , a ) = P h ( τ ′ | τ , θ ′ , a ) P c ( θ ′ | θ , a ) , where the holding-time transition kernel P h is gi ven by P h ( τ ′ | τ , θ ′ , a ) : = λ ( θ ′ , a ) , if τ ′ = 0 , 1 − λ ( θ ′ , a ) , if τ ′ = τ + 1 , 0 , otherwise . The initial distributions are giv en by τ 0 ∼ p h and θ 0 ∼ p c , respectiv ely . Since the holding time is perfectly observed through A CK, the observation kernel is deterministic and giv en by B ( y | τ , θ ) = I { y = τ } , where I denotes the indicator function. The instantaneous cost is defined as c ( τ , θ , a ) : = c s ( τ ) + c a ( a ) , which captures the trade-off between estimation performance and the cost associated with the scheduling action. W e consider an infinite-horizon discounted cost criterion with discount factor γ ∈ (0 , 1) . T o facilitate analysis, we consider the equiv alent belief MDP formulation. Let b t ∈ [0 , 1] denote the belief that the channel is in the unfav orable state. W e denote the probability mass function of the belief by β ( θ ) , i.e., β (0) = 1 − b and β (1) = b . The initial belief is b 0 = p c (1) . Given the current state ( τ t , b t ) , action a t , and observation y t = τ t +1 , the belief is updated by Bayes’ rule b t +1 = T ( τ t , b t , y t , a t ) where T ( τ , b, y , a ) = K ( τ , 1 , y , a ) ˆ β (1; b, a ) /σ ( τ , b, y , a ) with the predictive belief, the composite k ernel, and the observation likelihood ˆ β ( θ ; b, a ) : = P ϕ ∈ Θ P c ( θ | ϕ, a ) β ( ϕ ) , K ( τ , θ , y , a ) : = P τ ′ ∈T B ( y | τ ′ , θ ) P h ( τ ′ | τ , θ , a ) , σ ( τ , b, y , a ) : = P θ ∈ Θ K ( τ , θ , y , a ) ˆ β ( θ ; b, a ) , respectiv ely . The belief MDP has the augmented state space S b : = T × [0 , 1] . The expected instantaneous cost un- der belief b is gi ven by c ( τ , b, a ) : = c ( τ , 0 , a )(1 − b ) + c ( τ , 1 , a ) b = c s ( τ ) + c a ( a ) . The belief MDP is thus described by the tuple ( S b , A , Y , P , B , c, γ , ( p h , b 0 )) . Our objectiv e is to find an optimal belief-dependent policy π ( a | τ , b ) that minimizes the infinite-horizon discounted cumulativ e cost J = E π [ P ∞ t =0 γ t c ( τ t , b t , a t )] . A subtle technical issue arises from the fact that the state space is countably infinite and the cost function becomes unbounded when the system matrix A is not Schur stable, i.e., ρ (A) > 1 . T o keep the focus on our main objectiv e, we impose the following assumption, which is standard in the literature to guarantee the con ver gence of the estimation error in the fully observable setting (e.g., [11, Assumption 3]). Assumption 2: The successful transmission probability satisfies λ : = min θ ∈ Θ ,a ∈A λ ( θ , a ) > 1 − 1 /ρ (A) 2 . Under Assumption 2, we can deriv e the corresponding Bell- man equation in the partially observable setting. Pr oposition 2: Let Assumption 2 hold. Then there exists a Q -function that satisfies the Bellman equation T Q = Q where T denotes the Bellman operator defined by ( T Q )( τ , b, a ) : = c ( τ , b, a ) + γ P y ∈Y min a ′ ∈A Q ( y , T ( τ , b, y , a ) , a ′ ) σ ( τ , b, y , a ) . Accordingly , the v alue function and the optimal policy are giv en by V ( τ , b ) = min a ∈A Q ( τ , b, a ) and π ( τ , b ) = arg min a ∈A Q ( τ , b, a ) , respecti vely . The proof is provided in Appendix. I V . M O N OT O N I C I T Y P RO P E RT I E S Existing studies hav e rev ealed that, under fully observable settings, the value function exhibits monotonicity properties with respect to both the holding time and the channel mode [7]–[12]. The underlying intuition is that the system performance degrades as the holding time becomes longer or as the channel mode deteriorates, which consequently worsens the value function. Such monotonicity properties directly imply a threshold structure of the optimal policy , whereby the optimal action switches when the system state crosses certain critical v alues. This structural result not only provides v aluable insight into the nature of the optimal policy but also significantly reduces the computational complexity of policy computation and implementation [13], [14]. The objectiv e of this section is to extend this line of analysis to our partially observable setting and in vestigate whether similar monotonicity properties can be established for the belief-state v alue function. A. Issue: Lack of Order Pr eservation in T ransition Kernel A common approach to monotonicity analysis for POMDPs is to exploit the TP2 properties of the transition kernels. It is well known that, if the state transition kernel P and the observ ation kernel B are TP2 for all actions, then the value function inherits a monotonicity property , provided that the instantaneous cost function is monotone (see Prop. 1 for our setting) [15, Sec. 12.2]. Moreov er , since the product of TP2 kernels remains TP2, it suffices to examine the TP2 properties of the individual kernels P h , P c , B . Howe ver , as pointed out in [16], [17], the holding-time transition kernel P h is never TP2 in ( τ , τ ′ ) . This fact can be readily verified by a simple counterexample. Specifically , consider ( τ 1 , τ ′ 1 ) = (0 , 0) and ( τ 2 , τ ′ 2 ) = (2 , 1) . Then P h ( τ ′ 1 | τ 1 ) P h ( τ ′ 2 | τ 2 ) − P h ( τ ′ 2 | τ 1 ) P h ( τ ′ 1 | τ 2 ) = − (1 − λ ) λ < 0 (1) where the dependence on θ and a is omitted for notational simplicity . Since TP2 requires this cross-dif ference to be non- negati ve, the kernel P h fails to satisfy the TP2 property . As a consequence, standard TP2-based monotonicity arguments do not directly apply , which has hindered the in vestigation of monotonicity properties in partially observable settings in volving holding-time dynamics. B. Core Idea: State-Space F olding The key observation is that this non-TP2 behavior is not intrinsic to the transition kernel itself, but stems from the structure of supp P h ( ·|· , θ , a ) , as illustrated in the left part of Fig. 2, where ∆ (0 , 0) , (2 , 1) denotes the cross-difference in (1). Our core idea, termed state-space folding , is to fold the space of the subsequent state so as to collapse states outside supp P h ( ·|· , θ , a ) and to conduct the monotonicity analysis on the resulting folded space. Specifically , we introduce a binary set D : = { 0 , 1 } , representing transmission success and failure, respectiv ely , and define the transition kernels on the folded space as P f h ( δ | τ , θ , a ) : = P h (0 | τ , θ , a ) , if δ = 0 , P h ( τ + 1 | τ , θ , a ) , if δ = 1 , B f ( ζ | δ, θ ) : = 1 , if δ = ζ , 0 , otherwise . This construction yields the composite kernel K f ( τ , θ , ζ , a ) : = P δ ∈D B f ( ζ | δ, θ ) P f h ( δ | τ , θ , a ) . The right part of Fig. 2 illustrates the folding operation that remov es states outside the support. Note that the original observed signal is recov ered by y = 0 if ζ = 0 and y = τ + 1 Fig. 2. State-space folding. if ζ = 1 . W e denote this v alue by y ( τ , ζ ) with ab use of notation. This transformation is well-defined, in the sense that they are equiv alent under the transformation of the observed signal. Pr oposition 3: The kernels satisfy K ( τ , θ , y ( τ , ζ ) , a ) = K f ( τ , θ , ζ , a ) . Pr oof: It is straightforward from the definition. This transformed kernel induces the Bayesian update and the observation likelihood over the folded space gi ven by T f ( τ , b, ζ , a ) : = K f ( τ , 1 , ζ , a ) ˆ β (1; b, a ) /σ f ( τ , b, ζ , a ) , σ f ( τ , b, ζ , a ) : = P θ ∈ Θ K f ( τ , θ , ζ , a ) ˆ β ( θ ; b, a ) . The bellman operator can be rewritten in terms of them by ( T Q )( τ , b, a ) = c ( τ , b, a ) + γ X ζ ∈D min a ′ ∈A Q ( y ( τ , ζ ) , T f ( τ , b, ζ , a ) , a ′ ) σ f ( τ , b, ζ , a ) . (2) It should be noted that this folding operation does not transform the state space itself; rather , it only af fects the subsequent state space and the observation space. Therefore, this is not a transformation of the POMDP itself, but merely a modification of the transition kernels. C. Monotonicity Pr operties W e examine the TP2 properties of the transformed k ernels. Lemma 7: The kernel P f h ( δ | τ , θ , a ) is TP2 in ( δ, τ ) and ( δ, θ ) . The kernel B f ( ζ | δ, θ ) is TP2 in ( ζ , δ ) . Further, the kernel K f ( τ , θ , ζ , a ) is TP2 in ( τ , ζ ) and ( θ , ζ ) . Pr oof: For ( δ , τ ) in P f h , consider the cross- difference ∆ τ ,δ : = P f h (0 | τ 1 , θ , a ) P f h (1 | τ 2 , θ , a ) − P f h (1 | τ 2 , θ , a ) P f h (0 | τ 1 , θ , a ) for τ 1 ≤ τ 2 . It can be confirmed that ∆ τ ,δ = 0 because P f h is independent of τ . F or ( δ, θ ) , consider ∆ θ,δ : = P f h (0 | τ , 0 , a ) P f h (1 | τ , 1 , a ) − P f h (1 | τ , 0 , a ) P f h (0 | τ , 1 , a ) = λ (0 , a )(1 − λ (1 , a )) − (1 − λ (0 , a )) λ (1 , a ) = λ (0 , a ) − λ (1 , a ) ≥ 0 from the property of the channel modes. The TP2 property of B f in ( ζ , δ ) is straightforward from the definition. For ( τ , ζ ) in K f , Lemma 3 leads to the TP2 property since K f is defined by marginalization with respect to δ . Finally , because B f ( ζ | δ, θ ) is independent of θ and K f is defined by marginalization with respect to δ , K f is TP2 in ( θ , ζ ) . These properties, in turn, yield monotonicity of the belief update and the observation likelihood. Lemma 8: Let Assumption 1 hold. The Bayesian update ov er the folded space T f ( τ , b, ζ , a ) is increasing in ( τ , b, ζ ) . The observation likelihood o ver the folded space satisfies σ f ( τ 1 , b 1 , · , a ) ≤ r σ f ( τ 2 , b 2 , · , a ) for τ 1 ≤ τ 2 and b 1 ≤ b 2 . Pr oof: First, since T f is indepdendent of τ , it is increasing in τ . From Assumption 1 and Lemma 2, ˆ β (1; b, a ) is increasing in b . Since K f ( τ , θ , ζ , a ) is TP2 in ( θ , ζ ) from Lemma 7, Lemma 5 implies that T f ( τ , b, ζ , a ) is increasing in b . Further, Lemma 5 implies that T f ( τ , b, ζ , a ) is increas- ing in ζ . Next, since ˆ β ( θ ; b, a ) ≥ 0 is independent of τ , ζ and Lemma 7, Lemma 4 implies that σ f is TP2 in ( τ , ζ ) . Further, since σ f is gi ven by marginalization with respect to θ , Lemmas 3 and 7 imply that σ f is TP2 in ( b, ζ ) . Those are equiv alent to the MLR dominance. These results lead to our main objectiv e: monotonicity of the v alue function. Theor em 1: Under Assumptions 1 and 2, the value func- tion V ( τ , b ) is increasing in τ and b . Furthermore, the Q - function Q ( τ , b, a ) is also increasing in τ and b for any a . Pr oof: Define Q n +1 : = T Q n with Q 0 = 0 inductiv ely . W e show the claim by induction. As- sume that Q n ( τ , b, a ) is increasing in τ and b . Since T f ( τ , b, ζ , a ) is increasing in ( τ , b, ζ ) from Lemma 8 and y ( τ , ζ ) is increasing in ( τ , ζ ) , Q n ( y ( τ , ζ ) , T f ( τ , b, ζ , a ) , a ′ ) is increasing in ( τ , b, ζ ) . Because taking minimum preserves monotonicity , min a ′ Q n ( y ( τ , ζ ) , T ( τ , b, ζ , a ) , a ′ ) is increasing in ( τ , b, ζ ) . From Lemmas 1 and 8, γ P ζ ∈D min a ′ Q n ( y ( τ , ζ ) , T f ( τ , b, ζ , a ) , a ′ ) σ f ( τ , b, ζ , a ) is increasing in τ , b . Since summation preserves monotonicity , Prop. 1 and (2) imply that Q n +1 ( τ , b, a ) is increasing in τ and b . Then Q , the limit of Q n , is also increasing in τ and b . Therefore, V ( τ , b ) is increasing in τ and b as well. D. Structural Thr eshold Pr operty of Optimal Stopping P olicy As a representativ e consequence of the monotonicity prop- erty , we examine an associated optimal stopping formulation. In particular , we consider a binary action space A = { 0 , 1 } , where 0 and 1 correspond to continuation and termination, respectiv ely . Once a = 1 is selected, the process terminates immediately and incurs a stopping cost c a (1) = c stop ∈ R . W ithout loss of generality , we assume c a (0) = 0 . This formulation naturally captures scenarios such as the quickest detection of a persistent mode change induced by equipment failure or adversarial intrusion. Under this setting, the Bell- man equation is given by Q ( τ , b, 0) : = c ( τ , b, 0) + γ P y ∈Y min a ′ ∈A Q ( y , T ( τ , b, y , 0) , a ′ ) σ ( τ , b, y , 0) , Q ( τ , b, 1) : = c stop . The monotonicity directly leads to the structural threshold property of the optimal stopping policy . Theor em 2: Let Assumptions 1 and 2 hold. In the optimal stopping setting, there exists a monotone threshold function b th : T → [0 , 1] such that the optimal policy satisfies π ( τ , b ) = 1 if b ≥ b th ( τ ) and π ( τ , b ) = 0 otherwise, where b th ( τ ) is decreasing in τ . Pr oof: Since c stop is a constant and − Q ( τ , b, 0) is decreasing from Theorem 1, ∆ Q ( τ , b ) : = Q ( τ , b, 1) − Fig. 3. Threshold structure of optimal stopping policy . Q ( τ , b, 0) = c stop − Q ( τ , b, 0) is decreasing, and hence the T opkis’ theorem (Lemma 6) leads to the claim. Theorem 2 implies that the optimal stopping policy is characterized by a monotone threshold function b th ( τ ) . In- tuitiv ely , as the holding time increases or the belief of the unfa vorable channel mode becomes stronger , the expected benefit of continuing diminishes, and stopping becomes optimal once it exceeds the critical level. V . N U M E R I C A L E X A M P L E W e validate the theoretical results through a numerical ex- ample in an optimal stopping setting. The system parameters are giv en by A = 0 . 85 , C = 1 . 0 , Q = 0 . 3 , R = 0 . 3 . The channel parameters are λ (0) = 0 . 9 , λ (1) = 0 . 2 , P c (0 | 0) = 0 . 9 , P c (1 | 1) = 1 . 0 . The stopping cost is c stop = 10 . 0 , and the discount factor is γ = 0 . 95 . The optimal policy is computed via value iteration with pruning [21] implemented using pomdp py [22]. The code is av ailable online [23]. Fig. 3 illustrates the optimal policy . Each subfigure is as- sociated with a specific holding time τ , where the horizontal axis represents the belief and the vertical axis represents the corresponding optimal action. W e first observe that the optimal policy exhibits a threshold structure, where the stop action is taken when the belief exceeds a certain value that depends on the holding time. W e further observe that the threshold b th ( τ ) is decreasing in τ . This result is consistent with Theorem 2. V I . C O N C L U S I O N In this paper , we have examined transmission scheduling for remote state estimation with hidden binary modes by formulating the problem as a POMDP and introducing a state-space folding technique to recover order -preserving properties of the transformed transition kernels. Lev eraging TP2-based analysis on the folded space, we hav e established monotonicity in both the holding time and the belief of the unfa vorable channel mode. As a representative application, we hav e prov ed that the optimal stopping policy admits a monotone threshold structure. Future work includes the dev elopment of computationally efficient algorithms for op- timal policy design, extensions to more general scenarios, and game-theoretic analyses of security settings in which the channel mode is manipulated by an adversary . A P P E N D I X W e prove Prop. 2 beginning with the follo wing lemma. Lemma 9: For any ϵ > 0 , there exists C > 0 such that ∥ A τ ∥ 2 ≤ C ( ρ (A) + ϵ ) τ for any τ ∈ T . Pr oof: First, from the Gelfand’ s formula [24], we hav e lim τ →∞ ∥ A τ ∥ 1 /τ 2 = ρ (A) . Thus, for any ϵ > 0 there exists τ ∈ T such that ∥ A τ ∥ 2 ≤ ( ρ (A) + ϵ ) τ for any τ > τ . Hence, for sufficiently large τ , the desired inequality holds with C = 1 . Since the set of τ for which the inequality does not hold with C = 1 is finite, there exists a sufficiently large constant C > 0 such that it holds for any τ ∈ T . Define the weighted sup-norm of a function f : T × [0 , 1] × A → R as sup τ ,b,a | f ( τ , b, a ) | /s ( τ ) with a positiv e-valued function s ( τ ) : = ( ρ (A) + ϵ ) 2 τ . Define also the function space B as the set of all functions being bounded with respect to the norm. The following lemma holds. Lemma 10: The three properties hold: (a) c ( τ , b, a ) ∈ B , (b) P y ∈T σ ( τ , b, y , a ) s ( y ) ∈ B , (c) Under Assumption 2, for any ϵ > 0 that satisfies α : = (1 − λ )( ρ (A) 2 + ϵ ) < 1 there exists m ∈ N such that γ m P y ∈Y P ( τ m = y | τ 0 = τ ) s ( y ) /s ( τ ) < 1 for any τ ∈ T . Pr oof: For (a), take q > 0 such that P ⪯ q I and Q ⪯ q I . From the commutativ e property of trace, T r ( X Y ) ≤ ρ ( X )T r ( Y ) , and ρ ( X T X ) = ∥ X ∥ 2 2 , Lemma 9 implies that c s ( τ ) = T r (A T A) τ P + P τ − 1 t =0 T r (A T A) t Q ≤ T r (A T A) τ T r P + P τ − 1 t =0 T r (A T A) t T r (Q) ≤ q P τ t =0 T r (A T A) t ≤ q P τ t =0 ∥ A t ∥ 2 2 ≤ q C 2 s ( τ ) . Since c a ( a ) is bounded, the property (a) holds. For (b), note that the inequality σ ( τ , b, y , a ) ≤ 1 trivially holds. Thus P y ∈Y σ ( τ , b, y , a ) s ( y ) /s ( τ ) = ( σ ( τ , b, 0 , a ) s (0) + σ ( τ , b, τ + 1 , a ) s ( τ + 1)) /s ( τ ) ≤ ( s (0) + s ( τ + 1)) /s ( τ ) ≤ 1 / ( ρ (A) + ϵ ) 2 τ + ( ρ (A) + ϵ ) 2 < + ∞ . Finally , we sho w (c). For y = 0 , . . . , m − 1 , the e vent τ m = y means that a successful transmission occurs at time m − y , followed by y consecutive transmission failures. Hence, P ( τ m = y | τ 0 = τ ) ≤ (1 − λ ) y . Similarly , P ( τ m = τ + m | τ 0 = τ ) ≤ (1 − λ ) m . Further, other probabilities are zero. Thus, P y ∈Y P ( τ m = y | τ 0 = τ ) s ( y ) ≤ P m − 1 y =0 α y + α m s ( τ ) . From the hypothesis in the claim, γ m P m − 1 y =0 α y /s ( τ ) ≤ γ m / { (1 − α )( ρ (A) + ϵ ) 2 τ } → 0 as m → ∞ . In addition, since γ m α m s ( τ ) /s ( τ ) = γ m α m → 0 as m → ∞ , the desired inequality holds for sufficiently large m ∈ N . Based on Lemma 10, we can show that the m -fold compo- sition of the Bellman operator is a contraction map ov er B , by closely follo wing the existing proof [25, Sec. 1.5]. Finally , Prop. 2 is straightforward from the contraction property . Pr oof: Since T m is a contraction map ov er B for sufficiently small ϵ > 0 , the m -stage contraction mapping fixed-point theorem [25, Prop. 1.5.4] leads to the claim. R E F E R E N C E S [1] B. Sinopoli, L. Schenato, M. Franceschetti, K. Poolla, M. I. Jordan, and S. S. Sastry , “Kalman filtering with intermittent observations, ” IEEE T rans. Autom. Control , vol. 49, no. 9, pp. 1453–1464, 2004. [2] L. Shi, M. Epstein, and R. M. Murray , “Kalman filtering over a packet- dropping network: A probabilistic perspective, ” IEEE T rans. Autom. Contr ol , vol. 55, no. 3, pp. 594–604, 2010. [3] J. Wu, Q. Jia, K. H. Johansson, and L. Shi, “Event-based sensor data scheduling: T rade-off between communication rate and estimation quality , ” IEEE T rans. Autom. Contr ol , vol. 58, no. 4, pp. 1041–1046, 2012. [4] H. Zhang, P . Cheng, L. Shi, and J. Chen, “Optimal denial-of-service attack scheduling with energy constraint, ” IEEE T rans. Autom. Con- tr ol , vol. 60, no. 11, pp. 3023–3028, 2015. [5] Y . Li, D. E. Quevedo, S. Dey , and L. Shi, “SINR-based DoS attack on remote state estimation: A game-theoretic approach, ” IEEE T rans. Contr ol Netw . Syst. , vol. 4, no. 3, pp. 632–642, 2017. [6] H. Ishii and Q. Zhu, Security and Resilience of Control Systems . Springer , 2022. [7] A. S. Leong, S. Dey , and D. E. Quev edo, “On the optimality of threshold policies in event triggered estimation with packet drops, ” in Proc. Eur opean Control Confer ence (ECC) , 2015, pp. 927–933. [8] Y . Qi, P . Cheng, and J. Chen, “Optimal sensor data scheduling for remote estimation over a time-varying channel, ” IEEE T rans. Autom. Contr ol , vol. 62, no. 9, pp. 4611–4617, 2017. [9] S. Wu, X. Ren, S. Dey , and L. Shi, “Optimal scheduling of multiple sensors over shared channels with packet transmission constraint, ” Automatica , vol. 96, pp. 22–31, 2018. [10] S. Wu, X. Ren, Q.-S. Jia, K. H. Johansson, and L. Shi, “Learning optimal scheduling policy for remote state estimation under uncertain channel condition, ” IEEE T rans. Contr ol Netw . Syst. , vol. 7, no. 2, pp. 579–591, 2020. [11] J. W ei and D. Y e, “Double threshold structure of sensor scheduling policy over a finite-state Markov channel, ” IEEE T rans. Cybern. , vol. 53, no. 11, pp. 7323–7332, 2023. [12] B. Sun and X. Cao, “Optimal sensor scheduling for remote state estimation over hidden Markovian channels, ” IEEE Contr . Syst. Lett. , vol. 8, pp. 2541–2546, 2024. [13] A. Roy , V . Borkar, A. Karandikar , and P . Chaporkar, “Online rein- forcement learning of optimal threshold policies for Markov decision processes, ” IEEE T rans. A utom. Contr ol , vol. 67, no. 7, pp. 3722–3729, 2021. [14] K. Nakhleh and I.-H. Hou, “DeepT OP: Deep threshold-optimal policy for MDPs and RMABs, ” Pr oc. Conference on Neural Information Pr ocessing Systems (NeurIPS) , vol. 35, pp. 28 734–28 746, 2022. [15] V . Krishnamurthy , P artially Observed Markov Decision Pr ocesses: F rom Filtering to Contr olled Sensing , 2nd ed. Cambridge Univ ersity Press, 2025. [16] H. Liu, Y . Li, K. H. Johansson, J. M ˚ artensson, and L. Xie, “Rollout approach to sensor scheduling for remote state estimation under integrity attack, ” Automatica , vol. 144, no. 110473, 2022. [17] B. Sun and X. Cao, “Optimal sensor scheduling for remote state estimation with partial channel observation, ” IEEE/CAA Journal of Automatica Sinica , vol. 12, no. 7, pp. 1510–1512, 2025. [18] S. Karlin and Y . Rinott, “Classes of orderings of measures and related correlation inequalities. I. Multivariate totally positive distributions, ” Journal of Multivariate Analysis , vol. 10, no. 4, pp. 467–498, 1980. [19] B. D. Anderson and J. B. Moore, Optimal F iltering . Dover Publica- tions, 2005. [20] M. Mushkin and I. Bar-David, “Capacity and coding for the Gilbert- Elliott channels, ” IEEE Tr ans. Inf. Theory , vol. 35, no. 6, pp. 1277– 1290, 1989. [21] A. Cassandra, M. L. Littman, and N. L. Zhang, “Incremental pruning: A simple, fast, exact method for partially observable Mark ov decision processes, ” in Proc. Thirteenth Conference on Uncertainty in Artificial Intelligence , 1997, p. 54–61. [22] K. Zheng and S. T ellex, “pomdp py: A frame work to build and solve POMDP problems, ” in ICAPS 2020 W orkshop on Planning and Robotics (PlanRob) , 2020. [23] H. Sasahara, monotone-rse-pomdp, https://github.com/ HampeiSasahara/monotone- rse- pomdp, v1.0.0, 2026, DOI: 10.5281/zenodo.18372633. [24] V . K ozyakin, “On accuracy of approximation of the spectral radius by the Gelfand formula, ” Linear Algebra and Its Applications , vol. 431, no. 11, pp. 2134–2141, 2009. [25] D. Bertsekas, Dynamic Pr ogramming and Optimal Control: V olume II , 4th ed. Athena Scientific, 2012.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment