FEMBA on the Edge: Physiologically-Aware Pre-Training, Quantization, and Deployment of a Bidirectional Mamba EEG Foundation Model on an Ultra-low Power Microcontroller

Objective: To enable continuous, long-term neuro-monitoring on wearable devices by overcoming the computational bottlenecks of Transformer-based Electroencephalography (EEG) foundation models and the quantization challenges inherent to State-Space Mo…

Authors: Anna Tegon, Nicholas Lehmann, Yawei Li

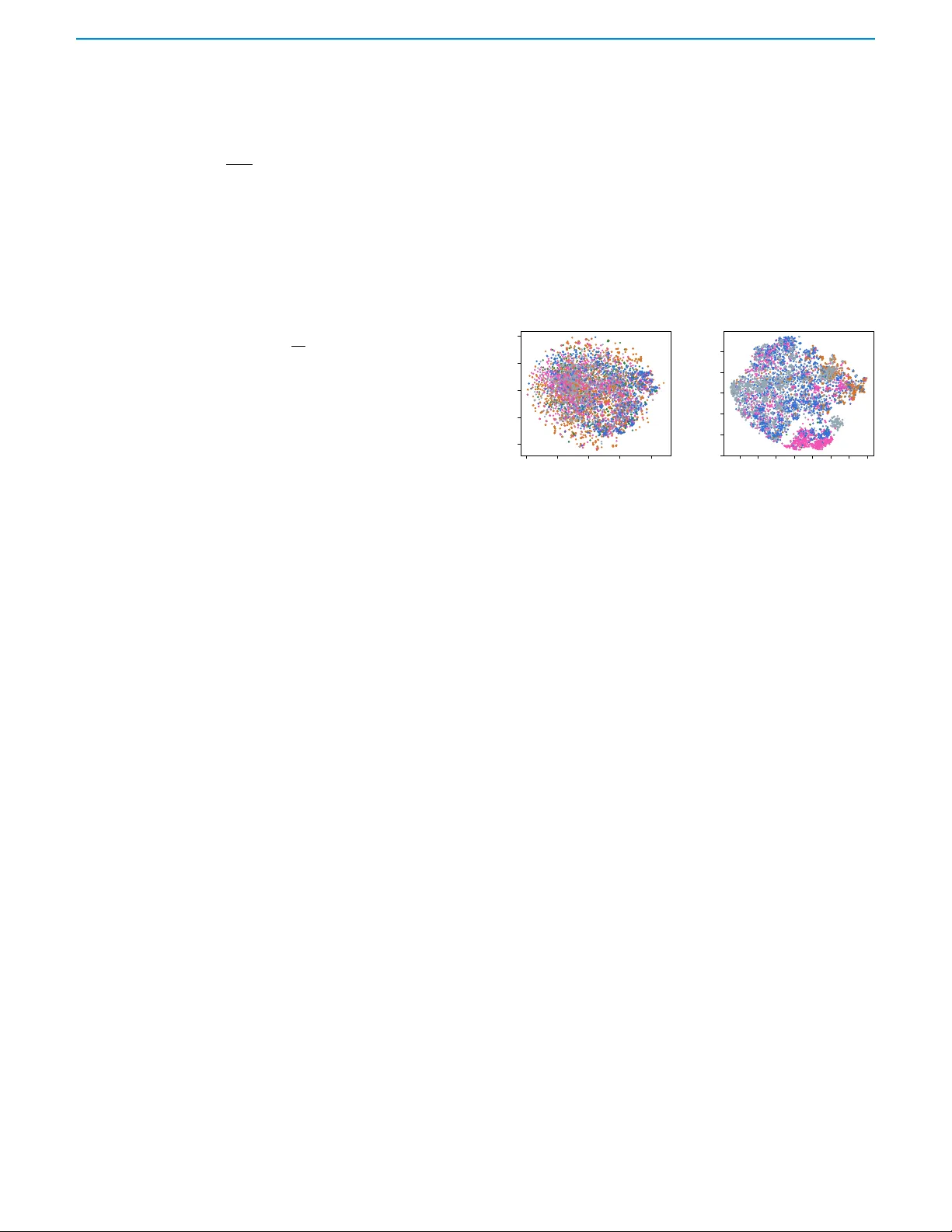

FEMBA on the Edge: Ph ysiologically-A w are Pre-T raining, Quantization, and Deplo yment of a Bidirectional Mamba EEG F oundation Model on an Ultra-lo w P o wer Microcontroller Anna T egon, Graduate Student Member , IEEE , Nicholas Lehmann, Y a wei Li, Andrea Cossettini, Senior Member , IEEE , Luca Benini, Fello w , IEEE and Thorir Mar Ingolfsson Member , IEEE Abstract — Objective: T o enable continuous, long-term neuro- monitoring on wearable devices by overcoming the computational bottlenecks of T ransformer -based Electroencephalograph y (EEG) foundation models and the quantization challenges inherent to State-Space Models (SSMs). Methods: W e present FEMBA, a bidi- rectional Mamba ar chitecture pre-trained on over 21,000 hours of EEG. We introduce a novel Ph ysiologically-Aware pre-training objective, consisting of a reconstruction with low-pass filtering, to prioritiz e neural oscillations over high-frequency artifacts. T o address the activation outliers common in SSMs, we employ Quantization-Aware T raining (QA T) to compress the model to 2-bit weights. The framew ork is deploy ed on a parallel ultra-low-power RISC-V microcontroller (GAP9) using a custom double-b uffered memory streaming scheme . Results: The proposed low-pass pre- training impro ves downstream AUR OC on TU AB from 0.863 to 0.893 and AUPR from 0.862 to 0.898 compared to the best con- trastive baseline. QA T successfully compresses weights with neg- ligible perf ormance loss, whereas standar d post-training quantiza- tion degrades accuracy b y appro ximately 30%. The embedded im- plementation achie ves deterministic real-time inference (1.70 s per 5 s window) and reduces the memory footprint b y 74% (to ≈ 2 MB), achieving competitive accuracy with up to 27 × fewer FLOPs than T ransf ormer benchmarks. Conclusion: FEMBA demonstrates that Mamba-based foundation models can be effectively quantized and deployed on extreme-edge har dware without sacrificing the repre- sentation quality required f or r obust clinical anal ysis. Significance: This work establishes the first full-stack framework for deploying large-scale EEG f oundation models on ultra-low-power wearables, facilitating continuous, SSM based monitoring for epilepsy and sleep disorder s. Index T erms — Electr oencephalography , Foundation Models, Mamba, Quantization, Edg e AI, Wearab les, RISC-V . I . I N T R O D U C T I O N Electroencephalography (EEG) measures cortical electrical activity using non-in vasi ve electrodes. Since its earliest use in the 1920s, EEG has become a fundamental tool for monitoring brain activity [1], with clinical applications spanning the diagnosis of sleep disorders, neurodegenerati ve diseases, and epilepsy [2], [3]. In recent years, EEG applications hav e been moving outside controlled clinical en vironments. Clinically , there is a need for continuous, long-term monitoring in ambulatory and at-home settings, for example in the context of epilepsy monitoring [4]. At the same time, the consumer market sho ws increased interest towards wellness- oriented brain monitoring solutions and brain computer interfaces (BCI), with applications spanning aided meditation, boosting pro- ductivity , and gaming [5], [6]. This project w as suppor ted by the Swiss National Science Foundation (Project PEDESITE) under grant agreement 193813. This work was also suppor ted in par t by the ETH Future Computing Laborator y (EFCL) and by a grant from the Swiss National Supercomputing Centre (CSCS) under project ID lp12 on Alps. Anna T egon, Nicolas Lehmann, Y aw ei Li, Andrea Cossettini, Luca Benini, and Thorir Mar Ingolfsson are with the Integrated Systems Laboratory , ETH Z ¨ urich, Z ¨ urich, Switzerland (thor iri@iis.ee.ethz.ch). Luca Benini is also with the DEI, University of Bologna, Bologna, Italy . As EEG expands beyond controlled clinical environments, there is a pressing need to enable continuous monitoring and on-device EEG signal analysis on resource-constrained wearable devices. Such systems must operate under tight computational and po wer con- straints [7], while being more susceptible to mov ement and en vi- ronmental artifacts affecting signal quality [8], [9]. In this context, the algorithmic landscape for EEG analysis is rapidly ev olving. Classical EEG analysis initially relied on hand- crafted spectral, temporal, or spatial features combined with tradi- tional classifiers such as Support V ector Machines, Linear Discrimi- nant Analysis, and tree-based methods [10]. Overcoming the limitations of manual feature engineering prompted a shift toward end-to-end learning, with conv olutional neural networks [11] enabling automatic feature extraction and im- prov ed decoding performance. Despite the increased memory and computational demands, there ha ve been multiple demonstrations of model deployments on edge de vices for lo w-power ex ecution on wearables [12]–[14]. Howev er, existing approaches still struggle to generalize across subjects, recording platforms, and electrode configurations, often requiring task-specific and patient-specific data collections and training. Foundation Models (FMs) address the generalization limitations of deep learning by pre-training on large-scale, unlabeled EEG corpora and adapting to downstream tasks through transfer learning. Recent models such as BENDR [15], LaBraM [16], and CbraMod [17] demonstrate improved cross-subject generalization and reduced anno- tation requirements. Ho wever , while FMs offer the robust generaliza- tion capabilities required for di verse patient populations, their reliance on Transformer architectures with quadratic complexity O ( N 2 ) renders them computationally prohibitive for wearable devices. This disconnect pre vents the deployment of state-of-the-art AI on the very edge devices required for long-term, continuous neuro-monitoring. State-space models (SSMs), such as Mamba [18], of fer a promising av enue to avoid the fundamental quadratic complexity bottleneck, by reformulating sequence modeling as a latent dynamical system with linear scaling O ( N ) in sequence length. Y et, two critical barriers re- main for their adoption in biomedical edge computing. First, standard self-supervised pre-training objectives (e.g., masked reconstruction) often force models to reconstruct high-frequency artifacts (e.g., EMG noise), wasting model capacity on non-physiologically meaningful characteristics of the raw signals. Second, Mamba architectures are notoriously difficult to quantize due to activ ation outliers, so nai ve integer post-training quantization can lead to sev ere performance collapse on low-precision microcontrollers (MCUs). Building on our previous FEMBA architecture [19], which in- troduced a bidirectional Mamba-based EEG foundation encoder , we present an end-to-end framework that bridges the gap between large- scale FMs and ultra–low-power wearable hardware. Our specific contributions are in addition to open-sourcing our code and models for reproducibility 1 : 1 https://github .com/pulp-bio/BioFoundation © This work has been submitted to the IEEE for possible publication. Copyright may be transferred without notice, after which this version may no longer be accessible. 2 IEEE TRANSACTIONS ON BIOMEDICAL ENGINEERING, VOL. XX, NO . XX, XXXX 2025 • Physiologically-A ware Pre-training : W e introduce a self- supervised objecti ve, Reconstruction with Low-pass F iltering , that acts as a denoising autoencoder by forcing the encoder to reconstruct a low-pass–filtered target instead of raw EEG. On T emple University Abnormal Corpus (TU AB), this objective improv es Area Under the Recei ver Operating Characteristic (A UR OC) from 0 . 863 to 0 . 893 and Area Under the Precision- Recall (A UPR) from 0 . 862 to 0 . 898 compared to the best contrastiv e baseline, with significant accuracy improv ement ( 78 . 6% → 81 . 9% ). On T emple Univ ersity Artifact Corpus (TU AR) and T emple Uni versity Slo wing Corpus (TUSL), perfor - mance differences between the pre-training strategies are within ov erlapping confidence interv als. The lo w-pass variant remains competitiv e and does not compromise performance on these smaller datasets, while consistently av oiding the degradation observed with contrastiv e masking. • Robust Mamba Quantization : W e systematically study post- training quantization (PTQ) and show that acti vation outliers in Mamba-style SSMs make naiv e W8A8 PTQ collapse per - formance (A UROC 0 . 89 → 0 . 77 , accuracy 81% → 55% ), and that W2A8 PTQ fails completely . By switching to Quantization- A ware T raining (QA T), we recov er near-floating-point perfor- mance for W8A8 and W4A8 (within ≈ 0 . 01 A UR OC of FP32) and e ven for W2A8 2 . This yields a 2-bit–weight, 8-bit–acti vation FEMB A-Tin y variant that is both accurate and deployable on tightly constrained MCUs where standard PTQ fails. • Full-Stack Edge Deployment : W e demonstrate, to the best of our knowledge, the first deployment of a SSM-based EEG foundation model on a parallel ultra–lo w-power RISC-V MCU. Using optimized kernels and a double-buf fered multi-lev el mem- ory streaming scheme, we achieve deterministic inference of a 5 s window in 1.70 s at 370 MHz, at an energy cost of 75 mJ per inference , at an av erage power env elope of 44.1 mW , while compressing the model footprint from 7.8 MB (INT8) to ∼ 2 MB (2-bit weights). This shows that foundation-scale EEG encoders can be made compatible with the memory and latency budgets of continuous MCU-based, wearable monitoring devices. These three components—physiologically-aware pre-training, robust quantization, and full-stack deployment—are tightly coupled. The low-pass reconstruction objectiv e stabilizes the encoder’ s frequency content, which in turn simplifies the quantization landscape. The re- sulting quantized model is architected to match the memory hierarchy and processing architecture of MCUs. Compared to our preliminary FEMB A paper [19], which intro- duced the original bidirectional Mamba encoder and demonstrated the feasibility of pre-training and fine-tuning on TUAB, TU AR, and TUSL, this work makes three key extensions. First, we sys- tematically compare four self-supervised objectives and propose a physiologically-aw are low-pass reconstruction target that significantly improv es downstream performance on TU AB. Second, we present, to our kno wledge, the first comprehensive quantization study of Mamba for EEG, showing that quantization-aw are training enables a W2A8 configuration that remains competitive with full-precision baselines. Third, we de velop a full-stack deployment frame work tar geting a low- power MCU, including custom kernels, hierarchical streaming, and a detailed cycle-accurate analysis, demonstrating that FEMBA can meet the memory and latency of MCU’ s highly constrained compute and storage. I I . R E L A T E D W O R K 2 while activ ation 4-bit quantization remains unstable in our experiments A. Super vised Deep Lear ning f or EEG Early applications of supervised deep learning to EEG primar- ily relied on conv olutional neural networks (CNNs). An important early contribution was the DeepConvNet and ShallowCon vNet [20] architectures, which showed that end-to-end CNNs operating directly on raw EEG could match the performance of traditional feature- engineering pipelines. Building on these ideas, EEGNet [21] intro- duced a more compact and efficient CNN design based on depthwise- separable con volutions, better capturing the spatiotemporal struc- ture of EEG while remaining lightweight and portable. Numerous variants and derived models emerged, including MBEEGNet [22], TIDNet [23] and related architectures, which extend its capabilities through temporal modules, multi-scale processing, and attention mechanisms. In parallel to CNN-based models, recurrent architectures were explored to better capture the temporal dynamics of EEG signals. Early examples include LSTM-based classifiers [24], which demonstrated that sequence models applied to sliding windows of raw EEG can effecti vely learn temporal dependencies, and RNN framew orks combined with sliding-window CSP features [25], which highlighted the potential of recurrent networks for modeling temporal structure in motor-imagery decoding. B. F oundation Models for EEG Analysis Recent foundation models for EEG increasingly rely on self- supervised learning (SSL) to exploit large-scale unlabeled recordings. Early work such as BENDR [15] adapted masked prediction frame- works by combining con volutional encoders with contrastive objec- tiv es to reconstruct masked EEG representations. Later approaches extended this paradigm using T ransformer-based architectures: Brain- BER T [26] performs masked prediction on channel-independent spectrograms for iEEG, while models such as LaBraM [16] apply vector -quantized masking and discrete latent spaces to learn robust codebooks. Recent developments, as CBraMod [17], reconstruct masked raw signal patches directly , enabling end-to-end learning of temporal and spatial EEG structure. Howe ver , a common limitation across these approaches is their agnostic treatment of frequenc y content. By attempting to reconstruct the full spectral bandwidth, which includes high-frequency noise and muscle artifacts [9], these models may allocate capacity to modeling non-physiological interference rather than cortical dynamics. This suggests an opportunity for physiologically-a ware pre-training objec- tiv es that prioritize neural oscillations ov er broadband reconstruction. Despite the progress of T ransformer-based foundation models, their quadratic time and memory complexity with respect to sequence length ( O ( n 2 ) ) limits their practicality in many real-world EEG scenarios, especially in wearable or edge-computing settings where compute and memory resources are constrained [13]. Applications such as continuous epilepsy monitoring further impose real-time requirements and strict false-alarm tolerances [9]. State-space model (SSM) architectures offer a compelling alterna- tiv e, as their linear complexity ( O ( N ) ) enables efficient processing of long sequences, and recent designs, such as Mamba [18], demon- strate strong sequence-modeling performance. While Mamba-based EEG models hav e begun to emer ge—such as EEGMamba [27] and EEGM2 [28], which employ Mixture-of-Experts and U-Net archi- tectures, respecti vely—these works focus primarily on algorithmic performance on high-end GPUs, leaving the challenges of edge deployment and quantization largely unexplored. C . Efficient Edge AI and Quantization Challenges EEG processing is commonly performed offline or on high- performance hardware, and existing surve ys mainly focus on acqui- sition and usability rather than embedded computation. A few recent TEGON et al. : FEMBA 3 works started to explore edge-based EEG processing, showing that lightweight CNNs can run on MCUs, though with strict constraints on memory and latency [29]. Due to these limitations, se veral studies have inv estigated model compression—including pruning, quantization, and compact architectures—to enable deployment on low-po wer devices [13]. Howe ver , deploying Foundation Models (specifically SSMs) presents unique challenges compared to standard CNNs. While CNNs are often robust to low-bit quantization (e.g., 8-bit or 4-bit), Mamba architectures are notoriously difficult to quantize. Recent studies in computer vision [30] highlight that Mamba’ s selective scan mech- anism generates activ ation outliers that destroy performance under standard Post-Training Quantization (PTQ). Consequently , bridging the gap between Mamba’ s theoretical efficiency and actual hard- ware implementation requires dedicated Quantization-A ware T raining (QA T) strategies that have not yet been applied to the EEG domain. D . Limitations of Prior Works In this context, prior works demonstrated the growing interest in self-supervised EEG representation learning, yet they reveal clear limitations. As shown in T able I, existing foundation models rely on Transformer architectures with quadratic complexity , translating to computational costs up to 27 × higher than linear alternatives. Simultaneously , man y EEG applications, from Brain-Computer In- terfaces to continuous ambulatory monitoring, require online infer- ence on low-po wer wearable devices. Howev er, the development of foundation models and edge-AI solutions has largely progressed in isolation: as shown in T able I, none of these models report edge deployment , leaving the gap between algorithmic performance and embedded feasibility unaddressed. While state-space models such as Mamba [18] offer linear complexity , their deployment on ultra-lo w- power de vices remains unexplored in the biomedical literature. W e address these gaps by deploying a bidirectional Mamba foun- dation model for biosignal analysis running on an ultra-low-po wer edge MCU. T ABLE I : Comparison of EEG foundation models Model Architecture Size Datasets Complexity Deployment EEGFormer T ransformer 2.3M TUAR / TUSL O ( C N 2 ) No LaBraM Transformer 5.9M TUAB O ( C 2 N 2 ) No LUNA Transformer 7M TU AB / TUAR / TUSL O ( C N 2 ) + O ( C N ) No FEMBA Mamba-based 7.8M TUAB / TUAR / TUSL O ( C N ) Y es C number of EEG channels N number of temporal patches I I I . M E T H O D S A. Datasets W e leveraged the T emple Univ ersity EEG Corpus (TUEG) [31] for pretraining, as it is one of the largest publicly a vailable clinical EEG repositories. The corpus contains ov er 21 , 600 hours of record- ings from more than 14 , 000 patients. The TUEG dataset includes sev eral labeled subsets designed for specific diagnostic tasks. The TU AB subset provides recordings labeled as normal or abnormal (binary classification) for 2 , 329 subjects. The TU AR comprises data from 213 subjects and, following prior work [19], we treat it as a multiclass (single-label) classification task with fi ve classes corresponding to five artifact types. The TUSL includes recordings from 38 subjects for the detection and classification of slowing ev ents, seizures, complex backgrounds, and normal EEG activity [31] (multiclass classification). T ABLE II : Dataset Statistics Property TUEG TU AB TU AR TUSL Number of Subjects 14 , 987 2 , 329 213 38 Number of Channels 22 22 22 22 Sampling Rate 256 Hz 256 Hz 256 Hz 256 Hz Hours of Recordings 21 , 787 . 32 1 , 139 . 31 83 . 74 27 . 54 T raining Samples 13 , 236 , 000 591 , 357 49 , 241 16 , 088 V alidation Samples 489 , 600 154 , 938 5 , 870 1 , 203 T est Samples 489 , 600 74 , 010 5 , 179 2 , 540 B. Preprocessing W e applied a standard pre-processing pipeline to the raw EEG recordings. All signals were band-pass filtered between 1 Hz and 75 Hz, and a 60 Hz notch filter was used to remov e power line interference. Signals were then resampled to 256 Hz for consistency across recordings. After resampling, each raw signal was segmented into non-overlapping 5 s windows for training and evaluation. An initial analysis of the TUEG dataset showed that most signals (about 96%) were within the range of –20.16 µ V to 19.96 µ V , while the remaining recordings contained values with much higher mag- nitudes. T o preserve dataset integrity and ensure comparability with prior work using the full TUEG dataset, we retained all recordings, including those with extreme values. Given x , raw EEG signal, x norm corresponds to its normalized version, which is then provided as input to the model. T o reduce the influence of these artifacts during training, we applied quartile-based normalization [32], scaling each channel by its interquartile range (IQR): x norm = x − q lower ( q upper − q lower ) + 1 × 10 − 8 . where q lower and q upper denote the 25th and 75th percentiles (i.e., the lower and upper quartiles) of each channel’ s amplitude distribution, respectiv ely . The small constant 1 × 10 − 8 is added for numerical stability . C . Model Architecture W e build on the architecture introduced in our previous work [19]. W ith the goal of enabling model deployment on low-po wer MCUs, we adopt the FEMBA-T iny configuration for all the following experi- ments. The T iny v ariant consists of a 2D conv olutional tokenizer that projects the raw EEG input into an embedding space of dimension d model = 385 . The encoder is composed of two Bidirectional Mamba (Bi-Mamba) blocks, which process the sequence in both forward and backward directions to capture complex temporal de- pendencies. A residual connection is included within each Bi-Mamba block to support gradient flow during training. A ke y architectural nov elty is the introduction of an additional lightweight Transformer layer in the decoder . Specifically , we inte- grate this layer to enhance the model’ s contextual reasoning capabili- ties. This hybrid design leverages the structured state-space modeling of Mamba with the contextual reasoning ability of self-attention [33]. The decoder is used only during pretraining and is discarded in down- stream classification, preserving the linear computational complexity of the model during fine-tuning. In the classification stage, a simple linear classifier is employed, consisting of a single fully connected layer . This minimalistic task- specific output layer highlights the key role of the pretrained encoder . D . Pre-training Recent work on foundational EEG models has explored a range of pretraining strategies, showing promise for both mask ed- reconstruction approaches [17] and contrasti ve learning methods [15]. 4 IEEE TRANSACTIONS ON BIOMEDICAL ENGINEERING, VOL. XX, NO . XX, XXXX 2025 In this study , we aim to in vestigate whether one pretraining paradigm consistently yields stronger performance. T o this end, we e valuate four pretraining techniques: two based on contrastive learning and two based on masked reconstruction. W e pretrain FEMBA-T iny on the TUEG dataset (see Sect. III-A). All subjects and recordings contained in the downstream datasets (TU AB, TU AR, and TUSL) were excluded from the pretraining to ensure a fair assessment of the model’ s generalization capabilities. 1) Masked Reconstr uction Approaches : In these setups, the input signal is divided into 80 non-overlapping patches of size 16 . A random subset of these patches is replaced with a fixed mask token using a masking ratio between 0 . 5 and 0 . 6 , consistent with prior work [16]. The model is trained to reconstruct the original signal ˆ x = f ( x m ) by minimizing the Smooth L1 loss [34]: SmoothL1 ( ˆ x, x ) = ( 0 . 5 ( x − ˆ x ) 2 /β , if | x − ˆ x | < β , | x − ˆ x | − 0 . 5 β , otherwise. (1) W e compute the loss o ver all patches, weighting unmask ed patches by 0 . 1 to maintain consistent representations. W e e valuate two specific reconstruction targets: a) Low-pass Filtering : While high-frequenc y components (e.g., HFOs) contain relevant biomarkers, they are frequently contaminated by muscle artifacts (Electromyography (EMG)) in scalp EEG [9]. For robust ambulatory monitoring, we prioritize low-frequenc y morphol- ogy (0.5–40 Hz) [35]. W e apply a 2 nd -order biquad low-pass filter (40 Hz cutoff) to the targ et signal only . This introduces an implicit denoising objective, encouraging the model to recov er meaningful neural activity while ignoring the high-frequency noise. b) Clustered Random P atches : T o increase reconstruction task difficulty , we employ a clustered masking strategy . Instead of inde- pendent random masking, we group masked regions into contiguous segments, maintaining the 0 . 5 to 0 . 6 ratio. This prevents the model from relying on local interpolation and forces it to learn longer -range relations, temporal structure, and cross-channel dependencies. 2) Contrastiv e Learning Approaches : W e also evaluate con- trastiv e learning, which aims to learn discriminative representations by pulling together positive pairs (augmented views of the same signal) and pushing away negati ve pairs [36]. W e maximize the similarity between views using the standard InfoNCE loss [37]: L InfoNCE = − log exp( sim ( z i , z + i ) /τ ) P N j =1 exp( sim ( z i , z j ) /τ ) , (2) where z i and z + i are embeddings of two vie ws of the same signal, and τ is the temperature parameter . W e explore two view-generation strategies: a) F requency-domain Augmentations : Follo wing Rommel et al. [38], we make views using three complementary transformations which simulate inter-subject v ariability and sensor noise: (1) FT Sur- r ogate [39], which randomizes phase while preserving the magnitude spectrum; (2) F r equency Shift [40], which shifts spectral components via the Hilbert transform; and (3) additive Gaussian noise. b) Masking-based Augmentations : Inspired by self-supervised audio learning [41], we generate positiv e pairs by applying two non- ov erlapping binary masks to the same input signal. Unlike recon- struction, which focuses on wa veform details, this objective forces the model to identify latent neural patterns that are semantically consistent across different temporal views of the recording. E. Fine-tuning Methodology W e ev aluated FEMBA-Tiny on EEG-based classification tasks, specifically three do wnstream tasks: epilepsy detection (TU AB), slowing event detection (TUSL), and artifact detection (TUAR). W e maintained the same downstream tasks as in our prior work [19], as they provide a broad spectrum of classification scenar- ios, including both binary and multi-class settings. Moreov er, these tasks span dif ferent application domains, from clinical diagnostics to artifact identification, requiring the model to adapt to diverse signal characteristics. For TU AB, we preserved the standard train- test partition pro vided with the dataset. F or TUSL and TUAR, which lack predefined subject-le vel splits, we followed the ev aluation protocol established by recent state-of-the-art methods, including EEGFormer [42], applying a randomized 80%/10%/10% division at the sample lev el for training, validation, and testing to ensure fair and direct comparison with existing benchmark results. For TU AR specifically , we formulated the problem as a 5-class single- label classification task, focusing on five distinct artifact categories, consistent with prior work. For the classifier architecture, we remov ed the decoder and re- placed it with a lightweight linear classification head. Thanks to the improvements introduced during pretraining, we were able to eliminate the additional Mamba block used in the pre vious setup. This change reduced the number of parameters in the classification head from approximately 0.7 million to just a few thousand, significantly simplifying the fine-tuning process. For the TUAB dataset, which is relatively balanced, we adopted the standard cross-entropy loss, as it consistently yields stable and robust training performance. Con versely , for the imbalanced TU AR and TUSL datasets, we employed the Focal Loss [43] to mitigate class imbalance. The loss function is defined as: FL( p t ) = − α t (1 − p t ) γ log( p t ) (3) where p t denotes the predicted probability of the correct class, α t is a class-balancing factor , and γ is a focusing parameter . Focal Loss reduces the impact of well-classified samples and emphasizes harder, minority-class examples, while rebalancing each class contribution by frequency . This approach proved particularly effecti ve for the TUSL dataset, which exhibits significant class imbalance. W e performed fine-tuning using the AdamW optimizer with a batch size of 256 over 50 epochs. The learning rate was set to 5 e- 4 with a layer-wise learning rate decay of 0.7. Additionally , we applied a weight decay of 0.05 and a gradient clipping threshold of 1.0, while dropout was set to 0.0. F . Quantization T o quantize FEMB A-Tiny , we utilized Br evitas , a PyT orch- based library for neural network quantization that supports both Post-T raining Quantization (PTQ) and Quantization A ware T raining (QA T) [44]. These represent the two general approaches to quantiza- tion, depending on when quantization is applied. PTQ is performed after training, while QA T is conducted during training [45]. PTQ is the simplest approach for quantizing a pre-trained model. It typically relies on basic assumptions and statistics to perform the quantization. This process can be improv ed through calibration , which in Brevitas is implemented using the calibration mode and bias correction mode functions. These allow collecting activ ation statistics in floating point with quantization temporarily disabled, and then re-enabling quantization with properly initialized scales, followed by optional bias correction. On the other hand, QA T is the most complex yet effectiv e quanti- zation method, as it simulates quantization during training, allo wing the model to adapt to quantization effects and reduce accuracy degradation. In our ev aluation of FEMB A-T iny , we quantized both weights and activ ations down to 2-bit precision. W e use per-channel unif orm TEGON et al. : FEMBA 5 quantization for the weights. F or each output channel c , a separate scale factor s ( c ) w is learned. The quantization process is given by: q ( c ) w = round w ( c ) s ( c ) w ! , ˆ w ( c ) = s ( c ) w · q ( c ) w , where w ( c ) denotes the original weights in channel c , s ( c ) w is the per-channel floating-point scale, q ( c ) w is the quantized integer representation, and ˆ w ( c ) is the reconstructed (dequantized) weight. For activ ations, instead of using a floating-point scale, we opted for a more hardware-friendly fixed-point quantization by constraining the scale to be a power -of-two value: s a = 2 − n , q a = round a s a , ˆ a = s a · q a , where a is the original acti vation value, s a is the power-of-tw o scale, q a is the quantized activ ation, and ˆ a is the reconstructed activ ation. This design choice enables efficient implementation on hardware accelerators by replacing multiplication with bit-shifting operations. G. Embedded deployment W e deploy the quantized FEMBA-T iny model on the GAP9 RISC- V MCU [46] to demonstrate for the first time the feasibility of running Mamba-based foundation models on extreme edge devices. 1) Hardware and Memory Hierarch y : The GAP9 features a cluster of 9 RISC-V cores (8 workers, 1 orchestrator) with a hierar- chical memory architecture: 128 kB L1 scratchpad, 1.5 MB shared L2, and off-chip L3 HyperRAM [47]. W e utilize the XpulpV2 ISA extensions, including a 4-way INT8 SIMD dot-product and hardware loops, to accelerate compute-intensi ve operations. T o handle weights exceeding on-chip capacity , we implemented a hierarchical double-buffered streaming strategy . Large weight matrices are partitioned into ≈ 80 kB chunks and streamed L3 → L2 via DMA. Simultaneously , the cluster orchestrator manages L2 → L1 tiling, prefetching weights into L1 double-buf fers while worker cores compute on the current tile. This hides memory latency and enables deployment of models limited only by external storage size. 2) Hybrid Quantization and Kernels : W e employ a h ybrid quantization strategy tailored to the numerical requirements of SSMs. W e de veloped a custom toolchain to export parameters directly from Brevitas to optimized C kernels. a) Linear Projections (INT8) : The input/output projections and gate generations dominate the parameter count. W e quantize these to INT8 and parallelize execution across the 8 worker cores. The kernels use an output stationary dataflow with 4 × 4 loop unrolling (over outputs and timesteps) to maximize register reuse. The innermost loop utilizes SIMD instructions to perform four MA Cs per cycle, accumulating into an INT32 to prev ent ov erflow . b) Selective SSM Scan (Q15) : The recurrent scan h t = ¯ A · h t − 1 + ¯ B · x t is sensitiv e to quantization noise. W e implement this operation using Q15 fixed-point arithmetic (15-bit fractional precision). Unlike the linear layers, the scan contains sequential temporal dependencies, preventing time-dimension parallelization. Instead, we adopt a channel-parallel strategy : the d inner = 1540 channels are distributed across cores, with each core processing its subset sequentially over time. T o also avoid expensiv e runtime floating-point exponentials, the discretizations parameters ( ¯ A, ¯ B ) and SiLU activ ations are precomputed using Lookup T ables (LUTs). 3) Deployment T oolchain : W e developed a custom code genera- tion pipeline to deploy FEMBA-T iny on GAP9. The pipeline extracts quantized weights and activ ation scales directly from the Brevitas- trained PyT orch model [44], bypassing ONNX to maintain precise control ov er quantization parameters. A template-based C code gen- erator produces layer-specific kernels with tiling configurations opti- mized for GAP9’ s memory hierarchy . The Mamba-specific operators (selectiv e scan, gating, bidirectional combination) are implemented as custom kernels integrated into the GAP SDK build system. This approach enables bit-exact reproducibility between the Python reference implementation and the embedded deployment [48]. I V . R E S U LT S − 40 − 20 0 20 40 − 40 − 20 0 20 40 t-SNE Component 1 t-SNE Component 2 − 60 − 40 − 20 0 20 40 60 80 − 60 − 40 − 20 0 20 40 t-SNE Component 1 t-SNE Component 2 • Normal • Che wing • Electrode Artifact • Eye Mov ement • Muscle Mov ement • Shiv ering Fig. 1 : t-SNE visualization of embedding spaces on downstream tasks. Left : Embeddings generated using the earlier training pipeline show lower class separability . Right : Embeddings obtained with the updated pipeline yield tighter , more distinct clusters for artifact classes (e.g., muscle movement), illustrating an improvement in the learned representation quality . A. Pre-training P erf or mance- Compar isons T o identify the optimal pre-training objective, we compared the four proposed strategies across the TU AB, TUAR, and TUSL datasets, as summarized in T able III. For the smaller TU AR and TUSL datasets, the dif ferences between pre-training strategies are modest and fall within overlapping confi- dence intervals, precluding claims of a superior method. In contrast, the larger TUAB dataset rev eals distinct performance hierarchies. The Reconstruction–Random with LowP ass strategy emerges as the superior approach, outperforming all alternativ es across ev ery metric. Most notably , it achiev es a > 3.5% impro vement in A UROC compared to the Contrastive–F r equency baseline and maintains a ∼ 2% lead in A UPR against all other methods. In terms of accuracy , it remains competitiv e with the Clustered Random v ariant while surpassing the contrasti ve approaches by margins of 1–3%. Having established Reconstruction–Random with LowP ass as the most ef fective pretraining strategy for FEMBA, we also compared the resulting encoder with the previous version of the model [19]. Since the decoder is discarded during fine-tuning, the downstream performance depends entirely on the quality of the learned repre- sentations. As illustrated qualitativ ely in Fig. 1, the new pipeline produces embeddings that form more coherent class-specific clusters (e.g., distinguishing muscle artifacts from eye mov ements), indicating a substantial improvement in representation quality . B. Fine-tuning P erf or mance Giv en the considerations discussed in Section IV -A, we adopt the Reconstruction–Random with Lo wPass configuration as the new 6 IEEE TRANSACTIONS ON BIOMEDICAL ENGINEERING, VOL. XX, NO . XX, XXXX 2025 T ABLE III : Comparison of Fine-tuning Results Across TUAR, TUSL, and TUAB Datasets for Different Pre-training Strategies SSL T ask TU AR TUSL TU AB A UROC A UPRC A UROC A UPRC Accuracy (%) A UROC A UPRC Rec. – Random Masking 0 . 912 ± 0 . 002 0 . 532 ± 0 . 011 0 . 699 ± 0 . 028 0 . 281 ± 0 . 019 80 . 38 ± 0 . 08 0 . 8762 ± 0 . 0012 0 . 8773 ± 0 . 0011 Rec. – Clustered Random 0 . 917 ± 0 . 004 0 . 547 ± 0 . 027 0 . 712 ± 0 . 023 0 . 281 ± 0 . 019 81 . 38 ± 0 . 09 0 . 8663 ± 0 . 001 0 . 8654 ± 0 . 0011 Rec. – Random w/ Lowpass 0 . 916 ± 0 . 008 0 . 533 ± 0 . 024 0 . 750 ± 0 . 035 0 . 294 ± 0 . 009 81 . 95 ± 0 . 09 0 . 8930 ± 0 . 0007 0 . 8976 ± 0 . 0018 Contrastiv e – Frequency 0 . 921 ± 0 . 007 0 . 534 ± 0 . 023 0 . 751 ± 0 . 020 0 . 291 ± 0 . 014 78 . 63 ± 0 . 09 0 . 8633 ± 0 . 0007 0 . 8615 ± 0 . 0015 Contrastiv e – Masking 0 . 915 ± 0 . 012 0 . 533 ± 0 . 014 0 . 695 ± 0 . 020 0 . 272 ± 0 . 004 80 . 69 ± 0 . 07 0 . 8732 ± 0 . 0006 0 . 8691 ± 0 . 0007 pretraining strategy for all downstream tasks. In T ables IV and V, we report results for all do wnstream ev aluations and compare them with the smallest a vailable architecture of the original FEMBA model for each respectiv e dataset, as well as to recent state-of-the-art (SoA) EEG foundation models. Since the new FEMBA architecture is fine- tuned using the smallest possible classifier (a linear head), whereas the original FEMBA required a heavy Mamba classifier , we ensure fairness by comparing the new FEMBA model against the original pretrained FEMB A encoder fine-tuned with both a Mamba classifier and a linear classifier . 1) TU AB dataset : Compared to the smallest model previously used (FEMB A-Base [19]), the updated FEMB A demonstrates im- prov ed performance when ev aluated against both variants of the original model, i.e., the version fine-tuned with the Mamba classifier and the version fine-tuned with a linear classifier . W ith respect to the Mamba-classifier baseline, we observe slightly higher accuracy (around 1%) and A UPR (around 1%), while obtain- ing similar A UROC values. When comparing against the Linear- classifier version of the original model, gains are more substantial: approximately 2% in accuracy , 4% in A UROC, and up to 5% in A UPR, despite having roughly 6 × fe wer parameters. Overall, the ne w FEMB A ranks among the strongest models on the benchmark. It is analogous to LaBraM-Base [16], per- forming marginally better in accuracy (around a 0.5% improve- ment) while sho wing minimally worse A UR OC and A UPR (around 0.5%). Compared to much larger models such as LaBraM-Huge and CBraMod [17], the accuracy drop remains modest (only 0.5– 0.6%), despite their massi vely larger parameter counts, highlighting the efficienc y of the proposed 7.8M-parameter architecture. Notably , this improved performance is achie ved with only 1.3G FLOPs, up to 6 × fe wer than FEMB A Base and 27 × fewer than LaBraM-Base. 2) TU AR dataset : Among the previous FEMBA variants, the smallest directly comparable model is FEMB A-Tin y . When compar- ing the updated FEMB A to the original Tin y model fine-tuned with the Mamba-classifier , the two models exhibit equi valent A UROC performance, as their confidence intervals nearly ov erlap, while the updated version achieves a modest improvement in A UPR (about 2% on average). Against the T iny model fine-tuned with a Linear- classifier , the updated FEMBA shows clearer gains: A UR OC im- prov es by roughly 2–3%, and A UPR by nearly 6%, despite operating at essentially the same parameter scale. In comparison to state-of-the- art models, the updated FEMBA achieves competitiv e performance. Compared to the much larger LUN A-Huge [49] (SoA A UR OC), it achiev es slightly higher average A UPR (on the order of 0.5%), while exhibiting A UR OC confidence intervals that closely overlap, thus reaching comparable results with approximately 40 × fe wer param- eters. When compared to the larger FEMBA-Base (SoA A UC-PR), the updated model reaches similar A UR OC values while sho wing lower A UPR (around 2–3%). Overall, these results demonstrate that the updated FEMBA achiev es a fa vorable trade-off between model size and performance across both metrics. 3) TUSL dataset : On the TUSL dataset, as sho wn in T able IV, the updated FEMBA demonstrates consistent improvements over previous models of comparable size. Relati ve to the original Tin y model (the smallest version of the previous FEMBA) equipped with the Mamba-classifier , A UR OC and A UPR increase by approximately 4% and 1.5%, respectiv ely . A comparison with the Linear -classifier variant re veals even lar ger gains in A UR OC, around 5%, while the improv ement in A UPR remains similar to the previous case. When benchmarked against leading models, the updated FEMB A maintains a competitive standing. Although LUNA-Huge (SO T A A UR OC) surpasses it by roughly 5–6% in A UR OC, their A UPR scores are nearly indistinguishable, with overlapping confidence intervals, de- spite FEMB A using approximately 40 × fewer parameters. Compared to EEGFormer-Base (SOT A A UPR), the updated model attains a higher A UROC ( ≈ 3–4%), but lags in A UPR by approximately 10%. T ABLE IV : Comparison of model performance on TU AR and TUSL datasets. Model Size TU AR TUSL A UROC ↑ A UC-PR ↑ AUR OC ↑ AUC-PR ↑ Supervised Models EEGNet [21] - 0 . 752 ± 0 . 006 0 . 433 ± 0 . 025 0 . 635 ± 0 . 015 0 . 351 ± 0 . 006 EEG-GNN [50] - 0 . 837 ± 0 . 022 0 . 488 ± 0 . 015 0 . 721 ± 0 . 009 0 . 381 ± 0 . 004 GraphS4mer [51] - 0 . 833 ± 0 . 006 0 . 461 ± 0 . 024 0 . 632 ± 0 . 017 0 . 359 ± 0 . 001 Self-supervised Models BrainBER T [26] 43.2M 0 . 753 ± 0 . 012 0 . 350 ± 0 . 014 0 . 588 ± 0 . 013 0 . 352 ± 0 . 003 EEGFormer-Base [42] 2.3M 0 . 847 ± 0 . 014 0 . 483 ± 0 . 026 0 . 713 ± 0 . 010 0 . 393 ± 0 . 003 EEGFormer-Lar ge [42] 3.2M 0 . 852 ± 0 . 004 0 . 483 ± 0 . 014 0 . 679 ± 0 . 013 0 . 389 ± 0 . 003 FEMBA-Base [19] 47.7M 0 . 900 ± 0 . 010 0 . 559 ± 0 . 002 0 . 731 ± 0 . 012 0 . 289 ± 0 . 009 FEMBA-Lar ge [19] 77.8M 0 . 915 ± 0 . 003 0 . 521 ± 0 . 001 0 . 714 ± 0 . 007 0 . 282 ± 0 . 010 LUNA-Base [49] 7M 0 . 902 ± 0 . 011 0 . 495 ± 0 . 010 0 . 767 ± 0 . 023 0 . 301 ± 0 . 003 LUNA-Huge 311.4M 0 . 921 ± 0 . 011 0 . 528 ± 0 . 012 0 . 802 ± 0 . 005 0 . 289 ± 0 . 008 FEMBA old - Mamba classifier [19] 8.5M ∗ 0 . 918 ± 0 . 003 0 . 518 ± 0 . 002 0 . 708 ± 0 . 005 0 . 277 ± 0 . 007 FEMBA old - Linear classifier 7.8M ∗ 0 . 893 ± 0 . 021 0 . 475 ± 0 . 025 0 . 688 ± 0 . 030 0 . 272 ± 0 . 007 FEMBA new - Linear classifier 7.8M ∗ 0 . 916 ± 0 . 008 0 . 533 ± 0 . 024 0 . 750 ± 0 . 035 0 . 294 ± 0 . 009 ∗ Model size including the classification head † Bold indicates state-of-the-art models under 10M parameters. T ABLE V : Performance comparison on TUAB abnormal EEG detec- tion. Model Size Bal. Acc. (%) ↑ A UC-PR ↑ AUR OC ↑ Supervised Models SPaRCNet [52] 0.8M 78.96 ± 0.18 0.8414 ± 0.0018 0.8676 ± 0.0012 ContraWR [53] 1.6M 77.46 ± 0.41 0.8421 ± 0.0140 0.8456 ± 0.0074 CNN-Transformer [54] 3.2M 77.77 ± 0.22 0.8433 ± 0.0039 0.8461 ± 0.0013 FFCL [55] 2.4M 78.48 ± 0.38 0.8448 ± 0.0065 0.8569 ± 0.0051 ST -Transformer [56] 3.2M 79.66 ± 0.23 0.8521 ± 0.0026 0.8707 ± 0.0019 Self-supervised Models BENDR [15] 0.39M 76.96 ± 3.98 - 0.8397 ± 0.0344 BrainBER T [26] 43.2M - 0.8460 ± 0.0030 0.8530 ± 0.0020 EEGFormer-Base [42] 2.3M - 0.8670 ± 0.0020 0.8670 ± 0.0030 BIOT [57] 3.2M 79.59 ± 0.57 0.8692 ± 0.0023 0.8815 ± 0.0043 EEG2Rep [58] - 80.52 ± 2.22 - 0.8843 ± 0.0309 CEREbRO [59] 85.15M 81.67 ± 0.23 0.9049 ± 0.0026 0.8916 ± 0.0038 LaBraM-Base [16] 5.9M 81.40 ± 0.19 0 . 8965 ± 0 . 0016 0 . 9022 ± 0 . 0009 LaBraM-Huge [16] 369.8M 82.58 ± 0.11 0.9204 ± 0.0011 0.9162 ± 0.0016 CBraMod [17] 69.3M 82.49 ± 0.25 0.9221 ± 0.0015 0.9156 ± 0.0017 FEMBA old - Mamba classifier [19] 48.3M ∗ 81.05 ± 0.14 0.8894 ± 0.0050 0.8829 ± 0.0021 FEMBA old - Linear classifier 47.6M ∗ 79.75 ± 0.15 0.8511 ± 0.0011 0.8456 ± 0.0011 FEMBA new - Linear classifier 7.8M ∗ 81 . 95 ± 0 . 09 0.8930 ± 0.0007 0.8976 ± 0.0018 ∗ Model size including the classification head † Bold indicates state-of-the-art models under 10M parameters. TEGON et al. : FEMBA 7 C . Quantization Analysis W e focus our quantization analysis on TUAB, being the most extensi vely explored dataset in the literature [60]. TUAB also offers a controlled ev aluation setting thanks to its balanced binary classi- fication task, which allows us to isolate the effects of quantization more reliably . T able VI summarizes the quantization results. W e first ev aluated the behavior of the model quantizing only the weights to 8-bit, 4-bit, and 2-bit. The 8-bit as well as 4-bit quantization resulted in negligible performance degradation (0.1% A UR OC for 8-bit and 0.1% for both A UR OC and A UPR for 4- bit). Howe ver , reducing to 2-bit led to a substantial degradation in accuracy , A UROC and A UPR. Quantizing activ ations, ev en at 8-bit precision, yielded an accuracy drop of approximately 30%, resulting in performance comparable to random guessing (accuracy ≈ 0.5). W e extensiv ely explored PTQ calibration pipelines in an attempt to mitigate this degradation. Following the procedure detailed in Section III-F, we performed activ ation calibration in floating point with quantization temporarily disabled, using a dedicated calibration split constructed to be class-balanced and representative of both seizure and non-seizure windows. W e experimented with calibration sets ranging from approximately 5% to 20% of the TUAB training windows, confirming that neither increasing the calibration set size nor enforcing class balance produced any measurable improvement. After collecting activ ation statistics, we re-enabled quantization with the calibrated scales and applied bias correction, yet performance remained essentially unchanged. This persistent failure of PTQ aligns with recent analyses of Mamba and other state-space models, which report that these ar- chitectures naturally generate large activ ation outliers, particularly in gate and output projections and their associated matrix multipli- cations. The parallel scan operation further amplifies these effects, resulting in heavy-tailed activation distributions that are inherently difficult to capture with low-precision quantization [30]. Giv en the relativ ely small model size, we were able to apply QA T. After a few epochs, QA T recovered the original A UR OC, A UPR, and accuracy for weight-only 8-bit and for weight 4-bit with 8-bit acti vations. Even in the 2-bit weight and 8-bit acti vation setting, despite the significant drop with PTQ in A UROC and A UPR, QA T ef fectively restored per- formance, reaching results comparable to those obtained for weight 8-bit or weight 4-bit. Howev er, when quantizing activ ations to 4-bit, QA T was not sufficient to recov er performance. T ABLE VI : Quantization Results: Comparison of PTQ and QA T Performance Configuration Method A UROC AUPR Accuracy FP32 (Baseline) – 0.89 0.89 81.84 W8A8 PTQ 0.77 0.71 55.91 QA T 0.88 0.88 81.02 W4A8 PTQ 0.71 0.67 55.79 QA T 0.88 0.88 80.88 W2A8 PTQ 0.56 0.49 54.12 QA T 0.88 0.88 80.61 W4A4 PTQ 0.68 0.63 54.95 QA T 0.69 0.68 65.40 W2A4 PTQ 0.54 0.54 48.38 QA T 0.55 0.55 49.20 Only W eight 8 PTQ 0.88 0.89 81.61 Only W eight 4 PTQ 0.88 0.88 80.86 Only W eight 2 PTQ 0.54 0.48 54.71 In the remainder of this work we therefore focus on a QA T -trained FEMBA-T iny model with a W2A8 configuration for the encoder weights and acti vations, combined with a small number of higher- precision accumulators where required for numerical stability . On GAP9, this scheme primarily reduces the model’ s memory footprint, from 7.8 MB for a uniform INT8 implementation to approximately 2 MB, while leaving the runtime dominated by the sequential SSM scan. W e use the corresponding W8A8 model as our on-device INT8 baseline. In other words, quantization is crucial to make FEMB A- T iny fit within the L3/L2 memory b udgets of the device and to enable efficient streaming, whereas further reductions in latency will require architectural changes to the SSM itself rather than more aggressive bitwidth scaling. In the next subsection, we deploy both the W8A8 and W2A8 FEMBA-T iny models on GAP9 and characterize their end-to-end latency , energy , and cycle breakdown. D . Model deplo yment on a low-power microcontroller W e no w e valuate the QA T -trained FEMBA-T iny models on a GAP9-based ultra-low-po wer MCU platform. In particular , we com- pare the uniform INT8 (W8A8) baseline against the 2-bit weight variant (W2A8) selected in the quantization analysis and quantify the impact of 2-bit weights on latency , energy , and memory footprint. As discussed abo ve, both models share the same network architecture and differ only in the numerical representation of the encoder weights and the associated packing/unpacking logic; all activations remain 8-bit on device. 1) Experimental Setup : W e ev aluated FEMBA-T iny on a GAP9 dev elopment board [9], [46] with the compute cluster operating at 370 MHz. Performance measurements use the GAP9 hardware performance counters to obtain cycle-accurate timing and instruction counts. Each inference processes a 5 s input window with 22 channels and 1 , 280 timesteps (256 Hz sampling), represented as a tensor of shape (22 , 1280) , with embedding dimension d model = 385 . All reported metrics are av eraged ov er 10 runs with identical inputs to ensure measurement stability . MA Cs/cycle v alues refer to dense- equiv alent INT8 multiply-accumulate operations. T able VII summarizes the end-to-end execution metrics for the standard INT8 implementation and the 2-bit weight quantization variant. 2) Latency and Efficiency : The INT8 deployment achiev es an inference latency of 1.70 seconds for a 5-second input windo w (629.4 million cycles), which in turn yields a real-time slack factor of approximately 3x. At the measured average power of 44.1 mW , this corresponds to an energy cost of 75 mJ per 5 s inference window (1.70 s of acti ve compute). At the TUSL sampling rate this corresponds to roughly 3 × faster-than-real-time processing, leaving ample slack for on-device pre- and post-processing. Our double- buf fered streaming architecture successfully hides nearly all memory transfer latency , achieving 99.4–100% overlap between computation and L3 → L2 DMA transfers in the Mamba blocks. T o put these numbers into a more practical perspectiv e, for a typical 300 mAh, 3.7 V wearable battery (around 4.0 kJ of stored energy), this energy cost would allo w on the order of 5 . 3 × 10 4 such 5 s inference windows, corresponding to roughly 3 days of contin- uous operation for FEMBA inference alone, ignoring the additional ov erhead of sensing, storage, and wireless communication. While a full device-le vel po wer budget is beyond the scope of this work, these estimates suggest that foundation-scale EEG encoders can be inte grated into wear able neuro-monitoring systems without violating typical battery and form-factor constraints. As T able VIII shows, the two bidirectional Mamba blocks account for 98.3% of total cycles, while other layers, patch embedding, positional encoding, global pooling, and classification contribute neg- ligibly to latency . This concentration of runtime in the Mamba blocks confirms that they are the primary target for further optimization. 3) Sub-operation Analysis and Efficiency : T o localize bot- tlenecks within the Mamba blocks, we instrumented each sub- 8 IEEE TRANSACTIONS ON BIOMEDICAL ENGINEERING, VOL. XX, NO . XX, XXXX 2025 operation. T able IX reports the breakdown for the first Mamba block ( mamba blocks.0 ). a) Memory Bandwidth Saturation: Our hierarchical memory management strategy prov es highly effecti ve for the compute- intensiv e layers. The Input and Output Projections achieve high com- putational density , reaching 2.65 and 3.91 MACs/c ycle, respectiv ely . This performance confirms that our multi-level double-buf fered tiling successfully eliminates memory bandwidth bottlenecks: despite the Input Projection requiring the streaming of 1.13 MB of weights from off-chip L3 memory , the DMA/Compute overlap remains > 99%, ensuring that the cores are nev er stalled waiting for data. b) Shift fr om MACs/Cycle to IPC: In contrast, the Selectiv e SSM Scan dominates execution time (64.6% of cycles) while con- tributing only a small fraction of the total MA Cs. This discrepancy highlights a critical distinction for deploying State Space Models: unlike CNNs or T ransformers, where performance scales with arith- metic throughput (MA Cs/cycle), efficiency in Mamba-based models is defined by Instructions per Cycle (IPC) T o validate this, we analyzed hardware performance counters. The SSM scan achiev es a high IPC of 1.36 , indicating that the GAP9 dual- issue pipeline is fully utilized. Howe ver , the recurrence inherently requires approximately 4.3 supporting instructions (pointer arith- metic, LUT -based parameter discretization, and state management) per MAC operation. Therefore, low MA C utilization in the SSM scan is not a sign of inefficienc y , but a characteristic of the algorithm. W e conclude that future hardware-software optimization for SSMs must piv ot away from maximizing MA C density and instead to ward handling complex, scalar instruction streams at high IPC. 4) Impact of 2-bit Quantization : W e compared the INT8 baseline against 2-bit weight quantization (T able VII). While 2-bit quantiza- tion reduces the model storage by 74% ( ≈ 2 MB vs. 7.8 MB), end-to- end latency remains nearly unchanged (1.69 s vs. 1.70 s). The 2-bit weights are packed four values per byte using a ternary encoding ( {− 1 , 0 , +1 } 7→ { 0 , 1 , 2 } ), yielding 16 weights per 32- bit word. During inference, weights are unpacked on the fly within the dot-product loop: each iteration extracts four 2-bit values via shift-and-mask operations, maps them back to {− 1 , 0 , +1 } as INT8, and feeds them to the same SIMD dot-product instructions used by the INT8 kernel. This adds roughly eight simple ALU operations per eight weights, which is negligible compared to the memory- access and accumulation costs, explaining why latency is nearly unchanged despite the 4 × reduction in weight memory traf fic [48], [61]. This behavior is consistent with prior work on PULP-class MCUs, where sub-byte quantization mainly improv es model size and energy efficienc y , while 8-bit SIMD remains throughput-optimal in the absence of dedicated low-bit instructions [48], [61]. These results confirm that FEMB A-Tiny is compute-bound rather than memory-bound on GAP9. The 2-bit v ariant is therefore primarily beneficial for reducing e xternal-memory energy and storage footprint on resource-constrained wearables. 5) Accuracy : The embedded implementation achiev es bit-exact consistency with the Python simulation. W e validated the output of ev ery layer, confirming a bit-to-bit exact implementation for both the INT8 and 2-bit configurations. V . D I S C U S S I O N A. Physiological A wareness in F oundation Models Our results demonstrate that the Reconstruction–Random with LowP ass strategy significantly outperforms standard masking and contrastiv e approaches. W e hypothesize that this objective functions as a domain-specific regularizer . Standard masked modeling forces the network to allocate capacity to reconstructing high-frequency T ABLE VII : FEMBA-T iny Performance on GAP9 @ 370 MHz Metric INT8 (W8A8) 2-bit (W2A8) T otal Cycles 629.4 M 625.9 M Inference Time 1.70 s 1.69 s Compute Utilization 98.6% 98.7% Idle/Overhead 0.5% 0.5% DMA/Compute Overlap 99.4% 99.5% T ABLE VIII : Layer-by-Layer Cycle Breakdown Layer Cycles (M) % T otal Overlap patch embed 8.0 1.3% 81.1% pos embed 2.3 0.4% 100.0% mamba blocks.0 310.3 49.3% 100.0% mamba blocks.1 308.2 49.0% 99.4% global pool 0.5 0.1% 100.0% classifier < 0.1 < 0.01% 41.9% T otal 629.4 100% — components, which in scalp EEG are often dominated by electromyo- graphic (EMG) artif acts and en vironmental noise [62]. By filtering the reconstruction target, we explicitly direct the model’ s attention toward the delta–beta bands ( 0 . 5 – 30 Hz), which contain the majority of clinically rele vant biomarkers for seizure and artifact detection. This suggests that for biomedical time-series, ”faithful” reconstruction of the raw signal is suboptimal; instead, reconstruction targets should be aligned with the physiological bandwidth of interest. B. The Quantization-Efficiency T rade-off The successful compression of FEMBA to 2-bit weights (W2A8) without performance collapse is a key finding. Prior work on Mamba has highlighted the difficulty of PTQ due to activ ation outliers in the selective scan [30]. Our experiments confirm this: PTQ failed catastrophically (A UR OC ≈ 0 . 5 ). Howev er , QA T allo wed the model to adapt its weights to the lo w-precision regime. Interestingly , while 2-bit quantization reduced the memory footprint by 74% (enabling deployment on the 1.5MB L2 memory of GAP9), it did not sig- nificantly reduce latency . This confirms that our implementation is compute-bound , not memory-bound. The implications are two-fold: (1) aggressive quantization is essential for storage on MCUs, but (2) accelerating inference requires dedicated hardware support for the SSM scan operations, rather than just memory bandwidth reduction. C . Limitations and Future W or k While FEMBA demonstrates robust performance across several adult EEG datasets, important limitations exist. First, our pre-training and ev aluation are confined to the TUEG, TUAB, TU AR, and TUSL corpora, which primarily comprise clinical adult recordings from related acquisition pipelines. Generalization to substantially different domains—such as neonatal EEG with burst–suppression patterns, high-density research montages, consumer headsets with few dry electrodes, or home-based long-term monitoring—remains unestab- lished. Extending the pre-training to more diverse data sources and explicitly quantifying out-of-distribution robustness will be crucial. Second, the current deployment operates on fixed, non-overlapping 5s windows and performs of fline classification. Many clinical and consumer applications, ho wever , require streaming or e vent-triggered processing with strict low-latency constraints. Adapting FEMBA to a fully streaming inference regime—e.g., by exploiting causal variants of the state-space recurrence and reusing internal states across windows—could reduce latency and energy further , but may require retraining and task-specific calibration. TEGON et al. : FEMBA 9 T ABLE IX : Sub-operation Breakdo wn for mamba blocks.0 Operation Cycles (M) % MA Cs (M) MA Cs/Cyc Input Projection 71.7 23.2% 189.7 2.65 Sequence Re versal 1.6 0.5% — — Local T emporal Conv . 8.8 2.8% 1.0 0.11 Selectiv e SSM Scan 199.5 64.6% 63.1 0.32 Output Projection 24.3 7.9% 94.9 3.91 Sequence Re versal 1.0 0.3% — — Bidirectional Fusion 2.1 0.7% — — T otal 308.9 100% 348.7 1.13 Third, our quantization scheme is validated primarily on the FEMB A-Tin y architecture and TUAB-based downstream tasks. Al- though the W2A8 configuration with a small number of higher- precision accumulators proved sufficient in this setting, different hyperparameters, model scales, or target MCUs may exhibit different sensitivity to quantization noise. Systematically exploring mixed- precision assignments and automatically tuning quantization param- eters for new hardware platforms is, therefore, an open direction. Fourth, this study focuses on algorithmic and embedded feasi- bility rather than clinical workflow integration. All ev aluations are performed on retrospectiv e datasets; we do not assess prospective performance, user comfort, or the impact of FEMBA-based alerts on clinical decision-making. Future work must in vestigate how such models af fect false-alarm rates, time-to-detection, and interpretability in real-world monitoring scenarios, ideally with clinical partners. Finally , our hardware analysis highlights a fundamental architec- tural bottleneck: the selecti ve SSM scan achie ves only about 0.32 MA Cs/cycle on the GAP9 cluster due to its inherently sequential nature and limited instruction-lev el parallelism, whereas dense linear projections can reach up to 3.9 MA Cs/cycle. Recent formulations such as Mamba-2, which recast state-space recurrences into structured matrix multiplications, offer a promising path to replace this scan with matmul-friendly kernels that better exploit the GAP9 compute fabric. Given the strong efficiency of our linear layers, integrating such “matmul-friendly” SSM v ariants is a natural av enue to further accelerate edge inference in future versions of FEMBA. V I . C O N C L U S I O N This work bridges the gap between large-scale Foundation Models and the resource constraints of wearable biomedical devices. W e in- troduced FEMB A, a bidirectional Mamba architecture that le verages a nov el Physiologically-A ware pre-training strategy to prioritize the re- construction of neural oscillations over high-frequency artifacts. This approach yields superior generalization on diverse downstream tasks (TU AB, TUAR, TUSL) compared to standard masked modeling. Furthermore, we addressed the critical challenge of deploying State-Space Models on MCUs. By utilizing QA T, we overcame the activ ation outlier issue inherent to Mamba, successfully compressing the model to 2-bit weights with negligible performance degradation. The resulting deployment on a parallel RISC-V MCU (GAP9) achiev es deterministic real-time inference (1.70 s per window) with a 74% reduction in memory footprint. These results demonstrate that the linear complexity of SSMs, combined with quantization, makes them a viable alternati ve to T ransformer-based models for amb ulatory neuro-monitoring. By en- abling high-performance artifact and seizure detection directly at the edge, FEMBA pa ves the way for ener gy-efficient, long-term wearable health systems that do not rely on continuous cloud connecti vity . Future work will focus on architectural approximations to further parallelize the selecti ve scan mechanism, unlocking the full through- put potential of embedded parallel clusters. R E F E R E N C E S [1] T . F . Collura, “History and evolution of electroencephalographic instru- ments and techniques, ” Journal of clinical neur ophysiology , vol. 10, no. 4, pp. 476–504, 1993. [2] D. Petit, J.-F . Gagnon, M. L. Fantini, L. Ferini-Strambi, and J. Mont- plaisir , “Sleep and quantitativ e eeg in neurodegenerativ e disorders, ” Journal of psychosomatic resear ch , vol. 56, no. 5, pp. 487–496, 2004. [3] S. Noachtar and J. R ´ emi, “The role of ee g in epilepsy: a critical re view , ” Epilepsy & Behavior , vol. 15, no. 1, pp. 22–33, 2009. [4] S. Beniczky , S. W iebe, J. Jeppesen, W . O. T atum, M. Brazdil, Y . W ang, S. T . Herman, and P . Ryvlin, “ Automated seizure detection using wearable devices: a clinical practice guideline of the international league against epilepsy and the international federation of clinical neurophysi- ology , ” Clinical Neurophysiolo gy , v ol. 132, no. 5, pp. 1173–1184, 2021. [5] R. L. Acabchuk, M. A. Simon, S. Low , J. M. Brisson, and B. T . Johnson, “Measuring meditation progress with a consumer-grade eeg device: Caution from a randomized controlled trial, ” Mindfulness , vol. 12, no. 1, pp. 68–81, 2021. [6] L.-D. Liao, C.-Y . Chen, I.-J. W ang, S.-F . Chen, S.-Y . Li, B.-W . Chen, J.- Y . Chang, and C.-T . Lin, “Gaming control using a wearable and wireless eeg-based brain-computer interface device with novel dry foam-based sensors, ” Journal of neuroengineering and r ehabilitation , vol. 9, no. 1, p. 5, 2012. [7] A. Ahmadi, O. Dehzangi, and R. Jafari, “Brain-computer interface signal processing algorithms: A computational cost vs. accuracy analysis for wearable computers, ” in 2012 Ninth International Conference on W earable and Implantable Body Sensor Networks . IEEE, 2012, pp. 40–45. [8] D. Seok, S. Lee, M. Kim, J. Cho, and C. Kim, “Motion artifact removal techniques for wearable eeg and ppg sensor systems, ” F r ontiers in Electr onics , vol. 2, p. 685513, 2021. [9] T . M. Ingolfsson, S. Benatti, X. W ang, A. Bernini, P . Ducouret, P . Ryvlin, S. Beniczky , L. Benini, and A. Cossettini, “Minimizing artifact-induced false-alarms for seizure detection in wearable EEG devices with gradient-boosted tree classifiers, ” Sci Rep , vol. 14, no. 1, p. 2980, Feb . 2024. [10] F . Lotte, M. Congedo, A. L ´ ecuyer , F . Lamarche, and B. Arnaldi, “ A revie w of classification algorithms for ee g-based brain–computer interfaces, ” J ournal of neural engineering , vol. 4, no. 2, p. R1, 2007. [11] T . M. Ingolfsson, M. Hersche, X. W ang, N. Kobayashi, L. Cavigelli, and L. Benini, “EEG-TCNet: An accurate temporal con volutional network for embedded motor -imagery brain–machine interfaces, ” in 2020 IEEE International Conference on Systems, Man, and Cybernetics (SMC) , 2020, pp. 2958–2965. [12] R. Zanetti, A. Arza, A. Aminifar , and D. Atienza, “Real-time ee g-based cognitiv e workload monitoring on wearable de vices, ” IEEE transactions on biomedical engineering , vol. 69, no. 1, pp. 265–277, 2021. [13] T . M. Ingolfsson, X. W ang, U. Chakraborty , S. Benatti, A. Bernini, P . Ducouret, P . Ryvlin, S. Beniczky , L. Benini, and A. Cossettini, “BrainFuseNet: Enhancing W earable Seizure Detection Through EEG- PPG-Accelerometer Sensor Fusion and Efficient Edge Deployment, ” IEEE T ransactions on Biomedical Circuits and Systems , vol. 18, no. 4, pp. 720–733, Aug. 2024. [14] S. Frey , M. A. Lucchini, V . Kartsch, T . M. Ingolfsson, A. H. Bernardi, M. Segessenmann, J. Osieleniec, S. Benatti, L. Benini, and A. Cossettini, “Gapses: V ersatile smart glasses for comfortable and fully-dry acqui- sition and parallel ultra-low-po wer processing of eeg and eog, ” IEEE T ransactions on Biomedical Cir cuits and Systems , 2024. [15] D. Kostas, S. Aroca-Ouellette, and F . Rudzicz, “Bendr: Using transform- ers and a contrastive self-supervised learning task to learn from massive amounts of EEG data, ” Fr ontiers in Human Neur oscience , vol. 15, p. 653659, 2021. [16] W . Jiang, L. Zhao, and B. liang Lu, “Large brain model for learning generic representations with tremendous EEG data in BCI, ” in The T welfth International Conference on Learning Representations , 2024. [17] J. W ang, S. Zhao, Z. Luo, Y . Zhou, H. Jiang, S. Li, T . Li, and G. Pan, “CBramod: A criss-cross brain foundation model for EEG decoding, ” in The Thirteenth International Conference on Learning Repr esentations , 2025. [18] A. Gu and T . Dao, “Mamba: Linear-time sequence modeling with selectiv e state spaces, ” in Fir st confer ence on languag e modeling , 2024. [19] A. T egon, T . M. Ingolfsson, X. W ang, L. Benini, and Y . Li, “Femba: Efficient and scalable eeg analysis with a bidirectional mamba founda- tion model, ” in 2025 47th Annual International Confer ence of the IEEE Engineering in Medicine and Biology Society (EMBC) , 2025, pp. 1–7. 10 IEEE TRANSACTIONS ON BIOMEDICAL ENGINEERING, VOL. XX, NO . XX, XXXX 2025 [20] R. T . Schirrmeister , J. T . Springenber g, L. D. J. Fiederer, M. Glasstetter , K. Eggensperger , M. T angermann, F . Hutter , W . Burgard, and T . Ball, “Deep learning with convolutional neural networks for eeg decoding and visualization, ” Human brain mapping , vol. 38, no. 11, pp. 5391–5420, 2017. [21] V . J. Lawhern, A. J. Solon, N. R. W aytowich, S. M. Gordon, C. P . Hung, and B. J. Lance, “EEGNet: a compact con volutional neural network for EEG-based brain–computer interfaces, ” Journal of neural engineering , vol. 15, no. 5, p. 056013, 2018. [22] G. A. Altuwaijri and G. Muhammad, “ A multibranch of conv olutional neural network models for electroencephalogram-based motor imagery classification, ” Biosensors , vol. 12, no. 1, p. 22, 2022. [23] D. K ostas and F . Rudzicz, “Thinker in variance: enabling deep neural networks for BCI across more people, ” Journal of Neural Engineering , vol. 17, no. 5, p. 056008, 2020. [24] S. Kumar , A. Sharma, and T . Tsunoda, “Brain wave classification using long short-term memory network based optical predictor, ” Scientific r eports , vol. 9, no. 1, p. 9153, 2019. [25] T .-j. Luo, C.-l. Zhou, and F . Chao, “Exploring spatial-frequenc y- sequential relationships for motor imagery classification with recurrent neural network, ” BMC bioinformatics , vol. 19, no. 1, p. 344, 2018. [26] C. W ang, V . Subramaniam, A. U. Y aari, G. Kreiman, B. Katz, I. Cases, and A. Barbu, “BrainBER T: Self-supervised representation learning for intracranial recordings, ” in The Eleventh International Conference on Learning Representations , 2023. [27] Y . Gui, M. Chen, Y . Su, G. Luo, and Y . Y ang, “EEGMamba: Bidirec- tional state space model with mixture of experts for EEG multi-task classification, ” arXiv preprint , 2024. [28] J. Hong, G. Mackellar , and S. Ghane, “Eegm2: An efficient mamba- 2-based self-supervised framework for long-sequence EEG modeling, ” arXiv preprint arXiv:2502.17873 , 2025. [29] T . M. Ingolfsson, X. W ang, M. Hersche, A. Burrello, L. Cavigelli, and L. Benini, “Ecg-tcn: W earable cardiac arrhythmia detection with a temporal con volutional network, ” in 2021 IEEE 3rd International Confer ence on Artificial Intelligence Circuits and Systems (AICAS) , 2021, pp. 1–4. [30] Z. Xu, Y . Y ue, X. Hu, D. Y ang, Z. Y uan, Z. Jiang, Z. Chen, JiangyongY u, XUCHEN, and S. Zhou, “Mambaquant: Quantizing the mamba family with variance aligned rotation methods, ” in The Thirteenth International Confer ence on Learning Representations , 2025. [31] I. Obeid and J. Picone, “The T emple University Hospital EEG Data Corpus, ” F r ontiers in Neur oscience , vol. 10, May 2016. [32] M. Bedeeuzzaman, O. Farooq, and Y . U. Khan, “ Automatic seizure de- tection using inter quartile range, ” Int. J ournal of Computer Applications , vol. 44, no. 11, pp. 1–5, 2012. [33] O. Lieber, B. Lenz, H. Bata, G. Cohen, J. Osin, I. Dalmedigos, E. Safahi, S. Meirom, Y . Belinkov , S. Shalev-Shwartz et al. , “Jamba: A hybrid transformer-mamba language model, ” arXiv preprint , 2024. [34] R. Girshick, “Fast R-CNN ICCV, ” in Pr oc. of the IEEE Int. Conf. on Computer V ision (ICCV) , 2015, pp. 1440–1448. [35] P . Arpaia, M. De Luca, L. Di Marino, D. Duran, L. Gargiulo, P . Lanteri, N. Moccaldi, M. Nalin, M. Picciafuoco, R. Robbio et al. , “ A systematic revie w of techniques for artifact detection and artifact category iden- tification in electroencephalography from wearable devices, ” Sensors , vol. 25, no. 18, p. 5770, 2025. [36] L. Y ang and S. Hong, “Unsupervised time-series representation learning with iterati ve bilinear temporal-spectral fusion, ” in International confer- ence on machine learning . PMLR, 2022, pp. 25 038–25 054. [37] A. v . d. Oord, Y . Li, and O. V inyals, “Representation learning with contrastiv e predicti ve coding, ” arXiv pr eprint arXiv:1807.03748 , 2018. [38] C. Rommel, J. Paillard, T . Moreau, and A. Gramfort, “Data augmentation for learning predictive models on EEG: a systematic comparison, ” Journal of Neural Engineering , vol. 19, no. 6, p. 066020, 2022. [39] J. T . Schwabedal, J. C. Snyder , A. Cakmak, S. Nemati, and G. D. Clifford, “ Addressing class imbalance in classification problems of noisy signals by using Fourier transform surrogates, ” arXiv pr eprint arXiv:1806.08675 , 2018. [40] C. Rommel, T . Moreau, J. Paillard, and A. Gramfort, “CADD A: Class- wise automatic differentiable data augmentation for EEG signals, ” in International Conference on Learning Representations , 2022. [41] O. Y onay , T . Hammond, and T . Y ang, “Myna: Masking-based contrastiv e learning of musical representations, ” arXiv pr eprint arXiv:2502.12511 , 2025. [42] Y . Chen, K. Ren, K. Song, Y . W ang, Y . W ang, D. Li, and L. Qiu, “EEGFormer: T owards transferable and interpretable large-scale EEG foundation model, ” in AAAI 2024 Spring Symposium on Clinical F oun- dation Models , 2024. [43] T .-Y . Lin, P . Goyal, R. Girshick, K. He, and P . Doll ´ ar , “F ocal loss for dense object detection, ” in Proceedings of the IEEE international confer ence on computer vision , 2017, pp. 2980–2988. [44] G. Franco, A. Pappalardo, and N. J. Fraser, “Xilinx/brevitas, ” 2025. [Online]. A vailable: https://doi.org/10.5281/zenodo.3333552 [45] B. Rokh, A. Azarpeyv and, and A. Khanteymoori, “ A comprehensi ve survey on model quantization for deep neural networks in image classification, ” A CM T ransactions on Intelligent Systems and T echnology , vol. 14, no. 6, pp. 1–50, 2023. [46] GreenW aves T echnologies, “GAP SDK: Sdk for greenwav es technolo- gies’ gap8 iot application processor, ” https://github.com/GreenW aves- T echnologies/gap sdk, 2022, version 4.12.0. [47] F . Daghero, D. J. Pagliari, F . Conti, L. Benini, M. Poncino, and A. Burrello, “Lightweight software kernels and hardware e xtensions for efficient sparse deep neural networks on microcontrollers, ” in Eighth Confer ence on Machine Learning and Systems , 2025. [48] A. Garofalo, M. Rusci, F . Conti, D. Rossi, and L. Benini, “Pulp-nn: Accelerating quantized neural networks on parallel ultra-low-po wer risc- v processors, ” Philosophical T ransactions of the Royal Society A , vol. 378, no. 2164, p. 20190155, 2020. [49] B. D ¨ oner , T . M. Ingolfsson, L. Benini, and Y . Li, “LUN A: Efficient and topology-agnostic foundation model for EEG signal analysis, ” in The Thirty-ninth Annual Confer ence on Neural Information Pr ocessing Systems , 2025. [50] A. Demir, T . Koike-Akino, Y . W ang, M. Haruna, and D. Erdogmus, “EEG-GNN: Graph neural networks for classification of electroen- cephalogram (EEG) signals, ” in 2021 43rd Annual International Confer- ence of the IEEE Engineering in Medicine & Biology Society (EMBC) . IEEE, 2021, pp. 1061–1067. [51] S. T ang, J. A. Dunnmon, Q. Liangqiong, K. K. Saab, T . Baykaner, C. Lee-Messer, and D. L. Rubin, “Modeling multi variate biosignals with graph neural networks and structured state space models, ” in Conference on health, infer ence, and learning . PMLR, 2023, pp. 50–71. [52] J. Jing, W . Ge, S. Hong, M. B. Fernandes, Z. Lin, C. Y ang, S. An, A. F . Struck, A. Herlopian, I. Karakis et al. , “Development of expert-lev el classification of seizures and rhythmic and periodic patterns during EEG interpretation, ” Neur ology , vol. 100, no. 17, pp. e1750–e1762, 2023. [53] C. Y ang, C. Xiao, M. B. W estover , and J. Sun, “Self-supervised elec- troencephalogram representation learning for automatic sleep staging: model development and ev aluation study , ” JMIR AI , vol. 2, no. 1, p. e46769, 2023. [54] W . Y . Peh, Y . Y ao, and J. Dauwels, “T ransformer conv olutional neural networks for automated artifact detection in scalp EEG, ” in 2022 44th Annual International Conference of the IEEE Engineering in Medicine & Biology Society (EMBC) . IEEE, 2022, pp. 3599–3602. [55] H. Li, M. Ding, R. Zhang, and C. Xiu, “Motor imagery EEG clas- sification algorithm based on CNN-LSTM feature fusion network, ” Biomedical signal pr ocessing and control , vol. 72, p. 103342, 2022. [56] Y . Song, X. Jia, L. Y ang, and L. Xie, “Transformer-based spatial-temporal feature learning for EEG decoding, ” arXiv preprint arXiv:2106.11170 , 2021. [57] C. Y ang, M. W estov er, and J. Sun, “Biot: Biosignal transformer for cross- data learning in the wild, ” Advances in Neural Information Pr ocessing Systems , vol. 36, pp. 78 240–78 260, 2023. [58] N. Mohammadi Foumani, G. Mackellar , S. Ghane, S. Irtza, N. Nguyen, and M. Salehi, “Eeg2rep: enhancing self-supervised EEG representation through informativ e masked inputs, ” in Proceedings of the 30th ACM SIGKDD Confer ence on Knowledge Discovery and Data Mining , 2024, pp. 5544–5555. [59] A. Dimofte, G. A. Bucagu, T . M. Ingolfsson, X. W ang, A. Cossettini, L. Benini, and Y . Li, “CEReBrO: Compact encoder for representations of brain oscillations using efficient alternating attention, ” arXiv pr eprint arXiv:2501.10885 , 2025. [60] N. Lee, K. Barmpas, A. K oliousis, Y . Panagakis, D. Adamos, N. Laskaris, and S. Zafeiriou, “ A comprehensi ve revie w of biosignal foundation models, ” Author ea Pr eprints , 2025. [61] G. Rutishauser , J. Mihali, M. Scherer, and L. Benini, “xtern: Energy- efficient ternary neural network inference on risc-v-based edge systems, ” in 2024 IEEE 35th International Conference on Application-specific Systems, Architectur es and Pr ocessors (ASAP) . IEEE, 2024, pp. 206– 213. [62] I. I. Goncharova, D. J. McFarland, T . M. V aughan, and J. R. W olpaw , “Emg contamination of eeg: spectral and topographical characteristics, ” Clinical neurophysiolo gy , vol. 114, no. 9, pp. 1580–1593, 2003.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment