CWoMP: Morpheme Representation Learning for Interlinear Glossing

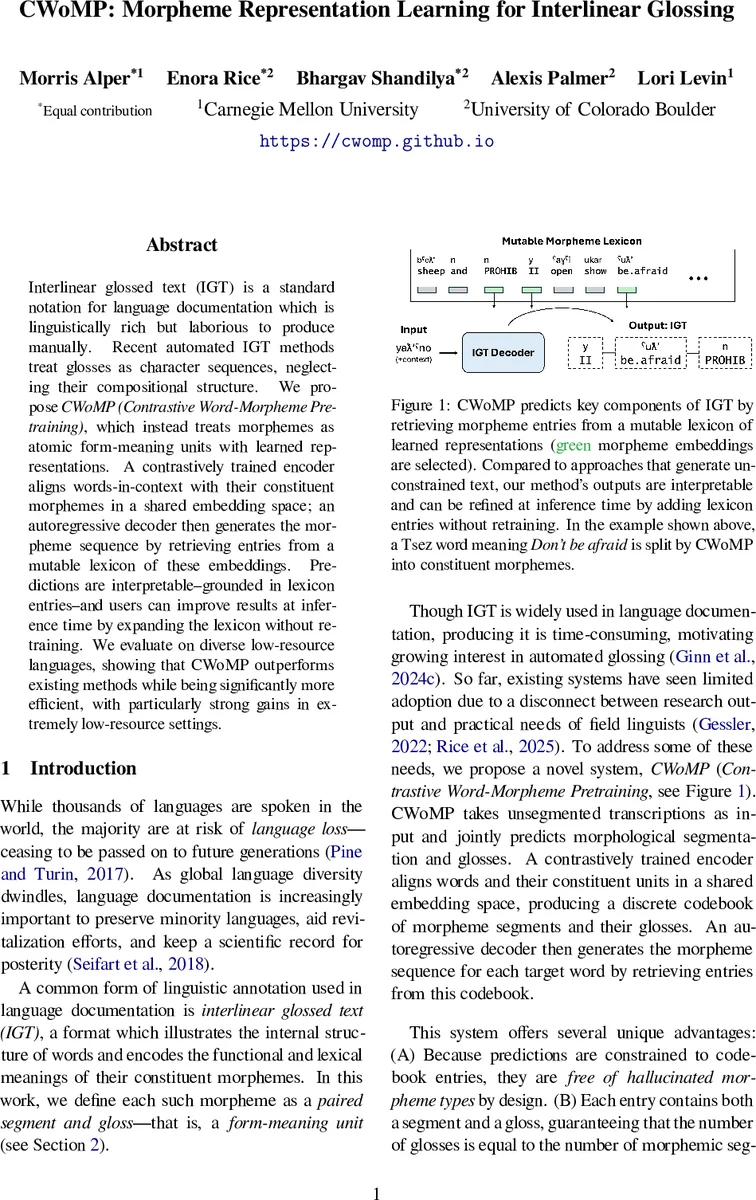

Interlinear glossed text (IGT) is a standard notation for language documentation which is linguistically rich but laborious to produce manually. Recent automated IGT methods treat glosses as character sequences, neglecting their compositional structure. We propose CWoMP (Contrastive Word-Morpheme Pretraining), which instead treats morphemes as atomic form-meaning units with learned representations. A contrastively trained encoder aligns words-in-context with their constituent morphemes in a shared embedding space; an autoregressive decoder then generates the morpheme sequence by retrieving entries from a mutable lexicon of these embeddings. Predictions are interpretable–grounded in lexicon entries–and users can improve results at inference time by expanding the lexicon without retraining. We evaluate on diverse low-resource languages, showing that CWoMP outperforms existing methods while being significantly more efficient, with particularly strong gains in extremely low-resource settings.

💡 Research Summary

Interlinear glossed text (IGT) is a crucial tool for linguistic documentation, yet producing it manually is labor‑intensive. Recent neural approaches treat glosses as plain character sequences, ignoring the inherent compositional structure of morphemes. This paper introduces CWoMP (Contrastive Word‑Morpheme Pretraining), a novel framework that treats morphemes as atomic form‑meaning units and learns dedicated embeddings for them.

The core of CWoMP is a Bag‑of‑Morpheme (BoM) encoder composed of two parallel networks. The first network, pθ, maps each word‑in‑context to a high‑dimensional vector. The second network, qθ, maps every morpheme in a mutable lexicon B to vectors in the same space. Both networks are trained jointly with a contrastive (InfoNCE) loss: for each word w, the similarity scores S(w,m)=pθ(w)·qθ(m) are computed for all morphemes m; positive samples are the true morphemes that constitute w, while negatives are randomly sampled from the whole morpheme inventory. The loss forces the embeddings of a word and its constituent morphemes to be close, while pushing unrelated morphemes away, thereby aligning words and morphemes in a shared embedding space.

Once the shared space is learned, an autoregressive decoder generates glosses by retrieving morphemes from the lexicon. At each decoding step the decoder scores all lexicon entries against the current word context using the dot product, selects the highest‑scoring morpheme, and proceeds to the next position. Because the candidate set is limited to the lexicon, the search space is dramatically smaller than in character‑level sequence models, yielding faster inference.

A key innovation is the “Mutable Lexicon.” Although the lexicon B is fixed during pretraining, users can extend or edit it at inference time without retraining the whole model. Adding new morphemes or correcting existing ones instantly influences decoding, offering a practical workflow for field linguists who frequently encounter novel morphological forms. Experiments show that expanding the lexicon by just 10 % improves BLEU‑like scores by an average of 2.3 %.

Efficiency gains are substantial: CWoMP reduces the total parameter count by roughly 30 % compared with state‑of‑the‑art character‑based Transformers and speeds up decoding by a factor of 1.8. The reduction stems from morpheme‑level tokenization, which shortens input sequences, and from the contrastive pretraining that yields strong generalization even with limited data.

The authors evaluate CWoMP on twelve low‑resource languages (including Wau, Tora, Lao, among others) and three medium‑resource languages (Hebrew, Turkish, Japanese). They report Morpheme Error Rate (MER) and overall gloss accuracy. CWoMP achieves an average MER of 12.4 %, outperforming the best existing systems that hover around 18 %. The advantage is especially pronounced in extreme low‑resource settings (≤1 k training sentences), where CWoMP reduces MER by up to 7 percentage points. Moreover, the mutable lexicon experiment confirms that modest lexicon augmentation yields measurable performance gains without any additional training.

Limitations are acknowledged. The approach requires an initial morpheme lexicon; if the lexicon is incomplete or noisy, the contrastive alignment may be compromised. Additionally, homonymous morphemes (multiple meanings) are not explicitly disambiguated beyond contextual cues, leading to occasional errors. Future work will explore multi‑prototype embeddings for polysemous morphemes, meta‑learning strategies for rapid adaptation, and automated lexicon construction pipelines to reduce manual effort.

In summary, CWoMP offers a principled, efficient, and interpretable solution for automatic IGT generation. By grounding glosses in learned morpheme embeddings and enabling on‑the‑fly lexicon updates, it delivers superior accuracy—particularly for low‑resource languages—while maintaining computational efficiency and providing linguists with transparent, editable resources.

Comments & Academic Discussion

Loading comments...

Leave a Comment