From Virtual Environments to Real-World Trials: Emerging Trends in Autonomous Driving

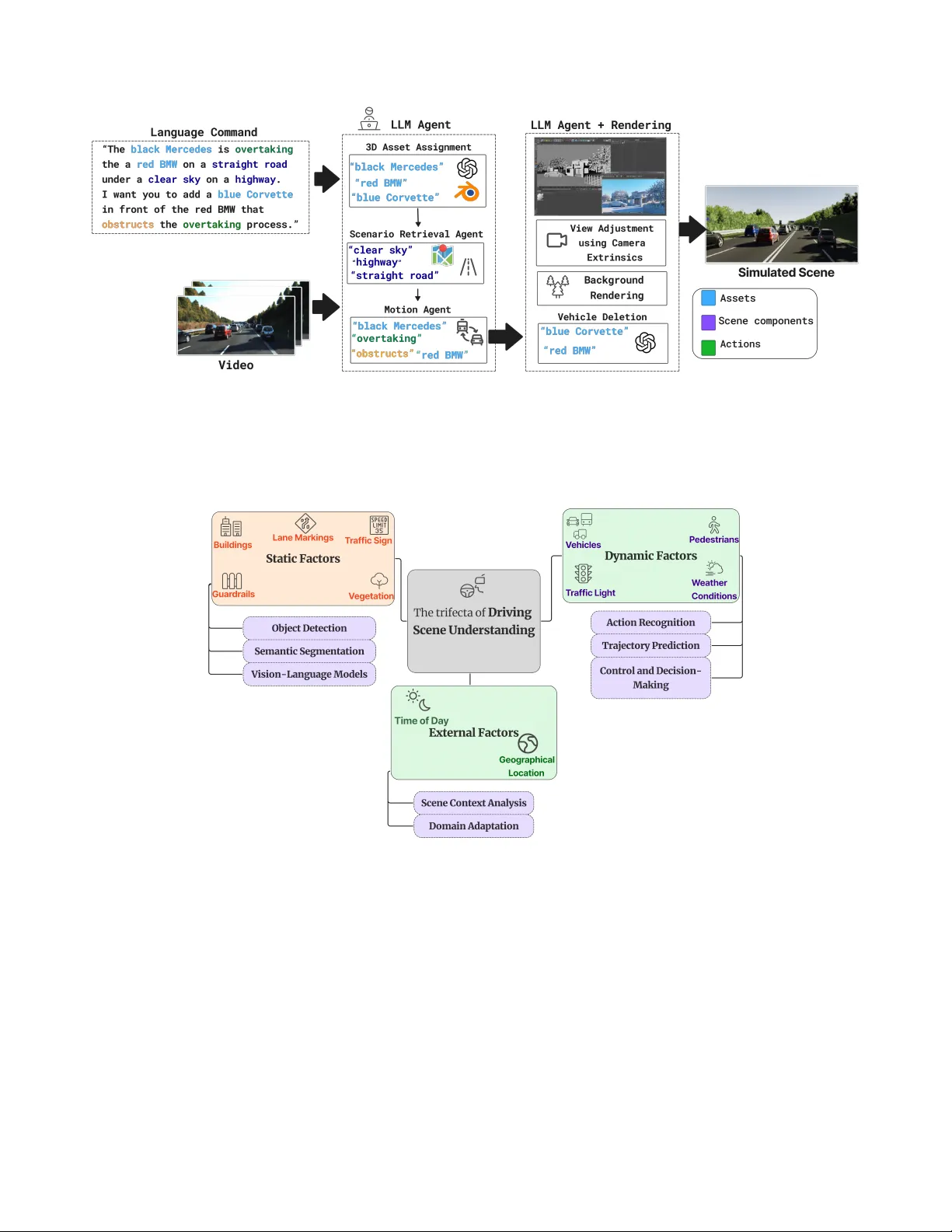

Autonomous driving technologies have achieved significant advances in recent years, yet their real-world deployment remains constrained by data scarcity, safety requirements, and the need for generalization across diverse environments. In response, s…

Authors: A. Humnabadkar, A. Sikdar, B. Cave