rSDNet: Unified Robust Neural Learning against Label Noise and Adversarial Attacks

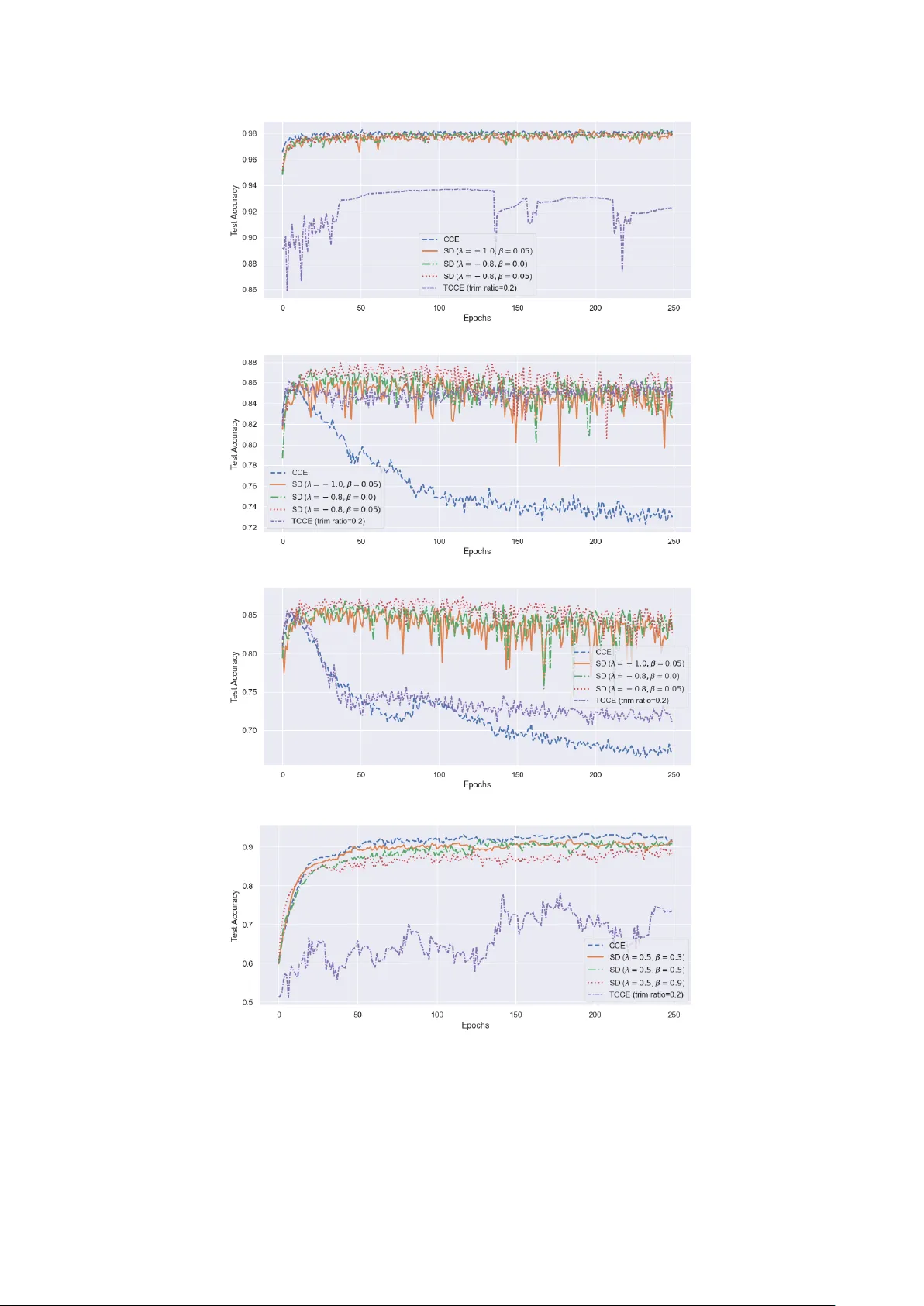

Neural networks are central to modern artificial intelligence, yet their training remains highly sensitive to data contamination. Standard neural classifiers are trained by minimizing the categorical cross-entropy loss, corresponding to maximum likel…

Authors: Suryasis Jana, Abhik Ghosh
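The abstract's link between cross-entropy training and maximum likelihood, and the sensitivity to contaminated labels, can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the authors' rSDNet procedure. The function names `softmax` and `cross_entropy` and the toy logits are hypothetical. Each per-sample term is the negative log-probability of the observed label under a categorical model with softmax probabilities, so minimizing the mean is equivalent to maximizing the likelihood. Because that term has no upper bound as the probability approaches zero, a single flipped label can dominate the loss.

```python
import math


def softmax(logits):
    """Numerically stable softmax: subtract the max logit before exponentiating."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(batch_logits, labels):
    """Mean categorical cross-entropy over a batch (hypothetical helper).

    Each term is -log p_y, the negative log-probability that a categorical
    model with softmax probabilities assigns to the observed label y, so
    minimizing this mean is the same as maximizing the likelihood.
    """
    total = 0.0
    for logits, y in zip(batch_logits, labels):
        total -= math.log(softmax(logits)[y])
    return total / len(labels)


# Hypothetical toy batch: three inputs whose logits all favor class 0.
logits = [[4.0, 0.0, 0.0]] * 3
clean_labels = [0, 0, 0]
noisy_labels = [0, 0, 1]  # one label flipped to simulate label noise

clean_loss = cross_entropy(logits, clean_labels)
noisy_loss = cross_entropy(logits, noisy_labels)
print(f"clean: {clean_loss:.3f}  noisy: {noisy_loss:.3f}")

# The per-sample term -log p_y has no upper bound: as the model grows more
# confident in class 0, the loss on the flipped example keeps increasing.
for scale in (1, 5, 25):
    print(scale, -math.log(softmax([4.0 * scale, 0.0, 0.0])[1]))
```

Plain Python keeps the sketch dependency-free. In practice, deep learning frameworks such as PyTorch provide a fused log-softmax cross-entropy loss that computes the same quantity more efficiently.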