EI: Early Intervention for Multimodal Imaging based Disease Recognition

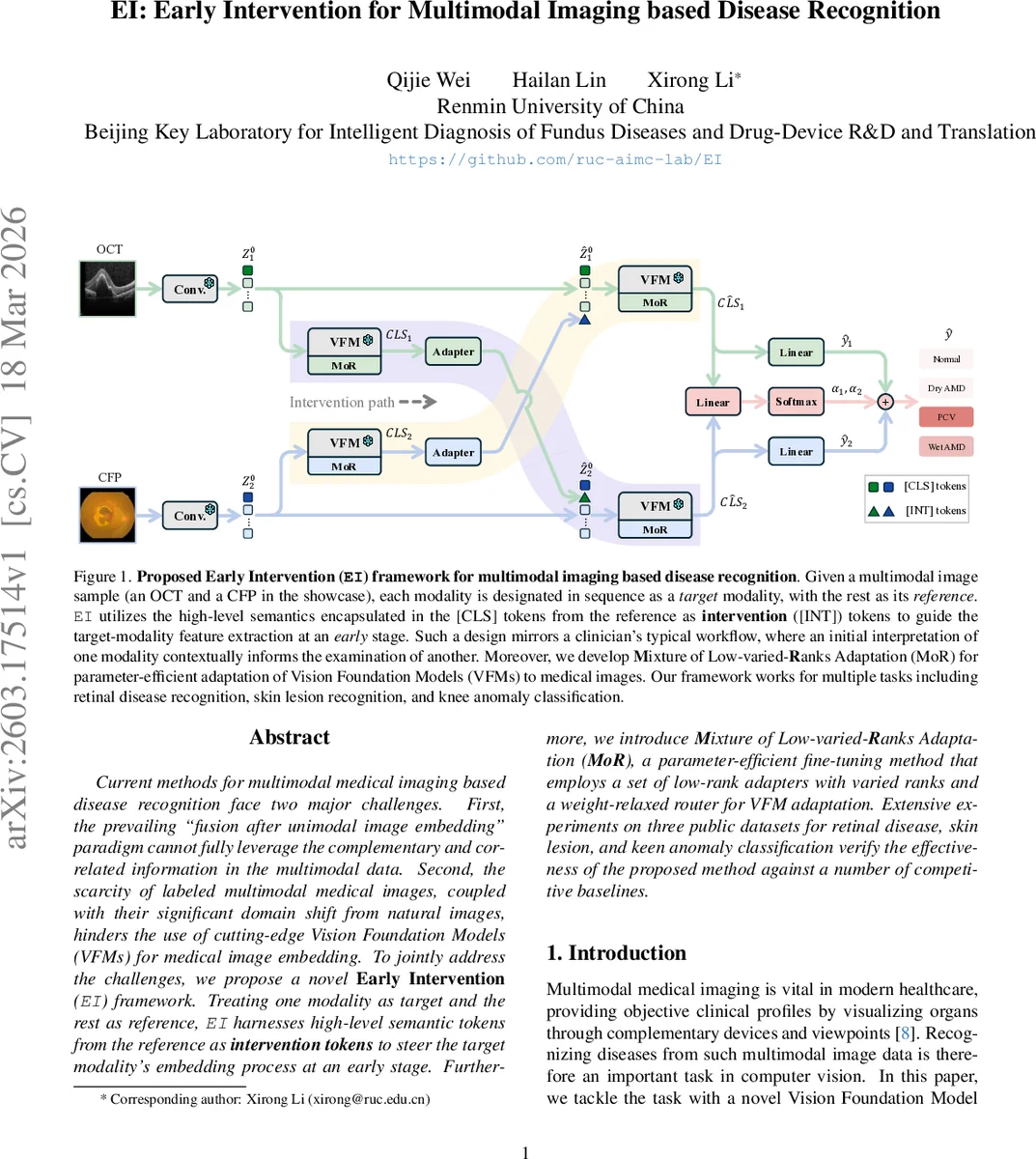

Current methods for multimodal medical imaging based disease recognition face two major challenges. First, the prevailing “fusion after unimodal image embedding” paradigm cannot fully leverage the complementary and correlated information in the multimodal data. Second, the scarcity of labeled multimodal medical images, coupled with their significant domain shift from natural images, hinders the use of cutting-edge Vision Foundation Models (VFMs) for medical image embedding. To jointly address these challenges, we propose a novel Early Intervention (EI) framework. Treating one modality as the target and the rest as references, EI harnesses high-level semantic tokens from the references as intervention tokens to steer the target modality’s embedding process at an early stage. Furthermore, we introduce Mixture of Low-varied-Ranks Adaptation (MoR), a parameter-efficient fine-tuning method that employs a set of low-rank adapters with varied ranks and a weight-relaxed router for VFM adaptation. Extensive experiments on three public datasets for retinal disease, skin lesion, and knee anomaly classification verify the effectiveness of the proposed method against a number of competitive baselines.
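The idea of steering the target embedding with reference-derived tokens can be illustrated with a toy sketch. The text above does not spell out the mechanism, so everything here is an assumption made for illustration: the intervention tokens are simply concatenated to the attention context of the target tokens, projections are the identity, and the function names (`attend`, `early_intervention_layer`) are hypothetical rather than taken from the authors' code.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(queries, context):
    """Single-head dot-product attention with identity projections (toy version)."""
    dim = len(queries[0])
    out = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(dim) for k in context]
        weights = softmax(scores)
        out.append([sum(w * k[j] for w, k in zip(weights, context)) for j in range(dim)])
    return out


def early_intervention_layer(target_tokens, intervention_tokens):
    """ASSUMPTION: target tokens attend over themselves plus reference-derived tokens."""
    context = intervention_tokens + target_tokens
    return attend(target_tokens, context)


# Two target-modality tokens and one intervention token from a reference modality.
target = [[1.0, 0.0], [0.0, 1.0]]
intervention = [[2.0, 2.0]]

steered = early_intervention_layer(target, intervention)
baseline = early_intervention_layer(target, [])  # no reference information
```

In this sketch the target tokens keep their count and dimensionality, but their values shift toward the intervention token, which is one plausible reading of "early" steering as opposed to fusing two finished embeddings.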

💡 Research Summary

The paper addresses two fundamental challenges in multimodal medical image disease recognition: (1) the prevailing “fusion after unimodal embedding” paradigm fails to fully exploit complementary information across modalities, and (2) the scarcity of labeled medical images combined with a large domain gap from natural images limits the direct use of state‑of‑the‑art Vision Foundation Models (VFMs) such as CLIP or DINOv2. To overcome these issues, the authors propose an Early Intervention (EI) framework together with a novel parameter‑efficient fine‑tuning method called Mixture of Low‑varied‑Ranks Adaptation (MoR).
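A minimal sketch of what a mixture of low-rank adapters with varied ranks might look like on a single frozen linear layer follows. It is not the authors' implementation. In particular, "weight-relaxed router" is interpreted here as per-adapter sigmoid gates that are not forced to sum to one; the paper may define it differently. The class name, the rank values, and the LoRA-style zero initialisation of `B` are likewise assumptions.

```python
import math
import random


def matvec(M, x):
    """Multiply matrix M (list of rows) by vector x."""
    return [sum(m * v for m, v in zip(row, x)) for row in M]


def rand_matrix(rows, cols, rng, scale=0.1):
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


def zeros(rows, cols):
    return [[0.0] * cols for _ in range(rows)]


class MixtureOfLowRankAdapters:
    """Frozen weight W plus several adapters B_i A_i, each with its own rank."""

    def __init__(self, d_in, d_out, ranks=(2, 4, 8), seed=0):
        rng = random.Random(seed)
        self.W = rand_matrix(d_out, d_in, rng)  # stands in for a frozen VFM weight
        # LoRA-style init (assumption): A random, B zero, so training starts at W.
        self.A = [rand_matrix(r, d_in, rng) for r in ranks]
        self.B = [zeros(d_out, r) for r in ranks]
        self.router = rand_matrix(len(ranks), d_in, rng)

    def gates(self, x):
        # ASSUMPTION: independent sigmoid gates, not a softmax over adapters.
        return [1.0 / (1.0 + math.exp(-z)) for z in matvec(self.router, x)]

    def forward(self, x):
        y = matvec(self.W, x)
        for g, A, B in zip(self.gates(x), self.A, self.B):
            delta = matvec(B, matvec(A, x))  # low-rank update B_i (A_i x)
            y = [yi + g * di for yi, di in zip(y, delta)]
        return y

    def num_trainable(self):
        """Adapter and router parameters; W is excluded because it stays frozen."""
        adapters = sum(len(A) * len(A[0]) + len(B) * len(B[0])
                       for A, B in zip(self.A, self.B))
        return adapters + len(self.router) * len(self.router[0])


layer = MixtureOfLowRankAdapters(d_in=6, d_out=4, ranks=(1, 2, 3), seed=0)
x = [0.5, -1.0, 0.25, 0.0, 2.0, -0.5]
y = layer.forward(x)
```

Because every `B` starts at zero, the layer initially reproduces the frozen output `W x`, and only the adapters and router would be updated during fine-tuning. Varying the ranks lets the router draw on updates of different capacity for the same input.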

In EI, for each multimodal sample one modality is designated as the target while the remaining modalities serve as references. Each reference modality is processed by an auxiliary VFM to extract its final‑layer
