ReLMXEL: Adaptive RL-Based Memory Controller with Explainable Energy and Latency Optimization

Reducing latency and energy consumption is critical to improving the efficiency of memory systems in modern computing. This work introduces ReLMXEL (Reinforcement Learning for Memory Controller with Explainable Energy and Latency Optimization), a exp…

Authors: Panuganti Chirag Sai, Gandholi Sarat

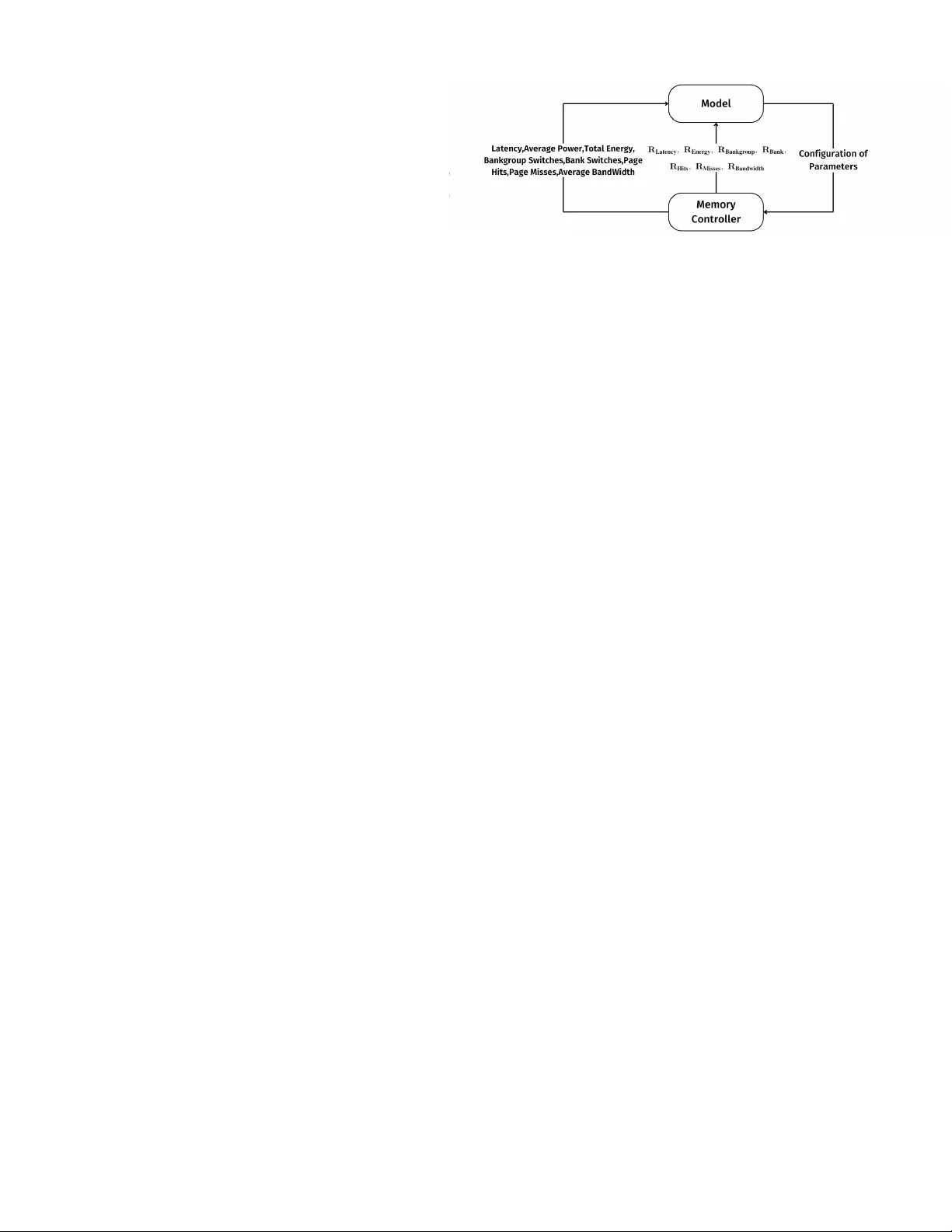

Panuganti Chirag Sai, Department of Mathematics and Computer Science, Sri Sathya Sai Institute of Higher Learning, chiragsaipanuganti@sssihl.edu.in

Gandholi Sarat, Department of Mathematics and Computer Science, Sri Sathya Sai Institute of Higher Learning, gandholisarat@sssihl.edu.in

R. Raghunatha Sarma, Department of Mathematics and Computer Science, Sri Sathya Sai Institute of Higher Learning, rraghunathasarma@sssihl.edu.in

Venkata Kalyan Tavva, Department of Computer Science and Engineering, Indian Institute of Technology Ropar, kalyantv@iitrpr.ac.in

Naveen M, AI Performance Engineer, Red Hat, nmiriyal@redhat.com

Abstract: Reducing latency and energy consumption is critical to improving the efficiency of memory systems in modern computing. This work introduces ReLMXEL (Reinforcement Learning for Memory Controller with Explainable Energy and Latency Optimization), an explainable multi-agent online reinforcement learning framework that dynamically optimizes memory controller parameters using reward decomposition. ReLMXEL operates within the memory controller, leveraging detailed memory behavior metrics to guide decision-making. Experimental evaluations across diverse workloads demonstrate consistent performance gains over baseline configurations, with refinements driven by workload-specific memory access behavior. By incorporating explainability into the learning process, ReLMXEL not only enhances performance but also increases the transparency of control decisions, paving the way for more accountable and adaptive memory system designs.

I. INTRODUCTION

In modern computing systems, Dynamic Random Access Memory (DRAM) is the de facto memory technology and plays a critical role in overall system performance, especially for memory- and compute-intensive workloads such as those encountered in machine learning (ML) training and inference.
Consequently, significant research focuses on improving DRAM efficiency, particularly in reducing latency and energy consumption. The memory controller, which manages communication between the processor and DRAM, is pivotal in achieving these optimizations. A survey by Wu et al. [1] reviews the growing use of machine learning in computer architecture, highlighting reinforcement learning (RL) as a promising technique for designing self-optimizing memory controllers. These controllers are modeled as RL agents that choose DRAM commands based on long-term expected benefits and incorporate techniques such as genetic algorithms and multi-factor state representations to handle diverse objectives like energy and throughput. One prominent approach is the self-optimizing memory controller proposed by Ipek et al. [2], which uses RL to adapt scheduling decisions and outperforms static policies across various workloads.

Despite these improvements, RL-driven decisions lack transparency, which hinders their adoption in real-world systems that require explainability, reliability, and trust. To bridge this gap, we introduce Reinforcement Learning for Memory Controller with Explainable Energy and Latency Optimization (ReLMXEL), a novel multi-agent RL-based memory controller. ReLMXEL dynamically tunes memory policies to optimize latency and energy across diverse workloads, including several that exhibit computational patterns commonly found in ML applications, such as dense linear algebra (GEMM), memory-bound operations (STREAM, mcf), and irregular data access patterns (BFS, omnetpp), while incorporating explainability techniques to make its decisions interpretable. This approach builds upon prior work in adaptive memory systems and aims to balance performance with accountability in complex computing environments.

II. LITERATURE REVIEW

Fig. 1. Reinforcement Learning Framework [3] (agent-environment loop with state $S_t$, reward $R_t$, and action $A_t$).

In an RL framework, an agent interacts with the environment over discrete timesteps. At each timestep $t$, the agent observes the current state $S_t$, selects an action $A_t$ based on a policy $\pi(a \mid s)$, receives a reward $R_t$, and transitions to a new state $S_{t+1}$. This process continues iteratively, and the goal of the agent is to learn a policy $\pi(a \mid s)$ that maximizes the expected cumulative reward over time.

Conventional machine learning approaches generally require large, labeled datasets and assume that data distributions remain stationary. However, memory systems exhibit highly dynamic behavior, with workloads and access patterns changing rapidly over time. Traditional ML methods cannot adapt on the fly and therefore cannot effectively capture this dynamism. In contrast, an RL agent learns through direct interaction with the environment, making decisions based on real-time feedback rather than relying on pre-collected data. This allows RL to handle non-stationary environments by continuously adapting its policy as system conditions evolve. Additionally, RL optimizes long-term cumulative rewards and supports multi-objective optimization tasks such as balancing energy efficiency, bandwidth, and latency. These strengths make RL particularly well suited for memory controller parameter tuning.

A. Self-Optimizing Memory Controllers: A Reinforcement Learning Approach

The Self-Optimizing Memory Controller by Ipek et al. [2] overcomes the limitations of static DRAM controllers by using reinforcement learning to dynamically adapt command scheduling.
It models the controller as an RL agent interacting with an environment composed of processor cores, caches, buses, DRAM banks, and scheduling queues. The state includes features such as read/write counts and load misses, while the actions are the Precharge, Activate, Read-CAS, Write-CAS, REF, and NOP commands. The agent receives a reward of 1 for issuing a read or write command and 0 otherwise. SARSA [3], [4] updates Q-values [5] using a Cerebellar Model Articulation Controller (CMAC) function approximator [6] with overlapping coarse-grained Q-tables to handle the large state space. This approach enables adaptability to workload changes, optimizing scheduling decisions. However, it focuses solely on scheduling, neglecting important parameters such as arbitration, refresh policies, page policies, scheduler buffer policies, and the maximum number of permitted active transactions. Furthermore, the lack of explainability in learned policies limits interpretability and reliability, highlighting the need for memory controllers that balance adaptability with transparency.

B. Pythia: A Customizable Hardware Prefetching Framework Using Online Reinforcement Learning

The Pythia [7] framework proposes a prefetcher for cache optimization using reinforcement learning. Pythia treats the prefetcher as an RL agent that, for each demand request, observes various types of program context information to make a prefetch decision. After each decision, Pythia receives a numerical reward that evaluates the quality of the prefetch, considering current memory bandwidth usage. This reward strengthens the correlation between the observed program context and the prefetch decision, helping generate more accurate, timely, and system-aware prefetch requests in the future. The primary objective of Pythia is to discover the prefetching policy that maximizes the number of accurate and timely prefetch requests while incorporating system-level feedback.
The state space is a $k$-dimensional vector of program features, $S \equiv \{\phi_S^1, \phi_S^2, \ldots, \phi_S^k\}$. The action is the selection of a prefetch offset from a set of predetermined offsets. The reward depends on the outcome of the prefetch: Accurate and Timely, Accurate but Late, Loss of Coverage, Inaccurate, or No Prefetch [7].

C. Reinforcement Learning using Reward Decomposition

In Explainable Reinforcement Learning via Reward Decomposition [8], the scalar reward of conventional reinforcement learning is decomposed into a reward vector, where each element represents the reward from a specific component. Suppose two actions $a_1$ and $a_2$ are available to the agent in a given state $s$. The reward vector helps explain why $a_1$ is preferred over $a_2$ in state $s$. The explanation is provided through the Reward Difference Explanation (RDX), defined as:

$$\Delta(s, a_1, a_2) = Q(s, a_1) - Q(s, a_2), \tag{1}$$

where each component $\Delta_c(s, a_1, a_2)$ represents the difference in expected return with respect to a component $c$. A positive $\Delta_c$ indicates an advantage of $a_1$ over $a_2$, and a negative $\Delta_c$ a disadvantage. When the reward components are numerous, the authors introduce the Minimal Sufficient Explanation (MSX). An MSX is a minimal subset of components whose cumulative advantage justifies the preference of one action over another. Specifically, an MSX for $a_1$ over $a_2$ is given by the smallest subset $\mathrm{MSX}^+ \subseteq C$ such that:

$$\sum_{c \in \mathrm{MSX}^+} \Delta_c(s, a_1, a_2) > d, \tag{2}$$

where $d$ is the total disadvantage from negatively contributing components:

$$d = -\sum_{\Delta_c(s, a_1, a_2) < 0} \Delta_c(s, a_1, a_2). \tag{3}$$

To verify whether each component in $\mathrm{MSX}^+$ is necessary, a necessity check is introduced and calculated as:

$$v = \sum_{c \in \mathrm{MSX}^+} \Delta_c(s, a_1, a_2) - \min_{c \in \mathrm{MSX}^+} \Delta_c(s, a_1, a_2). \tag{4}$$

Finally, we check whether a subset of the negatively contributing components has a total disadvantage exceeding $v$; if so, all the elements in $\mathrm{MSX}^+$ are deemed necessary. The smallest such subset, $\mathrm{MSX}^-$, is formally defined as:

$$\mathrm{MSX}^- = \arg\min_{M \subseteq C} |M| \quad \text{s.t.} \quad \sum_{c \in M} -\Delta_c(s, a_1, a_2) > v. \tag{5}$$

III. RELMXEL

We now propose Reinforcement Learning for Memory Controller with Explainable Energy and Latency Optimization (ReLMXEL), a strategy that operates within an RL setting. The memory controller serves as the environment, providing metrics such as latency, average power, total energy consumption, bandwidth utilization, bank and bank group switches, and row buffer (page) hits and misses. Latency is tracked per request to reflect internal delays. Average power and total energy are derived from DRAM state transitions and activity counters. Bandwidth utilization captures interface efficiency. Bank and bank group switches are logged to monitor access locality, and row buffer hits and misses indicate the effectiveness of row management. These metrics provide deep visibility into DRAM behavior and serve as observations for the RL agent, which computes per-metric rewards and selects actions to optimize overall DRAM performance.

Fig. 2. ReLMXEL Framework (the memory controller reports latency, average power, total energy, bank group switches, bank switches, page hits, page misses, and average bandwidth to the model, which computes one reward per metric and returns a configuration of parameters).
The actions consist of configurable DRAM parameters. PagePolicy (Open, OpenAdaptive, Closed, ClosedAdaptive) governs whether a row remains open or is closed immediately after access. Scheduler (FIFO, FR-FCFS, FR-FCFS Grp) defines how memory requests are prioritized and ordered to balance fairness and throughput. SchedulerBuffer (Bankwise, ReadWrite, Shared) determines how request queues are organized: by bank, by read/write separation, or as a shared buffer. Arbiter (Simple, FIFO, Reorder) selects which commands proceed to DRAM based on fixed priorities, arrival order, or dynamic reordering to improve timing efficiency. RespQueue (FIFO, Reorder) controls the order in which responses are sent back to the requester. RefreshPolicy (NoRefresh, AllBank) manages how DRAM refresh operations are performed to maintain data integrity while minimizing interference. RefreshMaxPostponed ($0, \ldots, 7$) and RefreshMaxPulledin ($0, \ldots, 7$) allow the controller to delay or advance refreshes within limits to reduce conflicts with memory accesses. RequestBufferSize limits the number of outstanding requests the controller can hold, and MaxActiveTransactions ($2^x$ where $x = 0, \ldots, 7$) controls the number of concurrent active DRAM commands. Through iterative interaction, the agent learns to tune these DRAM parameters for optimal efficiency. The framework is general and can be adapted to other standards (DDR, GDDR, LPDDR, etc.) and generations, and to additional policies such as same-bank refresh and chopped burst length.

Algorithm 1: ReLMXEL Algorithm

    Input: timesteps T, base seed s, threshold w, learning rate α, discount factor γ
    Output: all Q-tables Q_i and R_C
    Initialize ε_old, ε_new, R_C ← 0
    for i = 1 to N do                          ▷ N agents
        s_i ← s + i                            ▷ seed per agent
        Initialize Q_i(s, a_i, r)
    end for
    Initialize current state s_old
    Select initial action vector a ← (a_1, ..., a_N) using ε-greedy strategy
    for t = 1 to T do
        Apply action a to memory controller
        Extract performance metrics (R_{j,obs}) for j = 1, ..., M
        Compute rewards metric-wise using Eq. (6)
        if t < w then
            ε ← ε_old
        else
            ε ← ε_new
            R_C ← R_C + R_T                    ▷ cumulative reward
        end if
        for i = 1 to N do                      ▷ each agent chooses action
            if random number < ε then
                a'_i ← random action for agent i
            else
                a'_i ← argmax over a'_i of Σ_j Q_i(s_{old,i}, a'_i, r_j)
            end if
        end for
        a' ← (a'_1, a'_2, ..., a'_N)           ▷ next action
        s_new ← a                              ▷ new state
        for i = 1 to N do
            for each reward r_j do
                Compute Q_i(s_{old,i}, a_i, r_j) using Eq. (8)
            end for
        end for
        s_old ← s_new
        a ← a'
    end for
    return all Q-tables Q_i, R_C

As described in Algorithm 1, each configurable parameter is associated with a Q-table [5]. The reward is calculated by the function:

$$R_X = \frac{R_{\text{target}}}{\left| R_{\text{target}} - R_{\text{observed}} \right|}, \tag{6}$$

where the subscript $X$ denotes the performance metric to which the reward $R$ belongs, and $R_{\text{target}}$ and $R_{\text{observed}}$ correspond to the ideal reward and the reward of the current timestep, respectively. $R_T$ is defined as:

$$R_T = \sum_{i=1}^{7} R_{X_i}, \quad \text{where } X_i \text{ is a performance metric.} \tag{7}$$

The Q-value [5], denoted $Q(s, a)$, represents the expected cumulative reward for taking an action $a$ in the state $s$ and following the current policy thereafter. These Q-values are stored in a Q-table, a lookup table organized such that each dimension corresponds to the discrete states and possible actions for a specific DRAM parameter. During decision-making, the agent uses the current state and possible actions as indices to retrieve the associated Q-values, enabling efficient evaluation of expected rewards. The model follows the SARSA [3], [4] update rule to continuously improve its policy based on observed transitions.
$$Q(s_t, a_t) \leftarrow Q(s_t, a_t) + \alpha \left[ r_t + \gamma Q(s_{t+1}, a_{t+1}) - Q(s_t, a_t) \right] \tag{8}$$

where $s_t$ and $a_t$ are the current state and action, $r_t$ is the received reward, $s_{t+1}$ is the next state, and $a_{t+1}$ is the next action chosen using the current policy. Here, $\alpha$ is the learning rate ($0 < \alpha \le 1$) and $\gamma$ is the discount factor ($0 \le \gamma \le 1$). To guide the learning process, we define a warmup threshold $w$, representing the initial number of iterations focused on exploration; this allows the algorithm to adequately explore the memory controller parameters before commencing the optimization. A base seed is used to generate a unique seed for each agent.

A. Explainability of ReLMXEL

Following the approach of Juozapaitis et al. [8], ReLMXEL decomposes the conventional scalar RL reward into a vector representing system-level performance metrics. The Q-function is decomposed into individual Q-values, one for each reward type. For a given state $s$, an action $a_1$ is selected over $a_2$ iff:

$$\sum_c Q_c(s, a_1) > \sum_c Q_c(s, a_2). \tag{9}$$

To understand the preference further, we use RDX. However, this setup requires considering every reward component for every state-action pair. To simplify, we apply the MSX of Section II-C, which provides a rationale for selecting action $a_1$ over $a_2$ if

$$\sum_{c \in \mathrm{MSX}^+} \Delta_c(s, a_1, a_2) > d, \tag{10}$$

where $d$ is the disadvantage from negatively contributing factors.

Consider an action $a_1$ that uses the open page policy and improves latency and bandwidth but negatively impacts energy. Another action $a_2$, which uses the closed page policy, offers a large improvement in energy but negatively impacts latency and bandwidth. MSX identifies the smallest subset of components that adequately justifies the preference for $a_2$. For example, if the energy improvement is substantial enough to outweigh the latency and bandwidth drawbacks, MSX explains the decision as 'the improvement in energy alone justifies the action, despite losses in other components'.
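The RDX of Eq. (1) and the MSX+ selection of Eqs. (2), (3), and (10) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names `rdx` and `msx_plus` and the per-component Q-values for the two page-policy actions are hypothetical and chosen only to mirror the open-page versus closed-page example above.

```python
from typing import Dict, List


def rdx(q_a1: Dict[str, float], q_a2: Dict[str, float]) -> Dict[str, float]:
    """Reward Difference Explanation: per-component Q(s, a1) - Q(s, a2), Eq. (1)."""
    return {c: q_a1[c] - q_a2[c] for c in q_a1}


def msx_plus(delta: Dict[str, float]) -> List[str]:
    """Smallest set of positive components whose sum exceeds the disadvantage d.

    Returns an empty list when a1 is not actually preferred over a2.
    """
    # Eq. (3): total disadvantage from negatively contributing components.
    d = -sum(v for v in delta.values() if v < 0)
    # Taking the largest advantages first yields a subset of minimal size.
    positives = sorted((c for c in delta if delta[c] > 0),
                       key=lambda c: delta[c], reverse=True)
    chosen: List[str] = []
    total = 0.0
    for c in positives:
        if total > d:          # Eq. (2) already satisfied
            break
        chosen.append(c)
        total += delta[c]
    return chosen if total > d else []


# Hypothetical decomposed Q-values for one state (not measured data).
q_closed = {"energy": 5.0, "latency": 1.0, "bandwidth": 1.5}
q_open = {"energy": 2.0, "latency": 2.0, "bandwidth": 2.0}

delta = rdx(q_closed, q_open)      # energy +3.0, latency -1.0, bandwidth -0.5
explanation = msx_plus(delta)      # ['energy']: energy alone outweighs d = 1.5
reverse = msx_plus(rdx(q_open, q_closed))  # []: open page is not preferred here
```

With these made-up numbers the explanation reduces to the energy component alone, which is the kind of statement the text attributes to MSX. The necessity check of Eqs. (4) and (5) is omitted to keep the sketch short.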
Similarly, consider an action $a_3$ that uses the simple arbitration policy and reduces energy consumption significantly but negatively impacts latency and bandwidth usage. Another action $a_4$, using the reorder arbitration policy, provides moderate improvements in both latency and bandwidth with a slight increase in energy consumption. MSX could justify the action $a_3$ by explaining: 'The significant reduction in energy consumption is enough to justify $a_3$ against the moderate improvements in latency and bandwidth of $a_4$.'

IV. EXPERIMENTAL SETUP AND RESULTS

We performed experiments using DDR4 memory [9] in the DRAMSys simulator [10], featuring a burst length of eight, four bank groups with four banks each, and each bank comprising 32,768 rows and 1024 columns of size 8 bytes per device. The system uses a single-channel, single-rank configuration made up of x8 DRAM devices. The baseline memory controller employs an OpenAdaptive page policy, which outperforms static open and closed policies [11], and uses the widely adopted FR-FCFS scheduling algorithm [12] with a bankwise scheduler buffer supporting up to eight requests. It also supports an all-bank refresh policy with up to eight postponed and eight pulled-in refreshes. The controller manages up to 128 active transactions, and an arbitration unit reorders incoming requests.

We consider traces, generated using Intel's Pin tool [13], from the GEMM [14] and STREAM [15] benchmarks and from Breadth First Search (BFS). GEMM represents dense linear algebra operations, while STREAM consists of vector-based operations. Both demonstrate computational patterns that are characteristic of ML workloads. Additionally, we use traces from the SPEC CPU 2017 [16] suite. The memory-intensive applications fotonik_3d_s, mcf_s, lbm_s, and roms_s stress the memory hierarchy due to their large data sets and frequent memory accesses.
The compute-intensive workloads include xalancbmk_s and gcc_s, which involve heavy computation for tasks such as XML transformations and code compilation. The omnetpp_s workload requires intensive processing for network simulations while handling large amounts of simulation data, placing equal strain on the CPU and memory system. The SPEC CPU 2017 traces are generated using the ChampSim [17] simulator; the traces are captured by monitoring last-level cache misses during simulations that execute at least ten billion instructions.

The DRAMSys simulator, integrated with DRAMPower [18], provides performance metrics such as latency, average power consumption, total energy usage, and average and maximum bandwidth. To gain deeper insights into memory behavior, we also extract additional metrics. Bank group switches occur when the memory controller switches between different bank groups within the DRAM, and bank switches occur when it switches between different banks within a bank group. Additionally, we track row buffer hits, which represent instances where the requested data is already in the row buffer, and row buffer misses, which occur when the data is not in the buffer and additional time is required to fetch it from the corresponding row.

A. Results

The experiments use a discount factor ($\gamma$) of 0.9 and a learning rate ($\alpha$) of 0.1. These values are chosen based on design space exploration across $\gamma \in \{0.9, 0.95, 0.99\}$ and $\alpha \in \{0.01, 0.1, 0.3, 0.5, 0.6, 0.7, 0.8\}$. While each workload has its own optimal $(\gamma, \alpha)$ pair, the combination providing the highest reward across all workloads is used for all subsequent evaluations. We also introduce a trace-split parameter that segments the trace file into fixed-size partitions. After each partition, the model makes decisions about the parameters and takes feedback through the SARSA update using the reward vector and Q-tables, improving performance for the next timestep.
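The per-partition feedback step described above can be sketched as follows. This is an illustrative sketch under assumptions, not the authors' code: the class `DecomposedSarsaAgent`, its dictionary-based Q-table layout, and the toy state, action, and reward values are hypothetical. Only $\alpha = 0.1$, $\gamma = 0.9$, the $\epsilon$-greedy choice over Q-values summed across reward components (Algorithm 1), the use of the previously applied setting as the next state, and the SARSA rule of Eq. (8) are taken from the text.

```python
import random
from collections import defaultdict
from typing import Dict, Hashable, List


class DecomposedSarsaAgent:
    """One agent tuning one controller parameter, with one Q-value per reward component."""

    def __init__(self, actions: List[str], components: List[str],
                 alpha: float = 0.1, gamma: float = 0.9, seed: int = 0) -> None:
        self.actions = actions
        self.components = components
        self.alpha = alpha
        self.gamma = gamma
        self.rng = random.Random(seed)  # per-agent seed, as in Algorithm 1
        # q[(state, action)][component] -> float, defaulting to 0.0
        self.q = defaultdict(lambda: defaultdict(float))

    def total_q(self, state: Hashable, action: str) -> float:
        """Sum of the component Q-values, used for greedy action selection."""
        return sum(self.q[(state, action)][c] for c in self.components)

    def select(self, state: Hashable, epsilon: float) -> str:
        """Epsilon-greedy choice over the summed component Q-values."""
        if self.rng.random() < epsilon:
            return self.rng.choice(self.actions)
        return max(self.actions, key=lambda a: self.total_q(state, a))

    def update(self, s: Hashable, a: str, rewards: Dict[str, float],
               s_next: Hashable, a_next: str) -> None:
        """Apply the SARSA rule of Eq. (8) to each reward component separately."""
        for c in self.components:
            td_target = rewards[c] + self.gamma * self.q[(s_next, a_next)][c]
            self.q[(s, a)][c] += self.alpha * (td_target - self.q[(s, a)][c])


# Toy usage for a page-policy agent; the reward values are made up.
agent = DecomposedSarsaAgent(["Open", "Closed"], ["latency", "energy"], seed=1)
# Previous setting "Open" was the state, "Closed" was applied, so the next state is "Closed".
agent.update("Open", "Closed", {"latency": 1.0, "energy": 2.0}, "Closed", "Closed")
next_action = agent.select("Open", epsilon=0.0)  # greedy -> "Closed"
```

Keeping one Q-value per reward component, rather than a single scalar, is what makes the later RDX and MSX explanations possible: the greedy choice uses the sum, while the individual entries remain available for inspection.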
Through experimentation, we set the trace-split parameter to 30,000 and the exploration parameter $\epsilon_{\text{new}}$ to 0.001, as values like 0.01 hinder convergence due to excessive randomness, and values like 0.0001 limit exploration, slowing recovery from suboptimal choices. The percentage improvements are computed relative to the baseline as follows. For the energy and latency metrics, the improvement is calculated as

$$\text{Improvement (\%)} = \frac{\text{Baseline} - \text{ReLMXEL}}{\text{Baseline}} \times 100,$$

so that a positive value indicates a reduction compared to the baseline. For the bandwidth metric, the improvement is calculated as

$$\text{Improvement (\%)} = \frac{\text{ReLMXEL} - \text{Baseline}}{\text{Baseline}} \times 100,$$

so that a positive value indicates an increase compared to the baseline.

Table I. Comparison of Baseline and ReLMXEL Performance

| Workload | Time Steps | Threshold w | Baseline Reward | ReLMXEL Reward | Average Energy (%) | Average Bandwidth (%) | Average Latency (%) |
|---|---|---|---|---|---|---|---|
| STREAM | 20170 | 16000 | 15555.06 | 17597.07 | 3.84 | 8.39 | 0.23 |
| GEMM | 19468 | 17000 | 6572.88 | 7121.46 | 3.83 | 4.95 | 0.01 |
| BFS | 17995 | 14000 | 9673.14 | 10842.41 | 7.66 | 7.22 | -0.03 |
| fotonik_3d | 20770 | 17000 | 4870.89 | 9165.52 | 7.66 | 2.90 | 0.07 |
| xalancbmk | 16494 | 14000 | 3092.9 | 3320.38 | 7.68 | 107.03 | -0.02 |
| gcc | 17863 | 14000 | 9154.29 | 9556.25 | 7.66 | 1.70 | -0.24 |
| roms | 17563 | 14000 | 8017.8 | 13554.84 | 7.67 | 35.63 | 0.08 |
| mcf | 17894 | 14000 | 6013.5 | 6075.53 | 7.67 | 40.19 | -4.43 |
| lbm | 18473 | 15000 | 5496.77 | 14934.6 | 7.67 | 26.73 | 0.05 |
| omnetpp | 16682 | 14000 | 4743.99 | 6688.05 | 4.06 | 138.78 | -0.09 |

Fig. 3. Average energy consumption (Avg Energy in pJ/$10^9$ per workload, Baseline vs. ReLMXEL).

The improvement columns for average energy, bandwidth, and latency in Table I show that ReLMXEL improves energy and bandwidth over the baseline on every workload, while the change in latency stays within ±0.25% for all workloads except mcf (-4.43%). On memory-bound workloads such as STREAM and GEMM, ReLMXEL achieves higher bandwidth utilization and slightly reduced latency, while also exhibiting better energy efficiency than the baseline.
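The sign convention behind the improvement columns of Table I can be made explicit with a short sketch. The function names and the sample numbers below are hypothetical and are not values from the experiments; they only illustrate that a positive result means lower energy or latency, or higher bandwidth, than the baseline.

```python
def reduction_pct(baseline: float, relmxel: float) -> float:
    """Improvement for lower-is-better metrics (energy, latency)."""
    return 100.0 * (baseline - relmxel) / baseline


def increase_pct(baseline: float, relmxel: float) -> float:
    """Improvement for higher-is-better metrics (bandwidth)."""
    return 100.0 * (relmxel - baseline) / baseline


# Made-up measurements in arbitrary units.
energy_gain = reduction_pct(baseline=200.0, relmxel=190.0)    # 5.0: energy went down
bandwidth_gain = increase_pct(baseline=50.0, relmxel=60.0)    # 20.0: bandwidth went up
latency_gain = reduction_pct(baseline=100.0, relmxel=104.0)   # -4.0: latency got worse
```

A negative entry in the latency column therefore means that latency increased relative to the baseline.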
ReLMXEL also performs well in bandwidth utilization and energy efficiency for irregular and graph-based workloads, including BFS, fotonik_3d, and roms, as well as on compute-intensive workloads, such as xalancbmk, gcc, and lbm, reflecting optimized computation scheduling. Workloads with high memory traffic or communication demands, including mcf and omnetpp, achieve improvements in energy consumption and bandwidth utilization; however, a slight increase in latency indicates a trade-off between energy efficiency and data transfer overhead.

Fig. 4. Average bandwidth utilization (Avg Bandwidth in Gb/s per workload, Baseline vs. ReLMXEL).

Fig. 5. Average latency (Avg Latency in ps/$10^6$ per workload, Baseline vs. ReLMXEL).

Figures 3, 4, and 5 illustrate how ReLMXEL's dynamic tuning approach incrementally optimizes memory controller parameters through a step-by-step, feedback-driven process that adapts to real-time workload characteristics. Leveraging a multi-agent reinforcement learning framework with explainability, it balances competing objectives to optimize overall system performance. As a result, energy reductions of roughly 3.8% to 7.7% and bandwidth gains are achieved across diverse workloads, particularly in memory-bound and irregular patterns, without causing substantial latency degradation. This minimal impact on latency indicates that ReLMXEL successfully navigates the trade-offs inherent in system optimization and demonstrates the effectiveness of its adaptive, feedback-driven parameter tuning in delivering balanced and robust performance improvements.

V. CONCLUSION AND FUTURE DIRECTIONS

The proposed ReLMXEL-based memory controller achieved enhanced efficiency and transparency.
The proposed RL framework optimizes memory controller parameters while decomposing rewards to model energy, bandwidth, and latency trade-offs. Experimental results showed energy and bandwidth improvements across diverse workloads with minimal latency impact, confirming the framework's ability to balance competing system objectives. This integration of adaptive learning with interpretable decision-making marks a key advancement in memory systems, paving the way for future research into self-optimizing, high-performance architectures with explainability.

Because RL optimizes memory controller parameters and enables adaptive responses to dynamic workloads, RL-based optimizations can be extended to heterogeneous memory architectures, such as hybrid non-volatile memory systems, to assess their robustness in real-world scenarios. Integrating RL with hardware-in-the-loop setups would allow real-time interaction with actual hardware, bridging the gap between simulations and real-world applications. Additionally, RL can help in the efficient detection and mitigation of DRAM security threats such as row hammer attacks, by identifying malicious memory access patterns and adjusting memory access strategies to prevent data corruption or security breaches.

REFERENCES

[1] N. Wu and Y. Xie, "A survey of machine learning for computer architecture and systems," ACM Computing Surveys, vol. 55, no. 3, pp. 1–39, Feb. 2022. [Online]. Available: http://dx.doi.org/10.1145/3494523

[2] E. Ipek, O. Mutlu, J. F. Martínez, and R. Caruana, "Self-optimizing memory controllers: A reinforcement learning approach," in 2008 International Symposium on Computer Architecture, 2008, pp. 39–50.

[3] R. S. Sutton and A. G. Barto, Reinforcement Learning: An Introduction. Cambridge, MA, USA: A Bradford Book, 2018.

[4] G. A. Rummery and M. Niranjan, On-line Q-learning using connectionist systems. University of Cambridge, Department of Engineering, Cambridge, UK, 1994, vol. 37.

[5] C. J. C. H. Watkins and P. Dayan, "Q-learning," Machine Learning, vol. 8, no. 3, pp. 279–292, May 1992. [Online]. Available: https://doi.org/10.1007/BF00992698

[6] J. Albus, "New approach to manipulator control: The cerebellar model articulation controller (CMAC)," Sep. 1975. [Online]. Available: https://tsapps.nist.gov/publication/get_pdf.cfm?pub_id=820151

[7] R. Bera, K. Kanellopoulos, A. Nori, T. Shahroodi, S. Subramoney, and O. Mutlu, "Pythia: A customizable hardware prefetching framework using online reinforcement learning," in MICRO-54: 54th Annual IEEE/ACM International Symposium on Microarchitecture. ACM, Oct. 2021, pp. 1121–1137. [Online]. Available: http://dx.doi.org/10.1145/3466752.3480114

[8] Z. Juozapaitis, A. Koul, A. Fern, M. Erwig, and F. Doshi-Velez, "Explainable reinforcement learning via reward decomposition," in Proceedings of the International Joint Conference on Artificial Intelligence (IJCAI) Workshop on Explainable Artificial Intelligence, 2019.

[9] (2021) JEDEC DDR4 SDRAM standard document. [Online]. Available: https://www.jedec.org/standards-documents/docs/jesd79-4a

[10] L. Steiner, M. Jung, F. S. Prado et al., "DRAMSys4.0: An open-source simulation framework for in-depth DRAM analyses," International Journal of Parallel Programming, vol. 50, pp. 217–242, 2022.

[11] Intel Corporation, "Performance differences for open-page / close-page policy," Jul. 2024. [Online]. Available: https://www.intel.com/content/www/us/en/content-details/826015/performance-differences-for-open-page-close-page-policy.html

[12] S. Rixner, "Memory controller optimizations for web servers," in 37th International Symposium on Microarchitecture (MICRO-37'04), 2004, pp. 355–366.

[13] V. J. Reddi, A. Settle, D. A. Connors, and R. S. Cohn, "Pin: a binary instrumentation tool for computer architecture research and education," in Proceedings of the 2004 Workshop on Computer Architecture Education: Held in Conjunction with the 31st International Symposium on Computer Architecture, ser. WCAE '04. New York, NY, USA: Association for Computing Machinery, 2004, pp. 22–es. [Online]. Available: https://doi.org/10.1145/1275571.1275600

[14] A. Lokhmotov, "GEMMbench: a framework for reproducible and collaborative benchmarking of matrix multiplication," 2015. [Online]. Available: https://arxiv.org/abs/1511.03742

[15] J. D. McCalpin, "Memory bandwidth and machine balance in current high performance computers," IEEE Computer Society Technical Committee on Computer Architecture (TCCA) Newsletter, pp. 19–25, Dec. 1995. [Online]. Available: http://tab.computer.org/tcca/NEWS/DEC95/dec95_mccalpin.ps

[16] Standard Performance Evaluation Corporation, "SPEC CPU 2017 benchmark suite," 2017. [Online]. Available: https://www.spec.org/cpu2017/

[17] N. Gober, G. Chacon, L. Wang, P. V. Gratz, D. A. Jimenez, E. Teran, S. Pugsley, and J. Kim, "The Championship Simulator: Architectural Simulation for Education and Competition," arXiv e-prints, p. arXiv:2210.14324, Oct. 2022.

[18] K. Chandrasekar, C. Weis, Y. Li, S. Goossens, M. Jung, O. Naji, B. Akesson, N. Wehn, and K. Goossens, "DRAMPower: Open-source DRAM Power & Energy Estimation Tool," http://www.drampower.info, 2014, accessed: April 2025.
