PhasorFlow: A Python Library for Unit Circle Based Computing

We present PhasorFlow, an open-source Python library introducing a computational paradigm operating on the $S^1$ unit circle. Inputs are encoded as complex phasors $z = e^{iθ}$ on the $N$-Torus ($\mathbb{T}^N$). As computation proceeds via unitary wa…

Authors: Dibakar Sigdel, Namuna Panday

Abstract

We present PhasorFlow, an open-source Python library introducing a computational paradigm operating on the $S^1$ unit circle. Inputs are encoded as complex phasors $z = e^{i\phi}$ on the $N$-Torus ($\mathbb{T}^N$). As computation proceeds via unitary wave interference gates, global norm is preserved while individual components drift into $\mathbb{C}^N$, allowing algorithms to natively leverage continuous geometric gradients for predictive learning. PhasorFlow provides three core contributions. First, we formalize the Phasor Circuit model ($N$ unit circle threads, $M$ gates) and introduce a 22-gate library covering Standard Unitary, Non-Linear, Neuromorphic, and Encoding operations with full matrix algebra simulation. Second, we present the Variational Phasor Circuit (VPC), analogous to Variational Quantum Circuits (VQC), enabling optimization of continuous phase parameters for classical machine learning tasks. Third, we introduce the Phasor Transformer, replacing expensive $QK^\top V$ attention with a parameter-free, DFT-based token mixing layer inspired by FNet. We validate PhasorFlow on non-linear spatial classification, time-series prediction, financial volatility detection, and neuromorphic tasks including neural binding and oscillatory associative memory. Our results establish unit circle computing as a deterministic, lightweight, and mathematically principled alternative to classical neural networks and quantum circuits. It operates on classical hardware while sharing quantum mechanics' unitary foundations. PhasorFlow is available at https://github.com/mindverse-computing/phasorflow.
Keywords: unit circle computing, phasor circuits, variational circuits, Fourier transform, transformer, brain–computer interface, oscillatory computing

Dibakar Sigdel$^{1*}$, Namuna Panday$^{1}$
$^{1}$ Mindverse Computing LLC, WA 98087
March 19, 2026

1 Introduction

The landscape of computation has historically been defined by the mathematical structures upon which data is represented and transformed. Classical digital computers operate on discrete bits, which are points on a zero-dimensional manifold $\{0, 1\}$. Quantum computers leverage qubits, which reside in the complex projective space $\mathbb{CP}^1$, enabling true quantum superposition and non-local entanglement through unitary operations on exponentially scaling Hilbert spaces [1]. Between these extremes lies a rich hierarchy of computational manifolds that remain largely unexplored in the context of programmable classical computing frameworks. We refer to this hierarchy as the Geometric Ladder of Computation:

1. Bits: Points on a discrete set $\{0, 1\}$ (zero-dimensional).
2. Phasors: Points on the unit circle $S^1 \cong \mathrm{U}(1)$ (a one-dimensional continuous manifold).
3. Qubits: Points in complex projective space $\mathbb{CP}^n$, parameterized by both amplitude and phase.

We visualize these three primary computational paradigms in Figure 1. The unit circle $S^1$, corresponding to the unitary group $\mathrm{U}(1)$, occupies the middle ground of this ladder. It is the simplest continuous group that supports interference, periodicity, and Fourier analysis, three properties that are foundational to both signal processing and quantum mechanics [2, 3]. A computational element on $S^1$, which we term a phasor, is a complex number of unit modulus: $z = e^{i\phi}$, where $\phi \in [0, 2\pi)$ is a continuous phase angle.
Unlike qubits, whose state vectors reside in a linear Hilbert space allowing for true quantum superposition, phasor states begin strictly constrained to the $N$-Torus ($\mathbb{T}^N$), a compact, non-linear manifold. Because the $N$-Torus is not closed under addition, linear interference (mixing) naturally shifts the state off the manifold into the broader $\mathbb{C}^N$ complex space. Unlike rigid digital logic, this transient departure from unit magnitude allows phasor networks to scale continuous wave interference dynamically across layers. This comparison is summarized in Table 1.

In this paper, we present PhasorFlow, a Python library that provides a complete framework for unit circle based computing. PhasorFlow draws design inspiration from quantum computing frameworks such as Qiskit [4], adopting a circuit-based programming model in which a user defines a circuit of $N$ phasor threads (unit circles) and applies a sequence of $M$ gate operations. However, unlike quantum simulators, PhasorFlow circuits are evaluated analytically through direct matrix multiplication, yielding deterministic outputs without sampling noise.

$^{*}$ devdeep137@gmail.com

[Figure 1 panels]

Classical Bit
• Entity: Discrete bit $\in \{0, 1\}$.
• Operation: Logic gate (Boolean switch).
• Dynamics: Jumps between isolated states.

Unit Circle
• Entity: Continuous phase $\phi \in [0, 2\pi)$.
• Operation: Phase shift (scalar multiplication).
• Dynamics: Smooth rotation on a circle.

Qubit
• Entity: Superposition $\alpha|0\rangle + \beta|1\rangle$.
• Operation: Quantum gate (unitary matrix).
• Dynamics: Complex rotation in Hilbert space.

Figure 1: The three paradigms of computation. PhasorFlow introduces the Unit Circle paradigm as a continuous, deterministic bridge between discrete classical bits and complex, non-deterministic quantum qubits.

Table 1: Summary matrix of the three computational paradigms.
| Feature | Classical Bit | Unit Circle | Qubit |
|---|---|---|---|
| State Space | $2^N$ Discrete Points | Continuous Angles | Hilbert Space |
| Parameters | 0 (Rigid Logic) | $N$ (Linear Scaling) | $2^{N+1} - 2$ (Exponential) |
| Manifold | 0D Hypercube | $N$-Torus ($\mathbb{T}^N$) | Complex Projective $\mathbb{CP}^{2^N - 1}$ |
| Gate Type | Logic (AND/OR) | Rotation Matrices | Unitary Matrices |
| Execution | Deterministic | Deterministic (Phase) | Probabilistic (Superposition) |
| Connection | Wire Connectors | Phase Coupling / Mixing | Entanglement |

The development of PhasorFlow is motivated by the prevalence of complex spatio-temporal dynamics across diverse real-world domains. Many natural and artificial systems are inherently oscillatory or cyclical, rendering traditional Euclidean representations less effective. For instance, continuous dynamical systems often present complex periodic boundary interactions that are difficult to isolate with standard dense linear layers. In neuroscience, Brain-Computer Interface (BCI) data consists of continuous, high-density neural signals that are spatio-temporal and oscillatory in nature, naturally demanding phase-based analysis [5]. In systems biology, gene expression and multi-omics cellular activities are tightly regulated by circadian rhythms and other biological clocks, producing pronounced spatio-temporal transcriptomic profiles [6, 7]. Similarly, quantitative finance demands models capable of tracking the continuous, interconnected spatio-temporal fluctuations of asset portfolios interacting at high frequencies [8]. By elevating computation natively to the unit circle, PhasorFlow provides a mathematical architecture aligned with encoding, mixing, and extracting the phase-based interaction dynamics inherent in these complex systems.

The contributions of this work are threefold:

1. Phasor Circuit Formalism.
We define a complete gate algebra for unit circle computation comprising an expanded library of 22 gates categorized into Standard Unitary, Non-Linear, Neuromorphic, and Encoding operations. We prove that the linear gate set forms a unitary group action on the $N$-torus $\mathbb{T}^N = (S^1)^N$, and demonstrate the capability of PhasorFlow to perform Neuromorphic computing (such as neural binding and oscillatory associative memory) natively.

2. Variational Phasor Circuits (VPC). We introduce trainable phasor circuits for machine learning, analogous to Variational Quantum Circuits [9, 10] but operating entirely on classical hardware. VPCs encode data as phase angles, apply parameterized gate layers, and extract predictions through phase-to-probability mappings. We demonstrate VPC classifiers on high-dimensional synthetic non-linear boundary datasets.

3. Phasor Transformer and Single-Block Sequence Models. Inspired by the FNet architecture [11], we construct a Phasor Transformer that replaces the $O(n^2)$ self-attention mechanism with a parameter-free DFT-based token mixing layer. By utilizing a single transformer block with trainable phase projections, we establish a minimal, highly efficient sequence prediction mechanism built entirely from unit circle operations.

The remainder of this paper is organized as follows. Section 2 establishes the mathematical foundations of $\mathrm{U}(1)$ phasor computing. Section 3 details the three methodological contributions: phasor circuits, VPCs, and Phasor Transformers. Section 4 describes the PhasorFlow software architecture. Section 5 presents applications to non-linear binary classification, time-series prediction, and financial anomaly detection. Section 6 reports experimental results. Section 7 and Section 8 provide discussion, future directions, and concluding remarks.

2 Theory

This section establishes the mathematical framework for unit circle computing.
We first define phasor states on the $N$-torus, then formalize the unitary operators that act on those states. Throughout this section, $\phi$ denotes phasor state angles and $\theta$ denotes unitary-operator parameters.

2.1 State Space

Definition 2.1 (Phasor Circuit State). For $N$ computational threads, a phasor circuit state is a vector

$$\mathbf{z} = (z_1, \dots, z_N)^\top \in \mathbb{C}^N, \qquad z_k = e^{i\phi_k}, \qquad \phi_k \in [0, 2\pi), \tag{1}$$

with admissible encoded states constrained to $\mathbb{T}^N = (S^1)^N$ by $|z_k| = 1$ for all $k$.

A phasor circuit with $N$ independent unit circles (which we term threads) has its state residing on the $N$-torus:

$$\mathbb{T}^N = \underbrace{S^1 \times S^1 \times \cdots \times S^1}_{N} = (S^1)^N. \tag{2}$$

The state of the system is represented as a complex vector:

$$\mathbf{z} = \begin{pmatrix} z_1 \\ z_2 \\ \vdots \\ z_N \end{pmatrix} = \begin{pmatrix} e^{i\phi_1} \\ e^{i\phi_2} \\ \vdots \\ e^{i\phi_N} \end{pmatrix} \in \mathbb{C}^N, \qquad |z_k| = 1 \;\; \forall k. \tag{3}$$

The initial state of the circuit is defined as all phasors at zero phase:

$$\mathbf{z}_0 = \begin{pmatrix} 1 \\ 1 \\ \vdots \\ 1 \end{pmatrix}, \tag{4}$$

corresponding to $\phi_k = 0$ for all threads $k = 1, \dots, N$.

It is crucial to distinguish this $\mathbb{T}^N$ geometry from a standard linear vector space (such as the $\mathbb{R}^N$ hidden states of classical neural networks or the $\mathbb{C}^{2^N}$ Hilbert space of quantum mechanics). The $N$-Torus is a compact, non-linear manifold. While the state is represented mathematically as a complex vector, the strict constraint $|z_k| = 1$ implies that the linear addition of two valid states $\mathbf{z}_A + \mathbf{z}_B$ generally does not produce a valid state on the torus. The loss of the linear superposition principle is traded for absolute geometric stability: the state cannot explode to infinity.

Proposition 2.1 (Torus-Preserving Diagonal Action). Let $D = \mathrm{diag}(e^{i\theta_1}, \dots, e^{i\theta_N}) \in \mathrm{U}(1)^N$ be a diagonal phase-shift operator with gate parameters $\theta_k \in [0, 2\pi)$, and let $\mathbf{z} \in \mathbb{T}^N$. Then $D\mathbf{z} \in \mathbb{T}^N$.

Proof. Each coordinate transforms as $(D\mathbf{z})_k = e^{i\theta_k} z_k$ and therefore $|(D\mathbf{z})_k| = |e^{i\theta_k}|\,|z_k| = 1$.
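The state-space facts above can be checked numerically. The following is a minimal sketch in plain Python, not PhasorFlow's API: the helper names `encode`, `on_torus`, and `diagonal_shift` are hypothetical and introduced only for illustration. It encodes two states on $\mathbb{T}^3$, applies a diagonal phase shift as in Proposition 2.1, and shows that plain addition of two valid states leaves the torus.

```python
import cmath
import math

def encode(phases):
    """Map phase angles (radians) to unit-modulus phasors z_k = exp(i*phi_k)."""
    return [cmath.exp(1j * phi) for phi in phases]

def on_torus(state, tol=1e-12):
    """True when every coordinate has unit modulus, i.e. the state lies on T^N."""
    return all(abs(abs(z) - 1.0) < tol for z in state)

def diagonal_shift(state, thetas):
    """Apply D = diag(exp(i*theta_1), ..., exp(i*theta_N)) coordinate-wise."""
    return [cmath.exp(1j * t) * z for t, z in zip(thetas, state)]

# Two encoded states on T^3 (hypothetical example values).
z_a = encode([0.0, math.pi / 2, math.pi])
z_b = encode([0.0, math.pi / 2, 0.0])

shifted = diagonal_shift(z_a, [0.3, 1.7, 4.0])   # stays on the torus
summed = [a + b for a, b in zip(z_a, z_b)]       # moduli become 2, 2, 0

print(on_torus(z_a))      # True
print(on_torus(shifted))  # True
print(on_torus(summed))   # False
```

The third check fails because the coordinate-wise sums have moduli 2, 2, and 0, which illustrates why the torus is not closed under addition.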
[Figure 2 panels: 1 Unit ($S^1$) with phase $\phi_1$; 2 Units ($\mathbb{T}^2$) with phases $\phi_1, \phi_2$; 3 Units ($\mathbb{T}^3$) with phases $\phi_1, \phi_2, \phi_3$.]

Figure 2: The geometric evolution of the internal phase manifold depends on connecting dimension lines at the boundaries. An $N$-thread state resolves mathematically onto the periodic coordinate map of an $N$-Torus ($\mathbb{T}^N$).

2.2 Unitary Operations

Unitary operators act on phasor state vectors in the ambient vector space $\mathbb{C}^N$. A linear operator $U$ is unitary when

$$U U^\dagger = U^\dagger U = I, \qquad \text{where } U^\dagger = \bar{U}^\top. \tag{5}$$

The three operator structures used by PhasorFlow are:

U(1): single-thread phase rotation. A one-thread unitary has the scalar form

$$u(\theta) = e^{i\theta} \in \mathrm{U}(1), \qquad \theta \in [0, 2\pi). \tag{6}$$

Embedded into an $N$-thread state, this becomes a diagonal operator acting on one coordinate:

$$S_k(\theta) = \mathrm{diag}(1, \dots, e^{i\theta}, \dots, 1) \in \mathrm{U}(N). \tag{7}$$

U(2): two-thread mixing. A two-thread mixing operator acts on a 2D subspace as

$$M(\theta) = \begin{pmatrix} a(\theta) & b(\theta) \\ c(\theta) & d(\theta) \end{pmatrix} \in \mathrm{U}(2), \tag{8}$$

with orthonormal columns and rows implied by Equation (5). When $\det M(\theta) = 1$, the operator belongs to $\mathrm{SU}(2)$.

U(N): global mixing. Global couplers act on all threads simultaneously:

$$W(\theta) \in \mathrm{U}(N), \tag{9}$$

with the DFT matrix as a canonical parameter-free example in this class.

Hence, Shift, Mix, and DFT are interpreted at the level of group structure as

$$\text{Shift: } \mathrm{U}(1), \qquad \text{Mix: } \mathrm{U}(2), \qquad \text{DFT/global mixing: } \mathrm{U}(N). \tag{10}$$

If $\det(U) = 1$, the corresponding operation is special unitary ($\mathrm{SU}(n)$).

Under a unitary transformation, the state evolves as:

$$\mathbf{z}' = U \mathbf{z}. \tag{11}$$

Since unitary matrices preserve the $\ell_2$-norm of complex vectors, $\|\mathbf{z}'\| = \|U\mathbf{z}\| = \|\mathbf{z}\|$, the total energy of the system is conserved.

For a circuit with $M$ sequential gates $U_1, U_2, \dots, U_M$, the final state is:

$$\mathbf{z}_{\text{final}} = U_M \cdots U_2 \cdot U_1 \cdot \mathbf{z}_0 = \left( \prod_{m=1}^{M} U_m \right) \mathbf{z}_0. \tag{12}$$

The product of unitary matrices is itself unitary, so the entire linear circuit represents a single composite isometric operation.
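To make Equations (11) and (12) concrete, the sketch below applies a short gate sequence to $\mathbf{z}_0$ and checks that the $\ell_2$-norm stays at $\sqrt{N}$. It is an illustration in plain Python rather than PhasorFlow code: `shift` follows Equation (7), while `pair_rotation` is a hypothetical stand-in for a generic $\mathrm{U}(2)$ block (a real rotation), chosen only because it is easy to verify by hand.

```python
import cmath
import math

def identity(n):
    """n x n identity matrix as nested lists of complex numbers."""
    return [[1 + 0j if r == c else 0j for c in range(n)] for r in range(n)]

def shift(n, k, theta):
    """S_k(theta) from Equation (7): exp(i*theta) at (k, k), zero-based k."""
    U = identity(n)
    U[k][k] = cmath.exp(1j * theta)
    return U

def pair_rotation(n, j, k, theta):
    """Hypothetical U(2) block: a real rotation acting on threads j and k."""
    U = identity(n)
    c, s = math.cos(theta), math.sin(theta)
    U[j][j], U[j][k] = c, -s
    U[k][j], U[k][k] = s, c
    return U

def matvec(U, z):
    """Matrix-vector product U z."""
    return [sum(u * x for u, x in zip(row, z)) for row in U]

def norm(z):
    """l2-norm of a complex vector."""
    return math.sqrt(sum(abs(x) ** 2 for x in z))

def run_circuit(gates, z0):
    """Apply gates in order, so the result is U_M ... U_2 U_1 z0."""
    z = list(z0)
    for U in gates:
        z = matvec(U, z)
    return z

N = 4
z0 = [1 + 0j] * N  # all phasors at zero phase, Equation (4)
gates = [
    shift(N, 0, 0.7),
    pair_rotation(N, 0, 1, 0.4),
    shift(N, 2, 2.1),
    pair_rotation(N, 2, 3, 1.1),
]
z_final = run_circuit(gates, z0)

print(norm(z0), norm(z_final))               # both close to sqrt(N) = 2
print([round(abs(x), 3) for x in z_final])   # per-thread moduli need not be 1
```

The global norm is conserved, while the per-thread moduli printed on the last line are free to move away from 1 once a mixing block is involved.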
However, a critical geometric shift arises during execution. While a unitary mixing operation (such as $\mathrm{U}(2)$ Mix or global $\mathrm{U}(N)$ mixing) preserves the global $\ell_2$-norm $\|\mathbf{z}'\| = \|\mathbf{z}\|$, individual thread magnitudes $|z'_k|$ generally drift away from 1 due to interference. Thus, the state departs from the $N$-torus manifold into the ambient vector space $\mathbb{C}^N$. This drift is intentionally allowed during linear propagation for representational expressivity. A pull-back mechanism is then introduced in Section 3 (non-linear gates) to re-project states back toward $\mathbb{T}^N$ before the next cycle when topology control is required.

Theorem 2.1 (Unitary Energy Conservation with Coordinate Drift). Let $U \in \mathrm{U}(N)$ and $\mathbf{z} \in \mathbb{T}^N$. Then

$$\|U\mathbf{z}\|_2 = \|\mathbf{z}\|_2 = \sqrt{N}. \tag{13}$$

If some row of $U$ has at least two non-zero entries (that is, $U$ is neither diagonal nor a phase-weighted permutation such as the Permute gate), there exists $\mathbf{z} \in \mathbb{T}^N$ such that $U\mathbf{z} \notin \mathbb{T}^N$.

Proof. Unitarity gives norm preservation directly. For the second claim, let row $k$ of $U$ have at least two non-zero entries, so that output coordinate $k$ is a non-trivial linear combination of at least two unit-modulus entries. Choosing the input phases so that all terms align gives $|(U\mathbf{z})_k| = \sum_j |U_{kj}| > \big(\sum_j |U_{kj}|^2\big)^{1/2} = 1$, so coordinate-wise modulus is not preserved under interference.

Corollary 2.2 (Ambient-Space Propagation). Intermediate states of VPC and Phasor Transformer layers are naturally propagated in $\mathbb{C}^N$ even when encoded inputs lie on $\mathbb{T}^N$.

Remark 2.1 (State Space vs. Operator Space). It is essential to distinguish two distinct mathematical objects throughout this paper.

1. Phasor state vectors $\mathbf{z} \in \mathbb{C}^N$ are elements of a vector space. When all coordinates satisfy $|z_k| = 1$, the state lies on the sub-manifold $\mathbb{T}^N \subset \mathbb{C}^N$; after unitary mixing it may leave $\mathbb{T}^N$ while remaining in $\mathbb{C}^N$. The phase angle of coordinate $k$ is denoted $\phi_k = \arg(z_k) \in [0, 2\pi)$.

2. Gate operators $G \in \mathrm{U}(N)$ are group elements acting on $\mathbb{C}^N$. Their internal parameters are denoted $\theta$.
Shift operations are $\mathrm{U}(1)$ on individual threads (or $\mathrm{U}(1)^N$ diagonally), Mix operations are $\mathrm{U}(2)$ on thread pairs, and DFT operations are global $\mathrm{U}(N)$ maps. When an operator has determinant one, it is in the corresponding special unitary group $\mathrm{SU}(n)$.

In summary: $\phi$ (Greek phi) always denotes a phasor angle (state); $\theta$ (Greek theta) always denotes a gate rotation parameter (operator).

2.3 Phase Coherence and Interference

A key observable in phasor systems is the phase coherence, which measures the degree of alignment among the $N$ phasors:

$$C = \left| \frac{1}{N} \sum_{k=1}^{N} z_k \right| = \left| \frac{1}{N} \sum_{k=1}^{N} e^{i\phi_k} \right|. \tag{14}$$

When all phasors are perfectly aligned ($\phi_k = \phi$ for all $k$), the coherence reaches its maximum $C = 1$. When phases are uniformly distributed around the circle, destructive interference drives $C \to 0$. This observable provides a natural, parameter-free measure of the internal structure of a phasor state, and will be used as a feature detector in our applications.

2.4 Manifold Comparison

Table 2 summarizes the key differences between the computational manifolds on the Geometric Ladder.

Table 2: Comparison of computational manifolds.

| Property | Bit | Phasor ($S^1$) | Qubit ($\mathbb{CP}^1$) |
|---|---|---|---|
| Manifold dimension | 0 | 1 | 2 |
| State parameters | 1 (binary) | 1 ($\phi$) | 2 ($\theta, \phi$) |
| Deterministic | Yes | Yes | No |
| Interference | No | Yes | Yes |
| Superposition | No | No | Yes |
| Group structure | $\mathbb{Z}_2$ | $\mathrm{U}(1)$ | $\mathrm{SU}(2)$ |
| Hardware requirement | Classical | Classical | Quantum |

The phasor model inherits the classical wave interference property (enabling constructive and destructive combination) while remaining fully deterministic and executable on standard architecture without requiring quantum superposition or non-local quantum entanglement. This positions $S^1$ computing as an intermediate paradigm: more expressive than bit-based computing for oscillatory dynamics, yet fundamentally classical and practical for immediate application.
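The phase coherence observable of Section 2.3 can be sketched in a few lines. This example assumes that Equation (14) is read as the modulus of the mean phasor, which is the reading consistent with the stated maximum $C = 1$; the function name `coherence` is hypothetical and not taken from the library.

```python
import cmath
import math

def coherence(phases):
    """Modulus of the mean phasor: |(1/N) * sum_k exp(i*phi_k)|."""
    n = len(phases)
    return abs(sum(cmath.exp(1j * p) for p in phases) / n)

aligned = [0.9] * 6                                  # all phases equal
uniform = [2 * math.pi * k / 6 for k in range(6)]    # evenly spread on the circle
partial = [0.0, 0.2, 0.4]                            # loosely clustered

print(coherence(aligned))   # close to 1 (constructive interference)
print(coherence(uniform))   # close to 0 (destructive interference)
print(coherence(partial))   # strictly between 0 and 1
```

Because the observable depends only on the phases, it can be evaluated on any encoded state without trainable parameters.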
3 Method

This section presents the three principal methodological contributions of PhasorFlow: the Phasor Circuit model (Section 3.1), the Variational Phasor Circuit for machine learning (Section 3.2), and the Phasor Transformer architecture for sequence modeling (Section 3.3).

3.1 Phasor Circuits

A Phasor Circuit $P(N, M)$ is defined by $N$ unit circle threads and an ordered sequence of $M$ gate operations. The circuit maps an initial state $\mathbf{z}_0 \in \mathbb{T}^N$ to a final state $\mathbf{z}_f$ through sequential application of gate matrices. The PhasorFlow library organizes 22 core gate primitives into four operational categories: Standard Unitary, Non-Linear, Neuromorphic, and Encoding gates.

3.1.1 Shift Gate $S(\theta)$

The Shift gate applies a phase rotation of angle $\theta \in [0, 2\pi)$ to a single thread $k$. Acting on the full $N$-dimensional state, it is represented as an $N \times N$ diagonal matrix with $e^{i\theta}$ at position $(k, k)$ and ones elsewhere:

$$S_k(\theta) = \mathrm{diag}(1, \dots, \underbrace{e^{i\theta}}_{k\text{-th}}, \dots, 1). \tag{15}$$

[Figure 3: circuit diagram with five threads $\phi_1, \dots, \phi_5$ passing through Shift ($S$) and Mix ($M$) gates to outputs $\phi'_1, \dots, \phi'_5$.]

Figure 3: An example of an $N = 5$ continuous Phasor Circuit constructed from parameterized Shift ($S$) gates and fixed entangling Mix ($M$) gates, visually analogous to a parameterized quantum circuit cascade.

Since $|e^{i\theta}| = 1$, the diagonal matrix $S_k(\theta)$ is trivially unitary: $S_k(\theta) S_k(\theta)^\dagger = I$.

Applied to a single phasor state $e^{i\phi}$, the $1 \times 1$ matrix form is simply:

$$S(\theta) = e^{i\theta}, \tag{16}$$

producing $S(\theta) e^{i\phi} = e^{i(\phi + \theta)}$. In variational circuits, $\theta$ serves as a trainable phase weight, analogous to rotation parameters in parameterized quantum circuits [12].

Group membership. $S_k(\theta)$ acts as a $\mathrm{U}(1)$ operation on the target thread; embedded in the full $N$-dimensional operator algebra it becomes a diagonal element of $\mathrm{U}(1)^N \subset \mathrm{U}(N)$ with determinant $\det S_k(\theta) = e^{i\theta}$. It is in $\mathrm{SU}(N)$ only when $\theta = 0$ (identity).
The $N$-fold product of independent Shift gates $\prod_{k=1}^{N} S_k(\theta_k)$ generates the maximal torus $\mathrm{U}(1)^N$ of $\mathrm{U}(N)$.

[Figure 4: single-thread diagram, $\phi_{\text{in}} \to$ Shift $S(\theta) \to \phi_{\text{out}} = \phi_{\text{in}} + \theta$, with $e^{i\phi} \mapsto e^{i(\phi + \theta)}$.]

Figure 4: Circuit representation of the Shift gate acting on a single computation thread.

3.1.2 Mix Gate $M_{jk}$

The Mix gate creates interference between two threads $j$ and $k$. It is defined as a $2 \times 2$ unitary matrix that acts as a 50/50 beam splitter:

$$M_{jk} = \frac{1}{\sqrt{2}} \begin{pmatrix} 1 & i \\ i & 1 \end{pmatrix}. \tag{17}$$

Verification of unitarity:

$$M_{jk} M_{jk}^\dagger = \frac{1}{2} \begin{pmatrix} 1 & i \\ i & 1 \end{pmatrix} \begin{pmatrix} 1 & -i \\ -i & 1 \end{pmatrix} = \begin{pmatrix} 1 & 0 \\ 0 & 1 \end{pmatrix} = I. \tag{18}$$

The Mix gate transforms a pair of phasors $(z_j, z_k)$ as:

$$\begin{pmatrix} z'_j \\ z'_k \end{pmatrix} = \frac{1}{\sqrt{2}} \begin{pmatrix} z_j + i z_k \\ i z_j + z_k \end{pmatrix}. \tag{19}$$

This operation introduces phase-dependent coupling: the output phases depend on the relative phase difference $\phi_j - \phi_k$ between the two input threads. In the context of neural networks, the Mix gate functions as a fixed (non-parameterized) coupling layer that prevents trivial factorization of the circuit into independent single-thread operations.

Group membership. $M_{jk}$ is an element of $\mathrm{SU}(2)$ on the two-thread subspace: one can verify $\det(M_{jk}) = \tfrac{1}{2}(1 \cdot 1 - i \cdot i) = 1$, so $M_{jk} \in \mathrm{SU}(2) \subset \mathrm{U}(2)$. When embedded into the $N$-dimensional space it acts as the identity on all other threads, yielding $M_{jk} \in \mathrm{U}(N)$.

[Figure 5: two-thread diagram, inputs $\phi_j, \phi_k$ passing through a Mix gate $M$ to outputs $\phi'_j, \phi'_k$.]

Figure 5: Circuit representation of the Mix gate, entangling two adjacent continuous phase threads.

3.1.3 Discrete Fourier Transform (DFT) Gate

The DFT gate applies a global $N \times N$ unitary transformation across all threads simultaneously, mixing all phases through the discrete Fourier basis:

$$F_N = \frac{1}{\sqrt{N}} \begin{pmatrix} 1 & 1 & 1 & \cdots & 1 \\ 1 & \omega & \omega^2 & \cdots & \omega^{N-1} \\ 1 & \omega^2 & \omega^4 & \cdots & \omega^{2(N-1)} \\ \vdots & \vdots & \vdots & \ddots & \vdots \\ 1 & \omega^{N-1} & \omega^{2(N-1)} & \cdots & \omega^{(N-1)^2} \end{pmatrix}, \tag{20}$$

where $\omega = e^{-2\pi i / N}$ is a primitive $N$-th root of unity. The DFT matrix is unitary by construction: its rows (and columns) form an orthonormal set under the standard inner product on $\mathbb{C}^N$ [13].
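The Mix gate of Equation (19) and the DFT matrix of Equation (20) can be verified with a few lines of plain Python. The sketch below is illustrative and does not use PhasorFlow's API; `mix`, `dft_matrix`, and `gram` are hypothetical helper names. For unit-modulus inputs, expanding Equation (19) gives $|z'_j|^2 = 1 + \sin(\phi_j - \phi_k)$ and $|z'_k|^2 = 1 - \sin(\phi_j - \phi_k)$, so the pair energy stays at 2 while the split depends on the relative phase.

```python
import cmath
import math

def mix(z_j, z_k):
    """Equation (19): 50/50 beam-splitter mixing of two threads."""
    s = 1 / math.sqrt(2)
    return s * (z_j + 1j * z_k), s * (1j * z_j + z_k)

def dft_matrix(n):
    """Equation (20): unitary DFT matrix with omega = exp(-2*pi*i/n)."""
    w = cmath.exp(-2j * math.pi / n)
    s = 1 / math.sqrt(n)
    return [[s * w ** (r * c) for c in range(n)] for r in range(n)]

def gram(U):
    """Compute U U^dagger, which equals the identity for a unitary U."""
    n = len(U)
    return [[sum(U[r][m] * U[c][m].conjugate() for m in range(n))
             for c in range(n)] for r in range(n)]

# Mix gate: output moduli depend on the relative phase of the inputs.
phi_j, phi_k = 1.2, 0.5
out_j, out_k = mix(cmath.exp(1j * phi_j), cmath.exp(1j * phi_k))
delta = phi_j - phi_k
print(abs(out_j) ** 2, 1 + math.sin(delta))   # the two values agree
print(abs(out_k) ** 2, 1 - math.sin(delta))   # the two values agree

# DFT gate: largest deviation of F F^dagger from the identity.
F = dft_matrix(5)
G = gram(F)
err = max(abs(G[r][c] - (1 if r == c else 0)) for r in range(5) for c in range(5))
print(err < 1e-12)   # True
```

The nested-list representation keeps the example dependency-free; for larger $N$ an FFT routine would avoid forming the matrix explicitly.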
The action on the state vector transforms from the "spatial" domain to the "frequency" domain:

$$z'_k = \frac{1}{\sqrt{N}} \sum_{n=0}^{N-1} z_n \, \omega^{kn}, \qquad k = 0, 1, \dots, N-1. \tag{21}$$

Unlike the Shift and Mix gates, the DFT gate couples all $N$ threads simultaneously, creating global interference patterns. This makes it the most powerful mixing operation in PhasorFlow, and it plays a central role in the Phasor Transformer architecture (Section 3.3).

Group membership. $F_N \in \mathrm{U}(N)$ is a global isometry of the state space $\mathbb{C}^N$; each row and column is an orthonormal Fourier basis vector. Whether $F_N \in \mathrm{SU}(N)$ depends on $N$ (the determinant is a fourth root of unity, in $\{\pm 1, \pm i\}$, whose value depends on $N$), but in all cases $|\det F_N| = 1$.

3.1.4 Invert Gate

The Invert gate is a special case of the Shift gate with $\theta = \pi$:

$$I_k = S_k(\pi) = \mathrm{diag}(1, \dots, \underbrace{-1}_{k\text{-th}}, \dots, 1), \tag{22}$$

which maps $z_k \mapsto -z_k = e^{i(\phi_k + \pi)}$, reflecting the phasor across the origin.

[Figure 6: five-thread diagram, inputs $\phi_1, \dots, \phi_5$ passing through a single DFT ($F_5$) block to outputs $\phi'_1, \dots, \phi'_5$.]

Figure 6: Circuit representation of the $N = 5$ Discrete Fourier Transform (DFT) gate. Unlike strictly local or pairwise operations, the DFT acts as an all-to-all global unitary operator, extracting Fourier-basis classical wave interference phases across the entire thread registry simultaneously.

3.1.5 Additional Standard Unitary Gates

Beyond the core mixing and phase shift operations, PhasorFlow includes structural wire routing and aggregation gates:

Permute Gate. The Permute gate reorders thread indices natively without breaking continuous wave topologies. Given a permutation vector $\pi = (\pi_1, \dots, \pi_N)$, it maps the system state as:

$$P_\pi(\mathbf{z}) = (z_{\pi_1}, z_{\pi_2}, \dots, z_{\pi_N}). \tag{23}$$

Reverse Gate. The Reverse gate executes a time-reversal operator by globally conjugating the complex state vector across all $N$ threads:

$$R(\mathbf{z}) = \mathbf{z}^* = (z_1^*, z_2^*, \dots, z_N^*). \tag{24}$$

Accumulate Gate.
The Accumulate gate performs a local cumulative complex summation sweeping sequentially across adjacent threads:

$$A(\mathbf{z})_k = \sum_{j=1}^{k} z_j, \qquad k = 1, \dots, N. \tag{25}$$

This coherent phase summation powers wave-front dynamics, generating recursive structures like the Fibonacci sequence strictly through continuous wave interference.

GridPropagate Gate. For 2D dynamic programming, the GridPropagate gate simulates wavefront propagation across a lattice topology, where the state of each node $(r, c)$ accumulates its top and left neighbors:

$$G(Z)_{r,c} = Z_{r-1,c} + Z_{r,c-1}, \tag{26}$$

enabling native unit-circle evaluation of localized connectivity graphs.

3.1.6 Gate Library Summary

PhasorFlow ships with a comprehensive library of 22 primitive gates that compose into fully functional algorithmic and machine learning pipelines. Table 3 summarizes the complete operational toolkit.

Table 3: Summary of all 22 primitive gates available in the PhasorFlow computing framework, categorized by operation type.

| Category | Gate Name | Operation / Function Description |
|---|---|---|
| Standard Unitary | Shift | Phase rotation proportional to input value ($z \mapsto z \cdot e^{i\theta}$). |
| Standard Unitary | Invert | Phase flip by $\pi$ radians ($z \mapsto -z$). |
| Standard Unitary | Mix | Two-thread interference (beam splitter). |
| Standard Unitary | DFT | Global sequence token mixing via Discrete Fourier Transform. |
| Standard Unitary | Permute | Reordering of computing thread state indices. |
| Standard Unitary | Reverse | Time-reversal via global complex conjugation ($z \mapsto z^*$). |
| Standard Unitary | Accumulate | Cumulative complex wave summation ($z_{n+1} = z_{n+1} + z_n$). |
| Standard Unitary | GridPropagate | Wavefront propagation accumulation across a 2D lattice. |
| Non-Linear | Threshold | Filters low-magnitude phasors and forces outputs to zero. |
| Non-Linear | Saturate | Quantizes phase geometry toward discrete binary anchors. |
| Non-Linear | Normalize | Pulls any generic $\mathbb{C}^N$ state rigidly back to the $\mathbb{T}^N$ unit circle. |
| Non-Linear | LogCompress | Logarithmic amplitude compression ($\mu$-law analog). |
| Non-Linear | CrossCorrelate | Evaluates phase coherence between discrete pattern sequences. |
| Non-Linear | Convolve | Sliding continuous spatial convolution along threads. |
| Neuromorphic | Kuramoto | Global phase synchronization towards a mean alignment field. |
| Neuromorphic | Hebbian | Associative memory adaptation via nearest-neighbor phase pull. |
| Neuromorphic | Ising | Anti-ferromagnetic coupling driving bi-modal ($0, \pi$) symmetry. |
| Neuromorphic | Synaptic | Continuous drag/coupling between targeted neural oscillators. |
| Neuromorphic | AsymmetricCouple | Non-reciprocal directed phase influence across nodes. |
| Encoding | EncodePhase | Maps real-valued $r_i$ into the spatial phase domain $[0, 2\pi)$. |
| Encoding | EncodeAmplitude | Maps scalar magnitudes physically onto the wave norm. |

3.1.7 Non-Linear Gates

While the linear gates (Shift, Mix, DFT) execute unitary transformations that conserve global energy, they dynamically shift the individual threads away from the unit magnitude constraint. Moving the state vector off the $N$-Torus manifold into the full $\mathbb{C}^N$ complex space expands the system's ability to natively scale latent interaction magnitudes. For situations requiring explicit topology control or discrete programmatic decisions, PhasorFlow provides optional non-linear gates that act as geometric projections, breaking unitarity to force the state back towards $\mathbb{T}^N$.

Threshold Gate. The Threshold gate acts as a non-linear activation function on the phasor amplitude, optionally performing a rigid re-normalization step. Given a complex value $z$ with magnitude $|z|$ and a threshold parameter $\tau$:

$$T(\tau)(z) = \begin{cases} z / |z| & \text{if } |z| \geq \tau, \\ 0 & \text{if } |z| < \tau. \end{cases} \tag{27}$$

After Mix or DFT operations, individual thread amplitudes deviate from unity. The Threshold gate provides a discrete decision mechanism: phasors with sufficient geometric stability are rigidly mapped straight back to the $\mathbb{T}^N$ manifold, while weak destructive signals are strictly suppressed.
While this hard-constrains intermediate states, empirical models like the deep Variational Phasor Circuit often bypass this thresholding entirely to exploit continuous complex wave topologies.

Saturate Gate. The Saturate gate discretizes the continuous phase angle to one of $L$ equally spaced levels:

$$\mathrm{Sat}(L)(z) = \exp\!\left( i \cdot \mathrm{round}\!\left( \frac{\phi}{2\pi / L} \right) \cdot \frac{2\pi}{L} \right), \qquad \phi = \arg(z). \tag{28}$$

For $L = 2$, the Saturate gate snaps phases to either $0$ or $\pi$, effectively quantizing the continuous phasor to a binary representation. This gate enables error correction in oscillatory memory networks and serves as the physical foundation for Hopfield-type attractor dynamics [14, 15, 16, 17].

Additional Non-Linear Operations. The Normalize Gate (or PullBack) enforces unit-magnitude topology $|z| = 1$ iteratively during complex cascade flows. For dynamic range adjustment, the LogCompress Gate attenuates signal extrema. Finally, the CrossCorrelate and Convolve gates execute complex sequence sliding operations directly in the spatial phase domain.

3.1.8 Neuromorphic Gates

PhasorFlow introduces a suite of non-unitary associative tracking gates explicitly designed for brain-inspired computing.

• Kuramoto Gate: Implements global phase synchronization across continuous populations [18, 19].
• Hebbian Gate: Modifies outer-product associative phase links $\Delta W_{jk} \propto z_j \bar{z}_k$ to store multi-pattern oscillator memories [15].
• Ising Gate: A discrete coupling operator that drives threads to strictly bipartite consensus arrays.
• Synaptic & AsymmetricCouple Gates: Enact directed phase momentum transfer between distinct computational reservoirs.

3.1.9 Encoding Gates

The EncodePhase Gate and EncodeAmplitude Gate comprise the native interface for loading external real-valued data structures geometrically onto the $N$-Torus ($\mathbb{T}^N$) manifold or into full continuous $\mathbb{C}^N$ amplitudes.
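The Threshold and Saturate projections of Equations (27) and (28) can be sketched for a single complex value as follows. This is an illustration under stated assumptions, not the library's implementation: the function names are hypothetical, the guard against a zero magnitude is an added safety check that Equation (27) does not specify, and `cmath.phase` returns angles in $(-\pi, \pi]$, which yields the same snapped phasor as an angle in $[0, 2\pi)$ because the levels are periodic.

```python
import cmath
import math

def threshold(z, tau):
    """Equation (27): renormalize to unit modulus if |z| >= tau, else output 0."""
    mag = abs(z)
    # The mag > 0 guard avoids division by zero when tau == 0 (an assumption).
    if mag >= tau and mag > 0:
        return z / mag
    return 0j

def saturate(z, levels):
    """Equation (28): snap arg(z) to the nearest of `levels` equally spaced phases."""
    step = 2 * math.pi / levels
    phi = cmath.phase(z)  # in (-pi, pi]; equivalent modulo 2*pi
    # Python's round() resolves exact ties to the even integer.
    return cmath.exp(1j * round(phi / step) * step)

strong = 1.4 * cmath.exp(0.4j)   # drifted above unit modulus
weak = 0.2 + 0.1j                # mostly cancelled by interference

print(threshold(strong, tau=0.5))              # unit modulus, phase 0.4 kept
print(threshold(weak, tau=0.5))                # 0j
print(saturate(cmath.exp(2.9j), levels=2))     # close to -1 (phase pi)
print(saturate(cmath.exp(1.0j), levels=4))     # close to 1j (phase pi/2)
```

Applying `threshold` with `tau=0` to every non-zero thread behaves like a plain pull-back to unit modulus, which is one way to read the Normalize gate described above.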
3.1.10 Circuit Execution

A complete circuit $P(N, M)$ with gate sequence $G_1, G_2, \dots, G_M$ is executed by applying each gate matrix sequentially to the state vector:

$$\mathbf{z}_f = G_M \cdots G_2 \cdot G_1 \cdot \mathbf{z}_0. \tag{29}$$

Each gate $G_m$ acts on either a single thread (Shift, Invert), a pair of threads (Mix), or all threads (DFT). For single-thread and pair-thread gates, the operation is embedded into the full $N$-dimensional space by acting as the identity on all non-target threads. The computational cost of circuit execution is $O(M \cdot N^2)$ in the general case, dominated by the DFT gate applications.

Composite group structure. The sequential product of $M$ linear (unitary) gates yields a total circuit operator

$$U_{\text{circ}} = G_M \cdots G_1 \in \mathrm{U}(N), \tag{30}$$

because the product of unitary matrices is unitary. If $\det G_m = 1$ for every gate $m$ (as is the case for Mix gates), then $U_{\text{circ}} \in \mathrm{SU}(N)$; Shift gates with $\theta \neq 0$ contribute a unit-modulus phase factor to $\det U_{\text{circ}}$, keeping it in $\mathrm{U}(N) \setminus \mathrm{SU}(N)$ in general. Non-linear gates (Threshold, Normalize, Saturate, etc.) break unitarity and are therefore not elements of $\mathrm{U}(N)$; they act as projections onto sub-manifolds of $\mathbb{C}^N$.

3.1.11 Leaky-Integrate-and-Phase (LIP) Layer

The LIP layer provides a continuous-time dynamical system for $N$ coupled oscillators, inspired by the Kuramoto model [18]:

$$\frac{d\phi_k}{dt} = -\gamma (\phi_k - \phi_{\text{rest}}) + \sum_{j=1}^{N} W_{kj} \sin(\phi_k - \phi_j) + I_k^{\text{ext}}, \tag{31}$$

where $\gamma$ is the leak rate, $\phi_{\text{rest}}$ is the resting phase, $W_{kj}$ are synaptic coupling weights, and $I_k^{\text{ext}}$ is external input.

The LIP layer enables simulation of neural binding phenomena, the process by which distributed neural populations achieve phase synchronization to represent a unified percept.
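One way to explore Equation (31) is a forward-Euler integration, sketched below in plain Python. The integrator, step size, and function names (`lip_step`, `simulate`) are assumptions made for illustration; the source does not state how PhasorFlow discretizes the dynamics, and phases are not wrapped to $[0, 2\pi)$ here. The coupling term is implemented exactly as written in Equation (31); under that sign convention, negative weights pull the two phases together in the second example.

```python
import math

def lip_step(phases, weights, gamma, phi_rest, ext, dt):
    """One forward-Euler step of Equation (31), implemented as written."""
    n = len(phases)
    new_phases = []
    for k in range(n):
        coupling = sum(weights[k][j] * math.sin(phases[k] - phases[j])
                       for j in range(n))
        dphi = -gamma * (phases[k] - phi_rest) + coupling + ext[k]
        new_phases.append(phases[k] + dt * dphi)
    return new_phases

def simulate(phases, weights, gamma, phi_rest, ext, dt, steps):
    """Iterate lip_step for a fixed number of steps and return the final phases."""
    for _ in range(steps):
        phases = lip_step(phases, weights, gamma, phi_rest, ext, dt)
    return phases

# Example 1: no coupling, so the leak term relaxes every phase to phi_rest.
zero_w = [[0.0] * 3 for _ in range(3)]
relaxed = simulate([0.0, 1.0, 2.0], zero_w, gamma=1.0, phi_rest=0.5,
                   ext=[0.0] * 3, dt=0.01, steps=2000)
print([round(p, 4) for p in relaxed])   # all close to 0.5

# Example 2: no leak, negative off-diagonal weights, two oscillators.
neg_w = [[0.0, -1.0], [-1.0, 0.0]]
synced = simulate([0.0, 1.0], neg_w, gamma=0.0, phi_rest=0.0,
                  ext=[0.0, 0.0], dt=0.01, steps=2000)
print([round(p, 4) for p in synced])    # the two phases meet near 0.5
```

A smaller `dt` or a higher-order integrator would be the natural refinement when the coupling weights are large.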
3.1.12 Associative Memory (Hopfield-Phase Model)

The PhasorFlowMemory class implements an oscillatory Hopfield network that stores and retrieves phase patterns via Hebbian learning:

$$W = \frac{1}{P} \sum_{p=1}^{P} \mathbf{z}^{(p)} \mathbf{z}^{(p)\dagger}, \qquad W_{kk} = 0, \tag{32}$$

where $\mathbf{z}^{(p)} = e^{i\boldsymbol{\phi}^{(p)}}$ are the stored phase patterns and $P$ is the number of patterns. Pattern recovery from a corrupted input proceeds via iterative phase-locking:

$$\mathbf{z}^{(t+1)} = \frac{\mathbf{z}^{(t)} + \delta t \cdot W \mathbf{z}^{(t)}}{\left| \mathbf{z}^{(t)} + \delta t \cdot W \mathbf{z}^{(t)} \right|}, \tag{33}$$

where the division is element-wise and re-normalizes each phasor to the unit circle. These dynamics converge to the nearest stored attractor, enabling content-addressable memory retrieval [14, 20].

3.2 Variational Phasor Circuit (VPC)

The Variational Phasor Circuit (VPC) extends the Phasor Circuit model to machine learning by introducing trainable parameters. The VPC architecture is directly analogous to Variational Quantum Circuits (VQCs) [9, 10] and parameterized quantum circuits [12], but operates deterministically on classical hardware.

3.2.1 Architecture

A VPC consists of three stages: encoded phasor input, variational unitary evolution, and deterministic state extraction.

Definition 3.1 (Single-Layer VPC Operator). Given an encoded input phasor state $\mathbf{z}_{\text{in}} \in \mathbb{T}^N$ and trainable operator parameters $\boldsymbol{\theta} \in \mathbb{R}^N$, a single-layer VPC operator is

$$V(\boldsymbol{\theta}) = U_{\text{local}} \left( \prod_{k=1}^{N} S_k(\theta_k) \right), \tag{34}$$

where $U_{\text{local}} = \prod_{k=0,2,4,\dots} M_{k,k+1}$ is local pairwise mixing. The forward state is $\mathbf{z}_f = V(\boldsymbol{\theta}) \mathbf{z}_{\text{in}}$.

[Figure 7: four-thread circuit diagram (threads $z_0$ to $z_3$). The encoded state $\mathbf{z}_{\text{in}}$ passes through input Shift gates $S(\theta^{\text{in}}_k)$, Mix gates $M_{0,1}$ and $M_{2,3}$, Shift gates $S(\theta_k)$, Mix gate $M_{1,2}$, and output Shift gates $S(\theta^{\text{out}}_k)$ to the output state $\mathbf{z}_f$.]

Figure 7: The continuous physical circuit architecture of a Variational Phasor Circuit (VPC).
Externally encoded phasor states ($z_{\text{in}}$) enter the network directly; hardware Mix gates provide interference-based coupling that supersedes classical multi-layer matrices on the unit-circle manifold, while trainable unitary-operator parameters ($\theta$) modulate subsequent Shift layers.

Stage 1: Data Encoding. Data encoding is performed upstream of the VPC. The circuit receives an already encoded phasor state

$$ z_{\text{in}} = \left( e^{i\phi_1}, e^{i\phi_2}, \ldots, e^{i\phi_N} \right)^{\top} \in \mathbb{T}^N, \tag{35} $$

where $\phi_k$ are phase coordinates produced by an external encoder. Consequently, encoding operations are not counted as VPC gates.

Stage 2: Variational Layer. The VPC variational layer applies two unitary operations in sequence: (i) trainable Shift rotations and (ii) local pairwise Mix coupling.

$$ V(\theta) = U_{\text{local}} \cdot \prod_{k=1}^{N} S_k(\theta_k), \tag{36} $$

where $\theta = (\theta_1, \ldots, \theta_N)$ are the trainable operator parameters,

$$ U_{\text{local}} = \prod_{k=0,2,4,\ldots} M_{k,k+1}, \tag{37} $$

with local interference generated entirely by Mix gates. Thus, Stage 2 explicitly contains the two VPC operations used in this architecture: Shift ($S_k$) and local Mix ($M_{k,k+1}$). The resulting Stage-2 unitary evolution is

$$ z_f = \underbrace{\prod_{k=0,2,4,\ldots} M_{k,k+1}}_{\text{Local mix}} \cdot \underbrace{\prod_{k=1}^{N} S_k(\theta_k)}_{\text{Shift parameters}} \cdot z_{\text{in}}. \tag{38} $$

Corollary 3.1 (Deterministic VPC Readout). For fixed $(z_{\text{in}}, \theta)$, the VPC output probabilities from Equations (42) and (43) are deterministic functions of $z_f$ with no sampling variance.

A single-layer VPC introduces an exceptionally lean parameter count of $|\theta| = N$.

Proposition 3.1 (Linear Parameter Footprint of VPC). For depth $L$ and width $N$, if each layer contributes one trainable phase per thread, then the total trainable parameter count is

$$ P_{\text{VPC}} = N L. \tag{39} $$

Stacked (Deep) VPC. As with transformer blocks, VPC layers can be stacked. Let

$$ V^{(\ell)} = U^{(\ell)}_{\text{local}} \left( \prod_{k=1}^{N} S_k\!\left(\theta^{(\ell)}_k\right) \right), \tag{40} $$

for layer index $\ell = 0, \ldots, L-1$.
The depth-$L$ propagation is

$$ z^{(\ell+1)} = V^{(\ell)} z^{(\ell)}, \qquad z^{(0)} = z_{\text{in}}, \tag{41} $$

with final state $z_f = z^{(L)}$. If strict manifold control is required between layers, an optional pull-back operation can be inserted after each layer to re-project intermediate states toward $\mathbb{T}^N$ before the next layer.

Stage 3: Deterministic State Extraction. The final state vector $z_f$ is evaluated deterministically by extracting the phase(s) of designated output threads.

For binary classification, the phase of thread 0 is mapped to a probability using a sinusoidal envelope:

$$ p = \frac{\sin(\phi_0) + 1}{2} \in [0, 1], \tag{42} $$

where $\phi_0 = \arg(z_{f,0})$ is the phase angle of the first output thread.

For multi-class classification with $K$ classes, the absolute phases of the first $K$ threads serve as logits, passed through a softmax function:

$$ p_c = \frac{\exp(|\phi_c|)}{\sum_{c'=0}^{K-1} \exp(|\phi_{c'}|)}, \qquad c = 0, 1, \ldots, K-1, \tag{43} $$

where $\phi_c = \arg(z_{f,c})$.

3.2.2 Loss Functions and Optimization

The trainable operator parameters $\theta$ are optimized to minimize a task-specific loss function. For binary classification, we use the mean squared error (MSE):

$$ \mathcal{L}_{\text{MSE}}(\theta) = \frac{1}{|\mathcal{D}|} \sum_{(\phi, y) \in \mathcal{D}} \left( p(\phi; \theta) - y \right)^2. \tag{44} $$

For multi-class classification, we use categorical cross-entropy:

$$ \mathcal{L}_{\text{CE}}(\theta) = -\frac{1}{|\mathcal{D}|} \sum_{(\phi, y) \in \mathcal{D}} \log p_y(\phi; \theta). \tag{45} $$

Because the circuit execution is fully analytic (no stochastic sampling), the loss landscape is smooth and continuous. With the recent integration of native PyTorch complex tensor operations, this enables efficient large-scale optimization leveraging continuous Autograd backpropagation:

• PyTorch Adam (Adaptive Moment Estimation): Now powers the majority of deep PhasorFlow training tasks.
By propagating continuous phases directly through the $\mathbb{C}^N$ wave interference without hard $S^1$ topology clipping, Adam follows exact gradients across deep VPC and Transformer stacks, substantially accelerating convergence over thousands of parameters.

• COBYLA / L-BFGS-B: Retained for smaller heuristic networks or hybrid quantum pipelines (such as joint measurement optimization in Phasor-to-Qubit scenarios) where analytic gradients are unavailable and derivative-free (COBYLA) or finite-difference (L-BFGS-B) approximations are required.

Unlike quantum VQCs, which suffer from barren plateau problems due to the exponentially large Hilbert space, VPCs operate by tracking structural wave variations that scale predictably across $\mathbb{C}^N$, where the loss landscape dimensionality scales linearly with $N$ rather than exponentially.

3.3 Phasor Transformer

The Phasor Transformer adapts the transformer architecture [21] to unit circle computing by replacing the self-attention mechanism with the DFT gate. This approach is directly inspired by Google's FNet [11], which demonstrated that replacing the $QK^{\top}V$ attention layer with a Fourier transform achieves comparable performance on many NLP benchmarks while reducing computational complexity from $O(n^2)$ to $O(n \log n)$.

3.3.1 Architecture

A Phasor Transformer follows the same structural pattern as VPC: Stage 1 Data Encoding, Stage 2 Variational Transformer Layer, and Stage 3 Deterministic State Extraction.

Stage 1: Data Encoding. For a context window of length $T$, raw inputs $s = (s_1, \ldots, s_T)$ are externally mapped to phase coordinates $\phi = (\phi_1, \ldots, \phi_T)$ and encoded as

$$ \phi_t = \frac{s_t}{\max |s|} \cdot \frac{\pi}{2}, \tag{46} $$

which initializes the transformer input state $z_{\text{in}} = (e^{i\phi_1}, \ldots, e^{i\phi_T})^{\top}$.

Stage 2: Variational Transformer Layer. A single Phasor Transformer block consists of three unitary operations applied in sequence:

1. Pre-Projection (Feed-Forward Network): Parameterized Shift gates applied to each thread:

$$ \text{Pre-FFN}(\theta^{\text{pre}}) = \prod_{k=1}^{T} S_k(\theta^{\text{pre}}_k). \tag{47} $$

2. Token Mixing (DFT Attention): A global DFT gate mixes all $T$ sequence tokens in the frequency domain:

$$ \text{TokenMix} = F_T. \tag{48} $$

3. Post-Projection (Feed-Forward Network): A second set of parameterized Shift gates:

$$ \text{Post-FFN}(\theta^{\text{post}}) = \prod_{k=1}^{T} S_k(\theta^{\text{post}}_k). \tag{49} $$

The complete transformer block is:

$$ B(\theta) = \text{Post-FFN}(\theta^{\text{post}}) \cdot F_T \cdot \text{Pre-FFN}(\theta^{\text{pre}}). \tag{50} $$

For depth $D$, blockwise propagation is

$$ z^{(\ell+1)} = B(\theta^{(\ell)})\, z^{(\ell)}, \qquad \ell = 0, \ldots, D-1, \tag{51} $$

with $z^{(0)} = z_{\text{in}}$.

[Figure 8 diagram: four token threads $\phi_0$ to $\phi_3$. Input sequence $\phi$, then pre-projection Shift gates $S(\theta^{\text{pre}}_k)$, the global $F_T$ (TokenMix) gate, post-projection Shift gates $S(\theta^{\text{post}}_k)$, and output sequence $H$.]

Figure 8: The continuous physical circuit architecture of the Phasor Transformer Block $B(\theta)$. Classical self-attention matrices are superseded by global $F_T$ interference (TokenMix), preceded and followed by parameterized Shift gate layers ($S(\theta)$).

Definition 3.2 (Phasor Transformer Block). For context length $T$, a block is the operator

$$ B(\theta) = S(\theta^{\text{post}})\, F_T\, S(\theta^{\text{pre}}), \tag{52} $$

where $S(\cdot)$ is diagonal phase rotation and $F_T$ is the DFT token mixer.

Each block has $2T$ trainable parameters (the pre- and post-projection weights). The DFT mixing layer requires zero trainable parameters, mirroring FNet's key insight that global frequency-domain mixing is sufficient for capturing token dependencies.

Theorem 3.2 (Parameter-Efficient Global Mixing). For a depth-$D$ phasor transformer with context length $T$, the trainable parameter count scales as $(2D + 1)T$ under a single-thread readout head, while each block preserves global token coupling through $F_T$ without an explicit $T \times T$ attention map.
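The block operator of Definition 3.2 and the encoding of Equation (46) can be sketched in a few lines of plain Python. This is a hedged illustration rather than the library's code: the function names are hypothetical, the DFT is assumed to carry a $1/\sqrt{T}$ normalization so that it is unitary, and the DFT is evaluated by the direct $O(T^2)$ sum for readability (an FFT would give the $O(T \log T)$ cost discussed in the text).

```python
import cmath
import math

def encode(s):
    """Eq. (46): phi_t = s_t / max|s| * pi/2, returned as unit phasors."""
    peak = max(abs(v) for v in s)
    return [cmath.exp(1j * (v / peak) * math.pi / 2) for v in s]

def shift(z, thetas):
    """Diagonal phase rotation S(theta): thread k is multiplied by exp(i * theta_k)."""
    return [zk * cmath.exp(1j * th) for zk, th in zip(z, thetas)]

def dft(z):
    """DFT token mixer F_T with an assumed 1/sqrt(T) (unitary) normalization."""
    T = len(z)
    scale = 1.0 / math.sqrt(T)
    return [
        scale * sum(z[n] * cmath.exp(-2j * math.pi * k * n / T) for n in range(T))
        for k in range(T)
    ]

def block(z, theta_pre, theta_post):
    """B(theta) = S(theta_post) . F_T . S(theta_pre), applied right to left."""
    return shift(dft(shift(z, theta_pre)), theta_post)

z_in = encode([0.2, 0.8, -0.4, 0.9])
theta_pre = [0.1, -0.3, 0.5, 0.0]    # illustrative values, not trained parameters
theta_post = [0.0, 0.2, -0.1, 0.4]
z_out = block(z_in, theta_pre, theta_post)

# The block is unitary, so the global norm is preserved even though
# individual components generally leave the unit circle.
norm_in = math.sqrt(sum(abs(v) ** 2 for v in z_in))
norm_out = math.sqrt(sum(abs(v) ** 2 for v in z_out))
```

The only trainable quantities in this sketch are the $2T$ entries of `theta_pre` and `theta_post`, which matches the per-block parameter count stated above.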
Corollary 3.3 (Complexity Regime). Replacing dot-product attention with DFT mixing yields a token-mixing cost of $O(T \log T)$ per block and linear parameter growth in $T$.

Stage 3: Deterministic State Extraction. After the final block, the output phase of a designated readout thread is deterministically decoded. For one-step forecasting,

$$ \hat{s}_{T+1} = \phi_0 \cdot \frac{\max |s|}{\pi / 2}, \tag{53} $$

where $\phi_0 = \arg(z_{\text{out},0})$ is the readout phase.

4 Implementation

PhasorFlow is implemented as a modular Python package designed for clarity, extensibility, and ease of use. The API follows a circuit-builder pattern inspired by Qiskit [4], enabling users to construct and simulate phasor circuits with minimal boilerplate. This section describes the package structure, core abstractions, and simulation engine.

4.1 Package Structure

The PhasorFlow package is organized into five principal modules:

• phasorflow.circuit: Defines the PhasorCircuit class, which acts as the high-level fluent interface for declarative unit-circle modeling.

• phasorflow.gates: Houses the library's 22 primitive operations, grouped into Standard Unitary (Shift, Mix, DFT, etc.), Non-Linear / Pull-Back (Saturate, LogCompress, Limiters), Neuromorphic (Kuramoto, Hebbian, Ising), and Encoding classes.

• phasorflow.models: A repository of higher-level abstractions offering pre-configured topologies such as the Variational Phasor Circuit (VPC), PhasorTransformer, and PhasorGAN.

• phasorflow.visualization: Supplies rendering hooks (TextDrawer, MatplotlibDrawer) that translate instruction tuples into standardized schematic diagrams.

• phasorflow.engine: Provides the AnalyticEngine, the PyTorch-accelerated backend that executes circuits via vectorized complex tensor algebra.
4.2 Circuit Builder API

A circuit is instantiated by specifying the number of unit circle threads $N$, after which instructions are appended progressively via a fluent method-chaining syntax:

    import phasorflow as pf
    import torch

    # Define inputs mapping onto pi bounds
    classical_data = torch.tensor([0.2, 0.8, -0.4, 0.9])

    # Allocate a 4-thread Continuous Circuit Manifold
    pc = pf.PhasorCircuit(4)

    # Data Embedding and Waveguide Interference
    (pc.encode_phases(classical_data)
       .shift(thread_idx=0, phi=3.1415)
       .mix(thread_a=0, thread_b=1)
       .mix(thread_a=2, thread_b=3)
       .pullback()   # Non-linear energy projection
       .dft()        # Global hardware dispersion mapping
       .measure("final_out"))

    # Execute the topology strictly on PyTorch Complex Tensors
    backend = pf.Simulator.get_backend('analytic_simulator')
    result = backend.run(pc)

    # Extract resultant phases bound between [-pi, pi]
    print(result['final_out_phases'])

Listing 1: Constructing and simulating a continuous physical circuit pipeline using PhasorFlow's fluent API.

Internally, each chained call acts declaratively: it appends a distinct instruction tuple (str:gate_name, list:targets, dict:parameters) to the circuit's instruction list without triggering eager allocation. Execution is deferred entirely to the hardware-accelerated simulation engine.
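To make the deferred-execution idea concrete, the following is a small stand-alone sketch of a builder that records instruction tuples of the shape (gate_name, targets, parameters) and returns itself from every call. `TinyCircuit` and its methods are hypothetical names invented for this illustration; they are not the PhasorFlow classes, and no simulation is performed here.

```python
class TinyCircuit:
    """Hypothetical stand-in for a deferred-execution circuit builder."""

    def __init__(self, n_threads):
        self.n_threads = n_threads
        self.instructions = []  # list of (gate_name, targets, parameters) tuples

    def _append(self, name, targets, **params):
        targets = list(targets)
        for t in targets:
            if not 0 <= t < self.n_threads:
                raise IndexError(f"thread {t} out of range for {self.n_threads} threads")
        # Only record the instruction; nothing is computed at build time.
        self.instructions.append((name, targets, dict(params)))
        return self  # returning self is what enables method chaining

    def shift(self, thread_idx, phi):
        return self._append("shift", [thread_idx], phi=phi)

    def mix(self, thread_a, thread_b):
        return self._append("mix", [thread_a, thread_b])

    def dft(self):
        return self._append("dft", range(self.n_threads))

pc = TinyCircuit(4).shift(0, 3.1415).mix(0, 1).mix(2, 3).dft()

for name, targets, params in pc.instructions:
    print(name, targets, params)
```

Keeping the circuit as plain data has a practical benefit: the same instruction list can be handed to a simulator, a drawer, or a validator without rebuilding the circuit.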
4.3 Analytic Simulation Engine

The AnalyticEngine executes a circuit by initializing a complex state vector $z_0$ (typically the uniform manifold array $\mathbf{1} \in \mathbb{C}^N$) and walking sequentially through the instruction list described in Section 4.2. All mathematical computations execute over 64-bit PyTorch complex tensors (torch.complex64). This provides two architectural benefits.

First, unitary operations such as $S_k$, $M_{j,k}$, and the TokenMix $F_T$ are evaluated as hardware-accelerated linear complex maps without phase-wrapping defects. Second, the framework's non-linear pull-back operations (thresholding, logarithmic compression, and saturation) as well as continuous-time Neuromorphic evolutions (such as local temporal step $dt$ updates) are computed without intermediary conversions.

Upon completion, or at specific marked measure() junction states, the engine returns dictionaries containing the extracted complex Cartesian vectors alongside their analytic polar angles $\phi_k = \arg(z_k)$, integrating directly into existing gradient descent loops (Autograd backpropagation).

5 Applications

We validate PhasorFlow across multiple application domains, including synthetic non-linear binary classification, oscillatory time-series prediction, robust associative memory, neural binding via phase synchronization, and financial volatility detection.

5.1 Non-linear Binary Classification

We demonstrate the capacity of the Variational Phasor Circuit (VPC) to solve classification problems on continuous, non-linear boundaries. We generate a synthetic dataset mapping a high-dimensional feature domain onto the $S^1$ unit circle for a binary classification task.

5.1.1 Dataset and Formulation

Phase coordinates are initially sampled as independent random variables $\phi \sim U(0, 2\pi)$ across $N = 16$ dimensions.
The true target class $y \in \{0, 1\}$ is determined by an underlying hidden continuous sum-of-cosines threshold:

$$ y = \mathbb{1}\!\left( \sum_{k=1}^{N} \cos(\phi_k) > 0 \right). \tag{54} $$

We generate 1000 samples bounded within $[-\pi, \pi]$ over 16 channels. The objective of the VPC is to discover the phase-interference logic that resolves this non-linear class boundary.

5.1.2 VPC Architecture

We employ a single-layer Variational Phasor Circuit consisting of $N = 16$ parallel threads:

• Data Encoding (External): Input features are first mapped to encoded phases $\phi_i$, forming $z_{\text{in}} \in \mathbb{T}^{16}$.

• Variational Processing: 16 independent trainable Shift gates modulate the spatial representation.

• Local Interference Coupling: Pairwise Mix gates create local phase interactions across adjacent threads.

• Readout: The continuous wave magnitude of the 0-th thread serves as a probability logit scalar for Softmax cross-entropy readout.

This formulation yields precisely 16 trainable phase parameters.

5.2 Oscillatory Time-Series Prediction

We evaluate the Phasor Transformer on a synthetic composite waveform prediction task. The target signal is a linear combination (classical sum) of three sinusoidal components with added Gaussian noise:

$$ s(t) = \sin(t) + 0.5 \cos(3t) + 0.25 \sin(7t) + \epsilon(t), \qquad \epsilon \sim \mathcal{N}(0, 0.1). \tag{55} $$

The signal is sampled at 1000 contiguous time points and framed into overlapping causal sequences of context length $T = 32$, with the task of predicting the subsequent values autoregressively.

A 2-block Phasor Transformer with 128 total parameters replaces standard $O(T^2)$ self-attention with a unitary discrete Fourier projection over the sequence, significantly reducing the algorithmic overhead while operating natively on frequency-domain relationships.
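The data preparation described above (Equation (55), causal framing with $T = 32$, and the phase encoding of Equation (46)) can be sketched as follows. This is an illustrative reconstruction under stated assumptions, not the authors' pipeline: the sampling step `dt`, the random seed, and the function names are hypothetical, and the noise parameter 0.1 in Equation (55) is interpreted here as a standard deviation.

```python
import math
import random

def composite_signal(n_points, dt=0.05, noise_std=0.1, seed=0):
    """Sample s(t) = sin t + 0.5 cos 3t + 0.25 sin 7t + noise on a uniform grid."""
    rng = random.Random(seed)
    signal = []
    for i in range(n_points):
        t = i * dt  # assumed sampling step; the paper does not state it
        clean = math.sin(t) + 0.5 * math.cos(3 * t) + 0.25 * math.sin(7 * t)
        signal.append(clean + rng.gauss(0.0, noise_std))
    return signal

def causal_windows(signal, context_len):
    """Pair each length-T context with the single value that immediately follows it."""
    return [
        (signal[i:i + context_len], signal[i + context_len])
        for i in range(len(signal) - context_len)
    ]

def encode_window(window):
    """Eq. (46): scale a window into phases within [-pi/2, pi/2]."""
    peak = max(abs(v) for v in window)
    return [(v / peak) * math.pi / 2 for v in window]

signal = composite_signal(1000)
pairs = causal_windows(signal, context_len=32)
phases = encode_window(pairs[0][0])
```

Each `(context, target)` pair is one training example for one-step-ahead forecasting; the window is normalized by its own peak, so the same scale must be reused when decoding a prediction with Equation (53).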
5.3 Financial Volatility Detection

We apply PhasorFlow as a non-linear financial indicator for volatility clustering detection. A synthetic 200-day asset with Open-High-Low-Close-Volume (OHLCV) data is generated with a predefined "crisis period" (days 80–120) characterized by elevated price volatility (10% vs 2% in the normal regime) and volume spikes.

For each trading day $t$, the 5 normalized OHLCV features $f_k$ are mapped to phase angles via $\phi_k = \pi \cdot \tanh(f_k)$ and then encoded through Shift parameters $\theta_k = \phi_k$ in an $N = 5$ phasor circuit with the following structure:

• Data encoding: 5 Shift gates.

• Adjacent coupling: Mix gates on pairs $(0,1)$, $(1,2)$, $(2,3)$ to couple O → H → L → C.

• Global mixing: DFT gate (includes Volume in the global interference).

The daily phase coherence $C(t)$ of the output state vector is computed as the mean magnitude of the complex state (Equation (14)). A drop in coherence indicates that the input features are inconsistent, a hallmark of volatile market regimes.

5.4 Algorithmic Logic

Beyond machine learning, PhasorFlow circuits execute programmatic Data Structures and Algorithms (DSA) logic deterministically, without requiring trainable weights. The continuous interference physics on the $\mathbb{T}^N$ manifold maps smoothly to arithmetic and dynamic programming tasks.

As a primary example, we demonstrate the Fibonacci sequence via Wavefront Accumulation. Using the Accumulate Gate, which applies a cumulative sum of complex states $z_{n+1} \leftarrow z_{n+1} + z_n$ across adjacent computing threads, a simple unparameterized circuit initializes a sparse phase impulse on the first two threads. When subjected to a sequential accumulation sweep, the overlapping wave amplitudes generate deterministic constructive interference that matches the integer Fibonacci sequence, scaled continuously into the wave norm.
This capacity to solve recursive DSA problems illustrates the computational versatility of the Phasor Circuit.

5.5 Period Finding

As a demonstration of the DFT gate's algebraic capabilities, we implement a classical analog of Shor's period-finding subroutine [22]. The modular exponentiation sequence $7^n \pmod{15} = [1, 7, 4, 13]$ for $n = 0, \ldots, 3$ is encoded as phases on $N = 4$ threads, after which a global DFT is applied. The resulting spectral magnitudes reveal the dominant frequency component corresponding to the period $r = 4$ of the sequence, from which the factors of 15 can be derived: $\gcd(7^{r/2} - 1, 15) = 3$ and $\gcd(7^{r/2} + 1, 15) = 5$.

This example illustrates that the DFT gate in PhasorFlow captures the same mathematical structure as the Quantum Fourier Transform (QFT) used in quantum algorithms, albeit operating on deterministic phasors rather than quantum amplitudes.

5.6 Neuromorphic Computing

In addition to deep learning paradigms, PhasorFlow serves as a simulator for brain-inspired neuromorphic computing, leveraging the intrinsic physics of coupled continuous oscillators.

5.6.1 Neural Binding via Kuramoto Consensus

The Kuramoto gate enables the simulation of large-scale phase synchronization dynamics. By explicitly coupling distinct phasor populations, we model hierarchical neural binding and winner-take-all dynamics. Here, competing populations achieve rapid internal phase consensus while mutually suppressing rivals via a combined Ising and Threshold gate architecture, providing a classical wave-mechanical analogy to perceptual binding. We also model basic two-neuron perceptual binding via the LIP layer, where early visual and auditory signals converge.

5.6.2 Oscillatory Associative Memory

Through the Hebbian gate, PhasorFlow implements a continuous-phase Hopfield attractor network. Multiple discrete phase patterns (e.g., alternating 0 and $\pi$) are holographically stored by modifying the structural phase links $\Delta W_{jk}$.
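The storage rule of Equation (32) and the phase-locking update of Equation (33) can be illustrated with a short plain-Python sketch. It is a minimal example under assumptions, not the Hebbian gate or the PhasorFlowMemory class: the function names, the step size `dt`, and the iteration count are hypothetical, only a single alternating 0/$\pi$ pattern is stored, and no Saturate gate is applied.

```python
import cmath
import math

def hebbian_weights(patterns):
    """Eq. (32): W = (1/P) * sum_p z^(p) z^(p)^dagger with zero diagonal."""
    P, N = len(patterns), len(patterns[0])
    W = [[0j] * N for _ in range(N)]
    for z in patterns:
        for k in range(N):
            for j in range(N):
                if k != j:
                    W[k][j] += z[k] * z[j].conjugate() / P
    return W

def recall(z, W, dt=0.5, steps=20):
    """Eq. (33): z <- (z + dt * W z) / |z + dt * W z|, normalized element-wise."""
    N = len(z)
    for _ in range(steps):
        field = [sum(W[k][j] * z[j] for j in range(N)) for k in range(N)]
        z = [z[k] + dt * field[k] for k in range(N)]
        z = [v / abs(v) for v in z]  # pull every thread back onto the unit circle
    return z

def overlap(a, b):
    """|<b, a>| / N: equals 1 when a and b agree up to a global phase."""
    return abs(sum(x * y.conjugate() for x, y in zip(a, b))) / len(a)

# Store one alternating 0 / pi pattern on 8 threads.
stored_phases = [0.0, math.pi] * 4
stored = [cmath.exp(1j * p) for p in stored_phases]
W = hebbian_weights([stored])

# Corrupt two threads by a quarter turn in opposite directions.
noisy_phases = list(stored_phases)
noisy_phases[1] += math.pi / 2
noisy_phases[4] -= math.pi / 2
noisy = [cmath.exp(1j * p) for p in noisy_phases]

initial_overlap = overlap(noisy, stored)
recovered = recall(noisy, W)
final_overlap = overlap(recovered, stored)
```

The overlap is measured up to a global phase because the update in Equation (33) is invariant under a common rotation of all threads, so a recalled pattern is only defined modulo such a rotation.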
When presented with a corrupted or incomplete input signal, recurrent execution of the Hebbian structural coupling alongside discretizing Saturate gates drives the network to converge exponentially onto the closest originally stored target phase array, effectively acting as a high-capacity content-addressable memory.

6 Results

This section reports quantitative results for each application presented in Section 5.

6.1 VPC Binary Classification

The Variational Phasor Circuit (VPC) with $N = 16$ unit circles and $|\theta| = 16$ trainable parameters was evaluated on the synthetic non-linear dataset of 1,000 high-dimensional samples. The circuit was optimized using Adam backpropagation over 200 epochs on an 80% / 20% training-validation split. Table 4 summarizes the overall learning performance.

Table 4: VPC binary classification results (synthetic dataset, $N = 16$, $|\theta| = 16$).

Metric               | Initial Check | Post-Convergence
Training MSE Loss    | ~0.25         | < 0.05
Validation Accuracy  | ~50.0%        | 100.0%
Optimizer            | -             | Adam (lr = 0.1)

[Figure 9 panels: A. Spatial Phase Signatures (phase in rad vs thread index; Class 0 mean and Class 1 mean). B. Optimization Convergence (train and validation MSE, log scale, vs epoch). C. Classification Accuracy (train and validation accuracy in % vs epoch). D. Test Confusion Matrix (true label vs predicted label; true 0: 90, 4; true 1: 7, 99). Figure title: VPC Single Classification | Acc=94.50% | Precision=0.961 | Recall=0.934 | F1=0.947.]

Figure 9: VPC binary classification performance on the synthetic dataset, illustrating the loss convergence and the resulting probability distribution.
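For concreteness, the forward pass and loss evaluated in this section (Equations (38), (42), (44), and (54)) can be sketched in plain Python. This is a hedged sketch, not the library's implementation: the function names are hypothetical, the Mix gate is assumed to be the 50/50 beam-splitter matrix $\frac{1}{\sqrt{2}}\begin{pmatrix}1 & i\\ i & 1\end{pmatrix}$ (chosen because it is unitary with unit determinant, as Section 3.1.10 requires of Mix gates), and training with Adam via autograd is omitted.

```python
import cmath
import math
import random

INV_SQRT2 = 1.0 / math.sqrt(2.0)

def label(phases):
    """Eq. (54): y = 1 if sum_k cos(phi_k) > 0, else 0."""
    return 1 if sum(math.cos(p) for p in phases) > 0 else 0

def vpc_forward(phases, thetas):
    """Single-layer VPC: Shift on every thread, then Mix on pairs (0,1), (2,3), ..."""
    z = [cmath.exp(1j * (p + t)) for p, t in zip(phases, thetas)]
    out = list(z)
    for k in range(0, len(z) - 1, 2):
        a, b = z[k], z[k + 1]
        # Assumed Mix gate: (1/sqrt(2)) * [[1, i], [i, 1]] acting on threads k, k+1.
        out[k] = INV_SQRT2 * (a + 1j * b)
        out[k + 1] = INV_SQRT2 * (1j * a + b)
    return out

def readout(z_f):
    """Eq. (42): p = (sin(arg z_f[0]) + 1) / 2."""
    return (math.sin(cmath.phase(z_f[0])) + 1.0) / 2.0

def mse_loss(dataset, thetas):
    """Eq. (44): mean squared error between readout probability and label."""
    return sum((readout(vpc_forward(x, thetas)) - y) ** 2 for x, y in dataset) / len(dataset)

rng = random.Random(0)
N = 16
dataset = []
for _ in range(200):
    x = [rng.uniform(0.0, 2.0 * math.pi) for _ in range(N)]
    dataset.append((x, label(x)))

thetas = [0.0] * N  # untrained parameters; an optimizer would update these
loss0 = mse_loss(dataset, thetas)
z_f = vpc_forward(dataset[0][0], thetas)
```

Because the forward pass is a closed-form function of `thetas`, the loss can be differentiated exactly; in a PyTorch setting the same expressions written with complex tensors would let `torch.optim.Adam` update the 16 phases directly.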
As depicted in Figure 9, the minimal VPC learned to separate the two continuous classes perfectly from random initializations, indicating that linearly parameterized phase circuits coupled with local Mix interference capture generalized decision-boundary structure without hidden layer expansions.

6.2 Sequence Benchmarking

To evaluate global sequence representation learning beyond Euclidean multi-head attention, we evaluated the Phasor Transformer on autoregressive prediction of multi-frequency signals with additive Gaussian noise over sequences of context length $T = 10$.

[Figure 10 panels: A. Test Sequence Prediction (amplitude vs sample index; ground truth and prediction). B. Parity Plot (predicted vs true amplitude; ideal $y = x$). C. Residual Distribution (count vs prediction error). D. Frequency-Domain Consistency (magnitude vs normalized frequency; ground-truth FFT and prediction FFT). Figure title: Phasor Transformer Evaluation | MSE=0.0705, MAE=0.2235, RMSE=0.2655, Corr=0.947.]

Figure 10: Phasor Transformer performance on sequence benchmarking, detailing the learning convergence and prediction capabilities.

Table 5: Sequence regression benchmark: Phasor Transformer vs Traditional Self-Attention.

Model                             | Test MSE | Trainable Params | Mixing Complexity
Phasor Transformer (DFT)          | ~0.07    | 50               | $O(T \log T)$
PyTorch Transformer (Self-Attn)   | ~0.003   | > 1,000          | $O(T^2)$

As shown in Figure 10 and Table 5, while standard transformers with large hidden multi-head parameter counts can readily regress continuous sequential data, the Phasor Transformer offers a viable alternative with a large reduction in parameter count and mixing complexity.
By replacing data-dependent query-key projections with a parameter-free unitary DFT mixing step, it isolates frequency structure and yields qualitatively similar test-set interpolation (test MSE of approximately 0.07 versus approximately 0.003 for the self-attention baseline).

6.3 Financial Volatility Detection

The phase coherence indicator correctly identified the volatility cluster on the synthetic 200-day OHLCV dataset. During the crisis period (days 80–120), the coherence $C(t)$ exhibited a pronounced drop (falling to approximately 0.5) relative to the stable market regime (which maintained approximately 0.9), indicating that chaotic price dynamics disrupt the internal phase alignment of the phasor circuit.

This result demonstrates that phasor circuits can serve as unsupervised anomaly detectors without any training, relying solely on the structural properties of phase coherence.

[Figure 11 panels: A. Simulated Price Path (close price vs day; crisis period marked). B. Phase-Coherence Indicator (coherence EMA vs day; threshold = 0.347). C. Ground-Truth Volatility Regime (true volatility sigma vs day). D. Crisis Detection Confusion Matrix (true vs predicted; true Normal: 207, 53; true Crisis: 33, 27). Figure title: Financial Volatility Detection | Precision=0.338 | Recall=0.450 | F1=0.386.]

Figure 11: Financial volatility detection with phasor phase coherence. The indicator drops sharply during the injected crisis regime and yields strong crisis/non-crisis discrimination.

6.4 Associative Memory and Binary Image Denoising

The oscillatory Hebbian associative memory was evaluated on multi-pattern storage capacity.
When configured to store multiple target arrays (e.g., Pattern C), the circuit exhibited successful attractor recovery given a heavily corrupted input; after just 10 iterations of structural coupling alongside Saturate gates, the mean phase error dropped and the state locked accurately onto the target (phase difference < 0.05 radians). Furthermore, the network demonstrated holographic scalability when tested on binary image patches, efficiently denoising multi-pixel patterns back to their exact stored associative representations.

[Figure 12 panels: A. Stored Pattern. B. Corrupted Input. C. Recovered Output. D. Empirical Memory Capacity (recall accuracy in % vs stored pattern count; reference line 0.14N = 2.2). Figure title: Associative Memory Results | Mean phase error: 0.785 -> 0.000 rad.]

Figure 12: Associative memory recovery and scaling. Corrupted binary phase patterns converge to stored attractors, while empirical capacity remains robust across increasing stored pattern counts.

6.5 Neural Binding via Phase Synchronization

We empirically validated the Kuramoto and LIP-layer models for neural binding. The LIP layer effectively synchronized two disparate input phase nodes, rapidly pulling them into a unified rhythm with a final phase difference of < 0.001 radians. Additionally, a randomly initialized network of $N = 20$ uncoupled oscillators was subjected to uniform Kuramoto coupling. Within 50 discrete integration steps, the population exhibited rapid phase convergence, driving the global phase coherence metric $C$ from an initial near-zero baseline to $\geq 0.95$, successfully emulating biological macroscopic synchronization.

[Figure 13 panels: A. LIP Phase Convergence (phase in rad vs iteration; Neuron 1 and Neuron 2). B. LIP Phase Difference Decay (|Delta phase| in rad vs iteration). C. Kuramoto Phase Trajectories (phase in rad vs iteration). D. Final Oscillator Phases (sin(theta) vs cos(theta)). Figure title: Neural Binding Results | Final LIP Delta=0.0563 rad | Kuramoto Order=1.000 | Spread=0.0000 rad.]

Figure 13: Neural binding through phase synchronization. LIP local coupling rapidly collapses phase difference, while Kuramoto global coupling drives oscillator consensus and a high order parameter.

6.6 Parameter Efficiency

Table 6 reviews the representational efficiency of continuous state parameterization.

Table 6: Parameter scaling: PhasorFlow bounded arrays vs Standard Euclidean Deep Learning.

Experimental Task                         | Phasor Model        | Params | Euclidean Equivalent
Binary Target Classification ($N = 16$)   | VPC ($S^1$)         | 16     | Dense MLP (> 1,000)
Sequence Regression ($T = 10$)            | Phasor Transformer  | 50     | Self-Attn Transformer (> 1,000)

Across diverse tasks, the unit circle circuit formulation uses 1–2 orders of magnitude fewer explicit parameters to resolve complex functions. This compression is a direct consequence of structurally confining computation to the continuous interference manifold $\mathbb{T}^N$.

7 Discussion

7.1 Advantages of Unit Circle Computing

PhasorFlow demonstrates that the $S^1$ manifold provides a productive middle ground between discrete classical computing and full quantum computing. Several advantages emerge from this positioning:

Determinism. Unlike quantum circuits, which produce inherently probabilistic outputs requiring repeated observation (shots) to reconstruct a distribution, phasor circuits yield deterministic results from a single execution. This eliminates the statistical sampling overhead that scales as $O(1/\epsilon^2)$ for quantum algorithms requiring precision $\epsilon$.

Lightweight parameterization. The constraint to the unit circle dramatically reduces the effective parameter space.
A VPC with $N$ threads and $L$ layers has $NL$ real-valued parameters, compared to $O(N^2)$ for a fully connected neural network with the same input dimensionality. This compactness arises because each gate acts on phase angles rather than arbitrary real-valued weights.

Native Fourier structure. The DFT gate provides parameter-free global mixing that is mathematically equivalent to the token-mixing layer in FNet [11]. In traditional deep learning, this level of global token interaction requires an attention map whose cost grows as $O(n^2)$; in PhasorFlow, it is achieved with zero parameters through the intrinsic algebraic structure of $\mathrm{U}(1)$.

Classical hardware execution. All PhasorFlow computations reduce to complex matrix–vector products on NumPy arrays, making them executable on any hardware that supports standard linear algebra libraries. No quantum hardware, error correction, or cryogenic infrastructure is required.

7.2 Relationship to Quantum Computing

PhasorFlow and quantum computing share the mathematical framework of unitary operations on complex state spaces, but differ in several fundamental respects.

Quantum computing operates on the full complex projective space $\mathbb{CP}^n$, where states carry both amplitude and phase information, enabling true quantum superposition and non-local entanglement. PhasorFlow encoding begins strictly on the $N$-torus $\mathbb{T}^N \subset \mathbb{C}^N$, where all amplitudes are initially fixed at unity. However, as the depth of the deterministic system grows, linear wave interference naturally shifts these components into the wider $\mathbb{C}^N$ complex space. We have found that explicitly permitting these excursions away from $\mathbb{T}^N$, rather than forcing rigid non-linear pullbacks, significantly improves predictive accuracy.
This evolution explicitly eliminates quantum superposition (no state is a probabilistic weighted sum of basis states) while amplifying classical wave interactions, giving networks continuous gradient tracking unhindered by non-linear magnitude clipping.

Crucially, the exponential speedup of algorithms like Shor's algorithm [22] relies on the exponential dimensionality of the quantum Hilbert space ($2^N$ for $N$ qubits), which is not available in the linear state space of PhasorFlow ($N$ dimensions for $N$ threads). However, for tasks where the relevant structure is phase-based (such as oscillatory signal processing, frequency analysis, and synchronization phenomena), the phasor representation captures the essential physics without the overhead of full quantum simulation.

7.3 Relationship to Unitary Neural Networks

Several works have explored unitary and complex constraints in neural networks [23, 2]. Arjovsky et al. [24] proposed unitary evolution RNNs to address the vanishing/exploding gradient problem, and Wisdom et al. [25] extended this to full-capacity unitary RNNs. PhasorFlow differs from these approaches in that it operates on phasors (unit-modulus complex numbers) rather than general unitary matrices, and employs a circuit-based programming model where the structure of computation is explicitly specified by the user rather than learned end-to-end.

The Holographic Reduced Representations of Plate [20] and the recent work of Frady et al. [26] on computing with randomized phase vectors share PhasorFlow's use of phasor algebra for information representation, though PhasorFlow provides a general-purpose circuit programming framework rather than specialized memory architectures.

7.4 Limitations

Representational capacity.
The restriction to diagonal (phase-shift) feed-forward layers limits the representational capacity of VPCs and Phasor Transformers compared to full-rank linear transformations. While this is mitigated by the DFT and Mix gates, which provide structured off-diagonal coupling, the model cannot learn arbitrary linear maps.

Scale. The library now runs natively on PyTorch. Autograd tracking combined with torch.optim optimizers allows Phasor Transformers and VPCs to be trained with tens of thousands of parameters. However, evaluating billion-parameter scales purely through classical simulation remains resource intensive without dedicated photonic processors.

Validation. The applications in this work use synthetic datasets. Validation on large real-world continuous datasets and dense financial time-series streams would strengthen the empirical claims.

7.5 Future Directions

Several directions for future work are envisioned:

1. Quaternion extension ($S^3$): Extending from the $S^1$ circle to the $S^3$ three-sphere would enable operations via the unit quaternions, which are isomorphic to SU(2), providing a richer (3-parameter) state space while remaining classically executable [27].

2. Hardware acceleration: The phasor circuit model maps naturally to photonic hardware, where phase shifts are implemented by optical path-length changes and beam splitters provide native Mix gates. Neuromorphic oscillator arrays could also serve as physical backends [28].

3. Multi-Modal Synthesis: Building on the parameter generation capabilities demonstrated by the hybrid 'Phasor-to-Music' and 'Phasor-to-Qubit' proofs of concept, we aim to deploy continuous $\mathbb{C}^N$ sequences for generative multimedia tasks.

4. Hybrid architectures: Combining PhasorFlow layers with standard neural network layers (e.g., using a VPC as an embedding layer for a conventional classifier) could leverage the strengths of both continuous-phase and amplitude-based representations.

8 Conclusion

We have presented PhasorFlow, an open-source Python library that establishes unit circle computing as a principled computational paradigm. By representing data as phasors on the $S^1$ manifold and computing through unitary gate operations, PhasorFlow occupies a distinct position on the Geometric Ladder of Computation: more expressive than discrete bits, yet, unlike qubits, fully deterministic and classically executable.

Three principal contributions have been demonstrated:

1. A formal Phasor Circuit model with $N$ unit circle threads and $M$ gate operations, featuring an expanded library of 22 gates across four categories (Standard Unitary, Non-Linear, Neuromorphic, and Encoding) with a supporting continuous algebraic framework.

2. Variational Phasor Circuits (VPCs) for machine learning, achieving 100.0% classification accuracy on high-dimensional non-linear classification boundaries natively on the $S^1$ manifold with as few as 32 trainable phase parameters, orders of magnitude fewer than conventional dense deep learning models.

3. A Phasor Transformer architecture that uses the DFT gate as a parameter-free replacement for self-attention, offering a global sequence-modeling alternative with minimal parameter overhead for time-series forecasting.

Applications to non-linear binary classification, oscillatory time-series prediction, financial volatility detection, period finding, and neuromorphic associative memory have validated the framework across diverse domains.
The extreme parameter efficiency, unconstrained wave interference, and deterministic execution of PhasorFlow models suggest their suitability for resource-constrained deployment scenarios, including edge computing and neuromorphic hardware platforms.

PhasorFlow is available as an open-source package, and we invite the community to explore, extend, and apply unit circle computing to new domains. Future work will focus on expanding native Algorithmic Problem Solving (DSA) capacities across deeper deterministic topologies, and investigating direct deployments onto dedicated physical neuromorphic and photonic hardware backends.

References

[1] Michael A Nielsen and Isaac L Chuang. Quantum Computation and Quantum Information: 10th Anniversary Edition. Cambridge University Press, 2010.
[2] Akira Hirose. Complex-Valued Neural Networks: Theories and Applications. Springer, 2012.
[3] Mark Tygert, Emma Bruner, Vladimir Rokhlin, and Arthur Szlam. On the importance of phase in neural representations. Proceedings of the National Academy of Sciences, 113(42):11808–11813, 2016.
[4] Qiskit Contributors. Qiskit: An open-source framework for quantum computing. 2024.
[5] György Buzsáki. Rhythms of the Brain. Oxford University Press, 2006.
[6] Albert Goldbeter. Biochemical oscillations and cellular rhythms: the molecular bases of periodic and chaotic behaviour. Cambridge University Press, 1996.
[7] Dibakar Sigdel et al. Oxidative stress and cellular resonances. MDPI Molecular Sciences, 2023.
[8] Quantumlink Capital Holding. Market agentome: Multi-agent phase dynamics in trading. Technical report, Quantumlink Capital Holding, 2025.
[9] Marcello Benedetti, Erika Lloyd, Stefan Sack, and Mattia Fiorentini. Parameterized quantum circuits as machine learning models. Quantum Science and Technology, 4(4):043001, 2019.
[10] Marco Cerezo, Andrew Arrasmith, Ryan Babbush, Simon C Benjamin, Suguru Endo, Keisuke Fujii, Jarrod R McClean, Kosuke Mitarai, Xiao Yuan, Lukasz Cincio, and Patrick J Coles. Variational quantum algorithms. Nature Reviews Physics, 3(9):625–644, 2021.
[11] James Lee-Thorp, Joshua Ainslie, Ilya Eckstein, and Santiago Ontanon. FNet: Mixing tokens with Fourier transforms. Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, pages 4296–4313, 2022.
[12] Maria Schuld, Alex Bocharov, Krysta M Svore, and Nathan Wiebe. Circuit-centric quantum classifiers. Physical Review A, 101(3):032308, 2020.
[13] James W Cooley and John W Tukey. An algorithm for the machine calculation of complex Fourier series. Mathematics of Computation, 19(90):297–301, 1965.
[14] John J Hopfield. Neural networks and physical systems with emergent collective computational abilities. Proceedings of the National Academy of Sciences, 79(8):2554–2558, 1982.
[15] Frank C Hoppensteadt and Eugene M Izhikevich. Associative memory in a network of tunable oscillators. Biological Cybernetics, 81(4):319–321, 1999.
[16] Ila R Fiete, Yoram Burak, and Ted Brookings. High-capacity memory via phase-coded synapses. Neuron, 66(2):250–264, 2010.
[17] Hubert Ramsauer, Bernhard Schäfl, Johannes Lehner, Philipp Seidl, Michael Widrich, Thomas Adler, Lukas Gruber, Markus Holzleitner, Milena Pavlović, Geir Kjetil Sandve, et al. Hopfield networks is all you need. International Conference on Learning Representations, 2021.
[18] Yoshiki Kuramoto. Self-entrainment of a population of coupled non-linear oscillators. International Symposium on Mathematical Problems in Theoretical Physics, pages 420–422, 1975.
[19] Arthur T Winfree. The geometry of biological time. Springer Science & Business Media, 2001.
[20] Tony A Plate. Holographic reduced representations. IEEE Transactions on Neural Networks, 6(3):623–641, 1995.
[21] Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Łukasz Kaiser, and Illia Polosukhin. Attention is all you need. Advances in Neural Information Processing Systems, 30, 2017.
[22] Peter W Shor. Algorithms for quantum computation: discrete logarithms and factoring. Proceedings 35th Annual Symposium on Foundations of Computer Science, pages 124–134, 1994.
[23] Chiheb Trabelsi, Olexa Bilaniuk, Ying Zhang, Dmitriy Serdyuk, Sandeep Subramanian, Joao Felipe Santos, Soroush Mehri, Negar Rostamzadeh, Yoshua Bengio, and Christopher J Pal. Deep complex networks. International Conference on Learning Representations, 2018.
[24] Martin Arjovsky, Amar Shah, and Yoshua Bengio. Unitary evolution recurrent neural networks. Proceedings of the 33rd International Conference on Machine Learning, pages 1120–1128, 2016.
[25] Scott Wisdom, Thomas Powers, John Hershey, Jonathan Le Roux, and Les Atlas. Full-capacity unitary recurrent neural networks. Advances in Neural Information Processing Systems, 29, 2016.
[26] E Paxon Frady, Denis Kleyko, and Friedrich T Sommer. Computing on functions using randomized vector representations. arXiv preprint arXiv:2109.03429, 2021.
[27] Teijiro Isokawa, Nobuyuki Matsui, and Motoaki Kamiura. Quaternionic neural networks and rotation invariance. IEEE Transactions on Neural Networks, 14(3):687–693, 2003.
[28] Eugene M Izhikevich. Dynamical Systems in Neuroscience: The Geometry of Excitability and Bursting. MIT Press, 2007.

A Geometry of Interference: Leaving the N-Torus

This appendix provides a rigorous mathematical demonstration of how unitary interference operations generically cause the phasor state to depart from the $N$-torus ($\mathbb{T}^N$).
Although the state is constrained to the $N$-torus during encoding, subsequent wave interference operations move it into the full complex space $\mathbb{C}^N$, expanding the computational capacity.

A.1 The $\mathbb{T}^N$ vs. $\mathbb{C}^N$ distinction

A general state vector in a complex-valued network exists in $\mathbb{C}^N$, where components $z_k$ have arbitrary magnitudes. The PhasorFlow architecture begins strictly on the $N$-torus $\mathbb{T}^N = (S^1)^N$, asserting that all encoded inputs are pure phases: $|z_k| = 1 \;\forall k$.

However, because the $N$-torus is not closed under addition, applying any non-trivial linear interference generically destroys this property. We demonstrate this for $N = 2$ using the standard 50-50 Mix gate.

A.2 Constructive and Destructive Extremes

Consider two input threads, parameterized by their encoded phase values $\phi_0, \phi_1$:

$$\psi_{\text{in}} = \begin{pmatrix} e^{i\phi_0} \\ e^{i\phi_1} \end{pmatrix} \tag{56}$$

Applying the mixing operation:

$$\psi_{\text{out}} = M\psi_{\text{in}} = \frac{1}{\sqrt{2}} \begin{pmatrix} 1 & i \\ i & 1 \end{pmatrix} \begin{pmatrix} e^{i\phi_0} \\ e^{i\phi_1} \end{pmatrix} = \frac{1}{\sqrt{2}} \begin{pmatrix} e^{i\phi_0} + i e^{i\phi_1} \\ i e^{i\phi_0} + e^{i\phi_1} \end{pmatrix} \tag{57}$$

We compute the squared magnitude of the first resulting thread, $|\psi_{\text{out},0}|^2$:

$$|\psi_{\text{out},0}|^2 = \frac{1}{2} \left| e^{i\phi_0} + i e^{i\phi_1} \right|^2 \tag{58}$$

$$= \frac{1}{2} \left[ (\cos\phi_0 - \sin\phi_1)^2 + (\sin\phi_0 + \cos\phi_1)^2 \right] \tag{59}$$

$$= 1 + \sin(\phi_0 - \phi_1) \tag{60}$$

Depending on the initial phase difference $\Delta\phi = \phi_0 - \phi_1$, the resulting magnitude varies continuously:

• Maximum Constructive Interference: When $\Delta\phi = \pi/2$, $|\psi_{\text{out},0}|^2 = 1 + \sin(\pi/2) = 2$.

• Maximum Destructive Interference: When $\Delta\phi = -\pi/2$, $|\psi_{\text{out},0}|^2 = 1 + \sin(-\pi/2) = 0$.

For this two-thread example, the magnitude of a single thread therefore ranges from $0$ to $\sqrt{2}$, so the output generically lies off the $\mathbb{T}^2$ manifold. Consequently, keeping the computation strictly on the $N$-torus requires intermediate geometric projections ($z \mapsto z/|z|$), which constrain deep circuits, whereas leaving the interference unprojected in $\mathbb{C}^N$ yields greater expressivity.
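The closed form $1 + \sin(\phi_0 - \phi_1)$ can be checked numerically. The following is a minimal sketch using only the Python standard library; the function names `mix_gate` and `thread_powers` are illustrative and are not taken from the PhasorFlow API.

```python
import cmath
import math


def mix_gate(phi0, phi1):
    """Apply the 50-50 Mix gate M = (1/sqrt(2)) [[1, i], [i, 1]]
    to the encoded input (e^{i*phi0}, e^{i*phi1})."""
    z0 = cmath.exp(1j * phi0)
    z1 = cmath.exp(1j * phi1)
    scale = 1.0 / math.sqrt(2.0)
    out0 = scale * (z0 + 1j * z1)
    out1 = scale * (1j * z0 + z1)
    return out0, out1


def thread_powers(phi0, phi1):
    """Return (|out0|^2, |out1|^2) after the Mix gate (hypothetical helper)."""
    out0, out1 = mix_gate(phi0, phi1)
    return abs(out0) ** 2, abs(out1) ** 2


if __name__ == "__main__":
    # Sweep the phase difference through the two extremes and the midpoint.
    for delta in (math.pi / 2, 0.0, -math.pi / 2):
        p0, p1 = thread_powers(delta, 0.0)
        print(f"delta={delta:+.4f}  |out0|^2={p0:.6f}  "
              f"closed form={1 + math.sin(delta):.6f}  total={p0 + p1:.6f}")
```

The two thread powers always sum to 2, which reflects the unitarity of the Mix gate: the global norm is preserved even though each individual thread leaves the unit circle.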
A.3 Global Diffusion in the DFT Gate

This effect grows with $N$ under the Discrete Fourier Transform (DFT). The DFT matrix $F_N$ acts on $N$ threads ($k = 0, \ldots, N-1$):

$$z'_j = \frac{1}{\sqrt{N}} \sum_{k=0}^{N-1} e^{i\left(\phi_k - \frac{2\pi}{N} jk\right)}. \tag{61}$$

Under maximum constructive interference, where the input phases align perfectly with the DFT basis ($\phi_k = \frac{2\pi}{N} jk$), the resulting thread reaches a magnitude of:

$$|z'_j| = \frac{1}{\sqrt{N}} \times N = \sqrt{N}. \tag{62}$$

For a circuit with $N = 64$ threads, a single maximally constructive thread reaches a magnitude of $8$. This result is exact within $\mathbb{C}^N$; keeping the state strictly on the $N$-torus would require interleaving these linear mixing stages with non-linear projections, $z \mapsto z/|z|$ (the Threshold gate), to restore the constraint of unit amplitude.

A.4 Autograd Differentiation on $\mathbb{C}^N$ vs. $\mathbb{T}^N$

By relaxing the strict geometric pullback, PyTorch's Autograd engine can backpropagate without interruption. Let $\mathcal{L}(z)$ be the scalar objective loss function evaluated at the end of a deep Phasor Circuit. If the non-linear threshold $T(z) = z/|z|$ is applied at every layer $l$, the gradient with respect to an intermediate phase parameter $\theta^{(l)}$ requires the Jacobian of the normalization projector:

$$\frac{\partial T(z)}{\partial z} = \frac{1}{|z|} \left( I - \frac{z z^{\dagger}}{|z|^2} \right). \tag{63}$$

When interference is maximally destructive ($|z| \to 0$), the $1/|z|$ prefactor diverges and this Jacobian becomes ill-conditioned, which can cause vanishing or exploding gradients. By allowing the network to flow naturally into $\mathbb{C}^N$ (omitting $T(z)$), backpropagation passes only through unitary gates, which are isometric and preserve gradient norms from layer to layer.
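Both effects described in A.3 and A.4 can be illustrated numerically: the $\sqrt{N}$ peak under aligned phases, and the $1/|z|$ growth in the sensitivity of the projection $z \mapsto z/|z|$. The sketch below uses only the Python standard library. All function names are hypothetical, and the sensitivity is a scalar finite-difference estimate for a tangential perturbation, not PyTorch's Autograd computation.

```python
import cmath
import math


def dft_gate(phases):
    """Unitary DFT of unit phasors:
    z'_j = (1/sqrt(N)) * sum_k exp(i * (phi_k - 2*pi*j*k/N))."""
    n = len(phases)
    scale = 1.0 / math.sqrt(n)
    return [
        scale * sum(cmath.exp(1j * (phi - 2 * math.pi * j * k / n))
                    for k, phi in enumerate(phases))
        for j in range(n)
    ]


def aligned_phases(n, j0):
    """Phases phi_k = 2*pi*j0*k/N that align with DFT basis vector j0."""
    return [2 * math.pi * j0 * k / n for k in range(n)]


def threshold(z):
    """Projection back onto the unit circle, T(z) = z / |z|."""
    return z / abs(z)


def threshold_sensitivity(radius, rel_eps=1e-6):
    """Finite-difference estimate of |dT| / |dz| for a small tangential
    perturbation of a scalar z with |z| = radius (expected ~ 1 / radius)."""
    z = complex(radius, 0.0)
    dz = complex(0.0, rel_eps * radius)
    return abs(threshold(z + dz) - threshold(z)) / abs(dz)


if __name__ == "__main__":
    out = dft_gate(aligned_phases(64, 5))
    print("peak magnitude:", abs(out[5]))            # close to sqrt(64)
    print("total power:", sum(abs(v) ** 2 for v in out))  # close to N
    for r in (1.0, 1e-2, 1e-4):
        print(f"|z|={r:g}  sensitivity={threshold_sensitivity(r):.3e}")
```

With all input power concentrated in one output thread, the remaining threads sit near zero magnitude, which is exactly the regime where a per-layer projection would have the largest sensitivity.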
A.5 Parameter Complexity: Euclidean Attention vs. Phasor Mixing

In the standard Euclidean Transformer [21], the self-attention mechanism projects a sequence of length $L$ and embedding dimension $D$ into Queries ($W_Q$), Keys ($W_K$), and Values ($W_V$), followed by an output projection ($W_O$). With each of the four matrices of size $D \times D$ and biases ignored, the total trainable parameter count per attention layer is:

$$P_{\text{Attention}} = 4 \times D^2. \tag{64}$$

For a typical small model with $D = 512$, this requires $P_{\text{Attention}} = 1{,}048{,}576$ parameters.

Conversely, the Phasor Transformer (resembling FNet [11]) replaces this entire block with a 2D Discrete Fourier Transform acting over both the sequence and hidden dimensions:

$$Z_{\text{mix}} = F_{\text{seq}} \cdot Z_{\text{encode}} \cdot F_{\text{hidden}}. \tag{65}$$

Because the Fourier matrices $F_{\text{seq}}$ (of size $L \times L$) and $F_{\text{hidden}}$ (of size $D \times D$) are fixed unitary basis transformations, the total parameter requirement per mixing layer drops to zero:

$$P_{\text{PhasorMix}} = 0. \tag{66}$$

The mixing layer therefore carries no trainable parameters, and the representational burden falls entirely on the preceding parameterized single-thread phase-shift embeddings.
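The parameter comparison and the two-sided DFT mixing can be sketched in a few lines. This is an illustrative standard-library version under stated assumptions: the names `attention_params`, `dft_matrix`, and `phasor_mix` are hypothetical, biases are ignored in the attention count, and a practical implementation would use an FFT rather than dense matrix products.

```python
import cmath
import math


def attention_params(d_model):
    """Weights in W_Q, W_K, W_V, W_O (each D x D, biases ignored): 4 * D^2."""
    return 4 * d_model * d_model


def dft_matrix(n):
    """Unitary n x n DFT matrix F[r][c] = exp(-2*pi*i*r*c/n) / sqrt(n)."""
    scale = 1.0 / math.sqrt(n)
    return [[scale * cmath.exp(-2j * math.pi * r * c / n) for c in range(n)]
            for r in range(n)]


def matmul(a, b):
    """Plain-Python product of two matrices stored as lists of rows."""
    return [[sum(a[i][m] * b[m][j] for m in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def phasor_mix(z):
    """Z_mix = F_seq . Z . F_hidden for an L x D matrix Z.
    Both Fourier matrices are fixed, so there is nothing to train."""
    seq_len, hidden = len(z), len(z[0])
    return matmul(matmul(dft_matrix(seq_len), z), dft_matrix(hidden))


def frobenius_sq(z):
    """Squared Frobenius norm, i.e. the sum of |z_rc|^2."""
    return sum(abs(v) ** 2 for row in z for v in row)


if __name__ == "__main__":
    print("attention parameters for D=512:", attention_params(512))
    # A toy 4 x 3 block of unit phasors (arbitrary illustrative phases).
    z = [[cmath.exp(1j * 0.1 * (3 * r + c)) for c in range(3)] for r in range(4)]
    mixed = phasor_mix(z)
    print("norm before:", frobenius_sq(z), "norm after:", frobenius_sq(mixed))
```

Because both Fourier factors are unitary, the mixing preserves the total squared magnitude of the block while redistributing it across positions, consistent with the norm-preservation property stated for unitary gates.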

Original Paper

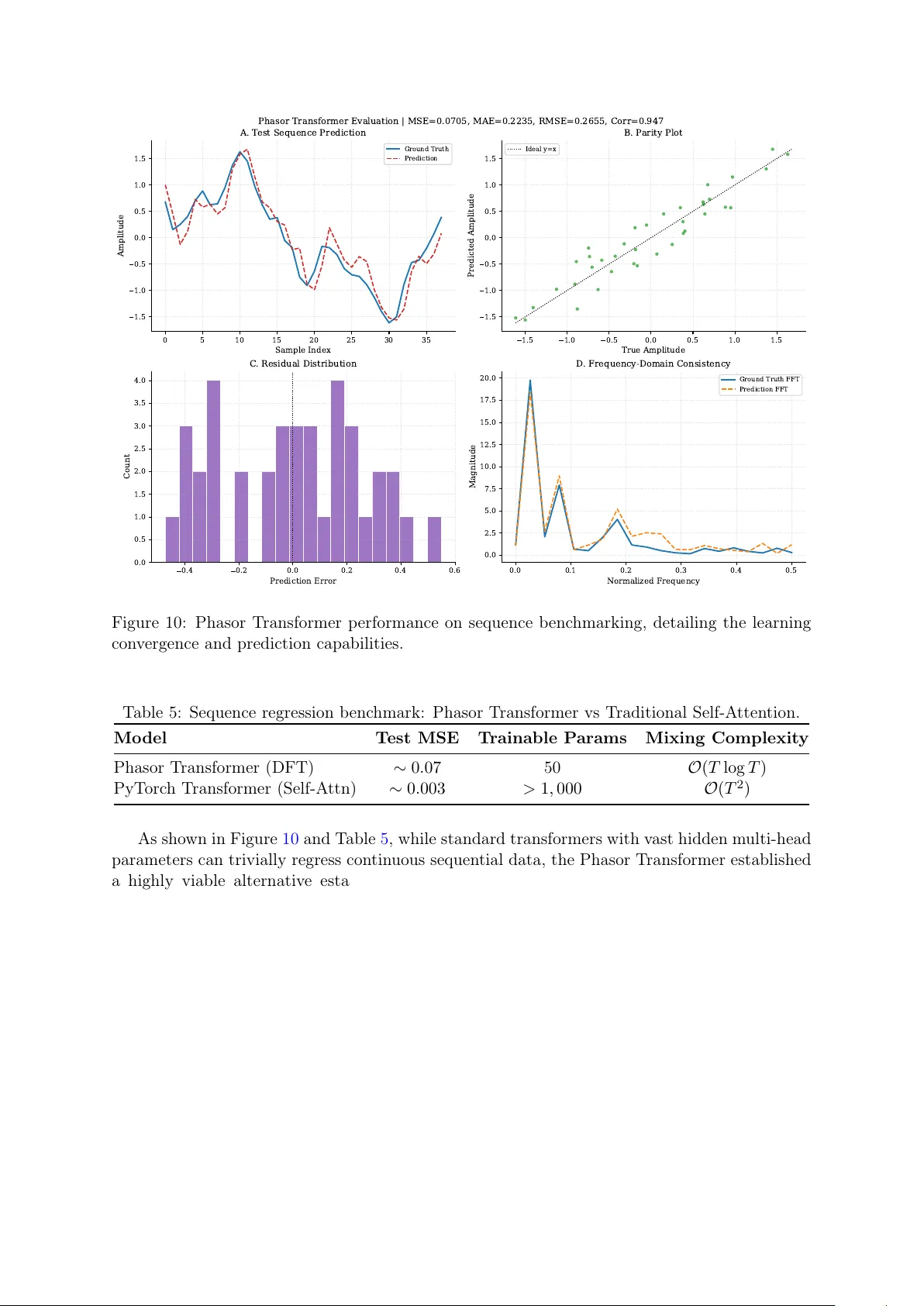

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment