I Know What I Don't Know: Latent Posterior Factor Models for Multi-Evidence Probabilistic Reasoning

Real-world decision-making, from tax compliance assessment to medical diagnosis, requires aggregating multiple noisy and potentially contradictory evidence sources. Existing approaches either lack explicit uncertainty quantification (neural aggregati…

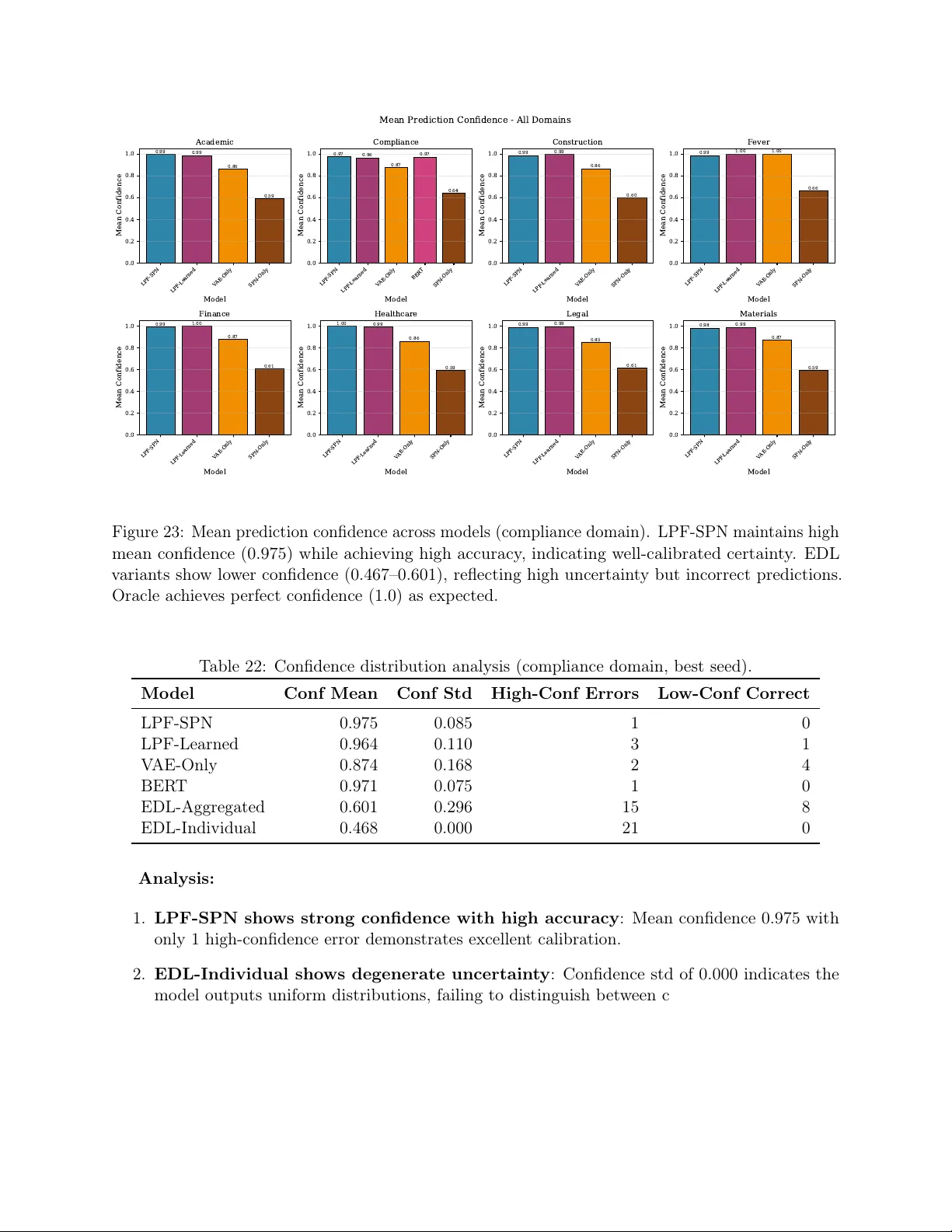

Authors: Aliyu Agboola Alege