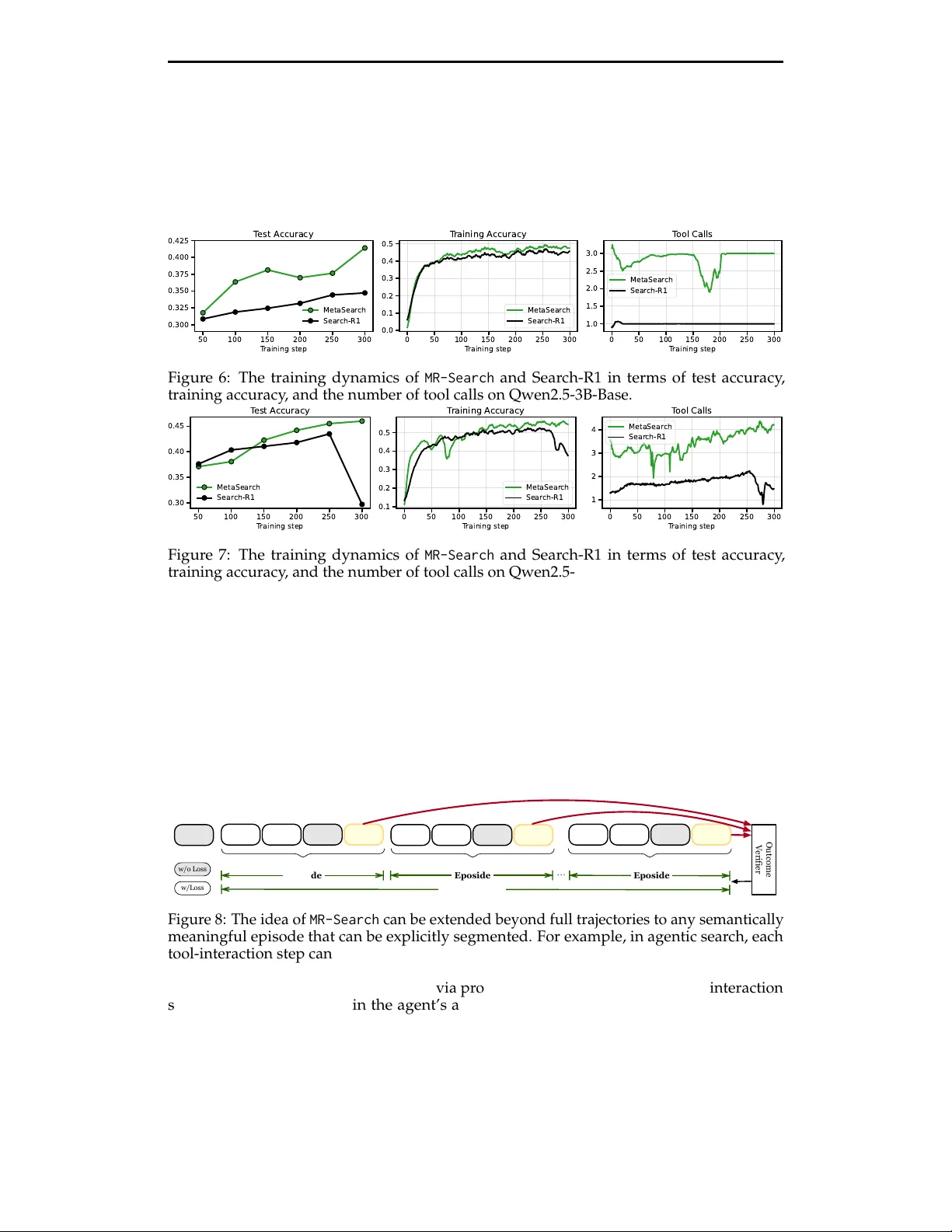

Meta-Reinforcement Learning with Self-Reflection for Agentic Search

This paper introduces MR-Search, an in-context meta-reinforcement learning (RL) formulation for agentic search with self-reflection. Instead of optimizing a policy within a single independent episode with sparse rewards, MR-Search trains a policy tha…

Authors: Teng Xiao, Yige Yuan, Hamish Ivison