LUMINA: LLM-Guided GPU Architecture Exploration via Bottleneck Analysis

GPU design space exploration (DSE) for modern AI workloads, such as Large-Language Model (LLM) inference, is challenging because of GPUs' vast, multi-modal design spaces, high simulation costs, and complex design optimization objectives (e.g. perform…

Authors: Tao Zhang, Rui Ma, Shuotao Xu

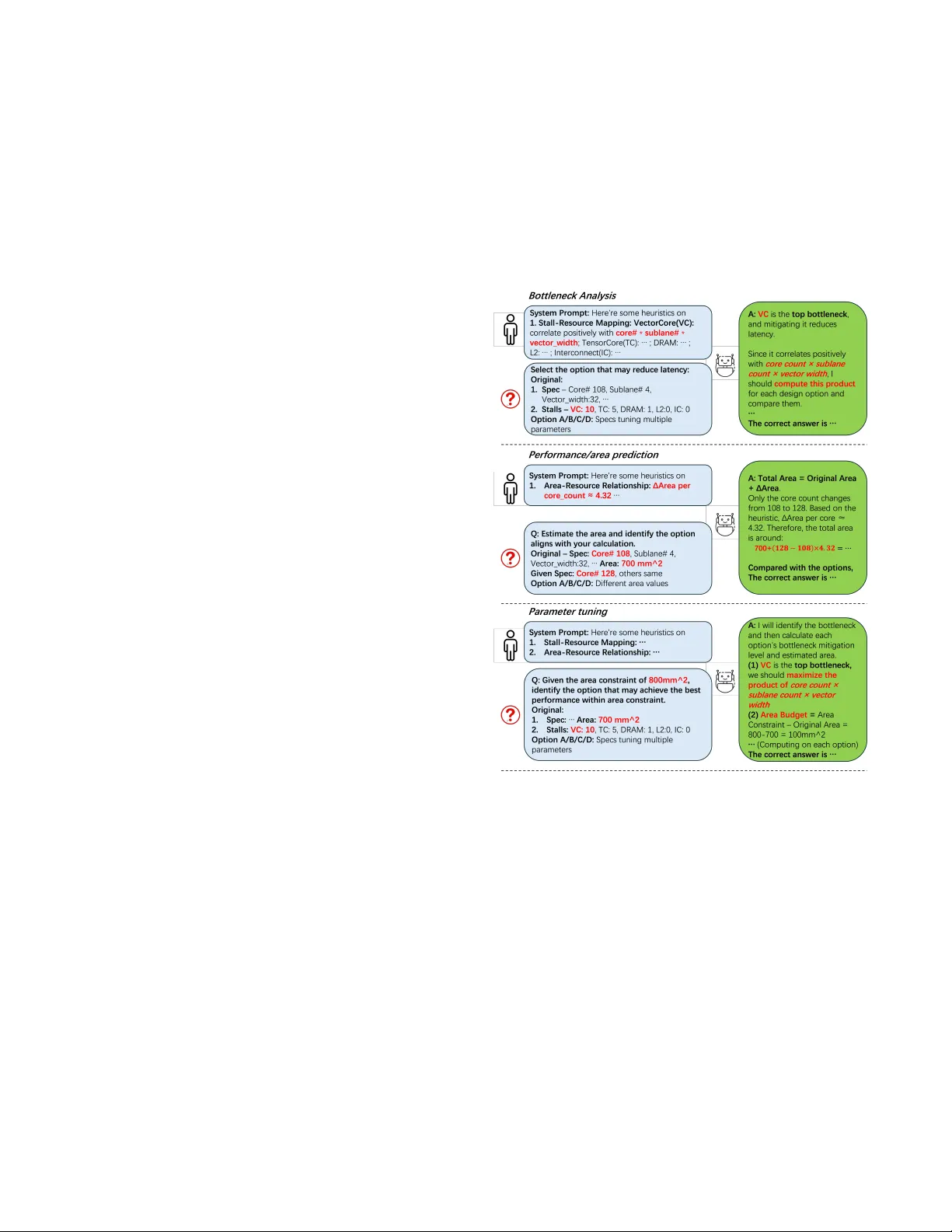

LUMINA: LLM-Guided GP U Architecture Exploration via Bottleneck Analysis T ao Zhang ∗ , Rui Ma ∗ , Shuotao Xu, Y ongqiang Xiong, Peng Cheng Microsoft Research China {zhangt, mrui, shuotaoxu, yqx, pengc}@microso.com Abstract GP U design space exploration (DSE) for mo dern AI workloads, such as Large-Language Model (LLM) inference, is challenging because of GP Us’ vast, multi-mo dal design spaces, high simulation costs, and complex design optimization objectives ( e .g., performance, power and area trade-os). Existing automated DSE methods are often prohibitively expensive , either requiring an excessive number of exploration samples or depending on intricate, manually crafted analyses of interdependent critical paths guided by human heuris- tics. W e present Lumina , an LLM-driven GP U architecture explo- ration framework that le verage AI to enhance the DSE eciency and ecacy for GP Us. Lumina extracts architectural knowledge from simulator code and performs sensitivity studies to automatically compose DSE rules,which are auto-corr e cted during e xploration. A core component of Lumina is a DSE Benchmark that compre- hensively evaluates and enhances LLMs’ capabilities across thr e e fundamental skills required for architecture optimization, which provides a principled and reproducible basis for model selection and ensuring consistent architectural reasoning . In the design space with 4.7 million possible samples, Lumina identies 6 designs of better performance and area than an A100 GP U eciently , using only 20 steps via LLM-assisted bottleneck analysis. In comparison, Lumina achieves 17.5 × higher than design space exploration eciency , and 32.9% better designs (i.e. Par eto Hypervolume) than Machine-Learning baselines, showcasing its ability to deliver high-quality design guidance with minimal search cost. 1 Introduction Graphics Processing Units (GP Us) have become the cornerstone of modern computing infrastructure in the age of articial intelli- gence (AI). With global data center spending projected to exceed $1 trillion by 2029 [ 12 ], largely driven by AI compute, optimiz- ing GP U architecture for major workloads—especially AI training and inference—is critical to reducing total cost of AI infrastructure ownership and improving sustainability . Howev er , optimizing GP Us via design space exploration (DSE) is often complex and time-consuming. For e xample, exploring 1000 GP U designs for one GPT -3 inference workload trace takes 6000 CP U hours via state-of-the-art GPU analytical modelling [ 37 ]. GP U DSE is dicult for three reasons. First, the design space is intrinsi- cally high-dimensional—spanning compute units, cache hierarchy , interconnects, and memory bandwidth—resulting in multi-modal parameter distributions that hinder ecient sear ch. Second, evalua- tion is costly , as each candidate typically requires hours of detailed simulation. Finally , the objective landscape is also multi-modal: ∗ Both authors contributed equally to this research. (a) T TFT (s) (b) TPOT (s) (c) Area ( mm 2 ) Figure 1. Design space visulizatin for a GPT3-175B [ 6 ] inference workload via rooine model [ 34 ]. Each sampled architecture is embedded via Principal Component Analysis [ 11 ] into two dimen- sions; distributions are capped for visual contrast. performance, p o wer , and area interact non-linearly , producing com- plex Pareto fronts that complicate trade-o decisions. Figure 1 illustrates these inherent challenges of the GP U DSE problem for LLM inference workloads. The design space comprises approxi- mately 4.7 million candidate architectures, each associated with distinct design objectives—including Time-to-First- T oken (T TFT), Time-Per-Output- T oken (TPOT) [ 26 ], and chip area. These objec- tives frequently conict with one another , resulting in intricate trade-os that further complicate the identication of an optimal design. Existing DSE methods often suer from low exploration e- ciency , typically requiring hundreds to thousands of samples to nd high-quality designs. They fall into two broad categories: expert-driven heuristics [ 17 , 27 , 31 ] and algorithmic ML-based tools [ 21 , 36 , 38 ]. Expert-base d approaches use rules such as critical- path analysis and stall-component mapping to identify bottlenecks, achieving reasonable Pareto hyper-volume (PHV) with a few hun- dred simulations. However , their heuristic nature requires substan- tial human domain expertise, limits generalization to new archi- tectures, and makes it dicult to capture complex interactions across multiple critical paths [ 9 ]. ML-based methods aim to ad- dress these limitations by learning the non-linear structure of the design space and can re veal insights beyond static heuristics. Y et, they require large numbers of high-delity simulation samples for training, making them expensive to deploy . Recent explosion in AGI capabilities oers a silver lining for advancing DSE. Emerging studies show that LLMs can enhance ML-based DSE by leveraging their pretrained domain kno wledge to guide sample selection [ 8 , 18 , 30 ]. This potential builds on LLMs’ demonstrated strengths in coding [ 8 ], reasoning [ 33 ], and applying domain-specic knowledge [ 19 ]. Howe ver , LLM p erformance varies widely across models [ 20 ], and issues such as hallucination [ 32 ] and incomplete domain understanding [ 19 ] limit their reliability . These challenges underscore the nee d for a systematic and rigorous T ao Zhang ∗ , Rui Ma ∗ , Shuotao Xu, Y ongqiang Xiong, Peng Cheng methodology to assess LLMs’ eectiveness for DSE, rather than relying on ad-hoc or anecdotal evaluations. In this paper we propose Lumina , an LLM-driven GP U archi- tecture exploration framework that delivers reliable and sample- ecient architectural reasoning, addressing both the generality limits of human-crafted heuristics and the instability of vanilla LLM agents. Lumina acquires architectural knowledge directly from simulator code and sensitivity studies, and then uses this knowledge to construct exploration rules that are subsequently auto-corrected as new samples are observed. At the core of Lumina is a DSE Benchmark that evaluates three fundamental LLM capabilities essential for architecture optimiza- tion: bottleneck attribution, performance/area prediction, and pa- rameter tuning. This benchmark systematically exposes LLM weak- nesses in DSE and pr ovides a principled, reproducible basis for select- ing LLMs capable of consistent architectural reasoning—moving beyond ad-hoc, model-dependent usage. W e make the following contributions in this paper: • Lumina Framework : An LLM-guided GP U DSE framework that is both reliable and sample-ecient. Using N VIDIA A100 as reference, Lumina achieves 32.9% higher PHV than ML baselines, improves sample eciency by up to 17.5 × , and is the only method to nd superior designs within 20 samples across a 4.7M-point search space. • DSE Benchmark : The rst benchmark for LLM-driven GP U DSE, assessing three essential capabilities for chip architec- tural reasoning: bottleneck attribution, performance/area prediction, and parameter tuning. It exposes systematic fail- ure cases in LLM-based analysis and oers a reproducible mechanism for selecting LLMs that reason consistently within Lumina . • New DSE Strategy : Lumina uncovers a counter-intuitive DSE strategy: reallocating area from cor e counts to tensor- compute units and memor y bandwidth improv es overall PP A. Via this strategy , Lumina uncovers two superior de- signs than A100: one achieves 1.805 × T TFT/Area and 1.770 × TPOT/Area, while another prioritizes T TFT with 0.592 × T TFT and 0.948 × TPOT than the baseline , both with reduced area. Paper Organization: Section 2 reviews existing DSE methods. Section 3 presents the Lumina framework, and Section 4 describes the DSE Benchmark. Se ction 5 details our implementation and evaluation results, and Section 6 concludes the paper . 2 Background 2.1 DSE Problem Formulation A typical DSE task over a multi-objective design space can be formulated as follows: Denition 1 (Design Space): The design space of a GP U no de is detailed in T able 1 and denoted by X , where each design point is represented as x ∈ X . Denition 2 (Pareto Optimality): A design point x ∗ is Pareto- optimal if Iimproving any objective requires sacricing at least one other objective. The set of all Pareto-optimal points forms the Pareto frontier . Denition 3 (Pareto Hyper v olume, PH V): The Pareto Hyper- volume measures the quality of a Pareto frontier by computing the 𝑚 -dimensional v olume dominated by a set of Pareto-optimal points T able 1. Example Design Space of a 8 GP U Node Parameter V alue Range # Interconnect Link Count 6, 12, 18, 24 4 Core Count 1, 2, 4, 8, 16, 32, 64, 96, 108, 128, 132, 136, 140, 256 14 Sublane Count 1, 2, 4, 8 4 Systolic Array Height and Width 4, 8, 16, 32, 64, 128 6 V ector Width 4, 8, 16, 32, 64, 128 6 SRAM Size (KB) 32, 64, 128, 192, 256, 512, 1024 7 T otal Global Buer (MB) 32, 64, 128, 256, 320, 512, 1024 7 Memory Channel Count 1–12 12 T otal Design Points Approximately 4 . 7 × 10 6 with respect to a given r eference point. A larger PH V indicates a better Pareto frontier . Problem Formulation : Given a GP U node design space X , the goal of DSE is to identify the pareto-optimal congurations x ∈ X upon multiple obje ctives, such as performance, power , and area and maximize PHV in a given time, measur e d by sample numbers. 2.2 Existing DSE Methods Existing DSE methods can b e broadly categorized into black-b ox approaches (including heuristic and machine-learning-based meth- ods) and white-box approaches ( driven by expert knowledge), as summarized in T able 2. Heuristic metho ds such as Grid Search [ 5 ] and Random W alker [ 35 ] do not exploit prior samples or exploration knowledge, leading to uncontrolled variance and random sampling eciency in de- sign quality [ 15 ]. In contrast, machine learning-based approaches such as Bayesian Optimization (BO), Genetic Algorithms ( GA), and Ant Colony Optimization (A CO) aim to identify promising design points through model-based or policy-driven feedback [ 2 , 15 , 18 ], a process we refer to as sample learning . Howev er , these methods generally suer from low sample eciency and limited scalability in high-dimensional DSE tasks [ 7 , 13 , 18 , 22 ]. The low sample ef- ciency arises from the sparsity of high-quality design points in the search space, necessitating more eective strategies to provide initial samples. The scalability issue, on the other hand, stems from the computational and memory costs that can grow cubically with the number of samples [23, 28]. Specically , BO relies on surrogate models to understand the PP A -resource mapping and utilizes acquisition function to improve toward optimization goal. Howev er , its cubic time complexity leads to poor scalability with large design spaces [22]. GA p erforms evolutionary search through mutation and crossov er but conv erges slowly , requiring over 10k samples in DNN DSE eval- uations [ 14 ]. A CO explores via pheromone-guided probabilistic sampling [ 10 ], exhibiting lower sample eciency than BO and GA under various benchmarks [ 15 ]. While distribute d or adap- tive variants have been proposed to improve scalability , large-scale evaluations still report limited improvement [23, 28]. LUMINA: LLM-Guided GP U Architecture Exploration via Boleneck Analysis T able 2. Comparison of representative DSE methods. Category Representative Method Sample Learning Sample Eciency Sample Scalibity Heuristic Grid Search [5] No Random High Random W alker [35] No Random High Machine Learning Bayesian Optimization (BO) [18] Y es Low [15] Low[22] Genetic Algorithms (GA) [14] Y es Low [15] Low[23] Ant Colony Optimization (A CO) [10] Y es Low [15] Low[28] Expertise-Driven Bottleneck-Removal via Critical Path Analysis [1] No High Low Lumina (Our work) Y es High Medium Beyond black-box search, Critical Path A nalysis [ 16 ] oers a white-box alternative that leverages expert knowledge of archi- tectural bottlenecks. As a representative, Archexplorer [ 1 ] per- forms b ottleneck-removal-driven DSE by reallocating resources along critical paths, achieving superior sample eciency and the highest PHV under e qual sampling budgets compared with black- box approaches. Howe ver , the bottleneck-to-resource mapping in such white-box approaches is heuristically dened, which pre vents renement through sampling and limits generality across architec- tures [3]. LLMs oer a mechanism to achieve the strengths of both meth- ods, the sample learning capability and the high sample eciency , by reecting on exploration trajectories and conducting human- like architectural reasoning to explore. Ho wever , their capabilities have not been systematically evaluated on DSE tasks. T o addr ess this gap, we design the Lumina framework with a comprehensive DSE Benchmark, forming a principled framework to assess model performance and enforce consistent architectural reasoning. 3 Lumina Framework 3.1 Overview of Lumina Figure 2. Lumina Framework Design Figure 2 presents the overall design of the Lumina Framework , which is structured around an iterative knowledge acquisition and renement loop. Lumina begins by interacting with the external simulation environment to extract preliminary Ar chite ctural Heuris- tic Knowledge (AHK) . This process is handled by the Qualitative Engine (QualE) , which parses the simulator codebase to attribute resources to metrics, and the Quantitative Engine (QuanE) , which quanties each resource ’s impact on PP A. The core of Lumina ’s optimization lies in its iterative process. Starting from an initial design, the framework evaluates the design and sends the results (e.g., PP A and critical-path data) to the Strategy Engine (SE) . Utilizing the knowledge from AHK, SE performs bot- tleneck analysis on the results and generates a mitigation strategy for the Exploration Engine (EE) , which proposes a new , informed design point. Meanwhile, the simulation results are stored in the Trajectory Memor y (TM) , which is use d to rene AHK in subse- quent iterations. This process repeats until the sampling budget is met, ultimately outputting a set of Pareto-optimal designs. The subsequent subsections detail Lumina ’s core components: §3.2 focuses on the automatic acquisition of AHK through the Qualitative and Quantitative Engines; §3.3 describes the utilization of AHK to guide DSE via the Strategy and Exploration Engines; and §3.4 elucidates the renement loop that enables continuous learning and cross-architecture scalability . 3.2 Architectural Heuristic Knowledge (AHK) Acquisition The initial step in the Lumina framework is the automated acquisi- tion of AHK , a critical capability that distinguishes our approach from conventional black-box and white-box methods. AHK ser ves as a structural and quantitative understanding of how architectural resources are attributed to PP A metrics. The acquisition is handled by two complementary modules: the alE and the anE . 3.2.1 Qualitative Engine ( alE ). The primar y role of the alE is to establish the structural boundaries of the AHK. It achieves this by leveraging the semantic understanding capabilities of a LLM to parse and interpret the simulator’s complex codebase. Specically , the alE performs static code analysis, utilizing the LLM’s interpr etative str ength to explicitly map the causal inuence of each resource hyper-parameters onto specic PP A metrics. This process generates an Inuence Map, which structurally denes the exact dependencies between a performance specication and the concrete architectural parameters within the design space. For instance, the map identies that peak vector compute throughput is inuenced by core count, sublane count, and vector unit, but has no direct structural dependency on the tensor unit. This derived, structurally constrained knowledge drastically reduces the search space for subsequent quantitative analysis, providing a level of informed pruning analogous to the initial heuristics emplo yed in traditional white-box methods. T ao Zhang ∗ , Rui Ma ∗ , Shuotao Xu, Y ongqiang Xiong, Peng Cheng 3.2.2 Quantitative Engine ( anE ). Building up on the struc- tural dep endencies dene d by the alE ’s Inuence Map, the anE is responsible for assigning quantitative inuence values to the AHK relationships. The anE achieves this by executing an au- tomated preliminary sensitivity analysis against the simulator , leveraging the LLM’s code generation and orchestration ca- pability to script and manage the necessary micro-benchmarks. By systematically observing the impact of granular changes ( e.g., a ± 1 unit perturbation in the Core Count) on the attributed PP A metrics (e.g., Area), the anE quanties the local inuence of each resource. This comprehensive quantication enables Lumina to initialize its exploration with robust, informe d priors, providing a signicant and measurable advantage over conv entional black- box methods that must learn the entire PP A-r esource mapping from scratch. Under complex performance models where measur- ing performance perturbations is costly , the anE can focus on estimating only pow er and area, which are faster to evaluate, while still providing informative priors for e xploration. 3.3 Strategy and Exploration Engine The Strategy and Exploration Engines are responsible for leveraging the acquired AHK to guide the DSE toward Par eto-optimal fronts eciently . 3.3.1 Strategy Engine ( SE ). The SE denes the b ottleneck mit- igation strategy based on critical-path feedback provided by the simulator . Upon identifying the most dominant performance stall (e.g., inter conne ct congestion or memory latency), the SE uses the AHK to propose a constrained set of design parameter adjustments aimed at alleviating that specic bottleneck. Crucially , the SE de- termines the aggressiveness of the search by deciding how many design parameters to modify simultaneously in the next iteration. For instance, if interconnect is the b ottleneck, the SE suggests maximizing interconnect link count while simultaneously se ek- ing a resource trade-o (e.g., reducing Core Count) based on the quantitative inuence factors. This guided approach ensur es that exploration is always purposeful and locally optimal. 3.3.2 Exploration Engine ( EE ). The EE serves as the integration layer between the Simulation Environment and Lumina ’s internal modules. It serializes the SE ’s design directives into the simulator’s required format, issues the evaluation request, and retrieves the re- sulting performance and critical-path metrics. The EE then records this feedback in the TM and returns the structur ed sample to the SE , enabling informed selection of subsequent design p oints. 3.4 Renement Loop The Renement Loop enables Lumina to iteratively rene its heuristics and overcome the static limitations of conventional white- box methods. In each iteration, it reects on the traje ctory histor y stored in the TM to identify past design attempts that failed to meet PP A targets and conclude the patterns to prevent their r epetition. This reection drives the core renement: obser ved performance data are used to update the quantitative inuence factors in the AHK, calibrating the model’s understanding of the PP A -resource mapping. Through these data-driven corrections, Lumina dynam- ically adapts to non-linear and complex b ehaviors in the design space, maintaining high sample eciency and scalability across diverse architectural congurations. 4 DSE Benchmark Being the backbone of Lumina framework, the LLM reasoning model needs to be carefully chosen or netuned to ensure its abil- ity to analyze the performance metrics and tune the parameters intelligently . Additionally , the model needs to properly follow the instructions from system prompts to (i) respect the design con- straints and (ii) avoid circular reasoning. Therefore , we design a Q&A based b enchmark to evaluate a mo del’s ability for architecture exploration purpose. Figure 3. Examples of three task in DSE benchmark. For each task, we show the associated evidences from human system prompt, question on the left and LLM’s correct answer on the right. Inspired by Longbench [ 4 ], we formulate each data sample as a multiple choice question. Each question starts with a system prompt and a description of the application target, ranging from primitive operators (e.g., matmul, layernorm, etc.) to full workload. A problem is then presented along with multiple-choice answers, of which only one is correct. The data samples can be categorized into three major tasks. Figure 3 shows example data for each task. Boleneck analysis. These questions evaluates the model’s fundamental capability to infer the relationship b etween perfor- mance counters and their corresponding architectural parameters. Each question prompt species the architectural conguration, the optimization objective, and the observed p erformance counter val- ues for the target application. The model is required to determine which parameters should be adjusted and in which direction to achieve impro vement toward the stated optimization goal. LUMINA: LLM-Guided GP U Architecture Exploration via Boleneck Analysis Performance/area prediction. These questions aim to assess the model’s capability to predict performance and area based on historical design trajectories and analytical models derived from source code. Each prompt provides several example architecture specications along with their corresponding performance and area results, as well as the source code of the ar ea model. The model is required to infer and select the correct performance or area value for a new architecture specication. Parameter tuning. These questions are intended to evaluate the model’s comprehensive capability in GP U architecture design space exploration. In addition to historical design trajectories and source code, each question species an initial design p oint, design constraints, and an optimization objective. The model is required to identify and select the design that best achieves the optimization goal while adhering to the stated constraints. 5 Evaluation 5.1 Experimental Setup W e evaluated various DSE methods on both the rooine mo del and the LLMCompass model [ 37 ]. LLMCompass is a GP U simulator spe- cialized for LLM inference workloads; it models software-hardware co-optimizations and achieves performance and area estimations within 10% relative error compared to real hardware . W e extended LLMCompass to include critical path analysis, enabling identi- cation of dominant stalls for both T TFT and TPOT metrics, with negligible overhead. 5.2 DSE Benchmark W e evaluate several state-of-the-art open-source LLMs, includ- ing Qwen3-Next-80B- A3B-Instruct (Qwen-3) [ 29 ], Phi-4-reasoning (Phi-4) [ 25 ] and Llama-3.1-8B-Instruct (Llama-3.1) [ 24 ]. For brevity , we refer to the models using the shortened names shown in paren- theses throughout the paper . The benchmark comprises 308 questions for bottleneck analysis, 127 for performance/area prediction, and 30 for parameter tun- ing. W e measure LLMs’ DSE capability using the accuracy metrics, which is computed as the proportion of correctly solved problems across the dataset. As shown in T able 3, Qwen-3 achieved the highest accuracy among the evaluated models under the default system prompt, which already provides the necessary architectural context. How- ever , the substantial variability across tasks indicates that additional error-handling me chanisms ar e required. T o understand these gaps, we analyzed the model’s systematic failure patterns and translated them into explicit corrective rules. With this additional human- crafted guidance, Qwen-3 achieved noticeably higher accuracy , as reported in the Accuracy (Enhanced) column. In Lumina , these rules are integrated into the Strategy Engine , enabling the system to enforce consistent and reliable architectural reasoning during design-space exploration. For bottlene ck analysis , models often selected multi-r esource con- gurations containing irrelevant parameters instead of targeting the resource most corr elated with the stall. W e therefore constrained the Strategy Engine to focus solely on the dominant bottleneck . A further limitation is that it fails to recognize the adverse eects of enlarging resources such as the systolic array height and width, which may cause signicant compute under-utilization. Mitigating T able 3. Accuracy across tasks and open-source LLMs Benchmark T ask Model Accuracy (Original) Accuracy (Enhanced) Bottleneck Analysis Phi-4 0.70 0.76 Qwen-3 0.73 0.80 Llama-3.1 0.47 0.53 Perf/Area Prediction Phi-4 0.42 0.61 Qwen-3 0.59 0.82 Llama-3.1 0.23 0.39 Parameter T uning Phi-4 0.30 0.48 Qwen-3 0.40 0.63 Llama-3.1 0.26 0.46 this gap requires enabling the model to reason from prior experi- ence or ne-tuning it on datasets that capture such design pitfalls. For performance and area prediction , models frequently computed deltas against a zero baseline rather than the dened sensitivity reference. T o ensure consistency , the Strategy Engine was required to always compute deltas relative to the sensitivity reference . For parameter tuning , mo dels often attempt to comp ensate for an unresolved dominant bottleneck by adjusting multiple non-critical resources, increasing reasoning comple xity beyond the model’s ca- pability . T o address this, we instructe d the Strategy Engine to adjust only the least critical resource when mitigating the dominant stall. 5.3 Lumina Framework W e evaluate the DSE methods under a GPT -3 inference workload using the full 4.7-million design space. W e use the N VIDIA A100 as the reference design (T able 4) and adopt 8-way tensor parallelism as the deployment strategy . T TFT latency is measured by executing a single GPT -3 layer with a batch size of 8 and an input sequence length of 2048. TPOT latency is dene d as the time to generate the 1024th output token under the same conguration. All operators are executed in FP16 precision. This setup ensures that both T TFT and TPOT characteristics are faithfully captured, pr oviding a real- istic basis for DSE evaluation. The comparison metrics are Pareto Hyper V olume (PH V)[ 1 ] and Sample Eciency , dened as number of points that are better than the r eference point in all objectives divides the total exploration samples. Sample eciency measures the fraction of evaluated designs that impr ove over the refer ence point; higher values indicate a more ecient exploration of the design space. W e begin by comparing the PH V , asso ciated variance, and sam- ple eciency of each metho d across 1,000 samples and multiple independent trials under rooine model evaluation. A s shown in Figure 4, Lumina achieves the highest mean PHV and sample eciency , outperforming all other metho ds by 32.9% in PHV and 17.5 × in sample eciency . Among the black-box baselines, ACO and RW exhibit comparable PH V and sample eciency , primarily due to their chance sampling behavior , as illustrated in Figur e 5. No- tably , A CO’s best-to-worst normalize d PHV ratio reaches up to 1.82x , indicating its high variability across runs. BO delivers con- sistently strong performance, ranking fourth overall, wher eas GA and GS consistently fail to discover high-quality designs. Further- more, Lumina maintains superior sample eciency across multiple runs , highlighting its stability and robustness. Next, we analyze the DSE pattern to show why Lumina outper- forms other methods. As shown in Figure 6, Lumina explores the T ao Zhang ∗ , Rui Ma ∗ , Shuotao Xu, Y ongqiang Xiong, Peng Cheng Figure 4. Mean PH V vs. Sample Eciency among DSE Metho ds. Figure 5. Distribution of PH V vs. Sample Eciency among DSE Methods. design space far more eciently by leveraging its understanding of the simulator’s internal mechanisms. In contrast, the state-of- the-art black-box baseline, A CO , adopts a far-to-near exploration strategy , consuming numerous samples mer ely to map the objec- tive space before reaching promising r egions. As a result, Lumina identies substantially more high-quality design points within the same sample budget—specically , 421 superior designs within 1,000 samples, compared to only 24 for ACO— demonstrating its sig- nicant advantage in sample eciency . Then, we evaluated all methods on the LLMCompass mo del . Owing to the high simulation cost of end-to-end inference evalu- ation, we imposed a strict sample budget of only 20 evaluations, which requires approximately one week to complete. Under this constraint, none of the black-box algorithms succeeded in iden- tifying any design that surpassed the reference point of N VIDIA A100 [ 37 ]. This failure primarily stems from the insucient number of samples, which prev ents these methods from eectively map- ping the high-dimensional design space. In contrast, Lumina was the only methodology that successfully discovered six de- signs surpassing the reference point , demonstrating its unique eectiveness under extreme sample constraints. Finally , we analyze the rationale behind the top-performing de- signs identied by Lumina relative to the NVIDIA A100 baseline, as shown in T able 4. Lumina consistently reallocates architectural Figure 6. Search Pattern Comparison b etween A CO and Lumina T able 4. T op-2 designs identied by Lumina Compared with NVIDIA A100 Specications Design A Design B A100 Interconnect Link Count 24 18 12 Core Count 64 96 108 Sublane Count 4 4 4 V e ctor Width 16 16 32 Systolic Array Height × W eight 32 × 32 32 × 32 16 × 16 SRAM Size (KB) 128 128 128 Global Buer (MB) 40 40 40 Memory Channel Count 6 6 5 Normalized T TFT 0.717 0.592 1.000 Normalized TPOT 0.947 0.948 1.000 Normalized Area 0.772 0.952 1.000 T TFT/Area 1.805 1.366 1.000 TPOT/Area 1.770 1.107 1.000 resources to address the dominant performance limits, demonstrat- ing that co-optimizing compute hierar chy , memory bandwidth, and interconnect bandwidth yields substantial gains. Concretely , it increases the interconnect link count (12 → 18/24) to bo ost communication capability , while osetting the area cost through a moderate reduction in core count (108 → 64/96). Both optimized designs retain a wide systolic array ( 32 × 32 ) and add one additional memory channel (5 → 6), thereby improving memory bandwidth and sustaining compute throughput. Design A delivers the best overall trade-o, achie ving 1.805 × T TFT/Area eciency and 1.77 × TPOT/Area eciency relativ e to A100, while using only 77% of its area. Design B attains comparable TPOT and reduces normalized T TFT to 0.592 , making it suitable for strict T TFT -oriented SLAs. These outcomes show that Lumina eectively identies balanced design points where compute and communication r esources ar e jointly optimized to maximize utiliza- tion. 6 Conclusion In this paper , we introduced Lumina , a novel LLM-Guided GP U architecture exploration frame work; a DSE b enchmark that sys- tematically evalutes LLM’s capability on DSE tasks and ensures consistent architectural reasoning; and two Lumina -discovered designs and their insights. LUMINA: LLM-Guided GP U Architecture Exploration via Boleneck Analysis References [1] Chen Bai, Jiayi Huang, Xuechao W ei, Yuzhe Ma, Sicheng Li, Hongzhong Zheng, Bei Y u, and Yuan Xie. 2023. ArchExplorer: Microarchitecture Exploration Via Bottleneck Analysis. In Proceedings of the 56th Annual IEEE/A CM International Symposium on Microarchitecture (Tor onto, ON, Canada) (MICRO ’23) . Association for Computing Machinery , New Y ork, N Y, USA, 268–282. doi: 10.1145/3613424. 3614289 [2] Chen Bai, Qi Sun, Jianwang Zhai, Y uzhe Ma, Bei Y u, and Martin D .F. W ong. 2021. BOOM-Explorer: RISC- V BOOM Microarchitecture Design Space Exploration Framework. In 2021 IEEE/ACM International Conference On Computer Aided Design (ICCAD) . 1–9. doi: 10.1109/ICCAD51958.2021.9643455 [3] Chen Bai, Jianwang Zhai, Yuzhe Ma, Bei Y u, and Martin D.F. W ong. 2024. T o- wards automated RISC- V microarchitecture design with reinforcement learning. In Proceedings of the Thirty-Eighth AAAI Conference on Articial Intelligence and Thirty-Sixth Conference on Innovative A pplications of Articial Intelligence and Fourte enth Symposium on Educational Advances in A rticial Intelligence (AAAI’24/IAAI’24/EAAI’24) . AAAI Press, Article 2, 9 pages. doi: 10.1609/aaai. v38i1.27750 [4] Y ushi Bai, Shangqing Tu, Jiajie Zhang, Hao Peng, Xiaozhi W ang, Xin Lv , Shulin Cao, Jiazheng Xu, Lei Hou, Y uxiao Dong, Jie T ang, and Juanzi Li. 2025. LongBench v2: T owards Deep er Understanding and Reasoning on Realistic Long-context Multitasks. arXiv:2412.15204 [cs.CL] hps://arxiv .org/abs/2412.15204 [5] James Bergstra and Y oshua Bengio. 2012. Random search for hyper-parameter optimization. J. Mach. Learn. Res. 13, null (Feb. 2012), 281–305. [6] T om B. Brown, Benjamin Mann, Nick Ryder , Melanie Subbiah, Jared Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry, Amanda Askell, Sandhini Agarwal, Ariel Herbert-V oss, Gretchen Krueger , T om Henighan, Rewon Child, Aditya Ramesh, Daniel M. Ziegler , Jerey Wu, Clemens Winter , Christopher Hesse, Mark Chen, Eric Sigler , Mateusz Litwin, Scott Gray , Benjamin Chess, Jack Clark, Christopher Berner , Sam McCandlish, Alec Radford, Ilya Sutskever , and Dario Amodei. 2020. Language Models are Few-Shot Learners. CoRR abs/2005.14165 (2020). arXiv:2005.14165 hps://arxiv .org/abs/2005.14165 [7] Jacob Buckman, Danijar Hafner , George Tucker , Eugene Brevdo, and Honglak Lee. 2018. Sample-Ecient Reinforcement Learning with Stochastic Ensemble V alue Expansion. In Advances in Neural Information Processing Systems , S. Bengio , H. W allach, H. Larochelle, K. Grauman, N. Cesa-Bianchi, and R. Garnett (Eds.), V ol. 31. Curran Associates, Inc. hps://proceedings.neurips.cc/paper_files/paper/ 2018/file/f02208a057804ee16ac724d3cec53b- Paper .pdf [8] Angelica Chen, David M. Dohan, and David R. So. 2023. EvoPrompting: language models for code-level neural architecture search. In Proce edings of the 37th International Conference on Neural Information Processing Systems (New Orleans, LA, USA) (NIPS ’23) . Curran Associates Inc., Red Ho ok, N Y , USA, Article 342, 31 pages. [9] Brian A. Fields, Rastislav Bodík, Mark D . Hill, and Chris J. Newburn. 2003. Using Interaction Costs for Microarchitectural Bottleneck Analysis. In Proceedings of the 36th A nnual IEEE/ACM International Symposium on Microarchitecture (MICRO 36) . IEEE Computer Society , USA, 228. [10] Yiheng Gao and Benjamin Carrion Schafer . 2021. Eective High-Level Synthesis Design Space Exploration through a Novel Cost Function Formulation. In 2021 IEEE International Symposium on Circuits and Systems (ISCAS) . 1–5. doi: 10.1109/ ISCAS51556.2021.9401684 [11] Felipe L. Gewers, Gustavo R. Ferreira, Henrique Ferraz de Arruda, Filipi Nasci- mento Silva, Cesar H. Comin, Diego R. Amancio, and Luciano da F. Costa. 2018. Principal Component Analysis: A Natural Approach to Data Exploration. CoRR abs/1804.02502 (2018). arXiv:1804.02502 [12] Dell’Oro Group . 2025. Data Center Capex to Surpass $1 Trillion by 2029, Accord- ing to Dell’Oro Group. hps://w ww .delloro.com/news/data- center- capex- to- surpass- 1- trillion- by- 2029/ . Accessed: Nov . 7, 2025. [13] Hadi S. Jomaa, Josif Grabocka, and Lars Schmidt- Thieme. 2019. Hyp-RL : Hyp er- parameter Optimization by Reinforcement Learning. arXiv:1906.11527 [cs.LG] hps://arxiv .org/abs/1906.11527 [14] Sheng-Chun K ao and T ushar Krishna. 2020. GAMMA: A utomating the HW Map- ping of DNN Models on Accelerators via Genetic Algorithm. In 2020 IEEE/ACM International Conference On Computer Aided Design (ICCAD) . 1–9. [15] Srivatsan Krishnan, Amir Yazdanbakhsh, Shvetank Prakash, Jason Jabbour, Ikechukwu Uchendu, Susobhan Ghosh, Behzad Boroujerdian, Daniel Richins, Devashree Tripathy , Aleksandra Faust, and Vijay Janapa Re ddi. 2023. ArchGym: An Open-Source Gymnasium for Machine Learning Assisted Architecture De- sign (ISCA ’23) . Association for Computing Machinery, New Y ork, N Y, USA, Article 14, 16 pages. doi: 10.1145/3579371.3589049 [16] Jaewon Lee, Hanhwi Jang, and Jangwoo Kim. 2014. RpStacks: Fast and Accurate Processor Design Space Exploration Using Representative Stall-Event Stacks. In Proceedings of the 47th Annual IEEE/A CM International Symposium on Microar- chitecture (Cambridge, United Kingdom) (MICRO-47) . IEEE Computer Society , USA, 255–267. doi: 10.1109/MICRO.2014.26 [17] Dong Li, Minsoo Rhu, Daniel R. Johnson, Mike O’Connor , Mattan Erez, Doug Burger , Donald S. Fussell, and Stephen W . Redder. 2015. Priority-based cache allocation in throughput processors. In 2015 IEEE 21st International Symposium on High Performance Computer A rchitecture (HPCA) . 89–100. doi: 10.1109/HPCA. 2015.7056024 [18] Jingyuan Li, Jianrong Zhang, Y e Li, W enbo Yin, and Lingli Wang. 2025. LEMOE: LLM-Enhanced Multi-Objective Bayesian Optimization for Microar chitecture Exploration. In 2025 62nd ACM/IEEE Design Automation Conference (DA C) . 1–7. doi: 10.1109/DA C63849.2025.11132704 [19] Y ang Liu, Jiahuan Cao, Chongyu Liu, Kai Ding, and Lianwen Jin. 2025. Datasets for large language models: A compr ehensive sur vey . Articial Intelligence Review 58, 12 (2025), 403. [20] LMArena. 2025. O verview Leaderboard & Model Rankings. hps://lmarena.ai/ leaderboard . Accessed: 2025-11-05. [21] Donger Luo, Qi Sun, Xinheng Li, Chen Bai, Bei Y u, and Hao Geng. 2024. Knowing The Spec to Explore The Design via Transformed Bayesian Optimization. In Proceedings of the 61st ACM/IEEE Design Automation Conference (San Francisco, CA, USA) (DA C ’24) . Association for Computing Machinery, New Y ork, N Y , USA, Article 138, 6 pages. doi: 10.1145/3649329.3658262 [22] W esley J. Maddox, Maximilian Balandat, Andrew Gordon Wilson, and Eytan Bakshy . 2021. Bayesian optimization with high-dimensional outputs (NIPS ’21) . Curran Associates Inc., Red Hook, NY, USA, Article 1474, 14 pages. [23] María Martínez-Ballesteros, Jaume Bacardit, Alicia Troncoso, and José C. Riquelme. 2015. Enhancing the scalability of a genetic algorithm to discover quantitative association rules in large-scale datasets. Integr . Comput.-Aided Eng. 22, 1 (Jan. 2015), 21–39. doi: 10.3233/ICA- 140479 [24] Meta. 2024. Meta - Llama - 3.1 - 8B - Instruct. hps://huggingface.co/metaâĂŚllama/ LlamaâĂŚ3.1âĂŚ8BâĂŚInstruct . Accessed: 2025-11-15. [25] microsoft. 2024. phi4-reasoning. https://huggingface.co/microsoft/Phi-4- reasoning. Accessed: 2025-11-15. [26] MLCommons Association. 2025. MLPerf Inference v5.0 Advances Language Model Capabilities for GenAI. hps://mlcommons.org/2025/04/llm- inference- v5/ . Accessed: 2025-11-05. [27] V eynu Narasiman, Michael Shebanow, Chang Joo Lee, Rustam Miftakhutdinov , Onur Mutlu, and Y ale N. Patt. 2011. Improving GP U performance via large warps and two-level warp scheduling. In 2011 44th Annual IEEE/ACM International Symposium on Microarchitecture (MICRO) . 308–317. [28] Joshua Peake, Martyn Amos, Paraskevas Yiapanis, and Huw Lloyd. 2019. Scaling T echniques for Parallel Ant Colony Optimization on Large Problem Instances. In Proceedings of the Genetic and Evolutionary Computation Conference (GECCO ’19) . ACM, United States, 47–54. doi: 10.1145/3321707.3321832 The Genetic and Evolutionary Computation Conference 2019, GECCO’19 ; Conference date: 13-07-2019 Through 17-07-2019. [29] Qwen. 2025. Qwen3-Next-80B- A3B-Instruct. hps://huggingface .co/Qwen/ Qwen3- Next- 80B- A3B- Instruct . Accessed: 2025-11-15. [30] Mayk Caldas Ramos, Shane S. Michtav y , Marc D. Poroso, and Andrew D. White. 2025. Bayesian Optimization of Catalysis With In-Context Learning. arXiv:2304.05341 [physics.chem-ph] hps://ar xiv .org/abs/2304.05341 [31] Timothy G. Rogers, Mike O’Connor , and T or M. Aamodt. 2012. Cache-Conscious W avefront Scheduling. In 2012 45th Annual IEEE/ACM International Symposium on Microarchitecture . 72–83. doi: 10.1109/MICRO.2012.16 [32] S. M To whidul Islam Tonmo y, S M Mehedi Zaman, Vinija Jain, Anku Rani, Vipula Rawte, Aman Chadha, and Amitava Das. 2024. A Comprehensive Survey of Hallucination Mitigation T echniques in Large Language Models. arXiv:2401.01313 [cs.CL] hps://arxiv .org/abs/2401.01313 [33] Chaojie W ang, Y anchen Deng, Zhiyi Lyu, Liang Zeng, Jujie He, Shuicheng Y an, and Bo An. 2024. Q*: Improving multi-step reasoning for llms with deliberative planning. arXiv preprint arXiv:2406.14283 (2024). [34] Samuel Williams, Andrew W aterman, and David Patterson. 2009. Rooine: an insightful visual performance model for multicore architectures. Commun. ACM 52, 4 (April 2009), 65–76. doi: 10.1145/1498765.1498785 [35] Feng Xia, Jiaying Liu, Hansong Nie, Y onghao Fu, Liangtian W an, and Xiangjie Kong. 2020. Random W alks: A Review of Algorithms and Applications. IEEE Transactions on Emerging T opics in Computational Intelligence 4, 2 (2020), 95–107. doi: 10.1109/TETCI.2019.2952908 [36] Xiaoling Yi, Jialin Lu, Xiankui Xiong, Dong Xu, Li Shang, and Fan Y ang. 2023. Graph Representation Learning for Microarchitecture Design Space Exploration. In 2023 60th ACM/IEEE Design Automation Conference (D AC) . 1–6. doi: 10.1109/ DA C56929.2023.10247687 [37] Hengrui Zhang, August Ning, Rohan Baskar Prabhakar, and David W entzla. 2025. LLMCompass: Enabling Ecient Hardware Design for Large Language Model Inference. In Proceedings of the 51st A nnual International Symposium on Computer A rchitecture (Buenos Aires, Argentina) (ISCA ’24) . IEEE Press, 1080–1096. doi: 10.1109/ISCA59077.2024.00082 [38] Xin Zheng, Mingjun Cheng, Jiasong Chen, Huaien Gao, Xiaoming Xiong, and Shuting Cai. 2024. BSSE: Design Space Exploration on the BOOM With Semi- Supervised Learning. IEEE Transactions on V ery Large Scale Integration (VLSI) Systems 32, 5 (2024), 860–869. doi: 10.1109/T VLSI.2024.3368075

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment