Hyperparameter Trajectory Inference with Conditional Lagrangian Optimal Transport

Neural networks (NNs) often have critical behavioural trade-offs that are set at design time with hyperparameters, such as reward weights in reinforcement learning or quantile targets in regression. Post-deployment, however, user preferences can evolv…

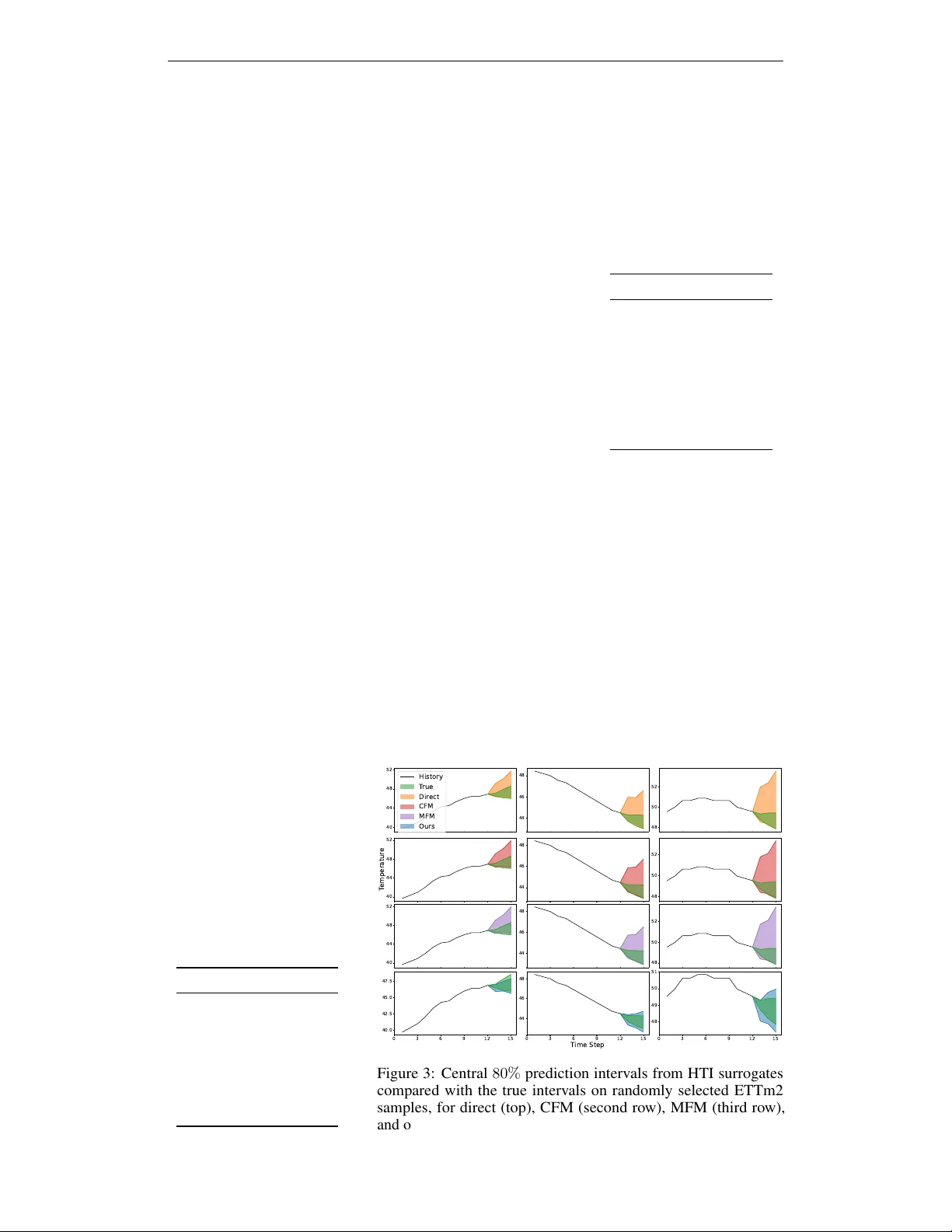

Authors: Harry Amad, Mihaela van der Schaar