Detecting Transportation Mode Using Dense Smartphone GPS Trajectories and Transformer Models

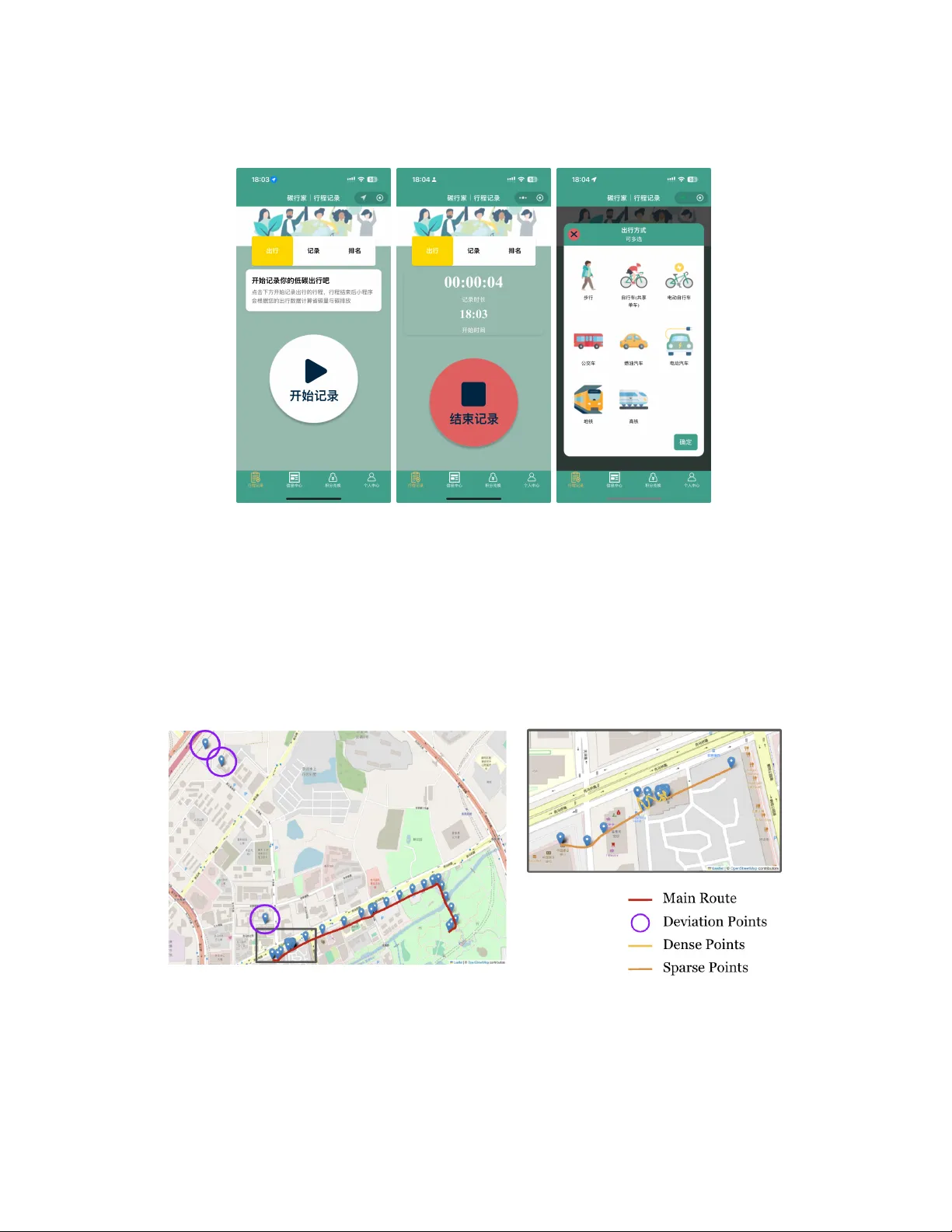

Transportation mode detection is an important topic within GeoAI and transportation research. In this study, we introduce SpeedTransformer, a novel Transformer-based model that relies solely on speed inputs to infer transportation modes from dense sm…
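To illustrate what a speed-only input could look like, the sketch below derives a per-segment speed sequence from timestamped GPS fixes using the haversine distance. It is not taken from the paper. The function names `haversine_m` and `speeds_mps`, the `(t_seconds, lat, lon)` tuple layout, and the choice to skip non-increasing timestamps are all assumptions made for this example, and the authors' actual preprocessing may differ.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres (spherical approximation)


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    # Clamp to 1.0 to guard against floating-point overshoot before asin.
    return 2 * EARTH_RADIUS_M * math.asin(min(1.0, math.sqrt(a)))


def speeds_mps(fixes):
    """Turn time-sorted (t_seconds, lat, lon) fixes into segment speeds in m/s.

    Hypothetical helper: pairs with a non-positive time gap are skipped
    rather than interpolated.
    """
    speeds = []
    for (t0, lat0, lon0), (t1, lat1, lon1) in zip(fixes, fixes[1:]):
        dt = t1 - t0
        if dt <= 0:
            continue  # duplicate or out-of-order timestamp
        speeds.append(haversine_m(lat0, lon0, lat1, lon1) / dt)
    return speeds


# Illustrative fixes (made-up coordinates on the equator, 10 s apart).
example_fixes = [
    (0, 0.0, 0.000),
    (10, 0.0, 0.001),
    (10, 0.0, 0.001),  # duplicate timestamp, skipped
    (20, 0.0, 0.002),
]
example_speeds = speeds_mps(example_fixes)
```

A sequence such as `example_speeds` is the kind of one-dimensional signal a speed-based sequence classifier would consume. Windowing, normalization, and noise filtering are left out here.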

Authors: Yu, ong Zhang, Othmane Echchabi