Deep Learning Multi-Horizon Irradiance Nowcasting: A Comparative Evaluation of Three Methods for Leveraging Sky Images

We investigate three distinct methods of incorporating all-sky imager (ASI) images into deep learning (DL) irradiance nowcasting. The first method relies on a convolutional neural network (CNN) to extract features directly from raw RGB images. The se…

Authors: Erling W. Eriksen, Magnus M. Nygård, Niklas Erdmann, Heine N. Riise

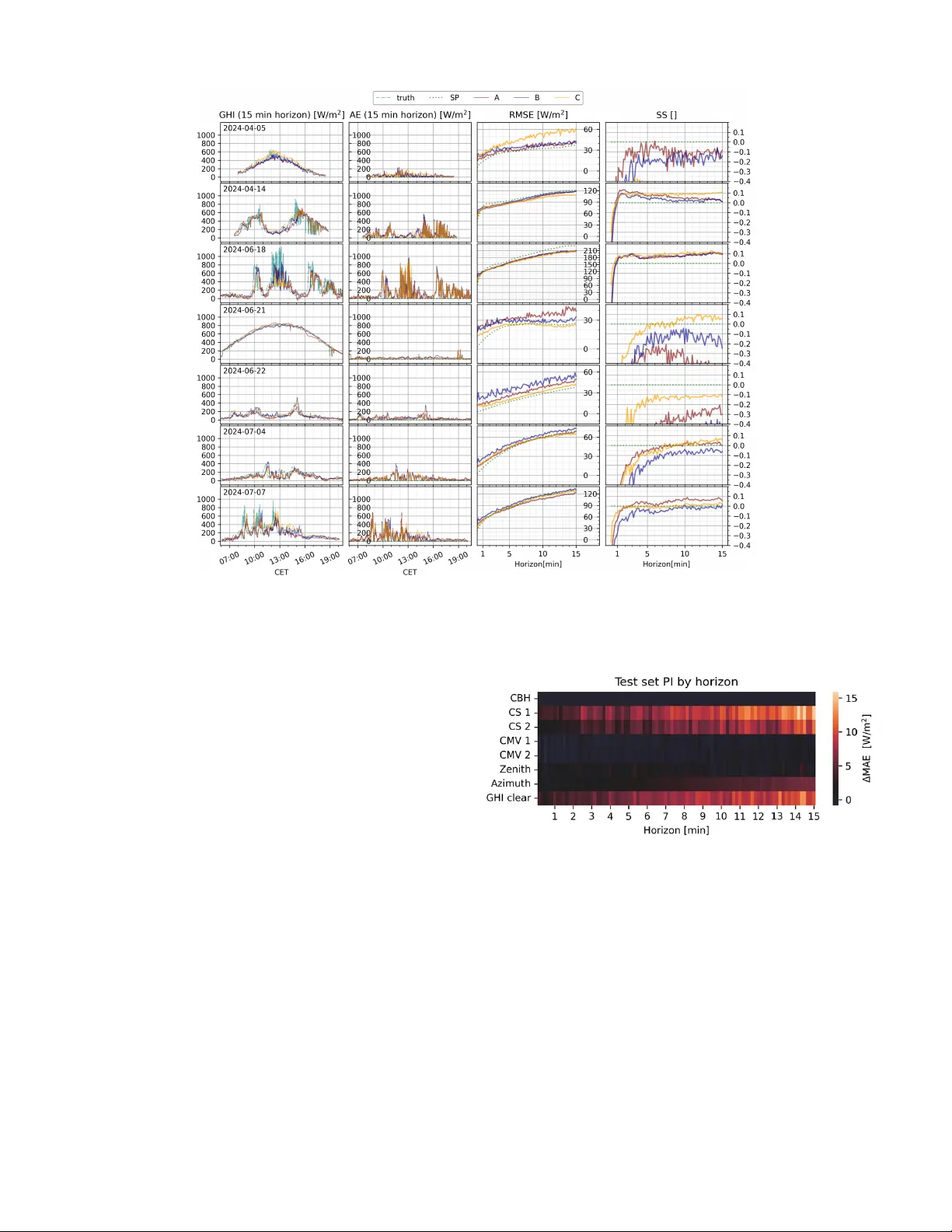

Abstract: We investigate three distinct methods of incorporating all-sky imager (ASI) images into deep learning (DL) irradiance nowcasting. The first method relies on a convolutional neural network (CNN) to extract features directly from raw RGB images. The second method uses state-of-the-art algorithms to engineer 2D feature maps informed by domain knowledge, e.g., cloud segmentation, the cloud motion vector, solar position, and cloud base height. These feature maps are then passed to a CNN to extract compound features. The final method relies on aggregating the engineered 2D feature maps into time-series input. Each of the three methods was then used as part of a DL model trained on a high-frequency, 29-day dataset to generate multi-horizon forecasts of global horizontal irradiance up to 15 minutes ahead. The models were then evaluated using root mean squared error and skill score on 7 selected days of data. Aggregated engineered ASI features as model input yielded superior forecasting performance, demonstrating that integration of ASI images into DL nowcasting models is possible without complex spatially-ordered DL architectures and inputs, underscoring opportunities for alternative image processing methods as well as the potential for improved spatial DL feature processing methods.
TABLE I
LIST OF ACRONYMS USED IN THE PRESENT WORK

| Acronym | Description |
|---|---|
| PV | Photovoltaics |
| GHI | Global Horizontal Irradiance |
| ASI | All-Sky Imager |
| FOV | Field of View |
| NWP | Numerical Weather Prediction |
| DL | Deep Learning |
| DNN | Dense Neural Network |
| CNN | Convolutional Neural Network |
| LSTM | Long Short-Term Memory |
| CC | Cloud Classification |
| CS | Cloud Segmentation |
| CMV | Cloud Motion Vector |
| CBH | Cloud Base Height |
| COT | Cloud Optical Thickness |
| HSI | Hue Saturation Intensity |
| LOCF | Last Observation Carried Forward |
| PCA | Principal Component Analysis |
| PI | Permutation Importance |
| AE | Absolute Error |
| MAE | Mean Absolute Error |
| MSE | Mean Squared Error |
| RMSE | Root Mean Squared Error |
| SS | Skill Score |
| IFE | Institute for Energy Technology |
| WMO | World Meteorological Organization |

Erling W. Eriksen, Magnus M. Nygård and Heine N. Riise are with the Institute for Energy Technology. Niklas Erdmann is with the University of Oslo Department of Technology Systems. (Corresponding author: Erling W. Eriksen)

I. INTRODUCTION

Solar photovoltaics (PV) has more than doubled its share of renewable energy over the past 5 years, bringing with it the demand for solutions to predict and manage variability in production [1]. Information about future PV production, given through forecasts, can be used for power plant and grid control and energy market trading, to minimize imbalance settlements, and to reduce forecast error penalties [2], [3], [4]. Forecasts can also serve as a tool in systems combining PV with storage through hydrogen or batteries, where unregulated power generation with short-term variability is likely to result in increased degradation of the system [5], [6], [7]. The selection of a forecasting method depends on the target application and required forecast horizon.
Numerical Weather Prediction (NWP) and satellite methods are valuable for day-ahead trading, while All-Sky Imager (ASI) and satellite methods can be leveraged towards intraday trading and advanced control of grids and power plants. Forecasts utilizing ASIs usually target horizons 0 to 30 minutes into the future, a horizon range commonly referred to as nowcasting [8]. In this domain, the state-of-the-art methods consist of Deep Learning (DL) models applying images from ground-based ASIs and historical PV or irradiance time-series data as inputs [8], [9], [10]. Within DL nowcasting using ASIs, modern approaches vary, but Convolutional Neural Network (CNN) based regression methods are most frequently applied due to their aptitude for image inputs [11].

Although there have been recent strides, even the most promising nowcasting methods struggle to consistently perform on a level that is useful for applications. This illustrates the significant challenge of predicting the chaotic nature of the atmosphere. All recent development of successful nowcasting methods uses ASI images, making it essential to understand which aspects of ASI images are most useful for DL nowcasting models. Many advances focus on purely data-driven techniques, applying new DL architectures. However, as seen in other AI applications, such as protein folding, including structural and physical information in the DL system can often create a more useful and accurate model [12].

To investigate the contributions of ASI image information for nowcasting, this work compares three different methods of introducing ASI images into a Long Short-Term Memory (LSTM) based DL model for solar forecasting. Two of these utilize a CNN module for image inputs or feature maps in a hybrid CNN-LSTM architecture, a promising approach aiming for the separate extraction of useful spatial and temporal patterns [13], [14].
The CNN-LSTM architecture has been explored in earlier works, but a structured mapping of which image qualities or feature extraction schemes are useful for this architecture has not been investigated. Focusing on this, we make the following contributions.

• Comparative evaluation of three ways to leverage ASI images for LSTM-based nowcasting models, showing that the performance is highly dependent on the preprocessing of the data.
• Demonstration of a dual camera setup for pixelwise cloud segmentation (CS), cloud motion vectors (CMV), solar position, and stereoscopic cloud base height (CBH) as input to a nowcasting DL model.
• High latitude (≈ 60°) evaluation of nowcasting models, expanding the applicability of nowcasting northward.

These contributions are achieved through the following approach: Firstly, image features are extracted and engineered from an ASI setup. Secondly, an LSTM architecture is constructed, combined with a CNN for image features. Thirdly, this architecture is trained with 29 days of 10-second-resolution, historical global horizontal irradiance (GHI) data, in combination with either (A) raw RGB images that are interpreted by the CNN and fed into the LSTM, (B) engineered feature maps that are interpreted by the CNN and fed into the LSTM, or (C) aggregated features extracted from the feature maps, fed into the LSTM. This results in three different model architectures that are tested on seven days of 10-second-resolution GHI data under various atmospheric conditions. These results are discussed in the context of how ASI images are best processed as inputs to a DL model, how feature extraction and engineering affect model performance, and how irradiance and cloud conditions impact model evaluation. This will inform future ASI nowcasting development and improve nowcasting as a tool for handling the variability associated with solar energy generation.

II. METHODS

A. Measurement setup and data collection

The irradiance data were collected using a spectrally flat ISO 9060 Class A Kipp & Zonen SMP10 pyranometer installed to measure GHI at the Institute for Energy Technology (IFE) in Kjeller, Norway (59°58′20.69″N / 11°3′8.67″E), located in a climate classified as humid continental, warm summer (Dfb) in the Köppen–Geiger climate classification system. In addition to irradiance measurements, image data were collected from two ASI systems (ASI-16/51 from CMS Ing. Dr. Schreder GmbH), henceforth referred to as ASI 1 and ASI 2. The two-camera setup is a requirement for estimating CBH using stereoscopic methods [15]. The positions of the ASIs, separated by 1.12 km, and the pyranometer are shown in Fig. 1. RGB images were captured every ten seconds, with a resolution of 1920 × 1920 pixels, over a period of two years from July 2022 to July 2024. The GHI data were originally recorded with a logging frequency of 1 Hz, but were downsampled by decimation to a frequency of 0.1 Hz to align with the recording frequency of the image data.

Fig. 1. Map showing the locations of ASI 1 and ASI 2 and the pyranometer measuring GHI. The distance between the two cameras is highlighted, and the inset shows example time series data measured by the pyranometer from 13:30 CET to 14:20 CET on June 15th, 2023.

1) Image processing: First, to standardize the orientation of ASI 1 and ASI 2 in relation to each other, the images were rotated to align along a North-South axis using the solar position detected in each image for seven clear sky days between February 2023 and September 2023. The sun was found using a linear threshold of 245 for the mean intensity of the 8-bit RGB channels, along with a center of mass algorithm implemented in SciPy version 1.11.1. The azimuthal correction was determined to be 6.5° and −9.7° for ASI 1 and ASI 2, respectively.
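The sun detection and the GHI decimation described above can be illustrated with a short sketch. This is not the authors' code: the function names `sun_centroid` and `decimate` are hypothetical, the centroid is computed directly in NumPy rather than with the SciPy routine used in the paper, the threshold is assumed to be inclusive, and decimation is assumed to mean keeping every tenth sample without additional filtering.

```python
import numpy as np


def sun_centroid(rgb, threshold=245):
    """Return the (row, col) centroid of pixels whose mean RGB intensity
    reaches the threshold, or None if no pixel qualifies.

    Assumption: `rgb` is an 8-bit array of shape (height, width, 3).
    """
    mean_intensity = rgb.astype(float).mean(axis=2)
    mask = mean_intensity >= threshold  # inclusive threshold is an assumption
    if not mask.any():
        return None
    weights = mean_intensity * mask  # intensity-weighted center of mass
    rows, cols = np.indices(mask.shape)
    total = weights.sum()
    return (rows * weights).sum() / total, (cols * weights).sum() / total


def decimate(series, factor=10):
    """Keep every `factor`-th sample, e.g. 1 Hz -> 0.1 Hz for factor=10."""
    return series[::factor]
```

Comparing the detected azimuth of the centroid with the computed solar azimuth over several clear-sky days would then give a rotation offset of the kind reported for each camera.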
The images were then down-sampled to 100 × 100 pixels using Lanczos resampling implemented in Pillow, to aid computational efficiency [16], [17]. Previous studies report competitive performance down to 64 × 64 pixels when applying image size reduction with a low-pass filter to avoid unwanted aliasing effects [18], [11].

B. Nowcasting methods

Three methods of leveraging ASI images for DL nowcasting were compared. A diagram of the methods, labeled Method A, Method B, and Method C, is shown in Fig. 2. Three types of neural network structures were applied. The first type, the CNN, has been proven to be suitable for leveraging spatial relationships in spatial data sources [19], [20]. The second type, the LSTM, is a type of recurrent neural network that excels at extracting and using temporal information in sequences [21]. The third is a fully connected Dense Neural Network (DNN) layer with a linear activation, used as a regressor for the final GHI output [22]. All methods were implemented using version 2.16.1 of TensorFlow with version 3.3.3 of Keras [23], [24].

Fig. 2. Diagram of the three feature engineering and prediction methods A, B, and C. The output from either Method A, B, or C is concatenated with past GHI values as inputs into the LSTM-DNN bulk of the architecture, which is common for all three methods.

1) Method A: This method represents an end-to-end DL architecture that takes ASI images along with historical GHI data as inputs. It consists of a CNN constructed to extract and compress information from a series of raw RGB images from ASI 1 into a multivariate input series. The output from the CNN layers, together with historical GHI, is fed into a 2-layer LSTM network that extracts temporal information. A 2-layer LSTM was chosen due to its ability to handle complex temporal patterns over different timescales, which gave increased performance in initial testing [25]. The outputs are then passed to a linear DNN layer, which predicts the future GHI values in a multi-horizon forecast. As shown in Fig. 2, Method A differs from Method B and C by receiving spatially resolved information from the RGB images, but without physically informed engineered features.

2) Method B: This method is similar to Method A, except that instead of downsampled ASI images, it receives engineered feature maps of the same size as the downsampled ASI images as input. These feature maps, described in Section II-C, include pixelwise CS, CMV, and solar position for ASI 1 and ASI 2, as well as CBH exclusively for ASI 1. The CBH was only extracted for ASI 1 due to the method's high computational cost. Method B receives both spatial resolution and physical information in the form of maps of the engineered features.

3) Method C: This method utilizes an aggregation, detailed in Section II-C5, of the engineered feature maps of Method B into multivariate time series, which are then used as input into a 2-layer LSTM network with a DNN output layer. Since the spatial information from the engineered feature maps is aggregated, this method receives physically informed input without spatial resolution.

C. Feature engineering

Feature engineering can help compensate for the fact that not all types of information can be synthesized from raw data by a DL model [26], [27]. To investigate feature engineering of ASI images for DL nowcasting, the following feature engineering techniques were implemented to extract descriptive features from the images captured by the ASIs.

1) Fisheye distortion correction: To account for the distortion of the zenith in each camera, as caused by the geometry of the fisheye lens, a reverse image projection was used to project the fisheye images to a flat plane.
The ASI lens is described by an equidistant projection, characterized by the relation:

$$\frac{\theta_{pixel}}{\theta_{FOV}} = \frac{r_{pixel}}{R_{img}} \tag{1}$$

where $\theta_{pixel}$ and $r_{pixel}$ are the zenith angle and image radius of each pixel, respectively, $R_{img}$ is the total image radius, and $\theta_{FOV}$ is the zenith of the camera's field of view (FOV). Real lens geometries may deviate from the mathematical description, and so a table of deviations from the relationship described in Eq. (1) was received from the ASI manufacturer, publicly available in a Zenodo repository [28]. These values were used to create a 7th-order polynomial fit describing the deviations, minimizing the MAE due to distortion correction to under 0.15%. This polynomial was then used in combination with Eq. (1) to translate between the radius of each pixel in the raw image data and its zenith angle. This transformation was also applied to subsequent engineered feature map representations. The upper left subpanel in Fig. 3 shows an example RGB image captured by ASI 1 at 12:46:10 CET on 2023-08-04. The subplot below shows the same image after the described projection and correction.

2) Cloud segmentation: The first step in the cloud segmentation of each image was the determination of the sky modality, which describes the number of peaks in the RGB pixel histograms and is characteristic of the applicability of different types of segmentation thresholds [29], [30]. This was done similarly to the method proposed in [30], using the standard deviation of the saturation (S) in the Hue Saturation Intensity (HSI) image space, defined as:

$$S = 1 - \frac{3}{R + G + B}\,\min(R, G, B) \tag{2}$$

where $R$, $G$ and $B$ are the pixel intensities, normalized to the range $[0, 1]$, of the red, green, and blue channels, respectively [31]. For the camera model and settings, an appropriate threshold between uni- and multimodal images for the standard deviation of $S$ of each image was found through trial-and-error to be 0.09.
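As an illustration of Eq. (1) and Eq. (2), the following minimal sketch maps a pixel radius to a zenith angle under the ideal equidistant model and classifies an image as uni- or multimodal from the spread of its saturation. The function names are hypothetical, the manufacturer's polynomial correction is not included, and treating a standard deviation above 0.09 as multimodal is an assumption about the direction of the threshold.

```python
import numpy as np


def pixel_zenith(r_pixel, r_img, theta_fov):
    """Ideal equidistant projection, Eq. (1): zenith grows linearly with radius."""
    return theta_fov * r_pixel / r_img


def saturation(rgb):
    """HSI saturation, Eq. (2), for an array of shape (..., 3) scaled to [0, 1].

    Black pixels (R + G + B = 0) are assigned S = 0 to avoid division by zero;
    this convention is an assumption, not taken from the paper.
    """
    total = rgb.sum(axis=-1)
    minimum = rgb.min(axis=-1)
    safe_total = np.where(total > 0, total, 1.0)
    return np.where(total > 0, 1.0 - 3.0 * minimum / safe_total, 0.0)


def is_multimodal(rgb, threshold=0.09):
    """Assumed rule: a large spread in saturation indicates a multimodal sky."""
    return float(saturation(rgb).std()) > threshold
```

A uniformly gray image has zero saturation everywhere and would be treated as unimodal, while an image mixing gray cloud and saturated blue sky has a wide saturation spread.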
If the image was found to be multimodal, a K-means clustering was performed in the RGB color space to cluster pixels into 3 groups, as shown in Fig. 8 in Appendix A [32]. K-means was chosen as the segmentation algorithm as it has shown high accuracy in segmenting the image space, without requiring labeled data [33]. Preprocessing of the images with normalization and a dimensionality reduction of the RGB image using principal component analysis (PCA) with 2 components improved the speed and separability of the clustering [34]. Three clusters of pixel value distributions were identified and classified into clouds, sky, and the frame of the image. If the image excluding the frame was unimodal, meaning overcast or clear weather conditions, a linear threshold was applied on each image using the normalized Blue/Red ratio ($nBR$), as

$$nBR = \frac{B - R}{B + R} \tag{3}$$

to detect possible small clouds or gaps in the cloud cover, as proposed by Li et al. [30]. The thresholds were found through visual inspection to be 0.2 and 0.35 for clear and overcast weather conditions, respectively.

Fig. 3. Example image and feature maps extracted for ASI 1 at 2023-08-04 12:46:10 CET.

3) Cloud Motion Vector: For ASI 1 and ASI 2, the CMV was estimated using subsequent images by application of the Farnebäck optical flow algorithm implemented in version 4.8.0 of the Python library OpenCV [35], [36]. Using optical flow methods for cloud motion estimation has been found to yield more precise results than window correlation methods [37]. Farnebäck optical flow was investigated by Raut et al., who compared extracted cloud motion vectors with radar measurements and concluded that the CMV estimates show long-term stability, but with uncertainty likely in part due to measurement and cloud geometry [38]. An example of an extraction can be seen in the bottom right panel of Fig. 3.
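The normalized Blue/Red ratio thresholding of Eq. (3), used for unimodal images, can be sketched as follows. The function names are hypothetical, the channel order is assumed to be RGB, and labelling pixels with an $nBR$ below the threshold as cloud is an assumption based on clear sky being bluer than cloud; the paper does not state the direction of the comparison explicitly.

```python
import numpy as np


def normalized_blue_red_ratio(rgb):
    """Eq. (3): nBR = (B - R) / (B + R) for an array of shape (..., 3).

    Pixels with B + R = 0 are assigned nBR = 0 (an assumed convention).
    """
    red = rgb[..., 0].astype(float)
    blue = rgb[..., 2].astype(float)
    denom = blue + red
    safe_denom = np.where(denom > 0, denom, 1.0)
    return np.where(denom > 0, (blue - red) / safe_denom, 0.0)


def cloud_mask(rgb, threshold):
    """Assumed rule: pixels less blue than the threshold are labelled cloud."""
    return normalized_blue_red_ratio(rgb) < threshold
```

With this rule, the reported thresholds would be passed as `threshold=0.2` for images classified as clear and `threshold=0.35` for images classified as overcast.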
The algorithm was applied using the default parameters, except for a window size set to 4% of the image size, a polynomial order of 3, a polynomial σ of 2.0, and two iterations. Parameters were adjusted through trial and error for a robust CMV extraction without excessive blurring, and to fit with the image format. The CMV estimation is sensitive to noise from other moving objects in the FOV of the ASI. Close to ASI 2, there is a biofuel heating facility that expels steam from chimneys located in the FOV of the ASI. This affects the calculation of the CMV and cloud segmentation of this camera. In addition, the area is home to birds and insects that occasionally land or ingress onto the pole-mounted ASI, obscuring the view of the sky. These disturbances were identified and filtered using large CMV inconsistencies between the two cameras. The missing data were then filled by the last observation carried forward (LOCF) to maintain temporal consistency. The filters do not remove the impact of these sources of noise completely, but reduce the number of outliers significantly.

4) Cloud Base Height: The pixel-wise CBH was estimated through the method proposed by Nguyen and Kleissl [15]. Some minor adjustments were made to improve the performance of the algorithm for the available ASI setup. Firstly, to reduce the computational cost, the cloud segmentation was used as a mask to only calculate the CBH for identified cloud pixels. Secondly, the starting correlation window pixel size was reduced to 10 × 10 to account for lower resolution images and set to a maximum limit of 20% of the image. Thirdly, when determining the height of each pixel, many pixels in the circumsolar area were mislabeled by the algorithm as having the highest possible height in the search, likely due to the matching of parts of the sun in the correlation windows.
To mitigate this, a bin corresponding to an unrealistic cloud height was added, accumulating these pixels along with pixels with a high matching uncertainty, which were both discarded. Finally, if a pixel was discarded but a valid height was found for the same cloudy pixel at the previous timestep, this height was inserted. To ensure that the validation made by Nguyen and Kleissl against a ceilometer is representative for the smaller image sizes used in the current work, an investigation of the effect of image size on CBH estimates can be found in Appendix D.

5) Feature dataset construction: Since Method A takes RGB images as inputs, the RGB images were simply passed as a tensor after downsampling, resulting in tensors of shape (bs × lb × w × h × c), where bs is the batch size, lb is the lookback, w and h are the image width and height (in this case 100), and c is the number of channels, corresponding to RGB. Method B takes the 2D feature maps as input, which were stacked in a tensor, resulting in tensors of shape (bs × lb × w × h × c), where the number of channels is the number of engineered feature maps from the cameras. Each camera yields solar position, CS, and two components of cloud motion in the form of the CMV. In addition, both cameras combined yield one CBH mask for ASI 1. Combined, these resulted in a tensor with 9 channels.

Fig. 4. Left panel shows the distribution of training and testing days with respect to time of year and the maximum GHI clear. The gray shaded line indicates days where the maximum solar elevation is below 15°. The right panel shows the distribution of training and testing days' variability and clearness index with respect to 465 other days between 2022-06-09 and 2024-07-07.

Method C takes numerical time series as input, which were constructed from the series of each engineered feature map. The CBH was aggregated as the median of the heights of the identified cloudy pixels.
The CS was aggregated for each ASI as the ratio of cloudy pixels to the total number of pixels in the image. The CMV was aggregated as the mean independently for the x- and y-directions and for each ASI. The solar zenith and azimuth were used as representations of the solar position. Additionally, the simple extraterrestrial irradiance was used to encode the diurnal pattern of solar irradiation, implemented as

$$\mathrm{GHI}_{clear}(t) = G_0 \times \cos(\theta_z(t)) \tag{4}$$

where $G_0$ is the solar constant, i.e., the extraterrestrial irradiation received by the earth from the sun, and $\theta_z$ is the solar zenith angle. The $\mathrm{GHI}_{clear}$ was only used as a feature for Method C to encode the diurnal pattern. This resulted in tensors of shape (bs × lb × c), with 11 channels corresponding to the different extracted features.

D. Data, training and evaluation

The selected dataset contains 36 days of 10-second-resolution data from 2022-06-09 until 2024-07-07. Timestamps with a solar elevation angle lower than 10° were excluded from the dataset, resulting in a total dataset size of 154,817 timestamps. The daily maximum extraterrestrial $\mathrm{GHI}_{clear}$, variability index, and clearness index of the days are shown in Fig. 4. The right panel shows the distribution of days in the training and test set with respect to the daily mean of the clearness index $\bar{k}$ and daily mean variability $\bar{V}$. These are used as proposed in [39], where

$$\bar{k} = \frac{1}{t_f} \sum_{t=0}^{t_f} k(t) \tag{5}$$

and

$$\bar{V} = \sqrt{\frac{1}{t_f} \sum_{t=1}^{t_f} \bigl(k(t) - k(t-1)\bigr)^2} \tag{6}$$

where

$$k(t) = \frac{\mathrm{GHI}(t)}{\mathrm{GHI}_{clear}(t)} \tag{7}$$

calculated with $\mathrm{GHI}_{clear}(t)$ as defined in Eq. (4) and measurement $\mathrm{GHI}(t)$ from the aforementioned pyranometer. For both $\bar{k}$ and $\bar{V}$, $t_f$ is the last index of the timesteps in a day.
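The quantities in Eq. (4) to Eq. (7) can be computed with a few lines of code. The sketch below is illustrative only: the function names are hypothetical, the value 1361 W/m² for the solar constant is an assumption (the paper does not state the value used), and the daily mean clearness divides by the number of samples, which differs slightly from the $1/t_f$ normalization written in Eq. (5).

```python
import math

SOLAR_CONSTANT = 1361.0  # W/m^2, assumed value for G_0


def ghi_clear(zenith_deg, g0=SOLAR_CONSTANT):
    """Eq. (4): simple extraterrestrial irradiance on a horizontal plane."""
    return g0 * math.cos(math.radians(zenith_deg))


def clearness_index(ghi, ghi_clear_values):
    """Eq. (7): k(t) = GHI(t) / GHI_clear(t), element by element."""
    return [g / gc for g, gc in zip(ghi, ghi_clear_values)]


def daily_mean_clearness(k):
    """Eq. (5), normalized here by the number of samples (see lead-in)."""
    return sum(k) / len(k)


def daily_variability(k):
    """Eq. (6): root mean square of the step-to-step change in k."""
    squared_steps = [(k[t] - k[t - 1]) ** 2 for t in range(1, len(k))]
    return math.sqrt(sum(squared_steps) / len(squared_steps))
```

A perfectly steady clearness index gives a variability of zero, whereas rapid alternation between clear and shaded conditions drives the variability up, which is the distinction the test-day analysis relies on.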
The dataset was split into a training and validation set consisting of 120,339 timestamps in 29 days up until 2024-03-27, while the test set consisted of 33,902 timestamps in 7 days from 2024-04-05 until 2024-07-07, respecting temporal consistency by testing on days in the future with respect to the training data. Out of the training and validation set, 43,381 timestamps were used to validate different model architectures and hyperparameter configurations. Fig. 4 shows the distribution of the selected training and testing days within the dataset period with respect to daily maximum GHI, daily mean clearness index $\bar{k}$, and the daily mean variability index $\bar{V}$. The training and test sets are shown within the distribution of 465 days from the same period between 2022-06-09 and 2024-07-07. The figure demonstrates that the selected days in both the training and test sets cover a representative range of the weather conditions experienced at the location. All GHI data were normalized using the solar constant $G_0$.

The hyperparameters of the model were optimized using the training and validation sets. The optimized hyperparameters were batch size, learning rate, training epochs, number of LSTM layers, number of neurons in LSTM layers, LSTM output layer, number of CNN convolutional (Conv.) layers, number of CNN Conv. filters, CNN Conv. kernel size, and at what layer the CNN output was concatenated to the LSTM layers. Optimization of most of the hyperparameters followed a trial-and-error approach, while the batch size and learning rate were explored using a rough grid search for learning rates ($lr$) from 0.0000005 to 0.001, doubling for each step, with a batch size ($bs$) of 1. Then, $lr$ was assumed approximately dependent on $bs$, and scaled by the relation

$$lr_{new} = \frac{bs_{new}}{bs_{old}} \times lr_{old} \tag{8}$$

as has been shown empirically in mini-batch scaling of CNNs [40].
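The doubling grid and the linear scaling rule of Eq. (8) can be written out as a small sketch. The function names are hypothetical, and the grid is assumed to stop at the last doubled value that does not exceed the upper bound, since 0.001 itself is not reached by doubling from 0.0000005.

```python
def learning_rate_grid(start=5e-7, stop=1e-3):
    """Learning rates from `start`, doubling each step, up to at most `stop`."""
    rates = []
    lr = start
    while lr <= stop:
        rates.append(lr)
        lr *= 2
    return rates


def scale_learning_rate(old_lr, old_bs, new_bs):
    """Eq. (8): scale the learning rate linearly with the batch size."""
    return new_bs / old_bs * old_lr
```

For example, a learning rate of 1e-4 found at a batch size of 1 would scale to roughly 3.2e-3 at a batch size of 32 under this relation.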
When approximate $bs$ and $lr$ were determined, a limited search of $[lr/10, lr/5, lr/2, lr, 2lr, 5lr, 10lr]$ was carried out. The number of training epochs was determined using early stopping on the validation set. All models were trained using mean absolute error (MAE) loss and the Adam optimizer algorithm implemented in version 2.16.1 of TensorFlow [41]. The MAE loss was chosen to avoid the strong punishment of the mean squared error (MSE) loss on large errors, which often leads to an overly smooth forecast. The architectures were kept small, with 42,640 parameters in Method C and 85,300 parameters in Method A and Method B. For a more detailed description of the DL parameters in each of the three methods, see Table IV in Appendix C.

Each model issued multi-horizon forecasts ranging from 10 seconds to 15 minutes, in increments of 10 seconds, totaling 90 individual forecast horizons. The output of each model was evaluated using the RMSE, the MAE, and the skill score $SS$ [39], with

$$SS = 1 - \frac{\mathrm{RMSE}_{forecast}}{\mathrm{RMSE}_{reference}} \tag{9}$$

For the SS, the $\mathrm{RMSE}_{reference}$ was the RMSE of a clearness persistence model defined as

$$f_{persistence}(t + h) = k(t) \times \mathrm{GHI}_{clear}(t + h) \tag{10}$$

where $h$ is the horizon of the forecast and $\mathrm{GHI}_{clear}$ is as defined in Eq. (4).

E. Feature importance

To evaluate the importance of the input features, feature permutation importance (PI) was used [42]. PI shuffles the input dataset of a trained model, one feature at a time. This destroys the temporal information of the feature, while maintaining the feature's exact distribution. The PI is then quantified for each feature as

$$PI_i = \mathrm{MAE}_i - \mathrm{MAE}_{ref} \tag{11}$$

where $\mathrm{MAE}_{ref}$ is the MAE for a model predicting on input data without any shuffled features and $\mathrm{MAE}_i$ is the MAE for a model predicting on input data with feature $i$ shuffled along the time axis. This results in an evaluation of feature usefulness to the model.
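The evaluation quantities of Eq. (9) to Eq. (11) can be sketched as below. This is a simplified illustration rather than the evaluation code used in the study: the function names are hypothetical, `predict` stands in for any trained model, and the input is assumed to be a 2D array of shape (samples, features) rather than the lookback tensors used by the models.

```python
import numpy as np


def rmse(y_true, y_pred):
    """Root mean squared error between two equally long sequences."""
    diff = np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean(diff ** 2)))


def clearness_persistence(k_now, ghi_clear_future):
    """Eq. (10): hold the current clearness index and rescale by GHI_clear(t + h)."""
    return k_now * ghi_clear_future


def skill_score(y_true, y_forecast, y_reference):
    """Eq. (9): positive when the forecast beats the reference."""
    return 1.0 - rmse(y_true, y_forecast) / rmse(y_true, y_reference)


def permutation_importance(predict, x, y, feature, repeats=10, seed=0):
    """Eq. (11): mean increase in MAE after shuffling one feature column."""
    rng = np.random.default_rng(seed)
    mae_ref = np.mean(np.abs(y - predict(x)))
    deltas = []
    for _ in range(repeats):
        shuffled = x.copy()
        shuffled[:, feature] = rng.permutation(shuffled[:, feature])
        deltas.append(np.mean(np.abs(y - predict(shuffled))) - mae_ref)
    return float(np.mean(deltas))
```

A feature the model ignores yields a PI of zero, since shuffling it leaves the predictions unchanged, while a feature the model depends on yields a positive PI.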
For each feature $i$, the mean of ten repetitions was used to minimize the effect of stochastic variability in the shuffling.

III. RESULTS AND DISCUSSION

A. Overall model performance

The best models obtained with each of the three methods described in Fig. 2 were evaluated on the test data set. Fig. 5 presents the aggregated performance metrics for Model A, Model B, and Model C for the 7 test days together with the results for the smart persistence model used as reference. The bottom heat map shows the SS, while the top panel shows the RMSE plotted for each horizon. The lower panel demonstrates that all three models outperform the smart persistence model for time horizons longer than 1 minute. On average, Model C performs the best, with an average RMSE of 87.0 W/m², compared with 87.8 W/m² for Model A and 90.3 W/m² for Model B. This is also seen in SS across horizons, where the average SS for horizons over 1 minute for Model C is 5.7%, compared with 5.3% for Model A and 2% for Model B. For all three methods, both the RMSE and SS generally increase with horizon, with an exception between 2 and 4 minutes for Models A and B.

Fig. 5. A comparison of RMSE and SS for models trained using the three methods shown in Fig. 2.

B. Daily model performance

Taking a closer look at the metrics for the test dataset, Fig. 6 shows forecasts and metrics for each of the 7 test days. The first column shows the measured GHI along with the forecasts for a 15-minute horizon, while the second column shows the associated absolute error $AE_i = |y_i - \hat{y}_i|$ for the 15-minute horizon. The third and fourth columns show RMSE and SS, respectively, where the metric is shown for each day and as dependent on forecast horizon.

Table II shows the daily mean clearness index $\bar{k}$, the daily mean variability index $\bar{V}$, and the cloud classification (CC) based on WMO cloud genera (see Table III), determined through manual inspection using the WMO Cloud Atlas Cloud Identification guide [43] and resolved for the morning, midday and evening of the day. The mean daily clearness and variability were calculated from Eq. (5) and Eq. (6).

The days in the test set can generally be placed into two categories: days with a low variability index and days with a high variability index. The days with lower variability are 2024-04-05, 2024-06-21, 2024-06-22, and 2024-07-04. These days show low RMSE, ranging from 10 W/m² to 75 W/m². Although the RMSE is low, the SS is most often found below 0, showing (as expected) that the performance of a smart persistence model improves with lower variability. For these days, Model C predominantly outperforms Model A and B, except for 2024-04-05, where it underperforms the other models by a large margin. This day is characterized as a completely overcast day with optically thin Altostratus (As) clouds. This causes the irradiance signal to be dampened, but the diurnal trend, i.e., low irradiance in the early morning and late afternoon and higher irradiance around noon, is still present. In these conditions, the cloud optical thickness (COT) is an important quality, as variations within overcast conditions are largely due to variations in COT (accounting for the diurnal cycle). COT is not part of the engineered features of Method B and Method C due to the lack of reliable methods to estimate COT directly from ASI images. Here, Method A has the advantage of flexibility to attempt to extract the most relevant information from the image, regardless of what is deemed physically important or has an available robust method of estimation. Model B has a higher performance under these conditions than Model C, indicating that the richer information present in a spatial representation may compensate to some degree for lacking information in feature representation.
The days in the test set with more variable conditions are 2024-04-14, 2024-06-18, and 2024-07-07. The forecasts for these days are characterized by a higher RMSE, ranging from 30 W/m² to 210 W/m². The skill score is also generally higher than for days with less variability. For these days, Models A and B exhibit similar patterns, where the SS of the models rises and falls at similar horizons, but with a positive offset for Model A, showing a better performance. Model C does not follow the same trends as closely, which is most apparent on 2024-04-14, when its SS is stable across horizons over 1 minute. Model C is better at predicting the last part of the day from 13:00 onward, corresponding to the transition from overcast to partially cloudy skies. Models A and B achieve a higher performance for horizons between 1 and 2 minutes, indicating that their performance degrades as horizons become longer due to an imbalanced focus on transient cloud movements present in the 2D images and features. The maximum SS for all methods is also observed on the days with high variability. For Method A, the maximum of 13% is found at a horizon of 1 minute and 40 seconds on 2024-04-14. For Method B, the maximum of 11% is found at a horizon of 13 minutes and 10 seconds on 2024-06-18, and for Method C, the maximum of 13% is found at a horizon of 13 minutes and 20 seconds on 2024-06-18. This shows that the DL methods excel exactly where persistence models fail: when the atmosphere is unstable and the irradiance is likely to change.

Fig. 6. From the left: the first column shows GHI for each test day, along with the forecasts made by Models A, B, and C (shown in Fig. 2) for a 15-minute forecast horizon. The second column shows the absolute error (AE) of each forecast made at each timestep. The third column shows the RMSE, while the fourth column shows the SS as defined in Eq. (9). Note that SP refers to the smart persistence model.

C. Feature importance

The ASI features of the best model (Model C) were investigated, with the PI shown in Fig. 7, where the upper panel shows the PI aggregated by horizon calculated on the test data. Shuffling the CS features had the largest impact on the model, along with significant effects from GHI_clear and the azimuth. Shuffling the CBH and CMV features, on the other hand, showed little to no impact on performance. The feature importance also increases with the horizon, showing that ASI feature inputs become more important as the horizon increases. Supporting evidence of this trend can also be seen in the relationship between the decrease in the autocorrelation of the GHI and the correlations between the other features and the future GHI (see Fig. 9 in Appendix B).

Fig. 7. PI calculated from Eq. (11) for the features extracted by Method C, aggregated by forecast horizon, where a higher ΔMAE indicates higher feature importance. GHI_clear is calculated as in Eq. (4). The numbers "1" and "2" refer to the ASI from which the features were extracted (see Fig. 1).

TABLE II. Test set daily characteristics: k̄, V̄, and cloud classification (CC) based on the WMO genera (see Table III). Morning is from 6–10, midday from 10–15, evening from 15–20. Slashes between CC genera indicate uncertainty in the determination of CC genera, which were determined using the WMO Cloud Classification Guide [43].

| Day | V̄ | k̄ | CC morning | CC midday | CC evening |
|---|---|---|---|---|---|
| 04-05 | 0.0061 | 0.56 | As | As | As |
| 04-14 | 0.050 | 0.74 | Cs, Ac | St | As, Cu into Cl |
| 06-18 | 0.061 | 0.48 | Ns | Ac, Cu, Cb/Ns | Cb/Ns into Cu, Ac |
| 06-21 | 0.0039 | 0.99 | Cl | Cl | Cl with small Ac |
| 06-22 | 0.0027 | 0.19 | As, Cs | Ns | St/As, Ns |
| 07-04 | 0.0023 | 0.20 | Ns | St, Ns/Cb | As/St, Ns |
| 07-07 | 0.023 | 0.35 | St, Cu, As | Cu, As, Ac, Cb/Ns | Ns, As |

TABLE III. WMO cloud genera used in cloud classification [44].

| Shorthand/CC | Genera |
|---|---|
| Cb | Cumulonimbus |
| Ns | Nimbostratus |
| As | Altostratus |
| St | Stratus |
| Cu | Cumulus |
| Ac | Altocumulus |
| Ci | Cirrus |
| Cl | Clear |

The feature importance shows that the model utilizes the CS to a large degree, indicating that improving cloud segmentation algorithms and the estimation of cloudiness is a promising route for improving performance. Features that are not used by the model, such as the CBH and CMV, may be discarded to save computation. This will reduce the computational time of feature engineering and model training. For the CBH, this also avoids the costly deployment of a second ASI required to implement stereoscopic CBH algorithms. However, each of the feature extraction methods also contains an inherent uncertainty, created by the aspects of the image data it uses (resolution, distortion, noise) and the specifics of its methodology. As a further step, improving the robustness of these features or enhancing post-processing may allow them to contribute to the performance of future models.
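The shuffling procedure behind the PI can be sketched as follows. This is a minimal illustration, assuming that the importance of a feature is the increase in MAE (ΔMAE) after that feature's values are shuffled across samples; Eq. (11) is not reproduced in this section, and the per-horizon aggregation used in the paper is omitted. The toy model, the two-feature layout, and all names are hypothetical.

```python
import random


def mae(pred, target):
    """Mean absolute error between two equal-length sequences."""
    return sum(abs(p - t) for p, t in zip(pred, target)) / len(target)


def permutation_importance(predict, rows, target, n_features, seed=0):
    """Return the MAE increase caused by shuffling each feature column in turn."""
    rng = random.Random(seed)
    baseline = mae(predict(rows), target)
    deltas = []
    for j in range(n_features):
        column = [row[j] for row in rows]
        rng.shuffle(column)  # break the link between feature j and the target
        shuffled = [row[:j] + [v] + row[j + 1:] for row, v in zip(rows, column)]
        deltas.append(mae(predict(shuffled), target) - baseline)
    return deltas


def toy_predict(rows):
    """Hypothetical stand-in model: uses feature 0 (cloud fraction), ignores feature 1."""
    return [800.0 * (1.0 - row[0]) for row in rows]


# Feature 0 plays the role of a CS-like input, feature 1 of an unused input.
rows = [[i / 9.0, float(i % 3)] for i in range(10)]
target = toy_predict(rows)
deltas = permutation_importance(toy_predict, rows, target, n_features=2)
print(deltas)
```

In this toy setup the ignored feature yields a ΔMAE of exactly zero, which mirrors how features such as the CBH and CMV would appear if the trained model does not rely on them.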
The insight that CS improves performance can also be used in model development, either through the aforementioned improved feature engineering or as an integrated part of the model architecture, for example, as a secondary target, as in [45]. When developing ASI equipment, one should consider what image qualities may be altered to make sky and cloud more separable, for example, by lowering the camera exposure or using HDR images to minimize overexposure in the circumsolar area and on cloud edges.

D. Method advantages and drawbacks

Determining which of the three approaches is the optimal strategy for including ASI images in DL nowcasting models is a multi-faceted judgment. Each of the methods has advantages and disadvantages related to algorithmic complexity, computational needs, performance and robustness.

For Method A, the main advantage of this end-to-end DL model is the flexibility and ease of using raw RGB data without feature engineering. This avoids the expense of some computationally costly calculations, e.g., the pixel-wise stereoscopic CBH, and avoids the inherent uncertainty introduced by the application of feature engineering algorithms. Additionally, this method provides flexibility to the model by allowing useful image qualities to be selected inside the DL method itself. The main advantage of this method is also its drawback. Since the model has to condense the information in the RGB images, as well as extract temporal information, its task is more complex than using already extracted feature information. This leads to longer training times and requires more training data. This was seen in training, where Model A was trained for 126 epochs, while Model B was trained for 92 epochs. Additionally, two different ASI images may result in equal engineered features, while different features always correspond to different ASI images.
This means the space of possible features is smaller than the space of possible RGB images, so models using engineered features likely require less data and less training.

The main advantage of Methods B and C is the input of information that is known to be important for the task at hand. Cloud information is important for nowcasting, so presenting this information can simplify the task and improve performance. In addition, engineered features offer increased explainability, especially when the features are physically informed. By connecting DL features to physical variables of the system, their interpretation also becomes connected to a larger context. The feature importance shows that Model C utilizes the CS to a large degree, which can be interpreted as the model valuing information on how cloudy it is. Such an interpretation is not possible for Method A. The main disadvantage of Methods B and C is that a lot of weight is placed on these features being accurate and robust. Additionally, not all information that is known to be important, like the COT, has robust feature extraction methods available.

Between Method B and Method C, aggregating physical features to a time series yields a better performance, requires less storage for input data, and has lower training and inference times. Since the input of Method C is a less resolved version of the input of Method B, this indicates that the CNN in Method B does not yield features more valuable than manual aggregation and post-processing. Method C uses a very simple aggregation technique: the mean or the median, depending on the feature. Spatial information is intuitively valuable for nowcasting, but Model C outperforms Model B, showing that a more complex model, extraction, or aggregation scheme is required to leverage the spatial information of the physically engineered features of Method B.
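The aggregation step of Method C can be sketched as a reduction of each 2D feature map to one scalar per timestep. The sketch below assumes a mean for a binary cloud mask and a median for a CBH map; the text only states that the mean or median is used depending on the feature, so this assignment, the feature names, and the toy 2 × 2 maps are hypothetical.

```python
from statistics import mean, median


def aggregate_maps(frames, reducers):
    """Collapse per-timestep 2D feature maps into one time series per feature.

    frames:   list over time of {feature name: 2D list of pixel values}
    reducers: {feature name: function mapping a flat list of pixels to a scalar}
    """
    series = {name: [] for name in reducers}
    for frame in frames:
        for name, reduce_fn in reducers.items():
            pixels = [value for row in frame[name] for value in row]
            series[name].append(reduce_fn(pixels))
    return series


# Two hypothetical timesteps with 2x2 maps (not data from the paper).
frames = [
    {"cloud_mask": [[0, 1], [1, 1]], "cbh_m": [[1000.0, 1200.0], [1100.0, 5000.0]]},
    {"cloud_mask": [[0, 0], [0, 1]], "cbh_m": [[900.0, 1000.0], [1100.0, 1200.0]]},
]
# Assumed choice: mean of the mask gives a cloud fraction, median damps CBH outliers.
reducers = {"cloud_mask": mean, "cbh_m": median}

series = aggregate_maps(frames, reducers)
print(series)
```

The median is less sensitive than the mean to isolated outliers such as the 5000 m pixel in the first frame, which is one plausible reason to choose the reducer per feature.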
A challenge of the performance evaluations in this study, and of nowcasting comparative studies in general, is that the day-to-day differences in errors far outscale the differences between the different models. This creates difficulties in comparing the performance of the present models to other literature implementations with other datasets. It also raises the question of how to evaluate forecasts in a way that is useful for PV power plant operators. This is especially challenging in ASI nowcasting, as the high frequency and amount of data make it challenging to include multiple years of data in training and evaluation. With smaller datasets, there is a larger risk of excluding certain relevant atmospheric conditions at a site. Without ensuring evaluation under the same atmospheric conditions, comparisons are, at best, biased and, at worst, misleading. In such a comparison, differences in performance may be due to different atmospheric conditions and not model ability. To tackle this, benchmarking studies and applications on common public datasets are invaluable.

IV. CONCLUSION

In this work, three methods of leveraging ASI images for multi-horizon DL nowcasting were compared. A comparison was made between CNN feature extraction from RGB images (Method A), CNN feature extraction from engineered feature maps (Method B), and using engineered features in the form of time series (Method C). Each of the feature sets was used as input to LSTM layers predicting GHI 10 s to 15 minutes ahead. For the 7-day test dataset consisting of 33,902 timesteps with diverse atmospheric conditions, Method C yielded the highest overall performance. Method C achieved an average RMSE of 87.0 W/m², compared to 87.8 W/m² for Method A and 90.3 W/m² for Method B. Similar results were found for the SS, with some differences depending on the forecast horizon.
Analysis of feature importance for Method C showed increased reliance on engineered features with increasing forecast horizon. Based on this comparative evaluation, physically informed time series as extracted by Method C are recommended for computational parsimony and robust performance when applying LSTM models. Based on the analysis of feature importance, CS, azimuth, and GHI_clear should be provided as features. The exception to this recommendation is in locations with frequent overcast conditions of thin altostratus clouds. This is a limitation of the feature set, which may be amended by future work on robust COT feature extraction.

These results demonstrate the viability of alternative image processing methods for nowcasting, highlighted by the fact that integrating physically relevant time-series features extracted from ASI images outperformed DL feature extraction using a standard CNN architecture.

APPENDIX A: EXAMPLE OF CLOUD SEGMENTATION

Fig. 8 shows an example of a cloud segmentation, where the two upper plots show the image and segmentation, while the lower plot shows the pixel values in an RGB space.

Fig. 8. Example of cloud segmentation using RGB-space k-means clustering.

APPENDIX B: CORRELOGRAM ASI FEATURES

Fig. 9. A correlogram showing the absolute Pearson correlation between the current value of a feature and the future value of the GHI, at a time denoted on the x-axis. Calculated from the 120,339 samples in the training/validation data.

APPENDIX C: DL MODEL PARAMETERS FOR THE THREE METHODS OF THE COMPARISON

For the DL architectures that were applied, Table IV shows selected hyperparameters and architecture descriptors of the three methods. The lookback refers to the amount of historical data each method receives. For Methods A and B, this also includes previous RGB images or feature maps, respectively.
The horizon is the furthest prediction time of the 90 predictions given at 10 s intervals. Dropout refers to per-layer dropout in the CNN architecture, and units refers to the number of units per layer for the described layer type. Convolutional filters are listed in order from the start to the end of the CNN. Additionally, each convolutional layer is also followed directly by a 2 × 2 MaxPooling layer. For Methods A and B, in addition to the CNN layers described in Table IV, a trainable convolutional 1 × 1 layer was added at the head of the block, functioning as a pixelwise scaling layer.

TABLE IV. DL architecture parameters for the three methods.

| Method | A | B | C |
|---|---|---|---|
| Input features | RGB | Feature maps | Feature series |
| Parameters | 85,228 | 85,300 | 42,640 |
| Lookback | 150 s | 150 s | 150 s |
| Horizon | 15 min | 15 min | 15 min |
| Frequency | 10 s | 10 s | 10 s |
| Conv. layers | 6 | 6 | - |
| Conv. filters | 8,8,16,24,32,40 | 8,8,16,24,32,40 | - |
| Conv. kernel size | 3 × 3 | 3 × 3 | - |
| Pooling layers | 5 | 5 | - |
| Pooling size | 2 × 2 | 2 × 2 | - |
| Padding | valid | valid | - |
| LSTM layers | 2 | 2 | 2 |
| LSTM units | 25,25 | 25,25 | 25,25 |
| Dense layers | 1 | 1 | 1 |
| Dense units | 90 | 90 | 90 |
| Dropout | 0.05 | 0.05 | 0.0 |
| Loss | MAE | MAE | MAE |
| Learning rate | 1.5 × 10⁻⁵ | 1.5 × 10⁻⁵ | 2 × 10⁻⁴ |
| Batch size | 128 | 128 | 1024 |
| Epochs | 126 | 92 | 344 |

APPENDIX D: CBH ESTIMATION AND IMAGE SIZE

The stereoscopic algorithm was validated against a ceilometer by the original authors, but for smaller image sizes, such as the one used here, its validity is uncertain [15]. Fig. 10 shows the distribution of CBH estimates as a function of ASI image resolution. The estimates are relatively stable down to smaller image sizes, with a small shift towards lower cloud heights. This is likely due in part to the lower resolution decreasing the accuracy of the method, but also to a reduction in errors caused by saturated pixels being confused with the sun.
The image has a glare in the upper left corner, which causes the area around it to be misidentified as the highest CBH in high-resolution images. This effect is not present in lower-resolution images, due to the downsampling and lowpass filtering, resulting in a lower and more correct CBH estimate.

Fig. 10. Distribution and plots of CBH estimates in an image taken on 2023-06-15 13:32:40 CET, relating to image resolution. An area with an image glare is marked by the dashed yellow outline, showing how the misidentification of this area reduces with smaller image sizes.

ACKNOWLEDGMENT

The authors acknowledge funding from the Research Council of Norway through the projects KSP-K HYDROSUN (grant number 328640) and KSP-K REHSYS (grant number 344423). The skillful work of Sigurd Brattheim on instrument maintenance and assurance of data quality is gratefully acknowledged.

REFERENCES

[1] IEA, “Renewables 2024,” IEA, p. 16, 2024. [Online]. Available: https://www.iea.org/reports/renewables-2024
[2] C. Wan, J. Zhao, Y. Song, Z. Xu, J. Lin, and Z. Hu, “Photovoltaic and solar power forecasting for smart grid energy management,” CSEE Journal of Power and Energy Systems, vol. 1, no. 4, pp. 38–46, 2015.
[3] Ø. S. Klyve, M. M. Nygård, H. N. Riise, J. Fagerström, and E. S. Marstein, “The value of forecasts for pv power plants operating in the past, present and future scandinavian energy markets,” Solar Energy, vol. 255, pp. 208–221, 2023.
[4] O. Gandhi, W. Zhang, D. S. Kumar, C. D. Rodríguez-Gallegos, G. M. Yagli, D. Yang, T. Reindl, and D. Srinivasan, “The value of solar forecasts and the cost of their errors: A review,” Renewable and Sustainable Energy Reviews, vol. 189, p. 113915, 2024. [Online]. Available: https://www.sciencedirect.com/science/article/pii/S1364032123007736
[5] M. J. E. Alam and T. K. Saha, “Cycle-life degradation assessment of battery energy storage systems caused by solar pv variability,” in 2016 IEEE Power and Energy Society General Meeting (PESGM), 2016, pp. 1–5.
[6] M. J. E. Alam, K. M. Muttaqi, and D. Sutanto, “A novel approach for ramp-rate control of solar pv using energy storage to mitigate output fluctuations caused by cloud passing,” IEEE Transactions on Energy Conversion, vol. 29, no. 2, pp. 507–518, 2014.
[7] H. Ouabi, R. Lajouad, M. Kissaoui, and A. El Magri, “Hydrogen production by water electrolysis driven by a photovoltaic source: A review,” e-Prime - Advances in Electrical Engineering, Electronics and Energy, vol. 8, p. 100608, 2024. [Online]. Available: https://www.sciencedirect.com/science/article/pii/S2772671124001888
[8] Y. Chu, M. Li, C. F. Coimbra, D. Feng, and H. Wang, “Intra-hour irradiance forecasting techniques for solar power integration: A review,” iScience, vol. 24, no. 10, p. 103136, 2021. [Online]. Available: https://www.sciencedirect.com/science/article/pii/S2589004221011044
[9] Q. Paletta, G. Arbod, and J. Lasenby, “Benchmarking of deep learning irradiance forecasting models from sky images – an in-depth analysis,” Solar Energy, vol. 224, pp. 855–867, 2021. [Online]. Available: https://www.sciencedirect.com/science/article/pii/S0038092X21004266
[10] S.-A. Logothetis, V. Salamalikis, S. Wilbert, J. Remund, L. F. Zarzalejo, Y. Xie, B. Nouri, E. Ntavelis, J. Nou, N. Hendrikx, L. Visser, M. Sengupta, M. Pó, R. Chauvin, S. Grieu, N. Blum, W. van Sark, and A. Kazantzidis, “Benchmarking of solar irradiance nowcast performance derived from all-sky imagers,” Renewable Energy, vol. 199, pp. 246–261, 2022. [Online]. Available: https://www.sciencedirect.com/science/article/pii/S0960148122013027
[11] Q. Paletta, G. Terrén-Serrano, Y. Nie, B. Li, J. Bieker, W. Zhang, L. Dubus, S. Dev, and C. Feng, “Advances in solar forecasting: Computer vision with deep learning,” Advances in Applied Energy, vol. 11, p. 100150, 2023. [Online]. Available: https://www.sciencedirect.com/science/article/pii/S266679242300029X
[12] J. Jumper, R. Evans, A. Pritzel, T. Green, M. Figurnov, O. Ronneberger, K. Tunyasuvunakool, R. Bates, A. Žídek, A. Potapenko, A. Bridgland, C. Meyer, S. A. A. Kohl, A. J. Ballard, A. Cowie, B. Romera-Paredes, S. Nikolov, R. Jain, J. Adler, T. Back, S. Petersen, D. Reiman, E. Clancy, M. Zielinski, M. Steinegger, M. Pacholska, T. Berghammer, S. Bodenstein, D. Silver, O. Vinyals, A. W. Senior, K. Kavukcuoglu, P. Kohli, and D. Hassabis, “Highly accurate protein structure prediction with alphafold,” Nature, vol. 596, no. 7873, pp. 583–589, 2021. [Online]. Available: https://doi.org/10.1038/s41586-021-03819-2
[13] V. Sansine, P. Ortega, D. Hissel, and F. Ferrucci, “Hybrid deep learning model for mean hourly irradiance probabilistic forecasting,” Atmosphere, vol. 14, no. 7, 2023. [Online]. Available: https://www.mdpi.com/2073-4433/14/7/1192
[14] S. Xu, J. Liu, X. Huang, C. Li, Z. Chen, and Y. Tai, “Minutely multi-step irradiance forecasting based on all-sky images using lstm-informerstack hybrid model with dual feature enhancement,” Renewable Energy, vol. 224, p. 120135, 2024. [Online]. Available: https://www.sciencedirect.com/science/article/pii/S0960148124002003
[15] D. A. Nguyen and J. Kleissl, “Stereographic methods for cloud base height determination using two sky imagers,” Solar Energy, vol. 107, pp. 495–509, 2014. [Online]. Available: https://www.sciencedirect.com/science/article/pii/S0038092X14002333
[16] C. Duchon, “Lanczos filtering in one and two dimensions,” Journal of Applied Meteorology - J APPL METEOROL, vol. 18, pp. 1016–1022, 08 1979.
[17] A. Clark, “Pillow (pil fork) documentation,” 2015. [Online]. Available: https://buildmedia.readthedocs.org/media/pdf/pillow/latest/pillow.pdf
[18] Y. Sun, V. Venugopal, and A. R. Brandt, “Short-term solar power forecast with deep learning: Exploring optimal input and output configuration,” Solar Energy, vol. 188, pp. 730–741, 2019. [Online]. Available: https://www.sciencedirect.com/science/article/pii/S0038092X19306164
[19] K. Fukushima, “Visual feature extraction by a multilayered network of analog threshold elements,” IEEE Transactions on Systems Science and Cybernetics, vol. 5, no. 4, pp. 322–333, 1969.
[20] A. Krizhevsky, I. Sutskever, and G. Hinton, “Imagenet classification with deep convolutional neural networks,” vol. 25, 01 2012.
[21] S. Hochreiter and J. Schmidhuber, “Long short-term memory,” Neural Computation, vol. 9, pp. 1735–1780, 11 1997.
[22] K. Hornik, M. Stinchcombe, and H. White, “Multilayer feedforward networks are universal approximators,” Neural Networks, vol. 2, no. 5, pp. 359–366, 1989. [Online]. Available: https://www.sciencedirect.com/science/article/pii/0893608089900208
[23] M. Abadi, A. Agarwal, P. Barham, E. Brevdo, Z. Chen, C. Citro, G. S. Corrado, A. Davis, J. Dean, M. Devin, S. Ghemawat, I. Goodfellow, A. Harp, G. Irving, M. Isard, Y. Jia, R. Jozefowicz, L. Kaiser, M. Kudlur, J. Levenberg, D. Mané, R. Monga, S. Moore, D. Murray, C. Olah, M. Schuster, J. Shlens, B. Steiner, I. Sutskever, K. Talwar, P. Tucker, V. Vanhoucke, V. Vasudevan, F. Viégas, O. Vinyals, P. Warden, M. Wattenberg, M. Wicke, Y. Yu, and X. Zheng, “TensorFlow: Large-scale machine learning on heterogeneous systems,” 2015, software available from tensorflow.org. [Online]. Available: https://www.tensorflow.org/
[24] F. Chollet et al., “Keras,” https://keras.io, 2015.
[25] M. Hermans and B. Schrauwen, “Training and analysing deep recurrent neural networks,” in Advances in Neural Information Processing Systems, C. Burges, L. Bottou, M. Welling, Z. Ghahramani, and K. Weinberger, Eds., vol. 26. Curran Associates, Inc., 2013.
[26] J. Heaton, “An empirical analysis of feature engineering for predictive modeling,” in SoutheastCon 2016, 2016, pp. 1–6.
[27] T. Verdonck, B. Baesens, M. Óskarsdóttir, and S. vanden Broucke, “Special issue on feature engineering editorial,” Machine Learning, vol. 113, pp. 1–12, 08 2021.
[28] E. W. Eriksen, “Supplementary data to ASI image processing for solar forecasting,” 2025 [Technical documentation], available: 10.5281/zenodo.17858670. Accessed on: 8 December 2025.
[29] M. Hasenbalg, P. Kuhn, S. Wilbert, B. Nouri, and A. Kazantzidis, “Benchmarking of six cloud segmentation algorithms for ground-based all-sky imagers,” Solar Energy, vol. 201, pp. 596–614, 2020. [Online]. Available: https://www.sciencedirect.com/science/article/pii/S0038092X2030147X
[30] Q. Li, W. Lu, and J. Yang, “A hybrid thresholding algorithm for cloud detection on ground-based color images,” Journal of Atmospheric and Oceanic Technology, vol. 28, no. 10, pp. 1286–1296, 2011.
[31] R. C. Gonzalez and R. E. Woods, Digital Image Processing 3rd Edition. USA: Prentice-Hall, Inc., 2014. [Online]. Available: https://api.semanticscholar.org/CorpusID:43107812
[32] J. MacQueen, “Some methods for classification and analysis of multivariate observations,” in Proceedings of the Fifth Berkeley Symposium on Mathematical Statistics and Probability, Volume 1: Statistics, vol. 5. University of California Press, 1967, pp. 281–298.
[33] S. Dinc, R. Russell, and L. A. C. Parra, “Cloud region segmentation from all sky images using double k-means clustering,” in 2022 IEEE International Symposium on Multimedia (ISM), 2022, pp. 261–262.
[34] K. Pearson F.R.S., “LIII. On lines and planes of closest fit to systems of points in space,” The London, Edinburgh, and Dublin Philosophical Magazine and Journal of Science, vol. 2, no. 11, pp. 559–572, 1901.
[35] Itseez, “Open source computer vision library,” https://github.com/itseez/opencv, 2015.
[36] G. Farnebäck, “Two-frame motion estimation based on polynomial expansion,” vol. 2749, 06 2003, pp. 363–370.
[37] A. Zaher, S. Thil, J. Nou, A. Traoré, and S. Grieu, “Comparative study of algorithms for cloud motion estimation using sky-imaging data,” IFAC-PapersOnLine, vol. 50, no. 1, pp. 5934–5939, 2017, 20th IFAC World Congress. [Online]. Available: https://www.sciencedirect.com/science/article/pii/S2405896317320657
[38] B. A. Raut, P. Muradyan, R. Sankaran, R. C. Jackson, S. Park, S. A. Shahkarami, D. Dematties, Y. Kim, J. Swantek, N. Conrad, W. Gerlach, S. Shemyakin, P. Beckman, N. J. Ferrier, and S. M. Collis, “Optimizing cloud motion estimation on the edge with phase correlation and optical flow,” Atmospheric Measurement Techniques, vol. 16, no. 5, pp. 1195–1209, 2023. [Online]. Available: https://amt.copernicus.org/articles/16/1195/2023/
[39] R. Marquez and C. F. M. Coimbra, “Proposed Metric for Evaluation of Solar Forecasting Models,” Journal of Solar Energy Engineering, vol. 135, no. 1, p. 011016, 10 2012. [Online]. Available: https://doi.org/10.1115/1.4007496
[40] P. Goyal, P. Dollár, R. Girshick, P. Noordhuis, L. Wesolowski, A. Kyrola, A. Tulloch, Y. Jia, and K. He, “Accurate, large minibatch sgd: Training imagenet in 1 hour,” 06 2017.
[41] D. P. Kingma and J. Ba, “Adam: A method for stochastic optimization,” 2017.
[42] L. Breiman, “Random forests,” Machine Learning, vol. 45, pp. 23–25, 2001.
[43] WMO. (2017) Cloud identification guide, annex to the wmo technical regulations (volumes i to iii). [Online]. Available: https://cloudatlas.wmo.int/en/cloud-identification-guide.html
[44] W. M. Organization, International Cloud Atlas, ser. International Cloud Atlas. Secretariat of the World Meteorological Organization, 1975, no. v. 1. [Online]. Available: https://books.google.no/books?id=JMVWzgEACAAJ
[45] Q. Paletta, A. Hu, G. Arbod, and J. Lasenby, “Eclipse: Envisioning cloud induced perturbations in solar energy,” Applied Energy, vol. 326, p. 119924, 2022. [Online]. Available: https://www.sciencedirect.com/science/article/pii/S0306261922011813
