GazeOnce360: Fisheye-Based 360° Multi-Person Gaze Estimation with Global-Local Feature Fusion

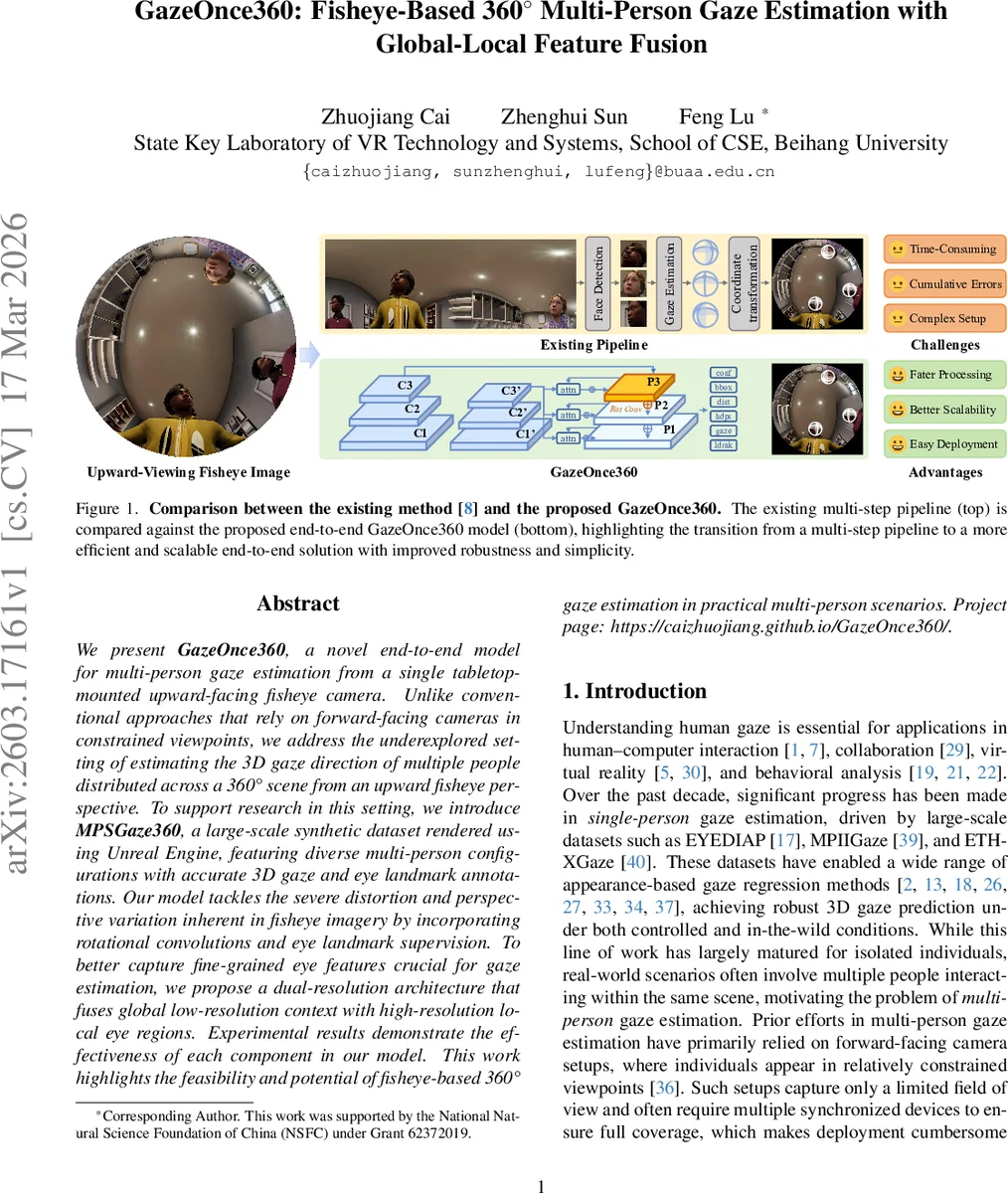

We present GazeOnce360, a novel end-to-end model for multi-person gaze estimation from a single tabletop-mounted upward-facing fisheye camera. Unlike conventional approaches that rely on forward-facing cameras in constrained viewpoints, we address the underexplored setting of estimating the 3D gaze direction of multiple people distributed across a 360° scene from an upward fisheye perspective. To support research in this setting, we introduce MPSGaze360, a large-scale synthetic dataset rendered using Unreal Engine, featuring diverse multi-person configurations with accurate 3D gaze and eye landmark annotations. Our model tackles the severe distortion and perspective variation inherent in fisheye imagery by incorporating rotational convolutions and eye landmark supervision. To better capture fine-grained eye features crucial for gaze estimation, we propose a dual-resolution architecture that fuses global low-resolution context with high-resolution local eye regions. Experimental results demonstrate the effectiveness of each component in our model. This work highlights the feasibility and potential of fisheye-based 360° gaze estimation in practical multi-person scenarios. Project page: https://caizhuojiang.github.io/GazeOnce360/.

💡 Research Summary

GazeOnce360 tackles the under‑explored problem of estimating 3D gaze directions for multiple people from a single upward‑facing fisheye camera mounted on a tabletop. Traditional multi‑person gaze methods rely on forward‑facing cameras, limited fields of view, and often multiple synchronized devices, which makes deployment cumbersome. By contrast, the proposed system captures the entire 360° surrounding scene with one fisheye lens, enabling compact, scalable sensing for everyday spaces such as collaborative workstations, reception counters, or service‑robot environments.

The paper introduces three technical contributions. First, it adopts rotational convolution, a variant of standard convolution that replicates the kernel at four orthogonal orientations (0°, 90°, 180°, 270°) and aggregates the responses. This operation endows the network with rotation‑equivariance, which is crucial for handling the severe radial distortion and extreme viewing angles inherent to fisheye imagery. Second, the model is trained with multi‑task supervision: in addition to 3D gaze vectors, it predicts face bounding boxes, 2D eye and face landmarks, head pose, and distance to camera. The landmark branch forces the network to learn fine‑grained ocular features, improving gaze regression accuracy. Third, a dual‑resolution architecture is designed. A low‑resolution global branch processes the full fisheye image to capture large‑scale context and to detect face boxes. For each detected face, a high‑resolution local branch extracts cropped facial patches, preserving fine eye details. Global and local feature maps are fused via a cross‑attention module, allowing the network to combine scene‑level cues (e.g., occlusions, lighting) with precise eye‑region information. During training, ground‑truth boxes are used for cropping; at inference time the model relies on its own detections, achieving a fully end‑to‑end pipeline without intermediate hand‑crafted steps.

To enable supervised learning, the authors create MPSGaze360, a large synthetic dataset rendered with Unreal Engine 5 and MetaHuman avatars. The pipeline randomly samples the number of subjects (1‑7), their spatial arrangement around the camera, head orientations (yaw ± 60°, pitch ± 35°, roll ± 5°), and local gaze angles (yaw/pitch ± 30°). It also varies lighting, skin tone, clothing, and hairstyles, producing 23,496 fisheye images with pixel‑accurate annotations: 3D gaze vectors (camera‑centric), 3D head poses, 2D eye/face landmarks, face bounding boxes, and distance. Each frame is rendered as five orthogonal perspective views and then re‑projected into a single 180° equidistant fisheye image, because UE5 lacks a native fisheye camera.

Extensive experiments validate each component. Ablation studies show that rotational convolution reduces mean angular error (MAE) by several degrees compared to standard convolutions, especially for subjects near the image periphery. Adding landmark supervision further improves MAE and landmark localization error. The dual‑resolution fusion yields a notable speed‑accuracy trade‑off: the global branch processes a down‑sampled image (e.g., 640×640), while the local branch works on cropped patches (e.g., 128×128), resulting in real‑time inference (>30 fps) on a single GPU. Compared against the prior multi‑stage pipeline GAM360, GazeOnce360 achieves lower MAE, higher detection recall, and eliminates cumulative errors caused by separate projection and detection stages.

Qualitative results on real fisheye captures (not seen during training) demonstrate good domain generalization: faces are correctly detected even when split across the fisheye boundary, and gaze vectors align with observed eye direction. The authors discuss limitations such as residual domain gap between synthetic and real data, and the reliance on accurate face detection for the local branch.

In summary, GazeOnce360 presents a practical, end‑to‑end solution for 360° multi‑person gaze estimation from upward‑facing fisheye cameras. By integrating rotation‑aware convolutions, multi‑task supervision, and a global‑local feature fusion architecture, it overcomes fisheye distortion, reduces redundant computation, and delivers accurate, real‑time gaze predictions. The synthetic MPSGaze360 dataset fills a critical gap in training data for this niche scenario. The work opens avenues for deploying gaze‑aware interfaces in crowded indoor environments, collaborative robotics, and smart spaces, and suggests future research directions such as domain adaptation, temporal gaze tracking, and multi‑camera fusion.

Comments & Academic Discussion

Loading comments...

Leave a Comment