From Non-Identifiability to Goal-Integrated Decision-Making in Parametric Inverse Optimization

Inverse optimization seeks to recover unknown objective parameters from observed decisions, yet fundamental questions about when recovery is possible have received limited formal treatment. This paper develops a comprehensive theoretical framework fo…

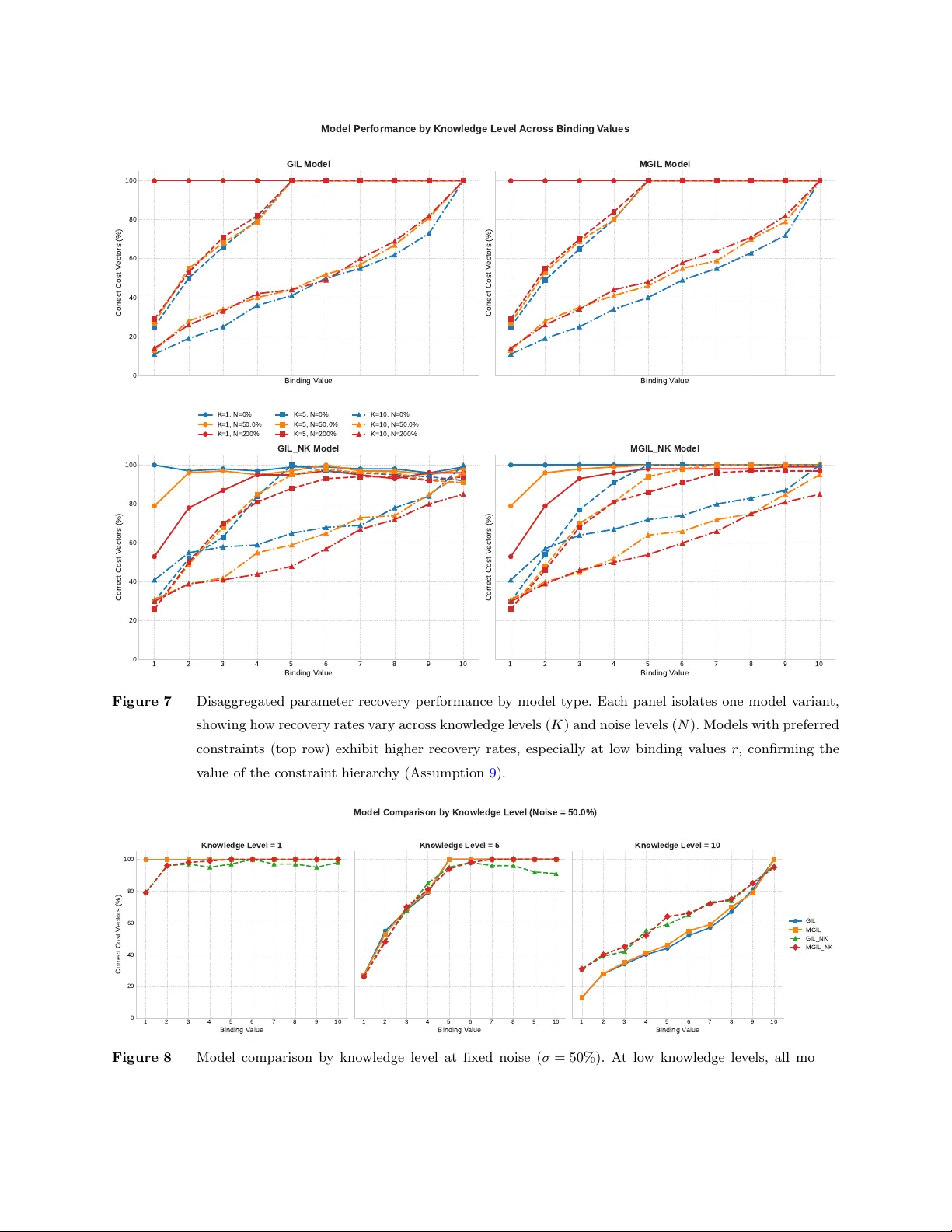

Authors: Farzin Ahmadi, Fardin Ganjkhanloo, Kimia Ghobadi