Conditional Distributional Treatment Effects: Doubly Robust Estimation and Testing

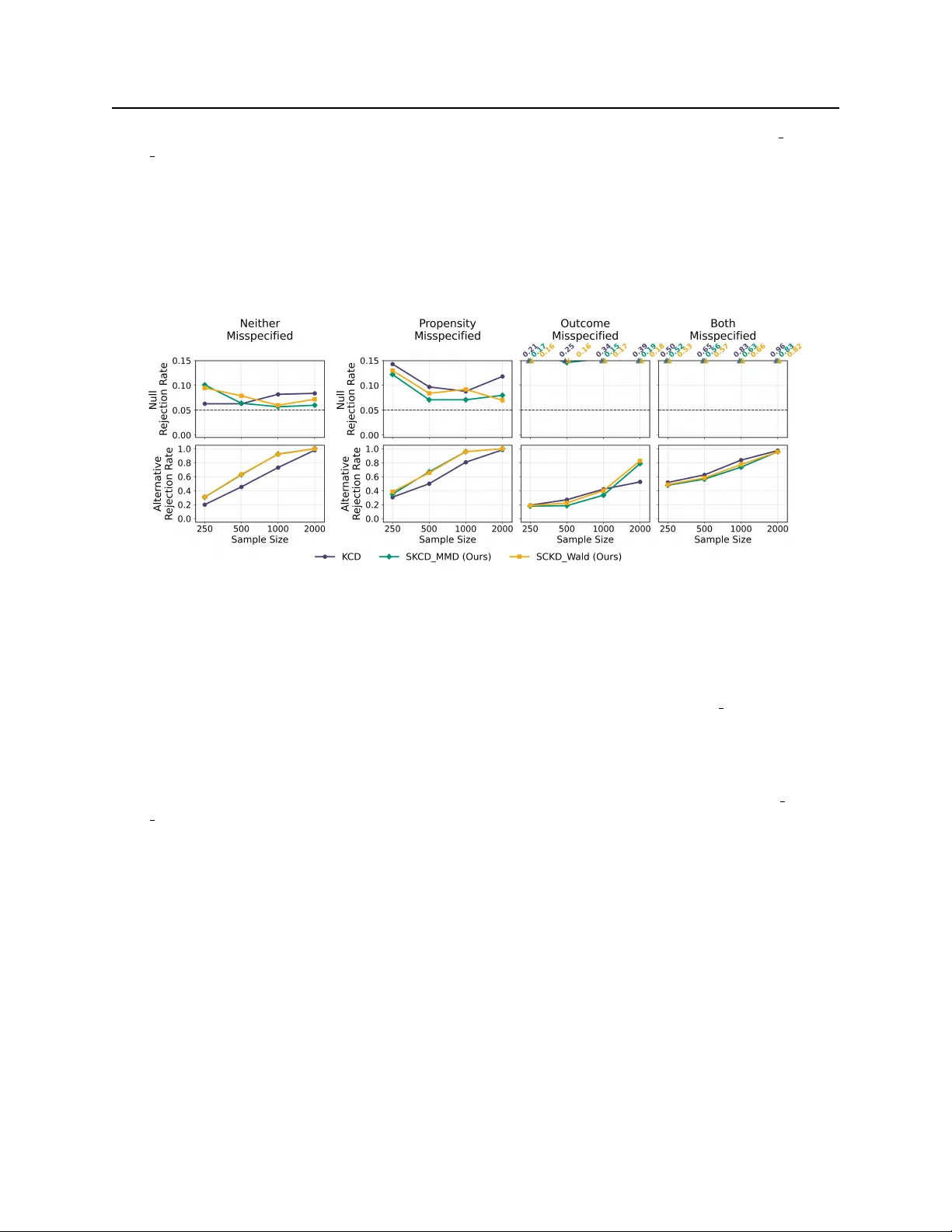

Beyond conditional average treatment effects, treatments may impact the entire outcome distribution in covariate-dependent ways, for example, by altering the variance or tail risks for specific subpopulations. We propose a novel estimand to capture s…

Authors: Saksham Jain, Alex Luedtke