Exponential stability of data-driven nonlinear MPC based on input/output models

We consider nonlinear model predictive control (MPC) schemes using surrogate models in the optimization step based on input-output data only. We establish exponential stability for sufficiently long prediction horizons assuming exponential stabilizab…

Authors: Lea Bold, Irene Schimperna, Karl Worthmann, Johannes Köhler

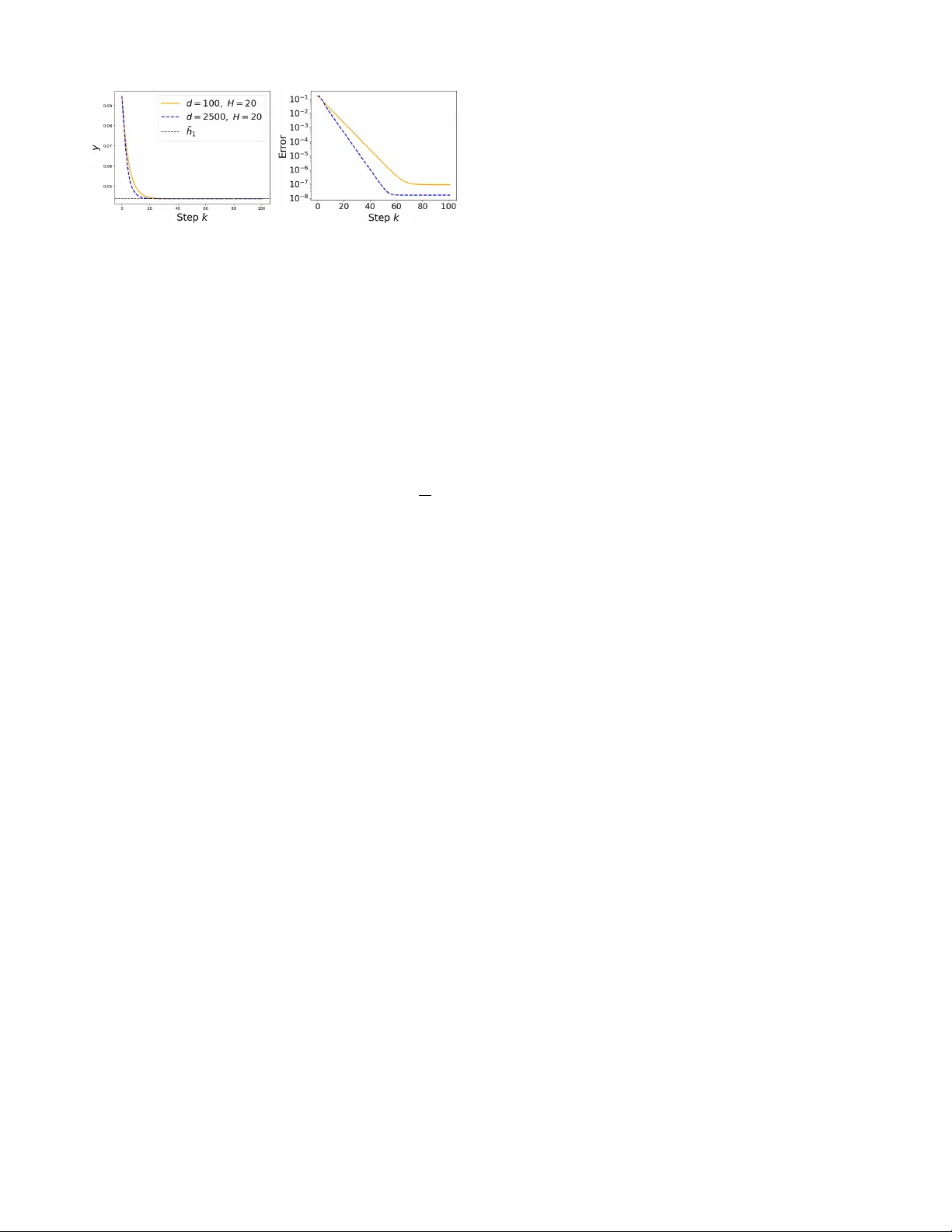

Lea Bold¹, Irene Schimperna², Karl Worthmann¹, Johannes Köhler³

Abstract: We consider nonlinear model predictive control (MPC) schemes using surrogate models in the optimization step based on input-output data only. We establish exponential stability for sufficiently long prediction horizons assuming exponential stabilizability and a proportional error bound. Moreover, we verify the imposed condition on the approximation using kernel interpolation and demonstrate the practical applicability to nonlinear systems with a numerical example.

I. INTRODUCTION

Model predictive control (MPC) is nowadays a well-established advanced control technique, where the input is determined by solving a finite-horizon constrained optimal control problem [1]. For the stability analysis, terminal conditions are often utilized [2]. Alternatively, stability can be established using a sufficiently long prediction horizon and some stabilizability conditions related to the stage cost [3]–[6]. These results rely on the use of a positive-definite stage cost penalizing the deviation of the state from the desired set point. However, in practical applications, output weighting is often preferred as it is closely related to the closed-loop performance. This is particularly relevant when MPC is based on input/output models. In this case, the resulting stage cost is only positive semi-definite in the system state, and the stability analysis requires more general tools, based on the condition that the stage cost is detectable [7], [8].

MPC relies on a reliable and accurate model, which explains the recent research interest in data-driven approaches [9]. To this end, a plethora of modeling techniques has been employed in MPC.
For linear systems, an effective method is based on Willems' fundamental lemma [10], which allows input/output data to be used directly to obtain the MPC predictions. For nonlinear systems, the explored approaches include Gaussian processes [11], Koopman operator theory [12], [13], neural networks [14], and many more. Despite the availability of many different modeling techniques, data-driven models come with model-plant mismatch, which may impact the stability properties of the MPC scheme. To fill this gap, recent works have derived conditions under which data-driven MPC preserves stability. Considering MPC without terminal conditions, [15] shows that asymptotic stability can be obtained in the presence of proportional bounds on the modeling error, and proposes a learning method based on Koopman operator theory to obtain surrogate models satisfying the required bounds. Similar results have been derived for MPC with terminal conditions in [16], considering models with parametric uncertainty. Finally, exponential stability of Koopman MPC with terminal conditions has been studied in [17], [18], where the latter also introduces a constraint-tightening approach to guarantee robust constraint satisfaction despite model-plant mismatch.

¹ L. Bold and K. Worthmann are with the Optimization-based Control Group, Institute of Mathematics, TU Ilmenau, Ilmenau, Germany. [lea.bold, karl.worthmann]@tu-ilmenau.de. L. Bold and K. Worthmann gratefully acknowledge funding by the German Research Foundation (DFG; project numbers 545246093 and 535860958).
² I. Schimperna is with the Department of Electrical, Computer and Biomedical Engineering, University of Pavia, Pavia, Italy. irene.schimperna01@universitadipavia.it
³ J. Köhler is with the Department of Mechanical Engineering, Imperial College London, London, UK. j.kohler@imperial.ac.uk
These existing stability results [15]–[18] rely on state-space models necessitating state measurements. However, in many practical applications, only the system outputs are measurable, and, thus, MPC is designed on the basis of input/output models. The goal of this paper is to extend the results on asymptotic stability of MPC in the presence of approximation errors to models in input/output form. In particular, we consider an MPC formulation without terminal conditions, in which stability is studied relying on a sufficiently long optimization horizon and a cost controllability property. In view of the model formulation, the MPC algorithm considers an output-weighting stage cost, and, thus, the proofs are based on a cost detectability property [8]. We show that exponential stability of the data-driven MPC closed loop can be achieved if the surrogate model satisfies proportional error bounds and if the system is exponentially stabilizable. Moreover, we show that kernel interpolation is a suitable learning technique to provide data-driven surrogate models satisfying the required properties. Finally, we demonstrate our findings in numerical simulations.

The paper is organized as follows. Section II introduces the considered control problem and the MPC algorithm. Stability properties of the closed-loop system are analyzed in Section III. In Section IV, we show how models satisfying the required conditions can be learned using kernel interpolation. Finally, numerical experiments are reported in Section V and conclusions are drawn in Section VI.

Notation: For a symmetric positive-definite matrix $M$, $\bar\lambda(M)$ and $\underline\lambda(M)$ denote its maximum and minimum eigenvalue, respectively. $I_n \in \mathbb{R}^{n\times n}$ denotes the identity matrix, $0_{n\times m} \in \mathbb{R}^{n\times m}$ denotes the zero matrix, and we omit dimensions when clear from the context. $\mathrm{diag}$ denotes block diagonal matrices, $\|\cdot\|$ the Euclidean norm on $\mathbb{R}^n$, while for a matrix $Q$ and a vector $x$, $\|x\|_Q^2 := x^\top Q x$.
Given two integers $a, b$ with $a < b$, the abbreviation $[a:b] := \mathbb{Z} \cap [a,b]$ is used. Moreover, we use the notation $x(b:a) := [x(b)^\top, x(b-1)^\top, \dots, x(a)^\top]^\top$. Given a set $S$, we denote by $S^N = S \times \dots \times S$ ($N$ times).

II. PROBLEM FORMULATION AND MPC ALGORITHM

The aim of the paper is to control a nonlinear system using a surrogate model inferred from input/output data. The considered system is described by

$$y(k+1) = F_y(x(k), u(k)), \qquad x(k) = [y(k:k-\nu+1)^\top, u(k-1:k-\nu+1)^\top]^\top, \tag{1}$$

where $y(k) \in \mathbb{R}^p$ is the system output and $u(k) \in \mathbb{U}$ is its control input. $\mathbb{U} \subset \mathbb{R}^m$ is a compact set containing the origin in its interior, and $x(k) \in \mathbb{R}^n$ with $n = \nu p + (\nu-1)m$ is the vector of past outputs and inputs. The parameter $\nu \in \mathbb{N}$ defines how many of the past observations are required to fully characterize the dynamics, and corresponds to the lag of the system. For the sake of simplicity, we assume that $x(k) \in \Omega \subseteq \mathbb{R}^n$, where $\Omega$ contains the origin in its interior and is a positively invariant set for the system (1) under inputs $u \in \mathbb{U}$.¹ We assume that $F_y : \mathbb{R}^n \times \mathbb{R}^m \to \mathbb{R}^p$ is Lipschitz continuous w.r.t. its first argument, i.e., there exists a Lipschitz constant $L_{F_y} > 0$ such that

$$\|F_y(x,u) - F_y(x',u)\| \le L_{F_y}\|x - x'\| \quad \forall u \in \mathbb{U}$$

for all $x, x' \in \Omega$. The system (1) can also be written in an equivalent state-space form as

$$x(k+1) = F_x(x(k), u(k)) \tag{2}$$

with

$$F_x(x,u) = \begin{bmatrix} F_y(x,u) \\ [I_{(\nu-1)p},\ 0_{(\nu-1)p \times (p+(\nu-1)m)}]\,x \\ u \\ [0_{(\nu-2)m \times \nu p},\ I_{(\nu-2)m},\ 0_{(\nu-2)m \times m}]\,x \end{bmatrix}, \tag{3}$$

where $F_x(x,u)$ has a Lipschitz constant $L_{F_x}$. We assume that the origin is a controlled equilibrium of system (2), i.e., $F_y(0,0) = 0$ and $F_x(0,0) = 0$.
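To make the shift structure of (3) concrete, the following is a minimal Python sketch of one state update $x^+ = F_x(x,u)$, assuming that outputs and inputs are stored as flat lists in the order of (1). The function name `narx_step` and the toy map `toy_f_y` are illustrative choices and do not appear in the paper.

```python
def narx_step(f_y, x, u, p, m, nu):
    """One step x+ = F_x(x, u) of the state-space form (2)-(3).

    x stacks [y(k), ..., y(k-nu+1), u(k-1), ..., u(k-nu+1)] as a flat list,
    so len(x) == nu*p + (nu-1)*m. f_y is any map (x, u) -> list of p outputs.
    """
    assert len(x) == nu * p + (nu - 1) * m, "state has wrong dimension"
    assert len(u) == m, "input has wrong dimension"
    y_next = list(f_y(x, u))                    # first block: F_y(x, u)
    kept_y = list(x[: (nu - 1) * p])            # y(k), ..., y(k-nu+2)
    kept_u = list(x[nu * p : nu * p + max(nu - 2, 0) * m])  # u(k-1), ..., u(k-nu+2)
    new_u = (list(u) + kept_u)[: (nu - 1) * m]  # empty when nu == 1
    return y_next + kept_y + new_u


# Hypothetical toy dynamics with p = m = 1 and lag nu = 3 (illustration only).
toy_f_y = lambda x, u: [0.5 * x[0] + u[0]]
x_now = [1.0, 2.0, 3.0, 10.0, 20.0]  # [y(k), y(k-1), y(k-2), u(k-1), u(k-2)]
x_next = narx_step(toy_f_y, x_now, [7.0], p=1, m=1, nu=3)
print(x_next)
```

Only the first $p$ components of the successor state depend on $F_y$; all remaining components are copies of entries of $x$ and $u$. This is why a model error in $F_y$ carries over to $F_x$ with the same norm.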
The MPC design is based on a data-driven surrogate model

$$y(k+1) \approx F_y^\varepsilon(x(k), u(k)) \tag{4}$$

with right-hand side approximation $F_y^\varepsilon$ of $F_y$, where the superscript $\varepsilon \in (0, \bar\varepsilon]$, $\bar\varepsilon > 0$, stands for the approximation accuracy and is used in the following to indicate a dependence on the surrogate dynamics. In Section IV we introduce kernel interpolation as a possible method to compute such a data-driven surrogate. The surrogate system also has an equivalent state dynamics given by $F_x^\varepsilon$, defined analogously to (3), which is also assumed to render the set $\Omega$ positively invariant to streamline the exposition.

The goal of the paper is to show that, in the presence of suitable bounds on the modeling error, MPC using the surrogate model (4) in the optimization step stabilizes the system under control (1). The MPC is designed considering the quadratic input/output stage cost $\ell : \mathbb{R}^p \times \mathbb{R}^m \to \mathbb{R}_{\ge 0}$ given by

$$\ell(y,u) = \|y\|_Q^2 + \|u\|_R^2, \tag{5}$$

where $Q = Q^\top \in \mathbb{R}^{p\times p}$ and $R = R^\top \in \mathbb{R}^{m\times m}$ are positive-definite weighting matrices. The MPC cost function $J_N^\varepsilon : \Omega \times \mathbb{U}^N \to \mathbb{R}_{\ge 0}$ is given by

\begin{align}
J_N^\varepsilon(\hat x, u) &= \sum_{k=0}^{N-1} \ell(y_u^\varepsilon(k+1;\hat x), u(k)) \tag{6a} \\
x_u^\varepsilon(0;\hat x) &= \hat x \tag{6b} \\
x_u^\varepsilon(k+1;\hat x) &= F_x^\varepsilon(x_u^\varepsilon(k;\hat x), u(k)), \quad k \in [0:N] \tag{6c} \\
y_u^\varepsilon(k;\hat x) &= [I_p,\ 0_{p\times(n-p)}]\, x_u^\varepsilon(k;\hat x), \quad k \in [0:N] \tag{6d}
\end{align}

Finally, the MPC algorithm is reported in Algorithm 1.

¹ The case in which the invariance condition does not hold is studied in [15], [18], in which a uniform error bound is also required to tighten the constraints and to characterize the set in which stability holds.
Algorithm 1: Data-driven nonlinear MPC
Input: Horizon $N \in \mathbb{N}$, surrogate $F_x^\varepsilon$, stage cost $\ell$, input constraints $\mathbb{U}$.
Initialization: Set $k = 0$ and initialize the state as $\hat x = [y(0:-\nu+1)^\top, u(-1:-\nu+1)^\top]^\top$.
(1) If $k > 0$, measure the output $y(k)$ and set $\hat x = x(k) = [y(k:k-\nu+1)^\top, u(k-1:k-\nu+1)^\top]^\top$.
(2) Solve the optimal control problem

$$V_N^\varepsilon(\hat x) := \min_{u} J_N^\varepsilon(\hat x, u) \quad \text{s.t. (6b)-(6d)}, \quad u(i) \in \mathbb{U} \text{ for } i \in [0:N-1], \quad u = \{u(i)\}_{i=0}^{N-1} \tag{OCP}$$

to obtain the optimal control sequence $\{u^\star(i)\}_{i=0}^{N-1}$.
(3) Apply the MPC feedback law $\mu_N^\varepsilon(\hat x) = u^\star(0)$ to the plant to generate the closed loop $y(k+1) = F_y(x(k), \mu_N^\varepsilon(x(k)))$, increment $k = k+1$, and go to Step (1).

For the closed-loop analysis, we also introduce the nominal cost function $J_N$ and the nominal (optimal) value function $V_N$, which are defined analogously to $J_N^\varepsilon$ and $V_N^\varepsilon$, but using the real system $F_x$ instead of the surrogate $F_x^\varepsilon$ in (6c).

III. STABILITY ANALYSIS

In order to prove exponential stability of the data-driven MPC closed-loop system, we require error bounds of the model and its smoothness properties, see, e.g., [19].

Assumption 1: For every $\varepsilon \in (0, \bar\varepsilon]$, let the surrogate model (4) satisfy
1) proportional error bounds

$$\|F_y(x,u) - F_y^\varepsilon(x,u)\| \le c_x^\varepsilon \|x\| + c_u^\varepsilon \|u\| \tag{7}$$

for all $x \in \Omega$, $u \in \mathbb{U}$ with parameters $c_x^\varepsilon$ and $c_u^\varepsilon$ satisfying $\lim_{\varepsilon \searrow 0} \max\{c_x^\varepsilon, c_u^\varepsilon\} = 0$;
2) uniform Lipschitz continuity in the first argument on $\Omega$, i.e., there exists $\bar L > 0$ such that, for every $\varepsilon \in (0, \bar\varepsilon]$, there is a Lipschitz constant $L_{F_y^\varepsilon}$ with $L_{F_y^\varepsilon} \le \bar L$ satisfying, for all $x, x' \in \Omega$, $u \in \mathbb{U}$,

$$\|F_y^\varepsilon(x,u) - F_y^\varepsilon(x',u)\| \le L_{F_y^\varepsilon} \|x - x'\|. \tag{8}$$

To derive the stability results, it is important to notice that the considered stage cost (5) only penalizes the system output and the input, and it is therefore only positive semi-definite in the state $x$.
Hence, the standard stability results for positive-definite cost functions (cf. [1], [4]) cannot be applied directly. Instead, the stability proof relies on cost detectability [3], [7]. In view of the NARX structure of the considered system (1) and model (4), the following result establishes this detectability.

Proposition 1 (Cost detectability, adapted from [7, Remark 9]): Consider the quadratic storage function $W(x) = \|x\|_P^2$, $P = \mathrm{diag}(Q, \dots, \tfrac{1}{\nu}Q, R, \dots, \tfrac{2}{\nu}R)$, satisfying

$$W(x(t)) = \sum_{k=0}^{\nu-1} \frac{\nu-k}{\nu}\|y(t-k)\|_Q^2 + \sum_{k=1}^{\nu-1} \frac{\nu-k+1}{\nu}\|u(t-k)\|_R^2. \tag{9}$$

For any $x \in \Omega$, $u \in \mathbb{U}$, $x^+ = F_x(x,u)$ and $y^+ = F_y(x,u)$, it holds that

$$W(x^+) \le \frac{\nu-1}{\nu} W(x) + \ell(y^+, u). \tag{10}$$

This result holds for any system in the NARX form of (1), including the surrogate models $F_y^\varepsilon$, $F_x^\varepsilon$. The storage function $W(x) = \|x\|_P^2$ with $P \succ 0$ ensures that minimizing the stage cost $\ell(y(k+1), u(k))$ also drives the state $x(k)$ to the origin.

Since the MPC algorithm does not include terminal conditions, the stability proof utilizes the following cost controllability condition adapted from [8, Assumption 2].

Assumption 2 (Cost controllability): The system (1) with stage cost (5) is cost controllable on the set $\Omega$, i.e., there exists a monotonically increasing and bounded sequence $(B_N)_{N \in \mathbb{N}_0}$ such that, for every $\hat x \in \Omega$ and every $N \in \mathbb{N}$, there exists a control sequence $u \in \mathbb{U}^N$ that satisfies the growth bound

$$V_N(\hat x) \le J_N(\hat x, u) \le B_N \|\hat x\|^2. \tag{11}$$

Cost controllability with a quadratic cost $\ell$ means that the system can be exponentially stabilized to the origin. Compared to the positive-definite-cost case [1], we cannot have only the stage cost $\ell$ on the right-hand side, see [8] for a discussion. In the following, we show that if the system under control is cost controllable, then the same property is preserved by the surrogate model, and vice versa.
This proposition follows the same lines of reasoning as [15, Proposition 1], but it is adapted to the positive semi-definite stage cost case and, thus, makes use of the cost detectability function $W$ defined in (9).

Proposition 2: Let Assumptions 1 and 2 hold. Then, for given $\bar N$, the growth bound (11) is satisfied for the surrogate model (4) on $\Omega$ for all $N \in [1:\bar N]$ uniformly in $\varepsilon$, i.e., there exists a monotonically increasing sequence $(B_N^\varepsilon)_{N \in [1:\bar N]}$ parametrized in $\varepsilon$ such that, for each pair $(\hat x, N) \in \Omega \times [1:\bar N]$, there exists $u \in \mathbb{U}^N$ satisfying

$$V_N^\varepsilon(\hat x) \le J_N^\varepsilon(\hat x, u) \le B_N^\varepsilon \|\hat x\|^2. \tag{12}$$

Moreover, $\lim_{\varepsilon \searrow 0} B_N^\varepsilon = B_N$ for all $N \in [1:\bar N]$. The statement also holds upon switching the roles of $F_y$ and $F_y^\varepsilon$, i.e., if the cost controllability condition (11) holds for the surrogate model $F_y^\varepsilon$, then the growth bound (12) holds for the original system dynamics $F_y$ for all $N \in [1:\bar N]$.

Proof: In the proof, we study the difference between $J_N^\varepsilon(\hat x, u)$ and $J_N(\hat x, u)$. For the sake of compactness, we omit the dependence of all the state and output sequences on the initial condition $\hat x$. Following similar steps to the proof of [15, Prop. 1], we have that

$$J_N^\varepsilon(\hat x, u) \le J_N(\hat x, u) + \bar\lambda(Q) \Big[ \sum_{k=0}^{N-1} e_y(k+1)^2 + 2 e_y(k+1)\|y_u(k+1)\| \Big],$$

where $e_y(k) := \|y_u^\varepsilon(k) - y_u(k)\|$. Next, we derive bounds on $e_y(k+1)^2$ and $e_y(k+1)\|y_u(k+1)\|$. Note that by definition $e_y(k) \le e_x(k) := \|x_u^\varepsilon(k) - x_u(k)\|$ and that $\|F_x(x,u) - F_x^\varepsilon(x,u)\| = \|F_y(x,u) - F_y^\varepsilon(x,u)\|$, since the two functions only differ in the first $p$ components. Analogously to [15], we have that

\begin{align}
e_y(k+1) \le e_x(k+1) &\le \bar c\,(\|x_u(k)\| + \|u(k)\|) + (L_{F_x} + c_x^\varepsilon)\, e_x(k) \tag{13} \\
&\le \bar c \sum_{i=0}^{k} (L_{F_x} + c_x^\varepsilon)^{k-i} (\|x_u(i)\| + \|u(i)\|) \tag{14}
\end{align}

with $\bar c := \max\{c_x^\varepsilon, c_u^\varepsilon\}$.
Let $d := L_{F_x} + c_x^\varepsilon$ and $\ell_y(i) := \ell(y_u(i+1), u(i))$. Using Inequality (13) and the fact that $(a+b)^2 \le 2a^2 + 2b^2$, we get

\begin{align}
e_y(k+1)^2 &\le 4\bar c^2 (\|x_u(k)\|^2 + \|u(k)\|^2) + 2d^2 e_x(k)^2 \\
&\le 4\bar c^2 (\|x_u(k)\|^2 + \underline\lambda(R)^{-1}\ell_y(k)) + 2d^2 e_x(k)^2 \\
&\le 4\bar c^2 \sum_{i=0}^{k} (2d^2)^{k-i} (\|x_u(i)\|^2 + \underline\lambda(R)^{-1}\ell_y(i)) \\
&= 4\bar c^2 \sum_{j=0}^{k} (2d^2)^{j} (\|x_u(k-j)\|^2 + \underline\lambda(R)^{-1}\ell_y(k-j)). \tag{15}
\end{align}

Analogously, leveraging Inequality (14) and the fact that $2\|a\|\|b\| \le \|a\|^2 + \|b\|^2$ yields

\begin{align}
e_y(k)\|y_u(k)\| &\le e_x(k)\|y_u(k)\| \le \bar c \sum_{i=0}^{k-1} d^{k-1-i}\, \overbrace{\big(\|x_u(i)\|\|y_u(k)\| + \|u(i)\|\|y_u(k)\|\big)}^{\le \frac{1}{2}(\|x_u(i)\|^2 + \|u(i)\|^2 + 2\|y_u(k)\|^2)} \\
&\le \frac{\bar c}{2} \sum_{i=0}^{k-1} d^{k-1-i} \big(\|x_u(i)\|^2 + \underline\lambda(R)^{-1}\ell_y(i) + 2\|y_u(k)\|^2\big) \\
&= \frac{\bar c}{2} \sum_{j=0}^{k-1} d^{j} \big(\|x_u(k-1-j)\|^2 + \underline\lambda(R)^{-1}\ell_y(k-1-j) + 2\|y_u(k)\|^2\big). \tag{16}
\end{align}

The terms in (15) and (16) are analogous to the ones obtained in the proof of [15, Prop. 1], except for the presence of the additional term $\|x_u(k-1-j)\|^2$, which cannot be included in $\ell_y$ in view of the positive semi-definiteness of the cost. Hence, we need an additional bound for this term, which can be obtained from the properties of the quadratic storage function introduced in Proposition 1. In particular, for each $k \in [1:N-1]$ we can apply (10) iteratively and use $\frac{\nu-1}{\nu} < 1$ together with the assumed cost controllability to obtain

$$\|x_u(k)\|_P^2 \le \frac{\nu-1}{\nu}\|x_u(k-1)\|_P^2 + \ell_y(k-1) \le \sum_{i=0}^{k-1} \ell_y(i) + \|\hat x\|_P^2 \le B_k\|\hat x\|^2 + \|\hat x\|_P^2,$$

which, in view of the positive definiteness of $P$, implies $\|x_u(k)\|^2 \le \underline\lambda(P)^{-1}(B_k + \bar\lambda(P))\|\hat x\|^2$.
This bound can be combined with the bounds in [15, Proposition 1] to obtain that

$$J_N^\varepsilon(\hat x, u) \le \big(B_N + 4\bar\lambda(Q)\bar c^2 c_N + 2\bar\lambda(Q)\bar c\,\bar c_N\big)\|\hat x\|^2 =: B_N^\varepsilon \|\hat x\|^2, \tag{17}$$

where $c_N$ is given by

$$c_N := \sum_{k=0}^{N-1}\sum_{j=0}^{k} (2d^2)^j\, \underline\lambda(P)^{-1}(B_{k-j} + \bar\lambda(P)) + \sum_{j=0}^{N-1} (2d^2)^j \frac{B_{N-j}}{\underline\lambda(R)}$$

and

$$\bar c_N := \frac{1}{2}\Bigg( \sum_{k=0}^{N-1}\sum_{j=0}^{k} d^j\, \underline\lambda(P)^{-1}(B_{k-j} + \bar\lambda(P)) + \sum_{j=0}^{N-1} \underline\lambda(R)^{-1} d^j B_{N-j} + \underline\lambda(Q)^{-1} \max_{k \in [0:N-1]} \sum_{j=0}^{k-1} d^j B_N \Bigg).$$

In (17), for each $N \in [1:\bar N]$, $B_N^\varepsilon \to B_N$ for $\bar c := \max\{c_x^\varepsilon, c_u^\varepsilon\} \searrow 0$ as claimed. The symmetry of the statement can be proved with the same arguments as in [15].

Since the considered stage cost is positive semi-definite, the stability proof needs to consider $Y_N^\varepsilon := V_N^\varepsilon + W$ as candidate Lyapunov function and cannot rely on the optimal value function $V_N^\varepsilon$ only [3]. The following theorem shows exponential stability of the MPC using the surrogate model in the optimization step.

Theorem 1 (Adapted from [8, Thm. 3]): Let Assumptions 1 and 2 hold. Suppose that $\varepsilon > 0$ is sufficiently small and the horizon $N$ is sufficiently large so that

$$N > \underline{N} = 1 + \frac{\log(\bar\gamma^\varepsilon) - \log(\tfrac{1}{\nu})}{\log(1 + \bar\gamma^\varepsilon) - \log(\bar\gamma^\varepsilon + \eta)} \tag{18}$$

with $V_N^\varepsilon(x) \le B_N^\varepsilon\|x\|^2 \le \bar\gamma^\varepsilon W(x)$, $\bar\gamma^\varepsilon = \max_N B_N^\varepsilon / \sigma_{\min}(P)$, $\eta = \frac{\nu-1}{\nu}$. Then, there exists $\alpha_N > 0$ such that for all $x \in \Omega$ and $u = \mu_N^\varepsilon(x)$

\begin{align}
W(x) \le Y_N^\varepsilon(x) &\le (\bar\gamma^\varepsilon + 1) W(x), \tag{19a} \\
Y_N^\varepsilon(F_x^\varepsilon(x,u)) &\le Y_N^\varepsilon(x) - \frac{\alpha_N}{\nu} W(x). \tag{19b}
\end{align}

Inequality (19) ensures exponential stability if the prediction model $F_x^\varepsilon$ in MPC coincides with the actual dynamics. In the following theorem we derive the main result of the paper, i.e., exponential stability of the data-driven MPC closed loop. To do so, we rely on the error bounds of Assumption 1 to show that $Y_N^\varepsilon$ is also a Lyapunov function for the system (2) under the control law $\mu_N^\varepsilon$, provided that $\varepsilon$ is sufficiently small.
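For orientation, the right-hand side of the horizon bound (18) is cheap to evaluate once $\bar\gamma^\varepsilon$ and $\nu$ are available. The following is a small sketch under that assumption; the function names and the sample values $\bar\gamma^\varepsilon = 10$, $\nu = 2$ are hypothetical and not taken from the paper.

```python
import math


def horizon_lower_bound(gamma_bar, nu):
    """Right-hand side of (18) for given gamma_bar > 0 and lag nu >= 1."""
    if gamma_bar <= 0 or nu < 1:
        raise ValueError("need gamma_bar > 0 and nu >= 1")
    eta = (nu - 1) / nu                        # decay factor of W in (10)
    num = math.log(gamma_bar) - math.log(1.0 / nu)
    den = math.log(1.0 + gamma_bar) - math.log(gamma_bar + eta)  # > 0 since eta < 1
    return 1.0 + num / den


def smallest_admissible_horizon(gamma_bar, nu):
    """Smallest integer N that is strictly larger than the bound in (18)."""
    return max(1, math.floor(horizon_lower_bound(gamma_bar, nu)) + 1)


# Illustrative values only; gamma_bar would come from B_N^eps and P in practice.
bound = horizon_lower_bound(gamma_bar=10.0, nu=2)
n_min = smallest_admissible_horizon(gamma_bar=10.0, nu=2)
print(bound, n_min)
```

The denominator shrinks as $\bar\gamma^\varepsilon$ grows, so a looser growth bound $B_N^\varepsilon$ translates into a considerably longer required horizon. Such bounds are typically conservative compared with the horizons that work in simulation.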
Theorem 2: Let the assumptions of Proposition 2 and of Theorem 1 hold. Then, there exists $\varepsilon_0 \in (0, \bar\varepsilon]$ such that the MPC controller of Algorithm 1 ensures exponential stability of the origin for all $x(0) \in \Omega$ and for $\varepsilon \in (0, \varepsilon_0)$.

Proof: In Theorem 1, we have shown the relaxed Lyapunov inequality

$$Y_N^\varepsilon(F_x^\varepsilon(\hat x, u)) - Y_N^\varepsilon(\hat x) \le -\frac{\alpha_N}{\nu} W(\hat x) \tag{20}$$

for the surrogate model $F_x^\varepsilon$ with $u = \mu_N^\varepsilon(\hat x)$. Considering the real system dynamics $F_x$, we have that

\begin{align}
Y_N^\varepsilon(F_x(\hat x, \mu_N^\varepsilon(\hat x))) \pm Y_N^\varepsilon(F_x^\varepsilon(\hat x, \mu_N^\varepsilon(\hat x))) \le{}& Y_N^\varepsilon(\hat x) - \tfrac{1}{\nu}\alpha_N W(\hat x) \\
&+ V_N^\varepsilon(F_x(\hat x, \mu_N^\varepsilon(\hat x))) - V_N^\varepsilon(F_x^\varepsilon(\hat x, \mu_N^\varepsilon(\hat x))) \tag{21} \\
&+ W(F_x(\hat x, \mu_N^\varepsilon(\hat x))) - W(F_x^\varepsilon(\hat x, \mu_N^\varepsilon(\hat x))). \tag{22}
\end{align}

Next, we exploit the proportional bounds of Assumption 1 to derive bounds for (21) and (22). To this end, we recall that $F_x^\varepsilon(\hat x, \mu_N^\varepsilon(\hat x)) = x_{u^\star}^\varepsilon(1;\hat x)$, where $u^\star$ is the optimal solution of (OCP) with initial state $\hat x$, and we denote the real successor state of the system by $x^+ := F_x(\hat x, \mu_N^\varepsilon(\hat x))$. The sequence $u^\sharp = (u^\sharp(i))_{i=0}^{N-1}$ represents the solution of the MPC optimization problem initialized with $\tilde x^+ := x_{u^\star}^\varepsilon(1;\hat x)$, which is never computed in practice but is needed to define $V_N^\varepsilon(F_x^\varepsilon(\hat x, \mu_N^\varepsilon(\hat x)))$. Moreover, we define $y_{u^\sharp}^\varepsilon(i;\tilde x^+) := [I_p,\ 0_{p\times(n-p)}]\, x_{u^\sharp}^\varepsilon(i;\tilde x^+)$ and $y_{u^\sharp}^\varepsilon(i;x^+) := [I_p,\ 0_{p\times(n-p)}]\, x_{u^\sharp}^\varepsilon(i;x^+)$ for all $i \in [0:N]$. Then, optimality of MPC yields

$$\underbrace{V_N^\varepsilon(F_x(\hat x, \mu_N^\varepsilon(\hat x)))}_{= V_N^\varepsilon(x^+) \le J_N^\varepsilon(x^+, u^\sharp)} - V_N^\varepsilon(F_x^\varepsilon(\hat x, \mu_N^\varepsilon(\hat x))) \le \sum_{i=0}^{N-1} \Big[ \ell(y_{u^\sharp}^\varepsilon(i+1;x^+), u^\sharp(i)) - \ell(y_{u^\sharp}^\varepsilon(i+1;\tilde x^+), u^\sharp(i)) \Big]. \tag{23}$$
Consider now the $i$-th term of this summation:

\begin{align}
&\ell(y_{u^\sharp}^\varepsilon(i+1;x^+), u^\sharp(i)) - \ell(y_{u^\sharp}^\varepsilon(i+1;\tilde x^+), u^\sharp(i)) = \|y_{u^\sharp}^\varepsilon(i+1;x^+)\|_Q^2 - \|y_{u^\sharp}^\varepsilon(i+1;\tilde x^+)\|_Q^2 \\
&\quad\le \|Q\|\,\|y_{u^\sharp}^\varepsilon(i+1;x^+) - y_{u^\sharp}^\varepsilon(i+1;\tilde x^+)\| \cdot \|y_{u^\sharp}^\varepsilon(i+1;x^+) + y_{u^\sharp}^\varepsilon(i+1;\tilde x^+) \mp y_{u^\sharp}^\varepsilon(i+1;\tilde x^+)\| \\
&\quad\le 2\|Q\|\,\|y_{u^\sharp}^\varepsilon(i+1;\tilde x^+)\|\,\|y_{u^\sharp}^\varepsilon(i+1;\tilde x^+) - y_{u^\sharp}^\varepsilon(i+1;x^+)\| + \|Q\|\,\|y_{u^\sharp}^\varepsilon(i+1;\tilde x^+) - y_{u^\sharp}^\varepsilon(i+1;x^+)\|^2, \tag{24}
\end{align}

where we have used $\|a\|_M^2 - \|b\|_M^2 = (a+b)^\top M (a-b) \le \|M\|\,\|a-b\|\,\|a+b\|$. In the following, we derive upper bounds for the terms $\|y_{u^\sharp}^\varepsilon(i+1;\tilde x^+) - y_{u^\sharp}^\varepsilon(i+1;x^+)\|$ and $\|y_{u^\sharp}^\varepsilon(i+1;\tilde x^+)\|$.

First, we consider the term $\|y_{u^\sharp}^\varepsilon(i+1;\tilde x^+) - y_{u^\sharp}^\varepsilon(i+1;x^+)\|$, and we leverage (7) and (8) of Assumption 1 to infer

\begin{align}
\|y_{u^\sharp}^\varepsilon(i+1;\tilde x^+) - y_{u^\sharp}^\varepsilon(i+1;x^+)\| &\le \|x_{u^\sharp}^\varepsilon(i+1;\tilde x^+) - x_{u^\sharp}^\varepsilon(i+1;x^+)\| \\
&\le L_{F_x^\varepsilon}^{i+1} \|x_{u^\sharp}^\varepsilon(0;\tilde x^+) - x_{u^\sharp}^\varepsilon(0;x^+)\| \\
&\le L_{F_x^\varepsilon}^{i+1} \big(c_x^\varepsilon\|\hat x\| + c_u^\varepsilon\|\mu_N^\varepsilon(\hat x)\|\big).
\end{align}

Second, we consider the term $\|y_{u^\sharp}^\varepsilon(i+1;\tilde x^+)\|$. From the growth bound of $F_x^\varepsilon$ derived in Proposition 2 and the relaxed Lyapunov inequality (20), we have that

$$V_N^\varepsilon(F_x^\varepsilon(\hat x, \mu_N^\varepsilon(\hat x))) = \sum_{i=0}^{N-1} \ell(y_{u^\sharp}^\varepsilon(i+1;\tilde x^+), u^\sharp(i)) \le V_N^\varepsilon(\hat x) \le B_N^\varepsilon\|\hat x\|^2.$$

Since we have the inequality $\ell(y_{u^\sharp}^\varepsilon(i+1;\tilde x^+), u^\sharp(i)) \ge \|y_{u^\sharp}^\varepsilon(i+1;\tilde x^+)\|_Q^2$ for all $i \in [0:N-1]$, we also have $\|y_{u^\sharp}^\varepsilon(i+1;\tilde x^+)\|_Q^2 \le B_N^\varepsilon\|\hat x\|^2$. By exploiting standard inequalities of weighted squared norms and taking the square root, we have $\|y_{u^\sharp}^\varepsilon(i+1;\tilde x^+)\| \le \tilde c\,\|\hat x\|$, where $\tilde c := \sqrt{B_N^\varepsilon / \underline\lambda(Q)}$.
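Then, substituting these inequalities in (24), we get

\begin{align}
&\ell(y_{u^\sharp}^\varepsilon(i+1;x^+), u^\sharp(i)) - \ell(y_{u^\sharp}^\varepsilon(i+1;\tilde x^+), u^\sharp(i)) \\
&\quad\le 2\|Q\|\,\tilde c\, L_{F_x^\varepsilon}^{i+1} \Big( c_x^\varepsilon\|\hat x\|^2 + \underbrace{c_u^\varepsilon\|\hat x\|\,\|\mu_N^\varepsilon(\hat x)\|}_{\le \frac{1}{2} c_u^\varepsilon(\|\hat x\|^2 + \|\mu_N^\varepsilon(\hat x)\|^2)} \Big) + 2\|Q\|\, L_{F_x^\varepsilon}^{2(i+1)} \Big( (c_x^\varepsilon)^2\|\hat x\|^2 + (c_u^\varepsilon)^2\|\mu_N^\varepsilon(\hat x)\|^2 \Big). \tag{25}
\end{align}

The terms in (22) can be studied with a similar reasoning by noting that

\begin{align}
&W(F_x(\hat x, \mu_N^\varepsilon(\hat x))) - W(F_x^\varepsilon(\hat x, \mu_N^\varepsilon(\hat x))) \\
&\quad= W\big([F_y(\hat x, \mu_N^\varepsilon(\hat x))^\top, ([I, 0]\hat x)^\top, \mu_N^\varepsilon(\hat x)^\top, ([0, I, 0]\hat x)^\top]^\top\big) - W\big([F_y^\varepsilon(\hat x, \mu_N^\varepsilon(\hat x))^\top, ([I, 0]\hat x)^\top, \mu_N^\varepsilon(\hat x)^\top, ([0, I, 0]\hat x)^\top]^\top\big) \\
&\quad= \|F_y(\hat x, \mu_N^\varepsilon(\hat x))\|_Q^2 - \|F_y^\varepsilon(\hat x, \mu_N^\varepsilon(\hat x))\|_Q^2 = \|y_{u^\sharp}^\varepsilon(0;x^+)\|_Q^2 - \|y_{u^\sharp}^\varepsilon(0;\tilde x^+)\|_Q^2 \\
&\quad\le 2\|Q\|\,\tilde c\,\big(c_x^\varepsilon\|\hat x\|^2 + \tfrac{1}{2} c_u^\varepsilon(\|\hat x\|^2 + \|\mu_N^\varepsilon(\hat x)\|^2)\big) + 2\|Q\|\big((c_x^\varepsilon)^2\|\hat x\|^2 + (c_u^\varepsilon)^2\|\mu_N^\varepsilon(\hat x)\|^2\big),
\end{align}

where the last bound is obtained by applying the same reasoning we used for (24) with $i + 1 = 0$. Substituting this back in (21), (22) and (23), we obtain

$$Y_N^\varepsilon(F_x(\hat x, \mu_N^\varepsilon(\hat x))) \le Y_N^\varepsilon(\hat x) - \tfrac{1}{\nu}\alpha_N W(\hat x) + C_x\|\hat x\|^2 + C_u\|\mu_N^\varepsilon(\hat x)\|^2,$$

where

$$C_x := 2\|Q\| \sum_{i=0}^{N} \Big[ \tilde c\, L_{F_x^\varepsilon}^{i} \big(c_x^\varepsilon + \tfrac{1}{2} c_u^\varepsilon\big) + \big(L_{F_x^\varepsilon}^{i} c_x^\varepsilon\big)^2 \Big], \qquad C_u := 2\|Q\| \sum_{i=0}^{N} \Big[ \tilde c\, L_{F_x^\varepsilon}^{i}\, \tfrac{1}{2} c_u^\varepsilon + \big(L_{F_x^\varepsilon}^{i} c_u^\varepsilon\big)^2 \Big].$$

The values of $C_x$ and $C_u$ can be made arbitrarily small with sufficiently small proportionality constants $c_x^\varepsilon$ and $c_u^\varepsilon$. Moreover, in view of the results of Proposition 2, we have that $\|\mu_N^\varepsilon(\hat x)\|_R^2 \le V_N^\varepsilon(\hat x) \le B_N^\varepsilon\|\hat x\|^2$, which implies that $\|\mu_N^\varepsilon(\hat x)\| \le \sqrt{B_N^\varepsilon / \underline\lambda(R)}\,\|\hat x\|$. Then, there exists a sufficiently small $\varepsilon_0$ such that $c_x^\varepsilon$, $c_u^\varepsilon$ are sufficiently small to ensure the inequality

$$-\tfrac{1}{\nu}\alpha_N W(\hat x) + C_x\|\hat x\|^2 + C_u\|\mu_N^\varepsilon(\hat x)\|^2 < -\bar\alpha\|\hat x\|^2$$

for $\bar\alpha \in (0, \tfrac{1}{\nu}\alpha_N)$ and for all $\varepsilon \in (0, \varepsilon_0)$.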
This completes the proof and, thus, shows exponential stability of the MPC closed loop based on the surrogate model (4).

IV. KERNEL INTERPOLATION: DATA-DRIVEN MODELS

In the following, we show how we can learn a function $F_y^\varepsilon$ satisfying Assumption 1 from input-output data using kernel interpolation [20]. Specifically, we have a data set $\mathcal{X}$ consisting of $\xi_i = (x_i, u_i) \in \Omega \times \mathbb{U} =: \Omega_\xi \subseteq \mathbb{R}^{n+m}$ with $y_i = F_y(\xi_i)$, $i \in [1:D]$. Since we can identify each component independently, we focus on estimating $F_y^\varepsilon$ for a scalar output ($p = 1$) to simplify the exposition. We denote the fill distance of this data by

$$h_{\mathcal{X}} := \sup_{(x,u) \in \Omega \times \mathbb{U}}\ \min_{(x_i,u_i) \in \mathcal{X}} \|(x,u) - (x_i,u_i)\|.$$

Suppose that this data set contains the origin, i.e., $0 \in \mathcal{X}$. Let $k : \Omega_\xi \times \Omega_\xi \to \mathbb{R}_{\ge 0}$ be a symmetric, strictly positive definite kernel with corresponding reproducing kernel Hilbert space (RKHS) denoted by $\mathcal{H}$. Furthermore, suppose that $F_y \in \mathcal{H}$. Kernel interpolation yields the unique function that interpolates the data with the minimal RKHS norm, which is given by

$$F_y^\varepsilon(\xi) = k_{\mathcal{X}}(\xi)^\top K_{\mathcal{X}}^{-1} Y, \tag{26}$$

with $k_{\mathcal{X}} : \Omega_\xi \to \mathbb{R}^D$, $k_{\mathcal{X}}(\xi) = (k(\xi, \xi_i))_{i=1}^{D}$, kernel matrix $K_{\mathcal{X}} = (k(\xi_i, \xi_j))_{i,j=1}^{D} \in \mathbb{R}^{D\times D}$, and $Y = (y_i)_{i=1}^{D} \in \mathbb{R}^D$. Kernel interpolation enjoys the following error bound (cf. [21, Sec. 14.1])

$$|F_y^\varepsilon(\xi) - F_y(\xi)| \le P(\xi)\,\|F_y\|_{\mathcal{H}} \quad \forall \xi \in \Omega_\xi \tag{27}$$

with the power function $P : \Omega_\xi \to \mathbb{R}_{\ge 0}$,

$$P(\xi) = \sqrt{k(\xi,\xi) - k_{\mathcal{X}}(\xi)^\top K_{\mathcal{X}}^{-1} k_{\mathcal{X}}(\xi)}.$$

Suppose the RKHS is norm-equivalent to the Sobolev space of order $s$, e.g., by choosing a corresponding Matérn or Wendland kernel $k$ of sufficient smoothness. Then, according to [22, Thm. 5.4], the power function satisfies

$$P(\xi) \le C\, h_{\mathcal{X}}^{\,s - (n+m)/2 - 1}\, \|\xi\|$$

for some constant $C > 0$, where we use the fact that $0 \in \mathcal{X}$, i.e., the origin is contained in the data set.
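The interpolant (26) and the power function can be sketched in a few lines of plain Python. The sketch below is illustrative only: it assumes a scalar toy target with value zero at the origin, eleven equispaced one-dimensional data sites that include the origin, and a compactly supported Wendland-type radial kernel. The names `fit`, `solve`, `toy_target` and the data are hypothetical, and the dense Gaussian elimination merely stands in for a proper linear-algebra routine.

```python
import math


def wendland(r):
    """Compactly supported radial function (1 - r)^5 (5 r + 1) / 30 on [0, 1]."""
    return (1.0 - r) ** 5 * (5.0 * r + 1.0) / 30.0 if 0.0 <= r <= 1.0 else 0.0


def kernel(a, b):
    return wendland(math.dist(a, b))


def solve(A, b):
    """Solve A z = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for c in range(n):
        piv = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[piv] = M[piv], M[c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for j in range(c, n + 1):
                M[r][j] -= f * M[c][j]
    z = [0.0] * n
    for i in reversed(range(n)):
        z[i] = (M[i][n] - sum(M[i][j] * z[j] for j in range(i + 1, n))) / M[i][i]
    return z


def fit(points, values):
    """Return the interpolant (26) and the power function for the given data."""
    K = [[kernel(a, b) for b in points] for a in points]
    coeffs = solve(K, values)                      # K_X^{-1} Y

    def surrogate(xi):
        return sum(c * kernel(xi, p) for c, p in zip(coeffs, points))

    def power(xi):
        kx = [kernel(xi, p) for p in points]
        w = solve(K, kx)                           # K_X^{-1} k_X(xi)
        val = kernel(xi, xi) - sum(a * b for a, b in zip(kx, w))
        return math.sqrt(max(val, 0.0))            # clamp rounding noise

    return surrogate, power


def toy_target(xi):
    return xi[0] / (1.0 + xi[0] ** 2)              # hypothetical F_y with F_y(0) = 0


points = [((i - 5) / 10.0,) for i in range(11)]    # includes the origin
values = [toy_target(p) for p in points]
surrogate, power = fit(points, values)
print(surrogate((0.05,)), toy_target((0.05,)), power((0.05,)))
```

Because the origin is a data site with value zero, the interpolant vanishes there and the power function is zero at that point up to rounding. This is the mechanism behind the factor $\|\xi\|$ in the bound on $P(\xi)$.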
By setting $s > 1 + (n+m)/2$, we satisfy the proportional error bounds with $\varepsilon$ given by the fill distance $h_{\mathcal{X}}$. Furthermore, if the kernel $k$ is twice continuously differentiable with a bounded Hessian, then both functions $F_y^\varepsilon, F_y \in \mathcal{H}$ are also Lipschitz continuous [23].

In conclusion, Assumption 1 holds by using kernel interpolation if: (i) the data has a small enough fill distance $h_{\mathcal{X}} \to 0$, (ii) the equilibrium at the origin is contained in the data set, and (iii) the unknown function $F_y$ lies in the RKHS $\mathcal{H}$ with a suitably chosen kernel $k$.

V. NUMERICAL EXAMPLE

The exponential stability of the data-driven MPC is illustrated in a two-tank example [24] described by

\begin{align}
\dot h_1 &= c_{1,2}\sqrt{h_2 - h_1} - c_2\sqrt{h_1}, \\
\dot h_2 &= \frac{1}{A_1} u - c_{1,2}\sqrt{h_2 - h_1}, \tag{28}
\end{align}

with constants $A_1 = 0.001$, $c_{1,2} = 0.0254$, $c_2 = 0.0261$. The system (28) is integrated using the classical fourth-order Runge–Kutta method (RK4) with a sampling time $\Delta t = 10\,$s. The output of the system is given by $y = h_1$, and the control objective is to steer the system to the equilibrium point $\bar h = (0.0438, 0.09)^\top$, $\bar u = 5.461 \cdot 10^{-6}$, while respecting the input constraint $u \in \mathbb{U} := [3.16 \cdot 10^{-6}, 4.76 \cdot 10^{-5}]$.

Fig. 1. Trajectories $y(k)$ (left) and state errors $\|x(k) - \bar x\|$, $\bar x = [\bar h_1, \bar h_1, \bar u]^\top$, (right) of the data-driven MPC closed loop for $k \in [0:100]$, where the models are obtained with $D \in \{101, 2501\}$ data points.

The MPC algorithm is based on a surrogate model obtained via kernel interpolation, using the Wendland kernel function $k(x, x') = \phi(\|x - x'\|)$, with $\phi(r) := \frac{1}{30}(1-r)^5(5r+1)$ for $r \in [0,1]$ and $0$ otherwise. The lag of the system is $\nu = 2$, leading to a model with a state $x \in \Omega \subseteq \mathbb{R}^3$. We consider the domain $\Omega = [0, 0.5]^2 \times \mathbb{U}$. To ensure proportional error bounds, the first data point is in the reference equilibrium, i.e., $x_1 = [\bar h_1, \bar h_1, \bar u]$, $u_1 = \bar u$ and $y_1 = \bar h_1$.
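As a quick plausibility check of the plant model, the sketch below integrates (28) with classical RK4 steps of $10\,$s, starting at the stated equilibrium with the input held at $\bar u$. It assumes that the $c_2$ term in (28) acts as an outflow (negative sign), which is the reading under which the stated $\bar h$ and $\bar u$ are an equilibrium up to rounding. The function names are illustrative, and the `max(..., 0.0)` guards are an added safeguard that is not part of the model.

```python
import math

A1, C12, C2 = 0.001, 0.0254, 0.0261   # constants stated in the text
DT = 10.0                              # sampling time in seconds


def tank_rhs(h, u):
    """Right-hand side of (28); the c2 term is taken as an outflow (assumption)."""
    h1, h2 = h
    flow = C12 * math.sqrt(max(h2 - h1, 0.0))   # guard against negative arguments
    return (flow - C2 * math.sqrt(max(h1, 0.0)), u / A1 - flow)


def rk4_step(h, u, dt=DT):
    """One classical RK4 step with the input held constant over the interval."""
    def shifted(k, s):
        return (h[0] + s * k[0], h[1] + s * k[1])

    k1 = tank_rhs(h, u)
    k2 = tank_rhs(shifted(k1, dt / 2), u)
    k3 = tank_rhs(shifted(k2, dt / 2), u)
    k4 = tank_rhs(shifted(k3, dt), u)
    return tuple(h[i] + dt / 6 * (k1[i] + 2 * k2[i] + 2 * k3[i] + k4[i])
                 for i in range(2))


h_bar, u_bar = (0.0438, 0.09), 5.461e-6
h = h_bar
for _ in range(50):                    # 50 sampling intervals at constant input
    h = rk4_step(h, u_bar)
drift = max(abs(h[0] - h_bar[0]), abs(h[1] - h_bar[1]))
print(drift)
```

A small remaining drift is expected because the constants and equilibrium values in the text are rounded.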
For the simulations, we consider datasets with $D \in \{101, 2501\}$ data points. In order to obtain models with an equilibrium point in the origin, the input and output data are shifted with respect to their reference and rescaled before the model identification. For MPC, we consider a prediction horizon $N = 20$ as well as weights $Q = 1$ and $R = 10^{-1}$. The simulation results are reported in Fig. 1, which shows that, in the presence of enough data, the modeling errors are sufficiently small to ensure exponential stability of the data-driven MPC closed loop, with a faster error decrease rate and a higher precision for the model identified using more data.

VI. CONCLUSIONS

In this paper, we have provided sufficient conditions such that data-driven MPC without terminal conditions ensures exponential stability for nonlinear input-output systems. The key requirement is the presence of a proportional error bound, which can be achieved using kernel interpolation. An interesting open research direction is the inclusion of general noise in this framework using Gaussian process models [11].

REFERENCES

[1] L. Grüne and J. Pannek, Nonlinear model predictive control. Springer, 2017.
[2] J. B. Rawlings, D. Q. Mayne, and M. Diehl, Model Predictive Control: Theory, Computation, and Design, vol. 2. Nob Hill Publishing Madison, WI, 2017.
[3] G. Grimm, M. J. Messina, S. E. Tuna, and A. R. Teel, “Model predictive control: for want of a local control Lyapunov function, all is not lost,” IEEE Transactions on Automatic Control, vol. 50, no. 5, pp. 546–558, 2005.
[4] L. Grüne, J. Pannek, M. Seehafer, and K. Worthmann, “Analysis of unconstrained nonlinear MPC schemes with time varying control horizon,” SIAM Journal on Control and Optimization, vol. 48, no. 8, pp. 4938–4962, 2010.
[5] J.-M. Coron, L. Grüne, and K. Worthmann, “Model predictive control, cost controllability, and homogeneity,” SIAM Journal on Control and Optimization, vol. 58, no. 5, pp. 2979–2996, 2020.
[6] M. Granzotto, R. Postoyan, L. Buşoniu, D. Nešić, and J. Daafouz, “Finite-horizon discounted optimal control: stability and performance,” IEEE Transactions on Automatic Control, vol. 66, no. 2, pp. 550–565, 2020.
[7] J. Köhler, M. A. Müller, and F. Allgöwer, “Constrained nonlinear output regulation using model predictive control,” IEEE Transactions on Automatic Control, vol. 67, no. 5, pp. 2419–2434, 2021.
[8] J. Köhler, M. N. Zeilinger, and L. Grüne, “Stability and performance analysis of NMPC: Detectable stage costs and general terminal costs,” IEEE Transactions on Automatic Control, vol. 68, no. 10, pp. 6114–6129, 2023.
[9] J. Berberich and F. Allgöwer, “An overview of systems-theoretic guarantees in data-driven model predictive control,” Annual Review of Control, Robotics, and Autonomous Systems, vol. 8, no. 1, pp. 77–100, 2025.
[10] J. Coulson, J. Lygeros, and F. Dörfler, “Distributionally robust chance constrained data-enabled predictive control,” IEEE Transactions on Automatic Control, vol. 67, no. 7, pp. 3289–3304, 2021.
[11] A. Scampicchio, E. Arcari, A. Lahr, and M. N. Zeilinger, “Gaussian processes for dynamics learning in model predictive control,” Annual Reviews in Control, vol. 60:101034, 2025.
[12] M. Korda and I. Mezić, “Linear predictors for nonlinear dynamical systems: Koopman operator meets model predictive control,” Automatica, vol. 93, pp. 149–160, 2018.
[13] R. Strässer, K. Worthmann, I. Mezić, J. Berberich, M. Schaller, and F. Allgöwer, “An overview of Koopman-based control: From error bounds to closed-loop guarantees,” Annual Reviews in Control, vol. 61:101035, 2026.
[14] T. Salzmann, E. Kaufmann, J. Arrizabalaga, M. Pavone, D. Scaramuzza, and M. Ryll, “Real-time neural MPC: Deep learning model predictive control for quadrotors and agile robotic platforms,” IEEE Robotics and Automation Letters, vol. 8, no. 4, pp. 2397–2404, 2023.
[15] I. Schimperna, K. Worthmann, M. Schaller, L. Bold, and L. Magni, “Data-driven model predictive control: Asymptotic stability despite approximation errors exemplified in the Koopman framework,” arXiv preprint arXiv:2505.05951, 2025.
[16] S. J. Kuntz and J. B. Rawlings, “Beyond inherent robustness: strong stability of MPC despite plant-model mismatch,” IEEE Transactions on Automatic Control, 2025.
[17] X. Shang, J. Cortés, and Y. Zheng, “On the exponential stability of Koopman model predictive control,” in Proceedings of Machine Learning Research of the Learning for Dynamics and Control Conference, Los Angeles, California, 2026.
[18] I. Schimperna, L. Bold, J. Köhler, K. Worthmann, and L. Magni, “Stability of data-driven Koopman MPC with terminal conditions,” in 24th IEEE European Control Conference, 2026. Accepted for publication. ArXiv preprint
[19] R. Strässer, M. Schaller, K. Worthmann, J. Berberich, and F. Allgöwer, “SafEDMD: A Koopman-based data-driven controller design framework for nonlinear dynamical systems,” Automatica, vol. 185:112732, 2026.
[20] H. Wendland, Scattered data approximation, vol. 17. Cambridge University Press, 2004.
[21] G. E. Fasshauer and M. J. McCourt, Kernel-based approximation methods using Matlab, vol. 19. World Scientific Publishing Company, 2015.
[22] M. Kanagawa, P. Hennig, D. Sejdinovic, and B. K. Sriperumbudur, “Gaussian processes and kernel methods: A review on connections and equivalences,” arXiv preprint arXiv:1807.02582, 2018.
[23] C. Fiedler, “Lipschitz and Hölder continuity in reproducing kernel Hilbert spaces,” arXiv preprint, 2023.
[24] P. Bisták and M. Huba, “Model reference control of a two tank system,” in Proc. 18th IEEE International Conference on System Theory, Control and Computing (ICSTCC), pp. 952–957, 2014.
