pADAM: A Plug-and-Play All-in-One Diffusion Architecture for Multi-Physics Learning

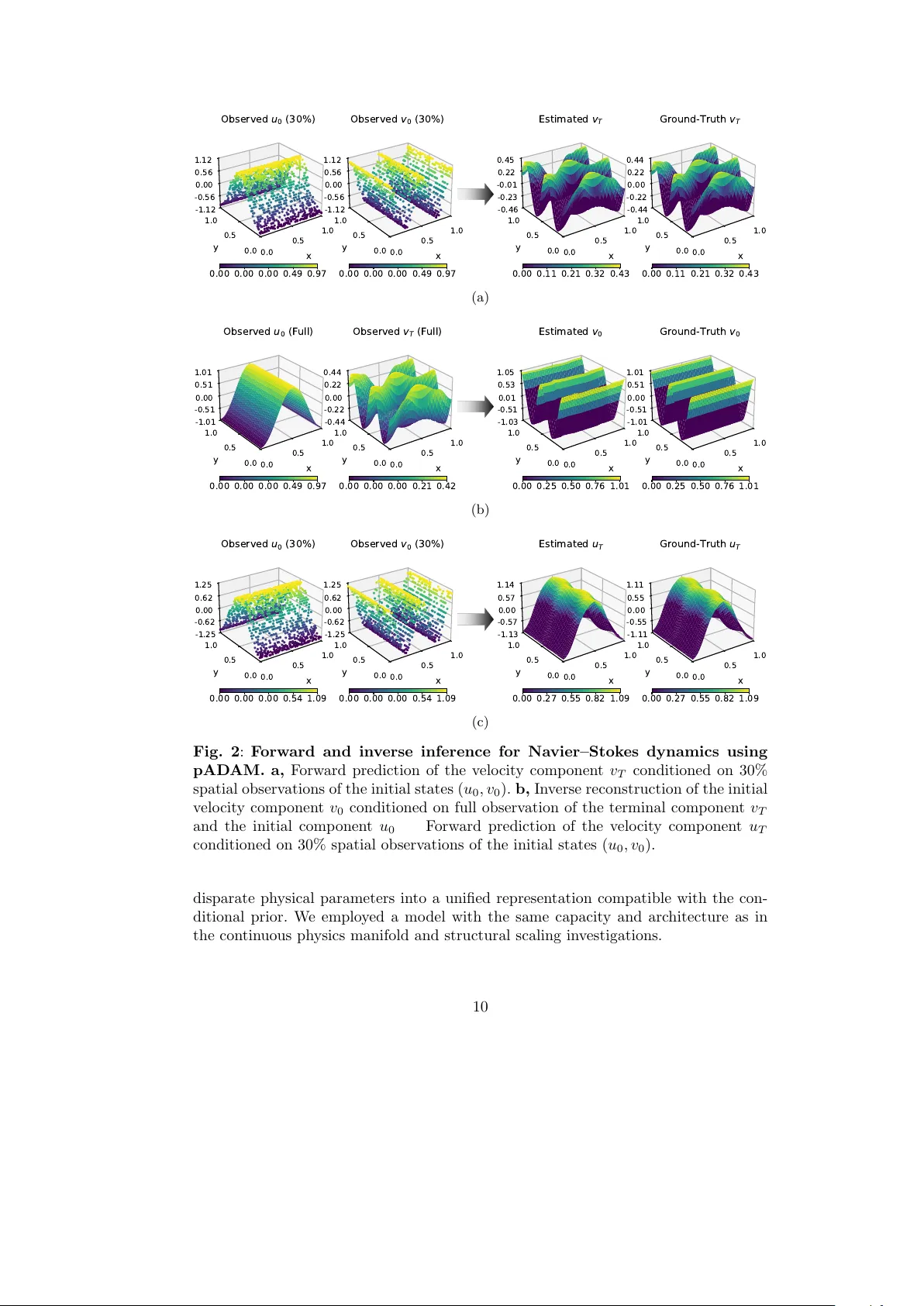

Generalizing across disparate physical laws remains a fundamental challenge for artificial intelligence in science. Existing deep-learning solvers are largely confined to single-equation settings, limiting transfer across physical regimes and inferen…

Authors: Amirhossein Mollaali, Bongseok Kim, Christian Moya