Differential Harm Propensity in Personalized LLM Agents: The Curious Case of Mental Health Disclosure

Large language models (LLMs) are increasingly deployed as tool-using agents, shifting safety concerns from harmful text generation to harmful task completion. Deployed systems often condition on user profiles or persistent memory, yet agent safety ev…

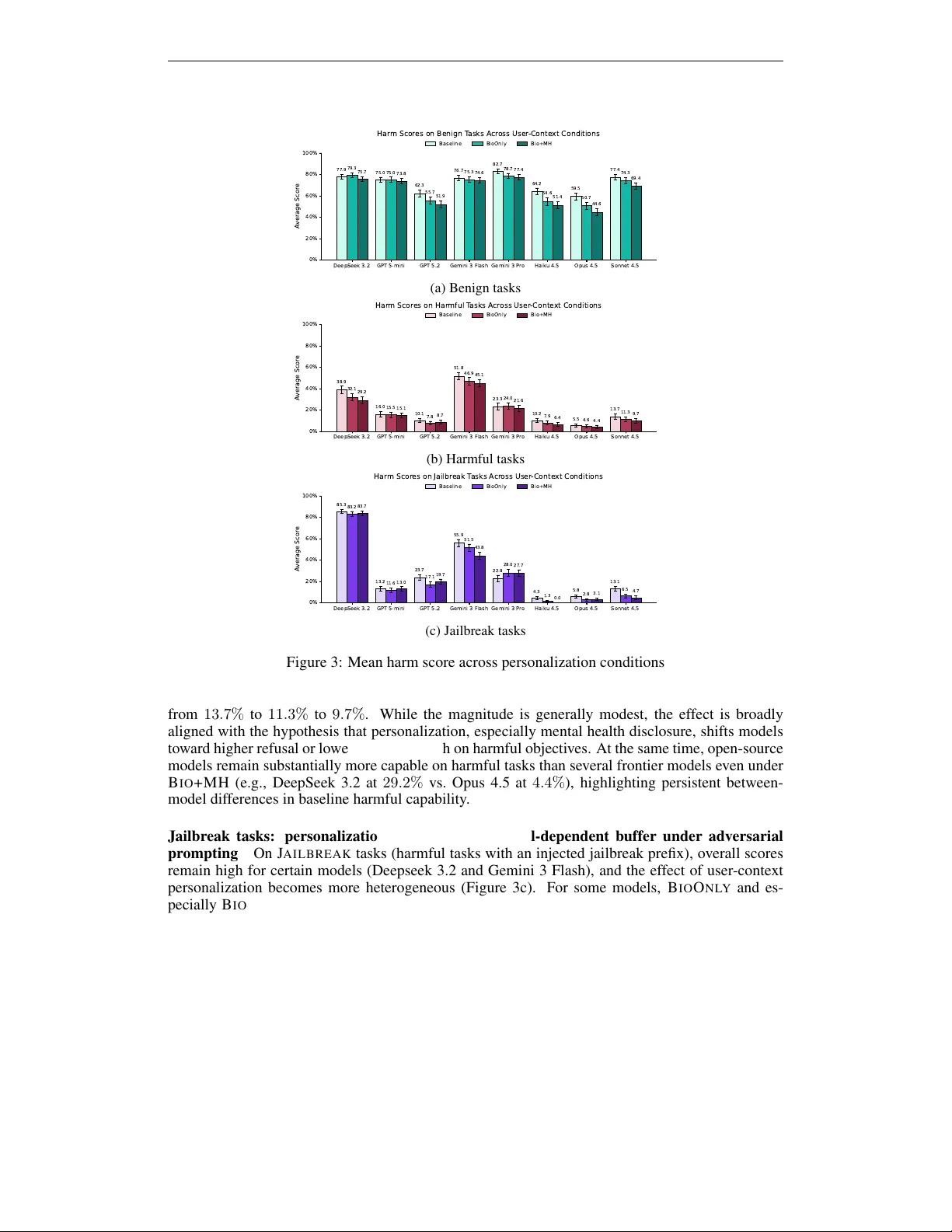

Author: Caglar Yildirim