GeMA: Learning Latent Manifold Frontiers for Benchmarking Complex Systems

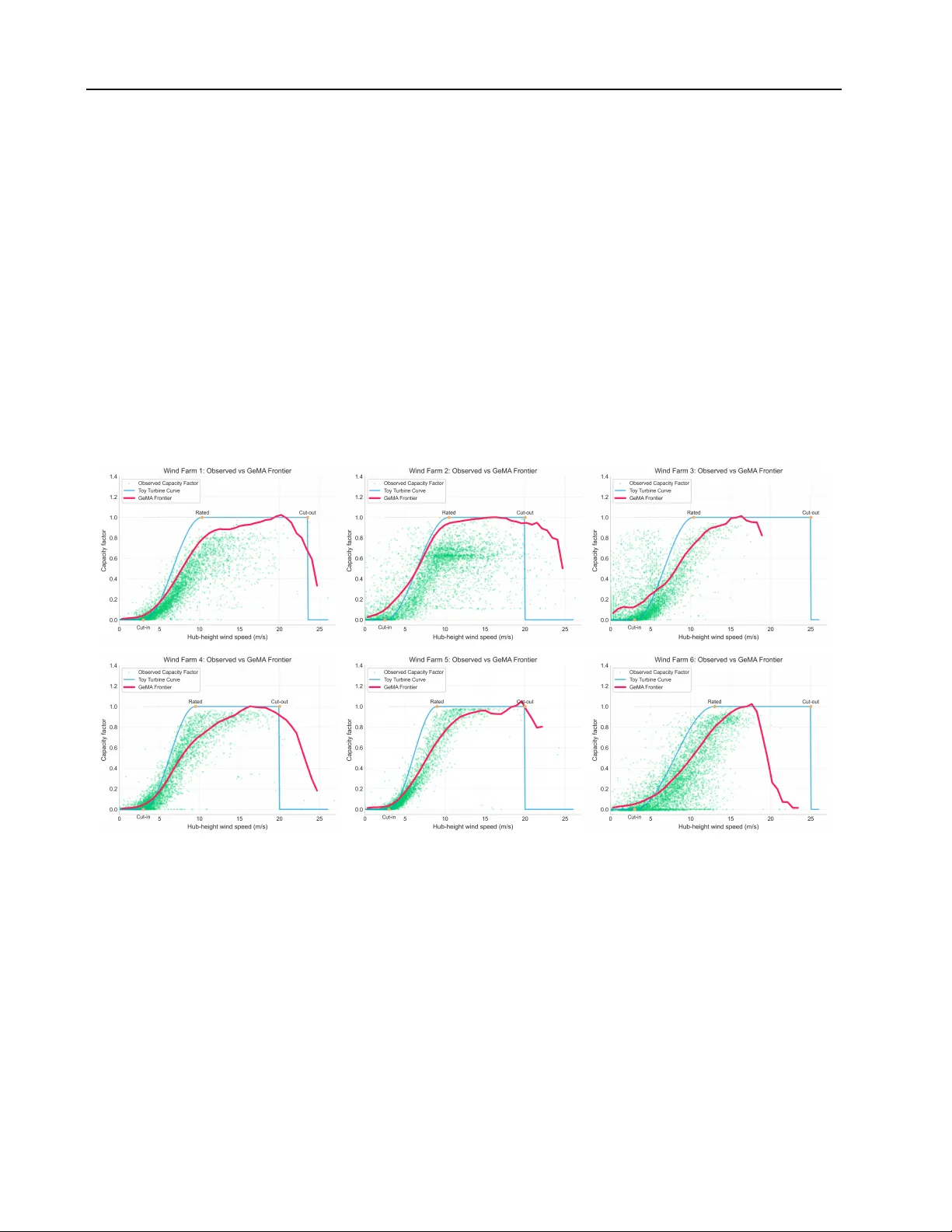

Benchmarking the performance of complex systems such as rail networks, renewable generation assets and national economies is central to transport planning, regulation and macroeconomic analysis. Classical frontier methods, notably Data Envelopment An…

Authors: Jia Ming Li, Anupriya, Daniel J. Graham