Nonlinear Information Theory: Characterizing Distributional Uncertainty in Communication Models with Sublinear Expectation

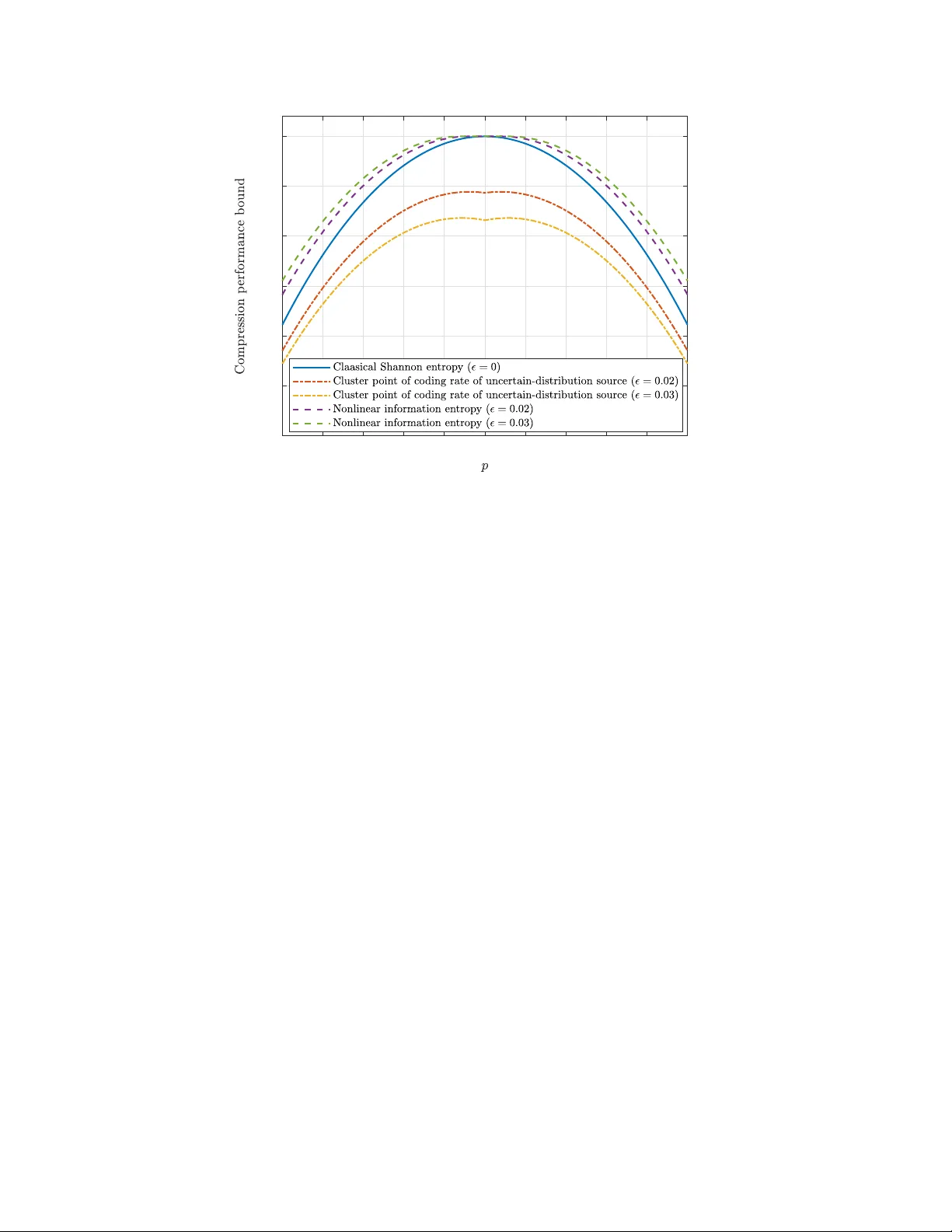

This paper establishes a mathematical framework for information-theoretic analysis that describes transmitted messages and communication channels using nonlinear expectation theory, going beyond the framework of classical probability theory. …

Authors: Wen-Xuan Lang, Shaoshi Yang, Jianhua Zhang