Machines acquire scientific taste from institutional traces

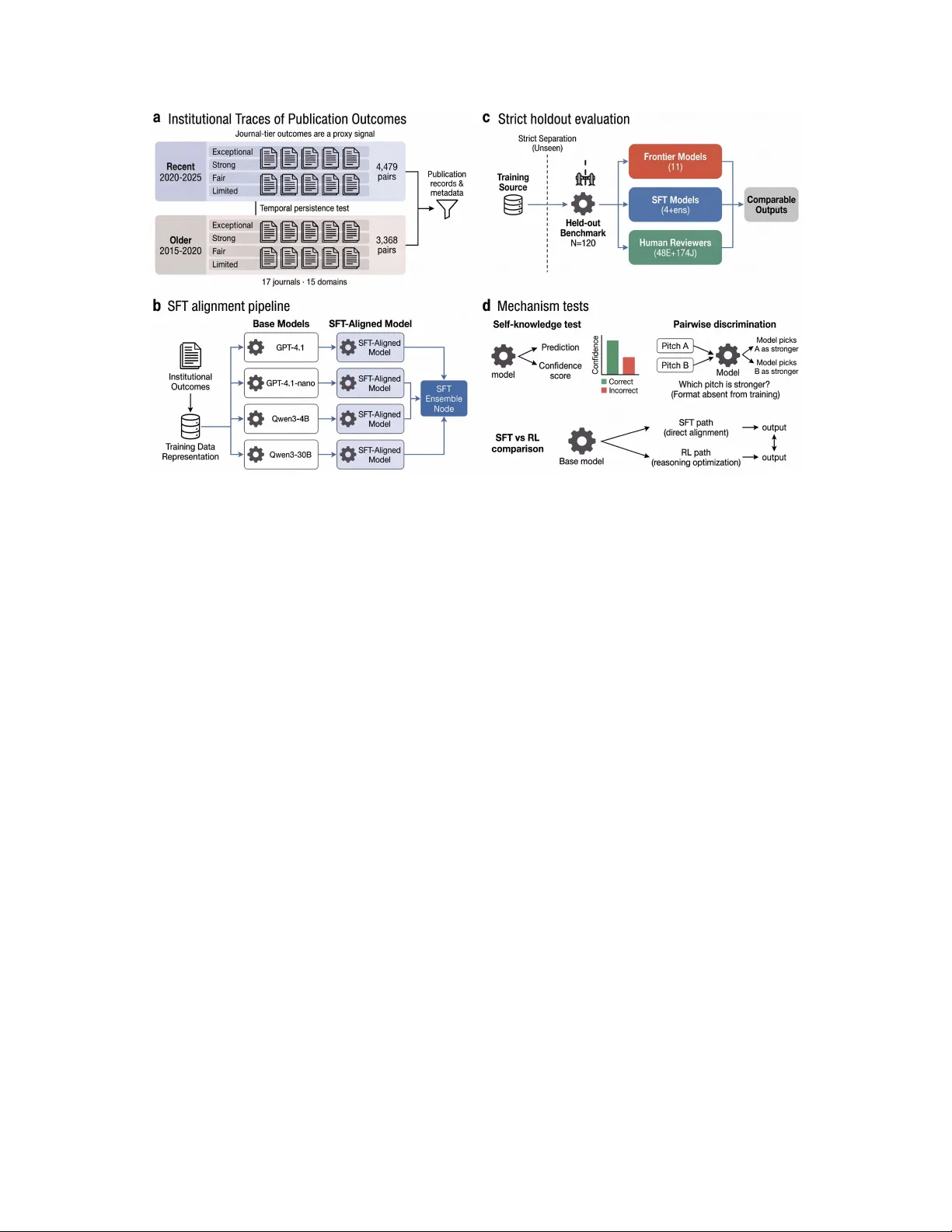

Artificial intelligence matches or exceeds human performance on tasks with verifiable answers, from protein folding to Olympiad mathematics. Yet the capacity that most governs scientific advance is not reasoning but taste: the ability to judge which …

Authors: Ziqin Gong, Ning Li, Huaikang Zhou