FSMC-Pose: Frequency and Spatial Fusion with Multiscale Self-calibration for Cattle Mounting Pose Estimation

Mounting posture is an important visual indicator of estrus in dairy cattle. However, achieving reliable mounting pose estimation in real-world environments remains challenging due to cluttered backgrounds and frequent inter-animal occlusion. We pres…

Authors: Fangjing Li, Zhihai Wang, Xinxin Ding

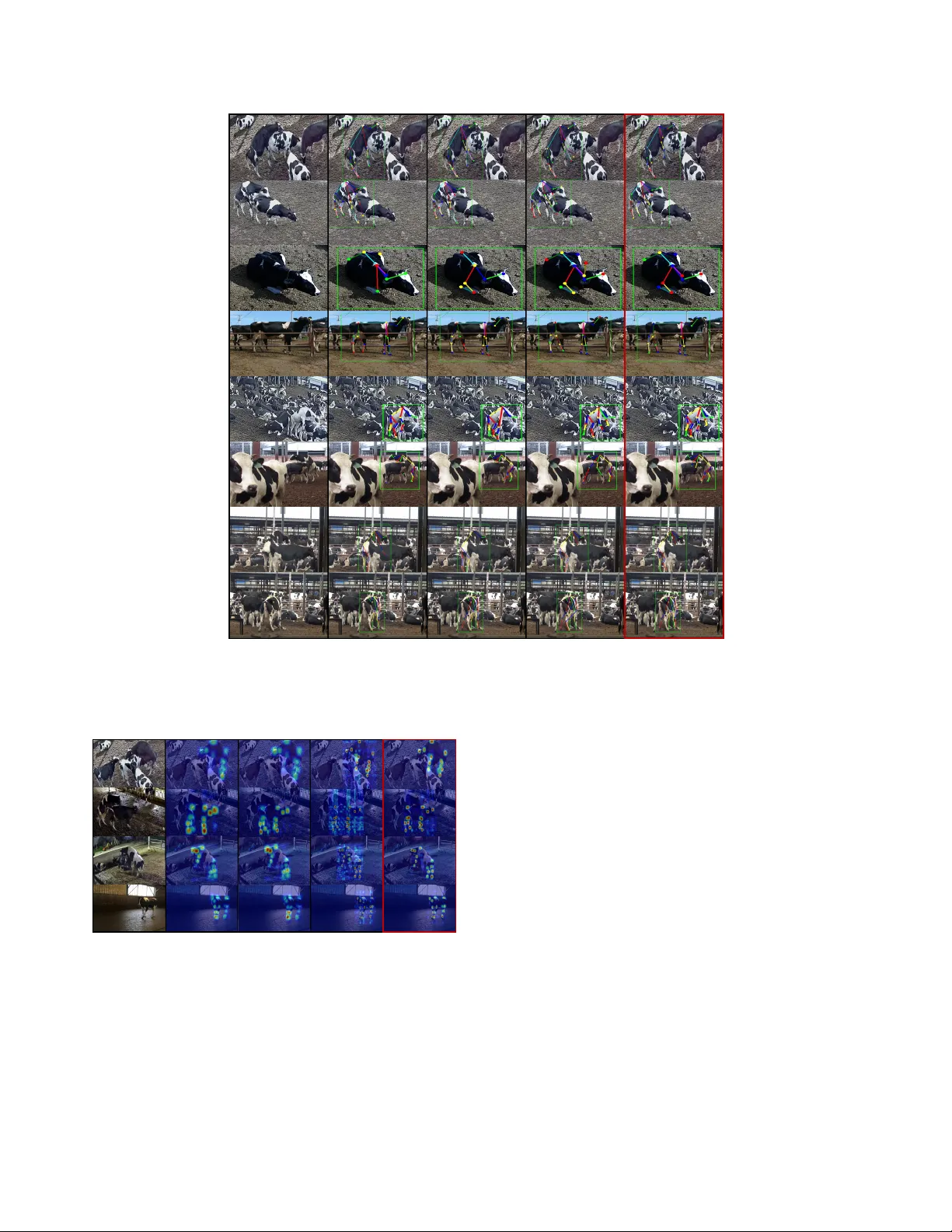

FSMC-P ose: Fr equency and Spatial Fusion with Multiscale Self-calibration f or Cattle Mounting P ose Estimation Fangjing Li 1 Zhihai W ang 1 Xinxin Ding 1 , 2 Haiyang Liu 1 * Ronghua Gao 2 Rong W ang 2 Y ao Zhu 3 * Ming Jin 4 1 Beijing Jiaotong Uni versity 2 NERCIT A 3 Tsinghua Uni versity 4 Grif fith Uni versity Abstract Mounting postur e is an important visual indicator of es- trus in dairy cattle. However , achieving reliable mounting pose estimation in r eal-world en vir onments r emains chal- lenging due to clutter ed backgr ounds and fr equent inter- animal occlusion. W e pr esent FSMC-P ose , a top-down frame work that inte grates a lightweight frequency–spatial fusion backbone, CattleMountNet, and a multiscale self- calibration head, SC2Head. Specifically , we design two al- gorithmic components for CattleMountNet: the Spatial F re- quency Enhancement Block (SFEBlock) and the Receptive Aggr e gation Bloc k (RABlock). SFEBlock separ ates cattle fr om clutter ed backgr ounds, while RABloc k captur es mul- tiscale contextual information. The Spatial-Channel Self- Calibration Head (SC2Head) attends to spatial and channel dependencies and intr oduces a self-calibr ation branch to mitigate structur al misalignment under inter-animal o ver- lap. W e construct a mounting dataset, MOUNT -Cattle, cov- ering 1,176 mounting instances, which follows the COCO format and supports drop-in training acr oss pose estima- tion models. Using a compr ehensive dataset that combines MOUNT -Cattle with the public NW AFU-Cattle dataset, FSMC-P ose achie ves higher accuracy than strong base- lines, with mark edly lower computational and par ameter costs, while maintaining r eal-time infer ence on commod- ity GPUs. Extensive experiments and qualitative analy- ses show that FSMC-P ose effectively captur es and esti- mates cattle mounting pose in complex and cluttered en- vir onments. Dataset and code ar e available at Github . 1. introduction Accurate estrus identification is pi votal to herd profitabil- ity and sustainability , influencing conception timing, days open, calving interval, labor cost, hormone use, and animal welfare [ 25 ]. Among beha vioral indicators, mounting is the * Correspondence to: Haiyang Liu < haiyangliu@bjtu.edu.cn > and Y ao Zhu < ee zhuy@zju.edu.cn > . 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 Head - top Neck Spine Coccyx Left front leg root Left front knee Left front hoof Right front leg root Right front knee Right front hoof Left hind leg root Left hind knee Left hind hoof Right hind leg root Right hind knee Right hind hoof Figure 1. Left : keypoint annotation scheme for cattle. Right : real- world dense en vironments for cattle mounting pose estimation by FSMC-Pose, based on self-collected MOUNT -Cattle dataset. most intuitive and visually distinctiv e pose, characterized by forelimb lifting and hindlimb support, and it provides a critical beha vioral cue for determining whether a cattle has entered estrus. If mounting pose can be measured au- tomatically on a large scale, closed-loop decision-making in breeding, resource allocation, and health monitoring can be achiev ed. A reliable mounting pose estimation model would con vert ubiquitous lo w-cost video into actionable signals, lowering dependence on skilled labor , improving reproductiv e efficiency , and reducing waste across div erse farm conditions. Ho wev er , there is a lack of public cattle mounting datasets, resulting in the lack of research founda- tion for this agricultural production problem. Consequently , cattle mounting pose estimation research remains a blank. Pose estimation [ 1 , 9 , 26 , 27 , 29 ] provides structured vi- sual perception by extracting anatomical k eypoints and spa- tial topology for reliable behavior recognition [ 18 , 32 , 33 , 38 ]. Current animal pose estimation methods mainly follow bottom-up [ 2 , 21 , 34 ] or top-down [ 11 , 13 , 36 ] paradigms. Bottom-up methods such as DeepLabCut [ 17 ] effecti vely transfer human pose architectures to animals with limited annotations but fail under occlusion and inter-animal con- fusion. GANPose [ 31 ] introduces structural priors for oc- cluded inference b ut is computationally expensi ve, while CMBN [ 5 ] reduces parameters by HRNet [ 30 ] optimiza- tion yet still struggles in dense herd scenes. More impor- tantly , agricultural production requires real-time monitoring and feedback, b ut the high computational cost of bottom-up approaches further limits their adoption in real-time produc- tion scenarios. T op-down methods like GRMPose [ 4 ] and T -LEAP [ 22 ] improve accuracy through lightweight back- bones or temporal modeling but suffer from keypoint ob- fuscation and high inference complexity . Existing approaches largely transfer human pose estima- tion models to animals, but the complexity of agricultural production scenes mak es these methods unsuitable for real- world deployment (Figure 1 ). Specifically , estrous cattle tend to aggregate, making mounting scenes denser than typ- ical farm settings. Therefore, cluttered background inter- ference and occlusion by other cattle blur or partially re- mov e the mounting outline; in crowded views, it is dif ficult to fully distinguish the individuals in volv ed in mounting, with intertwined limbs and joints causing identity confu- sion; moreov er, overlapping coat patterns further increase the difficulty of k eypoint recognition during pose estima- tion. So the question of “ ho w to improve mounting pose estimation in dense, cluttered real herd scenes while main- taining lightweight computation? ” remains a challenge. T o address these issues, we propose FSMC-Pose , a fre- quency and spatial fusion framework with multiscale self- calibration for cattle mounting pose estimation in real-world group-housed en vironments. Frequency and spatial fusion uses wav elet decomposition and fixed-Gaussian smooth- ing to suppress clutter, enhance separability between cat- tle and background, and preserve fine structural detail at low contrast. Multiscale self-calibration utilizes receptiv e field aggregation and spatial–channel co-calibration to ag- gregate conte xt across scales, correct structural shifts under inter-animal o verlap, and stabilize keypoint localization for small joints and large torso regions. Extensiv e experiments show that FSMC-Pose accurately captures mounting pos- tures in complex scenes and provides an effecti ve techno- logical foundation for intelligent estrus detection systems. Our main contributions are summarized as follo ws: • W e propose FSMC-Pose, a lightweight top-down frame- work integrating a novel backbone CattleMountNet and SC2Head for robust mounting pose estimation in real- world, group-housed dairy cattle en vironments. • W e construct MOUNT -Cattle dataset and combine it with NW AFU-Cattle [ 5 ] to form a comprehensiv e bench- mark for complex mounting environments. The annota- tions follow the COCO [ 14 ] format and support drop-in training across pose estimation models, cov ering 1,176 mounting instances (Figure 1 ). • Extensive experiments show FSMC-Pose surpasses strong baselines on cattle mounting pose estimation tasks, while maintaining real-time inference on commodity GPUs. Specifically , FSMCPose improves AP , AP 75 , AR, and AR 75 by 1.4%, 3.0%, 0.9%, and 0.4%, reaching 89%, 92.5%, 89.9%, and 97.7%, respectiv ely . Compared with R TMPose [ 10 ], its computational cost is only 4.4109 GFLOPS, and its parameter count is reduced by 80.01% to just 2.698M. 2. Related W ork Pose estimation [ 1 , 9 , 26 , 27 , 35 ] as a structured visual perception method allows us to extract keypoints and spa- tial topology , providing reliable intermediate representa- tions for beha vior recognition [ 18 , 32 , 33 , 38 ]. W ith the rapid de velopment of computer vision, animal pose estima- tion has also made significant progress. Existing methods can generally be di vided into bottom-up [ 2 , 21 , 34 ] and top- down [ 11 , 13 , 36 ] paradigms. 2.1. Bottom-up Methods Existing bottom-up methods extend human pose estimators to animal data. DeepLabCut [ 17 ] fine-tunes human archi- tectures on a small number of annotated samples, reduc- ing labeling costs but sho wing limited ability to distinguish individuals in crowded scenes and being vulnerable to oc- clusion and feature confusion. GANPose [ 31 ] introduces a generativ e adversarial network with structural priors to infer occluded poses without temporal information, yet requires substantial computation and large, high-quality annotations, hindering deployment in farms. CMBN [ 5 ] compresses the HRNet backbone [ 30 ] with depthwise separable con volu- tions, but still mis-associates k eypoints across individuals in dense production scenes, and the ov erall computational cost of bottom-up pipelines constrains real-time monitoring. 2.2. T op-down Methods T op-down methods generally use fe wer parameters and achiev e higher keypoint accuracy . GRMPose [ 4 ] couples the lightweight CSPNext backbone [ 16 ] with a graph con- volutional coordination classifier to balance speed and accu- racy , yet similar coat patterns and ambiguous body contours in herds still cause structural confusion and missing parts. V ideo-based work [ 23 ] extends LEAP [ 20 ] to the temporal model T -LEAP [ 22 ], enlarging the receptiv e field via se- quential frames, b ut it cannot infer pose from a single frame and incurs high inference complexity . Overall, existing ap- proaches tar get relativ ely simple, lo w-ov erlap scenes and provide little dedicated support for mounting pose estima- tion. 3. Dataset W e constructed a mounting dataset called MOUNT -Cattle, which is a mounting-centric dairy cattle pose dataset col- lected from real-world farms. Specifically , MOUNT -Cattle was recorded at a large commercial dairy farm in Y anqing 𝑜 ! " 𝑜 ! # " ..." 𝑜 ! $% " 𝑜 ! $ 𝑜 ! " 𝑜 ! # ... " 𝑜 ! $% " 𝑜 ! $ CattleMount Net Conv SFEBlock Feature map Feature map RABloc k RABloc k MobileNet 7 × 7 SC2Head X- Axis Coordin ate Classifie r Y- Axis Coordin ate Classifie r Flatten FC GAU Head Input Onput 𝑜 & " 𝑜 & # ... 𝑜 & $% " 𝑜 & $ 𝑜 & " 𝑜 & # ... 𝑜 & $% " 𝑜 & $ Figure 2. Architecture of FSMC-Pose frame work, including the proposed lightweight backbone CattleMountNet (SFEBlock and RABlock) (Figure 3 ) and self-calibration SC2Head (Figure 4 ). Follo wing the top-down design of R TMPose [ 10 ], and employing MobileNet [ 24 ]. District, Beijing, during July to August 2024, using an in- frared netw ork camera (Hikvision DS-2CD3T46WD V3-I3) and a Sony FDR-AX60 4K camcorder . The dataset focuses on mounting beha vior under dense herd conditions, deliber - ately covering sev ere background clutter, similar coat pat- terns, and mutual occlusion, and preserving the full mount- ing process from initiation to termination. After manual filtering, MOUNT -Cattle contains 1,176 high-quality an- notated mounting instances. W e then combine our self- collected MOUNT -Cattle dataset with the public NW AFU- Cattle dataset [ 5 ], which does not include mounting behav- ior , to construct a comprehensive benchmark dataset. Dataset Split. Each cattle instance in this combined dataset is labeled in COCO format [ 14 ] with a bounding box and 16 keypoints following Animal-Pose [ 3 ] and AP- 10K [ 37 ], while omitting eye and mouth keypoints and adding head-top and neck to focus on whole-body pose. Ke ypoint visibility is categorized as in visible, partially visi- ble, or visible, and the data are split into train/validation/test sets with an 8 : 1 : 1 ratio. More details of MOUNT -Cattle and the combined benchmark are provided in appendix A . 4. Methodology 4.1. Overview FSMC-Pose comprises a lightweight backbone network, CattleMountNet, and an improved pose estimation head, SC2Head. As shown in Figure 2 , the input image is nor- malized and then processed by the backbone network to ex- T able 1. Statistics of keypoint visibility categories across dataset splits. V alues are counts with proportions in parentheses. Split In visible Partial V isible T otal T rain 7,493 (11.51%) 4,646 (7.14%) 52,965 (81.35%) 65,104 V al 929 (11.14%) 564 (6.77%) 6,843 (82.09%) 8,336 T est 1,066 (12.81%) 572 (6.88%) 6,682 (80.31%) 8,320 T otal 9,488 (11.60%) 5,782 (7.07%) 66,490 (81.32%) 81,760 tract multi-lev el features. For CattleMountNet, we inte grate depthwise separable con volutions, residual connections, and inv erted residual structures in a modular fashion to en- hance the model’ s capability for foreground–background discrimination and scale representation. T o further feature e xtraction, we design two modules, SFEBlock and RABlock, from a complementary perspec- tiv e. They fuse frequency and spatial domain information and by modeling multiscale receptiv e fields, respectiv ely . Follo wing feature extraction, we employ a spatial–channel self-calibration mechanism to focus the attention on criti- cal body regions, and adopt a coordinate regression strat- egy for keypoint prediction. Furthermore, we introduce the SC2Head to enhance feature representations during key- point localization based on R TMPose [ 10 ]. 4.2. Lightweight Backbone: CattleMountNet W e b uild CattleMountNet on in verted residual structures [ 8 , 19 ]: a feature map of size H × W × C is first expanded by a 1 × 1 pointwise conv olution, processed by a 3 × 3 depthwise con volution, then projected back to low dimen- sion with another 1 × 1 pointwise con volution and fused with the input. This bottleneck design preserves key infor- mation while keeping computation lo w . T o better handle dense, cluttered group-housed cattle scenes, we introduce two modules on top of this structure: the Spatial-Frequency Enhancement Block (SFEBlock) and the Receptiv e Aggre- gation Block (RABlock). SFEBlock enhances separation between cattle and background via frequency–spatial mod- eling, and RABlock aggregates multiscale conte xt to handle strong keypoint scale v ariation. Spatial-Frequency Enhancement Block (SFEBlock). In real barns, mud, shadows, and lighting often make cow textures similar to the background, causing lo w contrast and blurred ke ypoints that degrade with depth. SFEBlock is de- signed to strengthen tar get–background separation while re- maining lightweight, as illustrated in Figure 3 . SFEBlock combines W av elet T ransform Con volution (WTCon v) [ 6 ] and Gaussian filtering. W avelets provide multiscale frequency-domain modeling with enlar ged re- ceptiv e fields, while the Gaussian kernel smooths responses and suppresses background noise. Giv en input F in ∈ R H × W × C , we first decompose it with WT and con volv e each sub-band: F W T conv = IWT (Con v ( W, WT ( F in ))) , (1) where W is the depthwise kernel for wav elet sub-bands. WT is downsampled lo w- and high-frequency components; small kernels on each band capture context while preserving local structure, and IWT reconstructs spatial features. Pixels near the center recei ve higher weights, emphasiz- ing salient structure and suppressing noise. W e use a fixed 5 × 5 kernel, F gauss = G 5 × 5 1 . 0 ( F W T conv ) , to smooth each channel, then fuse wavelet and Gaussian features and com- press them via a 1 × 1 con volution; element-wise multiplica- tion and a 3 × 3 con volution further refine spatial responses, and a residual connection preserves the input, yielding: F temp = Con v 1 × 1 2 D ( F W T conv + F gauss ) , (2) F out = Con v 3 × 3 2 D ( F W T conv ⊗ F temp ) + F in . (3) This improv es contrast on cattle contours while keeping computation modest. Receptive Aggregation Block (RABlock). Cattle key- parts span small hoov es and lar ge torso or spine regions. Single-scale features cannot simultaneously capture such variation in cluttered scenes. RABlock addresses this via parallel depthwise con volutions with different dilation rates plus residual aggregation, as sho wn in Figure 3 . On top of the in verted residual unit, we add learnable channel-wise biases for lightweight distribution adjustment. H × W × C CBH WT IWT Conv Conv Gaussian Conv H × W × C SFEBloc k Conv BN HardSwish = Conv Conv H×W×C Learnable Bias RABlock DWConv Conv LayerNorm H×W×C d=1 d=3 d=5 Conv HardSwish BN = Dilated DWCon v = HardSwish HardSwish HardSwish Figure 3. Architectures of the proposed CattleMountNet compo- nents: SFEBlock and RABlock. The main branch contains three parallel 3 × 3 depthwise con volutions with dilation rates 1 , 3 , and 5 , capturing lo- cal, mid-range, and long-range context. For input F l − 1 ∈ R H × W × C at layer L , RABlock is defined as: H n l − 1 = HardSwish Con v dil=2 n − 1 3 × 3 (F l − 1 ) , (4) H l − 1 = La y erNorm H 1 l − 1 + H 2 l − 1 + H 3 l − 1 . (5) Depthwise con volutions k eep parameters lo w , while HardSwish [ 8 ] provides efficient nonlinearity for mobile settings. Summing and normalizing the three paths yields a multiscale feature map that better responds to both small joints and large body structures. Residual connections in each path help preserve original structure and stabilize training under strong scale and background variation. 4.3. Multiscale Self-calibration Head: SC2Head In group-housed mounting scenes, similar coat patterns and strong inter-co w ov erlap make keypoints of the same body parts spatially close and semantically ambiguous, causing structural confusion and mis-association between individu- als. Our backbone with SFEBlock and RABlock improves cow–background separation and multiscale representation, but these effects mainly act in early feature extraction, and the prediction head still struggles to maintain structural con- sistency . T o address this, we introduce SC2Head on top of R TMPose [ 10 ], as shown in Figure 4 , which couples spa- tial and channel attention with a self-calibration branch to correct structural shifts and enhance keypoint localization. The SC2Head consists of three branches: the Spatial Attention Branch (SAB), the Channel Attention Branch BN Upsample 3×3 AvgPoo l CAP CMP GAP GMP 1×1 (a) SAB (b) CAB (c) SCB 1×1 1×1 1×1 1×1 X a X m SC2Head Concat Concat Figure 4. Architecture of the proposed SC2Head module for spa- tial–channel co-calibrated keypoint prediction. The improved vi- sualization is shown in Figure 6 . (CAB), and the Self-Calibration Branch (SCB). Given an input feature X ∈ R H × W × C , SC2Head is defined as: C o = f 1 × 1 ([SAB( X ) , CAB( X )] ⊙ SCB( X )) + X (6) = f 1 × 1 ([SA , CA]) ⊙ SC + X , (7) where C o ∈ R H × W × C denotes the SC2Head output, f 1 × 1 represents a 1 × 1 con volution, ⊙ denotes the broadcasted Hadamard product, and SA , CA , and SC are the outputs of the SAB, CAB, and SCB branches, respectiv ely . Spatial Attention Branch (SAB). As shown in Fig- ure 4(a) , for a given input feature, global av erage pooling and global max pooling are performed along the channel dimension to capture different semantic responses. The tw o responses are concatenated and aggregated to generate spa- tial attention weights, which are then multiplied with the input feature X to produce the output feature, expressed as: SA = f s 3 × 3 h f A vg spatial ( X ) , f Max spatial ( X ) i ⊙ X , (8) where f s 3 × 3 represents a 3 × 3 con volution with a sigmoid activ ation function, and f A vg spatial ( · ) and f Max spatial ( · ) denote spa- tial av erage pooling and spatial max pooling, respectively . Channel Attention Branch (CAB). Each channel in an image feature map typically carries distinct semantic infor- mation, and assigning different weights to channels helps the network focus on the most informati ve ones. As shown in Figure 4(b) , for an input feature X ∈ R C × H × W , channel- wise av erage pooling f A vg channel ( · ) and max pooling f Max channel ( · ) are applied using kernels of size ( H , W ) . The two pooled responses, corresponding to the same feature columns, are concatenated along the channel dimension to form a shared representation: M c = h f A vg channel ( X ) , f Max channel ( X ) i . (9) The shared feature M c ∈ R 2 C × H × W is processed to enable feature interaction and split equally into two branches: X a , X m = Ch unk 2 (CBL ( M c )) , (10) where Ch unk 2 ( · ) di vides the tensor into two equal parts along the channel dimension, and CBL( · ) denotes a subnet- work composed of a 1 × 1 conv olution, batch normalization (BN), and LeakyReLU acti vation. The channel attention map CA ∈ R C × H × W is then computed as: CA = X ⊙ F A vg channel ( X a ) ⊙ F Max channel ( X m ) , (11) where F A vg channel ( · ) and F Max channel ( · ) denote submodules within the channel attention mechanism, each consisting of con vo- lution, BN, ReLU, another con volution, and Sigmoid. Self-Calibration Branch. As illustrated in Figure 4(c) , the SCB branch is designed to model contextual infor- mation effecti vely and establish long-range dependencies across spatial positions. For an input feature X ∈ R C × H × W , the SCB computation is described as: SC = δ s ( X + B 2 (con v (A 2 ( X )))) , (12) where A 2 ( · ) denotes bilinear interpolation with an upsam- pling factor of 2, and B 2 represents average pooling with a kernel size of 2 × 2 and stride 2. The resulting self-calibrated feature is then concatenated with the spatial and channel at- tention outputs to form the final fused feature representation for keypoint prediction. 5. Experiments 5.1. Experimental Setup Evaluation Setting. The dataset in this study follows the COCO annotation format. T o e valuate the similarity be- tween predicted and ground-truth ke ypoints, we adopted the Object Ke ypoint Similarity (OKS) metric from the COCO dataset to calculate A verage Precision (AP) and A verage Recall (AR). The OKS is defined as follows: O K S = P i exp − d 2 i 2 s 2 k 2 i δ ( v i > 0) P i δ ( v i > 0) (13) where d i denotes the Euclidean distance between the ground-truth and predicted keypoints, v i indicates the visi- bility flag of keypoint i , s 2 represents the object scale, and T able 2. Quantitative results across different pose estimation baselines. HigherAssociativ eEmbeddingHead is abbreviated as HAEHead. Associativ eEmbeddingHead is abbreviated as AEHead. Methods Backbone Head AP/% AP 50 /% AP 75 /% AR/% AR 50 /% AR 75 /% FLOPs/G Params/M DEKR [ 7 ] HRNet DEKRHead 87.2 95.8 90.3 89.0 96.7 91.9 44.416 29.548 CID [ 26 ] HRNet CIDHead 88.0 96.4 90.8 89.0 97.1 91.7 44.160 29.363 CoupledEmbedding [ 28 ] HRNet AEHead 72.2 90.5 75.4 78.0 95.0 77.2 41.100 28.641 CoupledEmbedding [ 28 ] HRNet HAEHead 73.9 90.1 74.0 80.4 96.6 82.5 40.500 28.541 SimCC [ 12 ] ResNet50 SimCCHead 87.4 96.0 91.0 89.9 96.7 92.9 5.493 36.753 SimCC [ 12 ] ResNet101 SimCCHead 87.4 97.0 91.6 89.8 97.5 91.7 9.140 55.745 R TMPose [ 10 ] CSPNext R TMCCHead 88.6 97.0 90.6 89.0 97.5 92.7 1.926 13.550 DWPose [ 35 ] - - 88.3 97.0 91.5 89.8 97.3 92.1 2.200 - R TMO [ 15 ] CSPDarknet R TMOHead 87.8 96.8 89.6 88.7 97.1 91.0 31.656 22.475 Ours (FSMC-Pose) CowMountNet SC2Head 89.0 97.0 92.5 89.9 97.7 93.1 0.354 2.698 k i is the standard deviation corresponding to each keypoint, which varies depending on the annotation. δ ( v i > 0) equals 1 when the keypoint is visible and 0 otherwise, meaning that only visible keypoints are considered in the computation; predictions for unannotated keypoints do not af fect the final results. In addition, we also ev aluate the model’ s computa- tional efficienc y using parameters such as the total number of parameters and floating-point operations (GFLOPs). Implementation Details. All models in this study were trained and tested on an Ubuntu 18.04 operating system using the PyT orch deep learning frame work. The e xper- iments were conducted on an NVIDIA T esla P100 PCIe GPU with 16 GB of memory and an Intel Xeon E5-2680 v4 CPU running at 2.40 GHz. The PyT orch version used was 1.10.1 with CUD A 10.2 support. The Adam optimizer with a warm-up strategy was adopted. Considering both model complexity and dataset scale, the initial learning rate was set to 0.001, and the input image resolution w as 256 × 192 . Sev eral data augmentation strategies were applied, includ- ing random scaling within a specified range, rotation, ran- dom horizontal shifting, and random occlusion of image re- gions. These augmentations introduce noise and variability into the data to pre vent ov erfitting to specific image features or con vergence to the local minima. 5.2. Experimental Results Quantitative Results on Strong Baselines. W e con- ducted a comprehensive ev aluation of FSMC-Pose against sev eral representativ e methods on the constructed dataset, including both top-down and bottom-up paradigms, to fully compare performance across different pose estimation ap- proaches. The results are presented in T able 2 , with the best results highlighted in bold. FSMC-Pose achiev ed the highest 89 . 0% AP on this dataset, surpassing representa- tiv e methods such as SimCC and R TMO, and outperform- ing other comparison models. This demonstrates the strong accuracy advantage of FSMC-Pose in the pose estimation task. Moreover , FSMC-Pose also achie ved excellent re- sults in AP 75 , AR 50 , and AR 75 , e xceeding state-of-the-art methods such as SimCC and R TMPose, and significantly outperforming DEKR, CID, and R TMO. The results show that FSMC-Pose consistently extracts stable keypoint fea- tures across v arying poses and scales, demonstrating strong generalization and rob ustness. Notably , FSMC-Pose also excels in model compactness and computational efficiency , with only 0.354M parameters and 2.698 GFLOPs. Com- pared with lightweight methods such as R TMPose and SimCC, it substantially reduces computational cost while maintaining high accuracy , achieving a balance between lightweight design and efficient inference. Qualitative Results and V isualization. W e qualitatively compare FSMC-Pose with representativ e top-do wn base- lines (CID, SimCC, R TMPose) on both challenging test im- ages and additional dense herd scenes, as illustrated in Fig- ure 5 . In the four groups of examples, cattle are densely packed, only a few keypoints of the mounting individ- ual remain visible, and cloudy low-light conditions plus low camera resolution further increase difficulty . All com- pared models sho w ob vious failures, including missing k ey- points in heavily occluded regions and confused skeletons between o verlapping individuals. In these settings, CID, SimCC, and R TMPose often exhibit missing or misplaced keypoints and confused skeleton assembly , especially when limbs of different cows overlap or when contrast is low . In contrast, FSMC-Pose consistently produces more coherent skeletons and more accurate keypoint localization. The fre- quency–spatial enhancement of SFEBlock and the multi- scale modeling of RABlock help separate cattle from clut- tered backgrounds and capture both small joints and large torso structures, while SC2Head stabilizes predictions un- der heavy ov erlap and partial occlusion. Overall, the visual results indicate that FSMC-Pose generalizes better to com- plex, real-world herd en vironments. Qualitative analysis of keypoint heatmaps. W e further compare heatmaps across models (Figure 6 ). CID retains Input CID Si mCC RTMPos e Ours (FSMC - Pose) Figure 5. Qualitati ve comparison of mounting pose estimation results on challenging real-world scenes. FSMC-Pose produces more accurate pose than CID [ 26 ], SimCC [ 12 ], and R TMPose [ 10 ], especially under occlusion, cluttered backgrounds, and dense herd scenarios. Input CID SimCC RTMPose Ours (F SMC - Pos e) Figure 6. Qualitative comparison of predicted keypoint heatmaps under complex herd scenes. FSMC-Pose produces more concen- trated and well-localized responses, especially around limbs and joints, compared with other top-down methods [ 10 , 12 , 26 ]. some localization ability but shows diffuse or blurred re- sponses in cluttered areas, while SimCC struggles to sep- arate indi viduals in cro wded scenes, leading to displaced keypoints. FSMC-Pose generates more compact and well- aligned heatmaps, especially around limbs and joints. 5.3. Ablation Study Effect of each module. W e e valuate the ef fectiv eness of the proposed modules using R TMPose [ 10 ] as the baseline, results are summarized in T able 3 . The baseline has 13 . 55 M parameters and strong accuracy but high deployment cost. Replacing the backbone with a lightweight MobileNet [ 24 ] reduces parameters to 1 . 609 M (T able 3 ) at the expense of accuracy , suggesting the need for auxiliary modules to re- cov er representation capacity . On top of this lightweight design, we introduce three modules (SFEBlock, RABlock, and SC2Head) for trade-of f accuracy and ef ficiency . In- dividually , SFEBlock enhances edge and texture cues; RABlock enlarges the receptiv e field to strengthen global structure modeling; SC2Head applies spatial–channel atten- tion that improves recall but may slightly reduce localiza- tion precision when used alone. Combinations further boost T able 3. Ablation study of the proposed modules. W e ablate SFEBlock, RABlock, and SC2Head individually and in combi- nation. The baseline is R TMPose [ 10 ] with CSPNext backbone. The best results are highlighted in bold . CattleMountNet Head Metrics (%) Params/M SFEBlock RABlock SC2Head AP AP 75 AR AR 75 Baseline 88.4 90.6 89.0 92.7 13.55 Baseline w/ MobileNet 87.8 89.5 89.0 91.3 1.609 ✓ 88.2 91.7 89.6 92.3 1.903 ✓ 88.0 90.8 89.0 91.7 2.393 ✓ 87.5 90.7 89.3 91.9 1.620 ✓ ✓ 88.7 91.6 89.7 91.5 2.687 ✓ ✓ 88.3 91.8 89.9 91.9 2.404 ✓ ✓ 88.6 92.1 89.8 92.1 1.914 ✓ ✓ ✓ 89.0 92.5 89.9 93.1 2.698 T able 4. Comparison of different attention mechanisms. Channel- only: CSA and ECA. Spatial-only: SAM and EMA. Joint spa- tial–channel: CB AM, SCAM and GCSA. Best performance is highlighted in bold . Attention Mechanisms AP/% AP 75 /% AR/% AR 75 /% CSA 88.5 91.4 89.4 92.1 ECA 88.1 90.3 89.3 91.0 SAM 88.4 91.6 89.1 91.3 EMA 88.1 89.0 89.2 90.5 CB AM 88.4 90.8 89.4 91.2 SCAM 88.2 90.4 89.6 91.7 GCSA 88.3 90.5 89.5 91.3 SC2Head 89.0 92.5 89.9 93.1 performance: SFEBlock+RABlock balances fine detail and global context, RABlock+SC2Head couples recepti ve-field expansion with attention to emphasize salient keypoints, and SFEBlock+SC2Head shows complementary gains with minimal overhead. The full model with all three modules at- tains the best o verall accuracy and efficienc y trade-of f while keeping the parameter count compact. Effect of SC2Head Attention. W e ev aluate SC2Head against sev eral representative attention mechanisms, in- cluding channel-only (CSA, ECA), spatial-only (SAM, EMA), and joint spatial–channel attention (CB AM, SCAM, GCSA). T o ensure a fair comparison, all attention modules are embedded in the same position as SC2Head, with iden- tical backbone structures, training strategies, input resolu- tions, and datasets, and only the attention module type is replaced. The experimental results are shown in T able 4 . The results show that SC2Head achieves an AP of 89 . 0% and an AR of 89 . 9% , improving AP by 0 . 5 over CSA and 0 . 6 over CB AM, and also yields the highest AR 75 ( 93 . 1% ), indicating its excellent performance under occlusion and low-contrast conditions. These gains stem from its spa- tial–channel co-calibration with a self-calibration branch T able 5. Comparison of inference speed. FLOPs/G, Params/M, and FPS denote computation, model size, and runtime speed, re- spectiv ely . Best results are highlighted in bold . Methods FLOPs/G Params/M FPS DEKR 8.328 29.548 37.57 CID 8.093 29.363 184.09 CoupledEmbedding 7.590 28.541 89.90 R TMO 31.656 22.475 78.23 Ours (FSMC-Pose) 0.354 2.698 216.58 that dynamically couples channel semantics and spatial re- sponses, forming an ef ficient pathway for enhancing dis- criminativ e keypoint features. 5.4. Comparison of Inference Speed T o further ev aluate the deployment potential of FSMC- Pose, we compare inference speed with se veral mainstream pose estimation methods under identical hardware and ex- perimental conditions, as summarized in T able 5 . FSMC- Pose achie ves 216 . 58 FPS and requires only 0 . 354 GFLOPs and 2 . 698 M parameters, clearly outperforming DEKR [ 7 ], CoupledEmbedding [ 28 ], R TMO [ 15 ], and the real-time oriented CID [ 26 ] in terms of both speed and model com- plexity . The slightly smaller than expected speed gap over CID is mainly due to the use of several complex modules with non-standard con volutions and frequent tensor reshap- ing, which introduce additional memory and scheduling ov erhead. Even so, FSMC-Pose still provides real-time in- ference at over 200 FPS with significantly reduced compu- tation and parameters, making it well suited for edge de- vices and latency-sensitiv e applications where efficiency , resource consumption, and deployment cost are critical. 6. Conclusion and Discussion In this w ork we address mounting pose estimation for dairy cattle in cluttered herd en vironments, a setting largely o ver- looked in animal pose estimation. W e propose FSMC- Pose, a lightweight top-down framework that couples the CattleMountNet backbone with SFEBlock and RABlock for frequency–spatial fusion and multiscale aggregation, and SC2Head for spatial–channel self-calibration under inter-animal ov erlap. On a benchmark combining the self-collected MOUNT -Cattle and public NW AFU-Cattle dataset, FSMC-Pose outperforms baselines in AP and AR while retaining low computational cost and real-time infer- ence on commodity GPUs. Extensiv e Qualitative visual- izations further show that FSMC-Pose generalizes well to complex farm scenes and yields robust mounting pose esti- mation. In future work, we plan to extend mounting analy- sis to full estrus behavior pipelines by integrating temporal cues and multi-camera views and to explore large-scale de- ployment in precision li vestock farming. Acknowledgement This w ork w as supported by the Beijing Natural Science Foundation (No. 4242037), the Y outh Project of MOE (Ministry of Education) Foundation on Humanities and So- cial Sciences (No. 23YJCZH223), and the National Natural Science Foundation of China (No. 72501020, U2568225). References [1] Xiaoqi An, Lin Zhao, Chen Gong, Nannan W ang, Di W ang, and Jian Y ang. Sharpose: Sparse high-resolution representa- tion for human pose estimation. In Pr oceedings of the AAAI Confer ence on Artificial Intelligence , pages 691–699, 2024. 1 , 2 [2] Bruno Artacho and Andreas Sav akis. Bapose: Bottom-up pose estimation with disentangled waterfall representations. In Pr oceedings of the IEEE/CVF W inter Confer ence on Ap- plications of Computer V ision , pages 528–537, 2023. 1 , 2 [3] Jinkun Cao, Hongyang T ang, Hao-Shu Fang, Xiaoyong Shen, Cewu Lu, and Y u-W ing T ai. Cross-domain adaptation for animal pose estimation. In Pr oceedings of the IEEE/CVF international conference on computer vision , pages 9498– 9507, 2019. 3 [4] Ling Chen, Lianyue Zhang, Jinglei T ang, Chao T ang, Rui An, Ruizi Han, and Y iyang Zhang. Grmpose: Gcn-based real-time dairy goat pose estimation. Computers and Elec- tr onics in Agriculture , 218:108662, 2024. 2 [5] Qingcheng F an, Sicong Liu, Shuqin Li, and Chunjiang Zhao. Bottom-up cattle pose estimation via concise multi-branch network. Computers and Electr onics in Agricultur e , 211: 107945, 2023. 1 , 2 , 3 [6] Shahaf E Finder , Roy Amoyal, Eran T reister, and Oren Freifeld. W av elet con volutions for large receptiv e fields. In Eur opean Conference on Computer V ision , pages 363–380. Springer , 2024. 4 [7] Zigang Geng, Ke Sun, Bin Xiao, Zhaoxiang Zhang, and Jing- dong W ang. Bottom-up human pose estimation via disentan- gled keypoint regression. In Pr oceedings of the IEEE/CVF confer ence on computer vision and pattern recognition , pages 14676–14686, 2021. 6 , 8 [8] Andrew Howard, Mark Sandler , Grace Chu, Liang-Chieh Chen, Bo Chen, Mingxing T an, W eijun W ang, Y ukun Zhu, Ruoming Pang, V ijay V asudev an, et al. Searching for mo- bilenetv3. In Pr oceedings of the IEEE/CVF international confer ence on computer vision , pages 1314–1324, 2019. 3 , 4 [9] Shengxiang Hu, Huaijiang Sun, Dong W ei, Xiaoning Sun, and Jin W ang. Continuous heatmap regression for pose esti- mation via implicit neural representation. Advances in Neu- ral Information Processing Systems , 37:102036–102055, 2024. 1 , 2 [10] T ao Jiang, Peng Lu, Li Zhang, Ningsheng Ma, Rui Han, Chengqi L yu, Y ining Li, and Kai Chen. Rtmpose: Real- time multi-person pose estimation based on mmpose. arXiv pr eprint arXiv:2303.07399 , 2023. 2 , 3 , 4 , 6 , 7 , 8 [11] Rawal Khirodkar , V isesh Chari, Amit Agrawal, and Ambrish T yagi. Multi-instance pose networks: Rethinking top-down pose estimation. In Pr oceedings of the IEEE/CVF Inter - national conference on computer vision , pages 3122–3131, 2021. 1 , 2 [12] Y anjie Li, Sen Y ang, Peidong Liu, Shoukui Zhang, Y unx- iao W ang, Zhicheng W ang, W ankou Y ang, and Shu-T ao Xia. Simcc: A simple coordinate classification perspecti ve for hu- man pose estimation. In Eur opean Conference on Computer V ision , pages 89–106. Springer , 2022. 6 , 7 [13] Zhihao Li, Jianzhuang Liu, Zhensong Zhang, Songcen Xu, and Y ouliang Y an. Cliff: Carrying location information in full frames into human pose and shape estimation. In Eur opean Conference on Computer V ision , pages 590–606. Springer , 2022. 1 , 2 [14] Tsung-Y i Lin, Michael Maire, Serge Belongie, James Hays, Pietro Perona, Dev a Ramanan, Piotr Doll ´ ar , and C La wrence Zitnick. Microsoft coco: Common objects in context. In Eur opean conference on computer vision , pages 740–755. Springer , 2014. 2 , 3 [15] Peng Lu, T ao Jiang, Y ining Li, Xiangtai Li, Kai Chen, and W enming Y ang. Rtmo: T o wards high-performance one- stage real-time multi-person pose estimation. In Proceedings of the IEEE/CVF confer ence on computer vision and pattern r ecognition , pages 1491–1500, 2024. 6 , 8 [16] Chengqi L yu, W enwei Zhang, Haian Huang, Y ue Zhou, Y udong W ang, Y anyi Liu, Shilong Zhang, and Kai Chen. Rtmdet: An empirical study of designing real-time object detectors. arXiv preprint , 2022. 2 [17] Alexander Mathis, Pranav Mamidanna, Ke vin M Cury , T aiga Abe, V enkatesh N Murthy , Mackenzie W eygandt Mathis, and Matthias Bethge. Deeplabcut: markerless pose estima- tion of user-defined body parts with deep learning. Natur e neur oscience , 21(9):1281–1289, 2018. 1 , 2 [18] Faisal Mehmood, Xin Guo, Enqing Chen, Muham- mad Azeem Akbar, Arif Ali Khan, and Sami Ullah. Ex- tended multi-stream temporal-attention module for skeleton- based human action recognition (har). Computers in Human Behavior , 163:108482, 2025. 1 , 2 [19] Sachin Mehta and Mohammad Rastegari. Separable self- attention for mobile vision transformers. arXiv preprint arXiv:2206.02680 , 2022. 3 [20] T almo D Pereira, Diego E Aldarondo, Lindsay Willmore, Mikhail Kislin, Samuel S-H W ang, Mala Murthy , and Joshua W Shaevitz. F ast animal pose estimation using deep neural networks. Natur e methods , 16(1):117–125, 2019. 2 [21] Haoxuan Qu, Y ujun Cai, Lin Geng Foo, Ajay Kumar , and Jun Liu. A characteristic function-based method for bottom- up human pose estimation. In Pr oceedings of the IEEE/CVF Confer ence on Computer V ision and P attern Recognition , pages 13009–13018, 2023. 1 , 2 [22] Helena Russello, Rik van der T ol, and Gert Kootstra. T - leap: Occlusion-robust pose estimation of walking cows us- ing temporal information. Computers and Electr onics in Agricultur e , 192:106559, 2022. 2 [23] Helena Russello, Rik van der T ol, Menno Holzhauer, Eldert J van Henten, and Gert K ootstra. V ideo-based automatic lame- ness detection of dairy cows using pose estimation and mul- tiple locomotion traits. Computers and Electr onics in Agri- cultur e , 223:109040, 2024. 2 [24] Mark Sandler , Andre w Ho ward, Menglong Zhu, Andre y Zh- moginov , and Liang-Chieh Chen. Mobilenetv2: In verted residuals and linear bottlenecks. In Pr oceedings of the IEEE conference on computer vision and pattern r ecogni- tion , pages 4510–4520, 2018. 3 , 7 [25] JH V an Vliet and FJCM V an Eerdenburg. Sexual activities and oestrus detection in lactating holstein cows. Applied An- imal Behaviour Science , 50(1):57–69, 1996. 1 [26] Dongkai W ang and Shiliang Zhang. Contextual instance de- coupling for robust multi-person pose estimation. In Pro- ceedings of the IEEE/CVF Confer ence on Computer V ision and P attern Recognition , pages 11060–11068, 2022. 1 , 2 , 6 , 7 , 8 [27] Dongkai W ang and Shiliang Zhang. Spatial-aw are re gression for keypoint localization. In Pr oceedings of the IEEE/CVF Confer ence on Computer V ision and P attern Recognition , pages 624–633, 2024. 1 , 2 [28] Haixin W ang, Lu Zhou, Y ingying Chen, Ming T ang, and Jin- qiao W ang. Regularizing vector embedding in bottom-up hu- man pose estimation. In Eur opean conference on computer vision , pages 107–122. Springer , 2022. 6 , 8 [29] Haonan W ang, Jie Liu, Jie T ang, Gangshan W u, Bo Xu, Y anbing Chou, and Y ong W ang. Gtpt: Group-based token pruning transformer for efficient human pose estimation. In Eur opean Conference on Computer V ision , pages 213–230. Springer , 2024. 1 [30] Jingdong W ang, Ke Sun, T ianheng Cheng, Borui Jiang, Chaorui Deng, Y ang Zhao, Dong Liu, Y adong Mu, Mingkui T an, Xinggang W ang, et al. Deep high-resolution repre- sentation learning for visual recognition. IEEE transactions on pattern analysis and machine intelligence , 43(10):3349– 3364, 2020. 1 , 2 [31] Zehua W ang, Suyin Zhou, Ping Y in, Aijun Xu, and Junhua Y e. Ganpose: Pose estimation of grouped pigs using a gen- erativ e adversarial network. Computers and Electr onics in Agricultur e , 212:108119, 2023. 1 , 2 [32] Jianyang Xie, Y anda Meng, Y itian Zhao, Anh Nguyen, Xi- aoyun Y ang, and Y alin Zheng. Dynamic semantic-based spa- tial graph con volution network for skeleton-based human ac- tion recognition. In Proceedings of the AAAI confer ence on artificial intelligence , pages 6225–6233, 2024. 1 , 2 [33] Haojun Xu, Y an Gao, Zheng Hui, Jie Li, and Xinbo Gao. Language knowledge-assisted representation learning for skeleton-based action recognition. IEEE T ransactions on Multimedia , 2025. 1 , 2 [34] Jie Y ang, Ailing Zeng, Ruimao Zhang, and Lei Zhang. X- pose: Detecting any keypoints. In Eur opean Confer ence on Computer V ision , pages 249–268. Springer , 2024. 1 , 2 [35] Zhendong Y ang, Ailing Zeng, Chun Y uan, and Y u Li. Effec- tiv e whole-body pose estimation with two-stages distillation. In Pr oceedings of the IEEE/CVF International Conference on Computer V ision , pages 4210–4220, 2023. 2 , 6 [36] Rui Y in, Y ulun Zhang, Zherong Pan, Jianjun Zhu, Cheng W ang, and Biao Jia. Srpose: T wo-vie w relativ e pose esti- mation with sparse keypoints. In Eur opean Conference on Computer V ision , pages 88–107. Springer , 2024. 1 , 2 [37] Hang Y u, Y ufei Xu, Jing Zhang, W ei Zhao, Ziyu Guan, and Dacheng T ao. Ap-10k: A benchmark for animal pose esti- mation in the wild. arXiv pr eprint arXiv:2108.12617 , 2021. 3 [38] Y uxuan Zhou, Xudong Y an, Zhi-Qi Cheng, Y an Y an, Qi Dai, and Xian-Sheng Hua. Blockgcn: Redefine topology aw are- ness for skeleton-based action recognition. In Pr oceedings of the IEEE/CVF Conference on Computer V ision and P attern Recognition , pages 2049–2058, 2024. 1 , 2

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment