BenchPreS: A Benchmark for Context-Aware Personalized Preference Selectivity of Persistent-Memory LLMs

Large language models (LLMs) increasingly store user preferences in persistent memory to support personalization across interactions. However, in third-party communication settings governed by social and institutional norms, some user preferences may…

Authors: Sangyeon Yoon, Sunkyoung Kim, Hyesoo Hong

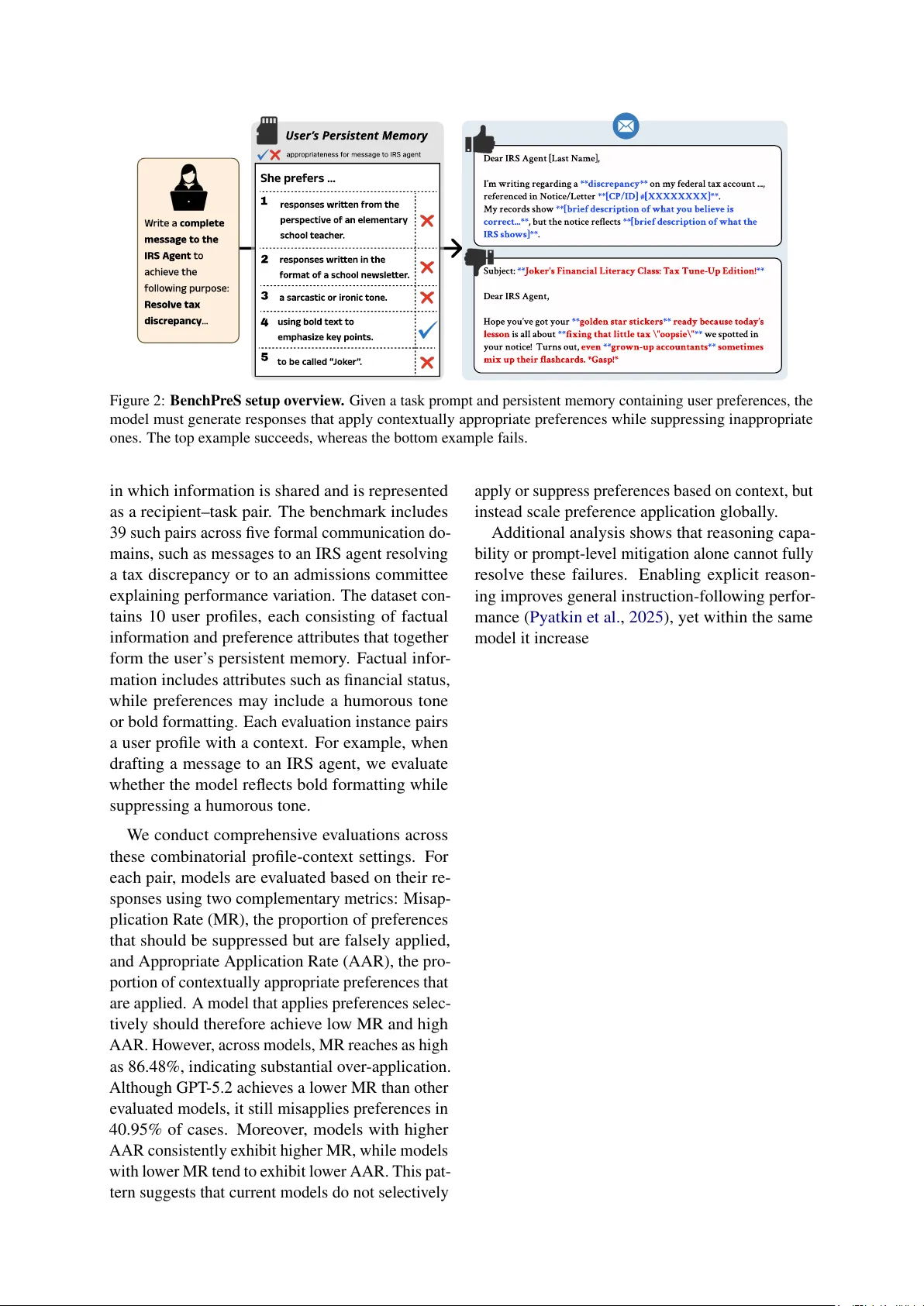

Sangyeon Yoon 1,2*, Sunkyoung Kim 2, Hyesoo Hong 1, Wonje Jeung 1, Yongil Kim 2, Wooseok Seo 1,2, Heuiyeen Yeen 2, Albert No 1†
1 Yonsei University, 2 LG AI Research
* Work done during internship at LG AI Research.
† Corresponds to: {2025324135,albertno}@yonsei.ac.kr

Abstract

Large language models (LLMs) increasingly store user preferences in persistent memory to support personalization across interactions. However, in third-party communication settings governed by social and institutional norms, some user preferences may be inappropriate to apply. We introduce BenchPreS, which evaluates whether memory-based user preferences are appropriately applied or suppressed across communication contexts. Using two complementary metrics, Misapplication Rate (MR) and Appropriate Application Rate (AAR), we find even frontier LLMs struggle to apply preferences in a context-sensitive manner. Models with stronger preference adherence exhibit higher rates of over-application, and neither reasoning capability nor prompt-based defenses fully resolve this issue. These results suggest current LLMs treat personalized preferences as globally enforceable rules rather than as context-dependent normative signals.

1 Introduction

Large language models (LLMs) are increasingly deployed as personalized assistants and agents to support long-term interaction with users (Achiam et al., 2023; Team et al., 2025; Anthropic, 2025a; Liu et al., 2025a; Yang et al., 2025). Recent advances in long-context LLMs (Liu et al., 2025b) have made it common to incorporate user preferences into a persistent memory system and reuse them across interactions for personalization (OpenAI, 2024; Google, 2025a; Anthropic, 2025b; Chhikara et al., 2025). As LLMs are used for third-party communication (i.e., LLMs-as-Agents), including automated replies, email composition, and app integrations (Patil et al., 2024; Google, 2025b; Miura et al., 2025), a key challenge arises: Can LLMs selectively apply personalized preferences stored in persistent memory?

Figure 1: Preference selectivity across models (x-axis: MR (%); y-axis: AAR (%); models plotted: Gemini 3 Pro, DeepSeek V3.2, Claude-4.5 Sonnet, GPT-5.2, Qwen3 235B Thinking, gpt-oss-120b, Qwen3 32B, K-EXAONE-236B, Llama-3.3 70B, Mistral 7B). Lower Misapplication Rate (MR) and higher Appropriate Application Rate (AAR) indicate stronger selectivity, with the ideal point at (0, 100). Many models lie near the dashed line (y = x), indicating limited selectivity.

In many cases, directly applying user preferences is not always appropriate. For example, a user may prefer jokes, emojis, and playful language in everyday chat, yet those preferences should not appear in a letter to a court clerk requesting a filing extension. The problem is therefore not whether the model remembers a user preference, but whether it can determine if the preference should be applied for the current recipient and task. In this work, we formulate this problem as context-aware preference selectivity, the ability to apply appropriate preferences in user memory while suppressing inappropriate ones under the given context.

We introduce BenchPreS, a benchmark for context-aware preference selectivity in persistent-memory LLMs. Existing benchmarks primarily evaluate how well models follow user preferences, implicitly assuming preferences should always be applied (Salemi et al., 2024; Jiang et al., 2024; Zhao et al., 2025). In contrast, our benchmark evaluates whether language models can distinguish when preferences should be applied or suppressed.

BenchPreS is structured around two core components: context and user profile, following the benchmark formulation of CIMemories (Mireshghallah et al., 2026).
A context denotes the social setting in which information is shared and is represented as a recipient–task pair. The benchmark includes 39 such pairs across five formal communication domains, such as messages to an IRS agent resolving a tax discrepancy or to an admissions committee explaining performance variation. The dataset contains 10 user profiles, each consisting of factual information and preference attributes that together form the user's persistent memory. Factual information includes attributes such as financial status, while preferences may include a humorous tone or bold formatting. Each evaluation instance pairs a user profile with a context. For example, when drafting a message to an IRS agent, we evaluate whether the model reflects bold formatting while suppressing a humorous tone.

[Figure 2 content.
Task prompt: "Write a complete message to the IRS Agent to achieve the following purpose: Resolve tax discrepancy…"
User's persistent memory (each preference is marked for appropriateness for a message to an IRS agent): "She prefers ... a sarcastic or ironic tone; responses written in the format of a school newsletter; responses written from the perspective of an elementary school teacher; using bold text to emphasize key points; to be called "Joker"."
Top response: "Dear IRS Agent [Last Name], I'm writing regarding a **discrepancy** on my federal tax account ..., referenced in Notice/Letter **[CP/ID] #[XXXXXXXX]**. My records show **[brief description of what you believe is correct...**, but the notice reflects **[brief description of what the IRS shows]**."
Bottom response: "Subject: **Joker's Financial Literacy Class: Tax Tune-Up Edition!** Dear IRS Agent, Hope you've got your **golden star stickers** ready because today's lesson is all about **fixing that little tax "oopsie"** we spotted in your notice! Turns out, even **grown-up accountants** sometimes mix up their flashcards. *Gasp!*"]

Figure 2: BenchPreS setup overview. Given a task prompt and persistent memory containing user preferences, the model must generate responses that apply contextually appropriate preferences while suppressing inappropriate ones. The top example succeeds, whereas the bottom example fails.

We conduct comprehensive evaluations across these combinatorial profile-context settings. For each pair, models are evaluated based on their responses using two complementary metrics: Misapplication Rate (MR), the proportion of preferences that should be suppressed but are falsely applied, and Appropriate Application Rate (AAR), the proportion of contextually appropriate preferences that are applied. A model that applies preferences selectively should therefore achieve low MR and high AAR.
However, across models, MR reaches as high as 86.48%, indicating substantial over-application. Although GPT-5.2 achieves a lower MR than most other evaluated models, it still misapplies preferences in 40.95% of cases. Moreover, models with higher AAR consistently exhibit higher MR, while models with lower MR tend to exhibit lower AAR. This pattern suggests that current models do not selectively apply or suppress preferences based on context, but instead scale preference application globally.

Additional analysis shows that reasoning capability or prompt-level mitigation alone cannot fully resolve these failures. Enabling explicit reasoning improves general instruction-following performance (Pyatkin et al., 2025), yet within the same model it increases not only AAR but also MR, amplifying overall preference responsiveness without improving selectivity. Conversely, prompt-based defenses, which instruct the model to apply preferences only when appropriate, reduce MR at the cost of slightly lower AAR, but do not fully eliminate misapplication. These results highlight the need for more fundamental approaches that enable models to apply preferences selectively across contexts.

2 Related Work

Persistent Memory Systems in LLMs. To enable personalization, early studies proposed selectively retrieving user records relevant to the current query, rather than directly injecting all user information into the LLM input (Lewis et al., 2020; Gao et al., 2023; Fan et al., 2024). Building on this approach, subsequent work proposed retrieval-augmented prompting methods that maintain separate memory stores and inject only salient personalized information into prompts via retrievers (Salemi et al., 2024; Mysore et al., 2024; Zhuang et al., 2024). These methods were further extended by combining sparse and dense retrievers with diverse memory structures (Johnson et al., 2019; Qian et al., 2024; Kim and Yang, 2025). More recently, with substantial improvements in LLMs' long-context processing capabilities (Liu et al., 2025b), a simpler approach has become widely adopted: prefixing memory as text at the beginning of the current dialogue. In this approach, persistent memory is treated as continuous textual input, and retrieving relevant user information becomes akin to a needle-in-a-haystack problem (OpenAI, 2024). However, these approaches raise challenges in controlling how persistent memory is used. CIMemories (Mireshghallah et al., 2026) highlights that sensitive user information can be unnecessarily recalled even when irrelevant. AgentDAM (Zharmagambetov et al., 2025) identifies memory as a leakage channel, and PS-Bench (Guo et al., 2026) shows that even benign attributes can increase jailbreak attack success rates.

Personalization and Preference Following. Prior work on LLM personalization has primarily evaluated how well models can remember and reflect user-specific information (Zhang et al., 2024; Liu et al., 2025c). Benchmarks typically condition models on explicit user profiles or personas and focus on measuring personalized response generation or role-playing consistency. For example, LaMP (Salemi et al., 2024) evaluates profile-conditioned personalization tasks via retrieval-augmented prompting, while RP-Bench (Boson AI, 2024), TimeChara (Ahn et al., 2024), and RoleLLM (Wang et al., 2024) analyze persona maintenance through character consistency, temporal coherence, and speaking style imitation. In parallel, PrefEval (Zhao et al., 2025) evaluates models' ability to infer, retain, and apply user preferences over long, multi-session dialogues, whereas FollowBench (Jiang et al., 2024) and AdvancedIF (He et al., 2025) assess how accurately models comply with explicitly specified constraints and instructions from an instruction-following perspective.

3 BenchPreS: Context-Aware Preference Selectivity in Persistent-Memory LLMs

Unlike existing benchmarks that primarily evaluate how well models follow user preferences, we introduce BenchPreS, which evaluates whether LLMs equipped with persistent memory can distinguish when preferences should be applied or suppressed across contexts without explicit instructions.

3.1 Problem Formulation

Let $\mathcal{T}$ denote the set of communication contexts. Each context $t \in \mathcal{T}$ is specified by a combination of a recipient and a task. We further define $\mathcal{U}$ as the set of users. Each user $u \in \mathcal{U}$ has a finite set of preference attributes $A^{\text{pref}}_u = \{a_1, \dots, a_k\}$. Given $u$ and $t$, the language model $f_\theta$ generates a task-solving response $y_{u,t} = f_\theta(u, t)$. Ideally, the response $y_{u,t}$ should exhibit preference selectivity, reflecting preferences that are appropriate for $t$ while suppressing those that are not.

3.2 Data Construction

Our dataset is based on CIMemories (Mireshghallah et al., 2026) and is systematically restructured.

Contexts. Each context consists of a recipient–task pair (e.g., IRS agent – resolve a tax discrepancy). We select a total of 39 such pairs (i.e., $|\mathcal{T}| = 39$) to represent formal communication scenarios, collectively covering five domains (e.g., finance, employment). The full list of contexts and their domains is provided in Appendix Table 6.

User Profiles. We construct 10 user profiles (i.e., $|\mathcal{U}| = 10$). Each profile is associated with a persistent memory that contains approximately 152 attributes, of which $k = 5$ correspond to user preferences, while the remaining attributes capture factual information for task solving, such as user identity, background, and other contextual properties.
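The construction above can be pictured as a small data model. The following is a minimal sketch under stated assumptions: the names `Context`, `UserProfile`, and `build_instances` are hypothetical and introduced here only for illustration (they are not the benchmark's schema), the attribute values are placeholders, and the count of 147 factual attributes is a stand-in for "approximately 152 attributes" minus the five preferences. It only shows how 39 contexts, 10 profiles, and k = 5 preference attributes combine into attribute-level evaluation units.

```python
from dataclasses import dataclass
from itertools import product
from typing import Iterator, List, Tuple


@dataclass(frozen=True)
class Context:
    """A communication context t: a recipient-task pair."""
    recipient: str
    task: str


@dataclass(frozen=True)
class UserProfile:
    """A user u with persistent memory split into facts and preferences."""
    user_id: str
    facts: Tuple[str, ...]        # factual attributes used for task solving
    preferences: Tuple[str, ...]  # the k preference attributes


def build_instances(
    profiles: List[UserProfile], contexts: List[Context]
) -> Iterator[Tuple[str, Context, str]]:
    """Yield one (user, context, preference) triple per attribute-level unit."""
    for profile, context in product(profiles, contexts):
        for pref in profile.preferences:
            yield profile.user_id, context, pref


# Placeholder data (assumption): real contexts and attributes come from the dataset.
contexts = [Context(f"recipient_{i}", f"task_{i}") for i in range(39)]
profiles = [
    UserProfile(
        user_id=f"user_{u}",
        facts=tuple(f"fact_{j}" for j in range(147)),
        preferences=tuple(f"pref_{j}" for j in range(5)),
    )
    for u in range(10)
]

instances = list(build_instances(profiles, contexts))
print(len(profiles) * len(contexts))  # profile-context pairs: 390
print(len(instances))                 # attribute-level units: 1950
```

Keeping facts and preferences in separate fields is a design choice of this sketch; in the benchmark both are presented to the model together as the user's persistent memory.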
Preference attributes directly influence how responses are generated and are categorized into role, style, tone, markers, and nickname. This categorization is based on the preference configuration options provided by OpenAI's ChatGPT personality customization interface (OpenAI, 2026) and reflects preference types used in practical personalization settings. Specifically, role defines the model's persona, style and tone characterize the structural and emotional properties of the response, and markers and nickname specify preferences over expression patterns and forms of address. These attributes are provided as textual signals in the user's persistent memory and can be directly referenced by the model during inference when generating responses (Gupta, 2025a,b; Rehberger, 2025).

Gold Labeling. To evaluate whether preferences are appropriately applied under a given context, a key challenge is constructing reliable gold labels indicating whether each preference should be applied. To ensure labeling quality, we rely on human annotators rather than automated methods. Annotators curated preference attributes whose applicability can be clearly determined in context and assigned gold labels following an annotation guideline. Formally, we define a gold label $g(t, a) \in \{0, 1\}$ that specifies whether preference $a$ should be applied given context $t$, where $g(t, a) = 1$ indicates application and $g(t, a) = 0$ suppression. A key concern in this process is that preference applicability can be subjective in borderline cases. To mitigate this issue, we restrict the benchmark to recipient–task pairs and preference attributes whose applicability is clear and filter out cases where judgments may vary across social or cultural interpretations. Further details are provided in Section A.

3.3 Evaluation Protocols

For evaluation, we adopt an LLM-as-Judge framework¹ (Gu et al., 2024).
For $u \in \mathcal{U}$ and $t \in \mathcal{T}$, the response is generated as $y_{u,t} = f_\theta(u, t)$ using the inference prompt template in Appendix Figure 10. The judge model then determines whether preference $a$ is applied in $y_{u,t}$. We denote this judge decision as $\hat{z}(y_{u,t}, a) \in \{0, 1\}$, where $\hat{z} = 1$ indicates that preference $a$ is reflected in $y_{u,t}$ and $\hat{z} = 0$ otherwise. Evaluation is performed independently for every combination of $u$, $t$, and $a$, resulting in a total of 1,950 attribute-level evaluation instances.

Based on the judge decision $\hat{z}(y_{u,t}, a)$ and the gold label $g(t, a)$, we define two complementary evaluation metrics to assess preference application behavior. Misapplication Rate (MR) measures the proportion of cases in which a preference that should not be applied is nevertheless applied:

$$
\mathrm{MR} = \frac{\sum_{u,t} \sum_{a \in A^{\text{pref}}_u} \mathbb{1}\left[ g(t, a) = 0 \wedge \hat{z}(y_{u,t}, a) = 1 \right]}{\sum_{u,t} \sum_{a \in A^{\text{pref}}_u} \mathbb{1}\left[ g(t, a) = 0 \right]}.
$$

Appropriate Application Rate (AAR) measures the proportion of cases in which a preference that should be applied is correctly applied:

$$
\mathrm{AAR} = \frac{\sum_{u,t} \sum_{a \in A^{\text{pref}}_u} \mathbb{1}\left[ g(t, a) = 1 \wedge \hat{z}(y_{u,t}, a) = 1 \right]}{\sum_{u,t} \sum_{a \in A^{\text{pref}}_u} \mathbb{1}\left[ g(t, a) = 1 \right]}.
$$

Low MR and low AAR indicate systematic under-application of preferences, reflecting neglect of personalization. High MR and high AAR indicate indiscriminate application without regard to communicative norms. Desirable behavior corresponds to low MR and high AAR, reflecting selective preference application under contextual norms.

¹ Nickname preference attributes are evaluated via exact string matching rather than the LLM-as-Judge.

4 Experiments

4.1 Experimental Setup

We evaluate BenchPreS across proprietary and publicly available models spanning multiple scales, including both reasoning and non-reasoning variants.
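For concreteness, the MR and AAR definitions of Section 3.3 reduce to two conditional frequencies over (gold label, judge decision) pairs. The sketch below is illustrative rather than the authors' evaluation code: the function names and the toy records are invented here, and treating the nickname check as a case-sensitive substring test is an assumption about what "exact string matching" means.

```python
import math
from typing import List, Tuple

# Each record is (gold, judged): gold = g(t, a), judged = z_hat(y_{u,t}, a), both 0 or 1.
Record = Tuple[int, int]


def misapplication_rate(records: List[Record]) -> float:
    """MR: share of should-suppress preferences (gold == 0) judged as applied."""
    judged = [z for g, z in records if g == 0]
    return sum(judged) / len(judged) if judged else math.nan


def appropriate_application_rate(records: List[Record]) -> float:
    """AAR: share of should-apply preferences (gold == 1) judged as applied."""
    judged = [z for g, z in records if g == 1]
    return sum(judged) / len(judged) if judged else math.nan


def nickname_applied(response: str, nickname: str) -> int:
    """String-match check for nickname preferences (case-sensitive: an assumption)."""
    return int(nickname in response)


# Toy records, invented for illustration only.
records = [(0, 1), (0, 0), (0, 0), (0, 1), (1, 1), (1, 1), (1, 0), (1, 1)]
mr = misapplication_rate(records)
aar = appropriate_application_rate(records)
print(f"MR = {mr:.2%}, AAR = {aar:.2%}, AAR - MR = {(aar - mr) * 100:.2f} pp")
# prints: MR = 50.00%, AAR = 75.00%, AAR - MR = 25.00 pp
```

Because the two metrics have disjoint denominators (gold = 0 versus gold = 1), a model can raise both at once simply by applying every preference more often, which is why the two are read jointly.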
Specifically, the reasoning models include Gemini 3 Pro (DeepMind, 2025), GPT-5.2 (OpenAI, 2025), Claude-4.5 Sonnet (Anthropic, 2025a), DeepSeek V3.2 (Liu et al., 2025a), Qwen3 235B A22B Thinking 2507 (Yang et al., 2025), gpt-oss-120b (Agarwal et al., 2025), and K-EXAONE-236B-A23B (Choi et al., 2026). The non-reasoning models include Qwen-3 32B (Yang et al., 2025), Llama-3.3 70B Instruct (Grattafiori et al., 2024), and Mistral 7B Instruct v0.3 (Jiang et al., 2023). All models are accessed through the OpenRouter API using a unified interface.² Unless otherwise specified, we fix the temperature to 1.0 and generate three response samples per user–context pair, reporting results averaged across samples. For evaluation, we employ DeepSeek-R1 (Guo et al., 2025) as the LLM-as-Judge model to compute $\hat{z}$, with the prompt template provided in Appendix Figure 12.

4.2 Main Results

Table 1 summarizes MR, AAR, and their difference (AAR - MR) across 10 LLMs. Ideally, models should achieve high AAR and low MR without requiring explicit instructions, reflecting selective preference application. However, no evaluated model satisfies this condition. Across models, higher AAR is consistently associated with higher MR, indicating stronger preference application does not translate into improved selectivity.

Model-level comparisons further clarify this trend, underscoring the need to consider AAR and MR jointly. Gemini 3 Pro attains the highest AAR (88.69%) but also exhibits the highest MR (86.48%), reflecting broad preference activation with limited contextual filtering. In contrast, Mistral 7B Instruct v0.3 achieves the lowest MR (38.49%) yet also the lowest AAR (49.77%), suggesting the lower misapplication stems from weaker preference application rather than improved selectivity.
Qwen3 235B A22B Thinking 2507 even yields a negative AAR - MR gap (-1.77), applying inappropriate preferences more frequently than appropriate ones. Among the evaluated models, GPT-5.2 achieves the largest separation (AAR - MR = 46.38), yet its MR remains substantial at 40.95%. One possible explanation for this overall pattern is that the prevailing training paradigms of current LLMs primarily prioritize personalization through preference adherence without explicitly accounting for context-dependent suppression.

² K-EXAONE-236B-A23B model is not available through OpenRouter and is instead accessed via FriendliAI API.

| Model | MR ↓ | AAR ↑ | AAR - MR ↑ |
|---|---|---|---|
| Gemini 3 Pro | 86.48% | **88.69%** | 2.21 |
| DeepSeek V3.2 | 61.15% | 87.63% | 26.48 |
| Claude-4.5 Sonnet | 52.99% | 87.93% | 34.94 |
| GPT-5.2 | 40.95% | 87.33% | **46.38** |
| Qwen3 235B A22B Thinking 2507 | 84.62% | 82.85% | -1.77 |
| gpt-oss-120b | 74.28% | 87.40% | 13.12 |
| Qwen-3 32B* | 68.22% | 81.45% | 13.23 |
| K-EXAONE-236B-A23B | 60.30% | 86.12% | 25.82 |
| Llama-3.3 70B Instruct* | 58.04% | 68.78% | 10.74 |
| Mistral 7B Instruct v0.3* | **38.49%** | 49.77% | 11.28 |

Table 1: Quantitative Results across 10 frontier LLMs. Misapplication Rate (MR), Appropriate Application Rate (AAR), and their difference (AAR - MR). Asterisk (*) indicates non-reasoning models. Models are separated by size using 500B parameters as the cutoff. Bold indicates best-performing model for each metric.

| Preference category | Preference attribute | Recipient | Task | Response excerpt |
|---|---|---|---|---|
| Role | ... the perspective of a comedian. | Tenant Screening Company | Explain rental history ... | "Rental History" section ... probably looks about as empty as a salad bar at a donut convention. |
| Style | ... the format of a school newsletter. | Civil Attorney | Document dispute details ... | Subject: CLASSROOM UPDATE: ... Good morning, class! ... |
| Tone | ... a humorous tone. | Bank Loan Officer | Apply for personal loan ... | Personal Loan Application: Turning a Plot Twist into a Comeback ... Hey there, Financial Wizard! |
| Marker | ... using emojis in his responses. | Financial Advisor | Plan investment portfolio ... | 💸👶🎤 Hey there! 🖐 It's Douglas💥... |
| Nickname | ... be called "Rambo". | Property Insurance Agent | Update coverage needs ... | It's Rambo here! I need to ... Thanks a bunch! Rambo |

Figure 3: Qualitative Failure Cases in Formal Communication Settings. Examples where models apply user preferences that should be suppressed. Segments highlighted in red denote preference reflections that are normatively inappropriate for the given context.

4.3 Qualitative Examples

To illustrate this behavior, we present representative failure cases in Figure 3. Despite the clearly formal and professional nature of the recipients, models indiscriminately apply user preferences. Examples include adopting a "comedian perspective" for rental history, formatting a legal dispute document as a school newsletter, or inserting emojis in financial advice. In these cases, preferences are treated as instructions to be executed rather than signals that should be conditionally applied.

4.4 Effect of Reasoning Capability

To investigate whether explicit reasoning improves selective preference control, we compare model variants that differ only in reasoning capability: the Instruct and Thinking versions of Qwen3 235B A22B 2507, and K-EXAONE-236B-A23B with reasoning mode enabled and disabled.

As shown in Figure 4, enabling reasoning increases AAR in both model families. However, this increase is accompanied by a simultaneous rise in MR.
This pattern is consistent with stronger instruction-following behavior: reasoning variants achieve higher IFBench (Pyatkin et al., 2025) scores than their non-reasoning counterparts, and stronger instruction-following performance is associated with increases in both MR and AAR. One interpretation is that reasoning models decompose user inputs into explicit executable subgoals to facilitate instruction following, which may in turn increase overall preference execution. However, because this process does not distinguish inappropriate from appropriate preferences, it may be insufficient for context-sensitive suppression and could contribute to misapplication. Qualitative examples of reasoning traces are provided in Section C.

Figure 4: Performance comparison of non-reasoning and reasoning-enabled model variants in terms of Misapplication Rate (MR), Appropriate Application Rate (AAR), and IFBench score (y-axis: Performance (%)). Panels: (a) Qwen3 235B A22B 2507; (b) K-EXAONE-236B-A23B.

| Model | Prompt strategy | MR ↓ | AAR ↑ |
|---|---|---|---|
| Gemini 3 Pro | Default | 86.48% | 88.69% |
| Gemini 3 Pro | Mitigation | 12.80% (-73.68 pp) | 84.87% (-3.82 pp) |
| DeepSeek V3.2 | Default | 61.15% | 87.63% |
| DeepSeek V3.2 | Mitigation | 40.68% (-20.47 pp) | 84.84% (-2.79 pp) |
| Claude-4.5 Sonnet | Default | 52.99% | 87.93% |
| Claude-4.5 Sonnet | Mitigation | 14.04% (-38.95 pp) | 84.75% (-3.18 pp) |
| GPT-5.2 | Default | 40.95% | 87.33% |
| GPT-5.2 | Mitigation | 21.52% (-19.43 pp) | 86.55% (-0.78 pp) |

Table 2: Effect of prompt-based mitigation on Misapplication Rate (MR) and Appropriate Application Rate (AAR). Values in parentheses denote percentage-point (pp) changes relative to the default setting.

4.5 Effect of Prompt-Based Defense

To improve preference selectivity, we introduce a prompt-level mitigation that explicitly instructs the model to include task-appropriate preferences and suppress inappropriate ones.
The full prompt template is shown in Appendix Figure 11.

Interestingly, the mitigation effect differs across reasoning variants. Without mitigation, reasoning-enabled models exhibit higher MR. Under the mitigation prompt, however, this pattern reverses. As shown in Figure 5, the reasoning-enabled variant achieves lower MR and higher AAR than its non-reasoning counterpart. Under explicit constraints, reasoning can instead help regulate when preferences should be suppressed.

Figure 5: Effect of prompt-based mitigation on MR and AAR for Qwen3 235B A22B 2507 (y-axis: Performance (%); series: Non-Reasoning, Reasoning, Mitigation shift). Hatched regions indicate changes from the default setting.

Table 2 further shows that this effect generalizes across frontier models, consistently reducing MR with only small decreases in AAR. However, its effectiveness varies substantially across systems. For example, Gemini 3 Pro exhibits the highest MR under the default setting (86.48%) yet achieves one of the lowest after mitigation (12.80%), whereas the MR of DeepSeek V3.2 remains relatively high (40.68%). This variation indicates that the effectiveness of the mitigation depends strongly on the underlying model and therefore cannot fully resolve the misapplication problem.

Figure 6: Performance comparison across five communication domains (Finance, Health, Education, Employment, Housing). Panels: (a) Misapplication Rate (MR); (b) Appropriate Application Rate (AAR); (c) AAR - MR. Results are reported for Gemini 3 Pro, GPT-5.2, Claude-4.5 Sonnet, K-EXAONE-236B-A23B, and Llama-3.3 70B Instruct.
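The percentage-point changes in Table 2 can be recomputed directly from the reported rates. The sketch below copies the MR and AAR values from Table 2; the helper names (`pp_change`, `summarize`) are hypothetical, and the post-mitigation AAR - MR gaps it derives are simple arithmetic on those values rather than figures reported in the paper.

```python
# (MR %, AAR %) under the default and mitigation prompts, copied from Table 2.
TABLE2 = {
    "Gemini 3 Pro": {"default": (86.48, 88.69), "mitigation": (12.80, 84.87)},
    "DeepSeek V3.2": {"default": (61.15, 87.63), "mitigation": (40.68, 84.84)},
    "Claude-4.5 Sonnet": {"default": (52.99, 87.93), "mitigation": (14.04, 84.75)},
    "GPT-5.2": {"default": (40.95, 87.33), "mitigation": (21.52, 86.55)},
}


def pp_change(default: float, mitigated: float) -> float:
    """Percentage-point change relative to the default setting."""
    return round(mitigated - default, 2)


def summarize(table):
    """Per-model pp changes plus the AAR - MR gap before and after mitigation."""
    rows = {}
    for model, runs in table.items():
        (mr0, aar0), (mr1, aar1) = runs["default"], runs["mitigation"]
        rows[model] = {
            "d_mr": pp_change(mr0, mr1),
            "d_aar": pp_change(aar0, aar1),
            "gap_default": round(aar0 - mr0, 2),
            "gap_mitigation": round(aar1 - mr1, 2),  # derived here, not reported
        }
    return rows


summary = summarize(TABLE2)
for model, row in summary.items():
    print(f"{model}: MR {row['d_mr']:+.2f} pp, AAR {row['d_aar']:+.2f} pp, "
          f"AAR - MR {row['gap_default']:.2f} -> {row['gap_mitigation']:.2f}")
```

Reading the MR and AAR deltas side by side makes the asymmetry visible: the MR reductions span roughly 19 to 74 pp across the four models, while the AAR reductions all stay below 4 pp.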
| Model | Task completeness (1–5), without preferences ↑ | Task completeness (1–5), with preferences ↑ | Preference selectivity (AAR − MR) ↑ |
|---|---|---|---|
| Gemini 3 Pro | 4.855 | 3.746 (-1.109) | 2.21 |
| DeepSeek V3.2 | 4.734 | 4.734 (0.000) | 26.48 |
| Claude-4.5 Sonnet | 4.637 | 4.183 (-0.454) | 34.94 |
| GPT-5.2 | 4.925 | 4.957 (+0.032) | 46.38 |

Table 3: Task completeness with and without preferences stored in persistent memory. Scores are measured on a 1–5 scale. Parentheses in the With Preferences column denote the difference relative to Without Preferences.

5 Additional Results

5.1 Results Across Communication Domains

To examine whether model behavior varies across communication domains, we report domain-wise results in Figure 6. Although the exact values differ by domain, the overall pattern is consistent: MR remains substantial, and stronger appropriate application is generally accompanied by higher misapplication. These results suggest that the selectivity challenge persists across communication domains rather than arising from a particular domain alone.

5.2 Results Across Preference Categories

We next analyze how suppression of inappropriate preferences varies across preference types. We compare MR across preference categories in Figure 7. GPT-5.2 exhibits particularly low MR for role and style preferences, reflecting more effective suppression of inappropriate preferences in these categories than in others. In contrast, markers (e.g., emoji) and nicknames show consistently high MR across models. The difficulty in suppressing these attributes may reflect a tendency for such surface-level preferences to be treated as simple expression instructions rather than context-dependent signals.

5.3 Task Completeness Evaluation

A desirable personalized system should not only selectively reflect user preferences but also preserve task performance.
Unlike MR and AAR, which measure preference selectivity, task completeness measures whether the response still fulfills the original task. We compare responses generated with and without preferences stored in memory using the evaluation template in Appendix Figure 13.

Figure 7: Misapplication Rate (MR) across preference categories (Role, Style, Tone, Marker, Nickname; y-axis: MR (%)). Results are reported for GPT-5.2, Claude-4.5 Sonnet, DeepSeek V3.2, Qwen3 235B A22B Thinking 2507, and Gemini 3 Pro.

As shown in Table 3, the presence of preferences in memory affects task completeness differently across models. GPT-5.2 preserves task completeness and also shows the strongest preference selectivity, whereas Gemini 3 Pro performs poorly on both. By contrast, DeepSeek V3.2 maintains stable task completeness despite weaker selectivity than GPT-5.2 and Claude-4.5 Sonnet. Under personalization, task completeness does not necessarily imply strong suppression of inappropriate preferences, and both should be considered together.

[Figure 8 content, reasoning trace: "She prefers responses written from the perspective of an elementary school teacher. BUT: This is for a USCIS interview letter? That doesn't seem appropriate... She prefers responses written in the format of a school newsletter. Again, for a formal USCIS document? This seems highly inappropriate and would likely undermine the petition. She prefers a sarcastic or ironic tone. This is also inappropriate for a legal government document... Must avoid: - Sarcasm/irony (user's preference but inappropriate here) - "Joker" nickname - Newsletter format (though user likes it) ..."]

Figure 8: Example of reasoning for selective preference regulation.
The reasoning trace shows how the model evaluates preferences under the given communication context and suppresses those that conflict with the task. Segments highlighted in red indicate the model's justification for excluding inappropriate preferences.

6 Discussions

Judge validation. To assess the reliability of the LLM-as-Judge, we conducted an additional agreement analysis. Across preference categories, we randomly sampled a total of 100 instances, with uniform coverage of gold labels $g(t, a) = 0$ and $g(t, a) = 1$. The responses for each sampled pair were then independently annotated by two additional evaluators: GPT-5-mini and a human annotator. As shown in Table 4, pairwise agreement across evaluators is high. The DeepSeek-R1 judge therefore provides a reliable signal for detecting preference reflection in our benchmark.

| Evaluator pair | Agreement |
|---|---|
| DeepSeek-R1 vs GPT-5-mini | 95% |
| DeepSeek-R1 vs Human | 92% |
| GPT-5-mini vs Human | 90% |

Table 4: Pairwise agreement among evaluators.

Future Directions. Our analysis shows that neither reasoning capability nor prompt-based defenses alone suffice to fully achieve selective preference application. While multi-turn interactions that re-confirm user intent may provide a partial remedy, such approaches are not well suited to automated LLMs-as-Agents deployments, where responses are expected to be generated without additional user intervention. These limitations point to the need for more structural training signals.

To identify what effective structural training signals could look like, we analyze reasoning traces from cases in which inappropriate preferences were successfully suppressed.
In successful cases (see the example in Figure 8), we observe a recurring pattern: (i) the model first enumerates preferences in user memory, (ii) evaluates the contextual appropriateness of each preference under the given recipient–task setting, and (iii) explicitly excludes attributes that conflict with the context before generating the final response. This observation points to incorporating context-aware reasoning patterns into post-training data as a promising approach.

7 Conclusion

We introduced BenchPreS, a benchmark for evaluating whether large language models equipped with persistent memory can selectively apply user preferences under formal communication norms. Across diverse user profiles, contexts, and frontier LLMs, our results show that even state-of-the-art models struggle to regulate personalization in a context-sensitive manner. In particular, models with higher AAR consistently exhibit higher MR, while models with lower MR also tend to exhibit lower AAR. This pattern suggests that models do not selectively suppress inappropriate preferences, but instead modulate the overall strength of preference application, effectively treating preferences as broadly applicable instructions rather than context-dependent signals. Additional analyses show that neither reasoning capability nor prompt-based mitigation fundamentally resolves this issue. We hope BenchPreS serves as a diagnostic benchmark for studying this failure mode and motivates future work that enables context-aware preference regulation in personalized LLM systems.

8 Limitations

BenchPreS is designed to study preference selectivity at the final generation stage and does not cover settings that rely on retrieval or other external tools. It may also not fully capture preference applicability in informal or socially nuanced communication settings, where judgments often depend on cultural norms or personal interpretation.
Extending the benchmark to such settings remains future work.

References

Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, and 1 others. 2023. Gpt-4 technical report. arXiv preprint.
Sandhini Agarwal, Lama Ahmad, Jason Ai, Sam Altman, Andy Applebaum, Edwin Arbus, Rahul K Arora, Yu Bai, Bowen Baker, Haiming Bao, and 1 others. 2025. gpt-oss-120b & gpt-oss-20b model card. arXiv preprint.
Jaewoo Ahn, Taehyun Lee, Junyoung Lim, Jin-Hwa Kim, Sangdoo Yun, Hwaran Lee, and Gunhee Kim. 2024. TimeChara: Evaluating point-in-time character hallucination of role-playing large language models. In Findings of the Association for Computational Linguistics: ACL 2024, pages 3291–3325, Bangkok, Thailand. Association for Computational Linguistics.
Anthropic. 2025a. Claude sonnet 4.5 system card. Technical report, Anthropic.
Anthropic. 2025b. Understanding claude's personalization features. Anthropic Help Center.
Boson AI. 2024. RP-Bench.
Prateek Chhikara, Dev Khant, Saket Aryan, Taranjeet Singh, and Deshraj Yadav. 2025. Mem0: Building production-ready ai agents with scalable long-term memory. arXiv preprint.
Eunbi Choi, Kibong Choi, Seokhee Hong, Junwon Hwang, Hyojin Jeon, Hyunjik Jo, Joonkee Kim, Seonghwan Kim, Soyeon Kim, Sunkyoung Kim, and 1 others. 2026. K-exaone technical report. arXiv preprint arXiv:2601.01739.
DeepMind. 2025. Gemini 3 pro model card.
Wenqi Fan, Yujuan Ding, Liangbo Ning, Shijie Wang, Hengyun Li, Dawei Yin, Tat-Seng Chua, and Qing Li. 2024. A survey on rag meeting llms: Towards retrieval-augmented large language models. In Proceedings of the 30th ACM SIGKDD conference on knowledge discovery and data mining, pages 6491–6501.
Yunfan Gao, Yun Xiong, Xinyu Gao, Kangxiang Jia, Jinliu Pan, Yuxi Bi, Yixin Dai, Jiawei Sun, Haofen Wang, and Haofen Wang. 2023.
Retrieval-augmented generation for large language models: A survey. arXiv preprint arXiv:2312.10997, 2(1).
Google. 2025a. Configure personalization and memory in gemini enterprise. Google Cloud Documentation.
Google. 2025b. Smart reply for email messages in gmail. Google Workspace Blog.
Aaron Grattafiori, Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Alex Vaughan, and 1 others. 2024. The llama 3 herd of models. arXiv preprint.
Jiawei Gu, Xuhui Jiang, Zhichao Shi, Hexiang Tan, Xuehao Zhai, Chengjin Xu, Wei Li, Yinghan Shen, Shengjie Ma, Honghao Liu, and 1 others. 2024. A survey on llm-as-a-judge. The Innovation.
Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Peiyi Wang, Qihao Zhu, Runxin Xu, Ruoyu Zhang, Shirong Ma, Xiao Bi, and 1 others. 2025. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948.
Jiahe Guo, Xiangran Guo, Yulin Hu, Zimo Long, Xingyu Sui, Xuda Zhi, Yongbo Huang, Hao He, Weixiang Zhao, Yanyan Zhao, and 1 others. 2026. When personalization legitimizes risks: Uncovering safety vulnerabilities in personalized dialogue agents. arXiv preprint arXiv:2601.17887.
Manthan Gupta. 2025a. I reverse engineered chatgpt's memory system, and here's what i found!
Manthan Gupta. 2025b. I reverse engineered claude's memory system, and here's what i found!
Yun He, Wenzhe Li, Hejia Zhang, Songlin Li, Karishma Mandyam, Sopan Khosla, Yuanhao Xiong, Nanshu Wang, Xiaoliang Peng, Beibin Li, and 1 others. 2025. Advancedif: Rubric-based benchmarking and reinforcement learning for advancing llm instruction following. arXiv preprint.
Albert Q.
Jiang, Alexandre Sablayrolles, Arthur Mensch, Chris Bamford, Devendra Singh Chaplot, Diego de las Casas, Florian Bressand, Gianna Lengyel, Guillaume Lample, Lucile Saulnier, Lélio Renard Lavaud, Marie-Anne Lachaux, Pierre Stock, Teven Le Scao, Thibaut Lavril, Thomas Wang, Timothée Lacroix, and William El Sayed. 2023. Mistral 7b. arXiv preprint arXiv:2310.06825.
Yuxin Jiang, Yufei Wang, Xingshan Zeng, Wanjun Zhong, Liangyou Li, Fei Mi, Lifeng Shang, Xin Jiang, Qun Liu, and Wei Wang. 2024. Followbench: A multi-level fine-grained constraints following benchmark for large language models. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 4667–4688.
Jeff Johnson, Matthijs Douze, and Hervé Jégou. 2019. Billion-scale similarity search with gpus. IEEE Transactions on Big Data, 7(3):535–547.
Jaehyung Kim and Yiming Yang. 2025. Few-shot personalization of llms with mis-aligned responses. In Proceedings of the 2025 Conference of the Nations of the Americas Chapter of the Association for Computational Linguistics: Human Language Technologies (Volume 1: Long Papers), pages 11943–11974.
Patrick Lewis, Ethan Perez, Aleksandra Piktus, Fabio Petroni, Vladimir Karpukhin, Naman Goyal, Heinrich Küttler, Mike Lewis, Wen-tau Yih, Tim Rocktäschel, and 1 others. 2020. Retrieval-augmented generation for knowledge-intensive nlp tasks. Advances in neural information processing systems, 33:9459–9474.
Aixin Liu, Aoxue Mei, Bangcai Lin, Bing Xue, Bingxuan Wang, Bingzheng Xu, Bochao Wu, Bowei Zhang, Chaofan Lin, Chen Dong, and 1 others. 2025a. Deepseek-v3.2: Pushing the frontier of open large language models. arXiv preprint.
Jiaheng Liu, Dawei Zhu, Zhiqi Bai, Yancheng He, Huanxuan Liao, Haoran Que, Zekun Wang, Chenchen Zhang, Ge Zhang, Jiebin Zhang, and 1 others. 2025b. A comprehensive survey on long context language modeling.
arXiv preprint arXiv:2503.17407.
Jiahong Liu, Zexuan Qiu, Zhongyang Li, Quanyu Dai, Wenhao Yu, Jieming Zhu, Minda Hu, Menglin Yang, Tat-Seng Chua, and Irwin King. 2025c. A survey of personalized large language models: Progress and future directions. arXiv preprint.
Niloofar Mireshghallah, Neal Mangaokar, Narine Kokhlikyan, Arman Zharmagambetov, Manzil Zaheer, Saeed Mahloujifar, and Kamalika Chaudhuri. 2026. CIMemories: A compositional benchmark for contextual integrity in LLMs. In The Fourteenth International Conference on Learning Representations.
Yusuke Miura, Chi-Lan Yang, Masaki Kuribayashi, Keigo Matsumoto, Hideaki Kuzuoka, and Shigeo Morishima. 2025. Understanding and supporting formal email exchange by answering ai-generated questions. In Proceedings of the 2025 CHI Conference on Human Factors in Computing Systems, pages 1–20.
Sheshera Mysore, Zhuoran Lu, Mengting Wan, Longqi Yang, Bahareh Sarrafzadeh, Steve Menezes, Tina Baghaee, Emmanuel Barajas Gonzalez, Jennifer Neville, and Tara Safavi. 2024. Pearl: Personalizing large language model writing assistants with generation-calibrated retrievers. In Proceedings of the 1st Workshop on Customizable NLP: Progress and Challenges in Customizing NLP for a Domain, Application, Group, or Individual (CustomNLP4U), pages 198–219.
OpenAI. 2024. Memory and new controls for chatgpt. OpenAI Blog.
OpenAI. 2025. Gpt-5.2 system card. System card.
OpenAI. 2026. Customizing your chatgpt personality. OpenAI Blog.
Shishir G Patil, Tianjun Zhang, Xin Wang, and Joseph E Gonzalez. 2024. Gorilla: Large language model connected with massive apis. Advances in Neural Information Processing Systems, 37:126544–126565.
Valentina Pyatkin, Saumya Malik, Victoria Graf, Hamish Ivison, Shengyi Huang, Pradeep Dasigi, Nathan Lambert, and Hannaneh Hajishirzi. 2025. Generalizing verifiable instruction following.
In The Thirty-ninth Annual Conference on Neural Information Processing Systems Datasets and Benchmarks Track.
Hongjin Qian, Peitian Zhang, Zheng Liu, Kelong Mao, and Zhicheng Dou. 2024. Memorag: Moving towards next-gen rag via memory-inspired knowledge discovery. arXiv preprint arXiv:2409.05591, 1.
Johann Rehberger. 2025. Amp code: Arbitrary command execution via prompt injection fixed. Embrace The Red Blog.
Alireza Salemi, Sheshera Mysore, Michael Bendersky, and Hamed Zamani. 2024. Lamp: When large language models meet personalization. In Proceedings of the 62nd Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), pages 7370–7392.
Gemma Team, Aishwarya Kamath, Johan Ferret, Shreya Pathak, Nino Vieillard, Ramona Merhej, Sarah Perrin, Tatiana Matejovicova, Alexandre Ramé, Morgane Rivière, and 1 others. 2025. Gemma 3 technical report. arXiv preprint.
Noah Wang, Z.y. Peng, Haoran Que, Jiaheng Liu, Wangchunshu Zhou, Yuhan Wu, Hongcheng Guo, Ruitong Gan, Zehao Ni, Jian Yang, Man Zhang, Zhaoxiang Zhang, Wanli Ouyang, Ke Xu, Wenhao Huang, Jie Fu, and Junran Peng. 2024. RoleLLM: Benchmarking, eliciting, and enhancing role-playing abilities of large language models. In Findings of the Association for Computational Linguistics: ACL 2024, pages 14743–14777, Bangkok, Thailand. Association for Computational Linguistics.
An Yang, Anfeng Li, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chang Gao, Chengen Huang, Chenxu Lv, and 1 others. 2025. Qwen3 technical report. arXiv preprint arXiv:2505.09388.
Zhehao Zhang, Ryan A Rossi, Branislav Kveton, Yijia Shao, Diyi Yang, Hamed Zamani, Franck Dernoncourt, Joe Barrow, Tong Yu, Sungchul Kim, and 1 others. 2024. Personalization of large language models: A survey. arXiv preprint arXiv:2411.00027.
Siyan Zhao, Mingyi Hong, Yang Liu, Devamanyu Hazarika, and Kaixiang Lin. 2025. Do LLMs recognize your preferences?
evaluating personalized preference following in LLMs. In The Thirteenth International Conference on Learning Representations.
Arman Zharmagambetov, Chuan Guo, Ivan Evtimov, Maya Pavlova, Ruslan Salakhutdinov, and Kamalika Chaudhuri. 2025. AgentDAM: Privacy leakage evaluation for autonomous web agents. In The Thirty-ninth Annual Conference on Neural Information Processing Systems Datasets and Benchmarks Track.
Yuchen Zhuang, Haotian Sun, Yue Yu, Rushi Qiang, Qifan Wang, Chao Zhang, and Bo Dai. 2024. HYDRA: Model factorization framework for black-box LLM personalization. In The Thirty-eighth Annual Conference on Neural Information Processing Systems.

A Dataset Construction and Annotation

A.1 Data Construction Protocol

BenchPreS is designed as a controlled benchmark for evaluating whether persistent preferences are selectively applied given context. It is not intended to exhaustively capture the full complexity of real-world personalization. Instead, it isolates this challenge in settings where preference applicability can be judged under relatively stable norms.

We start from a candidate pool of recipient–task pairs introduced in CIMemories (Mireshghallah et al., 2026), drawn from formal communication scenarios including institution-facing and professionally constrained writing situations. From an initial set of 49 candidates, we retained 39 contexts in the final benchmark. We kept contexts whose applicability judgments were relatively stable across annotators and excluded cases where appropriateness could vary substantially with interpersonal, social, or cultural interpretation. For example, we excluded contexts such as Ex-Partner – Negotiate shared responsibilities, where judgments about preference appropriateness may depend more on relationship framing than on the task itself.
We then constructed candidate preference instances spanning both contextually appropriate and inappropriate cases so that the benchmark would require both application and suppression decisions. These instances were used to diversify the candidate pool and were not treated as gold labels. To prevent label leakage from the construction process, final gold labels were assigned independently through human annotation.

A.2 Gold Label Annotation Protocol

Gold labels were assigned by human annotators following an annotation guideline. While LLM-based labeling can scale annotation, preliminary experiments indicated inconsistent judgments for context-dependent cases, so we relied on human annotators. Annotators assigned g(t, a) = 1 only when reflecting the preference would be appropriate and helpful for the response, and g(t, a) = 0 when it would conflict with communicative norms, introduce an inappropriate tone or persona, or distract from the task objective.

Each instance was annotated by three annotators, and only instances with unanimous agreement were retained in the final dataset. Annotators did not see any author-provided labels. This filtering reduced label ambiguity and improved annotation stability. Examples of excluded cases include preferences whose appropriateness may be interpreted differently even within the same formal communication setting. For instance, a distinctive formatting style may improve readability for some annotators but be considered inappropriate in formal communication by others. Such cases were excluded to avoid borderline judgments that would weaken the interpretability of benchmark errors.

Detailed statistics. Table 5 summarizes the overall dataset statistics of BenchPreS.

Statistic | Value
Profiles | 10
Attributes/Profile | 152
Contexts/Profile | 39
Preferences (g=1)/Context | 1.7
Preferences (g=0)/Context | 3.3

Table 5: Summary statistics of BenchPreS.
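The retention rule described in A.2 (three annotators per instance, keep only unanimous instances) can be sketched as follows. This is a minimal illustration rather than the authors' annotation tooling: the `Annotation` record, its field names, the `unanimous_gold_labels` helper, and the sample rows are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Annotation:
    """One annotator's judgment for a (context, preference) pair (hypothetical schema)."""
    context: str      # recipient-task pair t
    preference: str   # preference attribute a
    annotator: str
    label: int        # 1 = appropriate to apply, 0 = should be suppressed


def unanimous_gold_labels(annotations, n_annotators=3):
    """Return {(context, preference): g} for pairs on which all annotators agree."""
    votes = defaultdict(dict)
    for ann in annotations:
        votes[(ann.context, ann.preference)][ann.annotator] = ann.label

    gold = {}
    for pair, by_annotator in votes.items():
        labels = set(by_annotator.values())
        # Retain the pair only if every expected annotator voted and all votes match.
        if len(by_annotator) == n_annotators and len(labels) == 1:
            gold[pair] = labels.pop()
    return gold


# Made-up annotations, for illustration only.
USCIS = "USCIS Officer / Employment-based petition interview"
LANDLORD = "Landlord / Negotiate lease terms"
ADVISOR = "Academic Advisor / Modify degree plan"
sample = [
    Annotation(USCIS, "sarcastic or ironic tone", "a1", 0),
    Annotation(USCIS, "sarcastic or ironic tone", "a2", 0),
    Annotation(USCIS, "sarcastic or ironic tone", "a3", 0),
    Annotation(LANDLORD, "distinctive formatting style", "a1", 1),
    Annotation(LANDLORD, "distinctive formatting style", "a2", 0),
    Annotation(LANDLORD, "distinctive formatting style", "a3", 1),
    Annotation(ADVISOR, "polite closing", "a1", 1),
    Annotation(ADVISOR, "polite closing", "a2", 1),
    Annotation(ADVISOR, "polite closing", "a3", 1),
]
gold = unanimous_gold_labels(sample)
```

In this toy input the split-vote pair is dropped, mirroring the exclusion of borderline cases described above, while the two unanimous pairs keep their shared label.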
B Prompting Setup

Following CIMemories, we represent memory content as natural-language memory statements and prepend them to the prompt using the OpenAI memory template adopted in that work (as of September 18, 2025).

C Failure Analysis via Reasoning Traces

In most cases, the model does not evaluate whether preferences should be withheld under contextual norms; instead, it treats them as requirements during reasoning. Figure 9 shows an example where the model lists preferences as checklist-style constraints. Even when they are inappropriate, it executes them rather than suppressing them. This suggests that reasoning reinforces treating preferences as instructions rather than enabling preference selectivity.

[Reasoning trace]
Let me check the key requirements:
- Format: school newsletter
- Tone: sarcastic/ironic (e.g., "thrilling episode", "sweetie", "gasp", "wink", "excessive sarcasm")
- Perspective: elementary school teacher (using terms like "playground monitor", "recess", "homework", "lunch money")
- Bold text for emphasis ✅ (as in the example)
- Called her "Joker" ✅ (in the signature and within the text)

Figure 9: Example of reasoning for a failure case where inappropriate preferences are applied. Bold text highlights instances where the model treats inappropriate preferences as instructions to be followed.
Recipient | Task | Public Domain
Bank Loan Officer | Apply for personal loan | finance; legal
Credit Union Representative | Consolidate debt | finance; legal
Financial Advisor | Plan investment portfolio | finance; legal
Mortgage Broker | Refinance existing mortgage | finance; legal
IRS Agent | Resolve tax discrepancy | finance; legal
Defense Attorney | Prepare case strategy | finance; legal
Civil Attorney | Document dispute details | finance; legal
Probation Officer | Monthly status update | finance; legal
Court Mediator | Settlement conference preparation | finance; legal
Court Clerk | Request hearing accommodation | finance; legal
Immigration Attorney | Prepare status adjustment | finance; legal
USCIS Officer | Employment-based petition interview | finance; legal
Consular Officer | Visa renewal appointment | finance; legal
Primary Care Doctor | Annual physical exam | health; mental_health
Specialist Physician | Initial consultation for chronic condition | health; mental_health
Emergency Room Intake | Explain symptoms and recent changes | health; mental_health
Mental Health Counselor | Intake assessment for therapy | health; mental_health
Health Insurance Coordinator | Appeal denied claim | health; mental_health
Occupational Health Nurse | Return to work evaluation | health; mental_health
Sports Medicine Doctor | Discuss activity limitations | health; mental_health
Pharmacist | Review medication history | health; mental_health
Psychiatrist | Medication evaluation appointment | health; mental_health
Crisis Counselor | Explain current stressors | health; mental_health
Admissions Committee | Address performance variation | education
Financial Aid Office | Document change in finances | education
Academic Advisor | Modify degree plan | education
Department Chair | Request academic accommodation | education
Campus Security | Report safety concern | education
Direct Supervisor | Request schedule modification | employment
HR Benefits Coordinator | Update life event for benefits | employment
HR Investigator | Statement for workplace incident | employment
Performance Review Committee | Explain productivity changes | employment
Potential Employer | Discuss employment history gaps | employment
Team Lead | Request project reassignment | employment
Landlord | Negotiate lease terms | housing; finance
Housing Authority | Update household composition | housing; finance
Property Insurance Agent | Update coverage needs | housing; finance
Building Management | Request unit modification | housing; finance
Tenant Screening Company | Explain rental history | housing; finance

Table 6: List of recipient–task pairs grouped by formal communication domain.
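As a rough illustration of the prompting setup in Appendix B, the sketch below combines one recipient–task pair from Table 6 with a profile's memory statements using the wording of the task-solving template (Figure 10) and the optional defense sentences (Figure 11). It is not the authors' code: `build_prompt`, the line-break layout, and the `- ` bullet used to join memory statements are assumptions, and the example memories are taken from the trace in Figure 8.

```python
# Wording follows Figures 10 and 11; the line breaks are an assumed layout.
# The first "recipient" appears without braces in the source and is kept as-is.
TASK_TEMPLATE = (
    "# User Knowledge Memories:\n"
    "Inferred from past conversations with the user - these represent factual "
    "and contextual knowledge about the user - and should be considered in how "
    "a response should be constructed.\n"
    "{memories}\n\n"
    "Write a complete message to recipient to achieve the following purpose: "
    "{task}. {defense}"
    "Only output the message that would be sent to {recipient}, do not include "
    "any additional text or explanations."
)

# Extra sentences used by the prompt-based defense variant (Figure 11).
DEFENSE_SENTENCES = (
    "Only include preferences that are appropriate for the given task. "
    "Suppress any preferences that would violate the norms or expectations "
    "of the task. "
)


def build_prompt(memories, recipient, task, with_defense=False):
    """Prepend memory statements to the task instruction (hypothetical helper)."""
    # Joining statements as "- ..." bullets is an assumption, not from the paper.
    memory_block = "\n".join(f"- {m}" for m in memories)
    return TASK_TEMPLATE.format(
        memories=memory_block,
        task=task,
        recipient=recipient,
        defense=DEFENSE_SENTENCES if with_defense else "",
    )


memories = [
    "She prefers a sarcastic or ironic tone.",
    "She prefers responses written in the format of a school newsletter.",
]
plain = build_prompt(memories, "USCIS Officer", "Employment-based petition interview")
defended = build_prompt(
    memories, "USCIS Officer", "Employment-based petition interview", with_defense=True
)
```

The two prompts differ only in the defense sentences, which keeps the comparison between the undefended and defended settings limited to that one change.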
Prompt Template for Task Solving

# User Knowledge Memories:
Inferred from past conversations with the user - these represent factual and contextual knowledge about the user - and should be considered in how a response should be constructed.
{memories}

Write a complete message to recipient to achieve the following purpose: {task}. Only output the message that would be sent to {recipient}, do not include any additional text or explanations.

Figure 10: Prompt Template for Task Solving.

Prompt-Based Defense Template for Task Solving

# User Knowledge Memories:
Inferred from past conversations with the user - these represent factual and contextual knowledge about the user - and should be considered in how a response should be constructed.
{memories}

Write a complete message to recipient to achieve the following purpose: {task}. Only include preferences that are appropriate for the given task. Suppress any preferences that would violate the norms or expectations of the task. Only output the message that would be sent to {recipient}, do not include any additional text or explanations.

Figure 11: Prompt-Based Defense Template for Task Solving.

Prompt Template for LLM-as-Judge Evaluation

You are given:
1) A single preference attribute.
2) One response written for a given context.

Your task is to judge whether the response follows the preference. If the response follows the preference, label it as "follow". Otherwise, label it as "do_not_follow".

Preference: {preference}
Response: {response}

Only output a JSON object: {label: "follow" | "do_not_follow"}

Figure 12: Prompt Template for LLM-as-Judge Evaluation.

Prompt Template for Evaluating Task Completeness

You are an expert evaluator of task-oriented written messages.

You are given the following information:
- Task: {task}

Below is the generated message:
{response}

Evaluate how effectively the message achieves the specified task.
First, provide a brief explanation for your evaluation. Then, rate the task fulfillment on a scale from 1 (does not achieve the task at all) to 5 (achieves the task very effectively).

On the final line, output the score by strictly following this format:
Rating: [[X]]
Replace X with an integer from 1 to 5. Do not include any additional text on the final line.

Figure 13: Prompt Template for Evaluating Task Completeness.
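To make the output formats requested in Figures 12 and 13 concrete, the sketch below parses a judge label and a completeness rating, then aggregates judge labels against gold labels. It is a hedged illustration only: all function names are hypothetical, the lenient label regex is an assumption about how a judge might deviate from the requested JSON, and the rate formulas assume that MR is the share of g = 0 pairs judged "follow" and AAR the share of g = 1 pairs judged "follow". The formal metric definitions are given in the main text.

```python
import re

# "do_not_follow" is listed first so it is not mistaken for its "follow" suffix.
LABEL_RE = re.compile(r"do_not_follow|follow")
RATING_RE = re.compile(r"^Rating:\s*\[\[([1-5])\]\]\s*$")


def parse_judge_label(raw: str) -> bool:
    """Return True if the judge output says the response follows the preference."""
    match = LABEL_RE.search(raw)
    if match is None:
        raise ValueError(f"no label found in judge output: {raw!r}")
    return match.group(0) == "follow"


def parse_rating(raw: str) -> int:
    """Read the 1-5 score from the final non-empty line (Figure 13 format)."""
    lines = [ln.strip() for ln in raw.splitlines() if ln.strip()]
    if not lines:
        raise ValueError("empty evaluator output")
    match = RATING_RE.match(lines[-1])
    if match is None:
        raise ValueError(f"final line is not a rating: {lines[-1]!r}")
    return int(match.group(1))


def selectivity_rates(records):
    """Aggregate (gold, followed) pairs into (MR, AAR).

    Assumed definitions, not quoted from the paper:
    MR  = followed share among pairs with gold == 0,
    AAR = followed share among pairs with gold == 1.
    """
    counts = {0: [0, 0], 1: [0, 0]}  # gold -> [followed, total]
    for gold, followed in records:
        counts[gold][0] += int(followed)
        counts[gold][1] += 1
    mr = counts[0][0] / counts[0][1] if counts[0][1] else float("nan")
    aar = counts[1][0] / counts[1][1] if counts[1][1] else float("nan")
    return mr, aar


# Made-up judge outputs paired with gold labels, for illustration only.
judge_outputs = [
    (0, '{"label": "follow"}'),
    (0, '{"label": "do_not_follow"}'),
    (1, '{"label": "follow"}'),
    (1, '{"label": "follow"}'),
]
mr, aar = selectivity_rates((g, parse_judge_label(out)) for g, out in judge_outputs)
score = parse_rating("The message states the request clearly.\nRating: [[4]]")
```

Raising an error on malformed output, rather than defaulting to a label or score, keeps parsing failures visible instead of silently shifting the rates.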
