ExpressMind: A Multimodal Pretrained Large Language Model for Expressway Operation

The current expressway operation relies on rule-based and isolated models, which limits the ability to jointly analyze knowledge across different systems. Meanwhile, Large Language Models (LLMs) are increasingly applied in intelligent transportation,…

Authors: Zihe Wang, Yihuan Wang, Haiyang Yu, Zhiyong Cui

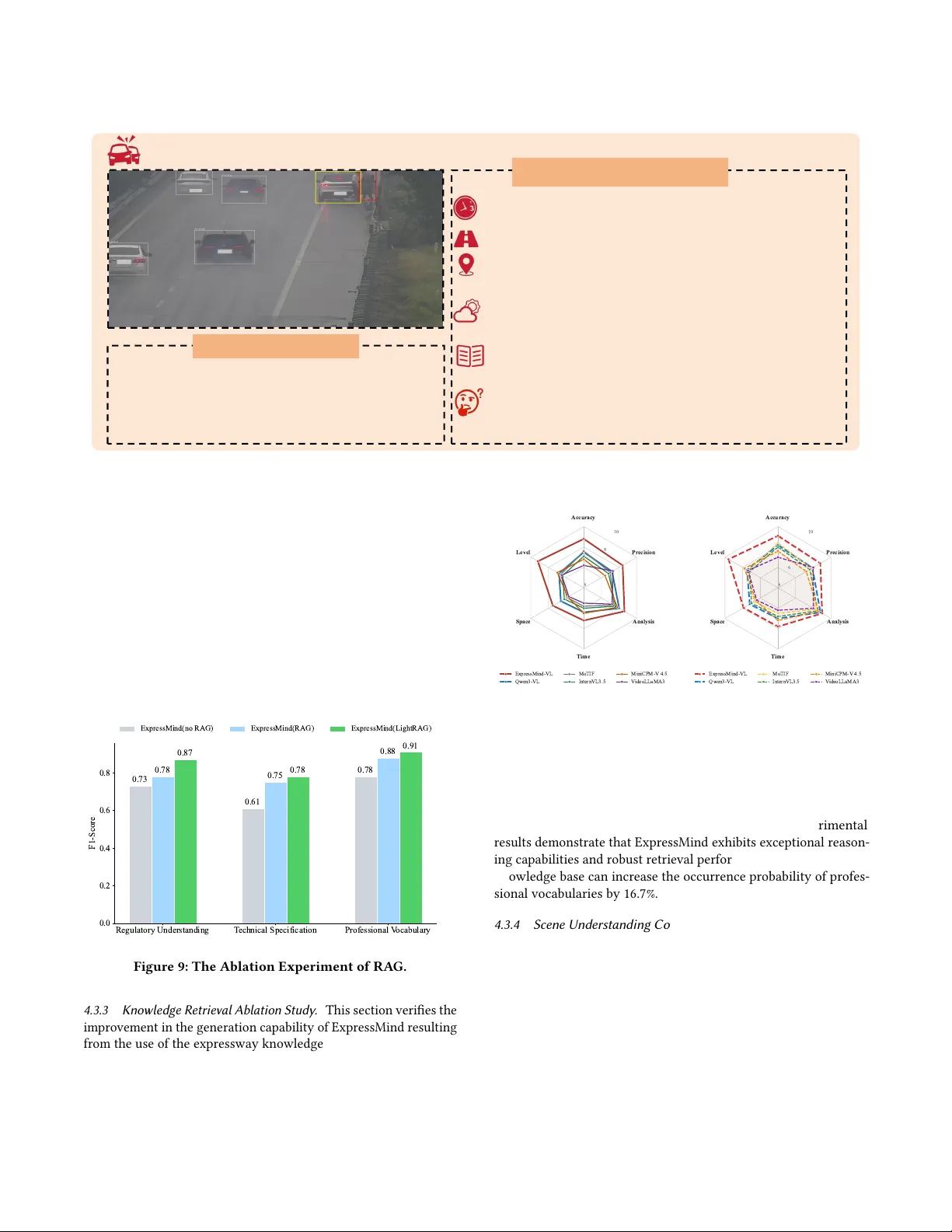

Zihe Wang, Beihang University, Beijing, China, by2313310@buaa.edu.cn
Yihuan Wang, Beihang University, Beijing, China, yhuanwang@buaa.edu.cn
Haiyang Yu, Beihang University, Beijing, China, hyyu@buaa.edu.cn
Zhiyong Cui∗, Beihang University, Beijing, China, zhiyongc@buaa.edu.cn
Xiaojian Liao, Beihang University, Beijing, China, liaoxj@buaa.edu.cn
Chengcheng Wang, Shandong Hi-speed Group Co., Ltd, Jinan, China, wangchengcheng@sdhsg.com
Yonglin Tian, Institute of Automation, Chinese Academy of Sciences, Beijing, China, tyldyx@mail.ustc.edu.cn
Yongxin Tong, Beihang University, Beijing, China, yxtong@buaa.edu.cn

Abstract

The current expressway operation relies on rule-based and isolated models, which limits the ability to jointly analyze knowledge across different systems. Meanwhile, Large Language Models (LLMs) are increasingly applied in intelligent transportation, advancing traffic models from algorithmic to cognitive intelligence. However, general LLMs are unable to effectively understand the regulations and causal relationships of events in unconventional scenarios in the expressway field. Therefore, this paper constructs a pre-trained multimodal large language model (MLLM) for expressways, ExpressMind, which serves as the cognitive core for intelligent expressway operations. This paper constructs the industry's first full-stack expressway dataset, encompassing traffic knowledge texts, emergency reasoning chains, and annotated video events to overcome data scarcity. This paper proposes a dual-layer LLM pre-training paradigm based on self-supervised training and unsupervised learning. Additionally, this study introduces a Graph-Augmented RAG framework to dynamically index the expressway knowledge base.
To enhance reasoning for expressway incident response strategies, we develop an RL-aligned Chain-of-Thought (RL-CoT) mechanism that enforces consistency between model reasoning and expert problem-solving heuristics for incident handling. Finally, ExpressMind integrates a cross-modal encoder to align the dynamic feature sequences under the visual and textual channels, enabling it to understand traffic scenes in both video and image modalities. Extensive experiments on our newly released multi-modal expressway benchmark demonstrate that ExpressMind comprehensively outperforms existing baselines in event detection, safety response generation, and complex traffic analysis. The code and data are available at: https://wanderhee.github.io/ExpressMind/.

∗ Corresponding author

Permission to make digital or hard copies of all or part of this work for personal or classroom use is granted without fee provided that copies are not made or distributed for profit or commercial advantage and that copies bear this notice and the full citation on the first page. Copyrights for components of this work owned by others than the author(s) must be honored. Abstracting with credit is permitted. To copy otherwise, or republish, to post on servers or to redistribute to lists, requires prior specific permission and/or a fee. Request permissions from permissions@acm.org.
Conference acronym ’XX, Woodstock, NY
© 2026 Copyright held by the owner/author(s). Publication rights licensed to ACM.
ACM ISBN 978-1-4503-XXXX-X/2018/06
https://doi.org/XXXXXXX.XXXXXXX

CCS Concepts
• Computing methodologies → Artificial intelligence.

Keywords
Large Language Models, Intelligent Expressway Operations, Pre-training Paradigm, Chain-of-Thought, Multimodal Understanding

ACM Reference Format:
Zihe Wang, Yihuan Wang, Haiyang Yu, Zhiyong Cui, Xiaojian Liao, Chengcheng Wang, Yonglin Tian, and Yongxin Tong. 2026. ExpressMind: A Multimodal Pretrained Large Language Model for Expressway Operation.
In Proceedings of Conference acronym ’XX. ACM, New York, NY, USA, 13 pages. https://doi.org/XXXXXXX.XXXXXXX

1 Introduction

With the continuous advancement of intelligent transportation systems (ITS), expressway operation is evolving from the reactive and rule-based paradigm towards intelligent agents endowed with deep cognitive reasoning capabilities. Breakthroughs in artificial intelligence [36], particularly the emergence of Large Language Models (LLMs) with exceptional computational and reasoning abilities [38], are profoundly shaping the intelligent transformation across ITS industries. However, the expressway sector still lacks a domain-specific LLM that can adapt deeply to complex expressway operational needs. At the current stage, advancing the core capabilities of foundation models, such as expressway knowledge integration, multimodal scene understanding, and autonomous incident reasoning, remains a critical bottleneck that calls for a fundamental methodological shift.

Figure 1: Overview of ExpressMind. (Panels: A: Dataset, built from text data, video data, and expert annotation; B: Benchmark; C: Model; D: Knowledge Base; E: Applications, covering Video Understanding, Expressway Knowledge QA, and Incident Strategy Report.)

Most open-source LLMs [20] lack a deep understanding of specialized expressway knowledge that is not publicly accessible, such as technical standards and regulations. Dynamic information and professional terminology cannot be promptly fed into LLMs, so their reasoning and decision-making processes struggle to meet safety and efficiency requirements. Furthermore, existing methods exhibit shortcomings in key visual feature extraction and traffic-related reasoning within multimodal scenarios.
Therefore, current approaches fail to meet the dynamic and precise operational requirements of expressways, and the field lacks an intelligent central hub capable of multi-task collaborative cognition and deep industry understanding.

However, constructing an expressway-specific multimodal large model to serve as such an intelligent central hub faces considerable difficulties and unique challenges. The first hurdle is the highly heterogeneous and complex multimodal data in this field. Unstructured data such as real-time monitoring videos coexist with semi-structured data such as incident records, which makes effective alignment and fusion between modalities extremely difficult. Additionally, the expressway domain has strict requirements on safety and accuracy. The desired foundation model needs to accurately grasp professional knowledge such as traffic engineering principles and emergency disposal specifications, which poses great challenges for the in-depth integration of domain knowledge and the design of the multimodal architecture. More importantly, the scarcity of high-quality labeled multimodal data in the expressway field, coupled with privacy and security constraints, further increases the difficulty of model training and optimization and is a key obstacle to building a high-performance domain-specific multimodal large model.

To address these challenges, this paper introduces ExpressMind, a domain-specific multimodal LLM for expressway operation. We construct the first full-stack expressway dataset and propose a two-stage pre-training paradigm for the internalization of expressway-domain knowledge. This study also develops a Reinforcement Learning (RL)-based Chain-of-Thought (CoT) alignment mechanism to strengthen domain reasoning. Furthermore, a visual-enhanced cross-modal encoder is incorporated and a graph-based retrieval-augmented generation (RAG) framework is proposed to enhance the extraction of key traffic scene characteristics and dynamic knowledge.
Together, these modules form the foundation of the multimodal pretrained LLM, which processes multi-source expressway data and provides efficient decision support for expressway operation. The overview of ExpressMind is illustrated in Figure 1, and its five core contributions are summarized as follows:

• Full-stack Expressway Dataset: This study constructs the industry's first full-stack expressway dataset spanning text cognition, logical reasoning, and visual perception, including three specialized subsets: traffic knowledge texts, emergency response reasoning, and event video scene understanding.
• RL-aligned CoT Reasoning: We design an RL-based expressway strategy alignment method for LLM training, which enhances the model's logical reasoning and self-correction capabilities.
• Graph-Augmented Retrieval: A graph RAG-based dynamic knowledge base is established for critical expressway information retrieval and indexing.
• Multimodal Alignment Mechanism: A Visual-Prior Alignment mechanism is designed that enforces alignment and reweighting of visual tokens to enhance the understanding of visual features.
• Multi-modal Benchmark: A multi-modal benchmark for evaluating LLMs within the expressway domain is released, encompassing four evaluation subsets: basic knowledge comprehension, video incident detection, safety response generation, and traffic analysis reporting.

2 Related Work

Traffic-related foundation models: Current mainstream LLM architectures are primarily categorized into three paradigms: Encoder-only (e.g., BERT [6]), Encoder-Decoder (e.g., GLM [8]), and Decoder-only (e.g., GPT [1], LLaMA [7]). To adapt general-purpose models to vertical domains, methodologies such as Supervised Fine-Tuning (SFT) [22] and Parameter-Efficient Fine-Tuning (PEFT) [12] have been introduced.
Within the transportation sector, models such as TransGPT [28] and TrafficGPT [37] advance traffic safety analysis via domain-adaptive training. Furthermore, UrbanGPT [17] integrates spatio-temporal encoders with LLMs through instruction tuning for general urban analysis. However, a domain LLM for expressway tasks has yet to emerge.

MLLMs: CLIP [24] established the foundation of multimodal learning by aligning the representation spaces of images and texts via contrastive learning. To endow LLMs with visual comprehension capabilities, LLaVA [19] introduces a linear projection layer to map visual features into token embeddings processable by language models. BLIP-2 [16] proposes the Q-former architecture to extract text-relevant features from frozen visual encoders. Following this research trajectory, the Qwen-VL series [4] was subsequently proposed to align visual and linguistic representations. In traffic scenarios, MLLMs have been applied to tasks such as accident analysis (TrafficLens [2], MoTIF [30]) and anomaly detection (Anomaly-OneVision [32]) by facilitating semantic understanding of surveillance footage.
Figure 2: The Overall Framework of ExpressMind. (Components shown: double-layer pre-training of the LLM (unsupervised, then self-supervised) on operation data, regulatory standards, textbooks, and literature; expressway knowledge RAG; adaptive visual encoding with a visual encoder, naive dynamic resolution, position embedding, and Visual Prior Alignment (VPA); RL-enhanced reasoning with group sampling, reward calculation, and model update; and the Express-QA, Express-VQA, and Express-IncidentCoT datasets, with the sample question "What incident occurred on the expressway and what is the correct response strategy?")

Reinforcement Learning for Reasoning: Reinforcement Learning from Human Feedback [22] has emerged as the prevailing paradigm for aligning LLMs with human intent. Algorithms such as DPO [25], CPO [31], and GRPO [26] leverage preference information inherent in CoT processes to further optimize reasoning trajectories and training efficiency. To mitigate reward hacking, DreamPRM [5] introduces a domain re-weighting mechanism. Recently, integrating the semantic comprehension of LLMs with the decision-making capabilities of RL has moved to the forefront of transportation research. Traffic-R1 [40] and LLMLight [15] employ RL to enhance the generalization of LLMs in signal control tasks. AgentsCoMerge [13] uses RL with ramp and density rewards for traffic optimization, and Time-LLM [14] transforms time series into text via RL, achieving SOTA in traffic forecasting. While RL has been applied in related traffic tasks, its use for enhancing reasoning in the expressway domain remains unexplored.

3 Methodology

This study proposes ExpressMind, a domain-specific MLLM tailored for expressway operation. The overall framework is illustrated in Figure
2, and the key components are introduced as follows:

3.1 Task-oriented Domain Data Profiling

To address the domain-specific tasks depicted above, namely Expressway Knowledge QA, Video Understanding, and Incident Strategy Report, this study collects four distinct types of data to support the complete training pipeline of ExpressMind, as illustrated in Figure 3.

• Textual Data: To establish the model's fundamental domain understanding, textual data, including policy documents, expert knowledge, and SFT QA pairs, are used in the Pre-training Stage (Section 3.2).
• Incident CoT Data: To refine reasoning trajectories via reinforcement learning, incident CoT data comprise incident descriptions, causal reasoning, response strategies, and evaluations. They are employed during the RL Alignment Stage (Section 3.3).
• Dynamic Knowledge Base: To keep model responses aligned with the latest operational scenarios, the knowledge base contains real-time traffic conditions, incident reports, and traffic flow data, providing real-time retrieval augmentation (Section 3.4) across all training stages.
• Multimodal Data: To achieve video-language understanding, data such as accident images and congestion videos are introduced in the Cross-modal Alignment Stage (Section 3.5).
Figure 3: Task-oriented Domain Data Profiling. (Panels: A: Text Data, with unsupervised training data from transportation policy, transportation law, and professional textbooks, plus SFT QA pairs on expressway management, intelligent roads, ITS knowledge, and transport infrastructure; B: Incident CoT Data, built from incident reports with expert annotation into incident description, causal inference, response strategy, and strategy evaluation; C: Video Data, with expert annotation and OCR feeding a multimodal dataset for VQA, object detection, scene understanding, and accident identification; D: Dynamic Knowledge Base, with real-time conditions, domain vocabulary, and graph-based text indexing.)

3.2 Training Paradigm of Pretrained LLM

To ensure the model acquires high-quality foundational knowledge for expressway scenarios, we constructed a dedicated dataset containing unlabeled text and self-supervised QA pairs, with all data undergoing rigorous deduplication and standardization. ExpressMind, built upon the Qwen foundation model, adopts a two-phase pre-training strategy: the first phase establishes fundamental scenario knowledge, and the second phase adapts the model to handle complex domain-specific tasks.

Stage 1: Unsupervised Training. In this phase, model parameters $\theta$ are optimized by minimizing the negative log-likelihood loss. Given an input sequence $x = \{x_1, x_2, \ldots, x_T\}$ derived from domain-specific corpora, the pre-training loss function $\mathcal{L}_{PT}$ is formulated as:

$$\mathcal{L}_{PT}(\theta) = -\sum_{t=1}^{T} \log P(x_t \mid x_{<t}; \theta) \tag{1}$$

where $x_{<t}$ denotes the context sequence preceding time step $t$, and $P(x_t \mid x_{<t}; \theta)$ represents the conditional probability of the model predicting the next token given the current parameters.

Stage 2: Full-Parameter Supervised Fine-Tuning.
Following the acquisition of foundational domain knowledge, full-parameter SFT is conducted to align the model with specific tasks and instructions in the expressway transportation domain. To ensure that the model focuses on response generation during training, a masked loss strategy is employed by introducing a binary mask vector $M$, where $M_t = 0$ corresponds to instruction tokens and $M_t = 1$ to response tokens. Consequently, the loss function for supervised fine-tuning, denoted as $\mathcal{L}_{SFT}$, is formulated as:

$$\mathcal{L}_{SFT}(\theta) = -\frac{1}{\sum_{t=1}^{T} M_t} \sum_{t=1}^{T} M_t \cdot \log P(x_t \mid x_{<t}; \theta) \tag{2}$$

This two-stage training equips the model with an in-depth mastery of expressway domain knowledge, providing a basis for the following alignment and reasoning tasks.

3.3 RL for Expressway Strategy Alignment

Although LLMs acquire fundamental domain cognition through full-parameter pre-training, the response strategies they generate for unseen, complex expressway accident scenarios often fail to establish a complete logical chain from scene analysis to strategy formulation and evaluation. They notably lack deep logical deduction and cannot ensure strategic optimality. As shown in Figure 4, to enforce the "Perception-Analysis-Decision-Reflection" cognitive loop and address this reasoning bottleneck, we leverage a CoT dataset derived from real-world expressway emergency responses and employ the Group Relative Policy Optimization (GRPO) algorithm to mine underlying logical patterns, thereby enhancing the model's reasoning capabilities.

The core mechanism of GRPO involves sampling a group of candidate outputs $o_1, o_2, \ldots, o_G$ for a given query $q$ and computing gradients by evaluating the relative scores within the group. Here, $q$ is drawn from the set of all possible queries $Q$.
The objective function is formulated as follows:

$$J_{GRPO}(\theta) = \mathbb{E}_{q \sim P(Q),\ \{o_i\}_{i=1}^{G} \sim \pi_{\theta_{old}}} \left[ \mathcal{L} \right] \tag{3}$$

$$\mathcal{L} = \frac{1}{G} \sum_{i=1}^{G} \min\left( r_i A_i,\ \mathrm{clip}(r_i, 1 - \epsilon, 1 + \epsilon)\, A_i \right) - \beta D_{KL}(\pi_\theta \,\|\, \pi_{ref}) \tag{4}$$

Figure 4: Schematic of the RL-based Reasoning Enhancement. (Components shown: incident text, policy LLM, group sampling, reward model with structure, knowledge, and realism rewards, advantage estimation, policy update, reference model, and expert CoT data organized as incident description, causal inference, response strategy, and strategy evaluation.)

where $A_i$ denotes the advantage function and $r_i = \pi_\theta(o_i \mid q) / \pi_{\theta_{old}}(o_i \mid q)$ is the probability ratio between the current policy and the sampling policy (not to be confused with the scalar reward $r_i$ in Algorithm 1). The term $\beta D_{KL}$ acts as a regularization constraint to mitigate catastrophic forgetting during the reinforcement learning process, explicitly ensuring that the model's linguistic generation remains aligned with the standardized traffic terminology acquired during the SFT phase. Through this mechanism, the algorithm reduces the variance of gradient estimation, ensuring that the model prioritizes learning the relative superiority of strategies over absolute scores. This enables stable policy iteration within the complex reasoning space of traffic incident disposal.

To steer the model towards structured accident response logic, we design a multi-dimensional reward $R_{total} = \lambda_1 R_{struct} + \lambda_2 R_{know} + \lambda_3 R_{sem}$, comprising three decoupled terms:

• Structural Integrity ($R_{struct}$): To enforce the "Perception-Analysis-Decision-Reflection" cognitive loop, we employ a gated counting mechanism. The reward accumulates only if the four stage-specific tags $S_{1..4}$ appear in a strict monotonic order:

$$R_{struct} = \left( \sum_{k=1}^{4} \mathbb{I}(S_k \in O) \right) \cdot \mathbb{I}\left( \mathrm{idx}(S_1) < \mathrm{idx}(S_2) < \mathrm{idx}(S_3) < \mathrm{idx}(S_4) \right) \tag{5}$$

• Domain Alignment ($R_{know}$): We maximize the coverage of stage-specific expert terminology $\mathcal{V}_k$ while penalizing linguistic degradation via a perplexity (PPL) constraint:

$$R_{know} = \frac{1}{K} \sum_{k=1}^{K} \omega_k \frac{|S_k \cap \mathcal{V}_k|}{|S_k|} - \eta \cdot \mathrm{ReLU}\left( \mathrm{PPL}(O) - \tau_{ppl} \right) \tag{6}$$

• Semantic Consistency ($R_{sem}$): To ensure strategic optimality, we compute the cosine similarity in the embedding space between the model's decision logic and a reference set $\mathcal{D}_{ref}$ containing expert records and teacher traces:

$$R_{sem} = \max_{d \in \mathcal{D}_{ref}} \cos\left( \phi(S_2 \oplus S_3),\ \phi(d) \right) \tag{7}$$

The overall algorithm process for RL reasoning alignment is presented in Algorithm 1. At its core, the study generates decision-making strategies equipped with complete and verifiable expressway emergency response processes. This explicit reasoning trace enhances the interpretability and reliability of the model's outputs. Implementation details regarding the expert vocabulary $\mathcal{V}_k$ and hyperparameters are provided in Appendix B.
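The gated count in Eq. (5) and the group-relative advantage that GRPO derives from the total reward can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: the literal tag strings, the unit weights, and the function names are hypothetical, and a missing tag is assumed to fail the ordering gate because its index is undefined.

```python
import math

# Hypothetical tag strings: the paper names the four stages but not their literal markers.
STAGE_TAGS = (
    "[Incident Description]",
    "[Causal Inference]",
    "[Response Strategy Formulation]",
    "[Strategy Evaluation]",
)

def structural_reward(output, tags=STAGE_TAGS):
    """Gated count in the spirit of Eq. (5): tags found, times an ordering indicator."""
    positions = [output.find(tag) for tag in tags]
    found = sum(1 for p in positions if p >= 0)
    # Assumption: a missing tag fails the gate, since its index is undefined.
    in_order = all(p >= 0 for p in positions) and all(
        earlier < later for earlier, later in zip(positions, positions[1:])
    )
    return found if in_order else 0

def total_reward(r_struct, r_know, r_sem, weights=(1.0, 1.0, 1.0)):
    """Weighted sum R_total; the unit weights are placeholders, not the paper's values."""
    l1, l2, l3 = weights
    return l1 * r_struct + l2 * r_know + l3 * r_sem

def group_advantages(rewards, eps=1e-8):
    """Normalize rewards within one sampled group: (r - mean) / (std + eps)."""
    mean = sum(rewards) / len(rewards)
    std = math.sqrt(sum((r - mean) ** 2 for r in rewards) / len(rewards))
    return [(r - mean) / (std + eps) for r in rewards]

good = ("[Incident Description] rear-end collision [Causal Inference] tailgating "
        "[Response Strategy Formulation] close lane 1 [Strategy Evaluation] adequate")
bad = ("[Causal Inference] tailgating [Incident Description] rear-end collision")

# Knowledge and semantic terms are set to 0.0 here to isolate the structural term.
rewards = [total_reward(structural_reward(o), 0.0, 0.0) for o in (good, bad)]
print(rewards)  # [4.0, 0.0]
print(group_advantages(rewards))
```

Because the advantage is computed relative to the group mean, the well-ordered response receives a positive advantage and the out-of-order response a negative one, regardless of the absolute reward scale.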
Algorithm 1: Traffic Incident Strategy Alignment via GRPO

Require: unstructured traffic incident text description $q \sim P(Q)$
Ensure: optimized traffic disposal policy $\pi^*$
1: Initialize policy model $\pi_\theta$, reference model $\pi_{ref}$, expert database $\mathcal{D}_{ref}$
2: for training epoch $t = 1$ to $T$ do
3: // Step 1: Candidate Response Sampling
4: Sample $G$ candidate responses from current policy:
5: $\{o_i\}_{i=1}^{G} \sim \pi_{\theta_{t-1}}(\cdot \mid q)$
6: // Step 2: Multi-dimensional Reward Evaluation
7: for each candidate response $o_i$ do
8: Compute reward: $r_i = \sum_j \lambda_j R_j(o_i)$
9: where $R \in \{R_{struct}, R_{know}, R_{sem}\}$
10: end for
11: // Step 3: Advantage Normalization within Group
12: Compute group mean: $\mu_{group} = \frac{1}{G} \sum_{i=1}^{G} r_i$
13: Compute group standard deviation:
14: $\sigma_{group} = \sqrt{\frac{1}{G} \sum_{i=1}^{G} (r_i - \mu_{group})^2}$
15: for each candidate response $o_i$ do
16: Compute advantage: $A_i = \frac{r_i - \mu_{group}}{\sigma_{group} + \epsilon}$
17: end for
18: // Step 4: Policy Update via GRPO Objective
19: Update parameters by maximizing GRPO objective:
20: $\theta_t \leftarrow \arg\max_\theta J_{GRPO}(\theta; A_i, \pi_{\theta_{t-1}}, \pi_{ref})$
21: end for
22: return optimized policy $\pi^* \leftarrow \pi_{\theta_T}$

3.4 Knowledge Graph-Augmented Retrieval

The static parameters of LLMs cannot capture dynamic information and professional vocabulary. Therefore, this paper constructs an expressway knowledge base to help LLMs acquire this knowledge. Because traffic knowledge is largely unstructured, a graph-based RAG is adopted to retrieve from the knowledge base. This study employs LightRAG [11], which introduces a dual-layer retrieval mechanism by constructing a structured graph index, to enhance the ability to update knowledge incrementally.

During the indexing phase, an unstructured traffic corpus $D$ is transformed into a structured and incrementally updatable knowledge graph $\hat{G} = (\hat{V}, \hat{E})$. Here, each node in $\hat{V}$ represents a standardized traffic term, and each edge in $\hat{E}$ encodes a semantic relation.
This paper designs an entity and relation extraction module to identify the entities specific to the transportation field and their interrelationships. LLM profiling then generates a structured key-value pair $(K, L)$ for every node $v \in \hat{V}$ and edge $e \in \hat{E}$, where the key $K$ serves as a normalized identifier for efficient retrieval, and the value $L$ is an LLM-generated summary that integrates multi-source definitions and usage contexts. Deduplication merges redundant nodes and edges from different text fragments through semantic similarity comparison. Given a new document $D'$, its corresponding subgraph $\hat{G}' = (\hat{V}', \hat{E}')$ is generated independently and merged into the existing graph via the set union operations $\hat{V} \cup \hat{V}'$ and $\hat{E} \cup \hat{E}'$.

In the retrieval and generation phase, a dual-level retrieval paradigm is employed to jointly capture concrete facts and abstract concepts. Given an initial non-standardized response $\hat{q}_{raw}$, an LLM first extracts two types of keywords: local terms $k^{(l)}$ (e.g., "long queue", "red-green light") and global semantic cues $k^{(g)}$ (e.g., "traffic congestion", "signal control"). Two parallel retrieval paths are then activated. Low-level retrieval focuses on exact or near-exact matching by computing the similarity between the embedding of $k^{(l)}$ and the key embedding of entity nodes:

$$s_{low}(v) = \mathrm{sim}\left( e(k^{(l)}),\ e(K_v) \right) \tag{8}$$

where $e(\cdot)$ denotes an embedding function, $\mathrm{sim}(\cdot)$ is typically cosine similarity, and $K_v$, $K_e$ denote the retrieval keys of node $v$ and edge $e$, respectively. High-level retrieval operates at the conceptual level by matching $k^{(g)}$ against topic keys associated with relation edges:

$$s_{high}(e) = \mathrm{sim}\left( e(k^{(g)}),\ e(K_e) \right) \tag{9}$$

All retrieved structured descriptions and associated text snippets are concatenated into a unified context $C$, which is fed into the LLM together with $\hat{q}_{raw}$ to perform term-level replacement.
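The two retrieval paths in Eqs. (8) and (9) can be illustrated with a small self-contained Python sketch. Everything here is a stand-in chosen for readability: the bag-of-words `embed` function replaces a learned embedding model, the index contents are invented examples, and the function names are hypothetical rather than part of LightRAG's API.

```python
import math
from collections import Counter

def embed(text):
    """Toy stand-in for e(.): bag-of-words counts instead of a learned embedding."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(keywords, index, k=2):
    """Score each key against its best-matching keyword; keep the top-k non-zero hits."""
    scored = []
    for key, summary in index.items():
        score = max((cosine(embed(kw), embed(key)) for kw in keywords), default=0.0)
        if score > 0.0:
            scored.append((score, key, summary))
    scored.sort(key=lambda item: item[0], reverse=True)
    return [(key, summary) for _, key, summary in scored[:k]]

# Hypothetical index contents: keys K_v for entity nodes, keys K_e for relation edges.
NODE_INDEX = {
    "long queue": "Vehicles backed up over an extended stretch of carriageway.",
    "red-green light": "Colloquial name for a traffic signal.",
    "emergency lane": "Hard shoulder reserved for breakdowns and rescue vehicles.",
}
EDGE_INDEX = {
    "traffic congestion": "Links queue-related entities to demand exceeding capacity.",
    "signal control": "Links signal entities to timing and phase management.",
}

def build_context(local_keywords, global_keywords):
    """Concatenate low-level and high-level hits into one context string C."""
    low = retrieve(local_keywords, NODE_INDEX)     # Eq. (8): entity-level matches
    high = retrieve(global_keywords, EDGE_INDEX)   # Eq. (9): relation-level matches
    return "\n".join(f"{key}: {summary}" for key, summary in low + high)

print(build_context(["long queue"], ["signal control"]))
```

Keeping the node and edge indexes separate mirrors the dual-level design: local terms only compete against entity keys, and global cues only against relation keys, so a concrete phrase cannot crowd out a conceptual match.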
The final normalized output $\hat{q}_{norm}$ is generated according to the RAG formulation $p(\hat{q}_{norm} \mid \hat{q}_{raw}, C) \propto \exp\left( f_{LLM}([\hat{q}_{raw}; C]) \right)$, where $f_{LLM}$ denotes the LLM internal scoring function.

3.5 Multimodal Understanding with VPA

End-to-end multimodal understanding of expressway scenes is a critical task for expressway supervision. This paper combines a visual encoder with ExpressMind to form an MLLM with video understanding capabilities. To enhance visual feature extraction, this paper introduces a novel visual encoding architecture integrated with a Visual-Prior Alignment (VPA) mechanism, as illustrated in Figure 5. The output feature of the visual encoder is $\mathbf{I} = \{\mathbf{I}_1, \mathbf{I}_2, \ldots, \mathbf{I}_t\}$, $\mathbf{I} \in \mathbb{R}^{N_v \times d_v}$, where $N_v$ is the total number of visual tokens and $d_v$ is the dimension of each visual feature vector.

We design a cross-modal projection network and use layer normalization to stabilize the training process, as shown in the following equation:

$$\mathbf{H}_v = \mathrm{LayerNorm}\left( \mathbf{W}_2 \cdot \mathrm{GELU}(\mathbf{W}_1 \cdot \mathbf{I} + \mathbf{b}_1) + \mathbf{b}_2 \right) \tag{10}$$

where $\mathbf{H}_v \in \mathbb{R}^{N_v \times d_{LLM}}$, $\mathbf{W}_1 \in \mathbb{R}^{d_v \times d_h}$ and $\mathbf{W}_2 \in \mathbb{R}^{d_h \times d_{LLM}}$ are learnable weight matrices, $\mathbf{b}_1$ and $\mathbf{b}_2$ are bias terms, and $d_h$ denotes the intermediate hidden dimension. The output dimension $d_{LLM}$ matches the hidden size of the LLM.

Figure 5: Multimodal Encoding Framework. (Components shown: visual encoder and visual tokens; DeepStack; MRoPE over the time, height, and width axes, with T=24, H=20, W=20; VPA with feature projection, attention calculation, weight allocation optimization, and visual feature enhancement; and the pretrained LLM with blocks 1 to N.)

This paper employs MRoPE to address the issue of uneven frequency allocation in video understanding. It allocates feature channels to the temporal, height, and width axes in a fine-grained round-robin manner, ensuring that each positional axis is encoded with a full frequency spectrum ranging from high to low frequencies.
Furthermore, this study introduces the DeepStack [21] mechanism to extract feature maps and perform cross-layer fusion at different depths, which enables the language decoder to access a complete visual hierarchy spanning from pixel-level to semantic-level information.

Because the sequence length is compressed, visual features may be attenuated during sequence alignment after encoding. This paper proposes the VPA mechanism to overcome this problem. It introduces learnable cross-modal attention reweighting to achieve dynamic alignment of visual and language features. VPA explicitly enhances the computational weight of visual tokens in multimodal fusion, establishing a visual-priority inductive preference. Let $\mathbf{H}_v$ be the projected visual features and $\mathbf{U} \in \mathbb{R}^{L_t \times d_{LLM}}$ the text embeddings from the instruction prompt, where $L_t$ is the text sequence length. The learnable cross-modal attention weights in VPA are adjusted as follows:

$$\hat{\mathbf{H}}_v = \mathrm{softmax}\left( \frac{\mathbf{H}_v \mathbf{W}_p \mathbf{U}^\top}{\sqrt{d_{LLM}}} \right) \cdot \mathbf{H}_v \tag{11}$$

where $\mathbf{W}_p \in \mathbb{R}^{d_{LLM} \times d_{LLM}}$ is a learnable projection matrix. This method increases the weight of visual features when aligning visual tokens and text, establishing the inductive bias of visual priors during the feature fusion process.

The enhanced visual features $\hat{\mathbf{H}}_v$ are concatenated with the text embeddings $\mathbf{U}$ to form a unified multimodal input sequence:

$$\mathbf{Z} = [\hat{\mathbf{H}}_v; \mathbf{U}] \in \mathbb{R}^{(N_v + L_t) \times d_{LLM}} \tag{12}$$

The combined representation $\mathbf{Z}$ is fed into the LLM, which generates a natural language description of the video and thereby achieves understanding of traffic scenarios.
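The shape bookkeeping behind Eqs. (10) and (12) can be made concrete with a small pure-Python sketch. It is only an illustration under stated assumptions: the toy dimensions and random weights are hypothetical, the layer normalization omits learned scale and shift, the projection is written in row-vector form ($\mathbf{I}\mathbf{W}_1$), and the VPA reweighting of Eq. (11) is not reproduced, so the projected tokens are concatenated directly as a stand-in for $\hat{\mathbf{H}}_v$.

```python
import math
import random

def matmul(a, b):
    """Plain-Python matrix product of two row-major nested lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def gelu(x):
    """Exact GELU: x times the standard normal CDF of x."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))

def layer_norm(row, eps=1e-5):
    """Normalize one token vector to zero mean and unit variance (no learned affine)."""
    mean = sum(row) / len(row)
    var = sum((v - mean) ** 2 for v in row) / len(row)
    return [(v - mean) / math.sqrt(var + eps) for v in row]

def project_visual_tokens(I, W1, b1, W2, b2):
    """Eq. (10) in row-vector form: LayerNorm(GELU(I W1 + b1) W2 + b2)."""
    hidden = [[gelu(v + b) for v, b in zip(row, b1)] for row in matmul(I, W1)]
    out = [[v + b for v, b in zip(row, b2)] for row in matmul(hidden, W2)]
    return [layer_norm(row) for row in out]

def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]

# Hypothetical toy sizes; real models use far larger dimensions.
N_v, d_v, d_h, d_llm, L_t = 6, 8, 16, 4, 3
rng = random.Random(0)
I = rand_matrix(N_v, d_v, rng)                      # visual encoder output
W1, b1 = rand_matrix(d_v, d_h, rng), [0.0] * d_h
W2, b2 = rand_matrix(d_h, d_llm, rng), [0.0] * d_llm
U = rand_matrix(L_t, d_llm, rng)                    # text embeddings

H_v = project_visual_tokens(I, W1, b1, W2, b2)      # shape N_v x d_llm
Z = H_v + U                                         # Eq. (12): visual rows, then text rows
print(len(Z), len(Z[0]))  # 9 4
```

Placing the visual rows ahead of the text rows follows the ordering in Eq. (12); the projection exists so that both kinds of token share the LLM hidden size before they are concatenated.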
4 Experiments

4.1 Dataset

This study releases a comprehensive open-source expressway dataset comprising four specialized sub-datasets and a standardized benchmark for the training and evaluation of ExpressMind, focusing on multi-modal capabilities such as expressway domain knowledge understanding, incident response strategy reasoning, video understanding, and incident detection.

1: Express-Insight contains over 7 million tokens of high-quality text that serves as a domain-specific corpus for unsupervised pre-training. The dataset is derived from web-crawled resources including traffic laws, expressway policy documents, and theoretical books on smart expressways and Intelligent Transportation Systems.

2: Express-QA contains over 870,000 samples whose QA pairs are obtained through a quality-aware generation and refinement process using DeepSeek-V3 with designed prompts.

3: Express-IncidentCoT contains 1,786 incident response strategy Chain-of-Thought samples derived from real-world incident reports of Shandong Expressway, structured into a four-stage cognitive chain: [Incident Description] → [Causal Inference] → [Response Strategy Formulation] → [Strategy Evaluation].

4: Express-VQA is a multi-modal dataset for expressway visual reasoning, integrating 1,627 surveillance videos from expressways in Shandong and Guangdong, China. The average duration of the videos exceeds 2 minutes, and there are over 3,200 VQA pairs. There are also 12 sets of surveillance video from Tianjin that cover two consecutive days. The data is collected across 70 roads at 1920 × 1080 resolution, encompassing diverse times and weather conditions to evaluate model robustness against seven core traffic anomalies such as accidents, congestion, and construction.
4.2 Experiment Setup

All experimental workflows, including model training, testing, and inference, were executed on a server node equipped with 8 NVIDIA H20 GPUs. The software environment is built upon Python 3.10+, PyTorch 2.4.0+, and CUDA 12.4+. The transformers, tokenizers, torchvision, and opencv-python libraries are employed for processing model, text, and image data. Stable versions of DeepSpeed, Accelerate, PEFT, and Flash-Attention 2 are utilized to facilitate efficient distributed fine-tuning, thereby ensuring high training efficiency and stability. Under this configuration, the end-to-end training of the framework required approximately 700 GPU hours. The hyperparameter configurations for each training stage are detailed in Appendix A.

4.3 Quantitative Results

4.3.1 Pre-training Results. Table 1 summarizes the performance of ExpressMind across three specialized tasks (totaling 20,000 test questions): Expressway Laws & Regulations QA, Smart Expressway Knowledge QA, and Intelligent Transportation System Knowledge QA. For each task, we use a set of multi-dimensional metrics, including Accuracy, F1-Score, Embedding Similarity, and GPT-Score, to benchmark our model against established open-source baseline models such as Qwen-32B [33], Llama-3.3-70B [9], and the DeepSeek-R1-Distill series [10]. Despite its specialized focus and smaller size, ExpressMind-14B obtains the best score on all but one entry of Table 1; the exception is MCQ accuracy on Smart Expressway Knowledge QA, where Llama-3.3-70B reaches 96.8% against 96.7%. Notably, in the Expressway Laws & Regulations QA task, our model achieves a peak MCQ accuracy of 98.4% and a Short Answer F1-score of 88.5%, compared with 97.5% and 87.2% for Llama-3.3-70B. Furthermore, regarding the GPT-Score, which evaluates deep semantic understanding, ExpressMind maintains a high average of 85.7% over the three tasks, which compares favorably with reasoning-distilled models like DeepSeek-R1-Distill-Llama-70B. These results emphasize ExpressMind's expert-level proficiency and its ability to provide precise, logically coherent responses within the specialized expressway transportation domain.

Table 1: Pre-training results on QA tasks. All values are percentages. MCQ = multiple choice, T/F = True/False, FIB = Fill-in-the-Blank, SA = Short Answer.

| Models | MCQ Acc | MCQ Rec | MCQ F1 | T/F Acc | T/F Rec | T/F F1 | FIB Emb | FIB F1 | SA GPT-Score | SA F1 |
|---|---|---|---|---|---|---|---|---|---|---|
| Expressway Laws & Regulations QA | | | | | | | | | | |
| Qwen-32B | 97.9 | 96.5 | 97.2 | 96.1 | 95.8 | 96.4 | 93.7 | 85.3 | 75.6 | 82.1 |
| Baichuan-32B | 91.3 | 88.5 | 89.9 | 89.5 | 88.2 | 89.4 | 88.8 | 78.2 | 69.3 | 74.5 |
| DeepSeek-R1-Distill-Qwen-32B | 96.1 | 96.3 | 97.4 | 96.5 | 96.0 | 96.1 | 92.4 | 84.1 | 76.6 | 82.4 |
| DeepSeek-R1-Distill-Llama-70B | 97.0 | 96.2 | 96.5 | 97.0 | 95.8 | 96.4 | 95.9 | 88.7 | 85.9 | 87.8 |
| Llama-3.3-70B | 97.5 | 96.0 | 96.8 | 96.5 | 96.2 | 96.8 | 96.6 | 89.4 | 86.1 | 87.2 |
| GLM-4-32B | 91.7 | 89.4 | 90.5 | 90.1 | 89.5 | 90.3 | 90.4 | 80.5 | 71.4 | 76.4 |
| ExpressMind-14B | 98.4 | 97.9 | 98.1 | 98.2 | 97.5 | 98.3 | 97.4 | 90.5 | 86.8 | 88.5 |
| Smart Expressway Knowledge QA | | | | | | | | | | |
| Qwen-32B | 96.5 | 95.2 | 95.8 | 95.5 | 94.8 | 95.7 | 83.1 | 78.5 | 76.4 | 79.5 |
| Baichuan-32B | 89.7 | 87.5 | 88.6 | 88.2 | 87.0 | 88.1 | 87.7 | 77.5 | 68.4 | 73.8 |
| DeepSeek-R1-Distill-Qwen-32B | 95.4 | 96.1 | 94.8 | 96.7 | 95.5 | 96.2 | 95.6 | 88.9 | 83.1 | 86.0 |
| DeepSeek-R1-Distill-Llama-70B | 95.9 | 96.1 | 95.5 | 94.8 | 96.2 | 95.7 | 94.1 | 88.4 | 84.2 | 86.1 |
| Llama-3.3-70B | 96.8 | 95.9 | 96.3 | 95.8 | 95.4 | 96.1 | 95.7 | 88.5 | 84.0 | 86.9 |
| GLM-4-32B | 90.6 | 88.7 | 89.6 | 90.2 | 89.1 | 90.4 | 89.3 | 79.8 | 70.9 | 75.2 |
| ExpressMind-14B | 96.7 | 96.2 | 96.4 | 97.5 | 97.0 | 97.6 | 96.4 | 89.2 | 85.4 | 87.8 |
| Intelligent Transport System Knowledge QA | | | | | | | | | | |
| Qwen-32B | 94.3 | 93.5 | 93.9 | 94.8 | 94.2 | 95.2 | 91.7 | 84.2 | 71.2 | 80.5 |
| Baichuan-32B | 88.6 | 86.8 | 87.7 | 86.5 | 85.9 | 86.4 | 87.5 | 76.8 | 68.9 | 72.5 |
| DeepSeek-R1-Distill-Qwen-32B | 93.4 | 94.1 | 93.8 | 92.7 | 92.5 | 93.2 | 95.6 | 87.1 | 83.5 | 85.5 |
| DeepSeek-R1-Distill-Llama-70B | 93.9 | 94.5 | 94.5 | 92.8 | 93.2 | 92.7 | 94.1 | 87.4 | 84.2 | 86.1 |
| Llama-3.3-70B | 94.9 | 94.1 | 94.5 | 95.0 | 94.5 | 95.1 | 94.2 | 87.6 | 84.7 | 85.8 |
| GLM-4-32B | 90.1 | 88.5 | 89.3 | 89.4 | 88.8 | 89.7 | 88.6 | 78.9 | 69.7 | 74.1 |
| ExpressMind-14B | 95.6 | 95.1 | 95.3 | 96.5 | 96.0 | 96.8 | 95.9 | 88.7 | 84.9 | 86.5 |

4.3.2 RL Alignment Results. Figure 6 indicates that ExpressMind (Pretrain+RL) consistently outperforms existing generalist baselines in the specialized task of expressway incident management strategy generation on five metrics, which are detailed in Appendix A. Specifically, in domain-critical metrics such as Safety Compliance and Actionability, ExpressMind (Pretrain+RL) achieves scores in the range of 8.0–9.0, notably higher than general baselines like Llama-3.3-70B and Qwen-32B. The ablation between the two ExpressMind configurations underscores the decisive impact of RL alignment: the base pretrained model without RL tuning yields the weakest performance, whereas its RL-aligned counterpart demonstrates a significant improvement. This enhancement is directly attributable to the better alignment of the model's chain-of-thought reasoning with the required procedural knowledge.

Figure 6: Performance of ExpressMind with RL Alignment.

As shown in Figure 7, ExpressMind (Pretrain+RL) is also efficient and highly consistent at inference time. Experimental results indicate that its average inference latency is reduced to 13.2 ms, a 24.6% acceleration compared to models like Baichuan-32B. More importantly, the tightly clustered distribution in the box plot reveals minimal latency jitter during instruction processing. This combination of low latency and high stability ensures reliable performance for time-sensitive applications, such as millisecond-level response requirements in smart expressway scenarios.

Figure 7: The Reasoning Time of the RL Alignment.

Incident Response Strategy Generation
Incident Description
• Incident Type: Illegal Stop
• Incident Level: Regular
• Detailed Information: Two male drivers are communicating outside their vehicles ... leading to traffic delays.
Response Strategy
Report Time: xxx year xxx/xxx
Road Section: xx-xx-xxxxxx+xxx-West
Position: The incident is located on the emergency lane (right side of the frame), near the guardrail area.
Weather: Foggy weather, low visibility, and poor lighting conditions.
Strategy: The incident mainly affects the emergency lane ... vehicles on the main lanes are driving normally.
Reflection: It is recommended to promptly contact relevant authorities for on-site verification and handling.

Figure 8: An Example of Incident Response Strategy Generation.

4.3.3 Knowledge Retrieval Ablation Study. This section verifies the improvement in ExpressMind's generation capability that results from using the expressway knowledge base. We leverage LightRAG to query this repository. The evaluation dataset comprises 200 queries addressing complex traffic regulation comprehension and 100 queries focused on technical specifications. We employ the F1-Score to evaluate the factual accuracy of the generated responses. As illustrated in Figure 9, the experimental results demonstrate that ExpressMind exhibits exceptional reasoning capabilities and robust retrieval performance. The expressway knowledge base increases the occurrence probability of professional vocabulary by 16.7%.

Figure 9: The Ablation Experiment of RAG.

Figure 10: Results of Traffic Incident Detection. (a) Shandong Expressway. (b) Guangdong Expressway.

4.3.4 Scene Understanding Comparison. Built upon the ExpressMind backbone and a sophisticated cross-modal encoder, the MLLM, ExpressMind-VL, demonstrates exceptional proficiency in understanding traffic videos.
In this study, we evaluate its performance against a range of leading MLLMs, including VideoLLaMA 3 [35], MiniCPM-V 4.5 [34], InternVL 3.5 [29], and Qwen3-VL [3].

To quantitatively evaluate the quality of the generated descriptions, we employ four standard automated metrics: BLEU-4 [23], ROUGE-L [18], CIDEr [27], and BERTScore [39]. Each metric assesses a distinct dimension of linguistic fidelity, ranging from lexical overlap to semantic similarity. To ensure reproducibility, we conducted a comprehensive evaluation in which the same dataset of 670 expressway surveillance videos was input into all compared models.

Figure 11: Results of Traffic Incident Detection. (a) Detection Accuracy. (b) Detection Recall.

As presented in Table 2, ExpressMind-VL achieves the best BLEU-4, CIDEr, and BERTScore among the compared models and is within 0.05 points of Qwen3-VL on ROUGE-L (89.25 vs. 89.30). The model exhibits a strong capability to interpret complex traffic scenarios, supporting the effectiveness of our domain-specific multimodal alignment.

Table 2: Automated evaluation of quantitative results.

| Model | LLM | BLEU-4 | ROUGE-L | CIDEr | BERTScore |
|---|---|---|---|---|---|
| MoTIF | LLaMA2-7B | 82.59 | 87.45 | 69.95 | 88.43 |
| VideoLLaMA3 | Qwen 2.5-7B | 79.63 | 85.32 | 67.42 | 85.33 |
| MiniCPM-V 4.5 | LLaMA 3-8B | 81.98 | 87.13 | 69.76 | 88.04 |
| InternVL3.5 | Qwen 3-38B | 84.53 | 88.94 | 71.67 | 88.97 |
| Qwen3-VL | Qwen 3-32B | 84.85 | 89.30 | 72.96 | 89.18 |
| ExpressMind-VL | ExpressMind-14B | 85.24 | 89.25 | 73.36 | 89.28 |

Accurate detection and comprehensive analysis of traffic incidents are paramount for intelligent expressway operation. To rigorously evaluate these capabilities, we benchmark ExpressMind-VL against the high-performing Qwen3-VL-32B using a curated dataset of 200 traffic incident videos.
The quantitative evaluation employs a multi-dimensional metric suite: Accuracy and Precision for event classification, and F1-scores to assess the semantic fidelity of descriptive texts concerning event severity (Level), causal analysis, spatiotemporal context (Time & Space), and congestion status (Queue). As illustrated in Figure 10, ExpressMind-VL exhibits superior recognition and reasoning capabilities in traffic incident detection and analysis compared to the baseline. Furthermore, a representative example of Incident Response Strategy Generation, which translates these analytical insights into actionable decisions, is visualized in Figure 8.

To evaluate the practical performance of ExpressMind-VL in real-world expressway scenarios, we assessed its detection capability for six core types of traffic incidents on the Express-VQA dataset. As shown in Figure 11, the accuracy and recall of ExpressMind-VL both exceed 90% across all tasks: abnormal parking, pedestrian intrusion, non-motorized vehicle detection, traffic congestion, extreme weather, and traffic incidents.

The high precision and recall of ExpressMind-VL can be attributed to the multi-level technical strategies integrated during its development. Pre-training on high-quality video-text pairs established a robust foundation for cross-modal semantic alignment. As shown in Figure 12, the VPA mechanism explicitly enhances the contribution of visual features, ensuring the dominance of dynamic visual cues in the reasoning process. Furthermore, standardized prompt engineering reformulates the detection task into a structured text generation problem, enabling the model to naturally incorporate prior knowledge such as traffic rules into logical reasoning.

Figure 12: Ablation Results of VPA. (a) Accuracy. (b) Recall.
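The per-incident precision and recall discussed in this subsection can be computed from predicted and annotated incident types as in the sketch below. It assumes single-label classification with one prediction per video; the function name and the toy labels are illustrative and do not reproduce the paper's evaluation script or data.

```python
from collections import Counter


def per_class_precision_recall(y_true, y_pred):
    """Return {label: (precision, recall)} for single-label predictions."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for truth, pred in zip(y_true, y_pred):
        if truth == pred:
            tp[truth] += 1
        else:
            fp[pred] += 1   # predicted class gains a false positive
            fn[truth] += 1  # annotated class gains a false negative
    scores = {}
    for label in sorted(set(y_true) | set(y_pred)):
        predicted = tp[label] + fp[label]
        actual = tp[label] + fn[label]
        precision = tp[label] / predicted if predicted else 0.0
        recall = tp[label] / actual if actual else 0.0
        scores[label] = (precision, recall)
    return scores


# Toy labels for illustration only (not results from the paper).
y_true = ["abnormal parking", "abnormal parking", "traffic congestion", "pedestrian intrusion"]
y_pred = ["abnormal parking", "traffic congestion", "traffic congestion", "pedestrian intrusion"]
scores = per_class_precision_recall(y_true, y_pred)
```

Reporting both numbers per incident type, rather than a single pooled accuracy, makes it visible when a rare class such as pedestrian intrusion is being missed.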
4.4 Deployment Analysis

The proposed ExpressMind has already been deployed in the Shandong Expressway Cloud Brain system. It demonstrates domain-specific comprehension in professional knowledge question answering and in the generation of emergency response strategies for traffic incidents. Furthermore, to address user needs for customized ExpressMind-VL functionality, we have developed an ExpressMind-VL-based expressway incident monitoring and management platform for both Shandong and Zhejiang expressways. This study designs standardized prompt engineering and a video stream detection mechanism, enabling the model to retain 10 seconds of video upon detecting a traffic incident while simultaneously generating a structured incident analysis report. Through this approach, the system can automatically identify traffic incidents, analyze traffic scenes, and produce response plans based on real-time conditions. Ultimately, ExpressMind-VL achieves a fully autonomous "perception-analysis-decision" processing pipeline for expressway traffic incidents, serving as an intelligent central hub for expressway operation. A demonstration of the system application is described in Appendix D.

5 Conclusion

This study introduces ExpressMind, the first domain-specific MLLM designed for expressway scenarios. It is built through multiple technical innovations: a two-stage pretraining paradigm for domain knowledge internalization, a GRPO-enhanced RL framework for safety-critical reasoning alignment, a graph-augmented RAG mechanism for real-time spatiotemporal knowledge retrieval, and a VPA multimodal alignment module for deep video understanding. To support this work, we have open-sourced the first training dataset covering domain knowledge, incident CoT strategy reasoning, and multimodal incident detection VQA. ExpressMind has been applied in top-tier expressway groups, serving as a representative application case of large models in the expressway domain.
In future work, we will focus on three key improvements: enhancing multimodal spatiotemporal reasoning for dynamic scenario understanding, strengthening chain-of-thought alignment in long-text analysis, and advancing model lightweighting for edge deployment.

References

[1] Josh Achiam, Steven Adler, Sandhini Agarwal, Lama Ahmad, Ilge Akkaya, Florencia Leoni Aleman, Diogo Almeida, Janko Altenschmidt, Sam Altman, Shyamal Anadkat, et al. 2023. Gpt-4 technical report. arXiv preprint (2023).
[2] Md Adnan Arefeen, Biplob Debnath, and Srimat Chakradhar. 2024. TrafficLens: Multi-Camera Traffic Video Analysis Using LLMs. In 2024 IEEE 27th International Conference on Intelligent Transportation Systems (ITSC). IEEE, 3974–3981.
[3] Shuai Bai, Yuxuan Cai, Ruizhe Chen, Keqin Chen, Xionghui Chen, Zesen Cheng, Lianghao Deng, Wei Ding, Chang Gao, Chunjiang Ge, Wenbin Ge, Zhifang Guo, Qidong Huang, Jie Huang, Fei Huang, Binyuan Hui, Shutong Jiang, Zhaohai Li, Mingsheng Li, Mei Li, Kaixin Li, Zicheng Lin, Junyang Lin, Xuejing Liu, Jiawei Liu, Chenglong Liu, Yang Liu, Dayiheng Liu, Shixuan Liu, Dunjie Lu, Ruilin Luo, Chenxu Lv, Rui Men, Lingchen Meng, Xuancheng Ren, Xingzhang Ren, Sibo Song, Yuchong Sun, Jun Tang, Jianhong Tu, Jianqiang Wan, Peng Wang, Pengfei Wang, Qiuyue Wang, Yuxuan Wang, Tianbao Xie, Yiheng Xu, Haiyang Xu, Jin Xu, Zhibo Yang, Mingkun Yang, Jianxin Yang, An Yang, Bowen Yu, Fei Zhang, Hang Zhang, Xi Zhang, Bo Zheng, Humen Zhong, Jingren Zhou, Fan Zhou, Jing Zhou, Yuanzhi Zhu, and Ke Zhu. 2025. Qwen3-VL Technical Report. arXiv:2511.21631 [cs.CV]
[4] Shuai Bai, Keqin Chen, Xuejing Liu, Jialin Wang, Wenbin Ge, Sibo Song, Kai Dang, Peng Wang, Shijie Wang, Jun Tang, et al. 2025. Qwen2.5-vl technical report. arXiv preprint arXiv:2502.13923 (2025).
[5] Qi Cao, Ruiyi Wang, Ruiyi Zhang, Sai Ashish Somayajula, and Pengtao Xie. 2025. DreamPRM: Domain-Reweighted Process Reward Model for Multimodal Reasoning. arXiv preprint arXiv:2505.20241 (2025).
[6] Jacob Devlin, Ming-Wei Chang, Kenton Lee, and Kristina Toutanova. 2019. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), Jill Burstein, Christy Doran, and Thamar Solorio (Eds.). Association for Computational Linguistics, Minneapolis, Minnesota, 4171–4186. doi:10.18653/v1/N19-1423
[7] Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Amy Yang, Angela Fan, et al. 2024. The llama 3 herd of models. arXiv e-prints (2024), arXiv–2407.
[8] Team GLM, Aohan Zeng, Bin Xu, Bowen Wang, Chenhui Zhang, Da Yin, Dan Zhang, Diego Rojas, Guanyu Feng, Hanlin Zhao, et al. 2024. Chatglm: A family of large language models from glm-130b to glm-4 all tools. arXiv preprint arXiv:2406.12793 (2024).
[9] Aaron Grattafiori, Abhimanyu Dubey, Abhinav Jauhri, Abhinav Pandey, Abhishek Kadian, Ahmad Al-Dahle, Aiesha Letman, Akhil Mathur, Alan Schelten, Alex Vaughan, et al. 2024. The llama 3 herd of models. arXiv preprint (2024).
[10] Daya Guo, Dejian Yang, Haowei Zhang, Junxiao Song, Ruoyu Zhang, Runxin Xu, Qihao Zhu, Shirong Ma, Peiyi Wang, Xiao Bi, et al. 2025. Deepseek-r1: Incentivizing reasoning capability in llms via reinforcement learning. arXiv preprint arXiv:2501.12948 (2025).
[11] Zirui Guo, Lianghao Xia, Yanhua Yu, Tu Ao, and Chao Huang. 2024. Lightrag: Simple and fast retrieval-augmented generation. arXiv preprint (2024).
[12] Edward J Hu, Yelong Shen, Phillip Wallis, Zeyuan Allen-Zhu, Yuanzhi Li, Shean Wang, Lu Wang, Weizhu Chen, et al. 2022. Lora: Low-rank adaptation of large language models. ICLR 1, 2 (2022), 3.
[13] Senkang Hu, Zhengru Fang, Zihan Fang, Yiqin Deng, Xianhao Chen, Yuguang Fang, and Sam Tak Wu Kwong. 2025. Agentscomerge: Large language model empowered collaborative decision making for ramp merging. IEEE Transactions on Mobile Computing (2025).
[14] Ming Jin, Shiyu Wang, Lintao Ma, Zhixuan Chu, James Y Zhang, Xiaoming Shi, Pin-Yu Chen, Yuxuan Liang, Yuan-Fang Li, Shirui Pan, et al. 2023. Time-llm: Time series forecasting by reprogramming large language models. arXiv preprint arXiv:2310.01728 (2023).
[15] Siqi Lai, Zhao Xu, Weijia Zhang, Hao Liu, and Hui Xiong. 2025. Llmlight: Large language models as traffic signal control agents. In Proceedings of the 31st ACM SIGKDD Conference on Knowledge Discovery and Data Mining V.1. 2335–2346.
[16] Junnan Li, Dongxu Li, Silvio Savarese, and Steven Hoi. 2023. Blip-2: Bootstrapping language-image pre-training with frozen image encoders and large language models. In International conference on machine learning. PMLR, 19730–19742.
[17] Zhonghang Li, Lianghao Xia, Jiabin Tang, Yong Xu, Lei Shi, Long Xia, Dawei Yin, and Chao Huang. 2024. Urbangpt: Spatio-temporal large language models. In Proceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining. 5351–5362.
[18] Chin-Yew Lin. 2004. Rouge: A package for automatic evaluation of summaries. In Text summarization branches out. 74–81.
[19] Haotian Liu, Chunyuan Li, Qingyang Wu, and Yong Jae Lee. 2023. Visual instruction tuning. Advances in neural information processing systems 36 (2023), 34892–34916.
[20] Qian Ma, Hongliang Chi, Hengrui Zhang, Kay Liu, Zhiwei Zhang, Lu Cheng, Suhang Wang, Philip S Yu, and Yao Ma. 2025. Overcoming pitfalls in graph contrastive learning evaluation: Toward comprehensive benchmarks. ACM SIGKDD Explorations Newsletter 27, 2 (2025), 97–106.
[21] Lingchen Meng, Jianwei Yang, Rui Tian, Xiyang Dai, Zuxuan Wu, Jianfeng Gao, and Yu-Gang Jiang. 2024. Deepstack: Deeply stacking visual tokens is surprisingly simple and effective for lmms. Advances in Neural Information Processing Systems 37 (2024), 23464–23487.
[22] Long Ouyang, Jeffrey Wu, Xu Jiang, Diogo Almeida, Carroll Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray, et al. 2022. Training language models to follow instructions with human feedback. Advances in neural information processing systems 35 (2022), 27730–27744.
[23] Matt Post. 2018. A Call for Clarity in Reporting BLEU Scores. arXiv:1804.08771 [cs.CL]
[24] Alec Radford, Jong Wook Kim, Chris Hallacy, Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sastry, Amanda Askell, Pamela Mishkin, Jack Clark, et al. 2021. Learning transferable visual models from natural language supervision. In International conference on machine learning. PMLR, 8748–8763.
[25] Rafael Rafailov, Archit Sharma, Eric Mitchell, Christopher D Manning, Stefano Ermon, and Chelsea Finn. 2023. Direct preference optimization: Your language model is secretly a reward model. Advances in neural information processing systems 36 (2023), 53728–53741.
[26] Zhihong Shao, Peiyi Wang, Qihao Zhu, Runxin Xu, Junxiao Song, Xiao Bi, Haowei Zhang, Mingchuan Zhang, YK Li, Yang Wu, et al. 2024. Deepseekmath: Pushing the limits of mathematical reasoning in open language models. arXiv preprint arXiv:2402.03300 (2024).
[27] Ramakrishna Vedantam, C Lawrence Zitnick, and Devi Parikh. 2015. Cider: Consensus-based image description evaluation. In Proceedings of the IEEE conference on computer vision and pattern recognition. 4566–4575.
[28] Peng Wang, Xiang Wei, Fangxu Hu, and Wenjuan Han. 2024. Transgpt: Multi-modal generative pre-trained transformer for transportation. In 2024 international conference on computational linguistics and Natural Language processing (CLNLP). IEEE, 96–100.
[29] Weiyun Wang, Zhangwei Gao, Lixin Gu, Hengjun Pu, Long Cui, Xingguang Wei, Zhaoyang Liu, Linglin Jing, Shenglong Ye, Jie Shao, et al. 2025. Internvl3.5: Advancing open-source multimodal models in versatility, reasoning, and efficiency. arXiv preprint arXiv:2508.18265 (2025).
[30] Zihe Wang, Haiyang Yu, Changxin Chen, Zhiyong Cui, Yufeng Bi, Yilong Ren, Zijian Wang, Delan Kong, Jing Tian, Shoutong Yuan, et al. 2025. MoTIF: An end-to-end multimodal road traffic scene understanding foundation model. Communications in Transportation Research 5 (2025), 100227.
[31] Haoran Xu, Amr Sharaf, Yunmo Chen, Weiting Tan, Lingfeng Shen, Benjamin Van Durme, Kenton Murray, and Young Jin Kim. 2024. Contrastive preference optimization: Pushing the boundaries of llm performance in machine translation. arXiv preprint arXiv:2401.08417 (2024).
[32] Jiacong Xu, Shao-Yuan Lo, Bardia Safaei, Vishal M Patel, and Isht Dwivedi. 2025. Towards zero-shot anomaly detection and reasoning with multimodal large language models. In Proceedings of the Computer Vision and Pattern Recognition Conference. 20370–20382.
[33] An Yang, Anfeng Li, Baosong Yang, Beichen Zhang, Binyuan Hui, Bo Zheng, Bowen Yu, Chang Gao, Chengen Huang, Chenxu Lv, et al. 2025. Qwen3 technical report. arXiv preprint arXiv:2505.09388 (2025).
[34] Tianyu Yu, Zefan Wang, Chongyi Wang, Fuwei Huang, Wenshuo Ma, Zhihui He, Tianchi Cai, Weize Chen, Yuxiang Huang, Yuanqian Zhao, et al. 2025. Minicpm-v 4.5: Cooking efficient mllms via architecture, data, and training recipe. arXiv preprint arXiv:2509.18154 (2025).
[35] Boqiang Zhang, Kehan Li, Zesen Cheng, Zhiqiang Hu, Yuqian Yuan, Guanzheng Chen, Sicong Leng, Yuming Jiang, Hang Zhang, Xin Li, et al. 2025. Videollama 3: Frontier multimodal foundation models for image and video understanding. arXiv preprint arXiv:2501.13106 (2025).
[36] Jianqing Zhang, Xinghao Wu, Yanbing Zhou, Xiaoting Sun, Qiqi Cai, Yang Liu, Yang Hua, Zhenzhe Zheng, Jian Cao, and Qiang Yang. 2025. Htfllib: A comprehensive heterogeneous federated learning library and benchmark. In Proceedings of the 31st ACM SIGKDD Conference on Knowledge Discovery and Data Mining V.2. 5900–5911.
[37] Siyao Zhang, Daocheng Fu, Wenzhe Liang, Zhao Zhang, Bin Yu, Pinlong Cai, and Baozhen Yao. 2024. Trafficgpt: Viewing, processing and interacting with traffic foundation models. Transport Policy 150 (2024), 95–105.
[38] Tianlong Zhang, Xiaoxi He, Yuxiang Wang, Yi Xu, Rendi Wu, Zhifei Wang, and Yongxin Tong. 2025. FedMetro: Efficient Metro Passenger Flow Prediction via Federated Graph Learning. In Proceedings of the 31st ACM SIGKDD Conference on Knowledge Discovery and Data Mining V.2. 5215–5224.
[39] Tianyi Zhang, Varsha Kishore, Felix Wu, Kilian Q Weinberger, and Yoav Artzi. 2019. Bertscore: Evaluating text generation with bert. arXiv preprint arXiv:1904.09675 (2019).
[40] Xingchen Zou, Yuhao Yang, Zheng Chen, Xixuan Hao, Yiqi Chen, Chao Huang, and Yuxuan Liang. 2025. Traffic-r1: Reinforced llms bring human-like reasoning to traffic signal control systems. arXiv preprint arXiv:2508.02344 (2025).

A ExpressMind Setup and Metrics

A.1 Hyperparameter Settings

In this section, we detail the hyperparameter configurations for ExpressMind. To facilitate clear presentation and reproducibility, the settings for pre-training, the GRPO algorithm, and the visual encoder are reported separately in Table 3.
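Table 3 lists a peak learning rate of 1e-5, a cosine scheduler, and a warmup ratio of 0.05 for both pre-training and GRPO. As a rough illustration of how these three settings interact, the sketch below computes the learning rate at a given step. The linear warmup shape, the decay to zero, and the function name `lr_at` are assumptions; the paper states only the scheduler type and the ratio.

```python
import math


def lr_at(step: int, total_steps: int, peak_lr: float = 1e-5, warmup_ratio: float = 0.05) -> float:
    """Learning rate at `step` under linear warmup followed by cosine decay.

    Assumes warmup rises linearly from 0 to peak_lr and the cosine phase
    decays from peak_lr to 0 at total_steps.
    """
    warmup_steps = max(1, int(total_steps * warmup_ratio))
    if step < warmup_steps:
        return peak_lr * step / warmup_steps
    # Fraction of the decay phase completed, in [0, 1].
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return 0.5 * peak_lr * (1.0 + math.cos(math.pi * progress))


# With 1,000 total steps (an arbitrary example), warmup covers the first 50 steps.
schedule = [lr_at(s, total_steps=1000) for s in (0, 25, 50, 525, 1000)]
```

A short warmup of this kind is commonly used to avoid large early updates when continuing training from a pretrained checkpoint.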
Table 3: Hyperparameter Settings for Pre-training and GRPO Algorithm

| Parameter Name | Value |
|---|---|
| Pre-training Hyperparameters | |
| Model Architecture | Qwen-14B |
| DeepSpeed Strategy | ZeRO Stage 3 |
| Precision | bfloat16 |
| Max Sequence Length | 8192 |
| Optimizer | AdamW |
| Weight Decay | 0.1 |
| Gradient Clipping | 1.0 |
| Learning Rate (lr) | 1e-5 |
| Lr Scheduler | Cosine |
| Warmup Ratio | 0.05 |
| Batch Size | 256 |
| Epochs | 3 |
| GRPO Algorithm Hyperparameters | |
| Learning Rate (lr) | 1e-5 |
| Lr Scheduler | Cosine |
| Warmup Ratio | 0.05 |
| Group Size | 16 |
| KL Coefficient | 0.04 |
| Clip Range | 0.2 |
| Visual Encoder Hyperparameters | |
| image resolution | 224 |
| patch size | 14 |
| hidden layers | 24 |
| attention heads | 16 |

A.2 GPT-Score

To evaluate performance on short-answer questions, we employ GPT-Score powered by GPT-4o. Functioning as a virtual domain expert, the model compares the Predicted Answer against the Question and Standard Ground Truth. It assesses factual accuracy and logical completeness to assign a quantitative score ranging from 0 to 100. The system prompt used for this evaluation is shown in Figure 13:

system_prompt: You are a 'Highway Operations and Management Expert' with 20 years of experience. Your task is to evaluate the accuracy of the candidate's (AI model) response, akin to grading a professional examination paper. You must conduct the scoring strictly according to the following Evaluation Standards:
1. Strict Ground Truth Adherence: Do not merely check for semantic alignment. You must explicitly verify the precision of key data, regulatory articles, and handling procedures against the provided Ground Truth.
2. Key Point Verification: Extract core entities from the Ground Truth (e.g., speed limit values, emergency hotlines like 12122, procedural order like 'turn on hazard lights first') and verify if the candidate's response includes them.
3. Safety Penalty: Any errors involving traffic safety must result in severe penalty points.
4. Scoring: Assign a quantitative score from 0 to 100 based on factual accuracy and logical completeness.

Figure 13: The System Prompt of GPT-Score.

A.3 SFT-sysprompt

During the SFT phase, to ensure consistency in model outputs and adherence to specific interaction protocols, a unified system prompt was integrated at the beginning of each training sample. As illustrated in Figure 14, this prompt defines the model's core identity, task boundaries, and response style.

SystemPrompt = You are a Senior Engineer and Researcher in Intelligent Transportation Systems (ITS). You specialize in the bridge between classical traffic flow theory and AI-driven autonomous stacks.
1. Expertise Pillars: Standards: xxx, Intelligence: Spatio-temporal forecasting, RL-based decision making, and trajectory prediction.
2. Execution Guidelines: No Fluff: Skip all introductory pleasantries (e.g., "I'd be happy to help"). Start with the answer.
3. Reliability & Safety: Probabilistic Language: Use "95% confidence," "probabilistic bounds," or "asymptotic stability" instead of "perfect" or "absolute."
4. Interaction Templates: Policy Requests: Focus on Liability, Privacy, and Safety Standards. Engineering Requests: Prioritize real-time constraints, redundancy, and hardware deployment.

Figure 14: The System Prompt of SFT.

A.4 Evaluation Using LLM-as-a-Judge

A.4.1 RL Alignment. To evaluate the strategies generated by the LLM for the expressway incident response task, this study utilizes an "LLM-as-a-Judge" framework, which assesses the strategies along the following five key dimensions:

• Safety Compliance: Evaluates whether the plan prioritizes safety by explicitly including necessary safety distances, on-site protection, and personnel evacuation instructions to fundamentally prevent secondary accident risks.
• Preventive Insight: Validates whether the report strictly follows and completes the four-stage cognitive chain, which proceeds from [Incident Description] → [Causal Inference] → [Response Strategy Formulation] → [Strategy Evaluation], ensuring a logically coherent and closed-loop process.
• Logical Consistency: Validates whether each cause identified in the Causal Inference section is addressed in the Strategy Formulation section.
• Actionability: Evaluates whether the accident response strategy is concise, clear, and non-redundant, ensuring high information density and high executability of the generated strategy for on-site personnel.
• Cause Depth: Examines the accuracy and depth of the incident cause analysis, as well as the correct use of professional terminology for expressway incident response, to ensure the foundational knowledge required for effective response planning.

A.4.2 Scene Understanding. To systematically evaluate the MLLMs' scene understanding capabilities on expressway surveillance videos, this study adopts the "LLM-as-a-Judge" evaluation framework and designs the following six core dimensions:

• Accuracy: Evaluates the overall correctness of the model's description of incidents, objects, and states in the video, serving as a baseline indicator of comprehensive performance.
• Level: Evaluates the model's ability to provide an overall summary and qualitative assessment of the video content, judging whether it can go beyond local details and accurately summarize the core events and overall situation.
• Precision: Evaluates the model's accuracy in fine-grained recognition tasks, including precise descriptions of specific targets such as vehicle types, traffic signs, construction facilities, and human behaviors.
• Space: Evaluates the model's understanding of static and dynamic spatial relationships between key entities (vehicles, personnel, facilities) in the scene, such as relative positions, lane occupancy, and driving directions.
• Analysis: Evaluates the model's ability to infer causality, impact, and potential risks of incidents, such as analyzing accident causes, predicting congestion spread, or assessing the effectiveness of response measures.
• Time: Evaluates the model's understanding of event sequencing and dynamic evolution processes, such as judging the order of actions and the continuity of state changes.

These six dimensions are evaluated by the LLM acting as a judge according to structured instructions, and their scores collectively constitute a systematic and comprehensive evaluation of the model's multimodal scene understanding capabilities.

B Implementation Details of Reward Functions

In this section, we provide the granular implementation details of the Structure-Knowledge-Semantics reward mechanism, including the construction of the expert vocabulary, the specific configurations of the embedding models, and the hyperparameter settings used in our experiments.

B.1 Structural Integrity Constraints ($R_{struct}$)

To enable precise parsing of the model's CoT, we defined four special control tokens corresponding to the standard traffic incident disposal workflow. The Structural Integrity Reward $R_{struct}$ performs strict string matching to verify the existence and order of these tokens.

Table 4: Definition of Reasoning Stage Delimiters

| Stage Index ($k$) | Stage Name | Control Token ($S_k$) |
|---|---|---|
| 1 | Perception | [Incident Description] |
| 2 | Analysis | [Causal Inference] |
| 3 | Decision | [Response Strategy Formulation] |
| 4 | Reflection | [Strategy Evaluation] |

The index function $\mathrm{idx}(S_k)$ returns the character position of the first occurrence of token $S_k$ in the generated string $O$. If a token is missing, $\mathrm{idx}(S_k) = \infty$.
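A minimal sketch of the existence-and-order check over the Table 4 delimiters is given here, assuming plain substring search for the index function. The function names are illustrative, and the sketch returns only a pass/fail indicator; how that indicator is scaled into the final reward value is not specified in this appendix.

```python
import math

# Control tokens S_1..S_4 from Table 4, in the required order.
CONTROL_TOKENS = (
    "[Incident Description]",
    "[Causal Inference]",
    "[Response Strategy Formulation]",
    "[Strategy Evaluation]",
)


def idx(token: str, output: str) -> float:
    """Character position of the first occurrence of token, or infinity if absent."""
    position = output.find(token)
    return position if position >= 0 else math.inf


def structure_ok(output: str) -> bool:
    """True only if all four tokens are present and appear in strictly increasing order."""
    positions = [idx(token, output) for token in CONTROL_TOKENS]
    # Presence is checked explicitly: a missing final token would still
    # satisfy the ordering test, because every finite index is below infinity.
    all_present = all(p != math.inf for p in positions)
    in_order = all(a < b for a, b in zip(positions, positions[1:]))
    return all_present and in_order


well_formed = (
    "[Incident Description] stalled truck [Causal Inference] brake failure "
    "[Response Strategy Formulation] close lane 1 [Strategy Evaluation] adequate"
)
result = structure_ok(well_formed)
```

Because the check uses the first occurrence of each token, a response that repeats a delimiter later in the text is still judged by where each stage first begins.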
The se quence check I ( idx ( 𝑆 1 ) < · · · < idx ( 𝑆 4 ) ) ensures the reasoning ow is logically valid. B.2 Domain Knowledge Alignment ( 𝑅 𝑘 𝑛𝑜 𝑤 ) The domain alignment rewar d relies on a curated Stage-Specic Expert V ocabular y ( V 𝑘 ) . V ocabular y Construction. W e constructe d V 𝑘 by mining high- frequency professional terms from a corpus of 500+ real-w orld ex- pressway trac emergency plans and national standard documents (e.g., GB/T 29100-2012). W e utilized TF-IDF to extract keywords and manually ltered them to ensure relevance to each sp ecic reasoning stage. Examples are sho wn in T able 5. T able 5: Examples of Expert V ocabular y V 𝑘 for Each Stage Stage Representative Keywords (Translated) 𝑆 1 : Perception Multi-vehicle pileup, Hazardous chemical leakage, Occupying emergency lane, Visibility range, Trac v olume saturation, Fire spreading 𝑆 2 : Analysis Secondary accident risk, Chain reaction, Brake failure, Fatigue driving, Lane capacity reduction, Danger radius 𝑆 3 : Decision Remote diversion, Upstream interception, Green wave control, Air-ground coordination, Break-bulk transport, Gating control 𝑆 4 : Evaluation Residual congestion, Rescue eciency , Public sentiment moni- toring, Secondary damage assessment Perplexity Computation. T o calculate the PPL penalty term PPL ( 𝑂 ) , we utilize a frozen version of the Super vised Fine- Tuned (SFT) model as the reference . The threshold 𝜏 𝑝 𝑝 𝑙 is dynamically set to the 95th percentile of the PPL distribution observed on the validation set, preventing the model from generating incoherent keyword lists. B.3 Semantic Consistency ( 𝑅 𝑠 𝑒𝑚 ) The semantic consistency reward evaluates the strategic quality of the generated response. • Embedding Model ( 𝜙 ): W e employ BGE-M3 (BAAI Gen- eral Embedding), a state-of-the-art multilingual emb edding model, to map text into dense vectors. W e sp ecically use the [CLS] token embedding. 
• Focus Scope: We extract only the content within the $S_2$ (Analysis) and $S_3$ (Decision) segments for embedding, denoted as $S_2 \oplus S_3$. This isolates the core logic from the generic description ($S_1$) or formatting text.
• Reference Set ($\mathcal{D}_{ref}$): For each query in the training batch, we retrieve the top-$K$ ($K = 5$) most similar historical expert cases from our vector database to form the local reference set $\mathcal{D}_{ref}$. This ensures the reward is calculated against contextually relevant ground truth.

C Benchmark

This research constructs a comprehensive evaluation benchmark for expressway operation, comprising three distinct dimensions:

The Textual Knowledge QA benchmark consists of 20,000 QA pairs. It focuses on the core dimensions of expressway operation, such as functional positioning and land-use control mechanisms. Additionally, it incorporates expert knowledge for emergency driving, providing precise instructions for handling vehicle instability, such as correcting rear-wheel skids on hazardous road surfaces. An example of this dataset’s structure and content is illustrated in Figure 15.

Text Knowledge QA

Question1: What are the key points of urban rail transit planning compilation?
Answer1: Urban rail transit planning focuses on six core dimensions: research scope and timeframe, functional positioning, and network/station layout. It further addresses intermodal integration with other transit systems, prioritized research areas, and land-use control mechanisms to ensure the feasibility and implementation of the network.

Question2: How should you handle a situation where the rear wheels of a vehicle skid to the right on a muddy road?
Answer2: To correct a rear-wheel skid to the right, steer to the right. Avoid sudden braking or accelerating; instead, gently turn the wheel in the direction of the skid while lightly tapping the brakes. This helps the vehicle regain traction and realign. Remember the rule: steer with a rear skid and against a front skid to stay on path.

Figure 15: Example of Textual Knowledge QA.

The Incident CoT benchmark includes 300 samples designed to evaluate complex decision-making and causal reasoning. Each entry follows a structured logical flow. This simulates the chain of command in a smart expressway operation center, moving from initial accident reporting to liability determination and the evaluation of response strategies like lane closures. The structured reasoning process is shown in Figure 16.

Incident CoT Context

1. Incident Description: At [Time], a rear-end collision involving ... occurred on the [position], ....
2. Causal Inference: Upon analysis, [xxx] is determined to bear primary liability for the accident, .....
3. Response Strategy Formulation: First, establish traffic control, ...
4. Strategy Evaluation: The decision to close Lane 1 is appropriate.

Figure 16: Example of the Incident CoT Structured Data.

Express-VQA is a multimodal benchmark designed for expressway scene understanding, consisting of 670 real-world video segments captured from expressway surveillance systems. It evaluates the ability of the model, particularly the LLM, to understand and reason about expressway scenes, specifically in identifying and analyzing six typical expressway incidents: traffic accidents, congestion, road construction, abnormal parking, pedestrian intrusion, and debris clearance. Visual examples of these categories and the corresponding annotation style are provided in Figure 17.

Express-VQA
Expressway Video

Q: What traffic incident occurred in the video?
A: In this video, the traffic volume is small in the upward direction, while it is large in the downward direction. In the downward direction, xxx.

Q: Where did this traffic incident occur?
A: The incident occurred at a moderate distance from the camera and occupied the emergency lane in the downward direction of the road, without affecting the normal lanes.

Figure 17: Visualization of the Traffic Incident VQA Data.

D Application

The ExpressMind-VL intelligent operation system has been deployed in practical applications for multi-task scenarios on expressways. The visualization system, as shown in Figure 18, includes functions such as releasing warning information, summarizing traffic conditions, describing video events, and generating handling recommendations. We have deployed the system on the expressways in Shandong and Zhejiang provinces. In the intelligent management of Shandong expressways, ExpressMind-VL classifies the incident types and severity levels in traffic surveillance videos, and generates analytical reports along with handling strategies for traffic incidents. For the intelligent management of Guangdong expressways, ExpressMind-VL detects traffic events based on real-time video streams and produces structured textual descriptions for comprehension. The code, data, benchmark, and a demonstration of the system application are available at: https://wanderhee.github.io/ExpressMind/.

Figure 18: Application of ExpressMind-VL.
